7. Implementing UpsertStreamTableSink

```java
import com.springk.flink.bean.StudentInfo;
import org.apache.commons.lang3.StringUtils;
import org.apache.flink.api.common.functions.FlatMapFunction;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.table.api.Table;
import org.apache.flink.table.api.java.StreamTableEnvironment;
import org.apache.flink.util.Collector;

public class TableStreamFlinkStudentUpsertTest {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        StreamTableEnvironment streamTableEnvironment = StreamTableEnvironment.create(env);

        // Source: read lines from a socket connection
        DataStreamSource<String> text = env.socketTextStream("127.0.0.1", 9999, "\n");

        // Parse each input line into a StudentInfo object for later processing
        SingleOutputStreamOperator<StudentInfo> dataStreamStudent = text.flatMap(new FlatMapFunction<String, StudentInfo>() {
            @Override
            public void flatMap(String s, Collector<StudentInfo> collector) {
                String[] infos = s.split(",");
                // Keep only non-blank lines with exactly four comma-separated fields
                if (StringUtils.isNotBlank(s) && infos.length == 4) {
                    StudentInfo studentInfo = new StudentInfo();
                    studentInfo.setName(infos[0]);
                    studentInfo.setSex(infos[1]);
                    studentInfo.setCourse(infos[2]);
                    studentInfo.setScore(Float.parseFloat(infos[3]));
                    collector.collect(studentInfo);
                }
            }
        });

        // Register the dataStreamStudent stream as a table named studentInfo
        streamTableEnvironment.registerDataStream("studentInfo", dataStreamStudent, "name,sex,course,score");

        // Run the aggregation query and register the UpsertStreamTableSink
        Table rTable = streamTableEnvironment.sqlQuery("select name,sum(score) as sum_total_score from studentInfo group by name");
        streamTableEnvironment.registerTableSink("upsertStream", new StreamUpsertStreamTableSink(rTable.getSchema()));
        rTable.insertInto("upsertStream");

        env.execute("studentScoreAnalyse");
    }
}
```

import com.springk.flink.bean.StudentScoreResult;

import org.apache.flink.api.common.typeinfo.TypeHint;

import org.apache.flink.api.common.typeinfo.TypeInformation;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.datastream.DataStreamSink;

import org.apache.flink.streaming.api.functions.sink.SinkFunction;

import org.apache.flink.table.api.TableSchema;

import org.apache.flink.table.sinks.TableSink;

import org.apache.flink.table.sinks.UpsertStreamTableSink;

public class StreamUpsertStreamTableSink implements UpsertStreamTableSink<StudentScoreResult> {

private TableSchema tableSchema;

public StreamUpsertStreamTableSink(TableSchema schema) {

this.tableSchema = schema;

}

@Override

public void setKeyFields(String[] keys) {

}

@Override

public void setIsAppendOnly(Boolean isAppendOnly) {

}

@Override

public TypeInformation<StudentScoreResult> getRecordType() {

return TypeInformation.of(new TypeHint<StudentScoreResult>(){});

}

@Override

public void emitDataStream(DataStream<Tuple2<Boolean, StudentScoreResult>> dataStream) {

}

@Override

public DataStreamSink<?> consumeDataStream(DataStream<Tuple2<Boolean, StudentScoreResult>> dataStream) {

return dataStream.addSink(new SinkFunction<Tuple2<Boolean, StudentScoreResult>>() {

@Override

public void invoke(Tuple2<Boolean, StudentScoreResult> value){

System.out.println(value);

}

});

}

@Override

public TableSchema getTableSchema() {

return tableSchema;

}

@Override

public TableSink<Tuple2<Boolean, StudentScoreResult>> configure(String[] strings, TypeInformation<?>[] typeInformations) {

return null;

}

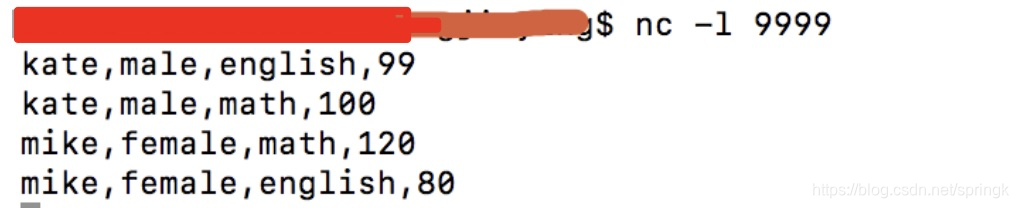

} 命令行中输入如下:

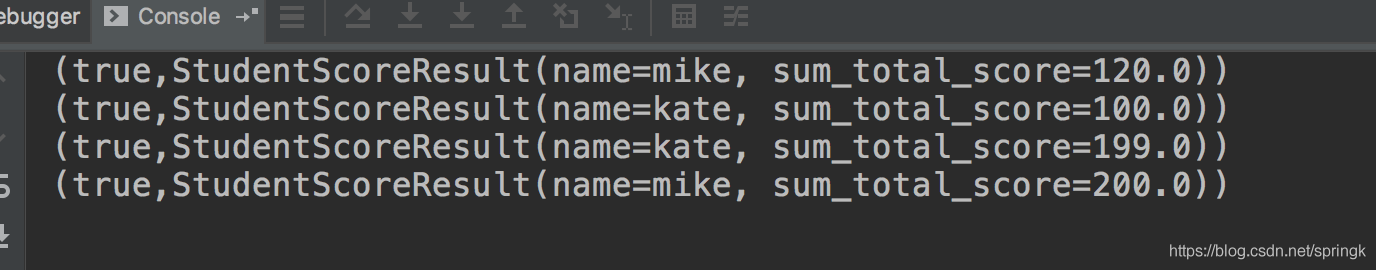

The printed output is as follows:

Each update produces only one output record.

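In a RetractStreamTableSink, an Insert is encoded as one Add message, a Delete as one Retract message, and an Update as two messages (a Retract message followed by an Add message), that is, delete first and then add.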

In an UpsertStreamTableSink, Insert and Update are each encoded as one message (an Upsert message), and a Delete is encoded as one Delete message.
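To make the upsert encoding concrete, the sketch below applies such messages to a keyed in-memory map, roughly the way a key-value store behind an upsert sink might. It is plain Java with no Flink dependency. The class `UpsertApplySketch`, its methods, and the sample keys are hypothetical and not part of the original example. It assumes the flag convention of the `Tuple2<Boolean, T>` records above: `true` means upsert, `false` means delete.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical helper: materializes upsert/delete messages into a keyed view. */
class UpsertApplySketch {

    /** Keyed view of the result table: student name -> total score. */
    private final Map<String, Float> totals = new LinkedHashMap<>();

    /**
     * Applies one message.
     * flag == true : insert the row, or overwrite the existing row for this key.
     * flag == false: delete the row for this key.
     */
    void apply(boolean flag, String name, float total) {
        if (flag) {
            totals.put(name, total);
        } else {
            totals.remove(name);
        }
    }

    /** Returns a copy of the current keyed view. */
    Map<String, Float> snapshot() {
        return new LinkedHashMap<>(totals);
    }
}
```

Because each message carries the full new value for its key, the consumer never needs the old value to apply an update, which is why a unique key is required for this encoding.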

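The biggest difference between UpsertStreamTableSink and RetractStreamTableSink is that an Update is encoded as a single message, which makes UpsertStreamTableSink more efficient than RetractStreamTableSink.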
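The difference in message count can be illustrated with a small simulation. This is a minimal sketch in plain Java with no Flink dependency. The class `ChangelogEncodingSketch` and its methods are hypothetical names introduced only for illustration, and the string format of the messages is an assumption rather than Flink's actual output. It encodes the running sum of one student's scores under both schemes.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical simulation of the retract and upsert encodings for one key. */
class ChangelogEncodingSketch {

    /**
     * Retract encoding: the first row is an add (true); every later update is
     * a retract (false) of the old sum followed by an add (true) of the new sum.
     */
    static List<String> encodeRetract(String key, float[] scores) {
        List<String> out = new ArrayList<>();
        Float current = null; // running sum; null until the first score arrives
        for (float score : scores) {
            if (current != null) {
                out.add("(false," + key + "," + current + ")");
            }
            current = (current == null ? 0f : current) + score;
            out.add("(true," + key + "," + current + ")");
        }
        return out;
    }

    /**
     * Upsert encoding: the first row and every later update are each a single
     * upsert message (true) carrying the new sum.
     */
    static List<String> encodeUpsert(String key, float[] scores) {
        List<String> out = new ArrayList<>();
        float current = 0f;
        for (float score : scores) {
            current += score;
            out.add("(true," + key + "," + current + ")");
        }
        return out;
    }
}
```

For a student whose scores 90 and 85 arrive in turn, `encodeRetract` produces three messages (add 90.0, retract 90.0, add 175.0), while `encodeUpsert` produces two (upsert 90.0, upsert 175.0).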
