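Skipfish is an active web application security reconnaissance tool. It prepares an interactive sitemap for the targeted site by carrying out a recursive crawl and dictionary-based probes. The resulting map is then annotated with the output from a number of active security checks. The final report generated by the tool is meant to serve as a foundation for professional web application security assessments.

The skipfish command-line options are as follows: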

Authentication and access options:

-A user:pass - use specified HTTP authentication credentials

-F host=IP - pretend that 'host' resolves to 'IP'

-C name=val - append a custom cookie to all requests

-H name=val - append a custom HTTP header to all requests

-b (i|f|p) - use headers consistent with MSIE / Firefox / iPhone (that is, present the scanner as one of these browsers)

-N - do not accept any new cookies

--auth-form url - form authentication URL

--auth-user user - form authentication user

--auth-pass pass - form authentication password

--auth-verify-url - URL for in-session detection
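As a rough illustration of how the authentication and access options combine, the sketch below assembles a command line that reuses an existing session cookie, refuses new cookies, and adds a custom header. The cookie value, header, and output directory name are made-up placeholders, and the function only prints the command rather than running it.

```shell
# Assemble (but do not run) a skipfish command that scans with an
# existing session. All argument values below are hypothetical.
build_session_scan() {
  # $1 = target URL, $2 = cookie as name=val, $3 = output directory
  printf 'skipfish -C %s -N -H %s -b f -o %s %s\n' \
    "$2" "X-Scan-Owner=lab" "$3" "$1"
}

# Print the command for review before running it by hand.
build_session_scan "http://192.168.1.3" "session=abc123" "session_scan"
```

Printing first makes it easy to check which cookie and header will be sent before any request leaves the machine.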

Crawl scope options:

-d max_depth - maximum crawl tree depth (16)

-c max_child - maximum children to index per node (512)

-x max_desc - maximum descendants to index per branch (8192)

-r r_limit - max total number of requests to send (100000000)

-p crawl% - node and link crawl probability (100%)

-q hex - repeat probabilistic scan with given seed

-I string - only follow URLs matching 'string'

-X string - exclude URLs matching 'string'

-K string - do not fuzz parameters named 'string'

-D domain - crawl cross-site links to another domain

-B domain - trust, but do not crawl, another domain

-Z - do not descend into 5xx locations

-O - do not submit any forms

-P - do not parse HTML, etc, to find new links
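The crawl scope options above are usually combined to keep a scan inside one part of a site. The following is a minimal sketch, assuming a hypothetical /app/ path to stay within and a hypothetical /logout URL to avoid; it only builds and prints the command.

```shell
# Hypothetical scope values; adjust them to the site being tested.
max_depth=5          # -d: limit crawl tree depth (default is 16)
include="/app/"      # -I: only follow URLs containing this string
exclude="/logout"    # -X: skip URLs containing this string

# -O additionally tells skipfish not to submit any forms.
scoped_cmd="skipfish -d $max_depth -I $include -X $exclude -O -o scoped_scan http://192.168.1.3"
echo "$scoped_cmd"
```

Excluding a logout URL is a common precaution when scanning with a session cookie, since following it would end the session mid-scan.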

Reporting options:

-o dir - write output to specified directory (required)

-M - log warnings about mixed content / non-SSL passwords

-E - log all HTTP/1.0 / HTTP/1.1 caching intent mismatches

-U - log all external URLs and e-mails seen

-Q - completely suppress duplicate nodes in reports

-u - be quiet, disable realtime progress stats

-v - enable runtime logging (to stderr)

Dictionary management options:

-W wordlist - use a specified read-write wordlist (required)

-S wordlist - load a supplemental read-only wordlist

-L - do not auto-learn new keywords for the site

-Y - do not fuzz extensions in directory brute-force

-R age - purge words hit more than 'age' scans ago

-T name=val - add new form auto-fill rule

-G max_guess - maximum number of keyword guesses to keep (256)

-z sigfile - load signatures from this file

Performance settings:

-g max_conn - max simultaneous TCP connections, global (40)

-m host_conn - max simultaneous connections, per target IP (10)

-f max_fail - max number of consecutive HTTP errors (100)

-t req_tmout - total request response timeout (20 s)

-w rw_tmout - individual network I/O timeout (10 s)

-i idle_tmout - timeout on idle HTTP connections (10 s)

-s s_limit - response size limit (400000 B)

-e - do not keep binary responses for reporting

Other settings:

-l max_req - max requests per second (0.000000)

-k duration - stop scanning after the given duration h:m:s

--config file - load the specified configuration file
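The performance and other settings above can be used to make a scan gentler on a fragile target. The sketch below shows one possible combination; the specific numbers and the output directory name are illustrative assumptions, not recommended values, and the command is printed rather than executed.

```shell
# Illustrative throttling values (assumptions, not tuned recommendations).
max_conn=10          # -g: global simultaneous TCP connections (default 40)
host_conn=5          # -m: simultaneous connections per target IP (default 10)
max_rps=20           # -l: cap on requests per second
duration="0:30:00"   # -k: stop scanning after 30 minutes (h:m:s)

gentle_cmd="skipfish -g $max_conn -m $host_conn -l $max_rps -k $duration -o gentle_scan http://192.168.1.3"
echo "$gentle_cmd"
```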

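1. Scanning a website with the Skipfish scanner

1.1 On the Kali operator machine, right-click an empty area of the desktop and select "Open Terminal", as shown in Figure 1.

Figure 1

1.2 In the terminal, enter the command "skipfish -o txt http://192.168.1.3". The -o option specifies the directory in which the scan results are stored, as shown in Figure 2.

Figure 2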

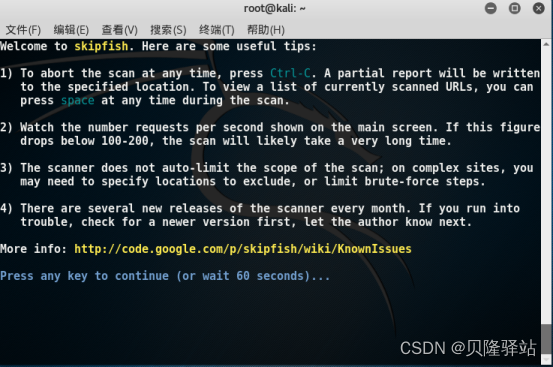
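The command from step 1.2 can also be wrapped in a small script so that the target and output directory are easy to change. This is only a sketch: the RUN_SCAN switch is a hypothetical variable introduced here so that the script prints the command by default and starts a scan only when explicitly asked to.

```shell
target="http://192.168.1.3"   # target from step 1.2
out_dir="txt"                 # output directory passed to -o in step 1.2

scan_cmd="skipfish -o $out_dir $target"
echo "Command: $scan_cmd"

# RUN_SCAN is a hypothetical opt-in switch; the scan starts only when it is 1.
if [ "${RUN_SCAN:-0}" = "1" ]; then
  $scan_cmd
fi
```

Only scan hosts you are authorized to test; the address here is the lab target used throughout this walkthrough.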

1.3 Press Enter. The following information is displayed, as shown in Figure 3.

Figure 3

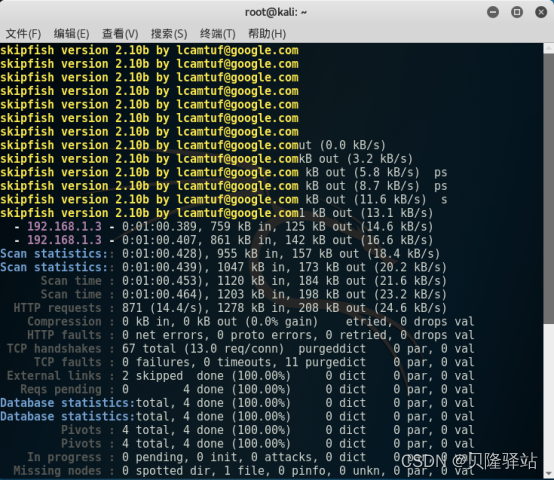

1.4 Press any key to continue. The scan produces the result shown in Figure 4.

Figure 4

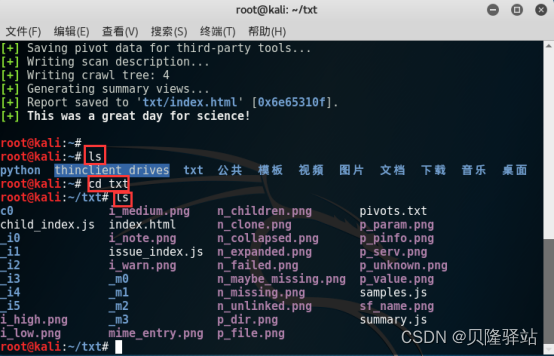

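2. Output

2.1 Enter the command "ls" at the command line. The current directory now contains a txt folder that holds the results of the scan just performed. Enter "cd txt" and then "ls" to view its contents, as shown in Figure 5.

Figure 5

2.2 Click the browser icon, as shown in Figure 6.

Figure 6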

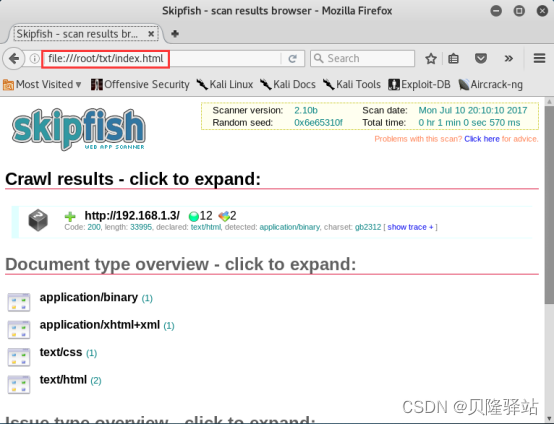

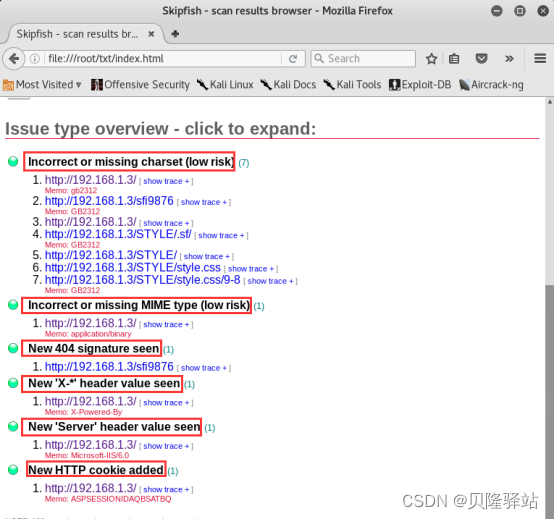

2.3 Enter file:///root/txt/index.html in the browser's address bar and press Enter to open the report, as shown in Figure 7.

Figure 7
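If the scan was run from a directory other than /root, the report URL will differ. The helper below is a small sketch (report_url is a name made up for this example) that checks for index.html in an output directory and prints the matching file:// URL to paste into the browser.

```shell
# Print the file:// URL of a skipfish report, or fail if none is found.
report_url() {
  # $1 = skipfish output directory (for example, txt)
  if [ -f "$1/index.html" ]; then
    # Resolve to an absolute path so the URL works from any directory.
    printf 'file://%s/index.html\n' "$(cd "$1" && pwd)"
  else
    echo "no index.html found in $1" >&2
    return 1
  fi
}

report_url txt || echo "Run the scan first, then try again."
```

When run from /root after the scan in step 1.2, this should print the same URL used in step 2.3.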

2.4 Scroll down and click an issue type to expand it and view the details, as shown in Figure 8.

Figure 8
