First, the video can be cropped with the ffmpeg command line. The source file is 1920x1080.

ffmpeg -i e:/in-vs.mp4 -vf "crop=1280:640:200:200" out_crop.mp4

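Here 1280 and 640 are the width and height of the cropped region, and 200 and 200 are the x and y coordinates of its top-left corner, measured in the original image.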
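Before handing these four numbers to the crop filter it can help to confirm that the window actually lies inside the source picture. The following is a minimal sketch; CropFits is a hypothetical helper and is not part of the original project.

```cpp
// Hypothetical helper (not from the original project): returns true when a
// w x h crop window whose top-left corner is (x, y) lies fully inside a
// srcW x srcH picture.
bool CropFits(int srcW, int srcH, int w, int h, int x, int y)
{
    if (w <= 0 || h <= 0 || x < 0 || y < 0)
        return false;
    // Right edge is x + w, bottom edge is y + h; both must stay in bounds.
    return x + w <= srcW && y + h <= srcH;
}
```

For the command above, CropFits(1920, 1080, 1280, 640, 200, 200) holds because the right edge is 1480 and the bottom edge is 840. Moving the window to x = 800 would push the right edge to 2080, past the 1920-pixel width.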

The code below shows how the same crop is done through the API:

ret = avfilter_graph_create_filter(&m_pFilterCtxSrcVideoA, filter_src_videoA, pad_name_videoA, args_videoA, NULL, m_pFilterGraph);

if (ret < 0)

{

break;

}

AVFilterContext *cropFilter_ctx;

ret = avfilter_graph_create_filter(&cropFilter_ctx, filter_crop, name_crop, filter_desc, NULL, m_pFilterGraph);

if (ret < 0)

{

break;

}

ret = avfilter_graph_create_filter(&m_pFilterCtxSink, filter_sink, "out", NULL, NULL, m_pFilterGraph);

if (ret < 0)

{

break;

}

Three filters are created here: buffer, crop, and buffersink.

buffer receives the decoded YUV data, crop performs the cropping, and buffersink receives the cropped frames.
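Each filter is configured through an argument string passed to avfilter_graph_create_filter. As a rough sketch of how those strings can be assembled, the helpers below mirror the formats used in InitFilter and StartCrop later in this post. The names BuildBufferArgs and BuildCropArgs are hypothetical, and the pixel format is passed as a plain integer so the sketch does not depend on the FFmpeg headers.

```cpp
#include <cstdio>
#include <string>

// Hypothetical helpers, not taken from the original project.

// Argument string for the "buffer" source filter. pixFmt is the numeric
// AVPixelFormat value (AV_PIX_FMT_YUV420P is 0 in FFmpeg's pixfmt.h).
// pixel_aspect=0/1 means "unknown", as in the original code.
std::string BuildBufferArgs(int width, int height, int pixFmt, int tbNum, int tbDen)
{
    char buf[256];
    std::snprintf(buf, sizeof(buf),
                  "video_size=%dx%d:pix_fmt=%d:time_base=%d/%d:pixel_aspect=0/1",
                  width, height, pixFmt, tbNum, tbDen);
    return buf;
}

// Argument string for the "crop" filter, using its named options.
std::string BuildCropArgs(int w, int h, int x, int y)
{
    char buf[128];
    std::snprintf(buf, sizeof(buf), "w=%d:h=%d:x=%d:y=%d", w, h, x, y);
    return buf;
}
```

With the values used in this post, BuildCropArgs(1280, 640, 200, 200) yields "w=1280:h=640:x=200:y=200". Note that the crop string carries no "[in0]" or "[out]" labels: those belong to the graph-description syntax parsed by avfilter_graph_parse, not to the options of a single filter.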

The filters are then linked:

ret = avfilter_link(m_pFilterCtxSrcVideoA, 0, cropFilter_ctx, 0);

if (ret != 0)

{

break;

}

ret = avfilter_link(cropFilter_ctx, 0, m_pFilterCtxSink, 0);

if (ret != 0)

{

break;

}

ret = avfilter_graph_config(m_pFilterGraph, NULL);

if (ret < 0)

{

break;

}

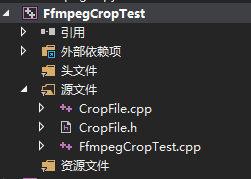

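During development I made a very basic mistake. I only pinpointed where it was in the code by dumping the YUV data to a file and inspecting it with a YUV viewer. The SaveAvFrame function in CropFile.cpp writes an AVFrame to a file for this purpose.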
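The detail that makes such a dump easy to get wrong is that each row of a plane may be padded: rows are linesize bytes apart, but only width bytes of each row are picture data. The sketch below shows that row-by-row copy without any FFmpeg dependency. AppendPlane and PackedYuv420Size are hypothetical names, and the sketch assumes even width and height.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: append `rows` rows of `rowBytes` visible bytes each
// from a plane whose rows start `stride` bytes apart (stride >= rowBytes).
void AppendPlane(std::vector<uint8_t>& out, const uint8_t* plane,
                 int stride, int rowBytes, int rows)
{
    for (int r = 0; r < rows; ++r)
    {
        const uint8_t* row = plane + static_cast<std::size_t>(r) * stride;
        out.insert(out.end(), row, row + rowBytes);  // skip the padding bytes
    }
}

// Size in bytes of one tightly packed YUV420P frame: a full-resolution Y
// plane plus U and V planes at a quarter of that size each.
std::size_t PackedYuv420Size(int width, int height)
{
    std::size_t luma = static_cast<std::size_t>(width) * height;
    return luma + 2 * (luma / 4);
}
```

A 1280x640 frame packs to 1228800 bytes and a 1920x1080 frame to 3110400 bytes. If a YUV viewer shows a sheared or tinted picture, a mismatch between the stride and the row width is a likely cause.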

The code is organized as follows:

FfmpegCropTest.cpp, the file containing main, reads as follows:

#include <iostream>

#include "CropFile.h"

#ifdef __cplusplus

extern "C"

{

#endif

#pragma comment(lib, "avcodec.lib")

#pragma comment(lib, "avformat.lib")

#pragma comment(lib, "avutil.lib")

#pragma comment(lib, "avdevice.lib")

#pragma comment(lib, "avfilter.lib")

#pragma comment(lib, "postproc.lib")

#pragma comment(lib, "swresample.lib")

#pragma comment(lib, "swscale.lib")

#ifdef __cplusplus

};

#endif

int main()

{

CCropFile cVideoCopy;

const char *pFileA = "E:\\learn\\ffmpeg\\FfmpegFilterTest\\x64\\Release\\in-vs.mp4";

const char *pFileOut = "E:\\learn\\ffmpeg\\FfmpegFilterTest\\x64\\Release\\out-crop.mp4";

cVideoCopy.StartCrop(200, 200, 1280, 640, pFileA, pFileOut);

cVideoCopy.WaitFinish();

return 0;

}

CropFile.h reads as follows:

#pragma once

#include <Windows.h>

#ifdef __cplusplus

extern "C"

{

#endif

#include "libavcodec/avcodec.h"

#include "libavformat/avformat.h"

#include "libswscale/swscale.h"

#include "libswresample/swresample.h"

#include "libavdevice/avdevice.h"

#include "libavutil/audio_fifo.h"

#include "libavutil/avutil.h"

#include "libavutil/fifo.h"

#include "libavutil/frame.h"

#include "libavutil/imgutils.h"

#include "libavfilter/avfilter.h"

#include "libavfilter/buffersink.h"

#include "libavfilter/buffersrc.h"

#ifdef __cplusplus

};

#endif

class CCropFile

{

public:

CCropFile();

~CCropFile();

public:

int StartCrop(int x, int y, int width, int height, const char *pFileA, const char *pFileOut);

int WaitFinish();

private:

int OpenFileA(const char *pFileA);

int OpenOutPut(const char *pFileOut);

int InitFilter(const char* filter_desc);

private:

static DWORD WINAPI VideoAReadProc(LPVOID lpParam);

void VideoARead();

static DWORD WINAPI VideoCropProc(LPVOID lpParam);

void VideoCrop();

private:

AVFormatContext *m_pFormatCtx_FileA = NULL;

AVCodecContext *m_pReadCodecCtx_VideoA = NULL;

AVCodec *m_pReadCodec_VideoA = NULL;

AVCodecContext *m_pCodecEncodeCtx_Video = NULL;

AVFormatContext *m_pFormatCtx_Out = NULL;

AVFifoBuffer *m_pVideoAFifo = NULL;

int m_iOutWidth = 1280;

int m_iOutHeight = 640;

int m_iYuv420FrameSize = 0;

private:

AVFilterGraph* m_pFilterGraph = NULL;

AVFilterContext* m_pFilterCtxSrcVideoA = NULL;

AVFilterContext* m_pFilterCtxSink = NULL;

private:

CRITICAL_SECTION m_csVideoASection;

HANDLE m_hVideoAReadThread = NULL;

HANDLE m_hVideoCrophread = NULL;

};
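The class above shares an AVFifoBuffer between the reader and crop threads, guards it with a CRITICAL_SECTION, and has each side poll with Sleep when the buffer is full or empty. For readers who are not on Windows, or who want to avoid polling, the same hand-off can be sketched with the C++ standard library. FrameQueue is a hypothetical class and is not what the original project uses; it stores each packed frame as a std::vector rather than a byte FIFO.

```cpp
#include <condition_variable>
#include <cstddef>
#include <cstdint>
#include <deque>
#include <mutex>
#include <utility>
#include <vector>

// Hypothetical class (not part of the original project): a bounded queue of
// packed frames shared by one producer thread and one consumer thread.
class FrameQueue
{
public:
    explicit FrameQueue(std::size_t capacity) : m_capacity(capacity) {}

    // Blocks while the queue is full. Returns false if the queue was closed.
    bool Push(std::vector<uint8_t> frame)
    {
        std::unique_lock<std::mutex> lock(m_mutex);
        m_notFull.wait(lock, [this] { return m_closed || m_frames.size() < m_capacity; });
        if (m_closed)
            return false;
        m_frames.push_back(std::move(frame));
        m_notEmpty.notify_one();
        return true;
    }

    // Blocks while the queue is empty. Returns false once the queue has been
    // closed and every queued frame has been handed out.
    bool Pop(std::vector<uint8_t>& frame)
    {
        std::unique_lock<std::mutex> lock(m_mutex);
        m_notEmpty.wait(lock, [this] { return m_closed || !m_frames.empty(); });
        if (m_frames.empty())
            return false;
        frame = std::move(m_frames.front());
        m_frames.pop_front();
        m_notFull.notify_one();
        return true;
    }

    // Called by the producer at end of input so the consumer can finish.
    void Close()
    {
        std::lock_guard<std::mutex> lock(m_mutex);
        m_closed = true;
        m_notEmpty.notify_all();
        m_notFull.notify_all();
    }

private:
    std::mutex m_mutex;
    std::condition_variable m_notEmpty;
    std::condition_variable m_notFull;
    std::deque<std::vector<uint8_t>> m_frames;
    std::size_t m_capacity;
    bool m_closed = false;
};
```

In this design the reader thread would call Push for each decoded frame and Close at end of file, while the crop thread would loop on Pop until it returns false. That replaces the check on the reader's thread handle with an explicit end-of-stream signal.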

CropFile.cpp reads as follows:

#include "CropFile.h"

#include <cstdio>

#include <cstring>

//#include "log/log.h"

void SaveAvFrame(AVFrame *avFrame)

{

FILE *fDump = fopen("e:\\my.yuv", "ab");

// Nothing can be dumped if the file could not be opened.

if (fDump == NULL)

{

return;

}

uint32_t pitchY = avFrame->linesize[0];

uint32_t pitchU = avFrame->linesize[1];

uint32_t pitchV = avFrame->linesize[2];

uint8_t *avY = avFrame->data[0];

uint8_t *avU = avFrame->data[1];

uint8_t *avV = avFrame->data[2];

for (uint32_t i = 0; i < avFrame->height; i++) {

fwrite(avY, avFrame->width, 1, fDump);

avY += pitchY;

}

for (uint32_t i = 0; i < avFrame->height / 2; i++) {

fwrite(avU, avFrame->width / 2, 1, fDump);

avU += pitchU;

}

for (uint32_t i = 0; i < avFrame->height / 2; i++) {

fwrite(avV, avFrame->width / 2, 1, fDump);

avV += pitchV;

}

fclose(fDump);

}

CCropFile::CCropFile()

{

InitializeCriticalSection(&m_csVideoASection);

}

CCropFile::~CCropFile()

{

DeleteCriticalSection(&m_csVideoASection);

}

int CCropFile::StartCrop(int x, int y, int width, int height, const char *pFileA, const char *pFileOut)

{

int ret = -1;

do

{

m_iOutWidth = width;

m_iOutHeight = height;

ret = OpenFileA(pFileA);

if (ret != 0)

{

break;

}

ret = OpenOutPut(pFileOut);

if (ret != 0)

{

break;

}

char filter_desc[512] = { 0 };

/*_snprintf(filter_desc, sizeof(filter_desc),

"[in0]crop=w=%d:h=%d:x=%d:y=%d[out]",

width, height, x, y);*/

_snprintf(filter_desc, sizeof(filter_desc),

"w=%d:h=%d:x=%d:y=%d",

width, height, x, y);

ret = InitFilter(filter_desc);

if (ret < 0)

{

break;

}

m_iYuv420FrameSize = av_image_get_buffer_size(AV_PIX_FMT_YUV420P, m_pReadCodecCtx_VideoA->width, m_pReadCodecCtx_VideoA->height, 1);

// Allocate a FIFO large enough to hold 30 frames

m_pVideoAFifo = av_fifo_alloc(30 * m_iYuv420FrameSize);

m_hVideoAReadThread = CreateThread(NULL, 0, VideoAReadProc, this, 0, NULL);

m_hVideoCrophread = CreateThread(NULL, 0, VideoCropProc, this, 0, NULL);

} while (0);

return ret;

}

int CCropFile::WaitFinish()

{

int ret = 0;

do

{

if (NULL == m_hVideoAReadThread)

{

break;

}

WaitForSingleObject(m_hVideoAReadThread, INFINITE);

CloseHandle(m_hVideoAReadThread);

m_hVideoAReadThread = NULL;

WaitForSingleObject(m_hVideoCrophread, INFINITE);

CloseHandle(m_hVideoCrophread);

m_hVideoCrophread = NULL;

} while (0);

return ret;

}

int CCropFile::OpenFileA(const char *pFileA)

{

int ret = -1;

do

{

if ((ret = avformat_open_input(&m_pFormatCtx_FileA, pFileA, 0, 0)) < 0) {

printf("Could not open input file.");

break;

}

if ((ret = avformat_find_stream_info(m_pFormatCtx_FileA, 0)) < 0) {

printf("Failed to retrieve input stream information");

break;

}

if (m_pFormatCtx_FileA->streams[0]->codecpar->codec_type != AVMEDIA_TYPE_VIDEO)

{

break;

}

m_pReadCodec_VideoA = (AVCodec *)avcodec_find_decoder(m_pFormatCtx_FileA->streams[0]->codecpar->codec_id);

m_pReadCodecCtx_VideoA = avcodec_alloc_context3(m_pReadCodec_VideoA);

if (m_pReadCodecCtx_VideoA == NULL)

{

break;

}

avcodec_parameters_to_context(m_pReadCodecCtx_VideoA, m_pFormatCtx_FileA->streams[0]->codecpar);

m_pReadCodecCtx_VideoA->framerate = m_pFormatCtx_FileA->streams[0]->r_frame_rate;

if (avcodec_open2(m_pReadCodecCtx_VideoA, m_pReadCodec_VideoA, NULL) < 0)

{

break;

}

ret = 0;

} while (0);

return ret;

}

int CCropFile::OpenOutPut(const char *pFileOut)

{

int iRet = -1;

AVStream *pAudioStream = NULL;

AVStream *pVideoStream = NULL;

do

{

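avformat_alloc_output_context2(&m_pFormatCtx_Out, NULL, NULL, pFileOut);

// The context is dereferenced right below, so stop here if allocation failed.

if (m_pFormatCtx_Out == NULL)

{

break;

}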

{

AVCodec* pCodecEncode_Video = (AVCodec *)avcodec_find_encoder(m_pFormatCtx_Out->oformat->video_codec);

m_pCodecEncodeCtx_Video = avcodec_alloc_context3(pCodecEncode_Video);

if (!m_pCodecEncodeCtx_Video)

{

break;

}

pVideoStream = avformat_new_stream(m_pFormatCtx_Out, pCodecEncode_Video);

if (!pVideoStream)

{

break;

}

int frameRate = 10;

m_pCodecEncodeCtx_Video->flags |= AV_CODEC_FLAG_QSCALE;

m_pCodecEncodeCtx_Video->bit_rate = 4000000;

m_pCodecEncodeCtx_Video->rc_min_rate = 4000000;

m_pCodecEncodeCtx_Video->rc_max_rate = 4000000;

m_pCodecEncodeCtx_Video->bit_rate_tolerance = 4000000;

m_pCodecEncodeCtx_Video->time_base.den = frameRate;

m_pCodecEncodeCtx_Video->time_base.num = 1;

m_pCodecEncodeCtx_Video->width = m_iOutWidth;

m_pCodecEncodeCtx_Video->height = m_iOutHeight;

//pH264Encoder->pCodecCtx->frame_number = 1;

m_pCodecEncodeCtx_Video->gop_size = 12;

m_pCodecEncodeCtx_Video->max_b_frames = 0;

m_pCodecEncodeCtx_Video->thread_count = 4;

m_pCodecEncodeCtx_Video->pix_fmt = AV_PIX_FMT_YUV420P;

m_pCodecEncodeCtx_Video->codec_id = AV_CODEC_ID_H264;

m_pCodecEncodeCtx_Video->codec_type = AVMEDIA_TYPE_VIDEO;

av_opt_set(m_pCodecEncodeCtx_Video->priv_data, "b-pyramid", "none", 0);

av_opt_set(m_pCodecEncodeCtx_Video->priv_data, "preset", "superfast", 0);

av_opt_set(m_pCodecEncodeCtx_Video->priv_data, "tune", "zerolatency", 0);

if (m_pFormatCtx_Out->oformat->flags & AVFMT_GLOBALHEADER)

m_pCodecEncodeCtx_Video->flags |= AV_CODEC_FLAG_GLOBAL_HEADER;

if (avcodec_open2(m_pCodecEncodeCtx_Video, pCodecEncode_Video, 0) < 0)

{

// Failed to open the encoder; bail out

break;

}

}

if (!(m_pFormatCtx_Out->oformat->flags & AVFMT_NOFILE))

{

if (avio_open(&m_pFormatCtx_Out->pb, pFileOut, AVIO_FLAG_WRITE) < 0)

{

break;

}

}

avcodec_parameters_from_context(pVideoStream->codecpar, m_pCodecEncodeCtx_Video);

if (avformat_write_header(m_pFormatCtx_Out, NULL) < 0)

{

break;

}

iRet = 0;

} while (0);

if (iRet != 0)

{

if (m_pCodecEncodeCtx_Video != NULL)

{

avcodec_free_context(&m_pCodecEncodeCtx_Video);

m_pCodecEncodeCtx_Video = NULL;

}

if (m_pFormatCtx_Out != NULL)

{

avformat_free_context(m_pFormatCtx_Out);

m_pFormatCtx_Out = NULL;

}

}

return iRet;

}

int CCropFile::InitFilter(const char* filter_desc)

{

int ret = 0;

char args_videoA[512];

const char* pad_name_videoA = "in0";

const char* name_crop = "crop2";

AVFilter* filter_src_videoA = (AVFilter *)avfilter_get_by_name("buffer");

AVFilter* filter_sink = (AVFilter *)avfilter_get_by_name("buffersink");

AVFilter *filter_crop = (AVFilter *)avfilter_get_by_name("crop");

AVFilterInOut* filter_output_videoA = avfilter_inout_alloc();

AVFilterInOut* filter_input = avfilter_inout_alloc();

m_pFilterGraph = avfilter_graph_alloc();

AVRational timeBase;

timeBase.num = 1;

timeBase.den = 10;

AVRational timeAspect;

timeAspect.num = 0;

timeAspect.den = 1;

_snprintf(args_videoA, sizeof(args_videoA),

"video_size=%dx%d:pix_fmt=%d:time_base=%d/%d:pixel_aspect=%d/%d",

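// Use the decoder's actual dimensions rather than a hard-coded 1920x1080.

m_pReadCodecCtx_VideoA->width, m_pReadCodecCtx_VideoA->height, AV_PIX_FMT_YUV420P,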

timeBase.num, timeBase.den,

timeAspect.num,

timeAspect.den);

AVFilterInOut* filter_outputs[1] = { NULL };

do

{

ret = avfilter_graph_create_filter(&m_pFilterCtxSrcVideoA, filter_src_videoA, pad_name_videoA, args_videoA, NULL, m_pFilterGraph);

if (ret < 0)

{

break;

}

AVFilterContext *cropFilter_ctx;

ret = avfilter_graph_create_filter(&cropFilter_ctx, filter_crop, name_crop, filter_desc, NULL, m_pFilterGraph);

if (ret < 0)

{

break;

}

ret = avfilter_graph_create_filter(&m_pFilterCtxSink, filter_sink, "out", NULL, NULL, m_pFilterGraph);

if (ret < 0)

{

break;

}

ret = av_opt_set_bin(m_pFilterCtxSink, "pix_fmts", (uint8_t*)&m_pCodecEncodeCtx_Video->pix_fmt, sizeof(m_pCodecEncodeCtx_Video->pix_fmt), AV_OPT_SEARCH_CHILDREN);

if (ret < 0)

{

break;

}

ret = avfilter_link(m_pFilterCtxSrcVideoA, 0, cropFilter_ctx, 0);

if (ret != 0)

{

break;

}

ret = avfilter_link(cropFilter_ctx, 0, m_pFilterCtxSink, 0);

if (ret != 0)

{

break;

}

ret = avfilter_graph_config(m_pFilterGraph, NULL);

if (ret < 0)

{

break;

}

ret = 0;

} while (0);

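avfilter_inout_free(&filter_input);

avfilter_inout_free(&filter_output_videoA);

// filter_src_videoA points to a static filter definition returned by avfilter_get_by_name and must not be freed.

// filter_outputs was never filled in, so there is nothing to release for it.

char* temp = avfilter_graph_dump(m_pFilterGraph, NULL);

// The dump is only useful while debugging; the caller owns the string and must free it.

av_free(temp);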

return ret;

}

DWORD WINAPI CCropFile::VideoAReadProc(LPVOID lpParam)

{

CCropFile *pVideoMerge = (CCropFile *)lpParam;

if (pVideoMerge != NULL)

{

pVideoMerge->VideoARead();

}

return 0;

}

void CCropFile::VideoARead()

{

AVFrame *pFrame;

pFrame = av_frame_alloc();

int y_size = m_pReadCodecCtx_VideoA->width * m_pReadCodecCtx_VideoA->height;

char *pY = new char[y_size];

char *pU = new char[y_size / 4];

char *pV = new char[y_size / 4];

AVPacket packet = { 0 };

int ret = 0;

while (1)

{

av_packet_unref(&packet);

ret = av_read_frame(m_pFormatCtx_FileA, &packet);

if (ret == AVERROR(EAGAIN))

{

continue;

}

else if (ret == AVERROR_EOF)

{

break;

}

else if (ret < 0)

{

break;

}

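// Only stream 0 (the video stream checked in OpenFileA) is decoded; skip packets from any other stream.

if (packet.stream_index != 0)

{

continue;

}

ret = avcodec_send_packet(m_pReadCodecCtx_VideoA, &packet);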

if (ret >= 0)

{

ret = avcodec_receive_frame(m_pReadCodecCtx_VideoA, pFrame);

if (ret == AVERROR(EAGAIN))

{

continue;

}

else if (ret == AVERROR_EOF)

{

break;

}

else if (ret < 0) {

break;

}

while (1)

{

if (av_fifo_space(m_pVideoAFifo) >= m_iYuv420FrameSize)

{

///Y

int contY = 0;

for (int i = 0; i < pFrame->height; i++)

{

memcpy(pY + contY, pFrame->data[0] + i * pFrame->linesize[0], pFrame->width);

contY += pFrame->width;

}

///U

int contU = 0;

for (int i = 0; i < pFrame->height / 2; i++)

{

memcpy(pU + contU, pFrame->data[1] + i * pFrame->linesize[1], pFrame->width / 2);

contU += pFrame->width / 2;

}

///V

int contV = 0;

for (int i = 0; i < pFrame->height / 2; i++)

{

memcpy(pV + contV, pFrame->data[2] + i * pFrame->linesize[2], pFrame->width / 2);

contV += pFrame->width / 2;

}

EnterCriticalSection(&m_csVideoASection);

av_fifo_generic_write(m_pVideoAFifo, pY, y_size, NULL);

av_fifo_generic_write(m_pVideoAFifo, pU, y_size / 4, NULL);

av_fifo_generic_write(m_pVideoAFifo, pV, y_size / 4, NULL);

LeaveCriticalSection(&m_csVideoASection);

break;

}

else

{

Sleep(100);

}

}

}

if (ret == AVERROR(EAGAIN))

{

continue;

}

}

av_frame_free(&pFrame);

delete[] pY;

delete[] pU;

delete[] pV;

}

DWORD WINAPI CCropFile::VideoCropProc(LPVOID lpParam)

{

CCropFile *pVideoMerge = (CCropFile *)lpParam;

if (pVideoMerge != NULL)

{

pVideoMerge->VideoCrop();

}

return 0;

}

void CCropFile::VideoCrop()

{

int ret = 0;

AVFrame *pFrameVideoA = av_frame_alloc();

uint8_t *videoA_buffer_yuv420 = (uint8_t *)av_malloc(m_iYuv420FrameSize);

av_image_fill_arrays(pFrameVideoA->data, pFrameVideoA->linesize, videoA_buffer_yuv420, AV_PIX_FMT_YUV420P, m_pReadCodecCtx_VideoA->width, m_pReadCodecCtx_VideoA->height, 1);

int iYuv420FrameoutSize = av_image_get_buffer_size(AV_PIX_FMT_YUV420P, m_iOutWidth, m_iOutHeight, 1);

AVFrame* pFrame_out = av_frame_alloc();

uint8_t *out_buffer_yuv420 = (uint8_t *)av_malloc(iYuv420FrameoutSize);

av_image_fill_arrays(pFrame_out->data, pFrame_out->linesize, out_buffer_yuv420, AV_PIX_FMT_YUV420P, m_iOutWidth, m_iOutHeight, 1);

AVPacket packet = { 0 };

int iPicCount = 0;

while (1)

{

if (NULL == m_pVideoAFifo)

{

break;

}

int iVideoASize = av_fifo_size(m_pVideoAFifo);

if (iVideoASize >= m_iYuv420FrameSize)

{

EnterCriticalSection(&m_csVideoASection);

av_fifo_generic_read(m_pVideoAFifo, videoA_buffer_yuv420, m_iYuv420FrameSize, NULL);

LeaveCriticalSection(&m_csVideoASection);

pFrameVideoA->pkt_dts = pFrameVideoA->pts = av_rescale_q_rnd(iPicCount, m_pCodecEncodeCtx_Video->time_base, m_pFormatCtx_Out->streams[0]->time_base, (AVRounding)(AV_ROUND_NEAR_INF | AV_ROUND_PASS_MINMAX));

pFrameVideoA->pkt_duration = 0;

pFrameVideoA->pkt_pos = -1;

pFrameVideoA->width = m_pReadCodecCtx_VideoA->width;

pFrameVideoA->height = m_pReadCodecCtx_VideoA->height;

pFrameVideoA->format = AV_PIX_FMT_YUV420P;

ret = av_buffersrc_add_frame(m_pFilterCtxSrcVideoA, pFrameVideoA);

if (ret < 0)

{

break;

}

ret = av_buffersink_get_frame(m_pFilterCtxSink, pFrame_out);

if (ret < 0)

{

//printf("Mixer: failed to call av_buffersink_get_frame_flags\n");

break;

}

pFrame_out->pkt_dts = pFrame_out->pts = av_rescale_q_rnd(iPicCount, m_pCodecEncodeCtx_Video->time_base, m_pFormatCtx_Out->streams[0]->time_base, (AVRounding)(AV_ROUND_NEAR_INF | AV_ROUND_PASS_MINMAX));

pFrame_out->pkt_duration = 0;

pFrame_out->pkt_pos = -1;

pFrame_out->width = m_iOutWidth;

pFrame_out->height = m_iOutHeight;

pFrame_out->format = AV_PIX_FMT_YUV420P;

//SaveAvFrame(pFrame_out);

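ret = avcodec_send_frame(m_pCodecEncodeCtx_Video, pFrame_out);

// The encoder keeps its own reference, so release the filtered frame before the next av_buffersink_get_frame call.

av_frame_unref(pFrame_out);

if (ret < 0)

{

break;

}

// Write every packet the encoder has ready; AVERROR(EAGAIN) just means it needs more input.

while (avcodec_receive_packet(m_pCodecEncodeCtx_Video, &packet) >= 0)

{

av_write_frame(m_pFormatCtx_Out, &packet);

av_packet_unref(&packet);

}

iPicCount++;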

}

else

{

if (m_hVideoAReadThread == NULL)

{

break;

}

Sleep(1);

}

}

av_write_trailer(m_pFormatCtx_Out);

avio_close(m_pFormatCtx_Out->pb);

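av_frame_free(&pFrameVideoA);

av_frame_free(&pFrame_out);

// The frames never owned these staging buffers, so they are released separately.

av_free(videoA_buffer_yuv420);

av_free(out_buffer_yuv420);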

}
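VideoCrop stamps each frame by converting the frame counter from the encoder time base (1/10) to the output stream's time base with av_rescale_q_rnd. The arithmetic behind that conversion can be sketched without FFmpeg. Rational and Rescale below are hypothetical stand-ins for AVRational and the FFmpeg function. The sketch handles only non-negative values and positive time bases, rounds to nearest, and does not guard against overflow the way the real function does.

```cpp
#include <cstdint>

// Hypothetical stand-in for AVRational.
struct Rational
{
    int num;
    int den;
};

// Convert `value` from time base `from` to time base `to`, rounding to the
// nearest integer. Assumes value >= 0 and positive numerators/denominators.
int64_t Rescale(int64_t value, Rational from, Rational to)
{
    // value * (from.num / from.den) seconds, expressed in units of to.num / to.den.
    int64_t numerator = value * from.num * to.den;
    int64_t denominator = static_cast<int64_t>(from.den) * to.num;
    return (numerator + denominator / 2) / denominator;
}
```

As an example, if the muxer had chosen a stream time base of 1/10240 (an assumed value; the real one is only known after avformat_write_header), frame 1 at 1/10 s would map to Rescale(1, {1, 10}, {1, 10240}), which is 1024 ticks.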

FFmpeg Video Cropping in Practice


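This article describes how to crop video with FFmpeg, covering both the command line and a C++ implementation. It explains how to build a filter graph that performs the crop and walks through the code.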
