Note: this project is written in Scala.

Requirement: add logging to the project.

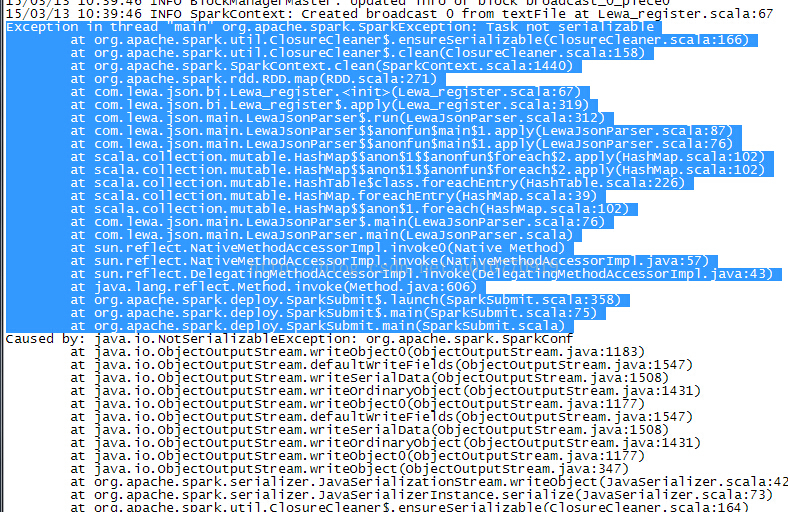

Problem: the job fails with a serialization exception:

Exception in thread "main" org.apache.spark.SparkException: Task not serializable

Solution 1:

Use the usual log = LoggerFactory.getLogger() directly. This does not work: it fails with the serialization error.
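To illustrate why an eagerly created logger field causes trouble, here is a minimal sketch outside Spark. It assumes plain Java serialization (which Spark uses by default when shipping closures) and uses java.util.logging.Logger as a stand-in for the real logger; the names EagerLoggerWorker and SerializationCheck are hypothetical.

```scala
import java.io.{ByteArrayOutputStream, NotSerializableException, ObjectOutputStream}
import java.util.logging.Logger

// Hypothetical worker class: Serializable itself, but it holds a logger field.
// java.util.logging.Logger is a stand-in here; it does not implement Serializable.
class EagerLoggerWorker extends Serializable {
  val log: Logger = Logger.getLogger("EagerLoggerWorker")
}

object SerializationCheck {
  // Returns true if Java serialization of `obj` succeeds, false if some
  // reachable object is not serializable.
  def canSerialize(obj: AnyRef): Boolean = {
    val out = new ObjectOutputStream(new ByteArrayOutputStream())
    try {
      out.writeObject(obj)
      true
    } catch {
      case _: NotSerializableException => false
    } finally {
      out.close()
    }
  }
}
```

Serializing an EagerLoggerWorker fails because the logger field is reachable from the object being written, which is the same kind of failure that Spark reports as "Task not serializable".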

Solution 2:

Make the Logger serializable through inheritance:

    class LocalLogger(name: String) extends Logger(name) with Serializable { }

Solution 3:

Define a trait and mix it into each class that needs logging:

    // Assumes SLF4J, which is where LoggerFactory comes from
    import org.slf4j.{Logger, LoggerFactory}

    /**
     * @author Administrator
     */
    trait DefLogger {
      // Make the log field transient so that objects with Logging can
      // be serialized and used on another machine
      @transient private var log_ : Logger = null

      // Method to get or create the logger for this object
      protected def log: Logger = {
        if (log_ == null) {
          var className = this.getClass.getName
          // Ignore trailing $'s in the class names for Scala objects
          if (className.endsWith("$")) {
            className = className.substring(0, className.length - 1)
          }
          log_ = LoggerFactory.getLogger(className)
        }
        log_
      }
    }

Solution 4:

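Solution 3 actually came out of reading Spark's Logging source code. Scala and Spark classes end up with slightly different names once compiled to .class files, and the difference between Java static methods and a Scala object matters in particular.

A Scala object compiles to a .class whose name ends in $, which is why the code above checks the string and trims it.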
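The two ideas in the trait above (a transient logger that is re-created on demand, and trimming the trailing $) can be sketched without Spark or SLF4J. This is only an illustration under assumptions: java.util.logging stands in for the real logging backend, and LazyLog, Parser and RoundTrip are hypothetical names.

```scala
import java.io.{ByteArrayInputStream, ByteArrayOutputStream, ObjectInputStream, ObjectOutputStream}
import java.util.logging.Logger

// Same shape as the trait above, with java.util.logging as a stand-in backend.
trait LazyLog {
  // Transient: skipped by Java serialization, so it is null after deserialization.
  @transient private var log_ : Logger = null

  protected def log: Logger = {
    if (log_ == null) {
      // A Scala object compiles to a class whose name ends in "$"; drop the suffix.
      log_ = Logger.getLogger(this.getClass.getName.stripSuffix("$"))
    }
    log_
  }
}

// Hypothetical serializable class and its companion object, both mixing in the trait.
class Parser extends LazyLog with Serializable {
  def loggerName: String = log.getName
}

object Parser extends LazyLog {
  def loggerName: String = log.getName
}

object RoundTrip {
  // Serializes and then deserializes `obj` with plain Java serialization.
  def copy[T <: AnyRef](obj: T): T = {
    val bytes = new ByteArrayOutputStream()
    val out = new ObjectOutputStream(bytes)
    out.writeObject(obj)
    out.close()
    val in = new ObjectInputStream(new ByteArrayInputStream(bytes.toByteArray))
    try in.readObject().asInstanceOf[T] finally in.close()
  }
}
```

In this sketch a Parser instance survives a serialization round trip even after its logger has been created, and the class and its companion object end up with the same logger name once the $ is trimmed.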

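After switching to the fourth solution, logging mostly worked. However, I still ran into the serialization problem during an RDD transformation and action. The code is shown below.

The log is defined in the class like this: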

    @transient private var log_ : Logger = null

    // Method to get or create the logger for this object
    protected def log: Logger = {
      if (log_ == null) {
        var className = this.getClass.getName
        // Ignore trailing $'s in the class names for Scala objects
        if (className.endsWith("$")) {
          className = className.substring(0, className.length - 1)
        }
        log_ = LoggerFactory.getLogger(className)
      }
      log_
    }

When an exception occurs inside the RDD's map operation, it is logged:

    case e: ArrayIndexOutOfBoundsException => {
      log.error("lewa_device_upgrade ArrayIndexOutOfBoundsException: " + line)
      Row("NO", "NO", "NO", "NO", "NO", "NO", "NO", "NO", "NO", 0, 0, "NO", "NO", "NO", "NO", "NO", "NO", "NO", "NO", "NO", "NO",
        "NO", 0, "NO", 0, "NO", "NO", 0, "NO", "NO", "NO", "NO", "NO", "NO", 0, 0, 0, 0)
    }

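That reminded me of a passage in the documentation on the Spark website that I had never managed to understand.

Reading the documentation closely, there are two ways to pass a function to Spark:

(1) Anonymous functions

(2) Static methods in a global singleton object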
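The difference between the two matters for serialization. A rough sketch, assuming Scala 2.12 or newer and plain Java serialization: a function that uses an instance member captures `this`, while a function that only calls into a singleton captures nothing. The names JobLog, Job and ClosureCheck are hypothetical, and java.util.logging is a stand-in for the real logger.

```scala
import java.io.{ByteArrayOutputStream, NotSerializableException, ObjectOutputStream}
import java.util.logging.Logger

// Hypothetical singleton that owns the logger. Closures that call it do not
// capture it; each JVM initializes its own copy on first use.
object JobLog {
  lazy val log: Logger = Logger.getLogger("JobLog")
}

// Hypothetical job class that is NOT Serializable.
class Job {
  private val log: Logger = Logger.getLogger("Job")

  // Uses the instance member `log`, so the function object captures `this`.
  def viaInstance: String => String = line => { log.info(line); line }

  // Only touches the singleton, so nothing from this instance is captured.
  def viaSingleton: String => String = line => { JobLog.log.info(line); line }
}

object ClosureCheck {
  // Returns true if Java serialization of `obj` succeeds.
  def canSerialize(obj: AnyRef): Boolean = {
    val out = new ObjectOutputStream(new ByteArrayOutputStream())
    try {
      out.writeObject(obj)
      true
    } catch {
      case _: NotSerializableException => false
    } finally {
      out.close()
    }
  }
}
```

Under these assumptions, the function from viaInstance cannot be serialized because it drags the whole Job instance along, while the one from viaSingleton can. This is one plausible reason why a logger reached through `this` can still trigger the error inside a map, even when the logger field itself is transient.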

I went with the singleton static method approach and defined the log in the class's companion object. The code is as follows:

    /**
     * constructor the object
     */
    object Lewa_register extends Serializable {
      def apply(master: String, savepath: String, srcfilepath: String) = new Lewa_register(master, savepath, srcfilepath)

      // Make the log field transient so that objects with Logging can
      // be serialized and used on another machine
      @transient private var log_ : Logger = null

      // Method to get or create the logger for this object
      protected def log: Logger = {
        if (log_ == null) {
          var className = this.getClass.getName
          // Ignore trailing $'s in the class names for Scala objects
          if (className.endsWith("$")) {
            className = className.substring(0, className.length - 1)
          }
          log_ = LoggerFactory.getLogger(className)
        }
        log_
      }
    }

Then call it wherever it is needed:

    Lewa_register.log.info()

This ran without problems. Next, take a look at the log.

The figure below shows some log entries I wrote arbitrarily during the RDD map operation.

The next step is to configure a custom log4j.properties file so that my own log entries are stored separately from Spark's logs.
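As a rough idea of what such a file might look like, here is a sketch assuming the log4j 1.x properties format. The package name com.example.etl, the appender name appFile and the file path are placeholders rather than values from this project; the logger name would need to match the class names passed to LoggerFactory.getLogger.

```properties
# Hypothetical application package: route its loggers to a dedicated file appender
log4j.logger.com.example.etl=INFO, appFile
# Do not also send these events to the root logger's appenders (where Spark's logs go)
log4j.additivity.com.example.etl=false

log4j.appender.appFile=org.apache.log4j.RollingFileAppender
log4j.appender.appFile.File=logs/etl-app.log
log4j.appender.appFile.MaxFileSize=10MB
log4j.appender.appFile.MaxBackupIndex=5
log4j.appender.appFile.layout=org.apache.log4j.PatternLayout
log4j.appender.appFile.layout.ConversionPattern=%d{yyyy-MM-dd HH:mm:ss} %-5p %c{1}: %m%n
```

Setting additivity to false is what keeps the application's entries out of the appenders attached to the root logger.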
