In my other blog posts I mentioned that this computation can be done with matrix calculus, so here is a brief explanation.

First, here is the slide from Prof. Ng's course assignment (exercise3.pdf).

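It is actually a bit of a coincidence. Prof. Ng gives a very neat formula here that writes out the gradient in matrix form in one step, but it does not explain where the formula comes from. Swap in a different activation function and someone like me would be completely lost. So I give a more general proof, even though the final result is the same.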

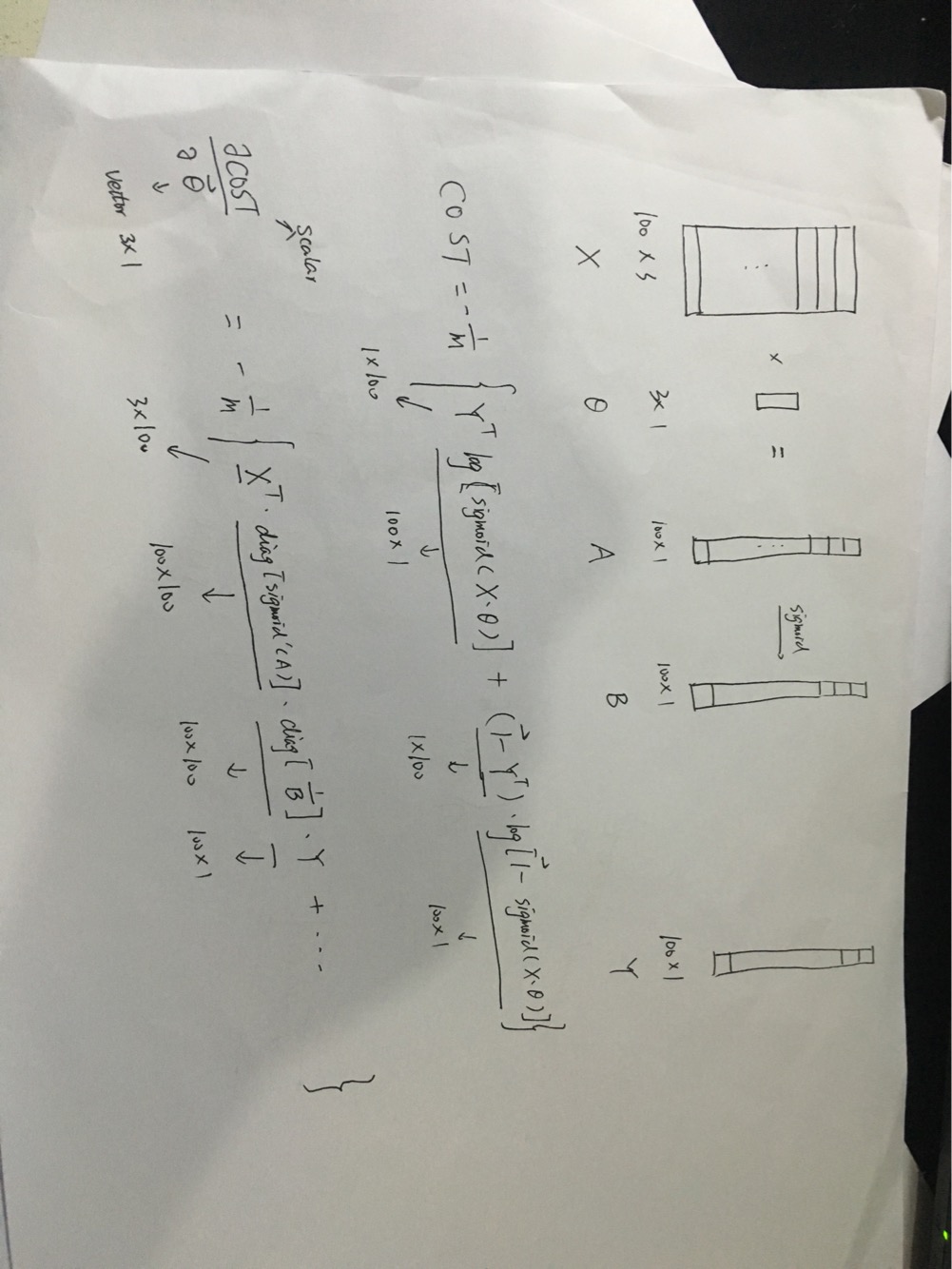

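Now for my conclusion. Suppose we have 100 training examples, each with 3 features. Then:

X is a 100*3 matrix and X*theta is a 100*1 vector (call it A). Applying sigmoid to A elementwise gives B (100*1). The figure that follows proves the rest. The key piece is diag, which only occurred to me in a sudden flash of inspiration at 智华馆.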
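
One way to write out the diag argument is sketched below. It is reconstructed from the MATLAB code in this post rather than copied from the figure, and it assumes an elementwise activation $g$ (sigmoid in this post) and the unregularized cost; the symbols $g$ and $\odot$ (elementwise product) are notation introduced here for illustration.

$$
\begin{aligned}
A &= X\theta, \qquad B = g(A), \qquad
J(\theta) = -\frac{1}{m}\Big[\, y^{\top}\log B + (1-y)^{\top}\log(1-B) \,\Big] \\[4pt]
\nabla_{\theta} J
&= X^{\top}\,\operatorname{diag}\!\big(g'(A)\big)\,\frac{\partial J}{\partial B}
 = -\frac{1}{m}\, X^{\top}\operatorname{diag}\!\big(g'(A)\big)
   \Big[\operatorname{diag}(B)^{-1}\, y - \operatorname{diag}(1-B)^{-1}(1-y)\Big] \\[4pt]
\text{sigmoid: } g'(A) &= B \odot (1-B)
\;\Longrightarrow\;
\nabla_{\theta} J
 = -\frac{1}{m}\, X^{\top}\Big[(1-B)\odot y - B\odot(1-y)\Big]
 = \frac{1}{m}\, X^{\top}(B - y)
\end{aligned}
$$

The second line holds for any differentiable elementwise activation. Only the last line uses the sigmoid identity, and it recovers the one-step formula from the slide. With regularization, the term $\frac{\lambda}{m}\,[0;\ \theta_{2:\text{end}}]$ is added to the gradient, as in the code.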

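Since I need to save my precious time for building practical skills, I have not had time to write a Python library for logistic regression. Below is the MATLAB code I wrote back then.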

    % Copyright: all rights reserved (infringement will not be pursued)
    % typhoonbxq
    % the University of Hong Kong
    % ------------ divider, out of habit ------------
    function [J, grad] = costFunctionReg(theta, X, y, lambda)
    %COSTFUNCTIONREG Compute cost and gradient for logistic regression with regularization
    %   J = COSTFUNCTIONREG(theta, X, y, lambda) computes the cost of using
    %   theta as the parameter for regularized logistic regression and the
    %   gradient of the cost w.r.t. the parameters.

    % Initialize some useful values
    m = length(y); % number of training examples
    n = size(theta,1); % number of parameters of theta

    % You need to return the following variables correctly
    J = 0;
    grad = zeros(size(theta));

    % ====================== YOUR CODE HERE ======================
    % Instructions: Compute the cost of a particular choice of theta.
    %               You should set J to the cost.
    %               Compute the partial derivatives and set grad to the partial
    %               derivatives of the cost w.r.t. each parameter in theta

    sum1 = 0;
    sum2 = 0;

    % Loop version, kept for reference:
    % for i = 1:m
    %     sum1 = sum1 + 1/m*((-y(i)*log(sigmoid(X(i,:)*theta))) - (1-y(i))*log(1-sigmoid(X(i,:)*theta)));
    % end
    % for j = 2:n
    %     sum2 = sum2 + lambda/2/m*(theta(j)^2);
    % end
    % for i = 1:m
    %     grad(1) = grad(1) + 1/m*(sigmoid(X(i,:)*theta) - y(i))*X(i,1);
    % end
    % for i = 2:n
    %     for j = 1:m
    %         grad(i) = grad(i) + 1/m*(sigmoid(X(j,:)*theta) - y(j))*X(j,i);
    %     end
    %     grad(i) = grad(i) + lambda/m*theta(i);
    % end

    % Vectorized cost: cross-entropy term plus L2 penalty (theta(1) is not penalized)
    sum1 = -1/m * ( y' * log(sigmoid(X*theta)) + (1-y')*log(1-sigmoid(X*theta)));
    sum2 = lambda / 2 / m * (theta(2:end)' * theta(2:end));
    J = sum1 + sum2;

    u = X * theta;              % A in the text: m x 1 vector of linear scores
    v_1 = 1./ ( 1 + exp(-u));   % B = sigmoid(A)
    v_2 = 1 - v_1;              % 1 - B

    % Chain rule: diag(exp(-u)./(1+exp(-u)).^2) holds the derivative of sigmoid at
    % each example, and diag(1./v_1), diag(1./v_2) come from differentiating the logs.
    grad = (-X' * diag( exp(-u)./(1 + exp(-u)).^2 )* diag(1./v_1)*y - X' * diag(exp(-u)./(1+exp(-u)).^2)*diag(1./v_2)*(y-1))/m;

    % Regularization gradient; the intercept theta(1) is excluded
    temp = lambda / m * [0;theta(2:end)];
    grad = grad + temp;

    % =============================================================

    end
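
As a rough cross-check (not the Python library mentioned above, and not part of the original assignment), here is a minimal pure-Python sketch comparing the diag-style gradient with the simplified form `X' * (h - y) / m` and with a finite-difference estimate. The function names `cost_function_reg`, `grad_diag_form` and `numerical_grad`, and the toy data, are hypothetical and chosen only for illustration.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def cost_function_reg(theta, X, y, lam):
    """Regularized logistic cost and simplified gradient; theta[0] is not penalized."""
    m = len(y)
    h = [sigmoid(sum(xj * tj for xj, tj in zip(row, theta))) for row in X]
    cost = -sum(yi * math.log(hi) + (1 - yi) * math.log(1 - hi)
                for yi, hi in zip(y, h)) / m
    cost += lam / (2 * m) * sum(t * t for t in theta[1:])
    grad = [sum((hi - yi) * row[j] for hi, yi, row in zip(h, y, X)) / m
            for j in range(len(theta))]
    for j in range(1, len(theta)):
        grad[j] += lam / m * theta[j]
    return cost, grad


def grad_diag_form(theta, X, y, lam):
    """Gradient via the chain rule, mirroring the diag(...) expression term by term."""
    m = len(y)
    grad = [0.0] * len(theta)
    for row, yi in zip(X, y):
        u = sum(xj * tj for xj, tj in zip(row, theta))
        b = sigmoid(u)
        ds = math.exp(-u) / (1.0 + math.exp(-u)) ** 2  # sigmoid'(u), one diag entry
        w = -ds * yi / b - ds * (yi - 1) / (1.0 - b)   # per-example weight
        for j, xj in enumerate(row):
            grad[j] += xj * w / m
    for j in range(1, len(theta)):
        grad[j] += lam / m * theta[j]
    return grad


def numerical_grad(theta, X, y, lam, eps=1e-6):
    """Central finite differences of the cost, used only as a sanity check."""
    grad = []
    for j in range(len(theta)):
        plus, minus = list(theta), list(theta)
        plus[j] += eps
        minus[j] -= eps
        diff = cost_function_reg(plus, X, y, lam)[0] - cost_function_reg(minus, X, y, lam)[0]
        grad.append(diff / (2 * eps))
    return grad


if __name__ == "__main__":
    # Toy data: first column is the intercept term.
    X = [[1.0, 0.5, -1.2], [1.0, -0.3, 0.8], [1.0, 1.5, 0.2], [1.0, -2.0, -0.7]]
    y = [1, 0, 1, 0]
    theta = [0.1, -0.2, 0.3]
    _, simple = cost_function_reg(theta, X, y, 1.0)
    diag_style = grad_diag_form(theta, X, y, 1.0)
    print(max(abs(a - b) for a, b in zip(simple, diag_style)))
```

For sigmoid the two analytic forms should agree up to floating-point rounding. The diag-style version is the one that carries over if `sigmoid` and its derivative are replaced by another activation.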

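Interested readers can download it and take a look.

Just run the ex2_reg.m file; the code pasted above is costFunctionReg.m.

CSDN won't even let me upload the assignment I wrote back then, which is baffling...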
