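The structure of this network is:

input -> conv1 -> relu -> maxpooling -> dropout -> conv2 -> relu -> maxpooling -> dropout -> reshape -> innerproduct -> relu -> dropout -> innerproduct -> softmax_cross_entropy_with_logits

For both pooling and convolution, `strides` gives one stride per dimension of the input tensor.

The convolution kernel has shape h×w×c×n (height × width × input channels × number of output channels).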
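To make these two layout rules concrete, here is a small pure-Python sketch (no TensorFlow needed). The helper names `label_nhwc` and `kernel_weight_count` are made up for illustration, and the NHWC order is assumed because it is the default `data_format` of the ops used in this post.

```python
# Illustration only: these helpers are hypothetical, not part of TensorFlow.
NHWC = ("batch", "height", "width", "channels")

def label_nhwc(values):
    """Pair each entry of a 4-element ksize/strides list with its NHWC dimension."""
    if len(values) != 4:
        raise ValueError("expected one value per NHWC dimension")
    return dict(zip(NHWC, values))

def kernel_weight_count(h, w, c, n):
    """Number of weights in a convolution kernel of shape h x w x c x n."""
    return h * w * c * n

# strides=[1, 2, 2, 1]: step 2 along height and width, step 1 along batch and channels.
pool_strides = label_nhwc([1, 2, 2, 1])
print(pool_strides)

# A 5x5 kernel reading 1 input channel and producing 32 output channels.
print(kernel_weight_count(5, 5, 1, 32))
```

The batch and channel strides are almost always left at 1, which is why only the two middle entries change in the code of this post.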

```python
# -*- coding: utf-8 -*-
"""
Created on Mon Sep 19 09:44:16 2016

@author: yang
"""

import sys

import tensorflow as tf

# Make the MNIST tutorial helper importable from the local TensorFlow checkout.
sys.path.append('/home/yang/tensorflow/tensorflow/examples/tutorials/mnist')
import input_data

mnist = input_data.read_data_sets("/home/yang/data", one_hot=True)

learning_rate = 0.001
training_iters = 100000
batch_size = 128
display_step = 10

n_input = 784   # dimensionality of an MNIST input image (28 * 28)
n_class = 10    # number of classes
dropout = 0.75  # dropout coefficient (fed to keep_prob during training)

x = tf.placeholder(tf.float32, [None, n_input])
y = tf.placeholder(tf.float32, [None, n_class])
keep_prob = tf.placeholder(tf.float32)
```

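```python
def conv2d(img, w, b):
    # Stride-1 convolution with 'SAME' padding, plus bias, followed by ReLU.
    return tf.nn.relu(tf.nn.bias_add(
        tf.nn.conv2d(img, w, strides=[1, 1, 1, 1], padding='SAME'), b))

def max_pool(img, k):
    # k x k max pooling with stride k along height and width.
    return tf.nn.max_pool(img, ksize=[1, k, k, 1], strides=[1, k, k, 1], padding='SAME')
```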
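What `max_pool` does with `k=2` can be sketched on a single 2-D channel in plain Python. This is only an illustration of the idea: the function `max_pool_2d` is hypothetical, works on nested lists rather than tensors, and assumes the height and width are multiples of `k` so that no padding is involved.

```python
def max_pool_2d(image, k):
    """Max pooling over a 2-D list with a k x k window and stride k.

    Assumes len(image) and len(image[0]) are multiples of k (no padding).
    """
    h, w = len(image), len(image[0])
    return [
        [max(image[r + dr][c + dc] for dr in range(k) for dc in range(k))
         for c in range(0, w, k)]
        for r in range(0, h, k)
    ]

image = [
    [1, 3, 2, 0],
    [4, 2, 1, 5],
    [0, 1, 9, 8],
    [2, 6, 7, 3],
]
pooled = max_pool_2d(image, 2)
print(pooled)  # [[4, 5], [6, 9]]
```

Each 2×2 window is replaced by its largest value, so the height and width are halved while the number of channels stays the same.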

```python
# Build the network
def conv_net(_X, _weights, _biases, _dropout):
    # Reshape the flat 784-vectors into 28x28 single-channel images (NHWC).
    _X = tf.reshape(_X, shape=[-1, 28, 28, 1])

    # conv1 -> relu -> max pooling -> dropout
    conv1 = conv2d(_X, _weights['wc1'], _biases['bc1'])
    conv1 = max_pool(conv1, k=2)
    conv1 = tf.nn.dropout(conv1, _dropout)

    # conv2 -> relu -> max pooling -> dropout
    conv2 = conv2d(conv1, _weights['wc2'], _biases['bc2'])
    conv2 = max_pool(conv2, k=2)
    conv2 = tf.nn.dropout(conv2, _dropout)

    # Flatten to match the input size of the first fully connected layer.
    dense1 = tf.reshape(conv2, [-1, _weights['wd1'].get_shape().as_list()[0]])
    dense1 = tf.nn.relu(tf.add(tf.matmul(dense1, _weights['wd1']), _biases['bd1']))
    dense1 = tf.nn.dropout(dense1, _dropout)

    # Output layer: raw logits, no softmax here.
    out = tf.add(tf.matmul(dense1, _weights['out']), _biases['out'])
    return out
```
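The network applies dropout three times, and `tf.nn.dropout` takes a keep probability. As a rough pure-Python sketch of that behaviour (kept elements are scaled by `1 / keep_prob`, dropped elements become 0), with a hypothetical helper `dropout_list` and a fixed seed chosen only to make the run repeatable:

```python
import random

def dropout_list(values, keep_prob, rng):
    """Keep each value with probability keep_prob, scaled by 1/keep_prob; otherwise 0."""
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]

rng = random.Random(0)            # fixed seed, an arbitrary choice for this sketch
activations = [1.0] * 10000

dropped = dropout_list(activations, 0.75, rng)
mean_after = sum(dropped) / len(dropped)
print(mean_after)                 # stays close to 1.0 thanks to the 1/keep_prob scaling

# keep_prob = 1.0 keeps everything, which is what evaluation feeds in.
unchanged = dropout_list(activations, 1.0, rng)
```

The scaling is why the same weights can be used at test time with `keep_prob: 1.` and no further correction.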

```python
weights = {
    'wc1': tf.Variable(tf.random_normal([5, 5, 1, 32])),      # 5x5 conv, 1 input channel, 32 outputs
    'wc2': tf.Variable(tf.random_normal([5, 5, 32, 64])),     # 5x5 conv, 32 input channels, 64 outputs
    'wd1': tf.Variable(tf.random_normal([7*7*64, 1024])),     # fully connected, 7*7*64 inputs, 1024 outputs
    'out': tf.Variable(tf.random_normal([1024, n_class]))     # 1024 inputs, one output per class
}

biases = {
    'bc1': tf.Variable(tf.random_normal([32])),
    'bc2': tf.Variable(tf.random_normal([64])),
    'bd1': tf.Variable(tf.random_normal([1024])),
    'out': tf.Variable(tf.random_normal([n_class]))
}
```
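The `7*7*64` in `wd1` follows from the shapes flowing through the network. The sketch below traces them in plain Python, assuming that `'SAME'` padding gives an output size of `ceil(input / stride)`; the helper names `same_out` and `count` are made up for this illustration. It also adds up the number of trainable parameters.

```python
import math

def same_out(size, stride):
    """Spatial output size under 'SAME' padding: ceil(size / stride)."""
    return int(math.ceil(size / float(stride)))

side = 28                    # MNIST images are 28 x 28 x 1
side = same_out(side, 1)     # conv1, stride 1  -> 28
side = same_out(side, 2)     # max_pool, k = 2  -> 14
side = same_out(side, 1)     # conv2, stride 1  -> 14
side = same_out(side, 2)     # max_pool, k = 2  -> 7
flat = side * side * 64      # conv2 produces 64 channels

def count(shape):
    """Number of elements in a tensor of the given shape."""
    n = 1
    for d in shape:
        n *= d
    return n

shapes = [
    (5, 5, 1, 32), (32,),        # wc1, bc1
    (5, 5, 32, 64), (64,),       # wc2, bc2
    (flat, 1024), (1024,),       # wd1, bd1
    (1024, 10), (10,),           # out weights and biases
]
total_params = sum(count(s) for s in shapes)
print(flat, total_params)        # 3136 3274634
```

Almost all of the parameters sit in `wd1`, the first fully connected layer.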

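```python
pred = conv_net(x, weights, biases, keep_prob)

# Keyword arguments make the (logits, labels) roles explicit.
cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=pred, labels=y))
optimizer = tf.train.AdamOptimizer(learning_rate=learning_rate).minimize(cost)

correct_pred = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1))
accuracy = tf.reduce_mean(tf.cast(correct_pred, tf.float32))

# Later TensorFlow releases replace this with tf.global_variables_initializer().
init = tf.initialize_all_variables()

# NOTE: as a bare statement this call has no effect. tf.device is a context
# manager and only applies to ops created inside `with tf.device(...):`.
tf.device("/gpu:0")
```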
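For readers who want to see what the loss and accuracy nodes compute, here is a minimal pure-Python sketch on a toy batch. It is an illustration under assumptions, not the TensorFlow implementation: the helper names are hypothetical, the logits and labels are invented, and ties in `argmax` are resolved in favour of the first index.

```python
import math

def softmax_cross_entropy(logits, labels):
    """Cross entropy between labels and softmax(logits) for one example."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    log_probs = [z - log_sum for z in logits]
    return -sum(t * lp for t, lp in zip(labels, log_probs))

def argmax(values):
    """Index of the largest value (first index on ties)."""
    return max(range(len(values)), key=lambda i: values[i])

# Toy batch with 3 classes; values invented for illustration.
batch_logits = [[2.0, 0.5, -1.0], [0.0, 0.0, 0.0]]
batch_labels = [[1.0, 0.0, 0.0], [0.0, 0.0, 1.0]]

losses = [softmax_cross_entropy(z, t) for z, t in zip(batch_logits, batch_labels)]
mean_cost = sum(losses) / len(losses)            # counterpart of tf.reduce_mean(...)

hits = [argmax(z) == argmax(t) for z, t in zip(batch_logits, batch_labels)]
batch_accuracy = sum(hits) / float(len(hits))    # fraction of correct predictions
print(mean_cost, batch_accuracy)
```

The second example has uniform logits, so its loss is `log(3)`, the loss of a classifier that has learned nothing about 3 classes.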

with tf.Session() as sess:

sess.run(init)

step = 1

while step*batch_size < training_iters:

batch_xs, batch_ys = mnist.train.next_batch(batch_size)

sess.run(optimizer, feed_dict={x: batch_xs, y: batch_ys, keep_prob: dropout})

if step % display_step == 0:

batch_xs, batch_ys = mnist.test.next_batch(batch_size)

acc = sess.run(accuracy, feed_dict={x: batch_xs, y: batch_ys, keep_prob: 1.})

loss = sess.run(cost, feed_dict={x: batch_xs, y: batch_ys, keep_prob: 1.})

print "Iter " + str(step*batch_size) + ", Minibatch Loss= " + "{:.6f}".format(loss) + ", Training Accuracy= " + "{:.5f}".format(acc)

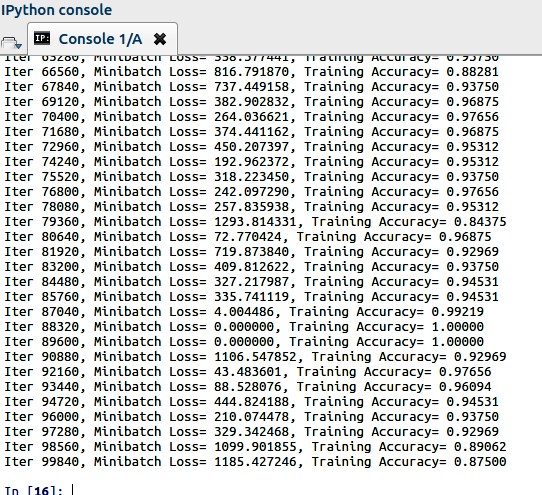

step+=1运行结果
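It can help to know how many updates the loop condition `step*batch_size < training_iters` actually allows. The following sketch replays only the loop bookkeeping, with the hyperparameters from this post and no training; the counters `updates` and `reports` are names introduced here.

```python
training_iters = 100000
batch_size = 128
display_step = 10

step = 1
updates = 0   # how many times the optimizer would run
reports = 0   # how many times a progress line would be printed
while step * batch_size < training_iters:
    updates += 1
    if step % display_step == 0:
        reports += 1
    step += 1

print(updates, reports, updates * batch_size)  # 781 78 99968
```

So the script performs 781 updates on 99,968 examples. Assuming the usual 55,000-image MNIST training split, that is a little under two passes over the data.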

Supplementary notes on TensorFlow syntax:

### tf.nn.max_pool(value, ksize, strides, padding, data_format='NHWC', name=None)

Performs the max pooling on the input.

Args:

- `value`: A 4-D `Tensor` with shape `[batch, height, width, channels]` and type `tf.float32`.
- `ksize`: A list of ints that has length >= 4. The size of the window for each dimension of the input tensor.
- `strides`: A list of ints that has length >= 4. The stride of the sliding window for each dimension of the input tensor.
- `padding`: A string, either `'VALID'` or `'SAME'`. The padding algorithm.
- `data_format`: A string. 'NHWC' and 'NCHW' are supported.
- `name`: Optional name for the operation.

Returns:

A `Tensor` with type `tf.float32`. The max pooled output tensor.
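The `padding` argument decides the output size. A small sketch of the usual size rules along one spatial dimension is given here, under the assumption that `'SAME'` yields `ceil(in / stride)` and `'VALID'` yields `ceil((in - window + 1) / stride)`. The function `pooled_size` is a hypothetical helper, not a TensorFlow call.

```python
import math

def pooled_size(in_size, window, stride, padding):
    """Output length along one spatial dimension for the given padding mode."""
    if padding == 'SAME':
        return int(math.ceil(in_size / float(stride)))
    if padding == 'VALID':
        return int(math.ceil((in_size - window + 1) / float(stride)))
    raise ValueError("padding must be 'SAME' or 'VALID'")

print(pooled_size(28, 2, 2, 'SAME'))    # 14
print(pooled_size(7, 2, 2, 'SAME'))     # 4: the last window is padded
print(pooled_size(7, 2, 2, 'VALID'))    # 3: the incomplete last window is dropped
```

For the even sizes used in this post (28 and 14) both modes give the same result; they differ only when the window does not tile the input exactly.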

### tf.reshape(tensor, shape, name=None) {#reshape}

Reshapes a tensor.

Given `tensor`, this operation returns a tensor that has the same values as `tensor` with shape `shape`.

If one component of `shape` is the special value -1, the size of that dimension is computed so that the total size remains constant. In particular, a `shape` of `[-1]` flattens into 1-D. At most one component of `shape` can be -1.

If `shape` is 1-D or higher, then the operation returns a tensor with shape `shape` filled with the values of `tensor`. In this case, the number of elements implied by `shape` must be the same as the number of elements in `tensor`.

For example:

```
# tensor 't' is [1, 2, 3, 4, 5, 6, 7, 8, 9]
# tensor 't' has shape [9]
reshape(t, [3, 3]) ==> [[1, 2, 3],
                        [4, 5, 6],
                        [7, 8, 9]]

# tensor 't' is [[[1, 1], [2, 2]],
#                [[3, 3], [4, 4]]]
# tensor 't' has shape [2, 2, 2]
reshape(t, [2, 4]) ==> [[1, 1, 2, 2],
                        [3, 3, 4, 4]]

# tensor 't' is [[[1, 1, 1],
#                 [2, 2, 2]],
#                [[3, 3, 3],
#                 [4, 4, 4]],
#                [[5, 5, 5],
#                 [6, 6, 6]]]
# tensor 't' has shape [3, 2, 3]
# pass '[-1]' to flatten 't'
reshape(t, [-1]) ==> [1, 1, 1, 2, 2, 2, 3, 3, 3, 4, 4, 4, 5, 5, 5, 6, 6, 6]

# -1 can also be used to infer the shape

# -1 is inferred to be 9:
reshape(t, [2, -1]) ==> [[1, 1, 1, 2, 2, 2, 3, 3, 3],
                         [4, 4, 4, 5, 5, 5, 6, 6, 6]]
# -1 is inferred to be 2:
reshape(t, [-1, 9]) ==> [[1, 1, 1, 2, 2, 2, 3, 3, 3],
                         [4, 4, 4, 5, 5, 5, 6, 6, 6]]
# -1 is inferred to be 3:
reshape(t, [ 2, -1, 3]) ==> [[[1, 1, 1],
                              [2, 2, 2],
                              [3, 3, 3]],
                             [[4, 4, 4],
                              [5, 5, 5],
                              [6, 6, 6]]]

# tensor 't' is [7]
# shape `[]` reshapes to a scalar
reshape(t, []) ==> 7
```

Args:

- `tensor`: A `Tensor`.
- `shape`: A `Tensor`. Must be one of the following types: `int32`, `int64`. Defines the shape of the output tensor.
- `name`: A name for the operation (optional).

Returns:

A `Tensor`. Has the same type as `tensor`.
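The rule for the special value -1 can be written out as a few lines of plain Python. This is only a sketch of the shape arithmetic described above, not TensorFlow code, and `infer_shape` is a hypothetical helper name.

```python
def infer_shape(num_elements, shape):
    """Resolve a single -1 in `shape` so that the total element count is preserved."""
    shape = list(shape)
    if shape.count(-1) > 1:
        raise ValueError("at most one component of shape can be -1")
    known = 1
    for d in shape:
        if d != -1:
            known *= d
    if -1 in shape:
        if known == 0 or num_elements % known != 0:
            raise ValueError("cannot infer the missing dimension")
        shape[shape.index(-1)] = num_elements // known
    elif known != num_elements:
        raise ValueError("shape does not match the number of elements")
    return shape

# The tensor 't' of shape [3, 2, 3] from the examples has 18 elements.
print(infer_shape(18, [2, -1, 3]))               # [2, 3, 3]
print(infer_shape(18, [-1]))                     # [18]
# The first reshape in conv_net: a batch of 128 flat MNIST images.
print(infer_shape(128 * 784, [-1, 28, 28, 1]))   # [128, 28, 28, 1]
```

This is why `conv_net` can use `-1` for the batch dimension: the batch size is not fixed in the graph and is worked out from the data that is fed in.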
