Original article: http://blog.csdn.net/uq_jin/article/details/52235121

1. Extract Hadoop to any directory

For example: D:\soft\dev\hadoop-2.7.2

2. Set the environment variables

HADOOP_HOME: D:\soft\dev\hadoop-2.7.2

HADOOP_BIN_PATH: %HADOOP_HOME%\bin

HADOOP_PREFIX: %HADOOP_HOME%

Append %HADOOP_HOME%\bin;%HADOOP_HOME%\sbin; to the end of Path.

3. Create a new project

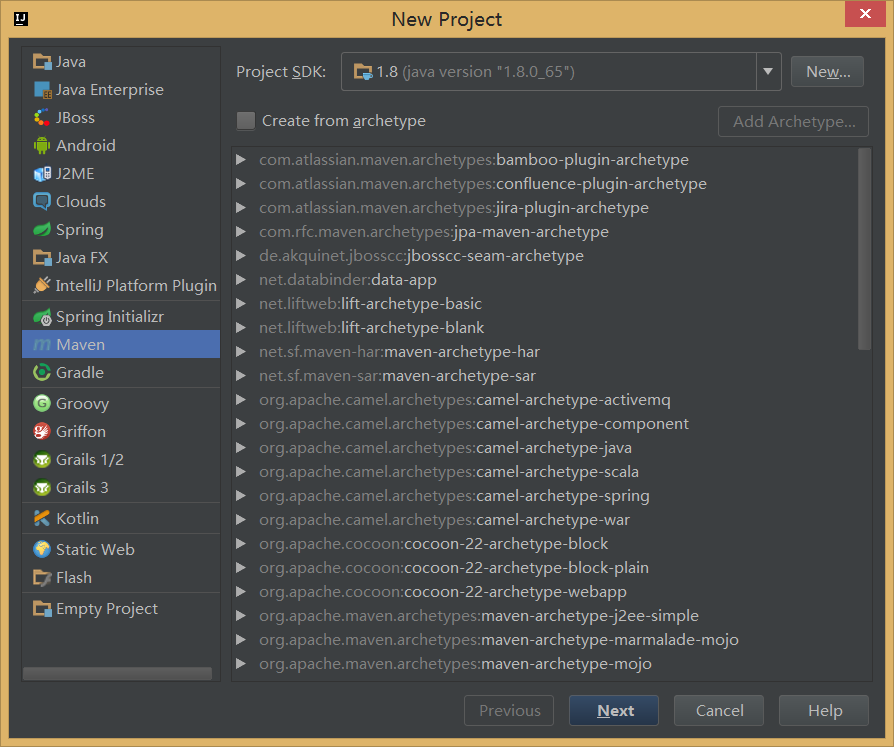

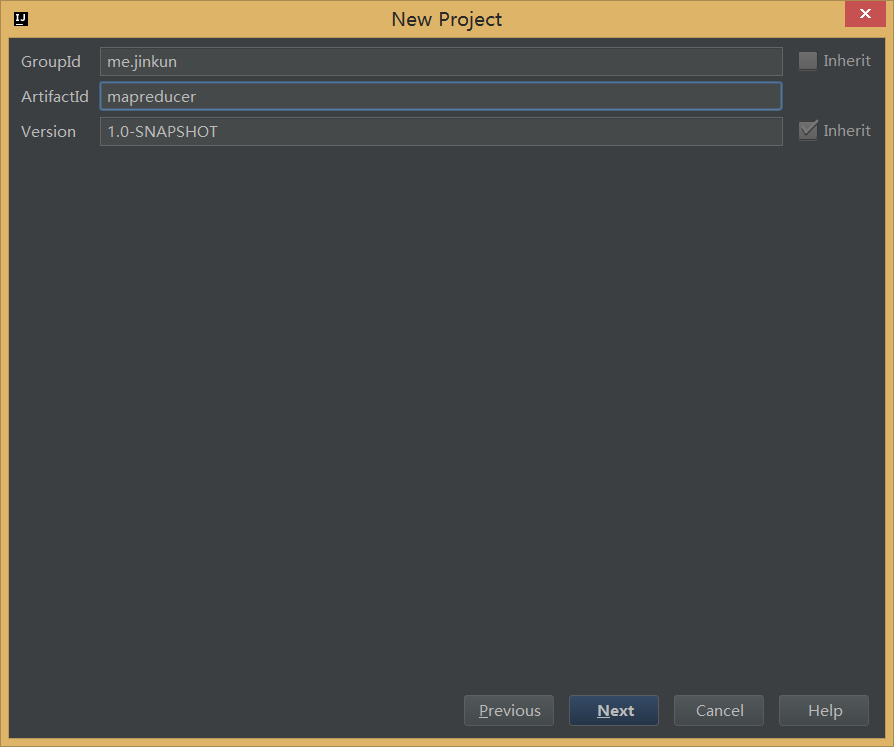

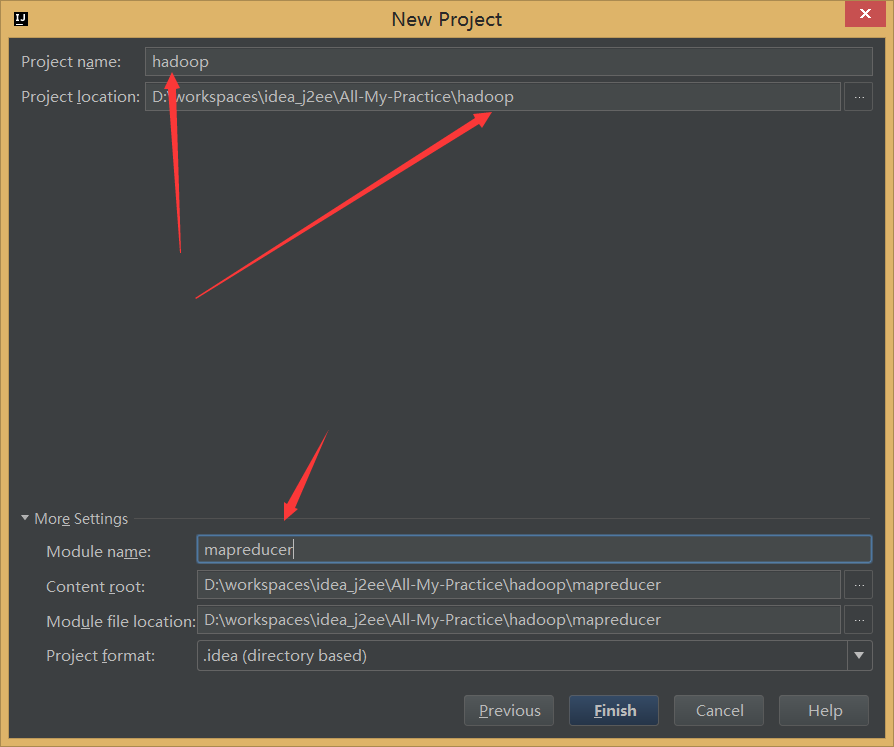

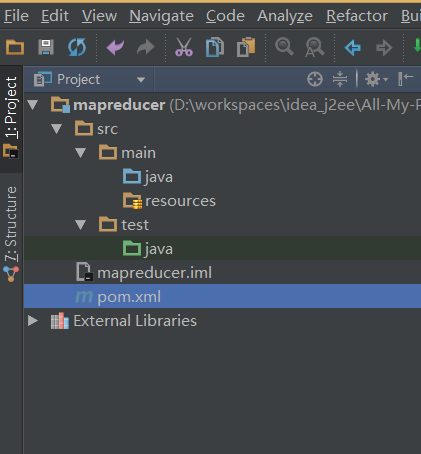

3.1. Create a Maven project

3.2. Add the dependencies

    <dependency>
        <groupId>junit</groupId>
        <artifactId>junit</artifactId>
        <version>4.12</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-client</artifactId>
        <version>2.7.2</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-common</artifactId>
        <version>2.7.2</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-hdfs</artifactId>
        <version>2.7.2</version>
    </dependency>

3.3. Write the WordCount program

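WcMapper.java

    import java.io.IOException;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;
    // Assumed: Hadoop's own StringUtils; commons-lang's StringUtils.split(String, char) also fits
    import org.apache.hadoop.util.StringUtils;

    public class WcMapper extends Mapper<LongWritable, Text, Text, IntWritable> {
        @Override
        protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
            // Split the input line on spaces and emit (word, 1) for every word
            String[] words = StringUtils.split(value.toString(), ' ');
            for (String w : words) {
                context.write(new Text(w), new IntWritable(1));
            }
        }
    }

WcReducer.java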

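    import java.io.IOException;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Reducer;

    public class WcReducer extends Reducer<Text, IntWritable, Text, IntWritable> {
        @Override
        protected void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
            // Sum the counts emitted by the mappers for this word
            int sum = 0;
            for (IntWritable i : values) {
                sum = sum + i.get();
            }
            context.write(key, new IntWritable(sum));
        }
    }

RunJob.java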

public class RunJob {

public static void main(String[] args) throws Exception {

Configuration config = new Configuration();

//设置hdfs的通讯地址

config.set("fs.defaultFS", "hdfs://node1:8020");

//设置RN的主机

config.set("yarn.resourcemanager.hostname", "node1");

try {

FileSystem fs = FileSystem.get(config);

Job job = Job.getInstance(config);

job.setJarByClass(RunJob.class);

job.setJobName("wc");

job.setMapperClass(WcMapper.class);

job.setReducerClass(WcReducer.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(IntWritable.class);

FileInputFormat.addInputPath(job, new Path("/usr/input/wc.txt"));

Path outpath = new Path("/usr/output/wc");

if (fs.exists(outpath)) {

fs.delete(outpath, true);

}

FileOutputFormat.setOutputPath(job, outpath);

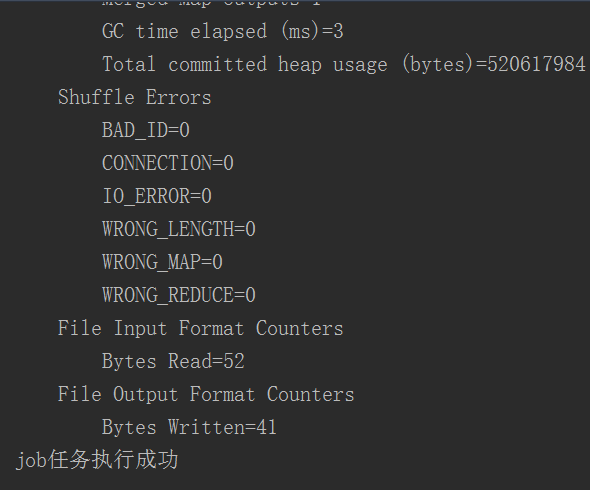

boolean f = job.waitForCompletion(true);

if (f) {

System.out.println("job任务执行成功");

}

} catch (Exception e) {

e.printStackTrace();

}

}

}注意:(改自己的主机)

//设置hdfs的通讯地址

config.set(“fs.defaultFS”, “hdfs://node1:8020”);

//设置RN的主机

config.set(“yarn.resourcemanager.hostname”, “node1”);
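The result the job should produce can be checked without a cluster. The following is a minimal sketch in plain Java that imitates the map step (split on spaces, emit one count per word) and the reduce step (sum the counts per word) of the classes above. The class name LocalWordCount and the sample lines are hypothetical, and no Hadoop API is involved.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java stand-in for the WordCount job; illustration only, not Hadoop code.
class LocalWordCount {

    static Map<String, Integer> count(List<String> lines) {
        // TreeMap keeps the words sorted, similar to the sorted keys a reducer receives.
        Map<String, Integer> totals = new TreeMap<>();
        for (String line : lines) {
            // "Map" step: one (word, 1) pair per space-separated token.
            for (String word : line.split(" ")) {
                if (word.isEmpty()) {
                    continue; // skip empty tokens caused by repeated spaces
                }
                // "Reduce" step: add up the 1s emitted for the same word.
                totals.merge(word, 1, Integer::sum);
            }
        }
        return totals;
    }

    public static void main(String[] args) {
        // Hypothetical sample content for /usr/input/wc.txt
        List<String> lines = Arrays.asList("hello world", "hello hadoop");
        count(lines).forEach((word, n) -> System.out.println(word + "\t" + n));
    }
}
```

For the two sample lines this prints hadoop 1, hello 2 and world 1, one tab-separated pair per line, which is the shape to expect in the part file under /usr/output/wc.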

Logging configuration: log4j.properties

    log4j.appender.console=org.apache.log4j.ConsoleAppender
    log4j.appender.console.Target=System.out
    log4j.appender.console.layout=org.apache.log4j.PatternLayout
    log4j.appender.console.layout.ConversionPattern=%d{ABSOLUTE} %5p %c{1}:%L - %m%n
    log4j.rootLogger=INFO, console

3.4. Run the WordCount program

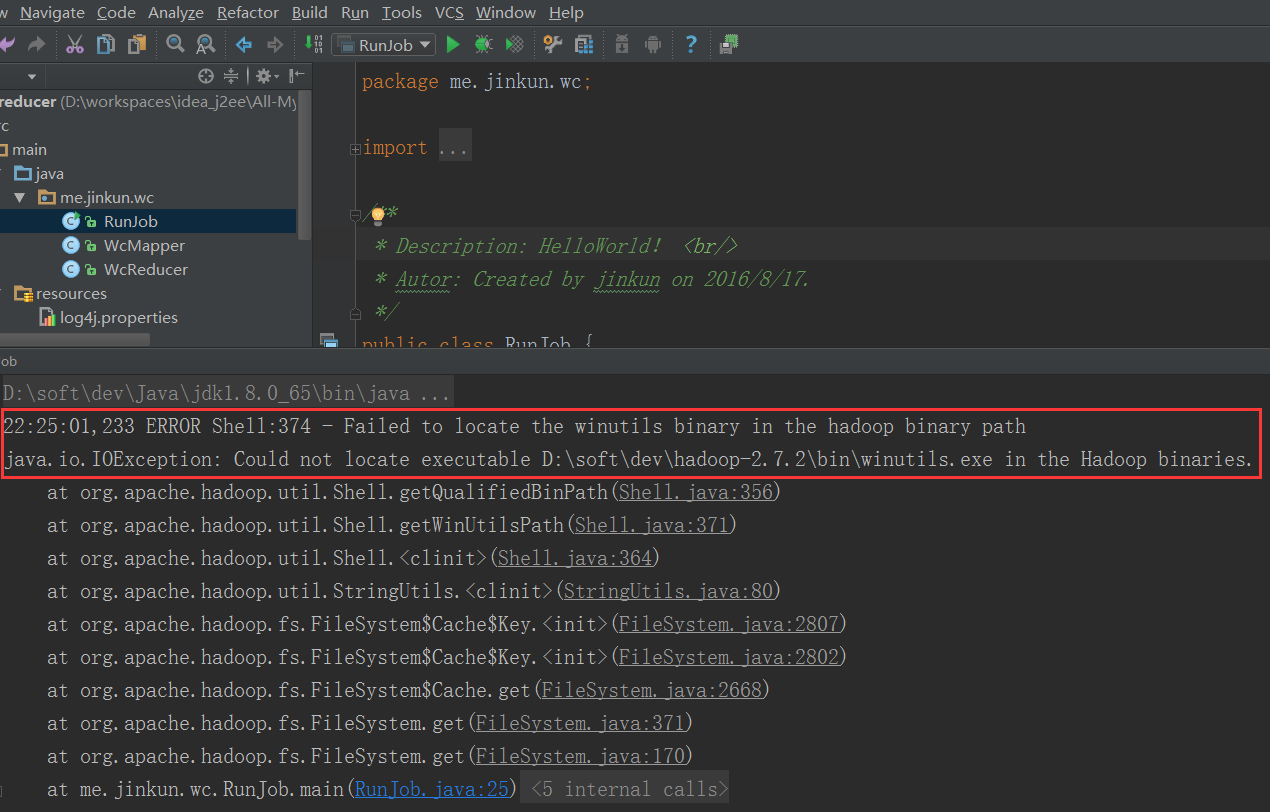

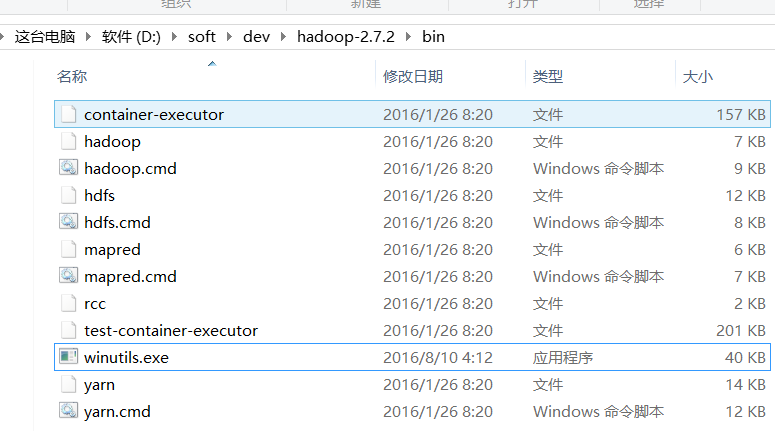

The run fails because winutils.exe is missing. Download it from http://download.csdn.net/detail/u010435203/9606355 and place it in the Hadoop bin directory.
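A small pre-flight check can catch this problem before the job is submitted. The sketch below only inspects the HADOOP_HOME variable from step 2 and looks for bin\winutils.exe under it. The class name HadoopEnvCheck and its messages are hypothetical, and Hadoop itself may also consult other settings, so treat this as a rough aid rather than a definitive test.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical pre-flight check; not part of Hadoop or of the original article.
class HadoopEnvCheck {

    // Returns human-readable problems; an empty list means the check passed.
    static List<String> findProblems(Map<String, String> env) {
        List<String> problems = new ArrayList<>();
        String home = env.get("HADOOP_HOME");
        if (home == null || home.trim().isEmpty()) {
            problems.add("HADOOP_HOME is not set");
            return problems;
        }
        // winutils.exe is expected in the bin directory under HADOOP_HOME.
        Path winutils = Paths.get(home, "bin", "winutils.exe");
        if (!Files.isRegularFile(winutils)) {
            problems.add("missing " + winutils);
        }
        return problems;
    }

    public static void main(String[] args) {
        List<String> problems = findProblems(System.getenv());
        if (problems.isEmpty()) {
            System.out.println("Hadoop environment looks usable");
        } else {
            problems.forEach(p -> System.out.println("Problem: " + p));
        }
    }
}
```

Passing the environment in as a map keeps the check easy to exercise with made-up values instead of the real machine settings.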

Run it again:

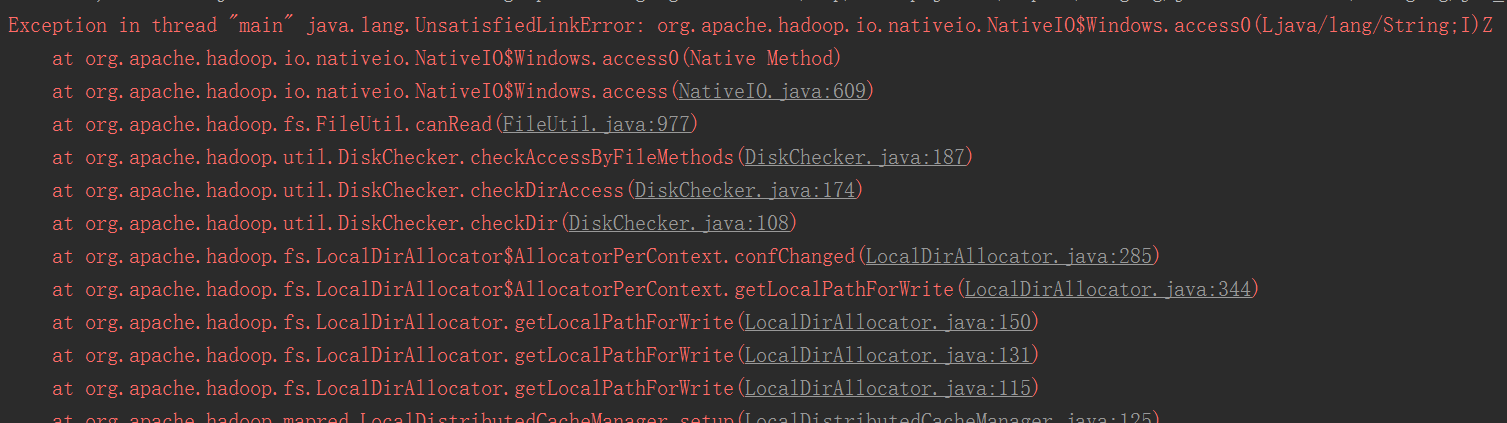

Error: java.lang.UnsatisfiedLinkError: org.apache.hadoop.io.nativeio.NativeIO$Windows.access0(Ljava/lang/String;I)Z

Reference: http://blog.csdn.net/congcong68/article/details/42043093

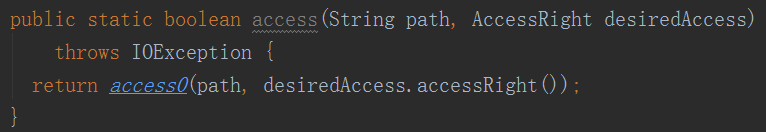

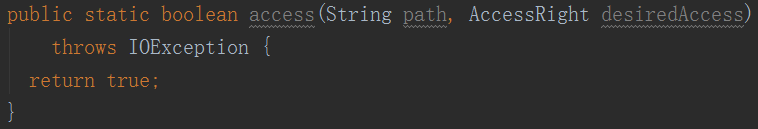

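Fix: modify the source of org.apache.hadoop.io.nativeio.NativeIO as described in the reference above.

Alternatively, download the modified file directly: http://download.csdn.net/detail/u010435203/9606129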

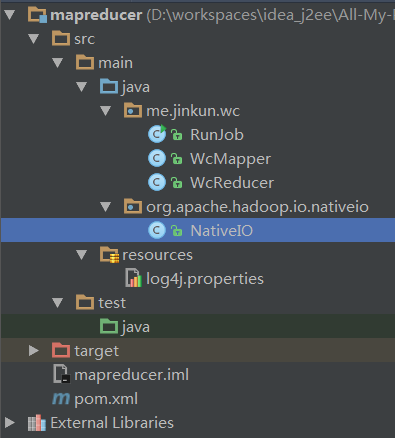

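Copy the file into src so that its directory structure matches the package name (org/apache/hadoop/io/nativeio/NativeIO.java).

3.5. Run the WordCount program again

If a permission exception is reported, edit hdfs-site.xml (in Hadoop 2.x, dfs.permissions is a deprecated alias of dfs.permissions.enabled):

    <property>
        <name>dfs.permissions</name>
        <value>false</value>
    </property>

Then restart HDFS.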
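Disabling permission checks affects every user of the cluster. On clusters that use Hadoop's default simple authentication, a client can usually choose the user it acts as instead, through the HADOOP_USER_NAME environment variable or Java system property. The following is a minimal sketch of that alternative. The class name HdfsUserSetup is hypothetical, "hadoop" is a placeholder for whichever user owns /usr/input and /usr/output, and the approach does not apply to Kerberos-secured clusters.

```java
// Hypothetical helper; "hadoop" below is a placeholder user name, not taken from the article.
class HdfsUserSetup {

    static final String USER_PROPERTY = "HADOOP_USER_NAME";

    // Sets the user name the Hadoop client should act as and returns the stored value.
    // This has to run before the first FileSystem.get(...) or Job.getInstance(...) call.
    static String actAs(String remoteUser) {
        System.setProperty(USER_PROPERTY, remoteUser);
        return System.getProperty(USER_PROPERTY);
    }

    public static void main(String[] args) {
        System.out.println("Submitting as: " + actAs("hadoop"));
    }
}
```

In RunJob this would amount to one System.setProperty call at the top of main, before the Configuration is used.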
