In this article, I am discussing an educational project work in the fascinating field of Data Science while trying to learn the ropes of Data Science. This write-up intends to share the project journey with the larger world and the outcome.

在本文中,我将在尝试学习数据科学的精髓的同时,讨论数据科学引人入胜的领域中的教育项目工作。 本文旨在与更广阔的世界分享项目历程和成果。

Data science is a discipline that is both artistic and scientific simultaneously. A typical project journey in Data Science involves extracting and gathering insightful knowledge from data that can either be structured or unstructured. The entire tour commences with data gathering and ends with exploring the data entirely for deriving business value. The cleansing of the data, selecting the right algorithm to use on the data, and finally devising a machine learning function is the objective in this journey. The machine learning function derived is the outcome of this art that would solve the business problems creatively.

数据科学是一门同时具有艺术性和科学性的学科。 数据科学中的典型项目历程涉及从可以结构化或非结构化的数据中提取和收集有见地的知识。 整个导览从数据收集开始,最后是完全探索数据以获取业务价值。 数据清理,选择对数据使用正确的算法以及最终设计机器学习功能是此过程的目标。 派生的机器学习功能是该技术的成果,它将创造性地解决业务问题。

I will be focussing exclusively on the Data cleansing, imputation, exploration, and visualization of the data. I presume the reader has a basic knowledge of Python or even any equivalent language such as Java or C or Cplusplus to follow the code snippets. The coding was done in Python and executed using Jupyter notebook. I will describe the steps we undertook in this project journey, forming this write-up’s crux.

我将只专注于数据的数据清理,插补,探索和可视化。 我假设读者具有Python甚至是Java,C或Cplusplus之类的任何等效语言的基本知识,可以遵循代码段。 编码是用Python完成的,并使用Jupyter notebook执行。 我将描述我们在此项目旅程中采取的步骤,从而构成本文的重点。

Import Libraries

导入库

We began by importing the libraries that are needed to preprocess, impute, and render the data. The Python libraries that we used are Numpy, random, re, Mat- plotlib, Seaborn, and Pandas. Numpy for everything mathematical, random for random numbers, re for regular expression, Pandas for importing and managing the datasets, Matplotlib.pyplot, and Seaborn for drawing figures. Import the libraries with a shortcut alias as below.

我们首先导入预处理,估算和呈现数据所需的库。 我们使用的Python库是Numpy,random,re,Matplotlib,Seaborn和Pandas。 对于数学上的所有事物都是小块,对于随机数是随机的,对于正则表达式是re,对于导入和管理数据集,Pandas,对于Matplotlib.pyplot,是Seaborn,用于绘制图形。 如下导入带有快捷方式别名的库。

Loading the data set

加载数据集

The dataset provided was e-commerce data to explore. The data set was loaded using Pandas.

提供的数据集是要探索的电子商务数据。 数据集是使用Pandas加载的。

The ‘info’ method gets a summary of the dataset object.

“ info”方法获取数据集对象的摘要。

The shape of the dataset was determined to be 10000 rows and 14 columns. The describe specifies the basic statistics of the dataset.

数据集的形状确定为10000行14列。 describe指定数据集的基本统计信息。

The ‘nunique’ method gives the number of unique elements in the dataset.

“唯一”方法给出了数据集中唯一元素的数量。

Initial exploration of the data set

初步探索数据集

This step involved exploring the various facets of the loaded data. This step helps in understanding the data set columns and also the contents.

此步骤涉及探索已加载数据的各个方面。 此步骤有助于理解数据集列以及内容。

Interpreting and transforming the data set

解释和转换数据集

In a real-world scenario, the data information that one starts with could be either raw or unsuitable for Machine Learning purposes. We will need to transform the incoming data suitably.

在现实世界中,一开始的数据信息可能是原始数据,也可能不适合机器学习目的。 我们将需要适当地转换传入的数据。

We wanted to drop any duplicate rows in the data set using the ‘duplicate’ method. However, as you would note below, the data set we received did not contain any duplicates.

我们想使用“ duplicate”方法删除数据集中的所有重复行。 但是,正如您将在下面指出的那样,我们收到的数据集不包含任何重复项。

Impute the data

估算数据

While looking for invalid values in the data set, we determined that the data set was clean. The question placed on us in the project was to introduce errors at the rate of 10% overall if the data set supplied was clean. So given this ask, we decided to introduced errors into a data set column forcibly. We consciously choose to submit the errors in the

在数据集中寻找无效值时,我们确定该数据集是干净的。 项目中摆在我们面前的问题是,如果提供的数据集是干净的,那么总体上以10%的比率引入错误。 因此,鉴于此问题,我们决定将错误强行引入数据集列。 我们有意识地选择将错误提交给

‘Purchase Price’ column as this has the maximum impact on the dataset outcome. Thus, about 10% of the ‘Purchase Price’ data randomly set with ‘numpy — NaN.’

“购买价格”列,因为这对数据集结果具有最大的影响。 因此,约有10%的“购买价格”数据随机设置为“ numpy-NaN”。

We could have used the Imputer class from the scikit-learn library to fill in missing values with the data (mean, median, most frequent). However, to keep experimenting with hand made code; instead, I wrote a re-usable data frame impute class named ‘DataFrameWithImputor’ that has the following capabilities.

我们本可以使用scikit-learn库中的Imputer类用数据(均值,中位数,最频繁)填充缺失值。 但是,要继续尝试手工代码; 相反,我编写了一个名为“ DataFrameWithImputor”的可重复使用的数据框架归因类,它具有以下功能。

- Be instantiated with a data frame as a parameter in the constructor. 在构造函数中以数据框作为参数实例化。

- Introduce errors to any numeric column of a data frame at a specified error rate. 以指定的错误率将错误引入数据框的任何数字列。

- Introduce errors across the dataframe in any cell of the dataframe at a specified error rate. 在数据框的任何单元中以指定的错误率在数据框内引入错误。

- Impute error values in a column of the data set. 在数据集的列中估算错误值。

- Find empty strings in rows of the data set. 在数据集的行中查找空字符串。

- Get the’ nan’ count in the data set. 获取数据集中的“ nan”计数。

- Possess an ability to describe the entire data set in the imputed object. 具备描述估算对象中整个数据集的能力。

- Have an ability to express any column of the dataset in the impute object. 能够表达归因对象中数据集的任何列。

- Perform forward fill on the entire data set. 对整个数据集执行正向填充。

- Perform backward fill on the entire data set. 向后填充整个数据集。

Shown below is the impute class.

下面显示的是归类。

The un-imputed data set was checked for any Nan or missing strings for one final time before introducing errors.

在引入错误之前,最后一次对未插补的数据集进行了任何Nan或缺失字符串检查。

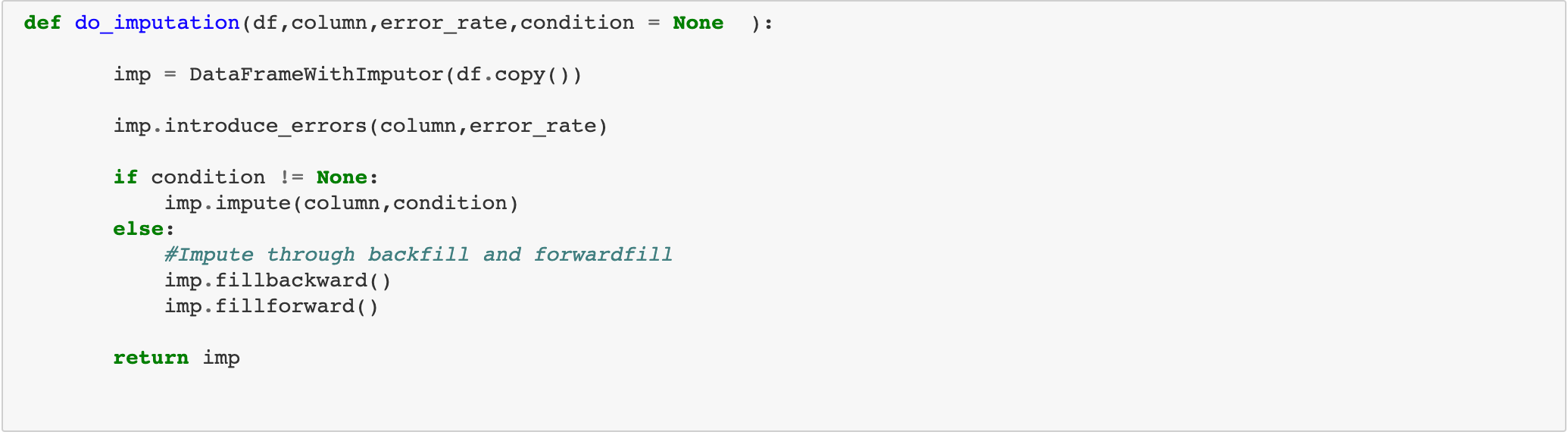

A helper function ‘do_impute’ was defined to introduce errors in a column of the data set and impute the data set column afterwords. This function would take a condition parameter to perform imputation.

定义了一个辅助函数'do_impute ',以在数据集的列中引入错误并插补数据集的列后缀。 此函数将采用条件参数来执行插补。

To introduce error, random cells in the ‘Purchase Price’ column is set to ‘NaN.’ Once set, there are several ways to fill up missing values or ‘NaN’:

为了引入错误,将“购买价格”列中的随机单元格设置为“ NaN”。 设置好之后,可以通过以下几种方法来填充缺失值或“ NaN”:

- We can remove the missing value rows itself from the data set. However, in this case, the error percentage is low at just 10%, so this method was not needed. 我们可以从数据集中删除缺失值行本身。 但是,在这种情况下,错误百分率很低,仅为10%,因此不需要此方法。

- Fill in the null cell in the data set column with a constant value. 用常量值填充数据集列中的空单元格。

- Filling the invalid section with mean and median values 用平均值和中值填充无效部分

- Fill the nulls with a random value. 用一个随机值填充空值。

- Filling null using data frame backfill and forward fill 使用数据框回填和正向填充填充null

The above mentioned are some common strategies applied to impute the data set. However, there are no limits to designing a radically different approach to the data set imputation itself.

上面提到的是一些适用于估算数据集的常用策略。 但是,对数据集估算本身设计根本不同的方法没有任何限制。

Each of the imputed outcomes was studied separately — the fill (backfill and forward fill) and constant value imputations outcome shown below.

每个推算结果都分别进行了研究-填充(回填和正向填充)和恒定值推算结果如下所示。

The median and random value imputations are in the code below.

中值和随机值的估算在下面的代码中。

Then the mean imputed outcome is visually compared with an un-imputed or clean data column as below.

然后,将平均估算结果与未估算或干净数据列进行目视比较,如下所示。

From the above techniques, mean imputation was found closer to the un- imputed clean data, thus preferred. Other choices such as fill(forward and backward) also seemed to produce data set column qualitatively very close to clean data from the study above. However, the mean imputation was preferred as it gives a consistent result and a more widespread impute technique.

通过上述技术,发现平均插值更接近于未插值的干净数据,因此是优选的。 诸如填充(向前和向后)之类的其他选择似乎也定性地产生了与上面研究中的干净数据非常接近的数据集列。 但是,平均插补是可取的,因为它可以提供一致的结果和更广泛的插补技术。

The data frame adopted for further visualization was the mean imputed data set.

进一步可视化所采用的数据框是平均估算数据集。

Exploring and Analysing the data

探索和分析数据

A cleaned up and structured data is suitable for analyzing and finding exemplars using visualization.

清理和结构化的数据适用于使用可视化分析和查找示例。

Find relationship between Job designation and purchase amount?

查找工作指定和购买金额之间的关系?

2. How does purchase value depend on the Internet Browser used and Job (Profession) of the purchaser?

2.购买价值如何取决于所使用的Internet浏览器和购买者的工作(专业)?

3.根据位置(州)和购买时间(上午或下午),购买的模式(如果有)是什么? (3. What are the patterns, if any, on the purchases based on Location (State) and time of purchase (AM or PM)?)

4.购买如何取决于“ CC”提供商和购买时间“ AM或PM”? (4. How does purchase depend on ‘CC’ provider and time of purchase ‘AM or PM’?)

5.购买的前5个位置(州)是什么? (5. What are top 5 Location(State) for purchases?)

We plotted a sub-plot as below.

我们绘制了如下子图。

We can similarly repeat this subplot to view the top 5 credit cards, the top 5 email providers, and the top 5 languages involved in purchases. There are many other visualizations techniques beyond what I have described in this article, with each one capable of giving unique insights into the dataset.

我们可以类似地重复此子图,以查看购物涉及的前5名信用卡,前5名电子邮件提供商和前5种语言。 除了我在本文中介绍的内容外,还有许多其他可视化技术,每种技术都可以对数据集提供独特的见解。

Acknowledgment

致谢

I acknowledge my fellow project collaborators below, without whose contribution this project would not have been so exciting.

我感谢以下我的项目合作伙伴,没有他们的贡献,这个项目就不会那么令人兴奋。

The Team

团队

Rajesh Ramachander: linkedin.com/in/rramachander/

拉杰什(Rajesh Ramachander): linkedin.com/in/rramachander/

Ranjith Gnana Suthakar Alphonse Raj: linkedin.com/in/ranjith-alphonseraj-21666323/

Ranjith Gnana Suthakar Alphonse Raj: linkedin.com/in/ranjith-alphonseraj-21666323/

Yashaswi Gurumurthy: linkedin.com/mwlite/in/yashaswi-gurumurthy-020521113

Yashaswi Gurumurthy:l inkedin.com/mwlite/in/yashaswi-gurumurthy-020521113

Praveen Manohar G: linkedin.com/in/praveen-manohar-g-9006a232

Praveen Manohar G: linkedin.com/in/praveen-manohar-g-9006a232

Rahul A linkedin.com/in/rahulayyappan

Closing Words

结束语

The Github link to the codebase is https://github.com/RajeshRamachander/ecom/blob/master/ecom_eda.ipynb.

Github到代码库的链接是https://github.com/RajeshRamachander/ecom/blob/master/ecom_eda.ipynb 。

We had fun and many learnings while doing some of these fundamental steps required to work through a large data set, clean, impute, and visualize the data for further work. We finished the project here, and of course, the real journey does not end here as it will progress into modeling, training, and testing phases. For us, this is only the beginning of a long trip. Every data science project that has a better and cleaner data will generate awe-inspiring results!

我们在完成一些通过大型数据集进行工作,清理,估算和可视化数据以进行进一步工作所需的一些基本步骤时,从中获得了很多乐趣和学习。 我们在这里完成了项目,当然,真正的旅程不会在这里结束,因为它将进入建模,培训和测试阶段。 对我们来说,这只是漫长旅程的开始。 每个拥有更好,更干净数据的数据科学项目都将产生令人敬畏的结果!

4466

4466

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?