Most Spark programming material is currently written in Scala, but I need to implement things in Python, so I took Scala examples found online and rewrote them in Python.

1. Cluster test example

The code is as follows:

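from pyspark.sql import SparkSession

if __name__ == "__main__":
    # Executor memory has to be set before the session is created;
    # calling spark.conf.set() afterwards does not resize the executors.
    spark = SparkSession\
        .builder\
        .appName("PythonWordCount")\
        .master("spark://mini1:7077")\
        .config("spark.executor.memory", "500m")\
        .getOrCreate()
    sc = spark.sparkContext
    a = sc.parallelize([1, 2, 3])
    # flatMap flattens each (x, x ** 2) tuple into the resulting RDD
    b = a.flatMap(lambda x: (x, x ** 2))
    print(a.collect())
    print(b.collect())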


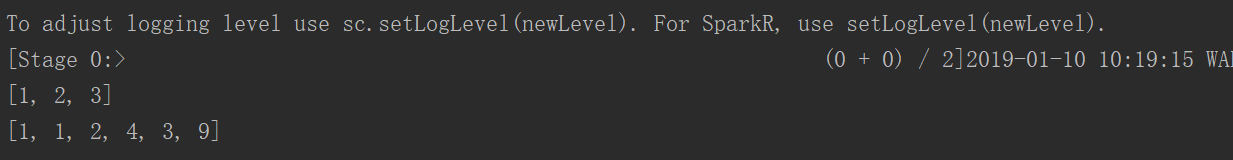

Output:
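To make the effect of `flatMap` in the example concrete, here is a minimal sketch in plain Python with no Spark involved. The helper `flat_map` is a hypothetical name introduced only for illustration. It mimics what `flatMap` does to the elements: apply the function to each one, then flatten the returned tuples into a single sequence.

```python
from itertools import chain


def flat_map(func, items):
    """Apply func to each item and flatten the returned iterables into one list."""
    return list(chain.from_iterable(func(x) for x in items))


a = [1, 2, 3]
b = flat_map(lambda x: (x, x ** 2), a)

print(a)  # [1, 2, 3]
print(b)  # [1, 1, 2, 4, 3, 9]
```

Each input element contributes both itself and its square, so three inputs yield six outputs. A plain `map` with the same lambda would instead keep three tuples.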

2. Reading from a file

To make debugging easier, this example is tested in local mode.

from pyspark.sql import SparkSession

def formatData(arr):
    # arr is one line of the file, already split on ","
    mb = (arr[0], arr[2])
    flag = arr[3]
    # Python 3's built-in int is arbitrary precision, so py4j.compat.long is not needed
    time = int(arr[1])
    # Negate the time when the flag is "1"
    if flag == "1":
        time = -time
    return (mb, time)

if __name__ == "__main__":
    spark = SparkSession\
        .builder\
        .appName("PythonWordCount")\
        .master("local")\
        .getOrCreate()
    sc = spark.sparkContext
    line = sc.textFile("D:\\code\\hadoop\\data\\spark\\day1\\bs_log").map(lambda x: x.split(','))
    count = line.map(lambda x: formatData(x))
    # Sum the signed times for each (arr[0], arr[2]) key
    rdd0 = count.reduceByKey(lambda agg, obj: agg + obj)
    # print(count.collect())
    line2 = sc.textFile("D:\\code\
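The `formatData` plus `reduceByKey` step above can be sketched in plain Python to show what the per-key aggregation computes. This is only an illustration under assumptions. The sample lines are made up, and the only thing taken from the code above is that each line has four comma-separated fields, with field 1 an integer and field 3 a flag. `reduce_by_key` is a hypothetical helper that imitates the semantics of `reduceByKey` on a local list.

```python
def format_data(arr):
    """Same logic as formatData above: key on fields 0 and 2, sign the time by the flag."""
    key = (arr[0], arr[2])
    time = int(arr[1])
    if arr[3] == "1":
        time = -time
    return (key, time)


def reduce_by_key(pairs, func):
    """Local stand-in for reduceByKey: merge the values of equal keys with func."""
    result = {}
    for key, value in pairs:
        result[key] = func(result[key], value) if key in result else value
    return result


# Hypothetical input lines (not from the original bs_log file)
lines = [
    "u1,100,s1,1",
    "u1,160,s1,0",
    "u2,200,s1,1",
    "u2,230,s1,0",
    "u1,300,s2,1",
]

pairs = [format_data(line.split(",")) for line in lines]
totals = reduce_by_key(pairs, lambda agg, obj: agg + obj)
print(totals)
```

With these sample lines, the key `("u1", "s1")` sums to `-100 + 160 = 60`. Negating the time on flag `"1"` therefore turns the per-key sum into the difference between the flag-other and flag-`"1"` times.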
