Reading the Caffe Source Code

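Structure

There are two main directories:

src: the source implementation

include: the header files

Layout of the src directory: the main code lives in the caffe directory, which contains net.cpp, solver.cpp, blob.cpp, layer.cpp, common.cpp, and so on. The layers directory holds the individual layer implementations and is the core of Caffe. The proto directory contains a single file, caffe.proto, which uses the protobuf language to describe the member variables of each object. The solvers directory provides the different optimizers (sgd, adam, rmsprop, adagrad), the test directory contains unit tests, and util holds common utility functions: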

├── caffe

│ ├── layers

│ ├── proto

│ ├── solvers

│ ├── test

│ │ └── test_data

│ └── util

└── gtest

Start with the .cpp files directly under the caffe directory:

blob.cpp

common.cpp

data_transformer.cpp

internal_thread.cpp

layer.cpp

layer_factory.cpp

net.cpp

parallel.cpp

solver.cpp

syncedmem.cpp

blob.cpp implements Blob, the main data container passed around inside Caffe.

common.cpp

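Starting from tools

The tools directory in the repository root is used to build the caffe executable. It exposes a set of command-line flags, and Caffe is driven by setting those flags.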

caffe.cpp

compute_image_mean.cpp

convert_imageset.cpp

device_query.cpp

extract_features.cpp

finetune_net.cpp

net_speed_benchmark.cpp

test_net.cpp

train_net.cpp

upgrade_net_proto_binary.cpp

upgrade_net_proto_text.cpp

upgrade_solver_proto_text.cpp

caffe.cpp registers several functions in g_brew_map: train, test, time, and device_query.

Start with the train function, which parses the solver configuration into a solver_param object:

caffe::SolverParameter solver_param;

caffe::ReadSolverParamsFromTextFileOrDie(FLAGS_solver, &solver_param);

SolverRegistry::CreateSolver then creates a solver object, which has a member variable shared_ptr<Net<Dtype> > net_:

shared_ptr<caffe::Solver<float> >

solver(caffe::SolverRegistry<float>::CreateSolver(solver_param));

The Net object is the body of the whole network. So what does a Net actually contain? The three most important members are layers_, params_, and blobs_:

template <typename Dtype>

class Net {

private:

vector<shared_ptr<Layer<Dtype> > > layers_;

vector<shared_ptr<Blob<Dtype> > > params_;

vector<shared_ptr<Blob<Dtype> > > blobs_;

};

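layers_ holds the basic building blocks of the network. params_ holds each layer's filter parameters; it shares data with the blobs_ member of each layer, that is, params_ stores pointers to the layers' blobs_. blobs_ holds the intermediate data between layers.

The Net constructor takes a NetParameter and simply calls Init: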

template <typename Dtype>

Net<Dtype>::Net(const NetParameter& param) {

Init(param);

}

NetParameter is defined in caffe.proto as follows:

message NetParameter {

optional string name = 1;

repeated string input = 3;

repeated BlobShape input_shape = 8;

repeated int32 input_dim = 4;

optional bool force_backward = 5 [default = false];

optional NetState state = 6;

repeated LayerParameter layer = 100; // ID 100 so layers are printed last.

}

message LayerParameter {

optional string name = 1; // the layer name

optional string type = 2; // the layer type

repeated string bottom = 3; // the name of each bottom blob

repeated string top = 4; // the name of each top blob

// The blobs containing the numeric parameters of the layer.

repeated BlobProto blobs = 7;

optional TransformationParameter transform_param = 100;

}

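The core of NetParameter is LayerParameter, and the core of LayerParameter (the definition above is simplified) is the bottom names, the top names, and the parameter blobs.

This NetParameter is read and initialized through protobuf from files such as train.prototxt and vgg.caffemodel, and is then used to construct the Net object. Once the Net exists, the whole network has been assembled.

After that, solver->Solve() starts training. Solve() calls Step(), which performs the iterations. Step() contains a loop in which each pass is one iteration; each iteration runs iter_size forward/backward passes (ForwardBackward()), averages the loss over them, and then lets the optimizer update the parameters.

The iter_size parameter works around GPU memory that is too small for a large batch size. The number of samples that contribute to each parameter update is iter_size * batch_size, so the result is equivalent to using the larger batch size. For example, if a network trains well with batch_size = 128 but GPU memory only fits 32 images, set batch_size to 32 and iter_size to 4; the effect is the same as with batch_size = 128.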

while (iter_ < stop_iter) {

// ...

Dtype loss = 0;

for (int i = 0; i < param_.iter_size(); ++i) {

loss += net_->ForwardBackward();

}

loss /= param_.iter_size();

// average the loss across iterations for smoothed reporting

UpdateSmoothedLoss(loss, start_iter, average_loss);

// ...

ApplyUpdate();

// ...

}
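
The equivalence between iter_size small batches and one large batch can be checked on a toy problem. The following is a minimal sketch, not Caffe code: it assumes a scalar weight w, a per-sample loss of 0.5 * (w * x - y)^2, equally sized mini-batches, and an accumulated gradient that is divided by iter_size before the update. MeanGradient and AccumulatedGradient are hypothetical names introduced only for this illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Mean gradient of the toy loss 0.5 * (w * x - y)^2 over samples [begin, end).
double MeanGradient(double w, const std::vector<double>& x,
                    const std::vector<double>& y,
                    std::size_t begin, std::size_t end) {
  assert(begin < end && end <= x.size() && x.size() == y.size());
  double grad = 0.0;
  for (std::size_t i = begin; i < end; ++i) {
    grad += (w * x[i] - y[i]) * x[i];
  }
  return grad / static_cast<double>(end - begin);
}

// Accumulates the gradient over iter_size equally sized mini-batches and
// averages it, mimicking the inner loop of the training step.
double AccumulatedGradient(double w, const std::vector<double>& x,
                           const std::vector<double>& y,
                           std::size_t batch_size, std::size_t iter_size) {
  assert(batch_size * iter_size == x.size());
  double grad = 0.0;
  for (std::size_t i = 0; i < iter_size; ++i) {
    grad += MeanGradient(w, x, y, i * batch_size, (i + 1) * batch_size);
  }
  return grad / static_cast<double>(iter_size);
}
```

With 128 samples, AccumulatedGradient(w, x, y, 32, 4) and MeanGradient(w, x, y, 0, 128) agree up to floating-point rounding, which is the property the iter_size trick relies on.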

ForwardBackward() is implemented as follows. It runs Forward and then Backward, and records the loss during the forward pass:

Dtype ForwardBackward() {

Dtype loss;

Forward(&loss);

Backward();

return loss;

}

Forward(&loss) in turn calls ForwardFromTo(0, layers_.size() - 1):

template <typename Dtype>

const vector<Blob<Dtype>*>& Net<Dtype>::Forward(Dtype* loss) {

if (loss != NULL) {

*loss = ForwardFromTo(0, layers_.size() - 1);

} else {

ForwardFromTo(0, layers_.size() - 1);

}

return net_output_blobs_;

}

ForwardFromTo(0, layers_.size() - 1) walks through the layers and calls Forward() on each one. bottom_vecs_ and top_vecs_ have type vector<vector<Blob<Dtype>*> >, and each layer receives a vector<Blob<Dtype>*>, because a layer may have several inputs or outputs:

template <typename Dtype>

Dtype Net<Dtype>::ForwardFromTo(int start, int end) {

Dtype loss = 0;

for (int i = start; i <= end; ++i) {

Dtype layer_loss = layers_[i]->Forward(bottom_vecs_[i], top_vecs_[i]);

loss += layer_loss;

}

return loss;

}

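So the solver and the net described above exist to serve the layers; the core functionality is implemented in the layers. Next we work toward the Forward implementation of the convolution layer (conv_layer.cpp).

LayerFactory: the factory pattern

To understand layers well, we first read the objects related to them. The base class of every layer is Layer. Because the implementations are class templates, the compiler does not instantiate a template that is never used statically. Layers, however, are created dynamically from the configuration file train.prototxt, so the required class would not be found. To avoid this, each class definition is followed by an explicit instantiation, which guarantees that the class exists when it is needed. This is done with a macro: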

INSTANTIATE_CLASS(ConvolutionLayer);

The macro is defined as follows:

#define INSTANTIATE_CLASS(classname) \

char gInstantiationGuard##classname; \

template class classname<float>; \

template class classname<double>

In effect it explicitly instantiates ConvolutionLayer<float> and ConvolutionLayer<double>:

char gInstantiationGuardConvolutionLayer;

template class ConvolutionLayer<float>;

template class ConvolutionLayer<double>;

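In addition, because there are so many layers, Caffe implements a factory (layer_factory.cpp) to manage them in one place. Every Layer is registered in a map whose key is the layer type name and whose value is the function that creates that Layer, so the matching Layer object can be instantiated from its type at run time. Two macros are provided: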

#define REGISTER_LAYER_CREATOR(type, creator) \

static LayerRegisterer<float> g_creator_f_##type(#type, creator<float>); \

static LayerRegisterer<double> g_creator_d_##type(#type, creator<double>) \

#define REGISTER_LAYER_CLASS(type) \

template <typename Dtype> \

shared_ptr<Layer<Dtype> > Creator_##type##Layer(const LayerParameter& param) \

{ \

return shared_ptr<Layer<Dtype> >(new type##Layer<Dtype>(param)); \

} \

REGISTER_LAYER_CREATOR(type, Creator_##type##Layer)

Look at the first macro. It takes two arguments: the type (Convolution) and the creator function. For example, layer_factory.cpp contains the following code (simplified):

template <typename Dtype>

shared_ptr<Layer<Dtype> > GetConvolutionLayer(const LayerParameter& param) {

// simplified...

return shared_ptr<Layer<Dtype> >(new ConvolutionLayer<Dtype>(param));

// simplified...

}

REGISTER_LAYER_CREATOR(Convolution, GetConvolutionLayer);

The macro then expands to:

static LayerRegisterer<float> g_creator_f_Convolution("Convolution", GetConvolutionLayer<float>);

static LayerRegisterer<double> g_creator_d_Convolution("Convolution", GetConvolutionLayer<double>);

So let us see what the LayerRegisterer class does. Its constructor makes a single call:

LayerRegistry<Dtype>::AddCreator(type, creator);

It calls the static function LayerRegistry<Dtype>::AddCreator. Looking further:

class LayerRegistry {

public:

static CreatorRegistry& Registry() {

static CreatorRegistry* g_registry_ = new map<string, Creator>();

return *g_registry_;

}

static void AddCreator(const string& type, Creator creator) {

CreatorRegistry& registry = Registry();

CHECK_EQ(registry.count(type), 0) << "Layer type " << type << " already registered.";

registry[type] = creator;

}

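};

As you can see, it maintains a singleton map object, g_registry_, which stores each type together with its creator function.

For the second macro, a call such as REGISTER_LAYER_CLASS(Convolution) expands to the following: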

template <typename Dtype>

shared_ptr<Layer<Dtype> > Creator_ConvolutionLayer(const LayerParameter& param)

{

return shared_ptr<Layer<Dtype> >(new ConvolutionLayer<Dtype>(param));

}

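REGISTER_LAYER_CREATOR(Convolution, Creator_ConvolutionLayer)

That is, the class needs no special construction and simply uses this default creator (Creator_ConvolutionLayer). Some layers, Convolution among them, need extra handling and therefore have a hand-written creator (GetConvolutionLayer); most layers can be created with the default function.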
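
The registration mechanism can be reduced to a few dozen lines. The sketch below is an illustration under simplifying assumptions, not Caffe's code: creators take no LayerParameter, there is a single element type rather than float and double, and the map is a function-local static object instead of a heap pointer. All names (ToyLayer, ToyRegistry, ToyRegisterer, REGISTER_TOY_LAYER) are hypothetical.

```cpp
#include <cassert>
#include <map>
#include <memory>
#include <string>

// Hypothetical stand-ins for Layer and two of its subclasses.
struct ToyLayer {
  virtual ~ToyLayer() {}
  virtual std::string type() const = 0;
};
struct ToyConvLayer : ToyLayer {
  std::string type() const override { return "Convolution"; }
};
struct ToyReluLayer : ToyLayer {
  std::string type() const override { return "ReLU"; }
};

class ToyRegistry {
 public:
  typedef std::shared_ptr<ToyLayer> (*Creator)();

  // Function-local static: constructed on first use, so registrations made
  // from other static initializers always find a valid map.
  static std::map<std::string, Creator>& Registry() {
    static std::map<std::string, Creator> registry;
    return registry;
  }
  static void AddCreator(const std::string& type, Creator creator) {
    assert(Registry().count(type) == 0);  // each type may register only once
    Registry()[type] = creator;
  }
  // Returns an empty pointer when the type was never registered.
  static std::shared_ptr<ToyLayer> Create(const std::string& type) {
    std::map<std::string, Creator>::const_iterator it = Registry().find(type);
    return it == Registry().end() ? std::shared_ptr<ToyLayer>() : it->second();
  }
};

// Constructing a static registerer object runs AddCreator before main().
struct ToyRegisterer {
  ToyRegisterer(const std::string& type, ToyRegistry::Creator creator) {
    ToyRegistry::AddCreator(type, creator);
  }
};

// Default creator, playing the role of Creator_##type##Layer.
template <typename T>
std::shared_ptr<ToyLayer> MakeLayer() { return std::make_shared<T>(); }

#define REGISTER_TOY_LAYER(name, cls) \
  static ToyRegisterer g_toy_creator_##name(#name, MakeLayer<cls>)

REGISTER_TOY_LAYER(Convolution, ToyConvLayer);
REGISTER_TOY_LAYER(ReLU, ToyReluLayer);
```

After static initialization, ToyRegistry::Create("Convolution") returns a ToyConvLayer even though no code names that class at the call site, which is the property a prototxt-driven network builder needs.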

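Data: Blob

Blob is the basic object for storing and manipulating data in Caffe. It also synchronizes data between CPU and GPU.

The underlying storage of a Blob is an array, laid out in row-major order.

A Blob mainly stores two things, data_ and diff_, which are the data and the gradient respectively.

A blob is a four-dimensional array. From the outermost to the innermost dimension these are (num_, channels_, height_, width_); for image data that means the number of images, the number of color channels, the height, and the width. For example, 10 three-channel color images of size 512*256 (width * height) give (10, 3, 256, 512):

template <typename Dtype>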

class Blob {

public:

inline int num() const { return LegacyShape(0); }

inline int channels() const { return LegacyShape(1); }

inline int height() const { return LegacyShape(2); }

inline int width() const { return LegacyShape(3); }

inline const shared_ptr<SyncedMemory>& data() const {

return data_;

}

inline const shared_ptr<SyncedMemory>& diff() const {

return diff_;

}

void Update() {

caffe_axpy<Dtype>(count_, Dtype(-1), static_cast<const Dtype*>(diff_->cpu_data()), static_cast<Dtype*>(data_->mutable_cpu_data()));

} // parameter update: subtracts the computed diff_ from data_

void FromProto(const BlobProto& proto, bool reshape = true); // deserialize: load a previously stored blob from disk

void ToProto(BlobProto* proto, bool write_diff = false) const; // serialize the data so it can be stored

protected:

shared_ptr<SyncedMemory> data_;

shared_ptr<SyncedMemory> diff_;

shared_ptr<SyncedMemory> shape_data_;

vector<int> shape_;

int count_;

int capacity_;

DISABLE_COPY_AND_ASSIGN(Blob);

}; // class Blob
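
Row-major storage fixes how a four-dimensional index maps to a position in the flat array: the width index varies fastest and the image index slowest. A minimal sketch of that mapping follows; BlobOffset is a hypothetical free function written for this illustration, not a member of Blob.

```cpp
#include <cassert>

// Linear index of element (n, c, h, w) in a row-major array of shape
// (num, channels, height, width). The num dimension is not needed because
// it is the outermost one.
int BlobOffset(int n, int c, int h, int w,
               int channels, int height, int width) {
  assert(n >= 0 && c >= 0 && c < channels);
  assert(h >= 0 && h < height && w >= 0 && w < width);
  return ((n * channels + c) * height + h) * width + w;
}
```

For the (10, 3, 256, 512) example, stepping one pixel to the right moves the offset by 1, one row down by 512, one channel by 256 * 512, and one image by 3 * 256 * 512.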

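Back to Layer

Consider the Forward method of the Layer base class. Note that it is not a virtual method, which means subclasses are not expected to change it; every Layer can be assumed to use this same Forward function. Let us look at its steps: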

template <typename Dtype>

class Layer {

public:

explicit Layer(const LayerParameter& param) : layer_param_(param) {

phase_ = param.phase();

if (layer_param_.blobs_size() > 0) {

blobs_.resize(layer_param_.blobs_size());

for (int i = 0; i < layer_param_.blobs_size(); ++i) {

blobs_[i].reset(new Blob<Dtype>());

blobs_[i]->FromProto(layer_param_.blobs(i));

}

}

}

virtual ~Layer() {}

void SetUp(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top) {

CheckBlobCounts(bottom, top);

LayerSetUp(bottom, top);

Reshape(bottom, top);

SetLossWeights(top);

}

/**

* @brief Does layer-specific setup: your layer should implement this function

* as well as Reshape.

*

* @param bottom

* the preshaped input blobs, whose data fields store the input data for

* this layer

* @param top

* the allocated but unshaped output blobs

*

* This method should do one-time layer specific setup. This includes reading

* and processing relevent parameters from the <code>layer_param_</code>.

* Setting up the shapes of top blobs and internal buffers should be done in

* <code>Reshape</code>, which will be called before the forward pass to

* adjust the top blob sizes.

*/

virtual void LayerSetUp(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top) {}

/**

* @brief Adjust the shapes of top blobs and internal buffers to accommodate

* the shapes of the bottom blobs.

*

* @param bottom the input blobs, with the requested input shapes

* @param top the top blobs, which should be reshaped as needed

*

* This method should reshape top blobs as needed according to the shapes

* of the bottom (input) blobs, as well as reshaping any internal buffers

* and making any other necessary adjustments so that the layer can

* accommodate the bottom blobs.

*/

virtual void Reshape(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top) = 0;

/**

* @brief Given the bottom blobs, compute the top blobs and the loss.

*

* @param bottom

* the input blobs, whose data fields store the input data for this layer

* @param top

* the preshaped output blobs, whose data fields will store this layers'

* outputs

* \return The total loss from the layer.

*

* The Forward wrapper calls the relevant device wrapper function

* (Forward_cpu or Forward_gpu) to compute the top blob values given the

* bottom blobs. If the layer has any non-zero loss_weights, the wrapper

* then computes and returns the loss.

*

* Your layer should implement Forward_cpu and (optionally) Forward_gpu.

*/

inline Dtype Forward(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top);

/**

* @brief Given the top blob error gradients, compute the bottom blob error

* gradients.

*

* @param top

* the output blobs, whose diff fields store the gradient of the error

* with respect to themselves

* @param propagate_down

* a vector with equal length to bottom, with each index indicating

* whether to propagate the error gradients down to the bottom blob at

* the corresponding index

* @param bottom

* the input blobs, whose diff fields will store the gradient of the error

* with respect to themselves after Backward is run

*

* The Backward wrapper calls the relevant device wrapper function

* (Backward_cpu or Backward_gpu) to compute the bottom blob diffs given the

* top blob diffs.

*

* Your layer should implement Backward_cpu and (optionally) Backward_gpu.

*/

inline void Backward(const vector<Blob<Dtype>*>& top,

const vector<bool>& propagate_down,

const vector<Blob<Dtype>*>& bottom);

vector<shared_ptr<Blob<Dtype> > >& blobs() {

return blobs_;

}

const LayerParameter& layer_param() const { return layer_param_; }

protected:

/** The protobuf that stores the layer parameters */

LayerParameter layer_param_; // layer parameters: kernel size, stride

Phase phase_;

/** The vector that stores the learnable parameters as a set of blobs. */

vector<shared_ptr<Blob<Dtype> > > blobs_; // filter parameters

vector<bool> param_propagate_down_;

vector<Dtype> loss_;

virtual void Forward_cpu(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top) = 0;

virtual void Forward_gpu(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top) {

// LOG(WARNING) << "Using CPU code as backup.";

return Forward_cpu(bottom, top);

}

virtual void Backward_cpu(const vector<Blob<Dtype>*>& top,

const vector<bool>& propagate_down,

const vector<Blob<Dtype>*>& bottom) = 0;

virtual void Backward_gpu(const vector<Blob<Dtype>*>& top,

const vector<bool>& propagate_down,

const vector<Blob<Dtype>*>& bottom) {

// LOG(WARNING) << "Using CPU code as backup.";

Backward_cpu(top, propagate_down, bottom);

}

private:

DISABLE_COPY_AND_ASSIGN(Layer);

};

template <typename Dtype>

inline Dtype Layer<Dtype>::Forward(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top) {

Dtype loss = 0;

Reshape(bottom, top);

switch (Caffe::mode()) {

case Caffe::CPU:

Forward_cpu(bottom, top);

for (int top_id = 0; top_id < top.size(); ++top_id) {

if (!this->loss(top_id)) { continue; }

const int count = top[top_id]->count();

const Dtype* data = top[top_id]->cpu_data();

const Dtype* loss_weights = top[top_id]->cpu_diff();

loss += caffe_cpu_dot(count, data, loss_weights);

}

break;

case Caffe::GPU:

Forward_gpu(bottom, top);

#ifndef CPU_ONLY

for (int top_id = 0; top_id < top.size(); ++top_id) {

if (!this->loss(top_id)) { continue; }

const int count = top[top_id]->count();

const Dtype* data = top[top_id]->gpu_data();

const Dtype* loss_weights = top[top_id]->gpu_diff();

Dtype blob_loss = 0;

caffe_gpu_dot(count, data, loss_weights, &blob_loss);

loss += blob_loss;

}

#endif

break;

default:

LOG(FATAL) << "Unknown caffe mode.";

}

return loss;

}

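The important functions in Layer are SetUp, LayerSetUp, Reshape, Forward, Backward, Forward_cpu, Forward_gpu, Backward_cpu, and Backward_gpu.

Reshape, Forward_cpu, and Backward_cpu are pure virtual functions, so subclasses must implement them. LayerSetUp, Forward_gpu, and Backward_gpu are virtual functions that can be overridden when needed. SetUp, Forward, and Backward are ordinary functions and should not be overridden.

Because there are several kinds of convolution, an intermediate class, BaseConvolutionLayer, serves as the base class of all convolution layers. It implements the following functions, and in doing so turns Reshape from a pure virtual function into an ordinary virtual function: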
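
This split between a fixed public wrapper and overridable protected hooks is the non-virtual interface idiom. The sketch below shows the idea on a much smaller scale; it is an assumption-laden illustration rather than Caffe's Layer: it uses a single input and output vector, a single scalar loss weight per layer, and CPU only. ToyLayerBase, ToyScaleLayer, ToySumSquaresLoss, ForwardCpu, and LossWeight are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical base class mirroring the shape of Layer::Forward.
class ToyLayerBase {
 public:
  virtual ~ToyLayerBase() {}

  // Non-virtual wrapper: every subclass runs the same fixed sequence.
  double Forward(const std::vector<double>& bottom, std::vector<double>* top) {
    assert(top != nullptr);
    Reshape(bottom, top);     // 1. size the output
    ForwardCpu(bottom, top);  // 2. layer-specific computation
    double loss = 0.0;        // 3. weighted sum of the outputs
    for (std::size_t i = 0; i < top->size(); ++i) {
      loss += LossWeight() * (*top)[i];
    }
    return loss;
  }

 protected:
  virtual void Reshape(const std::vector<double>& bottom,
                       std::vector<double>* top) = 0;
  virtual void ForwardCpu(const std::vector<double>& bottom,
                          std::vector<double>* top) = 0;
  virtual double LossWeight() const { return 0.0; }  // 0 means "not a loss"
};

// Ordinary layer: doubles every input and contributes no loss.
class ToyScaleLayer : public ToyLayerBase {
 protected:
  void Reshape(const std::vector<double>& bottom,
               std::vector<double>* top) override {
    top->resize(bottom.size());
  }
  void ForwardCpu(const std::vector<double>& bottom,
                  std::vector<double>* top) override {
    for (std::size_t i = 0; i < bottom.size(); ++i) {
      (*top)[i] = 2.0 * bottom[i];
    }
  }
};

// Loss layer: a single output holding the sum of squares, with weight 1.
class ToySumSquaresLoss : public ToyLayerBase {
 protected:
  void Reshape(const std::vector<double>&, std::vector<double>* top) override {
    top->assign(1, 0.0);
  }
  void ForwardCpu(const std::vector<double>& bottom,
                  std::vector<double>* top) override {
    for (std::size_t i = 0; i < bottom.size(); ++i) {
      (*top)[0] += bottom[i] * bottom[i];
    }
  }
  double LossWeight() const override { return 1.0; }
};
```

Callers only ever see Forward, so the order of reshaping, computing, and loss accumulation cannot be changed by a subclass; a subclass only decides what happens inside each hook.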

LayerSetUp(const vector<Blob<Dtype>*>& bottom, const vector<Blob<Dtype>*>& top)

Reshape(const vector<Blob<Dtype>*>& bottom, const vector<Blob<Dtype>*>& top)

forward_cpu_gemm(const Dtype* input, const Dtype* weights, Dtype* output, bool skip_im2col)

forward_cpu_bias(Dtype* output, const Dtype* bias)

backward_cpu_gemm(const Dtype* output, const Dtype* weights, Dtype* input)

weight_cpu_gemm(const Dtype* input, const Dtype* output, Dtype* weights)

backward_cpu_bias(Dtype* bias, const Dtype* input)

forward_gpu_gemm(const Dtype* input, const Dtype* weights, Dtype* output, bool skip_im2col)

forward_gpu_bias(Dtype* output, const Dtype* bias)

backward_gpu_gemm(const Dtype* output, const Dtype* weights, Dtype* input)

weight_gpu_gemm(const Dtype* input, const Dtype* output, Dtype* weights)

backward_gpu_bias(Dtype* bias, const Dtype* input)

ConvolutionLayer inherits from BaseConvolutionLayer and implements the following functions:

Forward_cpu(const vector<Blob<Dtype>*>& bottom, const vector<Blob<Dtype>*>& top)

Backward_cpu(const vector<Blob<Dtype>*>& top, const vector<bool>& propagate_down, const vector<Blob<Dtype>*>& bottom)

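Layer's Forward function first calls Reshape, which here resolves to BaseConvolutionLayer::Reshape. Caffe organizes its data as Blobs, and once the input (bottom) size and the convolution parameters are known, the shape of the output (top) Blob can be computed: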

// Shape the tops.

bottom_shape_ = &bottom[0]->shape();

compute_output_shape();

vector<int> top_shape(bottom[0]->shape().begin(),

bottom[0]->shape().begin() + channel_axis_);

top_shape.push_back(num_output_);

for (int i = 0; i < num_spatial_axes_; ++i) {

top_shape.push_back(output_shape_[i]);

}

for (int top_id = 0; top_id < top.size(); ++top_id) {

top[top_id]->Reshape(top_shape);

}
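
The per-axis arithmetic behind compute_output_shape() is the usual convolution size formula. The following sketch restates it for one spatial axis; ConvOutputDim is a hypothetical helper name, and the parameters are assumed to be the plain integers that a convolution layer's configuration would supply.

```cpp
#include <cassert>

// Output length along one spatial axis of a convolution.
// kernel_extent is the span covered by a (possibly dilated) kernel.
int ConvOutputDim(int input_dim, int kernel, int pad, int stride,
                  int dilation) {
  assert(input_dim > 0 && kernel > 0 && pad >= 0);
  assert(stride > 0 && dilation > 0);
  const int kernel_extent = dilation * (kernel - 1) + 1;
  return (input_dim + 2 * pad - kernel_extent) / stride + 1;
}
```

For instance, a 3x3 kernel with pad 1 and stride 1 keeps a 224-pixel axis at 224, while an 11x11 kernel with stride 4 and no padding maps 227 to 55.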

BaseConvolutionLayer::Reshape calls the member function Reshape on every output top[i]. Blob's Reshape function is as follows:

template <typename Dtype>

void Blob<Dtype>::Reshape(const vector<int>& shape) {

CHECK_LE(shape.size(), kMaxBlobAxes);

count_ = 1;

shape_.resize(shape.size());

if (!shape_data_ || shape_data_->size() < shape.size() * sizeof(int)) {

shape_data_.reset(new SyncedMemory(shape.size() * sizeof(int)));

}

int* shape_data = static_cast<int*>(shape_data_->mutable_cpu_data());

for (int i = 0; i < shape.size(); ++i) {

CHECK_GE(shape[i], 0);

if (count_ != 0) {

CHECK_LE(shape[i], INT_MAX / count_) << "blob size exceeds INT_MAX";

}

count_ *= shape[i];

shape_[i] = shape[i]; // copy into the internal shape

shape_data[i] = shape[i];

}

if (count_ > capacity_) { // not enough memory

capacity_ = count_;

data_.reset(new SyncedMemory(capacity_ * sizeof(Dtype))); // reallocate

diff_.reset(new SyncedMemory(capacity_ * sizeof(Dtype)));

}

}

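In short, it copies the given shape into the Blob's internal shape_ and checks whether the existing memory is large enough, allocating new memory if it is not.

For forward propagation we analyze the CPU case. After Reshape comes Forward_cpu, which here is ConvolutionLayer::Forward_cpu: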
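
The practical consequence of the capacity check is that shrinking a blob, or reshaping it back to a size it has already had, costs no allocation. A minimal sketch of that behavior, assuming a std::vector<float> in place of SyncedMemory and a hypothetical class name ToyBlob with an allocation counter added purely for observation:

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

class ToyBlob {
 public:
  ToyBlob() : count_(0), capacity_(0), allocations_(0) {}

  // Records the new shape and grows the buffer only when it is too small.
  void Reshape(const std::vector<int>& shape) {
    std::size_t count = 1;
    for (std::size_t i = 0; i < shape.size(); ++i) {
      assert(shape[i] >= 0);
      count *= static_cast<std::size_t>(shape[i]);
    }
    shape_ = shape;
    count_ = count;
    if (count_ > capacity_) {  // existing buffer is too small
      capacity_ = count_;
      data_.assign(capacity_, 0.0f);
      ++allocations_;
    }
  }

  std::size_t count() const { return count_; }
  std::size_t capacity() const { return capacity_; }
  int allocations() const { return allocations_; }

 private:
  std::vector<int> shape_;
  std::vector<float> data_;
  std::size_t count_;     // number of elements in the current shape
  std::size_t capacity_;  // number of elements the buffer can hold
  int allocations_;       // how many times the buffer was (re)allocated
};
```

Reshaping from (2, 3, 4, 4) down to (1, 3, 4, 4) leaves the capacity at 96 elements, and only a later reshape to something larger triggers a second allocation.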

template <typename Dtype>

void ConvolutionLayer<Dtype>::Forward_cpu(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top) {

const Dtype* weight = this->blobs_[0]->cpu_data();

for (int i = 0; i < bottom.size(); ++i) {

const Dtype* bottom_data = bottom[i]->cpu_data();

Dtype* top_data = top[i]->mutable_cpu_data();

for (int n = 0; n < this->num_; ++n) {

this->forward_cpu_gemm(bottom_data + n * this->bottom_dim_, weight,

top_data + n * this->top_dim_);

if (this->bias_term_) {

const Dtype* bias = this->blobs_[1]->cpu_data();

this->forward_cpu_bias(top_data + n * this->top_dim_, bias);

}

}

}

}

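For each bottom/top pair, the code performs num_ (batch_size) matrix multiplications (forward_cpu_gemm), multiplying bottom_data by weight and storing the result in top_data. mutable_cpu_data indicates that data will be written to that address. The matrix multiplication itself: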

template <typename Dtype>

void BaseConvolutionLayer<Dtype>::forward_cpu_gemm(const Dtype* input,

const Dtype* weights, Dtype* output, bool skip_im2col) {

const Dtype* col_buff = input;

if (!is_1x1_) {

if (!skip_im2col) {

conv_im2col_cpu(input, col_buffer_.mutable_cpu_data());

}

col_buff = col_buffer_.cpu_data();

}

for (int g = 0; g < group_; ++g) {

caffe_cpu_gemm<Dtype>(CblasNoTrans, CblasNoTrans, conv_out_channels_ /

group_, conv_out_spatial_dim_, kernel_dim_,

(Dtype)1., weights + weight_offset_ * g, col_buff + col_offset_ * g,

(Dtype)0., output + output_offset_ * g);

}

}

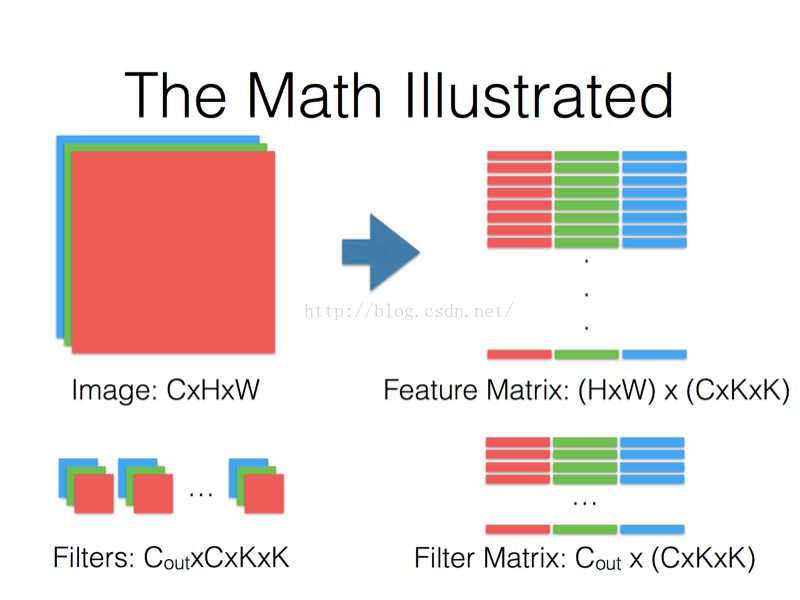

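Note the conv_im2col_cpu function. Without this conversion, many small matrix multiplications would have to be run in a loop. The function flattens each patch (k x k x C) into a vector and stacks the patches together, so that a single matrix multiplication produces all the results. caffe_cpu_gemm wraps the cblas matrix multiplication output = weights * col_buff.

Back in the Forward function: after Forward_cpu, it iterates over all top blobs and checks whether each one carries a loss weight. If so, the loss is computed as the dot product of the top data and the loss weights stored in cpu_diff():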
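
The im2col idea is easier to follow on a small case. The sketch below is a simplified illustration, not Caffe's im2col_cpu: it assumes stride 1, no padding, no dilation, a single group, and a square k x k kernel, and it uses a naive triple loop in place of a BLAS call. Im2Col and MatMul are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Unrolls a C x H x W input (row-major) into a (C*k*k) x (out_h*out_w)
// matrix. Each column holds one flattened patch.
std::vector<float> Im2Col(const std::vector<float>& input,
                          int channels, int height, int width, int k) {
  assert(static_cast<int>(input.size()) == channels * height * width);
  assert(k > 0 && k <= height && k <= width);
  const int out_h = height - k + 1;
  const int out_w = width - k + 1;
  std::vector<float> col(
      static_cast<std::size_t>(channels) * k * k * out_h * out_w);
  std::size_t idx = 0;
  for (int c = 0; c < channels; ++c)
    for (int kh = 0; kh < k; ++kh)
      for (int kw = 0; kw < k; ++kw)
        for (int oh = 0; oh < out_h; ++oh)
          for (int ow = 0; ow < out_w; ++ow)
            col[idx++] = input[(c * height + oh + kh) * width + ow + kw];
  return col;
}

// Plain row-major matrix product: C (m x n) = A (m x p) * B (p x n).
std::vector<float> MatMul(const std::vector<float>& a,
                          const std::vector<float>& b, int m, int p, int n) {
  assert(m > 0 && p > 0 && n > 0);
  assert(a.size() == static_cast<std::size_t>(m) * p);
  assert(b.size() == static_cast<std::size_t>(p) * n);
  std::vector<float> c(static_cast<std::size_t>(m) * n, 0.0f);
  for (int i = 0; i < m; ++i)
    for (int j = 0; j < n; ++j)
      for (int l = 0; l < p; ++l)
        c[i * n + j] += a[i * p + l] * b[l * n + j];
  return c;
}
```

With weights stored as an (out_channels) x (C*k*k) matrix, MatMul(weights, Im2Col(input, C, H, W, k), out_channels, C*k*k, out_h*out_w) yields every output pixel of every output channel in one product. For a 3x3 input holding 1 through 9 and a single 2x2 kernel of ones, the four outputs are the four patch sums 12, 16, 24, and 28.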

inline Dtype Layer<Dtype>::Forward(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top) {

Dtype loss = 0;

Reshape(bottom, top);

Forward_cpu(bottom, top);

for (int top_id = 0; top_id < top.size(); ++top_id) {

if (!this->loss(top_id)) { continue; }

const int count = top[top_id]->count();

const Dtype* data = top[top_id]->cpu_data();

const Dtype* loss_weights = top[top_id]->cpu_diff();

loss += caffe_cpu_dot(count, data, loss_weights);

}

return loss;

}

That completes the Forward function. Next comes Backward; here is Layer's Backward function:

template <typename Dtype>

inline void Layer<Dtype>::Backward(const vector<Blob<Dtype>*>& top,

const vector<bool>& propagate_down,

const vector<Blob<Dtype>*>& bottom) {

switch (Caffe::mode()) {

case Caffe::CPU:

Backward_cpu(top, propagate_down, bottom);

break;

case Caffe::GPU:

Backward_gpu(top, propagate_down, bottom);

break;

default:

LOG(FATAL) << "Unknown caffe mode.";

}

}

In CPU mode it simply calls Backward_cpu. Here is ConvolutionLayer's Backward_cpu:

template <typename Dtype>

void ConvolutionLayer<Dtype>::Backward_cpu(const vector<Blob<Dtype>*>& top,

const vector<bool>& propagate_down, const vector<Blob<Dtype>*>& bottom) {

const Dtype* weight = this->blobs_[0]->cpu_data();

Dtype* weight_diff = this->blobs_[0]->mutable_cpu_diff();

for (int i = 0; i < top.size(); ++i) {

const Dtype* top_diff = top[i]->cpu_diff();

const Dtype* bottom_data = bottom[i]->cpu_data();

Dtype* bottom_diff = bottom[i]->mutable_cpu_diff();

// Bias gradient, if necessary.

if (this->bias_term_ && this->param_propagate_down_[1]) {

Dtype* bias_diff = this->blobs_[1]->mutable_cpu_diff();

for (int n = 0; n < this->num_; ++n) {

this->backward_cpu_bias(bias_diff, top_diff + n * this->top_dim_);

}

}

if (this->param_propagate_down_[0] || propagate_down[i]) {

for (int n = 0; n < this->num_; ++n) {

// gradient w.r.t. weight. Note that we will accumulate diffs.

if (this->param_propagate_down_[0]) {

this->weight_cpu_gemm(bottom_data + n * this->bottom_dim_,

top_diff + n * this->top_dim_, weight_diff);

}

// gradient w.r.t. bottom data, if necessary.

if (propagate_down[i]) {

this->backward_cpu_gemm(top_diff + n * this->top_dim_, weight,

bottom_diff + n * this->bottom_dim_);

}

}

}

}

}

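Based on top_diff, it updates the current layer's bias_diff (backward_cpu_bias), weight_diff (weight_cpu_gemm), and bottom_diff (backward_cpu_gemm). bottom_diff is computed so that the gradient can keep propagating: it serves as the top_diff of the preceding layer, which needs it to compute its own weight_diff.

That completes Backward as well. It computes the gradient of each layer's weight parameters (weight_diff) and the gradient of each layer's blobs (bottom_diff).

Back in the solver's Step() loop, the next call is ApplyUpdate(), which is where the parameters are actually updated. ApplyUpdate() ends up calling Net::Update(), shown below: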

template <typename Dtype>

void Net<Dtype>::Update() {

for (int i = 0; i < learnable_params_.size(); ++i) {

learnable_params_[i]->Update();

}

}

learnable_params_ holds the trainable parameters of every layer, that is, each layer's weight Blobs, whose diff values were just updated. Now let us see what Blob::Update() does:

void Blob<Dtype>::Update() {

// We will perform update based on where the data is located.

switch (data_->head()) {

case SyncedMemory::HEAD_AT_CPU:

// perform computation on CPU

caffe_axpy<Dtype>(count_, Dtype(-1),

static_cast<const Dtype*>(diff_->cpu_data()),

static_cast<Dtype*>(data_->mutable_cpu_data()));

break;

//...

}

}

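It computes data_ = data_ - diff_. caffe_axpy wraps the cblas axpy routine, which adds a scaled vector to another vector (Y = alpha * X + Y); because the coefficient passed in is Dtype(-1), diff_ is subtracted from data_. At this point every layer's weights have been updated and one iteration is complete. The process is then repeated until training yields a good set of weights.

The test phase

The test phase needs no solver; running Forward on a Net directly produces the result: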
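
The axpy operation itself is a one-line loop. The sketch below spells it out with std::vector instead of raw pointers and BLAS; Axpy is a hypothetical name. It assumes that diff already holds the final step to take, with any learning-rate scaling applied by the solver beforehand.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// y = alpha * x + y, element by element.
void Axpy(double alpha, const std::vector<double>& x, std::vector<double>* y) {
  assert(y != nullptr && x.size() == y->size());
  for (std::size_t i = 0; i < x.size(); ++i) {
    (*y)[i] += alpha * x[i];
  }
}
```

Calling Axpy(-1.0, diff, &data) with data = {1.0, 2.0, 3.0} and diff = {0.5, 0.25, -1.0} leaves data as {0.5, 1.75, 4.0}, which is exactly data - diff.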

Net<float> caffe_net(FLAGS_model, caffe::TEST, FLAGS_level, &stages);

const vector<Blob<float>*>& result = caffe_net.Forward(&iter_loss);

pycaffe

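First there is a file named _caffe.cpp, which is compiled together with the Caffe framework into _caffe.so. pycaffe.py acts as a wrapper that adds some Python interfaces. pycaffe imports the objects in _caffe.so and uses them as Python objects: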

from ._caffe import Net, SGDSolver, NesterovSolver, AdaGradSolver, \

RMSPropSolver, AdaDeltaSolver, AdamSolver, NCCL, Timer

These can be imported and used directly because _caffe.cpp exports them with BOOST_PYTHON_MODULE:

BOOST_PYTHON_MODULE(_caffe) {

...

}

The following shows how to export a class:

#include<string>

#include<boost/python.hpp>

using namespace std;

using namespace boost::python;

struct World

{

void set(string msg) { this->msg = msg; }

string greet() { return msg; }

string msg;

};

BOOST_PYTHON_MODULE(hello) // name of the exported module

{

class_<World>("World")

.def("greet", &World::greet)

.def("set", &World::set);

}

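And this is how the exported class is used from Python:

import hello

planet = hello.World() # call the default constructor to create an object

planet.set("howdy") # call a method of the object

print(planet.greet()) # call a method of the object

To avoid exporting any constructor, use no_init: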

class_<Abstract>("Abstract",no_init)

Finally, the caffe directory provides an __init__.py file, which turns the whole directory into a Python package:

from .pycaffe import Net, SGDSolver, NesterovSolver, AdaGradSolver, RMSPropSolver, AdaDeltaSolver, AdamSolver, NCCL, Timer

from ._caffe import init_log, log, set_mode_cpu, set_mode_gpu, set_device, Layer, get_solver, layer_type_list, set_random_seed, solver_count, set_solver_count, solver_rank, set_solver_rank, set_multiprocess, has_nccl

from ._caffe import __version__

from .proto.caffe_pb2 import TRAIN, TEST

from .classifier import Classifier

from .detector import Detector

from . import io

from .net_spec import layers, params, NetSpec, to_proto

This way, outside code can use Caffe's Python interface through caffe.Net, caffe.init_log, caffe.__version__, caffe.TRAIN, caffe.Classifier, caffe.Detector, caffe.io, and so on.
