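Project background:

A Java application calls a deep learning model deployed in Python. The Java front end stores clients' recognition requests in the RabbitMQ queue send. To make the whole flow work end to end, the send queue has to be monitored, each message consumed by calling the model service, and the result published to the RabbitMQ queue receive, where the Java front end picks it up.

The candidate approaches are as follows: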

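I. Based on Celery with RabbitMQ and Redis

Celery dispatches the messages in the RabbitMQ send queue as tasks, the model consumes them, and the results are stored in Redis or through the rpc backend.

1. With rpc://, the backend creates an exchange and a queue named after the thread id to hold the results. They have to be deleted after use, otherwise too many queues accumulate.

As shown in the figure:

However, a retention period can be set for task results so that they are removed automatically. The parameter is as follows: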

    # Expiry time for task results, in seconds
    result_expires=1 * 3 * 60,  # 3 minutes; alternative: 24 * 60 * 60 (hours * minutes * seconds)

To store results in a specified exchange and a specified queue, the source code has to be modified.

All of the following code is in celery/backends/rpc.py.

        # exchange name: "se_re" from the config file (the unpatched backend uses the anonymous exchange '')
        exchange = exchange or conf.result_exchange
        # exchange type from the config file
        exchange_type = exchange_type or conf.result_exchange_type
        self.exchange = self._create_exchange(
            exchange, exchange_type, self.delivery_mode,
        )  # create the exchange

    def _create_exchange(self, name, type='direct', delivery_mode=2):
        # uses direct to queue routing (anon exchange).
        # return self.Exchange(None)  # original code, which worked fine
        # modified: use the named exchange and pass the caller's delivery_mode through
        return self.Exchange(name, type, delivery_mode=delivery_mode)

    @property
    def binding(self):
        SS = self.Queue(
            self.oid, self.exchange, self.oid,
            durable=False,
            auto_delete=True,
            expires=self.expires,
        )
        # Attempt that had no effect; the result queue stayed the same as before
        # SS = self.Queue(
        #     "receive", self.exchange, "receive",
        #     # durable=False,
        #     durable=True,
        #     # auto_delete=True,
        #     auto_delete=False,
        #     # expires=self.expires,
        # )
        return SS

    @cached_property
    def oid(self):
        # cached here is the app thread OID: name of queue we receive results on.
        # dd = "receive"  # attempt that had no effect
        dd = self.app.thread_oid  # original code
        return dd

So that Java can query RabbitMQ through a specified exchange and a specified queue, make the following changes:

    def __init__(self, app, connection=None, exchange=None, exchange_type=None,
                 persistent=None, serializer=None, auto_delete=True, **kwargs):
        super().__init__(app, **kwargs)
        conf = self.app.conf
        self._connection = connection
        self._out_of_band = {}
        self.persistent = self.prepare_persistent(persistent)
        self.delivery_mode = 2 if self.persistent else 1
        exchange = exchange or conf.result_exchange  # exchange name, set to "se_re" in the config file
        exchange_type = exchange_type or conf.result_exchange_type  # exchange type, set to "direct" in the config file
        self.exchange = self._create_exchange(
            exchange, exchange_type, self.delivery_mode,
        )
        # Added code: the config file sets the name of the queue that receives results, 'receive'
        if "result_queue" in conf:  # check whether the key exists in the config file; fall back to None if not
            self.receive_name = conf.result_queue
        else:
            self.receive_name = None
        self.serializer = serializer or conf.result_serializer
        self.auto_delete = auto_delete
        self.result_consumer = self.ResultConsumer(
            self, self.app, self.accept,
            self._pending_results, self._pending_messages,
        )
        if register_after_fork is not None:
            register_after_fork(self, _on_after_fork_cleanup_backend)

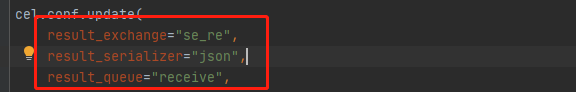

Configuration file settings:
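A minimal sketch of what such a configuration file might contain, assuming a module-style celeryconfig.py. The broker URL and credentials are placeholders, and result_queue is the custom key read by the patched __init__ above, not a built-in Celery setting.

```python
from datetime import timedelta

# Hypothetical celeryconfig.py; the broker URL and credentials are placeholders.
broker_url = "amqp://guest:guest@localhost:5672//"
result_backend = "rpc://"

# Settings read by the rpc backend through conf.result_exchange and
# conf.result_exchange_type.
result_exchange = "se_re"
result_exchange_type = "direct"

# Custom key introduced by the patch; stock Celery does not use it.
result_queue = "receive"

# Result lifetime: 1 * 3 * 60 seconds, written as a timedelta.
result_expires = timedelta(minutes=3)
```

Celery accepts result_expires either as a number of seconds or as a timedelta, so the value here matches the 1 * 3 * 60 used earlier.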

The code that returns the result also needs to be modified:

    def store_result(self, task_id, result, state,
                     traceback=None, request=None, **kwargs):
        """Send task return value and state."""
        routing_key, correlation_id = self.destination_for(task_id, request)
        if not routing_key:
            return
        with self.app.amqp.producer_pool.acquire(block=True) as producer:
            if self.receive_name:
                producer.publish(
                    self._to_result(task_id, state, result, traceback, request),
                    exchange=self.exchange,
                    routing_key=self.receive_name,  # the specified receiving queue
                    correlation_id=correlation_id,
                    serializer=self.serializer,
                    retry=True, retry_policy=self.retry_policy,
                    declare=self.on_reply_declare(task_id),
                    delivery_mode=self.delivery_mode,
                )
            else:
                producer.publish(
                    self._to_result(task_id, state, result, traceback, request),
                    exchange=self.exchange,
                    routing_key=routing_key,  # queue created per thread; replacing it directly with the specified queue had no effect
                    correlation_id=correlation_id,
                    serializer=self.serializer,
                    retry=True, retry_policy=self.retry_policy,
                    declare=self.on_reply_declare(task_id),
                    delivery_mode=self.delivery_mode,
                )
        return result

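2. If Redis is used to store the results, a scheduled task is also needed to poll the Redis database for results, fetch them, and update the flag that marks them as handled.

The scheduled task is implemented with Celery.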
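The polling step could look roughly like the sketch below. It assumes Celery's Redis backend, which stores each result as JSON under a key starting with celery-task-meta-. The forwarded- flag key, the function name collect_finished_results, and the InMemoryClient stand-in are hypothetical and exist only for illustration. With a real redis-py client, the same scan_iter, get, and set(..., nx=True) calls are available.

```python
import json

TASK_META_PREFIX = "celery-task-meta-"  # key prefix used by Celery's Redis backend
DONE_FLAG_PREFIX = "forwarded-"         # hypothetical flag key, not part of Celery


def collect_finished_results(client, forward):
    """Forward each finished result once and flag it as handled.

    `client` is assumed to offer redis-py style scan_iter/get/set;
    `forward` is any callable, e.g. one that publishes to the receive queue.
    """
    forwarded = []
    for key in client.scan_iter(match=TASK_META_PREFIX + "*"):
        key = key.decode() if isinstance(key, bytes) else key
        raw = client.get(key)
        if raw is None:  # the result expired between scan and get
            continue
        meta = json.loads(raw)
        if meta.get("status") != "SUCCESS":
            continue
        task_id = key[len(TASK_META_PREFIX):]
        # set(..., nx=True) only succeeds the first time, so it doubles as the flag.
        if client.set(DONE_FLAG_PREFIX + task_id, "1", nx=True):
            forward(task_id, meta.get("result"))
            forwarded.append(task_id)
    return forwarded


class InMemoryClient:
    """Tiny stand-in for a Redis client, for demonstration only."""

    def __init__(self, data):
        self.data = dict(data)

    def scan_iter(self, match):
        prefix = match.rstrip("*")
        return [k for k in self.data if k.startswith(prefix)]

    def get(self, key):
        return self.data.get(key)

    def set(self, key, value, nx=False):
        if nx and key in self.data:
            return None
        self.data[key] = value
        return True


client = InMemoryClient({
    "celery-task-meta-a1": json.dumps({"status": "SUCCESS", "result": "cat"}),
    "celery-task-meta-b2": json.dumps({"status": "PENDING", "result": None}),
})
sent = []
first = collect_finished_results(client, lambda tid, res: sent.append((tid, res)))
second = collect_finished_results(client, lambda tid, res: sent.append((tid, res)))
```

In a real deployment the function would be called from a periodic Celery task, and the flag key would probably need its own expiry so that flags do not outlive the results they refer to.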

II. A message queue based on RabbitMQ and pika, combined with multiple threads and processes.
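A minimal sketch of the consuming side of this approach, under several assumptions: messages are JSON, both queues are durable (the declaration has to match whatever the Java side declared), and run_model is a hypothetical placeholder for the real model call. The pika import sits inside serve so that the message-handling helpers can be exercised without a broker.

```python
import json


def run_model(payload):
    """Hypothetical stand-in for the real model call."""
    return {"label": "demo", "input_size": len(payload.get("data", ""))}


def build_reply(body):
    """Turn a raw request body into a raw reply body."""
    request = json.loads(body)
    reply = {"id": request.get("id"), "result": run_model(request)}
    return json.dumps(reply).encode("utf-8")


def serve(host="localhost", request_queue="send", reply_queue="receive"):
    import pika  # imported lazily so the helpers above work without a broker

    connection = pika.BlockingConnection(pika.ConnectionParameters(host=host))
    channel = connection.channel()
    channel.queue_declare(queue=request_queue, durable=True)
    channel.queue_declare(queue=reply_queue, durable=True)
    channel.basic_qos(prefetch_count=1)  # at most one unacknowledged message

    def on_message(ch, method, properties, body):
        ch.basic_publish(
            exchange="",  # the default exchange routes by queue name
            routing_key=reply_queue,
            body=build_reply(body),
            properties=pika.BasicProperties(
                delivery_mode=2,  # persistent message
                correlation_id=properties.correlation_id,
            ),
        )
        # Acknowledge only after the reply has been published.
        ch.basic_ack(delivery_tag=method.delivery_tag)

    channel.basic_consume(queue=request_queue, on_message_callback=on_message)
    channel.start_consuming()


reply = json.loads(build_reply(b'{"id": 7, "data": "abcd"}'))
```

Calling serve() blocks and processes one message at a time. pika connections are not thread-safe, so when this is scaled out with threads or processes, each worker would normally open its own connection and channel.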
