First, the framework versions used:

- spark - 2.3.4

- spark-streaming-kafka-0-10_2.11 - 2.3.4

- zookeeper - 3.4.14

- kafka - 2.3.1

After reading through the source code, I found that many of the old APIs can no longer be used.

KafkaCluster no longer exists, and ZkUtils has been replaced by AdminClient.
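As an illustration of the AdminClient route, here is a minimal sketch that asks the brokers for a topic's partition count instead of reading it from ZooKeeper. It assumes a kafka-clients version that ships `org.apache.kafka.clients.admin.AdminClient` (0.11.0 or later) is on the classpath; the object name `TopicPartitionCount` and the helper `partitionCount` are made up for this example.

```scala
import java.util.{Collections, Properties}
import org.apache.kafka.clients.admin.{AdminClient, AdminClientConfig}

// Hypothetical helper, not part of the original post.
object TopicPartitionCount {
  /** Asks the brokers (not ZooKeeper) how many partitions `topic` has. */
  def partitionCount(bootstrapServers: String, topic: String): Int = {
    val props = new Properties()
    props.put(AdminClientConfig.BOOTSTRAP_SERVERS_CONFIG, bootstrapServers)
    val admin = AdminClient.create(props)
    try {
      admin.describeTopics(Collections.singletonList(topic))
        .all()        // future of Map[topic name, TopicDescription]
        .get()        // block until the brokers answer
        .get(topic)
        .partitions()
        .size()
    } finally admin.close()
  }

  def main(args: Array[String]): Unit =
    // Broker address and topic name are the placeholders used in this post.
    println(partitionCount("localhost:9092", "xxx"))
}
```

This needs a reachable broker to run, and it could stand in for the `countChildren` call on `/brokers/topics/<topic>/partitions` used further down.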

Building on the custom per-partition consumption implemented last time, this post works toward exactly-once semantics.
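One caveat is worth spelling out. Saving offsets after processing means a crash between the two steps replays the last batch, so the result is exactly-once only if the output is idempotent (or is written in the same transaction as the offsets). The sketch below shows the idempotent variant with an in-memory stand-in for the output store; `Record` and `IdempotentSink` are hypothetical names, not part of Spark or Kafka.

```scala
import scala.collection.mutable

object IdempotentSinkSketch {
  // Hypothetical record shape: where the message came from plus its payload.
  final case class Record(partition: Int, offset: Long, value: String)

  // Hypothetical sink keyed by (partition, offset): a replayed record
  // overwrites its earlier copy instead of being stored a second time.
  final class IdempotentSink {
    private val rows = mutable.Map.empty[(Int, Long), String]
    def write(r: Record): Unit = rows((r.partition, r.offset)) = r.value
    def size: Int = rows.size
  }

  def main(args: Array[String]): Unit = {
    val batch = Seq(Record(0, 10L, "a"), Record(0, 11L, "b"), Record(1, 7L, "c"))
    val sink = new IdempotentSink

    batch.foreach(sink.write) // first attempt: output written, crash before offsets are saved
    batch.foreach(sink.write) // after the restart the same batch is replayed

    println(sink.size) // 3: the replay added no duplicates
  }
}
```

With a real store the same idea usually becomes an upsert on a key derived from topic, partition and offset.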

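The official documentation has an example that stores offsets in Kafka itself: disable automatic offset commits, then commit manually once the computation has finished.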
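For comparison, the Kafka-stored variant looks roughly like this. It is a sketch in the style of the official integration guide, wrapped in a hypothetical helper `processThenCommit`; it assumes the stream was created with `KafkaUtils.createDirectStream` and `enable.auto.commit` set to `false`.

```scala
import org.apache.kafka.clients.consumer.ConsumerRecord
import org.apache.spark.streaming.dstream.InputDStream
import org.apache.spark.streaming.kafka010.{CanCommitOffsets, HasOffsetRanges}

// Hypothetical wrapper object for illustration only.
object CommitToKafkaSketch {
  def processThenCommit(stream: InputDStream[ConsumerRecord[String, String]]): Unit =
    stream.foreachRDD { rdd =>
      // Capture the offset ranges before any transformation of the RDD
      val offsetRanges = rdd.asInstanceOf[HasOffsetRanges].offsetRanges

      // Placeholder business logic: print each message value
      rdd.foreach(record => println(record.value()))

      // Commit to Kafka only after the output above has completed
      stream.asInstanceOf[CanCommitOffsets].commitAsync(offsetRanges)
    }
}
```

The commit is asynchronous and not transactional with the output, so the output still has to be idempotent to get exactly-once results.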

My version stores the offsets in ZooKeeper instead. I won't go through the process in detail; the code should be self-explanatory.

    import org.I0Itec.zkclient.ZkClient
    import org.I0Itec.zkclient.serialize.ZkSerializer
    import org.apache.kafka.common.TopicPartition
    import org.apache.kafka.common.serialization.StringDeserializer
    import org.apache.spark.SparkConf
    import org.apache.spark.streaming.{Seconds, StreamingContext}
    import org.apache.spark.streaming.kafka010.ConsumerStrategies.Subscribe
    import org.apache.spark.streaming.kafka010.LocationStrategies.PreferConsistent
    import org.apache.spark.streaming.kafka010.{HasOffsetRanges, KafkaUtils, OffsetRange, PerPartitionConfig}
    import org.apache.zookeeper.CreateMode

    object SparkStreamingKafka010WithZKExample {
      val BROKER_LIST = "localhost:9092"
      val GROUP_ID = "group5"
      val TOPIC = "xxx"
      // ZooKeeper connection string; replace the placeholder host with your own
      val ZK_QUORUM = "yourhostname:2181"

      def main(args: Array[String]): Unit = {
        val conf = new SparkConf().setMaster("local[*]").setAppName("kafkaWithZK")
        val streamingContext = new StreamingContext(conf, Seconds(5))

        val kafkaParams = Map[String, Object](
          "bootstrap.servers" -> BROKER_LIST,
          "key.deserializer" -> classOf[StringDeserializer],
          "value.deserializer" -> classOf[StringDeserializer],
          // Use the same group id as the ZooKeeper offset path
          "group.id" -> GROUP_ID,
          "auto.offset.reset" -> "latest",
          // Disable automatic offset commits to Kafka; offsets are kept in ZooKeeper
          "enable.auto.commit" -> (false: java.lang.Boolean)
        )
        val topics = Array(TOPIC)

        // Initialize the ZkClient with a serializer that stores data as UTF-8 strings
        val zkClient = new ZkClient(ZK_QUORUM, 6000, 6000, new ZkSerializer {
          override def serialize(o: Any): Array[Byte] = o.toString.getBytes("UTF-8")
          override def deserialize(bytes: Array[Byte]): AnyRef = new String(bytes, "UTF-8")
        })

        val zkPath = new ZkPath(TOPIC, GROUP_ID)
        // Path under which the offsets are stored
        val zkOffsetsPath = zkPath.getOffsetsPath()
        val zkPartitionsPath = zkPath.getTopicPartitionsPath()

        // Offset of each partition
        var partitionAndOffsetMap: Map[Int, Long] = Map()

        // If the path does not exist or has no children, consume from offset 0
        if (!zkClient.exists(zkOffsetsPath) || zkClient.countChildren(zkOffsetsPath) == 0) {
          // Number of partitions of the topic
          val partitions = zkClient.countChildren(zkPartitionsPath)
          // Create the parent path if it does not exist
          if (!zkClient.exists(zkOffsetsPath)) zkClient.createPersistent(zkOffsetsPath, true)
          // Create one offset node per partition, initialized to 0, and record it in the map
          for (i <- 0 until partitions) {
            zkClient.create(zkOffsetsPath + "/" + i, "0", CreateMode.PERSISTENT)
            partitionAndOffsetMap += (i -> 0L)
          }
        } else {
          val storedPartitions = zkClient.countChildren(zkOffsetsPath)
          // Read the stored offsets into the map
          for (i <- 0 until storedPartitions) {
            val partitionNodePath = zkOffsetsPath + "/" + i
            val offset = zkClient.readData[String](partitionNodePath).toLong
            partitionAndOffsetMap += (i -> offset)
          }
        }

        // Starting offset for each partition of the topic
        val offsetEachPartition: Map[TopicPartition, Long] =
          partitionAndOffsetMap.map { case (partition, offset) =>
            new TopicPartition(TOPIC, partition) -> offset
          }

        // Consumer strategy: subscribe to the topic, starting from the given offsets
        val subscribeRule = Subscribe[String, String](topics, kafkaParams, offsetEachPartition)

        val stream = KafkaUtils.createDirectStream[String, String](
          streamingContext,
          PreferConsistent,
          subscribeRule,
          new UDPerPartitionConfig(12L)
        )

        stream.foreachRDD { rdd =>
          val offsetRanges: Array[OffsetRange] = rdd.asInstanceOf[HasOffsetRanges].offsetRanges

          // No real business logic here; as a placeholder, print each record to the console
          rdd.foreach(println)

          // The batch has been processed, so write the offsets back to ZooKeeper.
          // A crash before this point means the batch is replayed on restart.
          offsetRanges.foreach { offsetRange =>
            zkClient.writeData(zkOffsetsPath + "/" + offsetRange.partition, offsetRange.untilOffset.toString)
          }
        }

        streamingContext.start()
        streamingContext.awaitTermination()
      }

      // Limits the consumption rate (messages per second) for every partition
      private class UDPerPartitionConfig(numOfMessages: Long) extends PerPartitionConfig() {
        override def maxRatePerPartition(topicPartition: TopicPartition): Long = numOfMessages
      }

      // Builds the ZooKeeper paths used for offsets and for the topic's partition list
      class ZkPath(topic: String, group_id: String) {
        def getOffsetsPath(): String = "/consumers/" + group_id + "/offsets/" + topic
        def getTopicPartitionsPath(): String = "/brokers/topics/" + topic + "/partitions"
      }
    }
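The listing starts a new group at offset 0 and otherwise trusts whatever is stored in ZooKeeper. If retention has already deleted those messages, the requested position may fall outside what the broker still holds. One possible guard, sketched here as a pure function, is to clamp each stored offset into the currently available range before building the starting offsets. The `earliest` and `latest` maps are assumed inputs (newer clients can supply them, for example via `KafkaConsumer.beginningOffsets` and `endOffsets`); `OffsetClamp` is a hypothetical name.

```scala
object OffsetClamp {
  /** Clamps each stored offset into [earliest, latest] for its partition.
    * Partitions missing from `stored` start at the earliest available offset. */
  def clamp(stored: Map[Int, Long],
            earliest: Map[Int, Long],
            latest: Map[Int, Long]): Map[Int, Long] =
    earliest.map { case (partition, low) =>
      val high = latest.getOrElse(partition, low)
      val wanted = stored.getOrElse(partition, low)
      partition -> math.min(math.max(wanted, low), high)
    }

  def main(args: Array[String]): Unit = {
    val stored   = Map(0 -> 0L, 1 -> 500L)            // offsets read from ZooKeeper
    val earliest = Map(0 -> 120L, 1 -> 100L, 2 -> 0L) // oldest offsets still on the broker
    val latest   = Map(0 -> 900L, 1 -> 450L, 2 -> 30L)
    // Partition 0 is raised to 120, partition 1 is lowered to 450,
    // partition 2 has no stored offset and starts at 0.
    println(clamp(stored, earliest, latest))
  }
}
```

Clamping silently skips or re-reads data, so logging every adjustment is probably a good idea in a real job.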

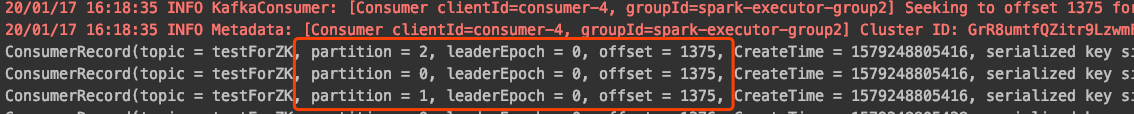

Now let's test it: interrupt the job, then start consuming again.

Success.
