Andrew Ng coursera上的《机器学习》ex4

按照课程所给的ex4的文档要求,ex4要求完成以下几个计算过程的代码编写:

| exerciseName | description |

|---|---|

| sigmoidGradient.m | compute the grident of the sigmoid function |

| randInitializedWeights.m | randomly initialize weights |

| nnCostFunction.m | Neutral network function |

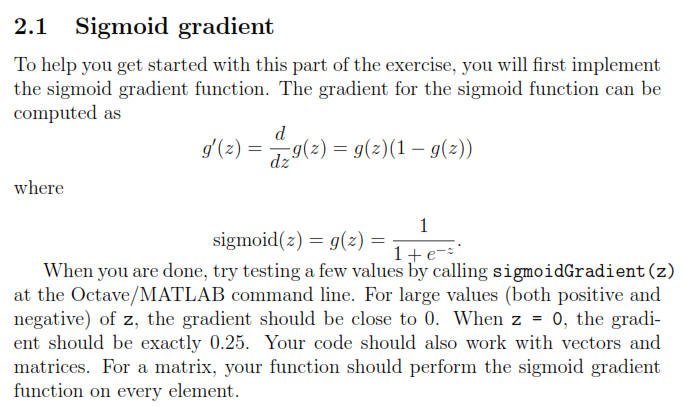

1.sigmoidGradient.m

要求如下:

根据作业文档给出的要求以及逻辑回归的梯度下降的表达式。得出如下的Octave代码:

function g = sigmoidGradient(z)

%SIGMOIDGRADIENT returns the gradient of the sigmoid function

%evaluated at z

% g = SIGMOIDGRADIENT(z) computes the gradient of the sigmoid function

% evaluated at z. This should work regardless if z is a matrix or a

% vector. In particular, if z is a vector or matrix, you should return

% the gradient for each element.

g = zeros(size(z));

% ====================== YOUR CODE HERE ======================

% Instructions: Compute the gradient of the sigmoid function evaluated at

% each value of z (z can be a matrix, vector or scalar).

g = sigmoid(z) .* (1 - sigmoid(z));

% =============================================================

end- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

需要注意的是文档中给出的表达式只是针对一个训练数据集的,需要对所有的训练数据集进行操作的话,需要用到Octave的一个运算符.,小数点表示对所有数据都进行操作。

2. randInitializedWeights.m

该块代码不需要自己写,所有忽略。

3.nnCostFunction.m

要求如下:

Octave代码如下:

function [J grad] = nnCostFunction(nn_params, ...

input_layer_size, ...

hidden_layer_size, ...

num_labels, ...

X, y, lambda)

%NNCOSTFUNCTION Implements the neural network cost function for a two layer

%neural network which performs classification

% [J grad] = NNCOSTFUNCTON(nn_params, hidden_layer_size, num_labels, ...

% X, y, lambda) computes the cost and gradient of the neural network. The

% parameters for the neural network are "unrolled" into the vector

% nn_params and need to be converted back into the weight matrices.

%

% The returned parameter grad should be a "unrolled" vector of the

% partial derivatives of the neural network.

%

% Reshape nn_params back into the parameters Theta1 and Theta2, the weight matrices

% for our 2 layer neural network

Theta1 = reshape(nn_params(1:hidden_layer_size * (input_layer_size + 1)), ...

hidden_layer_size, (input_layer_size + 1));

Theta2 = reshape(nn_params((1 + (hidden_layer_size * (input_layer_size + 1))):end), ...

num_labels, (hidden_layer_size + 1));

% Setup some useful variables

m = size(X, 1);

% You need to return the following variables correctly

J = 0;

Theta1_grad = zeros(size(Theta1));

Theta2_grad = zeros(size(Theta2));

% ====================== YOUR CODE HERE ======================

% Instructions: You should complete the code by working through the

% following parts.

%

% Part 1: Feedforward the neural network and return the cost in the

% variable J. After implementing Part 1, you can verify that your

% cost function computation is correct by verifying the cost

% computed in ex4.m

%

% Part 2: Implement the backpropagation algorithm to compute the gradients

% Theta1_grad and Theta2_grad. You should return the partial derivatives of

% the cost function with respect to Theta1 and Theta2 in Theta1_grad and

% Theta2_grad, respectively. After implementing Part 2, you can check

% that your implementation is correct by running checkNNGradients

%

% Note: The vector y passed into the function is a vector of labels

% containing values from 1..K. You need to map this vector into a

% binary vector of 1's and 0's to be used with the neural network

% cost function.

%

% Hint: We recommend implementing backpropagation using a for-loop

% over the training examples if you are implementing it for the

% first time.

%

% Part 3: Implement regularization with the cost function and gradients.

%

% Hint: You can implement this around the code for

% backpropagation. That is, you can compute the gradients for

% the regularization separately and then add them to Theta1_grad

% and Theta2_grad from Part 2.

%

a1 = [ones(m, 1) X];

z2 = a1 * Theta1';

a2 = sigmoid(z2);

a2 = [ones(m, 1) a2];

z3 = a2 * Theta2';

h = sigmoid(z3);

yk = zeros(m, num_labels);

for i = 1:m

yk(i, y(i)) = 1;

end

J = (1/m)* sum(sum(((-yk) .* log(h) - (1 - yk) .* log(1 - h))));

r = (lambda / (2 * m)) * (sum(sum(Theta1(:, 2:end) .^ 2))

+ sum(sum(Theta2(:, 2:end) .^ 2)));

J = J + r;

for row = 1:m

a1 = [1 X(row,:)]';

z2 = Theta1 * a1;

a2 = sigmoid(z2);

a2 = [1; a2];

z3 = Theta2 * a2;

a3 = sigmoid(z3);

z2 = [1; z2];

delta3 = a3 - yk'(:, row);

delta2 = (Theta2' * delta3) .* sigmoidGradient(z2);

delta2 = delta2(2:end);

Theta1_grad = Theta1_grad + delta2 * a1';

Theta2_grad = Theta2_grad + delta3 * a2';

end

Theta1_grad = Theta1_grad ./ m;

Theta1_grad(:, 2:end) = Theta1_grad(:, 2:end) ...

+ (lambda/m) * Theta1(:, 2:end);

Theta2_grad = Theta2_grad ./ m;

Theta2_grad(:, 2:end) = Theta2_grad(:, 2:end) + ...

+ (lambda/m) * Theta2(:, 2:end);

% -------------------------------------------------------------

% =========================================================================

% Unroll gradients

grad = [Theta1_grad(:) ; Theta2_grad(:)];

end- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

- 87

- 88

- 89

- 90

- 91

- 92

- 93

- 94

- 95

- 96

- 97

- 98

- 99

- 100

- 101

- 102

- 103

- 104

- 105

- 106

- 107

- 108

- 109

- 110

- 111

- 112

- 113

以上的代码分成了三个部分:

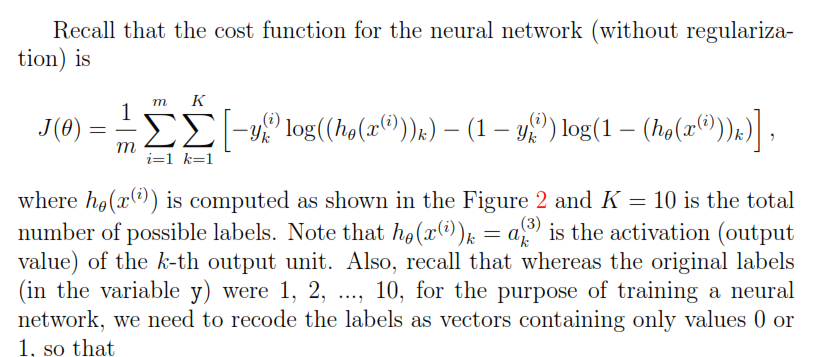

Part 1: Feedforward the neural network and return the cost in the variable J。

Part 2: Implement the backpropagation algorithm to compute the gradients Theta1_grad and Theta2_grad. You should return the partial derivatives of the cost function with respect to Theta1 and Theta2 in Theta1_grad and Theta2_grad, respectively.

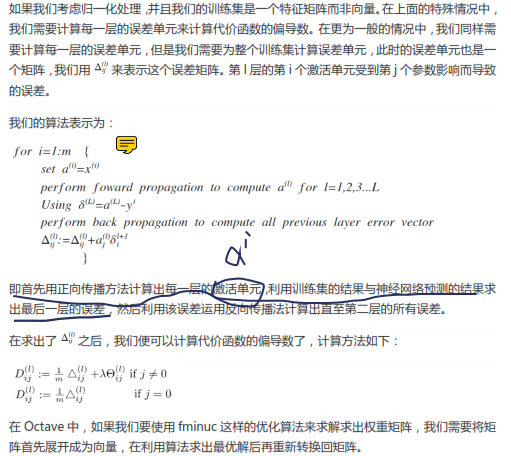

关于反向传播的思想如下:

Part 3: Implement regularization with the cost function and gradients.

三个部分分别对应着不同的代码,上面的代码从上往下依次为Part1,part2,part3。

1117

1117

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?