源码

import jieba.analyse

import imageio

import jieba.posseg as pseg

def jieba_cut():

# 停用词

fr = open('jinyong.txt', 'r',encoding='utf-8')

stop_word_list = fr.readlines()

new_stop_word_list = []

for stop_word in stop_word_list:

stop_word = stop_word.replace('\ufeef', '').strip()

new_stop_word_list.append(stop_word)

print(stop_word_list) #输出停用词

# 词语出现的次数

fr_xyj=open('xyj.txt','r',encoding='utf-8')

s=fr_xyj.read()

words=jieba.cut(s,cut_all=False)

word_dict={}

word_list=''

for word in words:

if (len(word) > 1 and not word in new_stop_word_list):

word_list = word_list + ' ' + word

if (word_dict.get(word)):

word_dict[word] = word_dict[word] + 1

else:

word_dict[word] = 1

fr.close()

print(word_list)

#print(word_dict) # 词语出现的次数

#按次数进行排序

sort_words=sorted(word_dict.items(),key=lambda x:x[1],reverse=True)

sort_words.append(sort_words[0])

sort_words.append(sort_words[1])

print(sort_words[0:101])#输出前0-100的词

from wordcloud import WordCloud

color_mask =imageio.imread("1.jpg")

wc = WordCloud(

background_color="black", # 背景颜色

max_words=5000, # 显示最大词数

font_path="C:\\Users\\ASUS\\Desktop\\simsun.ttc", # 使用字体

min_font_size=15,

max_font_size=50,

width=400,

height=860,

mask=color_mask) # 图幅宽度

i=str('why')

wc.generate(word_list)

wc.to_file(str(i)+".jpg")

jieba_cut()

运行结果(部分)

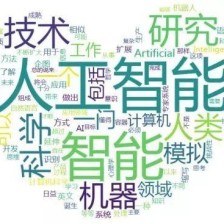

查看词云

附件

注:jinyong.txt 和 xyj.txt的内容是一样的,字体附上链接,可下载

字体 simsun.ttc

1126

1126

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?