Informer: Beyond Efficient Transformer for Long Sequence Time-Series Forecasting


Paper: https://arxiv.org/abs/2012.07436

Code: https://github.com/zhouhaoyi/Informer2020

This post draws on: https://zhuanlan.zhihu.com/p/374936725

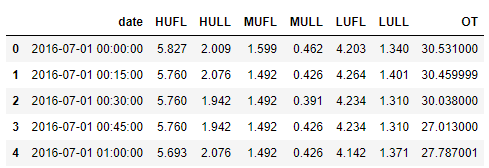

1 Dataset

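Each record in the dataset has 8 columns: a date column followed by 7 variables. At every time step, the variables are mapped by a conv1d to a 512-dim vector. For multivariate forecasting, the model predicts all 7 variables (the last 7 columns); for univariate forecasting, it predicts only the last column.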
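A minimal sketch of how the forecast targets differ between the two tasks, assuming the date column has already been split off so that each row holds only the 7 numeric variables. The array contents are random placeholders and `select_targets` is a hypothetical helper, not part of the Informer code.

```python
import numpy as np

def select_targets(values: np.ndarray, task: str) -> np.ndarray:
    """Pick the columns to forecast from a [T, 7] array of variables (hypothetical helper)."""
    if task == "multivariate":
        return values            # all 7 variables, shape [T, 7]
    if task == "univariate":
        return values[:, -1:]    # only the last variable, kept 2-D: shape [T, 1]
    raise ValueError(f"unknown task: {task}")

rng = np.random.default_rng(0)
values = rng.standard_normal((100, 7))  # placeholder data: 100 time steps, 7 variables
print(select_targets(values, "multivariate").shape)  # (100, 7)
print(select_targets(values, "univariate").shape)    # (100, 1)
```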

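The date column is encoded as time-stamp features, mainly by `utils/timefeatures.py`. Taking freq='h' as an example, each timestamp becomes a 4-dim vector whose entries are [month, day, weekday, hour], with weekday counted from Monday=0: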

| Before | After |
|---|---|
| 2016-07-01 00:00:00 | [ 7, 1, 4, 0] |
| 2016-07-01 01:00:00 | [ 7, 1, 4, 1] |
| … | … |
| 2017-06-25 23:00:00 | [ 6, 25, 6, 23] |
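The integer encoding above can be reproduced with the Python standard library alone. This is a sketch, not the repository's `timefeatures.py`; `hourly_time_features` is a hypothetical name, and it relies on `datetime.weekday()` using Monday=0.

```python
from datetime import datetime

def hourly_time_features(ts: datetime) -> list[int]:
    """Return [month, day, weekday, hour] for an hourly (freq='h') timestamp.

    weekday follows Python's convention: Monday=0 ... Sunday=6.
    """
    return [ts.month, ts.day, ts.weekday(), ts.hour]

for s in ["2016-07-01 00:00:00", "2016-07-01 01:00:00", "2017-06-25 23:00:00"]:
    ts = datetime.strptime(s, "%Y-%m-%d %H:%M:%S")
    print(s, hourly_time_features(ts))
# 2016-07-01 00:00:00 [7, 1, 4, 0]
# 2016-07-01 01:00:00 [7, 1, 4, 1]
# 2017-06-25 23:00:00 [6, 25, 6, 23]
```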

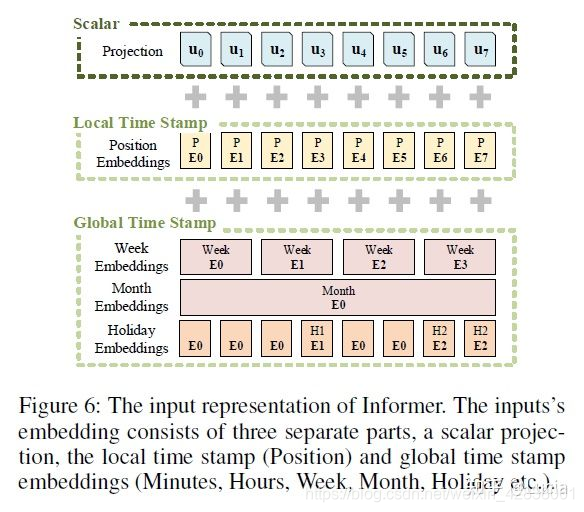

2 Embedding

As shown in the figure, the input embedding is made up of three parts:

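- Scalar: a conv1d projects the input values at each time step to a 512-dim vector.

- Local Time Stamp: the positional embedding from the Transformer.

- Global Time Stamp: the time stamps produced above, passed through an embedding layer.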

Finally, the three are added together to form the model input (shape: [batch_size, seq_len, d_model]).
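A minimal, self-contained sketch of the three-way sum, assuming the time stamps are fed as float features through a single linear layer (the fully connected variant described under timestamp encoding). `SimpleDataEmbedding` and `d_time` are hypothetical names, this is not the repository's embedding class, and batch size 32 and length 96 are arbitrary.

```python
import math
import torch
import torch.nn as nn

class SimpleDataEmbedding(nn.Module):
    """Hypothetical sketch: value projection + positional encoding + time-feature projection."""

    def __init__(self, c_in: int, d_model: int, d_time: int, max_len: int = 5000):
        super().__init__()
        # Scalar: Conv1d over the time axis, c_in -> d_model channels, length preserved.
        self.value_proj = nn.Conv1d(c_in, d_model, kernel_size=3, padding=1, padding_mode="circular")
        # Global time stamp: one linear layer over d_time time features (assumed float inputs).
        self.time_proj = nn.Linear(d_time, d_model)
        # Local time stamp: fixed sinusoidal table stored as a non-trainable buffer.
        pe = torch.zeros(max_len, d_model)
        position = torch.arange(max_len, dtype=torch.float32).unsqueeze(1)
        div_term = torch.exp(torch.arange(0, d_model, 2, dtype=torch.float32) * (-math.log(10000.0) / d_model))
        pe[:, 0::2] = torch.sin(position * div_term)
        pe[:, 1::2] = torch.cos(position * div_term)
        self.register_buffer("pe", pe.unsqueeze(0))  # [1, max_len, d_model]

    def forward(self, x: torch.Tensor, x_mark: torch.Tensor) -> torch.Tensor:
        # x: [B, L, c_in], x_mark: [B, L, d_time]
        value = self.value_proj(x.permute(0, 2, 1)).transpose(1, 2)  # [B, L, d_model]
        pos = self.pe[:, : x.size(1)]                                 # [1, L, d_model], broadcast over B
        time = self.time_proj(x_mark)                                  # [B, L, d_model]
        return value + pos + time

emb = SimpleDataEmbedding(c_in=7, d_model=512, d_time=4)
out = emb(torch.randn(32, 96, 7), torch.randn(32, 96, 4))
print(out.shape)  # torch.Size([32, 96, 512])
```

The positional term has a batch dimension of 1, so broadcasting adds the same encoding to every sequence in the batch.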

1.Projection

A 1-D convolution is applied to the raw input, mapping each time step from Cin=7 dimensions to d_model=512 dimensions.

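```python
import torch
import torch.nn as nn

class TokenEmbedding(nn.Module):
    def __init__(self, c_in, d_model):
        super(TokenEmbedding, self).__init__()
        # Assumes PyTorch >= 1.5, where circular padding=1 with kernel_size=3 keeps
        # seq_len unchanged. Comparing torch.__version__ as a string misorders
        # versions such as '1.10.0' < '1.5.0', so no version check is done here.
        padding = 1
        self.tokenConv = nn.Conv1d(in_channels=c_in, out_channels=d_model,
                                   kernel_size=3, padding=padding, padding_mode='circular')
        for m in self.modules():
            if isinstance(m, nn.Conv1d):
                nn.init.kaiming_normal_(m.weight, mode='fan_in', nonlinearity='leaky_relu')

    def forward(self, x):
        # x: [batch_size, seq_len, c_in]; Conv1d expects [batch_size, c_in, seq_len]
        x = self.tokenConv(x.permute(0, 2, 1)).transpose(1, 2)
        return x  # [batch_size, seq_len, d_model]
```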
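A quick standalone check of why the padding value matters. With kernel_size=3 and circular padding, padding=1 keeps seq_len unchanged while padding=2 adds two time steps. In Python the string comparison `'1.10.0' >= '1.5.0'` evaluates to False, so a string-based `torch.__version__` check can pick the wrong branch. Batch size 32 and length 96 are arbitrary.

```python
import torch
import torch.nn as nn

x = torch.randn(32, 96, 7)  # [batch_size, seq_len, c_in]

for padding in (1, 2):
    conv = nn.Conv1d(7, 512, kernel_size=3, padding=padding, padding_mode="circular")
    out = conv(x.permute(0, 2, 1)).transpose(1, 2)  # Conv1d works on [B, C, L]
    print(padding, tuple(out.shape))
# 1 (32, 96, 512)  -> length preserved
# 2 (32, 98, 512)  -> two extra time steps
```

The output length follows L + 2*padding - kernel_size + 1, which equals L only when padding=1.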

2.Position Embedding

This is the same positional encoding used in the Transformer:

$$PE_{(pos,2i)} = \sin\left(pos / 10000^{2i/d_{model}}\right)$$

$$PE_{(pos,2i+1)} = \cos\left(pos / 10000^{2i/d_{model}}\right)$$

```python
import math
import torch
import torch.nn as nn

class PositionalEmbedding(nn.Module):
    def __init__(self, d_model, max_len=5000):
        super(PositionalEmbedding, self).__init__()
        # Compute the positional encodings once in log space.
        pe = torch.zeros(max_len, d_model).float()
        pe.requires_grad = False  # fixed table; as a buffer it is never trained anyway
        position = torch.arange(0, max_len).float().unsqueeze(1)
        div_term = (torch.arange(0, d_model, 2).float() * -(math.log(10000.0) / d_model)).exp()
        pe[:, 0::2] = torch.sin(position * div_term)  # even dimensions
        pe[:, 1::2] = torch.cos(position * div_term)  # odd dimensions
        pe = pe.unsqueeze(0)  # [1, max_len, d_model]
        self.register_buffer('pe', pe)

    def forward(self, x):
        # First seq_len rows, broadcast over the batch dimension.
        return self.pe[:, :x.size(1)]
```
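The code computes `div_term` in log space because $1/10000^{2i/d_{model}} = \exp(-2i \ln(10000)/d_{model})$. The standalone check below (d_model=512 and pos=3 are arbitrary choices) confirms that the two forms agree and fills one row of the table by hand.

```python
import math
import torch

d_model = 512
i2 = torch.arange(0, d_model, 2).float()                           # the even indices 2i
log_space = torch.exp(i2 * -(math.log(10000.0) / d_model))         # form used in PositionalEmbedding
direct = 1.0 / torch.pow(torch.tensor(10000.0), i2 / d_model)      # 1 / 10000^(2i/d_model)
print(torch.allclose(log_space, direct))  # True

pos = 3
pe_row = torch.zeros(d_model)
pe_row[0::2] = torch.sin(pos * log_space)  # PE(pos, 2i)
pe_row[1::2] = torch.cos(pos * log_space)  # PE(pos, 2i+1)
print(pe_row[:2])  # sin(3) and cos(3), since the i = 0 frequency is 1
```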

3.Timestamp Encoding

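Timestamps are encoded in one of two ways: TemporalEmbedding or TimeFeatureEmbedding. The former passes the timestamp through several embedding layers (month_embed, day_embed, weekday_embed, hour_embed and, optionally, minute_embed) and sums the results; the latter maps the timestamp directly to a 512-dim embedding with a single fully connected layer.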

Each embedding layer in TemporalEmbedding can be either a fixed sinusoidal encoding like the Position Embedding above (embed_type=='fixed') or an nn.Embedding that is trained with the network.

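```python
import math
import torch
import torch.nn as nn

class FixedEmbedding(nn.Module):
    def __init__(self, c_in, d_model):
        super(FixedEmbedding, self).__init__()
        # Sinusoidal table with c_in rows, built the same way as PositionalEmbedding.
        w = torch.zeros(c_in, d_model).float()
        w.requires_grad = False
        position = torch.arange(0, c_in).float().unsqueeze(1)
        div_term = (torch.arange(0, d_model, 2).float() * -(math.log(10000.0) / d_model)).exp()
        w[:, 0::2] = torch.sin(position * div_term)
        w[:, 1::2] = torch.cos(position * div_term)
        # Lookup table whose weights are frozen (requires_grad=False).
        self.emb = nn.Embedding(c_in, d_model)
        self.emb.weight = nn.Parameter(w, requires_grad=False)

    def forward(self, x):
        # x: integer indices; output is detached, so no gradient flows into the table.
        return self.emb(x).detach()
```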
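FixedEmbedding creates an nn.Embedding and then overwrites its weight with a frozen Parameter. An equivalent construction, shown here as an alternative rather than the repository's code, uses `nn.Embedding.from_pretrained(..., freeze=True)`. The helper `sinusoid_table` is hypothetical, and the 24-row table corresponds to hour_size.

```python
import math
import torch
import torch.nn as nn

def sinusoid_table(n: int, d_model: int) -> torch.Tensor:
    """Hypothetical helper: [n, d_model] sinusoidal table, same formula as PositionalEmbedding."""
    w = torch.zeros(n, d_model)
    position = torch.arange(n, dtype=torch.float32).unsqueeze(1)
    div_term = torch.exp(torch.arange(0, d_model, 2, dtype=torch.float32) * (-math.log(10000.0) / d_model))
    w[:, 0::2] = torch.sin(position * div_term)
    w[:, 1::2] = torch.cos(position * div_term)
    return w

table = sinusoid_table(24, 512)                                # one row per hour of the day
hour_embed = nn.Embedding.from_pretrained(table, freeze=True)  # weights are not trained

hours = torch.tensor([[0, 1, 23]])                             # [B=1, L=3] integer hour indices
out = hour_embed(hours)                                        # [1, 3, 512]
print(out.shape, hour_embed.weight.requires_grad)              # torch.Size([1, 3, 512]) False
print(torch.equal(out[0, 2], table[23]))                       # True
```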

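```python
class TemporalEmbedding(nn.Module):
    def __init__(self, d_model, embed_type='fixed', freq='h'):
        super(TemporalEmbedding, self).__init__()
        # Vocabulary size per calendar field. month (1-12) and day (1-31) start at 1,
        # so index 0 is unused; minute_size=4 implies minutes are bucketed into 4 slots.
        minute_size = 4; hour_size = 24
        weekday_size = 7; day_size = 32; month_size = 13

        # 'fixed' -> frozen sinusoidal tables; anything else -> trainable nn.Embedding
        Embed = FixedEmbedding if embed_type=='fixed' else nn.Embedding
        if freq=='t':  # minute-level data also gets a minute embedding
            self.minute_embed = Embed(minute_size, d_model)
        self.hour_embed = Embed(hour_size, d_model)
        self.weekday_embed = Embed(weekday_size, d_model)
        self.day_embed = Embed(day_size, d_model)
        self.month_embed = Embed(month_size, d_model)

    def forward(self, x):
        # x: [batch_size, seq_len, n_fields], fields ordered [month, day, weekday, hour(, minute)]
        x = x.long()
        minute_x = self.minute_embed(x[:,:,4]) if hasattr(self, 'minute_embed') else 0.
        hour_x = self.hour_embed(x[:,:,3])
        weekday_x = self.weekday_embed(x[:,:,2])
        day_x = self.day_embed(x[:,:,1])
        month_x = self.month_embed(x[:,:,0])
        return hour_x + weekday_x + day_x + month_x + minute_x  # [batch_size, seq_len, d_model]
```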
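A trimmed-down, self-contained sketch of the trainable variant (embed_type other than 'fixed') for freq='h', fed with integer stamps in the [month, day, weekday, hour] layout from the dataset section. `LearnedTemporalEmbedding` is a hypothetical name; the table sizes match TemporalEmbedding above.

```python
import torch
import torch.nn as nn

class LearnedTemporalEmbedding(nn.Module):
    """Hypothetical sketch: one trainable nn.Embedding per calendar field, summed."""

    def __init__(self, d_model: int):
        super().__init__()
        self.month_embed = nn.Embedding(13, d_model)   # months 1-12, index 0 unused
        self.day_embed = nn.Embedding(32, d_model)     # days 1-31, index 0 unused
        self.weekday_embed = nn.Embedding(7, d_model)  # Monday=0 ... Sunday=6
        self.hour_embed = nn.Embedding(24, d_model)    # hours 0-23

    def forward(self, x_mark: torch.Tensor) -> torch.Tensor:
        # x_mark: [B, L, 4] integer stamps ordered as [month, day, weekday, hour]
        x_mark = x_mark.long()
        return (self.month_embed(x_mark[:, :, 0]) + self.day_embed(x_mark[:, :, 1])
                + self.weekday_embed(x_mark[:, :, 2]) + self.hour_embed(x_mark[:, :, 3]))

stamps = torch.tensor([[[7, 1, 4, 0], [7, 1, 4, 1], [6, 25, 6, 23]]])  # [1, 3, 4]
emb = LearnedTemporalEmbedding(d_model=512)
print(emb(stamps).shape)  # torch.Size([1, 3, 512])
```

Unlike the fixed tables, all four lookup tables here receive gradients and are learned with the rest of the model.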

```python
class TimeFeatureEmbedding(nn.Module):
    def __init__(self, d_model, embed_type='timeF', freq='h'):
        super(TimeFeatureEmbedding, self).__init__()
        freq_map = {
            'h':4, 't':5, 's':6, 'm':1, 'a'
```
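Based only on the description above (a single fully connected layer) and the visible freq_map entry 'h':4, a minimal sketch of this variant might look like the following. It is not the repository's code: the class name `LinearTimeFeatureEmbedding` and the `d_inp` argument are hypothetical, and the time features are assumed to be float-valued.

```python
import torch
import torch.nn as nn

class LinearTimeFeatureEmbedding(nn.Module):
    """Hypothetical sketch: map d_inp float time features to d_model with one linear layer."""

    def __init__(self, d_model: int, d_inp: int = 4):  # d_inp=4 corresponds to freq='h'
        super().__init__()
        self.embed = nn.Linear(d_inp, d_model)

    def forward(self, x_mark: torch.Tensor) -> torch.Tensor:
        # x_mark: [B, L, d_inp] float features -> [B, L, d_model]
        return self.embed(x_mark)

emb = LinearTimeFeatureEmbedding(d_model=512, d_inp=4)
y = emb(torch.rand(32, 96, 4))
print(y.shape)  # torch.Size([32, 96, 512])
```

Compared with TemporalEmbedding, this variant has only d_inp * d_model + d_model parameters and treats the time features as continuous values rather than table indices.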

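This post walks through Informer, a model for long sequence time-series forecasting that makes the Transformer more efficient. It covers dataset processing, the embedding structure (Projection, Position Embedding, timestamp encoding), the encoder and decoder components, and Informer's ProbSparse Self-Attention. Informer uses a distilling operation to reduce computation, and the decoder applies a mask so that self-attention stays causal.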
