The previous article covered the startup and initialization of the AudioPolicyService and AudioFlinger services. This one explores what AudioTrack and AudioFlinger do together.

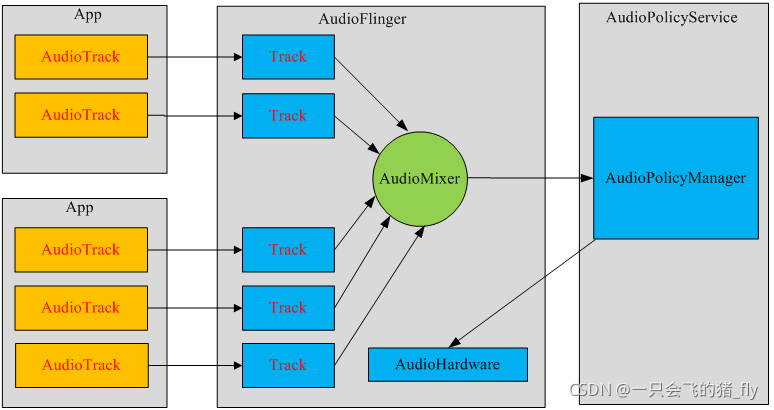

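MediaPlayer creates the matching audio decoder in the framework layer, whereas AudioTrack can only play PCM data that has already been decoded. MediaPlayer also creates an AudioTrack in the framework layer and hands it the decoded PCM stream. AudioTrack passes the data on to AudioFlinger for mixing, and only then does it reach the hardware for playback. In other words, MediaPlayer contains an AudioTrack.

Example: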

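// Minimum buffer size for this configuration

int bufferSize = AudioTrack.getMinBufferSize(32000,

AudioFormat.CHANNEL_OUT_STEREO, AudioFormat.ENCODING_PCM_16BIT);

AudioTrack audio = new AudioTrack(

AudioManager.STREAM_MUSIC, // stream type

32000, // sample rate: 32 kHz here; 44.1 kHz would be 44100

AudioFormat.CHANNEL_OUT_STEREO, // two-channel stereo output; CHANNEL_OUT_MONO is mono

AudioFormat.ENCODING_PCM_16BIT, // 8-bit or 16-bit samples; 16-bit here, which most audio uses today

bufferSize, // buffer size in bytes

AudioTrack.MODE_STREAM // load mode: streaming here; the alternative is MODE_STATIC

);

audio.play(); // start the audio device; playback of audio data can now begin

// Opening the mp3 file, reading it, and decoding are omitted ...

// decodedPcm is assumed to be an InputStream over the decoded PCM data

byte[] buffer = new byte[4096];

int count;

while ((count = decodedPcm.read(buffer)) > 0) {

// The key step: write the decoded data from the buffer into the AudioTrack object

audio.write(buffer, 0, count);

}

// Stop playback and release resources

audio.stop();

audio.release();

Each audio stream creates one instance of the AudioTrack class, and each AudioTrack registers itself with AudioFlinger when it is created. AudioFlinger mixes all AudioTracks (Mixer) and sends the result to AudioHardware for playback. (Source: online resources)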

Now let's walk through the source code:

AudioTrack.java

/**

* streamType: audio stream type

* sampleRateInHz: sample rate

* channelConfig: audio channel configuration

* audioFormat: audio format

* bufferSizeInBytes: buffer size

* mode: audio data load mode

* sessionId: session ID

*/

private AudioTrack(AudioAttributes attributes, AudioFormat format, int bufferSizeInBytes,

int mode, int sessionId, boolean offload, int encapsulationMode,

@Nullable TunerConfiguration tunerConfiguration)

throws IllegalArgumentException {

super(attributes, AudioPlaybackConfiguration.PLAYER_TYPE_JAM_AUDIOTRACK);

// mState already == STATE_UNINITIALIZED

mConfiguredAudioAttributes = attributes; // object copy not needed, immutable.

if (format == null) {

throw new IllegalArgumentException("Illegal null AudioFormat");

}

// Check if we should enable deep buffer mode

if (shouldEnablePowerSaving(mAttributes, format, bufferSizeInBytes, mode)) {

mAttributes = new AudioAttributes.Builder(mAttributes)

.replaceFlags((mAttributes.getAllFlags()

| AudioAttributes.FLAG_DEEP_BUFFER)

& ~AudioAttributes.FLAG_LOW_LATENCY)

.build();

}

// remember which looper is associated with the AudioTrack instantiation

Looper looper;

if ((looper = Looper.myLooper()) == null) {

looper = Looper.getMainLooper();

}

int rate = format.getSampleRate();

if (rate == AudioFormat.SAMPLE_RATE_UNSPECIFIED) {

rate = 0;

}

int channelIndexMask = 0;

if ((format.getPropertySetMask()

& AudioFormat.AUDIO_FORMAT_HAS_PROPERTY_CHANNEL_INDEX_MASK) != 0) {

channelIndexMask = format.getChannelIndexMask();

}

int channelMask = 0;

if ((format.getPropertySetMask()

& AudioFormat.AUDIO_FORMAT_HAS_PROPERTY_CHANNEL_MASK) != 0) {

channelMask = format.getChannelMask();

} else if (channelIndexMask == 0) { // if no masks at all, use stereo

channelMask = AudioFormat.CHANNEL_OUT_FRONT_LEFT

| AudioFormat.CHANNEL_OUT_FRONT_RIGHT;

}

int encoding = AudioFormat.ENCODING_DEFAULT;

if ((format.getPropertySetMask() & AudioFormat.AUDIO_FORMAT_HAS_PROPERTY_ENCODING) != 0) {

encoding = format.getEncoding();

}

/**

* Parameter checks

* 1. Check that streamType is one of STREAM_ALARM, STREAM_MUSIC, STREAM_RING, STREAM_SYSTEM, STREAM_VOICE_CALL,

* STREAM_NOTIFICATION, STREAM_BLUETOOTH_SCO, and assign it to mStreamType

* 2. Check that sampleRateInHz is between 4000 and 48000, and assign it to mSampleRate

* 3. Set mChannels:

* CHANNEL_OUT_DEFAULT, CHANNEL_OUT_MONO, CHANNEL_CONFIGURATION_MONO ---> CHANNEL_OUT_MONO

* CHANNEL_OUT_STEREO, CHANNEL_CONFIGURATION_STEREO ---> CHANNEL_OUT_STEREO

* 4. Set mAudioFormat:

* ENCODING_PCM_16BIT, ENCODING_DEFAULT ---> ENCODING_PCM_16BIT

* ENCODING_PCM_8BIT ---> ENCODING_PCM_8BIT

* 5. Set mDataLoadMode:

* MODE_STREAM

* MODE_STATIC

*/

audioParamCheck(rate, channelMask, channelIndexMask, encoding, mode);

mOffloaded = offload;

mStreamType = AudioSystem.STREAM_DEFAULT;

/**

* Buffer size check: compute the size of one frame in bytes; for ENCODING_PCM_16BIT this is mChannelCount * 2

* mNativeBufferSizeInFrames is the number of frames

*/

audioBuffSizeCheck(bufferSizeInBytes);

mInitializationLooper = looper;

if (sessionId < 0) {

throw new IllegalArgumentException("Invalid audio session ID: "+sessionId);

}

// Enter native-layer initialization

int[] sampleRate = new int[] {mSampleRate};

int[] session = new int[1];

session[0] = sessionId;

// native initialization

int initResult = native_setup(new WeakReference<AudioTrack>(this), mAttributes,

sampleRate, mChannelMask, mChannelIndexMask, mAudioFormat,

mNativeBufferSizeInBytes, mDataLoadMode, session, 0 /*nativeTrackInJavaObj*/,

offload, encapsulationMode, tunerConfiguration);

if (initResult != SUCCESS) {

loge("Error code "+initResult+" when initializing AudioTrack.");

return; // with mState == STATE_UNINITIALIZED

}

mSampleRate = sampleRate[0];

mSessionId = session[0];

// TODO: consider caching encapsulationMode and tunerConfiguration in the Java object.

if ((mAttributes.getFlags() & AudioAttributes.FLAG_HW_AV_SYNC) != 0) {

int frameSizeInBytes;

if (AudioFormat.isEncodingLinearFrames(mAudioFormat)) {

frameSizeInBytes = mChannelCount * AudioFormat.getBytesPerSample(mAudioFormat);

} else {

frameSizeInBytes = 1;

}

mOffset = ((int) Math.ceil(HEADER_V2_SIZE_BYTES / frameSizeInBytes)) * frameSizeInBytes;

}

if (mDataLoadMode == MODE_STATIC) {

mState = STATE_NO_STATIC_DATA;

} else {

mState = STATE_INITIALIZED;

}

baseRegisterPlayer();

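}

AudioTrack has two data load modes:

MODE_STREAM: the upper layer keeps calling write to feed the audio stream into the AudioTrack. write blocks until the data has been transferred from the Java layer to the native layer and queued for playback. This mode suits large amounts of audio data but introduces some latency. It is typically used for online music or large audio files.

MODE_STATIC: before playback, all the data is written in one go into the AudioTrack's internal buffer. This suits audio that has a small memory footprint and needs low latency, typically notification sounds, key-press sounds, and the like.

The AudioTrack constructor above mostly validates parameters. The native_setup function then creates an AudioTrack in the C++ layer through JNI.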
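The buffer size check in the constructor rests on simple frame arithmetic: one frame holds one sample for every channel. The following is a minimal sketch of that arithmetic, not the framework's code. `PcmEncoding`, `bytesPerSample`, `frameSizeInBytes`, and `bufferSizeInFrames` are hypothetical names, and returning 0 for an invalid size is this sketch's own convention.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical stand-ins for the PCM encodings mentioned in the article.
enum class PcmEncoding { Pcm8Bit, Pcm16Bit, PcmFloat };

// Bytes occupied by one sample of one channel.
inline std::size_t bytesPerSample(PcmEncoding encoding) {
    switch (encoding) {
        case PcmEncoding::Pcm8Bit:  return 1;
        case PcmEncoding::Pcm16Bit: return 2;
        case PcmEncoding::PcmFloat: return 4;
    }
    return 0;  // not reached for valid enumerators
}

// One frame holds one sample for every channel.
inline std::size_t frameSizeInBytes(int channelCount, PcmEncoding encoding) {
    assert(channelCount > 0);
    return static_cast<std::size_t>(channelCount) * bytesPerSample(encoding);
}

// Number of whole frames in a buffer. Returns 0 (this sketch's way of
// signalling an invalid size) when the buffer is empty or is not a
// multiple of the frame size.
inline std::size_t bufferSizeInFrames(std::size_t bufferSizeInBytes,
                                      int channelCount,
                                      PcmEncoding encoding) {
    const std::size_t frameSize = frameSizeInBytes(channelCount, encoding);
    if (bufferSizeInBytes == 0 || bufferSizeInBytes % frameSize != 0) {
        return 0;
    }
    return bufferSizeInBytes / frameSize;
}
```

For 16-bit stereo a frame is 4 bytes, so the 4096-byte buffer from the example at the top of the article holds 1024 frames.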

android_media_AudioTrack.cpp

static const JNINativeMethod gMethods[] = {

// name, signature, funcPtr

{"native_is_direct_output_supported", "(IIIIIII)Z",

(void *)android_media_AudioTrack_is_direct_output_supported},

{"native_start", "()V", (void *)android_media_AudioTrack_start},

{"native_stop", "()V", (void *)android_media_AudioTrack_stop},

{"native_pause", "()V", (void *)android_media_AudioTrack_pause},

{"native_flush", "()V", (void *)android_media_AudioTrack_flush},

{"native_setup", "(Ljava/lang/Object;Ljava/lang/Object;[IIIIII[IJZILjava/lang/Object;)I",

(void *)android_media_AudioTrack_setup},

...

...

};

JNI methods are registered dynamically here; JNI itself is not covered in this article.

static jint android_media_AudioTrack_setup(JNIEnv *env, jobject thiz, jobject weak_this,

jobject jaa, jintArray jSampleRate,

jint channelPositionMask, jint channelIndexMask,

jint audioFormat, jint buffSizeInBytes, jint memoryMode,

jintArray jSession, jlong nativeAudioTrack,

jboolean offload, jint encapsulationMode,

jobject tunerConfiguration) {

...

...

...

// create the native AudioTrack object

lpTrack = new AudioTrack();

// read the AudioAttributes values

auto paa = JNIAudioAttributeHelper::makeUnique();

jint jStatus = JNIAudioAttributeHelper::nativeFromJava(env, jaa, paa.get());

if (jStatus != (jint)AUDIO_JAVA_SUCCESS) {

return jStatus;

}

ALOGV("AudioTrack_setup for usage=%d content=%d flags=0x%#x tags=%s",

paa->usage, paa->content_type, paa->flags, paa->tags);

// initialize the callback information:

// this data will be passed with every AudioTrack callback

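// Create the storage object that holds the callback data (and, in static mode, the shared audio buffer)

lpJniStorage = new AudioTrackJniStorage();

// Save a reference to the Java-layer AudioTrack in AudioTrackJniStorage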

lpJniStorage->mCallbackData.audioTrack_class = (jclass)env->NewGlobalRef(clazz);

// we use a weak reference so the AudioTrack object can be garbage collected.

lpJniStorage->mCallbackData.audioTrack_ref = env->NewGlobalRef(weak_this);

lpJniStorage->mCallbackData.isOffload = offload;

lpJniStorage->mCallbackData.busy = false;

audio_offload_info_t offloadInfo;

if (offload == JNI_TRUE) {

offloadInfo = AUDIO_INFO_INITIALIZER;

offloadInfo.format = format;

offloadInfo.sample_rate = sampleRateInHertz;

offloadInfo.channel_mask = nativeChannelMask;

offloadInfo.has_video = false;

offloadInfo.stream_type = AUDIO_STREAM_MUSIC; //required for offload

}

// initialize the native AudioTrack object

// Initialize the native AudioTrack object according to the mode

status_t status = NO_ERROR;

switch (memoryMode) {

case MODE_STREAM: // stream mode

status = lpTrack->set(

AUDIO_STREAM_DEFAULT,// stream type, but more info conveyed in paa (last argument)

sampleRateInHertz,

format,// word length, PCM

nativeChannelMask,

offload ? 0 : frameCount,

offload ? AUDIO_OUTPUT_FLAG_COMPRESS_OFFLOAD : AUDIO_OUTPUT_FLAG_NONE,

audioCallback, &(lpJniStorage->mCallbackData),//callback, callback data (user)

0,// notificationFrames == 0 since not using EVENT_MORE_DATA to feed the AudioTrack

0,// in stream mode the shared memory is created in AudioFlinger

true,// thread can call Java

sessionId,// audio session ID

offload ? AudioTrack::TRANSFER_SYNC_NOTIF_CALLBACK : AudioTrack::TRANSFER_SYNC,

offload ? &offloadInfo : NULL,

-1, -1, // default uid, pid values

paa.get());

break;

case MODE_STATIC: // static mode

// AudioTrack is using shared memory

// Allocate a shared memory region for the AudioTrack

if (!lpJniStorage->allocSharedMem(buffSizeInBytes)) {

ALOGE("Error creating AudioTrack in static mode: error creating mem heap base");

goto native_init_failure;

}

status = lpTrack->set(

AUDIO_STREAM_DEFAULT,// stream type, but more info conveyed in paa (last argument)

sampleRateInHertz,

format,// word length, PCM

nativeChannelMask,

frameCount,

AUDIO_OUTPUT_FLAG_NONE,

audioCallback, &(lpJniStorage->mCallbackData),//callback, callback data (user));

0,// notificationFrames == 0 since not using EVENT_MORE_DATA to feed the AudioTrack

lpJniStorage->mMemBase,// shared mem

true,// thread can call Java

sessionId,// audio session ID

AudioTrack::TRANSFER_SHARED,

NULL, // default offloadInfo

-1, -1, // default uid, pid values

paa.get());

break;

default:

ALOGE("Unknown mode %d", memoryMode);

goto native_init_failure;

}

if (status != NO_ERROR) {

ALOGE("Error %d initializing AudioTrack", status);

goto native_init_failure;

}

// Set caller name so it can be logged in destructor.

// MediaMetricsConstants.h: AMEDIAMETRICS_PROP_CALLERNAME_VALUE_JAVA

lpTrack->setCallerName("java");

} else { // end if (nativeAudioTrack == 0)

lpTrack = (AudioTrack*)nativeAudioTrack;

// TODO: We need to find out which members of the Java AudioTrack might

// need to be initialized from the Native AudioTrack

// these are directly returned from getters:

// mSampleRate

// mAudioFormat

// mStreamType

// mChannelConfiguration

// mChannelCount

// mState (?)

// mPlayState (?)

// these may be used internally (Java AudioTrack.audioParamCheck():

// mChannelMask

// mChannelIndexMask

// mDataLoadMode

// initialize the callback information:

// this data will be passed with every AudioTrack callback

// Create the storage object that holds the callback data

lpJniStorage = new AudioTrackJniStorage();

lpJniStorage->mCallbackData.audioTrack_class = (jclass)env->NewGlobalRef(clazz);

// we use a weak reference so the AudioTrack object can be garbage collected.

lpJniStorage->mCallbackData.audioTrack_ref = env->NewGlobalRef(weak_this);

lpJniStorage->mCallbackData.busy = false;

}

lpJniStorage->mAudioTrackCallback =

new JNIAudioTrackCallback(env, thiz, lpJniStorage->mCallbackData.audioTrack_ref,

javaAudioTrackFields.postNativeEventInJava);

lpTrack->setAudioTrackCallback(lpJniStorage->mAudioTrackCallback);

nSession = (jint *) env->GetPrimitiveArrayCritical(jSession, NULL);

if (nSession == NULL) {

ALOGE("Error creating AudioTrack: Error retrieving session id pointer");

goto native_init_failure;

}

// read the audio session ID back from AudioTrack in case we create a new session

...

...

...

...

return (jint) AUDIOTRACK_ERROR_SETUP_NATIVEINITFAILED;

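}

android_media_AudioTrack_setup() does several important things:

1. Creates the native-layer AudioTrack.

2. Creates the storage object for the callback data and the shared buffer.

3. Saves a reference to the Java-layer AudioTrack in AudioTrackJniStorage.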

struct audiotrack_callback_cookie {

jclass audioTrack_class;

jobject audioTrack_ref;

bool busy;

Condition cond;

bool isOffload;

};

class AudioTrackJniStorage {

public:

sp<MemoryHeapBase> mMemHeap;

sp<MemoryBase> mMemBase;

audiotrack_callback_cookie mCallbackData{};

sp<JNIDeviceCallback> mDeviceCallback;

sp<JNIAudioTrackCallback> mAudioTrackCallback;

bool allocSharedMem(int sizeInBytes) {

// Create an anonymous shared memory region

mMemHeap = new MemoryHeapBase(sizeInBytes, 0, "AudioTrack Heap Base");

if (mMemHeap->getHeapID() < 0) {

return false;

}

mMemBase = new MemoryBase(mMemHeap, 0, sizeInBytes);

return true;

}

};

// Create the anonymous shared memory region

MemoryHeapBase::MemoryHeapBase(size_t size, uint32_t flags, char const * name)

: mFD(-1), mSize(0), mBase(MAP_FAILED), mFlags(flags),

mDevice(nullptr), mNeedUnmap(false), mOffset(0)

{

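// Get the memory page size

const size_t pagesize = getpagesize();

// Round the size up to a page boundary

size = ((size + pagesize-1) & ~(pagesize-1));

// Create the shared memory: open the /dev/ashmem device and obtain a file descriptor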

int fd = ashmem_create_region(name == nullptr ? "MemoryHeapBase" : name, size);

ALOGE_IF(fd<0, "error creating ashmem region: %s", strerror(errno));

if (fd >= 0) {

// Map the anonymous shared memory into the current process's address space with mmap

if (mapfd(fd, size) == NO_ERROR) {

if (flags & READ_ONLY) {

ashmem_set_prot_region(fd, PROT_READ);

}

}

}

}

4. Initializes the native AudioTrack object according to the mode and calls lpTrack's set() method.
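The page rounding in the MemoryHeapBase constructor shown earlier, `(size + pagesize-1) & ~(pagesize-1)`, is a standard bit trick that works whenever the page size is a power of two. Here is a small standalone sketch of it; `roundUpToPage` is a hypothetical helper name, not part of the Android sources.

```cpp
#include <cassert>
#include <cstddef>

// Round size up to the next multiple of pageSize.
// pageSize must be a power of two: then (pageSize - 1) is a mask of the
// low bits, and clearing those bits after adding (pageSize - 1) rounds up.
inline std::size_t roundUpToPage(std::size_t size, std::size_t pageSize) {
    assert(pageSize != 0 && (pageSize & (pageSize - 1)) == 0);
    return (size + pageSize - 1) & ~(pageSize - 1);
}
```

With a 4096-byte page, a request for 1 byte becomes 4096, a request for exactly 4096 stays 4096, and a request for 4097 becomes 8192.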

AudioTrack.cpp

status_t AudioTrack::set(

audio_stream_type_t streamType,

uint32_t sampleRate,

audio_format_t format,

audio_channel_mask_t channelMask,

size_t frameCount,

audio_output_flags_t flags,

callback_t cbf,

void* user,

int32_t notificationFrames,

const sp<IMemory>& sharedBuffer,

bool threadCanCallJava,

audio_session_t sessionId,

transfer_type transferType,

const audio_offload_info_t *offloadInfo,

uid_t uid,

pid_t pid,

const audio_attributes_t* pAttributes,

bool doNotReconnect,

float maxRequiredSpeed,

audio_port_handle_t selectedDeviceId)

{

status_t status;

uint32_t channelCount;

pid_t callingPid;

pid_t myPid;

...

...

mThreadCanCallJava = threadCanCallJava;

mSelectedDeviceId = selectedDeviceId;

mSessionId = sessionId;

// Resolve the audio data transfer type

switch (transferType) {

case TRANSFER_DEFAULT:

if (sharedBuffer != 0) {

transferType = TRANSFER_SHARED;

} else if (cbf == NULL || threadCanCallJava) {

transferType = TRANSFER_SYNC;

} else {

transferType = TRANSFER_CALLBACK;

}

break;

case TRANSFER_CALLBACK:

case TRANSFER_SYNC_NOTIF_CALLBACK:

if (cbf == NULL || sharedBuffer != 0) {

ALOGE("%s(): Transfer type %s but cbf == NULL || sharedBuffer != 0",

convertTransferToText(transferType), __func__);

status = BAD_VALUE;

goto exit;

}

break;

case TRANSFER_OBTAIN:

case TRANSFER_SYNC:

if (sharedBuffer != 0) {

ALOGE("%s(): Transfer type TRANSFER_OBTAIN but sharedBuffer != 0", __func__);

status = BAD_VALUE;

goto exit;

}

break;

case TRANSFER_SHARED:

if (sharedBuffer == 0) {

ALOGE("%s(): Transfer type TRANSFER_SHARED but sharedBuffer == 0", __func__);

status = BAD_VALUE;

goto exit;

}

break;

default:

ALOGE("%s(): Invalid transfer type %d",

__func__, transferType);

status = BAD_VALUE;

goto exit;

}

mSharedBuffer = sharedBuffer;

mTransfer = transferType;

mDoNotReconnect = doNotReconnect;

ALOGV_IF(sharedBuffer != 0, "%s(): sharedBuffer: %p, size: %zu",

__func__, sharedBuffer->unsecurePointer(), sharedBuffer->size());

ALOGV("%s(): streamType %d frameCount %zu flags %04x",

__func__, streamType, frameCount, flags);

// invariant that mAudioTrack != 0 is true only after set() returns successfully

if (mAudioTrack != 0) {

ALOGE("%s(): Track already in use", __func__);

status = INVALID_OPERATION;

goto exit;

}

// handle default values first.

// Audio format setup

if (streamType == AUDIO_STREAM_DEFAULT) {

streamType = AUDIO_STREAM_MUSIC;

}

if (pAttributes == NULL) {

if (uint32_t(streamType) >= AUDIO_STREAM_PUBLIC_CNT) {

ALOGE("%s(): Invalid stream type %d", __func__, streamType);

status = BAD_VALUE;

goto exit;

}

mStreamType = streamType;

} else {

// stream type shouldn't be looked at, this track has audio attributes

memcpy(&mAttributes, pAttributes, sizeof(audio_attributes_t));

ALOGV("%s(): Building AudioTrack with attributes:"

" usage=%d content=%d flags=0x%x tags=[%s]",

__func__,

mAttributes.usage, mAttributes.content_type, mAttributes.flags, mAttributes.tags);

mStreamType = AUDIO_STREAM_DEFAULT;

audio_flags_to_audio_output_flags(mAttributes.flags, &flags);

}

...

...

...

// If a callback that supplies audio data was provided, start an AudioTrackThread to feed the audio data

if (cbf != NULL) {

mAudioTrackThread = new AudioTrackThread(*this);

mAudioTrackThread->run("AudioTrack", ANDROID_PRIORITY_AUDIO, 0 /*stack*/);

// thread begins in paused state, and will not reference us until start()

}

// create the IAudioTrack

{

AutoMutex lock(mLock);

status = createTrack_l(); // continued below

}

if (status != NO_ERROR) {

if (mAudioTrackThread != 0) {

mAudioTrackThread->requestExit(); // see comment in AudioTrack.h

mAudioTrackThread->requestExitAndWait();

mAudioTrackThread.clear();

}

goto exit;

}

...

...

...

mStatus = status;

return status;

}

set() processes its parameters; next, look at createTrack_l().
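The transfer-type switch in set() boils down to a small decision table. The sketch below restates it as a pure function so the rules are easier to see. `Transfer`, `Resolved`, and `resolveTransfer` are hypothetical names introduced for illustration, and the boolean flags stand in for `cbf != NULL` and `sharedBuffer != 0`.

```cpp
#include <cassert>

// Hypothetical mirror of the transfer types handled in set().
enum class Transfer { Default, Callback, Obtain, Sync, Shared, SyncNotifCallback };

struct Resolved {
    bool ok;        // false corresponds to the BAD_VALUE paths in set()
    Transfer type;  // the transfer type that would be stored
};

inline Resolved resolveTransfer(Transfer requested, bool hasCallback,
                                bool hasSharedBuffer, bool threadCanCallJava) {
    switch (requested) {
        case Transfer::Default:
            // Pick a concrete type from what the caller supplied.
            if (hasSharedBuffer) return {true, Transfer::Shared};
            if (!hasCallback || threadCanCallJava) return {true, Transfer::Sync};
            return {true, Transfer::Callback};
        case Transfer::Callback:
        case Transfer::SyncNotifCallback:
            // Needs a callback and must not use a shared buffer.
            return {hasCallback && !hasSharedBuffer, requested};
        case Transfer::Obtain:
        case Transfer::Sync:
            // Must not use a shared buffer.
            return {!hasSharedBuffer, requested};
        case Transfer::Shared:
            // Requires a shared buffer.
            return {hasSharedBuffer, requested};
    }
    return {false, requested};  // unknown value
}
```

Read against the JNI code earlier: stream mode passes TRANSFER_SYNC with no shared buffer, and static mode passes TRANSFER_SHARED together with the buffer allocated by allocSharedMem(), so both pass validation.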

status_t AudioTrack::createTrack_l()

{

status_t status;

bool callbackAdded = false;

// Get the proxy object for AudioFlinger

const sp<IAudioFlinger>& audioFlinger = AudioSystem::get_audio_flinger();

if (audioFlinger == 0) {

ALOGE("%s(%d): Could not get audioflinger",

__func__, mPortId);

status = NO_INIT;

goto exit;

}

{

...

...

...

...

IAudioFlinger::CreateTrackInput input;

if (mStreamType != AUDIO_STREAM_DEFAULT) {

input.attr = AudioSystem::streamTypeToAttributes(mStreamType);

} else {

input.attr = mAttributes;

}

input.config = AUDIO_CONFIG_INITIALIZER;

input.config.sample_rate = mSampleRate;

input.config.channel_mask = mChannelMask;

input.config.format = mFormat;

input.config.offload_info = mOffloadInfoCopy;

input.clientInfo.clientUid = mClientUid;

input.clientInfo.clientPid = mClientPid;

input.clientInfo.clientTid = -1;

if (mFlags & AUDIO_OUTPUT_FLAG_FAST) {

// It is currently meaningless to request SCHED_FIFO for a Java thread. Even if the

// application-level code follows all non-blocking design rules, the language runtime

// doesn't also follow those rules, so the thread will not benefit overall.

if (mAudioTrackThread != 0 && !mThreadCanCallJava) {

input.clientInfo.clientTid = mAudioTrackThread->getTid();

}

}

input.sharedBuffer = mSharedBuffer;

input.notificationsPerBuffer = mNotificationsPerBufferReq;

input.speed = 1.0;

if (audio_has_proportional_frames(mFormat) && mSharedBuffer == 0 &&

(mFlags & AUDIO_OUTPUT_FLAG_FAST) == 0) {

input.speed = !isPurePcmData_l() || isOffloadedOrDirect_l() ? 1.0f :

max(mMaxRequiredSpeed, mPlaybackRate.mSpeed);

}

input.flags = mFlags;

input.frameCount = mReqFrameCount;

input.notificationFrameCount = mNotificationFramesReq;

input.selectedDeviceId = mSelectedDeviceId;

input.sessionId = mSessionId;

input.audioTrackCallback = mAudioTrackCallback;

IAudioFlinger::CreateTrackOutput output;

sp<IAudioTrack> track = audioFlinger->createTrack(input,

output,

&status);

...

...

...

...

...

// FIXME compare to AudioRecord

sp<IMemory> iMem = track->getCblk();

if (iMem == 0) {

ALOGE("%s(%d): Could not get control block", __func__, mPortId);

status = NO_INIT;

goto exit;

}

// TODO: Using unsecurePointer() has some associated security pitfalls

// (see declaration for details).

// Either document why it is safe in this case or address the

// issue (e.g. by copying).

void *iMemPointer = iMem->unsecurePointer();

if (iMemPointer == NULL) {

ALOGE("%s(%d): Could not get control block pointer", __func__, mPortId);

status = NO_INIT;

goto exit;

}

// invariant that mAudioTrack != 0 is true only after set() returns successfully

if (mAudioTrack != 0) {

IInterface::asBinder(mAudioTrack)->unlinkToDeath(mDeathNotifier, this);

mDeathNotifier.clear();

}

// Save the Track proxy object and the anonymous shared memory proxy object in AudioTrack member variables

mAudioTrack = track;

mCblkMemory = iMem;

IPCThreadState::self()->flushCommands();

// Save the start address of the anonymous shared memory; an audio_track_cblk_t object sits at its head

audio_track_cblk_t* cblk = static_cast<audio_track_cblk_t*>(iMemPointer);

mCblk = cblk;

mAwaitBoost = false;

if (mFlags & AUDIO_OUTPUT_FLAG_FAST) {

if (output.flags & AUDIO_OUTPUT_FLAG_FAST) {

ALOGI("%s(%d): AUDIO_OUTPUT_FLAG_FAST successful; frameCount %zu -> %zu",

__func__, mPortId, mReqFrameCount, mFrameCount);

if (!mThreadCanCallJava) {

mAwaitBoost = true;

}

} else {

ALOGD("%s(%d): AUDIO_OUTPUT_FLAG_FAST denied by server; frameCount %zu -> %zu",

__func__, mPortId, mReqFrameCount, mFrameCount);

}

}

mFlags = output.flags;

//mOutput != output includes the case where mOutput == AUDIO_IO_HANDLE_NONE for first creation

if (mDeviceCallback != 0) {

if (mOutput != AUDIO_IO_HANDLE_NONE) {

AudioSystem::removeAudioDeviceCallback(this, mOutput, mPortId);

}

AudioSystem::addAudioDeviceCallback(this, output.outputId, output.portId);

callbackAdded = true;

}

mPortId = output.portId;

// We retain a copy of the I/O handle, but don't own the reference

mOutput = output.outputId;

mRefreshRemaining = true;

// Starting address of buffers in shared memory. If there is a shared buffer, buffers

// is the value of pointer() for the shared buffer, otherwise buffers points

// immediately after the control block. This address is for the mapping within client

// address space. AudioFlinger::TrackBase::mBuffer is for the server address space.

void* buffers;

if (mSharedBuffer == 0) {

buffers = cblk + 1;

} else {

// TODO: Using unsecurePointer() has some associated security pitfalls

// (see declaration for details).

// Either document why it is safe in this case or address the

// issue (e.g. by copying).

buffers = mSharedBuffer->unsecurePointer();

if (buffers == NULL) {

ALOGE("%s(%d): Could not get buffer pointer", __func__, mPortId);

status = NO_INIT;

goto exit;

}

}

mAudioTrack->attachAuxEffect(mAuxEffectId);

// If IAudioTrack is re-created, don't let the requested frameCount

// decrease. This can confuse clients that cache frameCount().

if (mFrameCount > mReqFrameCount) {

mReqFrameCount = mFrameCount;

}

// reset server position to 0 as we have new cblk.

mServer = 0;

// update proxy

if (mSharedBuffer == 0) {

mStaticProxy.clear();

mProxy = new AudioTrackClientProxy(cblk, buffers, mFrameCount, mFrameSize);

} else {

mStaticProxy = new StaticAudioTrackClientProxy(cblk, buffers, mFrameCount, mFrameSize);

mProxy = mStaticProxy;

}

mProxy->setVolumeLR(gain_minifloat_pack(

gain_from_float(mVolume[AUDIO_INTERLEAVE_LEFT]),

gain_from_float(mVolume[AUDIO_INTERLEAVE_RIGHT])));

mProxy->setSendLevel(mSendLevel);

const uint32_t effectiveSampleRate = adjustSampleRate(mSampleRate, mPlaybackRate.mPitch);

const float effectiveSpeed = adjustSpeed(mPlaybackRate.mSpeed, mPlaybackRate.mPitch);

const float effectivePitch = adjustPitch(mPlaybackRate.mPitch);

mProxy->setSampleRate(effectiveSampleRate);

AudioPlaybackRate playbackRateTemp = mPlaybackRate;

playbackRateTemp.mSpeed = effectiveSpeed;

playbackRateTemp.mPitch = effectivePitch;

mProxy->setPlaybackRate(playbackRateTemp);

mProxy->setMinimum(mNotificationFramesAct);

mDeathNotifier = new DeathNotifier(this);

IInterface::asBinder(mAudioTrack)->linkToDeath(mDeathNotifier, this);

// This is the first log sent from the AudioTrack client.

// The creation of the audio track by AudioFlinger (in the code above)

// is the first log of the AudioTrack and must be present before

// any AudioTrack client logs will be accepted.

...

...

...

...

...

mStatus = status;

// sp<IAudioTrack> track destructor will cause releaseOutput() to be called by AudioFlinger

return status;

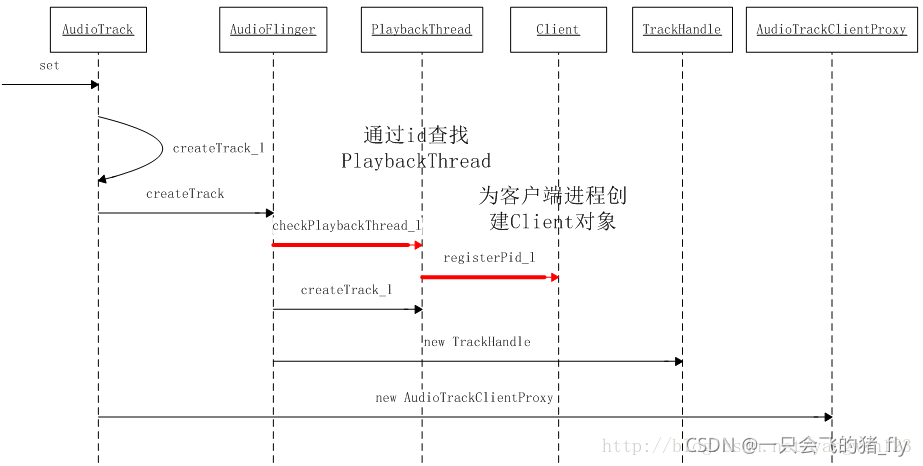

}上一篇文章中讲到,AudioPolicyService初始化的时候创建了AudioPolicyManager并调用了AudioFlinger::openOutput()函数打开一个默认的音频输出PlaybackThread线程,同时为该线程分配一个全局唯一的audio_io_handle_t值,并以键值对的形式保存在AudioFlinger的成员变量mPlaybackThreads中。在这里首先依据音频參数通过调用AudioSystem::getOutput()函数得到当前音频输出接口的PlaybackThread线程id号。同时传递给createTrack函数用于创建Track。
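The handle-to-thread bookkeeping described here can be pictured as a keyed map with a lookup that returns null for unknown handles. This is a minimal sketch under that assumption. `IoHandle`, `PlaybackThreadStub`, and `ThreadRegistry` are hypothetical stand-ins for audio_io_handle_t, PlaybackThread, and the mPlaybackThreads container, and the real code uses Android's own smart pointers and containers rather than the standard library.

```cpp
#include <map>
#include <memory>
#include <string>
#include <utility>

using IoHandle = int;  // stand-in for audio_io_handle_t

struct PlaybackThreadStub {
    std::string name;
};

class ThreadRegistry {
public:
    // Store a thread under a fresh, unique handle and return the handle.
    IoHandle add(std::shared_ptr<PlaybackThreadStub> thread) {
        const IoHandle handle = mNextHandle++;
        mThreads[handle] = std::move(thread);
        return handle;
    }

    // Return the thread registered for a handle, or nullptr if there is none.
    PlaybackThreadStub* check(IoHandle handle) const {
        const auto it = mThreads.find(handle);
        return it == mThreads.end() ? nullptr : it->second.get();
    }

private:
    std::map<IoHandle, std::shared_ptr<PlaybackThreadStub>> mThreads;
    IoHandle mNextHandle = 1;
};
```

A caller that gets nullptr back would treat it as an error, much as createTrack() does when no playback thread matches the output handle.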

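Audio playback requires AudioTrack to write audio data and AudioFlinger to mix it once received, so a data channel has to be set up between AudioTrack and AudioFlinger. Because the two live in different process spaces, Android uses Binder for the communication between them.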

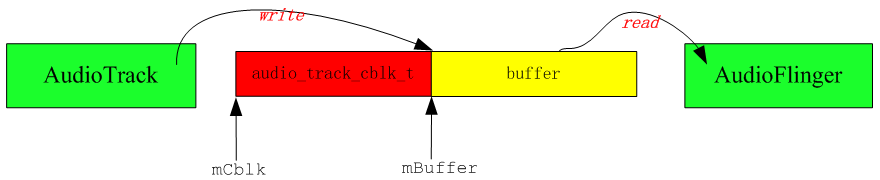

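IAudioTrack ties AudioTrack and AudioFlinger together. In static mode, the anonymous shared memory that holds the audio data is created on the AudioTrack side. In stream mode, it is created on the AudioFlinger side. The shared memory differs between the two modes: in stream mode an audio_track_cblk_t object is created at the head of the region to keep the producer (AudioTrack) and the consumer (AudioFlinger) in step. createTrack creates a Track object inside AudioFlinger.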
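The stream-mode layout, a control block at the head of the region with the audio data right after it (the `buffers = cblk + 1` line in createTrack_l()), can be imitated in a single process. The sketch below is a heavily simplified assumption of how such a header could coordinate one producer and one consumer. `ControlBlock` and `SharedRegion` are hypothetical, the real audio_track_cblk_t carries many more fields, and an ordinary vector stands in for the ashmem region.

```cpp
#include <atomic>
#include <cstddef>
#include <new>
#include <vector>

// Hypothetical, simplified control block placed at the head of the region.
struct ControlBlock {
    std::atomic<std::size_t> rear{0};   // total bytes written by the producer
    std::atomic<std::size_t> front{0};  // total bytes read by the consumer
};

class SharedRegion {
public:
    explicit SharedRegion(std::size_t capacity)
        : mStorage(sizeof(ControlBlock) + capacity), mCapacity(capacity) {
        // Construct the header in place; the data area starts right after it.
        mCblk = new (mStorage.data()) ControlBlock();
        mData = reinterpret_cast<unsigned char*>(mCblk + 1);
    }
    SharedRegion(const SharedRegion&) = delete;
    SharedRegion& operator=(const SharedRegion&) = delete;

    // Producer side: copy as much as fits, return the number of bytes written.
    std::size_t write(const unsigned char* src, std::size_t count) {
        const std::size_t rear = mCblk->rear.load(std::memory_order_relaxed);
        const std::size_t front = mCblk->front.load(std::memory_order_acquire);
        const std::size_t space = mCapacity - (rear - front);
        const std::size_t n = count < space ? count : space;
        for (std::size_t i = 0; i < n; ++i) {
            mData[(rear + i) % mCapacity] = src[i];
        }
        mCblk->rear.store(rear + n, std::memory_order_release);
        return n;
    }

    // Consumer side: copy what is available, return the number of bytes read.
    std::size_t read(unsigned char* dst, std::size_t count) {
        const std::size_t front = mCblk->front.load(std::memory_order_relaxed);
        const std::size_t rear = mCblk->rear.load(std::memory_order_acquire);
        const std::size_t filled = rear - front;
        const std::size_t n = count < filled ? count : filled;
        for (std::size_t i = 0; i < n; ++i) {
            dst[i] = mData[(front + i) % mCapacity];
        }
        mCblk->front.store(front + n, std::memory_order_release);
        return n;
    }

    const void* header() const { return mCblk; }
    const void* data() const { return mData; }

private:
    std::vector<unsigned char> mStorage;  // stand-in for the shared memory
    std::size_t mCapacity;
    ControlBlock* mCblk;
    unsigned char* mData;
};
```

The two counters only ever grow, and the difference between them is the number of bytes waiting to be consumed. That is the kind of bookkeeping a shared header makes possible without sending a Binder call for every buffer.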

AudioFlinger.cpp

sp<IAudioTrack> AudioFlinger::createTrack(const CreateTrackInput& input,

CreateTrackOutput& output,

status_t *status)

{

sp<PlaybackThread::Track> track; // will hold the Track that gets created

sp<TrackHandle> trackHandle;

sp<Client> client;

status_t lStatus;

audio_stream_type_t streamType;

audio_port_handle_t portId = AUDIO_PORT_HANDLE_NONE;

std::vector<audio_io_handle_t> secondaryOutputs;

bool updatePid = (input.clientInfo.clientPid == -1);

const uid_t callingUid = IPCThreadState::self()->getCallingUid();

uid_t clientUid = input.clientInfo.clientUid;

audio_io_handle_t effectThreadId = AUDIO_IO_HANDLE_NONE;

std::vector<int> effectIds;

audio_attributes_t localAttr = input.attr;

if (!isAudioServerOrMediaServerUid(callingUid)) {

ALOGW_IF(clientUid != callingUid,

"%s uid %d tried to pass itself off as %d",

__FUNCTION__, callingUid, clientUid);

clientUid = callingUid;

updatePid = true;

}

pid_t clientPid = input.clientInfo.clientPid;

const pid_t callingPid = IPCThreadState::self()->getCallingPid();

if (updatePid) {

ALOGW_IF(clientPid != -1 && clientPid != callingPid,

"%s uid %d pid %d tried to pass itself off as pid %d",

__func__, callingUid, callingPid, clientPid);

clientPid = callingPid;

}

audio_session_t sessionId = input.sessionId;

if (sessionId == AUDIO_SESSION_ALLOCATE) {

sessionId = (audio_session_t) newAudioUniqueId(AUDIO_UNIQUE_ID_USE_SESSION);

} else if (audio_unique_id_get_use(sessionId) != AUDIO_UNIQUE_ID_USE_SESSION) {

lStatus = BAD_VALUE;

goto Exit;

}

output.sessionId = sessionId;

output.outputId = AUDIO_IO_HANDLE_NONE;

output.selectedDeviceId = input.selectedDeviceId;

// Get the output device (this call ends up in AudioPolicyManager.cpp, which obtains the device directly through mEngine)

lStatus = AudioSystem::getOutputForAttr(&localAttr, &output.outputId, sessionId, &streamType,

clientPid, clientUid, &input.config, input.flags,

&output.selectedDeviceId, &portId, &secondaryOutputs);

if (lStatus != NO_ERROR || output.outputId == AUDIO_IO_HANDLE_NONE) {

ALOGE("createTrack() getOutputForAttr() return error %d or invalid output handle", lStatus);

goto Exit;

}

....

....

....

....

....

{

Mutex::Autolock _l(mLock);

// Look up the PlaybackThread by output handle; openOutput() stored the playback threads as key/value pairs in AudioFlinger's mPlaybackThreads

PlaybackThread *thread = checkPlaybackThread_l(output.outputId);

if (thread == NULL) {

ALOGE("no playback thread found for output handle %d", output.outputId);

lStatus = BAD_VALUE;

goto Exit;

}

// Check whether a Client object already exists for the client process pid; if not, create one

client = registerPid(clientPid);

PlaybackThread *effectThread = NULL;

// check if an effect chain with the same session ID is present on another

// output thread and move it here.

// Iterate over all playback threads except the selected output thread. If one of them has an effect chain with the same sessionId, use it as this Track's effectThread.

for (size_t i = 0; i < mPlaybackThreads.size(); i++) {

sp<PlaybackThread> t = mPlaybackThreads.valueAt(i);

if (mPlaybackThreads.keyAt(i) != output.outputId) {

uint32_t sessions = t->hasAudioSession(sessionId);

if (sessions & ThreadBase::EFFECT_SESSION) {

effectThread = t.get();

break;

}

}

}

ALOGV("createTrack() sessionId: %d", sessionId);

output.sampleRate = input.config.sample_rate;

output.frameCount = input.frameCount;

output.notificationFrameCount = input.notificationFrameCount;

output.flags = input.flags;

// Create the Track in the PlaybackThread that was found

track = thread->createTrack_l(client, streamType, localAttr, &output.sampleRate,

input.config.format, input.config.channel_mask,

&output.frameCount, &output.notificationFrameCount,

input.notificationsPerBuffer, input.speed,

input.sharedBuffer, sessionId, &output.flags,

callingPid, input.clientInfo.clientTid, clientUid,

&lStatus, portId, input.audioTrackCallback);

LOG_ALWAYS_FATAL_IF((lStatus == NO_ERROR) && (track == 0));

// we don't abort yet if lStatus != NO_ERROR; there is still work to be done regardless

output.afFrameCount = thread->frameCount();

output.afSampleRate = thread->sampleRate();

output.afLatencyMs = thread->latency();

output.portId = portId;

if (lStatus == NO_ERROR) {

// Connect secondary outputs. Failure on a secondary output must not imped the primary

// Any secondary output setup failure will lead to a desync between the AP and AF until

// the track is destroyed.

TeePatches teePatches;

for (audio_io_handle_t secondaryOutput : secondaryOutputs) {

PlaybackThread *secondaryThread = checkPlaybackThread_l(secondaryOutput);

if (secondaryThread == NULL) {

ALOGE("no playback thread found for secondary output %d", output.outputId);

continue;

}

....

....

....

....

....

....

// The Track has been created; it still needs a TrackHandle proxy object

// return handle to client

trackHandle = new TrackHandle(track);

Exit:

if (lStatus != NO_ERROR && output.outputId != AUDIO_IO_HANDLE_NONE) {

AudioSystem::releaseOutput(portId);

}

*status = lStatus;

return trackHandle;

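}

AudioFlinger creates a Client object for the application process to communicate with it. It then finds the PlaybackThread by its output handle and hands Track creation over to that thread. Because the Track itself cannot communicate across processes, once the PlaybackThread has created it, a TrackHandle object is created to proxy communication on its behalf.

(Source: online resources)

// Create a Client object, keyed by process pid, for the client requesting audio playback.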

sp<AudioFlinger::Client> AudioFlinger::registerPid(pid_t pid)

{

Mutex::Autolock _cl(mClientLock);

// If pid is already in the mClients wp<> map, then use that entry

// (for which promote() is always != 0), otherwise create a new entry and Client.

sp<Client> client = mClients.valueFor(pid).promote();

if (client == 0) {

client = new Client(this, pid);

mClients.add(pid, client);

}

return client;

}

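AudioFlinger's mClients member stores pid and Client objects as key/value pairs. The function first fetches the Client for the pid; if there is none, it creates a new Client object for the client process.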
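The registerPid() pattern, a map of weak references that is promoted on lookup and refilled on a miss, translates directly to standard C++. The sketch below uses `std::weak_ptr` and `std::shared_ptr` in place of Android's `wp<>` and `sp<>`. `ClientStub` and `ClientRegistry` are hypothetical names, and a plain `int` stands in for `pid_t`.

```cpp
#include <map>
#include <memory>
#include <mutex>

struct ClientStub {
    explicit ClientStub(int clientPid) : pid(clientPid) {}
    int pid;
};

class ClientRegistry {
public:
    // Return the live client for pid, creating one if none is alive.
    std::shared_ptr<ClientStub> registerPid(int pid) {
        std::lock_guard<std::mutex> lock(mLock);
        // lock() plays the role of promote(): it yields an empty pointer
        // when the entry is missing or the client has already been destroyed.
        std::shared_ptr<ClientStub> client = mClients[pid].lock();
        if (!client) {
            client = std::make_shared<ClientStub>(pid);
            mClients[pid] = client;
        }
        return client;
    }

private:
    std::mutex mLock;
    std::map<int, std::weak_ptr<ClientStub>> mClients;
};
```

Because the map holds only weak references, the registry never keeps a client alive on its own. Once every track of a process has let go of its client, the next registerPid() call for that pid builds a fresh one.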

AudioFlinger::Client::Client(const sp<AudioFlinger>& audioFlinger, pid_t pid)

: RefBase(),

mAudioFlinger(audioFlinger),

mPid(pid)

{

mMemoryDealer = new MemoryDealer(

audioFlinger->getClientSharedHeapSize(),

(std::string("AudioFlinger::Client(") + std::to_string(pid) + ")").c_str());

}

This creates a MemoryDealer object, which is used to allocate shared memory.

MemoryDealer::MemoryDealer(size_t size, const char* name, uint32_t flags)

: mHeap(new MemoryHeapBase(size, flags, name)), // create shared memory of the given size

mAllocator(new SimpleBestFitAllocator(size)) // create the memory allocator

{

}

SimpleBestFitAllocator::SimpleBestFitAllocator(size_t size)

{

size_t pagesize = getpagesize();

mHeapSize = ((size + pagesize-1) & ~(pagesize-1));

chunk_t* node = new chunk_t(0, mHeapSize / kMemoryAlign);

mList.insertHead(node);

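}

When an AudioTrack in an application process asks AudioFlinger to create a Track object in a PlaybackThread, AudioFlinger first creates a Client object for that process together with a block of shared memory. The original source gives its size as 2 MB; in the code above the size comes from getClientSharedHeapSize(). When the Track is created, it allocates its playback buffer from this shared memory.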
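To make the idea of carving track buffers out of one client heap more concrete, here is a minimal best-fit allocator sketch over a list of chunks. It is an illustration of the general technique only, not SimpleBestFitAllocator itself: `BestFitSketch` and `kNoMemory` are hypothetical names, and the sketch works in raw bytes and ignores the alignment units the real allocator uses.

```cpp
#include <cstddef>
#include <iterator>
#include <list>

const std::ptrdiff_t kNoMemory = -1;  // returned when no chunk is large enough

class BestFitSketch {
public:
    explicit BestFitSketch(std::size_t heapSize) {
        mChunks.push_back(Chunk{0, heapSize, true});  // one big free chunk
    }

    // Allocate from the smallest free chunk that fits; return its offset.
    std::ptrdiff_t allocate(std::size_t size) {
        if (size == 0) return kNoMemory;
        auto best = mChunks.end();
        for (auto it = mChunks.begin(); it != mChunks.end(); ++it) {
            if (it->free && it->size >= size &&
                (best == mChunks.end() || it->size < best->size)) {
                best = it;
            }
        }
        if (best == mChunks.end()) return kNoMemory;
        if (best->size > size) {
            // Split: the remainder stays free right after the allocation.
            mChunks.insert(std::next(best),
                           Chunk{best->start + size, best->size - size, true});
            best->size = size;
        }
        best->free = false;
        return static_cast<std::ptrdiff_t>(best->start);
    }

    // Free the chunk at offset and merge it with free neighbours.
    void deallocate(std::ptrdiff_t offset) {
        for (auto it = mChunks.begin(); it != mChunks.end(); ++it) {
            if (it->free || static_cast<std::ptrdiff_t>(it->start) != offset) {
                continue;
            }
            it->free = true;
            auto next = std::next(it);
            if (next != mChunks.end() && next->free) {
                it->size += next->size;
                mChunks.erase(next);
            }
            if (it != mChunks.begin()) {
                auto prev = std::prev(it);
                if (prev->free) {
                    prev->size += it->size;
                    mChunks.erase(it);
                }
            }
            return;
        }
    }

private:
    struct Chunk {
        std::size_t start;
        std::size_t size;
        bool free;
    };
    std::list<Chunk> mChunks;
};
```

Best fit keeps large free chunks intact for large requests, at the cost of scanning the whole list on every allocation. With only a handful of tracks per client, that scan is cheap.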

Threads.cpp

// PlaybackThread::createTrack_l() must be called with AudioFlinger::mLock held

sp<AudioFlinger::PlaybackThread::Track> AudioFlinger::PlaybackThread::createTrack_l(

const sp<AudioFlinger::Client>& client,

audio_stream_type_t streamType,

const audio_attributes_t& attr,

uint32_t *pSampleRate,

audio_format_t format,

audio_channel_mask_t channelMask,

size_t *pFrameCount,

size_t *pNotificationFrameCount,

uint32_t notificationsPerBuffer,

float speed,

const sp<IMemory>& sharedBuffer,

audio_session_t sessionId,

audio_output_flags_t *flags,

pid_t creatorPid,

pid_t tid,

uid_t uid,

status_t *status,

audio_port_handle_t portId,

const sp<media::IAudioTrackCallback>& callback)

{

size_t frameCount = *pFrameCount;

size_t notificationFrameCount = *pNotificationFrameCount;

sp<Track> track;

status_t lStatus;

audio_output_flags_t outputFlags = mOutput->flags;

audio_output_flags_t requestedFlags = *flags;

uint32_t sampleRate;

if (sharedBuffer != 0 && checkIMemory(sharedBuffer) != NO_ERROR) {

lStatus = BAD_VALUE;

goto Exit;

}

if (*pSampleRate == 0) {

*pSampleRate = mSampleRate;

}

sampleRate = *pSampleRate;

// special case for FAST flag considered OK if fast mixer is present

if (hasFastMixer()) {

outputFlags = (audio_output_flags_t)(outputFlags | AUDIO_OUTPUT_FLAG_FAST);

}

....

....

....

....

....

track = new Track(this, client, streamType, attr, sampleRate, format,

channelMask, frameCount,

nullptr /* buffer */, (size_t)0 /* bufferSize */, sharedBuffer,

sessionId, creatorPid, uid, *flags, TrackBase::TYPE_DEFAULT, portId);

lStatus = track != 0 ? track->initCheck() : (status_t) NO_MEMORY;

if (lStatus != NO_ERROR) {

ALOGE("createTrack_l() initCheck failed %d; no control block?", lStatus);

// track must be cleared from the caller as the caller has the AF lock

goto Exit;

}

mTracks.add(track);

{

Mutex::Autolock _atCbL(mAudioTrackCbLock);

if (callback.get() != nullptr) {

mAudioTrackCallbacks.emplace(callback);

}

}

sp<EffectChain> chain = getEffectChain_l(sessionId);

if (chain != 0) {

ALOGV("createTrack_l() setting main buffer %p", chain->inBuffer());

track->setMainBuffer(chain->inBuffer());

chain->setStrategy(AudioSystem::getStrategyForStream(track->streamType()));

chain->incTrackCnt();

}

if ((*flags & AUDIO_OUTPUT_FLAG_FAST) && (tid != -1)) {

pid_t callingPid = IPCThreadState::self()->getCallingPid();

// we don't have CAP_SYS_NICE, nor do we want to have it as it's too powerful,

// so ask activity manager to do this on our behalf

sendPrioConfigEvent_l(callingPid, tid, kPriorityAudioApp, true /*forApp*/);

}

}

lStatus = NO_ERROR;

Exit:

*status = lStatus;

return track;

}

The function above creates a Track. Because Track inherits from TrackBase, we look at the TrackBase constructor first.

// TrackBase constructor must be called with AudioFlinger::mLock held

AudioFlinger::ThreadBase::TrackBase::TrackBase(

ThreadBase *thread,

const sp<Client>& client,

const audio_attributes_t& attr,

uint32_t sampleRate,

audio_format_t format,

audio_channel_mask_t channelMask,

size_t frameCount,

void *buffer,

size_t bufferSize,

audio_session_t sessionId,

pid_t creatorPid,

uid_t clientUid,

bool isOut,

alloc_type alloc,

track_type type,

audio_port_handle_t portId,

std::string metricsId)

: RefBase(),

mThread(thread),

mClient(client),

mCblk(NULL),

// mBuffer, mBufferSize

mState(IDLE),

mAttr(attr),

mSampleRate(sampleRate),

mFormat(format),

mChannelMask(channelMask),

mChannelCount(isOut ?

audio_channel_count_from_out_mask(channelMask) :

audio_channel_count_from_in_mask(channelMask)),

mFrameSize(audio_has_proportional_frames(format) ?

mChannelCount * audio_bytes_per_sample(format) : sizeof(int8_t)),

mFrameCount(frameCount),

mSessionId(sessionId),

mIsOut(isOut),

mId(android_atomic_inc(&nextTrackId)),

mTerminated(false),

mType(type),

mThreadIoHandle(thread ? thread->id() : AUDIO_IO_HANDLE_NONE),

mPortId(portId),

mIsInvalid(false),

mTrackMetrics(std::move(metricsId), isOut),

mCreatorPid(creatorPid)

{

const uid_t callingUid = IPCThreadState::self()->getCallingUid();

if (!isAudioServerOrMediaServerUid(callingUid) || clientUid == AUDIO_UID_INVALID) {

ALOGW_IF(clientUid != AUDIO_UID_INVALID && clientUid != callingUid,

"%s(%d): uid %d tried to pass itself off as %d",

__func__, mId, callingUid, clientUid);

clientUid = callingUid;

}

// clientUid contains the uid of the app that is responsible for this track, so we can blame

// battery usage on it.

// take the uid of the application process

mUid = clientUid;

// ALOGD("Creating track with %d buffers @ %d bytes", bufferCount, bufferSize);

size_t minBufferSize = buffer == NULL ? roundup(frameCount) : frameCount;

// check overflow when computing bufferSize due to multiplication by mFrameSize.

if (minBufferSize < frameCount // roundup rounds down for values above UINT_MAX / 2

|| mFrameSize == 0 // format needs to be correct

|| minBufferSize > SIZE_MAX / mFrameSize) {

android_errorWriteLog(0x534e4554, "34749571");

return;

}

minBufferSize *= mFrameSize;

if (buffer == nullptr) {

bufferSize = minBufferSize; // allocated here.

} else if (minBufferSize > bufferSize) {

android_errorWriteLog(0x534e4554, "38340117");

return;

}

size_t size = sizeof(audio_track_cblk_t);

if (buffer == NULL && alloc == ALLOC_CBLK) {

// check overflow when computing allocation size for streaming tracks.

if (size > SIZE_MAX - bufferSize) {

android_errorWriteLog(0x534e4554, "34749571");

return;

}

size += bufferSize;

}

if (client != 0) {

// ask the Client's MemoryDealer to allocate the buffer

mCblkMemory = client->heap()->allocate(size);

if (mCblkMemory == 0 ||

(mCblk = static_cast<audio_track_cblk_t *>(mCblkMemory->unsecurePointer())) == NULL) {

ALOGE("%s(%d): not enough memory for AudioTrack size=%zu", __func__, mId, size);

client->heap()->dump("AudioTrack");

mCblkMemory.clear();

return;

}

} else {

mCblk = (audio_track_cblk_t *) malloc(size);

if (mCblk == NULL) {

ALOGE("%s(%d): not enough memory for AudioTrack size=%zu", __func__, mId, size);

return;

}

}

....

....

....

....

mBufferSize = bufferSize;

#ifdef TEE_SINK

mTee.set(sampleRate, mChannelCount, format, NBAIO_Tee::TEE_FLAG_TRACK);

#endif

}

}

The main job of the TrackBase constructor is to allocate shared memory for audio playback. Next, we look at the Track constructor.
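The size computation in the TrackBase constructor guards every multiplication and addition against overflow before allocating. A minimal sketch of that pattern follows. The helper names `trackAllocationSize` and `pcmFrameSize` are hypothetical, the control block size is passed in as a plain number instead of `sizeof(audio_track_cblk_t)`, and the `roundup(frameCount)` step from the quoted code is left out.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Bytes per frame for linear PCM: one sample per channel.
inline std::size_t pcmFrameSize(std::size_t channelCount,
                                std::size_t bytesPerSample) {
    return channelCount * bytesPerSample;
}

// Hypothetical helper: computes cblkSize + frameCount * frameSize.
// Returns false (and leaves *out untouched) if the format is invalid
// or if any intermediate result would overflow size_t.
inline bool trackAllocationSize(std::size_t cblkSize, std::size_t frameCount,
                                std::size_t frameSize, std::size_t* out) {
    if (frameSize == 0) return false;                    // invalid format
    if (frameCount > SIZE_MAX / frameSize) return false; // multiply overflows
    const std::size_t bufferSize = frameCount * frameSize;
    if (cblkSize > SIZE_MAX - bufferSize) return false;  // add overflows
    *out = cblkSize + bufferSize;
    return true;
}
```

For 16-bit stereo PCM the frame size is 4 bytes, so 1024 frames plus a 64-byte control block need 4160 bytes. Checking `frameCount > SIZE_MAX / frameSize` before multiplying is what keeps a malicious frame count from wrapping around to a small allocation.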

// Track constructor must be called with AudioFlinger::mLock and ThreadBase::mLock held

AudioFlinger::PlaybackThread::Track::Track(

PlaybackThread *thread,

const sp<Client>& client,

audio_stream_type_t streamType,

const audio_attributes_t& attr,

...

...

...

mVolumeHandler(new media::VolumeHandler(sampleRate)),

mOpPlayAudioMonitor(OpPlayAudioMonitor::createIfNeeded(uid, attr, id(), streamType)),

// mSinkTimestamp

mFrameCountToBeReady(frameCountToBeReady),

mFastIndex(-1),

mCachedVolume(1.0),

/* The track might not play immediately after being active, similarly as if its volume was 0.

* When the track starts playing, its volume will be computed. */

mFinalVolume(0.f),

mResumeToStopping(false),

mFlushHwPending(false),

mFlags(flags)

{

// client == 0 implies sharedBuffer == 0

ALOG_ASSERT(!(client == 0 && sharedBuffer != 0));

ALOGV_IF(sharedBuffer != 0, "%s(%d): sharedBuffer: %p, size: %zu",

__func__, mId, sharedBuffer->unsecurePointer(), sharedBuffer->size());

if (mCblk == NULL) {

return;

}

if (!thread->isTrackAllowed_l(channelMask, format, sessionId, uid)) {

ALOGE("%s(%d): no more tracks available", __func__, mId);

releaseCblk(); // this makes the track invalid.

return;

}

if (sharedBuffer == 0) { // stream mode

mAudioTrackServerProxy = new AudioTrackServerProxy(mCblk, mBuffer, frameCount,

mFrameSize, !isExternalTrack(), sampleRate);

} else { // static mode

mAudioTrackServerProxy = new StaticAudioTrackServerProxy(mCblk, mBuffer, frameCount,

mFrameSize, sampleRate);

}

mServerProxy = mAudioTrackServerProxy;

...

...

...

...

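}

The Track constructor creates a different server proxy object depending on the playback mode: AudioTrackServerProxy for stream mode and StaticAudioTrackServerProxy for static mode.

When the Client object was constructed, it created a MemoryDealer allocator along with a 2 MB block of anonymous shared memory. TrackBase uses that MemoryDealer here to allocate a buffer of the requested size from the anonymous shared memory.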

sp<IMemory> MemoryDealer::allocate(size_t size)

{

sp<IMemory> memory;

// allocate `size` bytes of shared memory and get the offset of the buffer within the heap

const ssize_t offset = allocator()->allocate(size);

if (offset >= 0) {

// wrap the allocated buffer in an Allocation object

memory = new Allocation(this, heap(), offset, size);

}

return memory;

}

size_t SimpleBestFitAllocator::allocate(size_t size, uint32_t flags)

{

Mutex::Autolock _l(mLock);

ssize_t offset = alloc(size, flags);

return offset;

}

ssize_t SimpleBestFitAllocator::alloc(size_t size, uint32_t flags)

{

if (size == 0) {

return 0;

}

size = (size + kMemoryAlign-1) / kMemoryAlign;

chunk_t* free_chunk = nullptr;

chunk_t* cur = mList.head();

size_t pagesize = getpagesize();

while (cur) {

int extra = 0;

if (flags & PAGE_ALIGNED)

extra = ( -cur->start & ((pagesize/kMemoryAlign)-1) ) ;

// best fit

if (cur->free && (cur->size >= (size+extra))) {

if ((!free_chunk) || (cur->size < free_chunk->size)) {

free_chunk = cur;

}

if (cur->size == size) {

break;

}

}

cur = cur->next;

}

if (free_chunk) {

const size_t free_size = free_chunk->size;

free_chunk->free = 0;

free_chunk->size = size;

if (free_size > size) {

int extra = 0;

if (flags & PAGE_ALIGNED)

extra = ( -free_chunk->start & ((pagesize/kMemoryAlign)-1) ) ;

if (extra) {

chunk_t* split = new chunk_t(free_chunk->start, extra);

free_chunk->start += extra;

mList.insertBefore(free_chunk, split);

}

ALOGE_IF((flags&PAGE_ALIGNED) &&

((free_chunk->start*kMemoryAlign)&(pagesize-1)),

"PAGE_ALIGNED requested, but page is not aligned!!!");

const ssize_t tail_free = free_size - (size+extra);

if (tail_free > 0) {

chunk_t* split = new chunk_t(

free_chunk->start + free_chunk->size, tail_free);

mList.insertAfter(free_chunk, split);

}

}

return (free_chunk->start)*kMemoryAlign;

}

return NO_MEMORY;

}
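The best-fit search in `alloc()` is easier to follow without the alignment handling. The sketch below is a simplified stand-in, not the AOSP class: `BestFitSketch` is a hypothetical name, sizes are in bytes instead of `kMemoryAlign` units, there is no locking or page alignment, and `release()` does not merge neighbouring free chunks.

```cpp
#include <cassert>
#include <cstddef>
#include <iterator>
#include <list>

class BestFitSketch {
    struct Chunk { std::size_t start; std::size_t size; bool free; };
    std::list<Chunk> chunks_;

public:
    explicit BestFitSketch(std::size_t heapSize) {
        chunks_.push_back(Chunk{0, heapSize, true});  // one big free chunk
    }

    // Returns the offset of the allocated block, or -1 if nothing fits.
    std::ptrdiff_t allocate(std::size_t size) {
        if (size == 0) return 0;  // mirrors the quoted code
        auto best = chunks_.end();
        for (auto it = chunks_.begin(); it != chunks_.end(); ++it) {
            if (!it->free || it->size < size) continue;
            // Keep the smallest free chunk that is still large enough.
            if (best == chunks_.end() || it->size < best->size) best = it;
            if (it->size == size) break;  // exact fit, stop searching
        }
        if (best == chunks_.end()) return -1;
        const std::size_t tail = best->size - size;
        best->free = false;
        best->size = size;
        if (tail > 0) {
            // Split off the unused remainder as a new free chunk.
            chunks_.insert(std::next(best),
                           Chunk{best->start + size, tail, true});
        }
        return static_cast<std::ptrdiff_t>(best->start);
    }

    // Marks the chunk starting at `offset` as free again (no coalescing).
    void release(std::size_t offset) {
        for (auto& c : chunks_) {
            if (c.start == offset) { c.free = true; return; }
        }
    }
};
```

On a 100-byte heap, allocating 40, 10 and 20 bytes and then releasing the first block leaves two free chunks, of 40 and 30 bytes. A following request for 25 bytes is served from the 30-byte chunk at offset 70, because it is the smallest chunk that fits, which is exactly the choice the `// best fit` branch in the quoted code makes.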

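When createTrack is called, AudioFlinger allocates the required memory and returns it to AudioTrack through the IMemory interface. AudioTrack and AudioFlinger therefore operate on the same audio_track_cblk_t, which implements a ring-shaped shared data region. AudioTrack writes audio data into the shared region; AudioFlinger reads it out, mixes it in the Mixer, and sends the result to AudioHardware for playback. AudioTrack is the producer and AudioFlinger is the consumer.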
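The producer/consumer relationship over a ring-shaped region can be illustrated with a small single-producer, single-consumer ring buffer. This is only a sketch under simplifying assumptions: `PcmRing` is a hypothetical class living in one process, whereas the real audio_track_cblk_t sits in shared memory between two processes and uses its own synchronization, which is not shown in the excerpts above.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Ring of 16-bit PCM samples. `capacity` must be a power of two so that
// positions can be mapped to slots with a bit mask.
class PcmRing {
public:
    explicit PcmRing(std::size_t capacity)
        : buf_(capacity), mask_(capacity - 1) {
        assert(capacity != 0 && (capacity & (capacity - 1)) == 0);
    }

    // Producer side (the AudioTrack role): copies in up to `n` samples
    // and returns how many actually fit.
    std::size_t write(const std::int16_t* src, std::size_t n) {
        const std::size_t w = write_.load(std::memory_order_relaxed);
        const std::size_t r = read_.load(std::memory_order_acquire);
        const std::size_t space = buf_.size() - (w - r);
        if (n > space) n = space;
        for (std::size_t i = 0; i < n; ++i) buf_[(w + i) & mask_] = src[i];
        write_.store(w + n, std::memory_order_release);
        return n;
    }

    // Consumer side (the AudioFlinger role): copies out up to `n` samples
    // and returns how many were available.
    std::size_t read(std::int16_t* dst, std::size_t n) {
        const std::size_t r = read_.load(std::memory_order_relaxed);
        const std::size_t w = write_.load(std::memory_order_acquire);
        const std::size_t avail = w - r;
        if (n > avail) n = avail;
        for (std::size_t i = 0; i < n; ++i) dst[i] = buf_[(r + i) & mask_];
        read_.store(r + n, std::memory_order_release);
        return n;
    }

private:
    std::vector<std::int16_t> buf_;
    std::size_t mask_;
    std::atomic<std::size_t> write_{0};  // total samples ever written
    std::atomic<std::size_t> read_{0};   // total samples ever read
};
```

The two positions only ever increase, and the difference between them is the amount of unread data. The writer can never overwrite samples the reader has not consumed, and the reader can never run past what has been written, which is the property the shared control block has to provide between AudioTrack and AudioFlinger.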

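To summarize the playback flow:

1. The Java layer creates the AudioTrack object through JNI.

2. Based on parameters such as StreamType, an already opened audio output device is looked up. If no matching output device is found, AudioFlinger is asked to open a new one.

3. AudioFlinger creates a mixing thread, MixerThread, for that output device and returns the thread's id to AudioTrack as the return value of getOutput().

4. AudioTrack calls AudioFlinger's createTrack() through the binder mechanism to create a Track. AudioFlinger also creates a TrackHandle Binder local object and returns an IAudioTrack proxy object.

5. AudioFlinger registers the Track with the MixerThread.

6. Through the IAudioTrack interface, AudioTrack obtains the shared data region created in AudioFlinger.

7. AudioTrack calls start and begins writing data; AudioFlinger reads the data and plays it.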
