This post explores XGBoost parameter tuning, using the data from the Otto Group Product Classification Challenge, a Kaggle competition held in 2015.

Competition page: https://www.kaggle.com/c/otto-group-product-classification-challenge/data

# Import modules and read the data

from xgboost import XGBClassifier

import xgboost as xgb

import pandas as pd

import numpy as np

from sklearn.model_selection import GridSearchCV

from sklearn.model_selection import StratifiedKFold

from sklearn.metrics import log_loss

from matplotlib import pyplot

import seaborn as sns

%matplotlib inline

dpath = 'F:/Python_demo/XGBoost/data/'

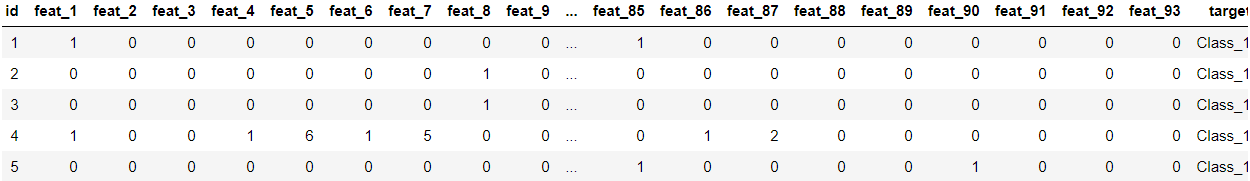

train = pd.read_csv(dpath +"Otto_train.csv")

train.head()

1. Check whether the classes are balanced

sns.countplot(x=train.target);

pyplot.xlabel('target');

pyplot.ylabel('Number of occurrences');

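The classes are not evenly distributed, so cross-validation should also draw samples from each class in proportion (stratified sampling).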
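To put numbers on the imbalance that the count plot shows, the share of each class can be computed directly. The following is a minimal sketch on made-up labels; the helper name `class_proportions` and the toy list are illustrative assumptions, not part of the original notebook or the competition data.

```python
from collections import Counter


def class_proportions(labels):
    """Return a dict mapping each class label to its share of all samples."""
    counts = Counter(labels)
    total = sum(counts.values())
    # Sort by label so the output order is stable and easy to read
    return {label: n / total for label, n in sorted(counts.items())}


# Made-up labels for illustration only
toy_labels = ["Class_2"] * 3 + ["Class_1"]
toy_props = class_proportions(toy_labels)
```

With the real data, the same helper could presumably be called on the `target` column before it is dropped, for example `class_proportions(train['target'])`.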

2. Extract the training features and labels

y_train = train['target']

y_train = y_train.map(lambda s: s[6:])  # .map applies the function to every element; for a label such as "Class_1", s[6:] is the class number "1"

y_train = y_train.map(lambda s: int(s)-1)  # shift the labels so that they start at 0
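The two `.map` calls above amount to stripping the `Class_` prefix and subtracting one. As a small standalone sketch of that same conversion, the function below does both steps at once and rejects unexpected input; the name `class_label_to_index` and the prefix check are assumptions added for illustration.

```python
def class_label_to_index(label: str, prefix: str = "Class_") -> int:
    """Convert a label such as 'Class_3' into a zero-based class index (2)."""
    if not label.startswith(prefix):
        raise ValueError(f"unexpected label format: {label!r}")
    # len("Class_") == 6, which is why the original code slices with s[6:]
    return int(label[len(prefix):]) - 1


labels = ["Class_1", "Class_2", "Class_9"]
indices = [class_label_to_index(s) for s in labels]
```

On a pandas Series the same function could be passed to `.map`, as in `train['target'].map(class_label_to_index)`.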

train = train.drop(["id", "target"], axis=1)  # drop the id and target columns

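X_train = np.array(train)  # input features

3. Set up cross-validation. Because the classes are imbalanced, cross-validation uses StratifiedKFold, which samples each class in proportion within every fold.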
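To see what stratification does in practice, the sketch below splits a toy imbalanced label vector and measures the class mix inside each validation fold. The data are made up, and `fold_class_fractions` is a hypothetical helper introduced only for this illustration.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold


def fold_class_fractions(y, n_splits=5, seed=3):
    """Return the classes and, per validation fold, the fraction of each class."""
    y = np.asarray(y)
    classes = np.unique(y)
    skf = StratifiedKFold(n_splits=n_splits, shuffle=True, random_state=seed)
    fractions = []
    # Only y determines the stratified split, so a placeholder X is sufficient
    for _, val_idx in skf.split(np.zeros(len(y)), y):
        counts = np.array([(y[val_idx] == c).sum() for c in classes])
        fractions.append(counts / counts.sum())
    return classes, np.array(fractions)


# Toy labels: 60% class 0, 30% class 1, 10% class 2
y_toy = np.array([0] * 60 + [1] * 30 + [2] * 10)
classes, fractions = fold_class_fractions(y_toy)
```

Every row of `fractions` should match the overall 60/30/10 mix, which is the property that makes stratified folds a reasonable choice for imbalanced classes.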

kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=3)

4. Start from XGBoost's default parameters and begin by tuning the number of trees.

def modelfit(alg, X_train, y_train, useTrainCV=True, cv_folds=None, early_stopping_rounds=50):

    if useTrainCV:

        xgb_param = alg.get_xgb_params()

        xgb_param['num_class'] = 9  # number of classes

        xgtrain = xgb.DMatrix(X_train, label=y_train)  # DMatrix is XGBoost's internal data container; this step loads the training data into it

        cvresult = xgb.cv(xgb_param, xgtrain, num_boost_round=alg.get_params()['n_estimators'], folds=cv_folds,

                          metrics='mlogloss', early_sto
