Splitting the CTB Datasets into Training, Validation, and Test Sets

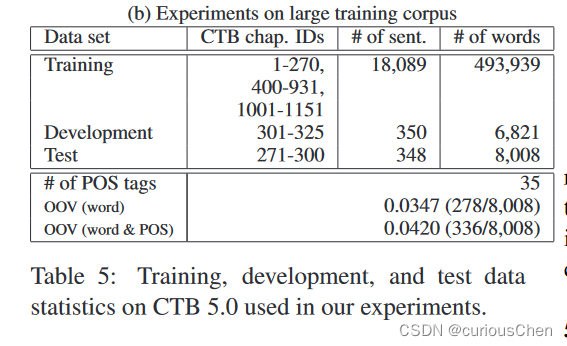

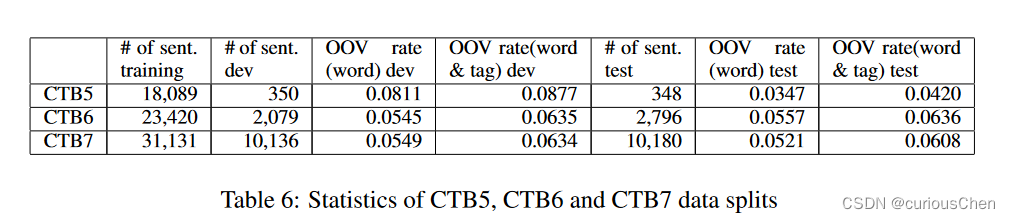

CTB5

- Kruengkrai, Canasai, Kiyotaka Uchimoto, Jun’ichi Kazama, Yiou Wang, Kentaro Torisawa, and Hitoshi Isahara. "An Error-Driven Word-Character Hybrid Model for Joint Chinese Word Segmentation and POS Tagging." In Proceedings of the Joint Conference of the 47th Annual Meeting of the ACL and the 4th International Joint Conference on Natural Language Processing of the AFNLP, 513–21. Suntec, Singapore: Association for Computational Linguistics, 2009. https://aclanthology.org/P09-1058.

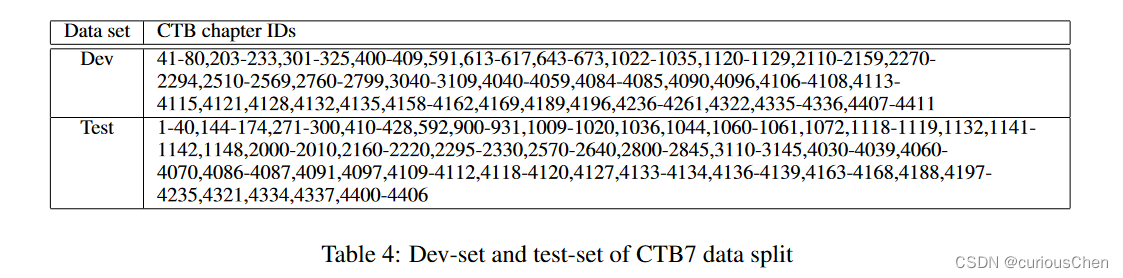

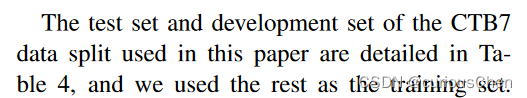

CTB7

- Wang, Yiou, Jun Kazama, Yoshimasa Tsuruoka, Wenliang Chen, Yujie Zhang, and Kentaro Torisawa. "Improving Chinese Word Segmentation and POS Tagging with Semi-supervised Methods Using Large Auto-Analyzed Data." 2011.

CTB8 & CTB9

- Shao, Yan, Christian Hardmeier, Jörg Tiedemann, and Joakim Nivre. "Character-based Joint Segmentation and POS Tagging for Chinese using Bidirectional RNN-CRF." In Proceedings of the Eighth International Joint Conference on Natural Language Processing (Volume 1: Long Papers), 173–83. Taipei, Taiwan: Asian Federation of Natural Language Processing, 2017. https://aclanthology.org/I17-1018.

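CTB8 & CTB9 (split advocated by He Han)

- https://bbs.hankcs.com/t/topic/3024

- Files whose number ends in 8 go to the development set, files whose number ends in 9 go to the test set, and all other files go to the training set.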
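As a minimal sketch of the rule above, the following function assigns a split from the last digit of a file's four-digit ID. The name `assign_split` is hypothetical and is not part of HanLP; it only illustrates the rule.

```python
def assign_split(file_id: str) -> str:
    """Return 'dev', 'test', or 'train' based on the last digit of the file ID."""
    last = file_id.strip()[-1]
    if last == '8':
        return 'dev'
    if last == '9':
        return 'test'
    return 'train'


for fid in ['0001', '0008', '0009', '4050']:
    print(fid, assign_split(fid))
```

Because the last digit is roughly uniform over consecutive IDs, this yields about an 80/10/10 split without any range tables.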

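The split of CTB9 is already settled in academia: it follows the IJCNLP 2017 paper by Shao et al. However, that split omits 51 files, namely the range 4000-4050, and its distribution across genres is rather uneven. CTB9 covers 8 genres:

- nw: news
- mz: magazines
- bn: broadcast news
- bc: broadcast interviews
- wb: blogs
- df: forums
- sc: SMS messages
- cs: chat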
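The size of the gap mentioned above can be checked with a few lines of Python. This is only a sketch: `expand` is a hypothetical helper, and the three ranges are the 4xxx entries adjacent to the gap, copied from the academic split listed in the code section of this post.

```python
def expand(range_text: str) -> set:
    """Expand an inclusive range such as '4051-4099' into a set of integer IDs."""
    start, end = (int(x) for x in range_text.split('-'))
    return set(range(start, end + 1))


# The 4xxx ranges next to the gap, taken from the academic split
covered = expand('4051-4099') | expand('4100-4106') | expand('4107-4111')

# The range 4000-4050 that is reported as omitted
gap = expand('4000-4050')

print(len(gap))       # 51
print(gap & covered)  # set()
```

The range 4000-4050 contains 51 IDs, which matches the 51 omitted files, and none of them fall inside the neighbouring ranges.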

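Across these genres, the academic split yields the following file counts:

Split statistics of Shao et al. (2017)

| genre | train | dev | test |
| --- | --- | --- | --- |
| nw | 604 (79.47%) | 25 (3.29%) | 131 (17.24%) |
| mz | 117 (90.00%) | 0 (0.00%) | 13 (10.00%) |
| bn | 965 (79.95%) | 122 (10.11%) | 120 (9.94%) |
| bc | 69 (80.23%) | 9 (10.47%) | 8 (9.30%) |
| wb | 171 (79.91%) | 22 (10.28%) | 21 (9.81%) |
| df | 447 (79.96%) | 46 (8.23%) | 66 (11.81%) |
| sc | 561 (80.03%) | 70 (9.99%) | 70 (9.99%) |
| cs | 14 (77.78%) | 1 (5.56%) | 3 (16.67%) |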
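To see how the percentages in the table are derived, the sketch below recomputes them from the raw file counts of three rows. The dictionary `counts` is copied from the table above; the helper name `shares` is hypothetical.

```python
# File counts (train, dev, test) for three genres, copied from the table
counts = {
    'nw': (604, 25, 131),
    'mz': (117, 0, 13),
    'cs': (14, 1, 3),
}


def shares(train: int, dev: int, test: int) -> tuple:
    """Return the train/dev/test shares of a genre as percentages with two decimals."""
    total = train + dev + test
    return tuple(round(100 * n / total, 2) for n in (train, dev, test))


for genre, row in counts.items():
    print(genre, shares(*row))
```

The `mz` row makes the imbalance concrete: with no files in the development set, that genre cannot be evaluated during model selection.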

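Given the omitted samples, the unbalanced distribution, and the complexity of the split rules described above, HanLP proposes the following split and recommends it to industry and the open-source community:

- Files whose number ends in 8 go to the development set, files whose number ends in 9 go to the test set, and all other files go to the training set.

This simple, direct split is not only easy to apply but also keeps the proportions balanced across genres. Its statistics are as follows:

| genre | train | dev | test |
| --- | --- | --- | --- |
| nw | 655 (80.76%) | 78 (9.62%) | 78 (9.62%) |
| mz | 105 (80.77%) | 13 (10.00%) | 12 (9.23%) |
| bn | 967 (80.12%) | 120 (9.94%) | 120 (9.94%) |
| bc | 70 (81.40%) | 8 (9.30%) | 8 (9.30%) |
| wb | 170 (79.44%) | 22 (10.28%) | 22 (10.28%) |
| df | 448 (80.14%) | 56 (10.02%) | 55 (9.84%) |
| sc | 561 (80.03%) | 70 (9.99%) | 70 (9.99%) |
| cs | 16 (88.89%) | 1 (5.56%) | 1 (5.56%) |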
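Per-genre statistics like these can be tallied with a short script. The sketch below assumes file names of the form `chtb_<4-digit id>.<genre>` (for example `chtb_0008.nw`). This naming pattern, the sample names, and the helper `tally` are assumptions for illustration, so adjust the pattern to whatever your copy of the corpus actually contains.

```python
import re
from collections import Counter, defaultdict

# Assumed file-name pattern: chtb_<4-digit id>.<2-letter genre>
NAME_PATTERN = re.compile(r'chtb_(\d{4})\.([a-z]{2})')


def tally(file_names):
    """Count files per genre and split, using the last-digit rule."""
    stats = defaultdict(Counter)
    for name in file_names:
        match = NAME_PATTERN.search(name)
        if match is None:
            continue  # skip names that do not follow the assumed pattern
        file_id, genre = match.groups()
        if file_id.endswith('8'):
            part = 'dev'
        elif file_id.endswith('9'):
            part = 'test'
        else:
            part = 'train'
        stats[genre][part] += 1
    return stats


# Made-up sample names, not a real directory listing
sample = ['chtb_0001.nw', 'chtb_0008.nw', 'chtb_0009.nw', 'chtb_2000.bn', 'README']
for genre, counter in tally(sample).items():
    print(genre, dict(counter))
```

In practice the list of names would come from something like `os.listdir` on the corpus directory instead of the hard-coded sample.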

Split code

- Install the required library

```bash
pip install hanlp
```
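After installing, it can be useful to confirm which version of the package is visible to the current interpreter. The sketch below uses only the standard library (`importlib.metadata`, available from Python 3.8); the helper name `installed_version` is hypothetical.

```python
from importlib import metadata
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of a package, or None if it is not installed."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


version = installed_version('hanlp')
print('hanlp is not installed' if version is None else f'hanlp {version} is installed')
```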

定义数据集的train、dev、test

-

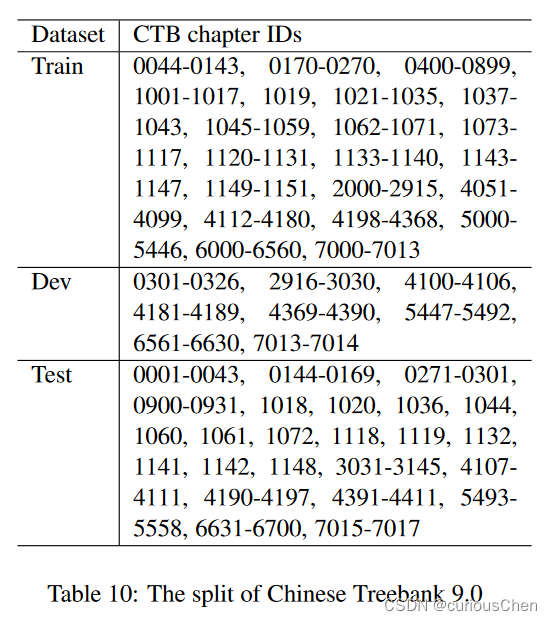

CTB9_ACADEMIA_SPLITS = { 'train': ''' 0044-0143, 0170-0270, 0400-0899, 1001-1017, 1019, 1021-1035, 1037- 1043, 1045-1059, 1062-1071, 1073- 1117, 1120-1131, 1133-1140, 1143- 1147, 1149-1151, 2000-2915, 4051- 4099, 4112-4180, 4198-4368, 5000- 5446, 6000-6560, 7000-7013 ''', 'dev': ''' 0301-0326, 2916-3030, 4100-4106, 4181-4189, 4369-4390, 5447-5492, 6561-6630, 7013-7014 ''', 'test': ''' 0001-0043, 0144-0169, 0271-0301, 0900-0931, 1018, 1020, 1036, 1044, 1060, 1061, 1072, 1118, 1119, 1132, 1141, 1142, 1148, 3031-3145, 4107- 4111, 4190-4197, 4391-4411, 5493- 5558, 6631-6700, 7015-7017 ''' }

-

-

- Parse the range strings and convert each one into a set of four-digit file IDs

```python
from typing import Dict


def _make_splits(splits: Dict[str, str]):
    total = set()
    for part, text in list(splits.items()):
        if not isinstance(text, str):
            continue  # this part has already been converted
        # Drop all whitespace so that ranges wrapped across lines
        # (e.g. "1037-" followed by "1043") are rejoined before parsing
        text = ''.join(text.split())
        cids = set()
        for each in text.split(','):
            if not each:
                continue
            if '-' in each:
                # Inclusive range such as 0044-0143
                start, end = each.split('-')
                start, end = int(start), int(end)
                cids.update(range(start, end + 1))
            else:
                cids.add(int(each))
        # Zero-pad back to four-digit string IDs
        cids = set(f'{x:04d}' for x in cids)
        # Note: `total` is never updated, so this check never fires
        assert len(cids & total) == 0, f'Overlap found in {part}'
        splits[part] = cids
    return splits


ctb9_splits = _make_splits(CTB9_ACADEMIA_SPLITS)
```
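Because the overlap assertion in `_make_splits` compares against a set that is never filled, it cannot detect IDs shared between parts. The following sketch checks parts pairwise instead. The helper names `expand_ranges` and `find_overlaps` are hypothetical, and only an excerpt of each part of `CTB9_ACADEMIA_SPLITS` is included to keep the example short.

```python
from itertools import combinations


def expand_ranges(text: str) -> set:
    """Expand a comma-separated list of IDs and inclusive ranges into 4-digit string IDs."""
    ids = set()
    for item in ''.join(text.split()).split(','):
        if not item:
            continue
        if '-' in item:
            start, end = (int(x) for x in item.split('-'))
            ids.update(range(start, end + 1))
        else:
            ids.add(int(item))
    return {f'{i:04d}' for i in ids}


def find_overlaps(parts: dict) -> dict:
    """Return the IDs shared by each pair of parts, omitting pairs with no overlap."""
    expanded = {name: expand_ranges(text) for name, text in parts.items()}
    overlaps = {}
    for a, b in combinations(expanded, 2):
        shared = expanded[a] & expanded[b]
        if shared:
            overlaps[(a, b)] = sorted(shared)
    return overlaps


# Excerpt of the ranges from CTB9_ACADEMIA_SPLITS
excerpt = {
    'train': '7000-7013',
    'dev': '0301-0326, 7013-7014',
    'test': '0271-0301, 7015-7017',
}
print(find_overlaps(excerpt))
```

With the ranges as printed in this post, 7013 appears in both train and dev, and 0301 appears in both dev and test. Whether that comes from the source table or from a transcription slip is not something this post settles, so it is worth checking against the paper before relying on the split.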

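- Use HanLP's `make_ctb` function to build standard datasets for four NLP tasks (cws, dep, par, pos)

```python
from hanlp.datasets.parsing.loaders._ctb_utils import make_ctb

make_ctb(ctb_home='./data/ctb9.0/data', splits=ctb9_splits)
```

- `ctb_home`: path to the `data` directory of the original CTB corpus
- `splits` (optional): the split rule defined in the previous step. If omitted, the default rule applies: files whose number ends in 8 go to the development set, files whose number ends in 9 go to the test set, and all other files go to the training set.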
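Before running `make_ctb`, it may help to confirm that `ctb_home` points at the right place. The sketch below is a hypothetical pre-flight check and is not part of HanLP. The sub-directory names in `EXPECTED_SUBDIRS` are an assumption about how the CTB `data` directory is laid out and should be adjusted to match your copy of the corpus.

```python
from pathlib import Path

# Assumed layout of the CTB data directory; adjust to your copy of the corpus
EXPECTED_SUBDIRS = ('bracketed', 'postagged', 'segmented')


def missing_subdirs(ctb_home: str, expected=EXPECTED_SUBDIRS) -> list:
    """Return the expected sub-directories that are absent under ctb_home."""
    root = Path(ctb_home)
    return [name for name in expected if not (root / name).is_dir()]


missing = missing_subdirs('./data/ctb9.0/data')
if missing:
    print('Missing under ctb_home:', ', '.join(missing))
else:
    print('ctb_home looks complete')
```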

Full code

```python
'''
coding:utf-8
@Software:PyCharm
@Time:2023/2/8 10:17
@Author:curious
See Shao et al., 2017; uses the CTB9 split accepted by the academic community
'''
from typing import Dict

from hanlp.datasets.parsing.loaders._ctb_utils import make_ctb

# Ranges are inclusive; ranges that were wrapped across lines in the
# original listing (e.g. "1037-" / "1043") are written whole here.
CTB9_ACADEMIA_SPLITS = {
    'train': '''
        0044-0143, 0170-0270, 0400-0899, 1001-1017, 1019, 1021-1035,
        1037-1043, 1045-1059, 1062-1071, 1073-1117, 1120-1131, 1133-1140,
        1143-1147, 1149-1151, 2000-2915, 4051-4099, 4112-4180, 4198-4368,
        5000-5446, 6000-6560, 7000-7013
    ''',
    'dev': '''
        0301-0326, 2916-3030, 4100-4106, 4181-4189, 4369-4390, 5447-5492,
        6561-6630, 7013-7014
    ''',
    'test': '''
        0001-0043, 0144-0169, 0271-0301, 0900-0931, 1018, 1020, 1036, 1044,
        1060, 1061, 1072, 1118, 1119, 1132, 1141, 1142, 1148, 3031-3145,
        4107-4111, 4190-4197, 4391-4411, 5493-5558, 6631-6700, 7015-7017
    '''
}


def _make_splits(splits: Dict[str, str]):
    total = set()
    for part, text in list(splits.items()):
        if not isinstance(text, str):
            continue  # this part has already been converted
        # Drop all whitespace so that ranges wrapped across lines are rejoined
        text = ''.join(text.split())
        cids = set()
        for each in text.split(','):
            if not each:
                continue
            if '-' in each:
                # Inclusive range such as 0044-0143
                start, end = each.split('-')
                start, end = int(start), int(end)
                cids.update(range(start, end + 1))
            else:
                cids.add(int(each))
        # Zero-pad back to four-digit string IDs
        cids = set(f'{x:04d}' for x in cids)
        # Note: `total` is never updated, so this check never fires
        assert len(cids & total) == 0, f'Overlap found in {part}'
        splits[part] = cids
    return splits


ctb9_splits = _make_splits(CTB9_ACADEMIA_SPLITS)
make_ctb(ctb_home='./data/ctb9.0/data', splits=ctb9_splits)
```

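Notes

- Running `make_ctb` on Windows may cause problems; running it on Linux is recommended.

- If you need the already-split datasets, send me a private message.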

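This article describes how to split the CTB datasets (CTB5, CTB7, CTB8, and CTB9) into training, validation, and test sets under different rules, in particular the CTB8 & CTB9 split proposed by He Han, which is intended to address omitted samples, imbalance, and rule complexity. HanLP's `make_ctb` tool builds standard datasets for NLP tasks such as part-of-speech tagging and dependency parsing. Running the code on Linux is recommended.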
