- Build a KNN model on the iris data to classify the samples

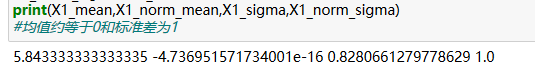

- Standardize the data, then pick one dimension and visualize the effect of the standardization

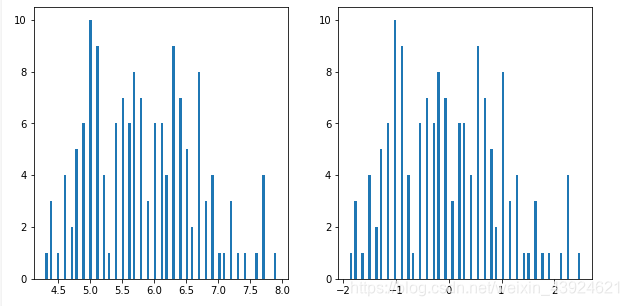

- Run PCA with the same number of dimensions as the original data and inspect the variance ratio of each principal component

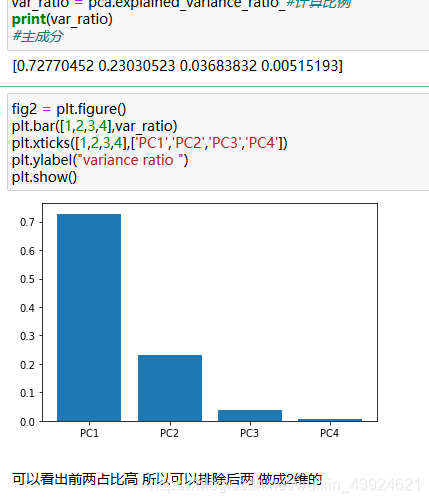

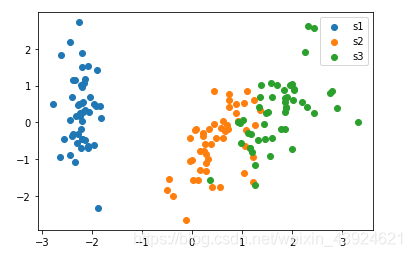

- Keep a suitable number of principal components and visualize the reduced data

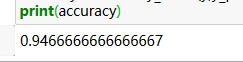

- Build a KNN model on the reduced data and compare its performance with the model built on the original data

import pandas as pd

import numpy as np

data = pd.read_csv('iris_data.csv')

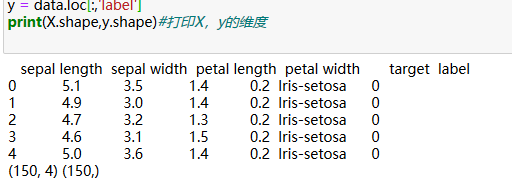

print(data.head())

X = data.drop(['target','label'],axis=1)

y = data.loc[:,'label']

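print(X.shape, y.shape)  # print the dimensions of X and y

# Build the model, predict, and compute the accuracy (measured on the training data itself)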

from sklearn.neighbors import KNeighborsClassifier

KNN = KNeighborsClassifier(n_neighbors=3)

KNN.fit(X,y)

y_predict = KNN.predict(X)

from sklearn.metrics import accuracy_score

accuracy = accuracy_score(y,y_predict)

print(accuracy)

0.96
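To make the classifier less of a black box, here is a minimal sketch of what k-nearest-neighbors prediction does: measure the distance from a query point to every training point, take the k closest, and let them vote. The helper `knn_predict` and the toy arrays are hypothetical and only for illustration; this is not how `KNeighborsClassifier` is implemented internally, and tie-breaking may differ.

```python
import numpy as np
from collections import Counter

def knn_predict(X_train, y_train, X_query, k=3):
    """Predict a label for each query row by majority vote among its k nearest training rows."""
    X_train = np.asarray(X_train, dtype=float)
    X_query = np.asarray(X_query, dtype=float)
    y_train = np.asarray(y_train)
    predictions = []
    for q in X_query:
        dists = np.linalg.norm(X_train - q, axis=1)      # Euclidean distance to every training row
        nearest = np.argsort(dists, kind="stable")[:k]   # indices of the k closest rows
        votes = Counter(y_train[nearest].tolist())       # count labels among those neighbors
        predictions.append(votes.most_common(1)[0][0])
    return np.array(predictions)

# Hypothetical toy data: two well-separated 2-D clusters (not the iris values).
X_toy = np.array([[0.0, 0.0], [0.1, 0.2], [0.2, 0.1],
                  [3.0, 3.0], [3.1, 2.9], [2.9, 3.2]])
y_toy = np.array([0, 0, 0, 1, 1, 1])

toy_pred = knn_predict(X_toy, y_toy, [[0.05, 0.1], [3.0, 3.1]])
print(toy_pred)  # → [0 1]
```

One consequence worth keeping in mind: when the model predicts on the same rows it was fitted on, every row is its own nearest neighbor at distance 0, so the accuracy printed above is likely an optimistic estimate. Scoring on a held-out split would give a more honest number.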

# Standardize the data

from sklearn.preprocessing import StandardScaler

X_norm = StandardScaler().fit_transform(X)

X1_mean = X.loc[:,'sepal length'].mean()

X1_norm_mean = X_norm[:,0].mean()

X1_sigma = X.loc[:,'sepal length'].std()

X1_norm_sigma = X_norm[:,0].std()

print(X1_mean,X1_norm_mean,X1_sigma,X1_norm_sigma)

# After standardization the mean is approximately 0 and the standard deviation is approximately 1
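The two standard deviations printed above are computed with different conventions: pandas `.std()` defaults to the sample standard deviation (`ddof=1`), while NumPy `.std()` defaults to the population standard deviation (`ddof=0`), which is also what `StandardScaler` divides by. The sketch below reproduces the standardization by hand on a hypothetical toy column (the values are made up for illustration) to show why the standardized column comes out with a standard deviation of 1 under `ddof=0`.

```python
import numpy as np

# Hypothetical toy column standing in for one feature such as 'sepal length'.
x = np.array([5.1, 4.9, 4.7, 4.6, 5.0, 5.4, 7.0, 6.4, 6.9, 6.3])

# z-score using the population standard deviation (ddof=0).
z = (x - x.mean()) / x.std(ddof=0)

print(z.mean())       # approximately 0, up to floating-point error
print(z.std(ddof=0))  # 1, up to floating-point error
print(z.std(ddof=1))  # slightly above 1: equals sqrt(n / (n - 1))
```

For a dataset of 150 rows the gap between the two conventions is small, so either one confirms that the column has been rescaled as intended.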

%matplotlib inline

from matplotlib import pyplot as plt

fig1 = plt.figure(figsize=(10,5))

plt.subplot(121)

plt.hist(X.loc[:,'sepal length'],bins=100)  # X has four dimensions; plot one of them

plt.subplot(122)

plt.hist(X_norm[:,0],bins=100)

plt.show()

print(X.shape)

# X has 4 dimensions

from sklearn.decomposition import PCA

pca = PCA(n_components=4)

X_pca = pca.fit_transform(X_norm)

var_ratio = pca.explained_variance_ratio_  # fraction of the total variance explained by each component

print(var_ratio)

# Plot the variance ratio of each principal component

fig2 = plt.figure()

plt.bar([1,2,3,4],var_ratio)

plt.xticks([1,2,3,4],['PC1','PC2','PC3','PC4'])

plt.ylabel("variance ratio")

plt.show()
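The variance ratios plotted above can also be derived by hand, which helps when deciding how many components to keep. The sketch below is an illustration under stated assumptions: it uses hypothetical synthetic data rather than the iris values, computes the ratios from the eigenvalues of the covariance matrix, and picks the smallest number of components whose cumulative ratio reaches an assumed 0.95 cutoff. The cutoff is a common rule of thumb, not something the original analysis prescribes.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical synthetic data: 150 samples, 4 correlated features plus a little noise.
latent = rng.normal(size=(150, 2))
mixing = np.array([[1.0, 0.8, 0.5, 0.1],
                   [0.0, 0.3, 0.9, 0.7]])
X_demo = latent @ mixing + 0.1 * rng.normal(size=(150, 4))

# Standardize each column (population standard deviation).
X_std = (X_demo - X_demo.mean(axis=0)) / X_demo.std(axis=0)

# Eigen-decomposition of the covariance matrix; eigh returns eigenvalues in ascending order.
cov = np.cov(X_std, rowvar=False)
eigvals, eigvecs = np.linalg.eigh(cov)
order = np.argsort(eigvals)[::-1]          # re-sort from largest to smallest
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

var_ratio_demo = eigvals / eigvals.sum()   # share of total variance per component
cumulative = np.cumsum(var_ratio_demo)

threshold = 0.95                           # assumed cutoff (rule of thumb)
n_keep = int(np.searchsorted(cumulative, threshold) + 1)

# Project the standardized data onto the leading n_keep components.
X_reduced = X_std @ eigvecs[:, :n_keep]
print(var_ratio_demo, n_keep, X_reduced.shape)
```

The projected coordinates may differ in sign from what `PCA.fit_transform` returns, since the direction of each eigenvector is arbitrary; the variance ratios themselves are unaffected.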

from sklearn.decomposition import PCA

pca = PCA(n_components=2)

X_pca = pca.fit_transform(X_norm)

print(type(X_pca))

fig3 = plt.figure()

s1= plt.scatter(X_pca[:,0][y==0],X_pca[:,1][y==0])

s2 = plt.scatter(X_pca[:,0][y==1],X_pca[:,1][y==1])

s3 = plt.scatter(X_pca[:,0][y==2],X_pca[:,1][y==2])

plt.legend((s1,s2,s3),('s1','s2','s3'))

plt.show()

# Accuracy of KNN on the PCA-reduced data

# from sklearn.neighbors import KNeighborsClassifier

KNN = KNeighborsClassifier(n_neighbors=3)

KNN.fit(X_pca,y)

y_predict_pca = KNN.predict(X_pca)

from sklearn.metrics import accuracy_score

accuracy = accuracy_score(y,y_predict_pca)

print(accuracy)

The number of dimensions has been reduced, yet the information is still largely retained.
