Learning MediaPipe Hand Detection and Gesture Recognition

1 Code Walkthrough

1.0 Demo

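import time

import cv2
import mediapipe as mp

mpHands = mp.solutions.hands
# model_complexity=0 selects the lighter landmark model
hands = mpHands.Hands(model_complexity=0)
mpDraw = mp.solutions.drawing_utils

cap = cv2.VideoCapture(0)  # open the default camera
timep, timen = 0, 0  # timestamps of the previous and current frame

while True:
    ret, img = cap.read()
    if ret:
        # OpenCV delivers BGR frames; Hands.process expects RGB
        img_rgb = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)
        result = hands.process(img_rgb)

        # multi_hand_landmarks is empty/None when no hand is detected
        if result.multi_hand_landmarks:
            for handLms in result.multi_hand_landmarks:
                mpDraw.draw_landmarks(img, handLms, mpHands.HAND_CONNECTIONS)

        # FPS from the time elapsed since the previous frame
        timen = time.time()
        fps = 1/(timen-timep)
        timep = timen

        cv2.putText(img, f"FPS: {fps:.2f}", (50, 50), cv2.FONT_HERSHEY_SIMPLEX, 1, (255, 0, 0), 1, cv2.LINE_AA)
        cv2.imshow("IMG", img)

    # press q to quit
    if cv2.waitKey(1) == ord('q'):
        break

# release the camera and close the preview window
cap.release()
cv2.destroyAllWindows()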

1.1 mediapipe.solutions.hands.Hands

First, take a look at the Hands class in MediaPipe.

class Hands(SolutionBase):
  """MediaPipe Hands.

  MediaPipe Hands processes an RGB image and returns the hand landmarks and
  handedness (left v.s. right hand) of each detected hand.

  Note that it determines handedness assuming the input image is mirrored,
  i.e., taken with a front-facing/selfie camera (
  https://en.wikipedia.org/wiki/Front-facing_camera) with images flipped
  horizontally. If that is not the case, use, for instance, cv2.flip(image, 1)
  to flip the image first for a correct handedness output.

  Please refer to https://solutions.mediapipe.dev/hands#python-solution-api for
  usage examples.
  """

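The Hands class provided by MediaPipe processes an RGB image and returns, for each detected hand, its landmarks (the hand joints) and its handedness (left or right hand).

Note: handedness is determined on the assumption that the input image is mirrored, as it would be from a front-facing (selfie) camera. If the image is not mirrored, flip it horizontally with cv2.flip(image, 1) first to get the correct handedness.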
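When flipping the frame is not convenient, one alternative is to leave the image untouched and swap the reported label instead. The sketch below is a hypothetical helper (`correct_handedness` is not part of MediaPipe); it assumes the labels are exactly the strings "Left" and "Right" that appear in the output later in this post.

```python
def correct_handedness(label: str, image_is_mirrored: bool) -> str:
    """Return the handedness label adjusted for whether the input was mirrored.

    MediaPipe assumes a mirrored (selfie-style) image, so the label is kept
    as-is for mirrored input and swapped for non-mirrored input.
    """
    if image_is_mirrored:
        return label
    swapped = {"Left": "Right", "Right": "Left"}
    # Unknown labels are passed through unchanged.
    return swapped.get(label, label)


print(correct_handedness("Left", image_is_mirrored=False))  # Right
print(correct_handedness("Left", image_is_mirrored=True))   # Left
```

Swapping the label only corrects the handedness string; the landmark coordinates still refer to the unflipped image.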

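1.1.1 Hands initialization

Hands takes 5 initialization parameters:

static_image_mode: static image input mode, default False. Whether to treat the input images as a batch of unrelated static images (True) or as a video stream (False).

max_num_hands: maximum number of hands to detect, default 2.

model_complexity: model complexity, default 1, allowed values 0 and 1. The higher the value, the more complex the model, the more accurate the landmarks, and the longer the inference time.

min_detection_confidence: minimum detection confidence, default 0.5, range 0.0 to 1.0. The higher the value, the stricter the filtering and the harder it is for a hand to be detected; the lower the value, the more likely false detections become.

min_tracking_confidence: minimum tracking confidence, default 0.5, range 0.0 to 1.0. The higher the value, the stricter the tracking filter and the more easily a hand is lost; the lower the value, the more likely false detections become.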
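The value ranges listed above can be checked before constructing the object. The following is a minimal sketch, not MediaPipe code: `build_hands_kwargs` is a hypothetical helper, and the requirement that `max_num_hands` be at least 1 is an assumption made here rather than something stated in the docstring.

```python
def build_hands_kwargs(static_image_mode=False,
                       max_num_hands=2,
                       model_complexity=1,
                       min_detection_confidence=0.5,
                       min_tracking_confidence=0.5):
    """Validate the five options and return them as keyword arguments."""
    if model_complexity not in (0, 1):
        raise ValueError("model_complexity must be 0 or 1")
    if max_num_hands < 1:  # assumption: at least one hand must be allowed
        raise ValueError("max_num_hands must be >= 1")
    for name, value in (("min_detection_confidence", min_detection_confidence),
                        ("min_tracking_confidence", min_tracking_confidence)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be within [0.0, 1.0]")
    return {
        "static_image_mode": static_image_mode,
        "max_num_hands": max_num_hands,
        "model_complexity": model_complexity,
        "min_detection_confidence": min_detection_confidence,
        "min_tracking_confidence": min_tracking_confidence,
    }


kwargs = build_hands_kwargs(model_complexity=0, max_num_hands=1)
print(kwargs["model_complexity"], kwargs["max_num_hands"])  # 0 1
```

The returned dict could then be passed on as `mp.solutions.hands.Hands(**kwargs)`.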

def __init__(self,
             static_image_mode=False,
             max_num_hands=2,
             model_complexity=1,
             min_detection_confidence=0.5,
             min_tracking_confidence=0.5):
  """Initializes a MediaPipe Hand object.

  Args:
    static_image_mode: Whether to treat the input images as a batch of static
      and possibly unrelated images, or a video stream. See details in
      https://solutions.mediapipe.dev/hands#static_image_mode.
    max_num_hands: Maximum number of hands to detect. See details in
      https://solutions.mediapipe.dev/hands#max_num_hands.
    model_complexity: Complexity of the hand landmark model: 0 or 1.
      Landmark accuracy as well as inference latency generally go up with the
      model complexity. See details in
      https://solutions.mediapipe.dev/hands#model_complexity.
    min_detection_confidence: Minimum confidence value ([0.0, 1.0]) for hand
      detection to be considered successful. See details in
      https://solutions.mediapipe.dev/hands#min_detection_confidence.
    min_tracking_confidence: Minimum confidence value ([0.0, 1.0]) for the
      hand landmarks to be considered tracked successfully. See details in
      https://solutions.mediapipe.dev/hands#min_tracking_confidence.
  """
  super().__init__(
      binary_graph_path=_BINARYPB_FILE_PATH,
      side_inputs={
          'model_complexity': model_complexity,
          'num_hands': max_num_hands,
          'use_prev_landmarks': not static_image_mode,
      },
      calculator_params={
          'palmdetectioncpu__TensorsToDetectionsCalculator.min_score_thresh':
              min_detection_confidence,
          'handlandmarkcpu__ThresholdingCalculator.threshold':
              min_tracking_confidence,
      },
      outputs=[
          'multi_hand_landmarks', 'multi_hand_world_landmarks',
          'multi_handedness'
      ])

In addition to these, the parent class of Hands also receives one constant, _BINARYPB_FILE_PATH.

_BINARYPB_FILE_PATH = 'mediapipe/modules/hand_landmark/hand_landmark_tracking_cpu.binarypb'

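1.1.2 Detection with process

The process function takes a numpy array in RGB format and returns a NamedTuple with 3 fields:

multi_hand_landmarks: the landmark coordinates of each hand.

multi_hand_world_landmarks: the real-world 3D coordinates of each hand's landmarks, in meters (m), with the origin at the hand's approximate geometric center.

multi_handedness: the handedness (left or right hand) of each hand.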

def process(self, image: np.ndarray) -> NamedTuple:
  """Processes an RGB image and returns the hand landmarks and handedness of each detected hand.

  Args:
    image: An RGB image represented as a numpy ndarray.

  Raises:
    RuntimeError: If the underlying graph throws any error.
    ValueError: If the input image is not three channel RGB.

  Returns:
    A NamedTuple object with the following fields:
      1) a "multi_hand_landmarks" field that contains the hand landmarks on
         each detected hand.
      2) a "multi_hand_world_landmarks" field that contains the hand landmarks
         on each detected hand in real-world 3D coordinates that are in meters
         with the origin at the hand's approximate geometric center.
      3) a "multi_handedness" field that contains the handedness (left v.s.
         right hand) of the detected hand.
  """

  return super().process(input_data={'image': image})

Inspect the type of the function's return value:

print(type(result))

print(result)

<class 'type'>

<class 'mediapipe.python.solution_base.SolutionOutputs'>

Parsing multi_hand_landmarks: the returned coordinates are normalized relative to the image (x by the image width, y by the image height).

print(type(result.multi_hand_landmarks))
print(result.multi_hand_landmarks)

for handLms in result.multi_hand_landmarks:
    print(type(handLms))
    print(handLms)
    print(type(handLms.landmark))
    print(handLms.landmark)

    for index, lm in enumerate(handLms.landmark):
        print(type(lm))
        print(lm)
        print(type(lm.x))
        print(index, lm.x, lm.y, lm.z)

# result.multi_hand_landmarks
<class 'list'>
[landmark {
  x: 0.871795416
  y: 1.01455748
  z: 1.16892895e-008
}
...]

# handLms
<class 'mediapipe.framework.formats.landmark_pb2.NormalizedLandmarkList'>
landmark {
  x: 0.871795416
  y: 1.01455748
  z: 1.16892895e-008
}
...

# handLms.landmark
<class 'google._upb._message.RepeatedCompositeContainer'>
[x: 0.871795416
y: 1.01455748
z: 1.16892895e-008
,
...]

# lm
<class 'mediapipe.framework.formats.landmark_pb2.NormalizedLandmark'>
x: 0.871795416
y: 1.01455748
z: 1.16892895e-008

# lm.x
<class 'float'>
0 0.8717954158782959 1.0145574808120728 1.1689289536320757e-08
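Because the coordinates are normalized, they need to be scaled by the image size before they can be used as pixel positions. The sketch below shows one way to do that; `to_pixel` is a hypothetical helper and the 640x480 frame size is an assumed value. Note that the sample landmark above has y slightly greater than 1, which places it just outside the bottom edge of the image, so the helper returns None for it.

```python
from typing import Optional, Tuple


def to_pixel(x: float, y: float, width: int, height: int) -> Optional[Tuple[int, int]]:
    """Convert normalized (x, y) to pixel coordinates.

    Returns None when the point lies outside the image.
    """
    if not (0.0 <= x <= 1.0 and 0.0 <= y <= 1.0):
        return None
    # Clamp so that x == 1.0 or y == 1.0 still maps to a valid pixel index.
    px = min(int(x * width), width - 1)
    py = min(int(y * height), height - 1)
    return px, py


print(to_pixel(0.5, 0.25, 640, 480))                # (320, 120)
print(to_pixel(0.871795416, 1.01455748, 640, 480))  # None
```

Whether to drop or clamp out-of-range landmarks depends on the application; dropping them is simply the choice made in this sketch.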

Parsing multi_hand_world_landmarks: the structure is the same as multi_hand_landmarks; the difference is that the unit is meters (m).

# handWLms

<class 'mediapipe.framework.formats.landmark_pb2.LandmarkList'>

# lm

<class 'mediapipe.framework.formats.landmark_pb2.Landmark'>

Parsing multi_handedness: each entry provides an index, a confidence score, and a handedness label.

print(type(result.multi_handedness))
print(result.multi_handedness)

for handedness in result.multi_handedness:
    print(type(handedness))
    print(handedness)
    print(type(handedness.classification))
    print(handedness.classification)

    for index, cf in enumerate(handedness.classification):
        print(type(cf))
        print(cf)
        print(type(cf.index))
        print(cf.index)
        print(type(cf.score))
        print(cf.score)
        print(type(cf.label))
        print(cf.label)

# result.multi_handedness
<class 'list'>
[classification {
  index: 1
  score: 0.71049273
  label: "Right"
}
...]

# handedness
<class 'mediapipe.framework.formats.classification_pb2.ClassificationList'>
classification {
  index: 1
  score: 0.71049273
  label: "Right"
}
...

# handedness.classification
<class 'google._upb._message.RepeatedCompositeContainer'>
[index: 1
score: 0.71049273
label: "Right",
...]

# cf
<class 'mediapipe.framework.formats.classification_pb2.Classification'>
index: 1
score: 0.71049273
label: "Right"

# cf.index
<class 'int'>
1

# cf.score
<class 'float'>
0.710492730140686

# cf.label
<class 'str'>
Right
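Putting the two structures together, each hand's landmarks can be paired with its handedness entry. The sketch below assumes that the two lists are in the same order (entry i of each describes the same hand) and uses `SimpleNamespace` stand-ins that only mimic the attribute layout printed above, so it runs without a camera or MediaPipe. `summarize_hands` is a hypothetical helper; landmark 0 is the wrist.

```python
from types import SimpleNamespace


def summarize_hands(result):
    """Return one (label, score, (wrist_x, wrist_y)) tuple per detected hand."""
    # Both fields are None when no hand is detected.
    if not result.multi_hand_landmarks:
        return []
    summary = []
    for hand_lms, handedness in zip(result.multi_hand_landmarks,
                                    result.multi_handedness):
        top = handedness.classification[0]  # first classification entry
        wrist = hand_lms.landmark[0]        # landmark 0: wrist
        summary.append((top.label, top.score, (wrist.x, wrist.y)))
    return summary


# Stand-in objects that imitate the attribute layout of a real result.
fake_result = SimpleNamespace(
    multi_hand_landmarks=[
        SimpleNamespace(landmark=[SimpleNamespace(x=0.87, y=1.01, z=0.0)]),
    ],
    multi_handedness=[
        SimpleNamespace(classification=[
            SimpleNamespace(index=1, score=0.71, label="Right"),
        ]),
    ],
)

print(summarize_hands(fake_result))  # [('Right', 0.71, (0.87, 1.01))]
```

With a real result from `hands.process(...)`, the same function can be called directly on the returned object.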

1.2 mediapipe.solutions.drawing_utils

1.2.1 The DrawingSpec class

The DrawingSpec class has 3 fields: color, thickness, and circle_radius.

@dataclasses.dataclass
class DrawingSpec:
  # Color for drawing the annotation. Default to the white color.
  color: Tuple[int, int, int] = WHITE_COLOR
  # Thickness for drawing the annotation. Default to 2 pixels.
  thickness: int = 2
  # Circle radius. Default to 2 pixels.
  circle_radius: int = 2

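1.2.2 The draw_landmarks function

The draw_landmarks function draws the landmarks and the connections between them (the "bones") on an image. It takes 6 parameters:

image: a three-channel BGR numpy array.

landmark_list: the normalized landmark list to annotate on the image (landmark_pb2.NormalizedLandmarkList).

connections: a list of landmark index tuples specifying how the landmarks are connected. Defaults to None, in which case no connections are drawn.

landmark_drawing_spec: the drawing settings for the landmarks, either a DrawingSpec or a Mapping[int, DrawingSpec]. Passing None draws no landmarks. Defaults to DrawingSpec(color=RED_COLOR).

connection_drawing_spec: the drawing settings for the connections, either a DrawingSpec or a Mapping[Tuple[int, int], DrawingSpec]. Passing None draws no connections. Defaults to DrawingSpec().

is_drawing_landmarks: whether to draw the landmarks. Defaults to True.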

def draw_landmarks(
    image: np.ndarray,
    landmark_list: landmark_pb2.NormalizedLandmarkList,
    connections: Optional[List[Tuple[int, int]]] = None,
    landmark_drawing_spec: Optional[
        Union[DrawingSpec, Mapping[int, DrawingSpec]]
    ] = DrawingSpec(color=RED_COLOR),
    connection_drawing_spec: Union[
        DrawingSpec, Mapping[Tuple[int, int], DrawingSpec]
    ] = DrawingSpec(),
    is_drawing_landmarks: bool = True,
):
  """Draws the landmarks and the connections on the image.

  Args:
    image: A three channel BGR image represented as numpy ndarray.
    landmark_list: A normalized landmark list proto message to be annotated on
      the image.
    connections: A list of landmark index tuples that specifies how landmarks to
      be connected in the drawing.
    landmark_drawing_spec: Either a DrawingSpec object or a mapping from hand
      landmarks to the DrawingSpecs that specifies the landmarks' drawing
      settings such as color, line thickness, and circle radius. If this
      argument is explicitly set to None, no landmarks will be drawn.
    connection_drawing_spec: Either a DrawingSpec object or a mapping from hand
      connections to the DrawingSpecs that specifies the connections' drawing
      settings such as color and line thickness. If this argument is explicitly
      set to None, no landmark connections will be drawn.
    is_drawing_landmarks: Whether to draw landmarks. If set false, skip drawing
      landmarks, only contours will be drawed.

  Raises:
    ValueError: If one of the followings:
      a) If the input image is not three channel BGR.
      b) If any connetions contain invalid landmark index.
  """

Partial code for customizing the landmark colors:

mpDraw = mp.solutions.drawing_utils

# Build a Mapping[int, DrawingSpec]
landmarks_specs = {}

# Assign a BGR color according to the landmark index range
for i in range(21):
    if 0 <= i <= 4:        # wrist and thumb
        color = (0, 0, 255)
    elif 5 <= i <= 8:      # index finger
        color = (0, 255, 0)
    elif 9 <= i <= 12:     # middle finger
        color = (255, 0, 0)
    elif 13 <= i <= 16:    # ring finger
        color = (255, 255, 0)
    elif 17 <= i <= 20:    # pinky
        color = (0, 255, 255)

    # Create a DrawingSpec instance and add it to the mapping
    landmarks_specs[i] = mpDraw.DrawingSpec(color=color)

# Intermediate code omitted; see 1.0 Demo #

for handLms in result.multi_hand_landmarks:
    mpDraw.draw_landmarks(img, handLms, mpHands.HAND_CONNECTIONS, landmarks_specs)

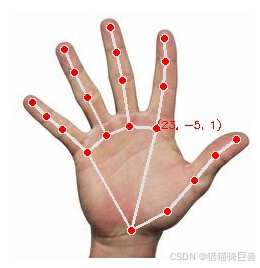

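1.2.3 The HAND_CONNECTIONS constant

HAND_CONNECTIONS is a constant that mediapipe.solutions.hands imports from mediapipe.solutions.hands_connections. It is an immutable set (frozenset) of tuples, each giving the indices of two connected landmarks.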

HAND_PALM_CONNECTIONS = ((0, 1), (0, 5), (9, 13), (13, 17), (5, 9), (0, 17))

HAND_THUMB_CONNECTIONS = ((1, 2), (2, 3), (3, 4))

HAND_INDEX_FINGER_CONNECTIONS = ((5, 6), (6, 7), (7, 8))

HAND_MIDDLE_FINGER_CONNECTIONS = ((9, 10), (10, 11), (11, 12))

HAND_RING_FINGER_CONNECTIONS = ((13, 14), (14, 15), (15, 16))

HAND_PINKY_FINGER_CONNECTIONS = ((17, 18), (18, 19), (19, 20))

HAND_CONNECTIONS = frozenset().union(*[
    HAND_PALM_CONNECTIONS, HAND_THUMB_CONNECTIONS,
    HAND_INDEX_FINGER_CONNECTIONS, HAND_MIDDLE_FINGER_CONNECTIONS,
    HAND_RING_FINGER_CONNECTIONS, HAND_PINKY_FINGER_CONNECTIONS
])
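Since connection_drawing_spec also accepts a mapping keyed by connection tuples, the per-finger groups above can be used to color the bones by finger. The sketch below only builds the connection-to-color table in plain Python; the BGR colors are arbitrary choices, `build_connection_colors` is a hypothetical helper, and in real use each color would presumably be wrapped as `DrawingSpec(color=...)` before being passed to draw_landmarks.

```python
# Connection groups copied from the constants listed above.
HAND_PALM_CONNECTIONS = ((0, 1), (0, 5), (9, 13), (13, 17), (5, 9), (0, 17))
HAND_THUMB_CONNECTIONS = ((1, 2), (2, 3), (3, 4))
HAND_INDEX_FINGER_CONNECTIONS = ((5, 6), (6, 7), (7, 8))
HAND_MIDDLE_FINGER_CONNECTIONS = ((9, 10), (10, 11), (11, 12))
HAND_RING_FINGER_CONNECTIONS = ((13, 14), (14, 15), (15, 16))
HAND_PINKY_FINGER_CONNECTIONS = ((17, 18), (18, 19), (19, 20))

# Arbitrary BGR colors, one per group (illustrative values only).
GROUP_COLORS = (
    (HAND_PALM_CONNECTIONS, (255, 255, 255)),
    (HAND_THUMB_CONNECTIONS, (0, 0, 255)),
    (HAND_INDEX_FINGER_CONNECTIONS, (0, 255, 0)),
    (HAND_MIDDLE_FINGER_CONNECTIONS, (255, 0, 0)),
    (HAND_RING_FINGER_CONNECTIONS, (255, 255, 0)),
    (HAND_PINKY_FINGER_CONNECTIONS, (0, 255, 255)),
)


def build_connection_colors():
    """Map every (start, end) connection tuple to the color of its group."""
    colors = {}
    for connections, color in GROUP_COLORS:
        for connection in connections:
            colors[connection] = color
    return colors


connection_colors = build_connection_colors()
print(len(connection_colors))     # 21
print(connection_colors[(5, 6)])  # (0, 255, 0)
```

The table has 21 entries, one for each tuple in HAND_CONNECTIONS (6 palm connections plus 3 per finger).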

2 Analysis of Recognition Results

All images are from the internet.

2.1 Handedness

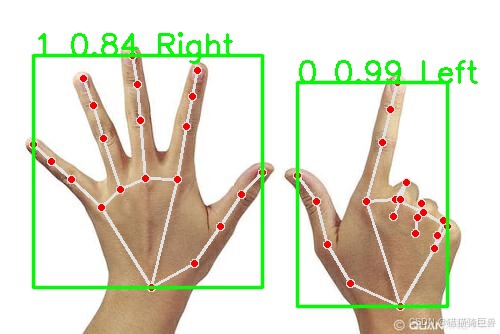

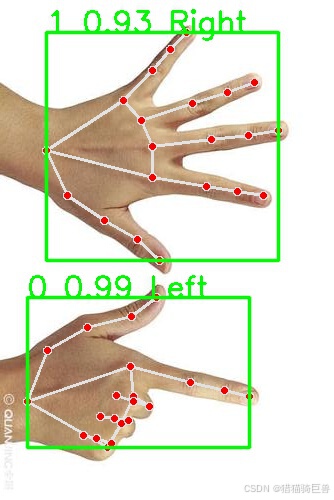

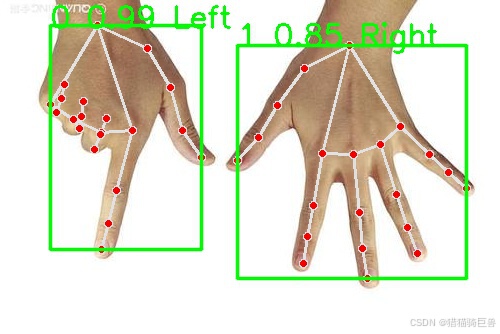

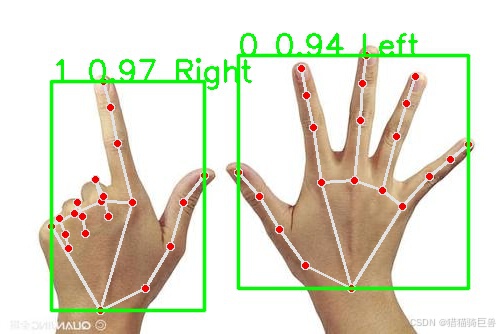

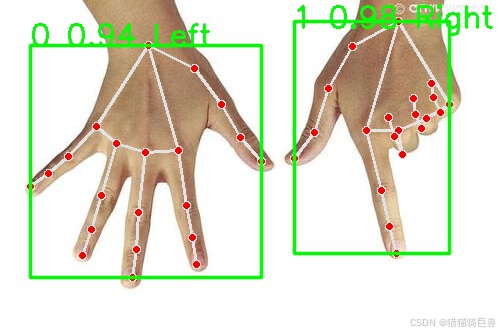

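From left to right, the images are: the original, rotated 90° clockwise, rotated 180°, flipped horizontally, and flipped vertically. In every case MediaPipe labels the left hand as the right hand and the right hand as the left hand, much like looking in a mirror.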

2.2 Index

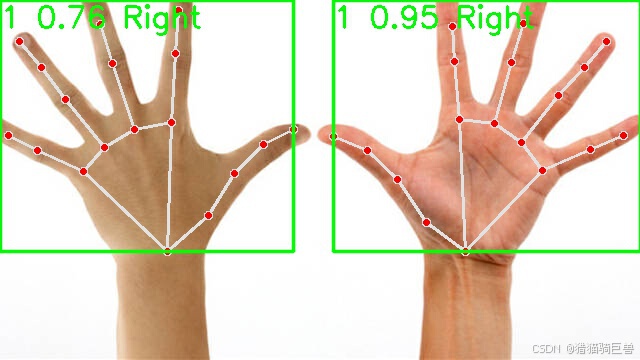

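As the image shows, Left corresponds to index 0 and Right to index 1. When several hands with the same handedness appear at the same time, the confidence score gradually decreases.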

2.3 Model recognition quality

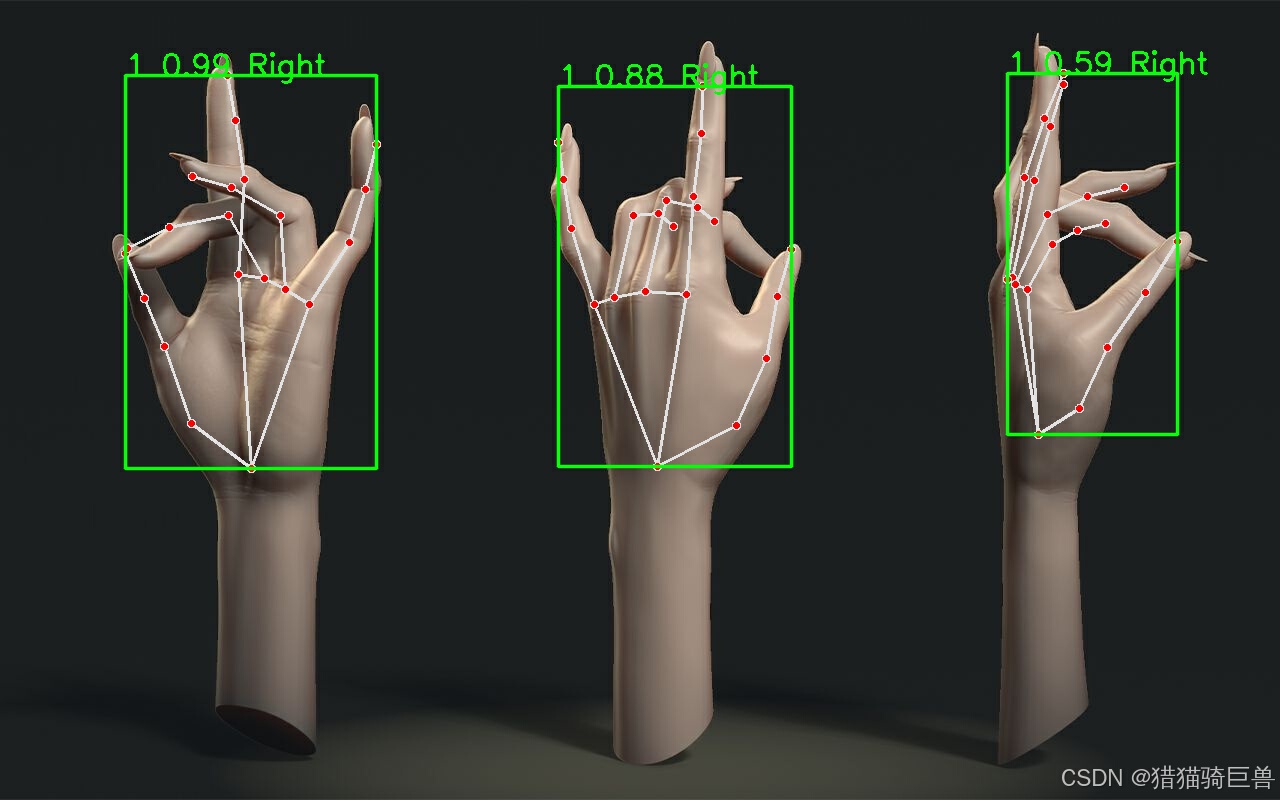

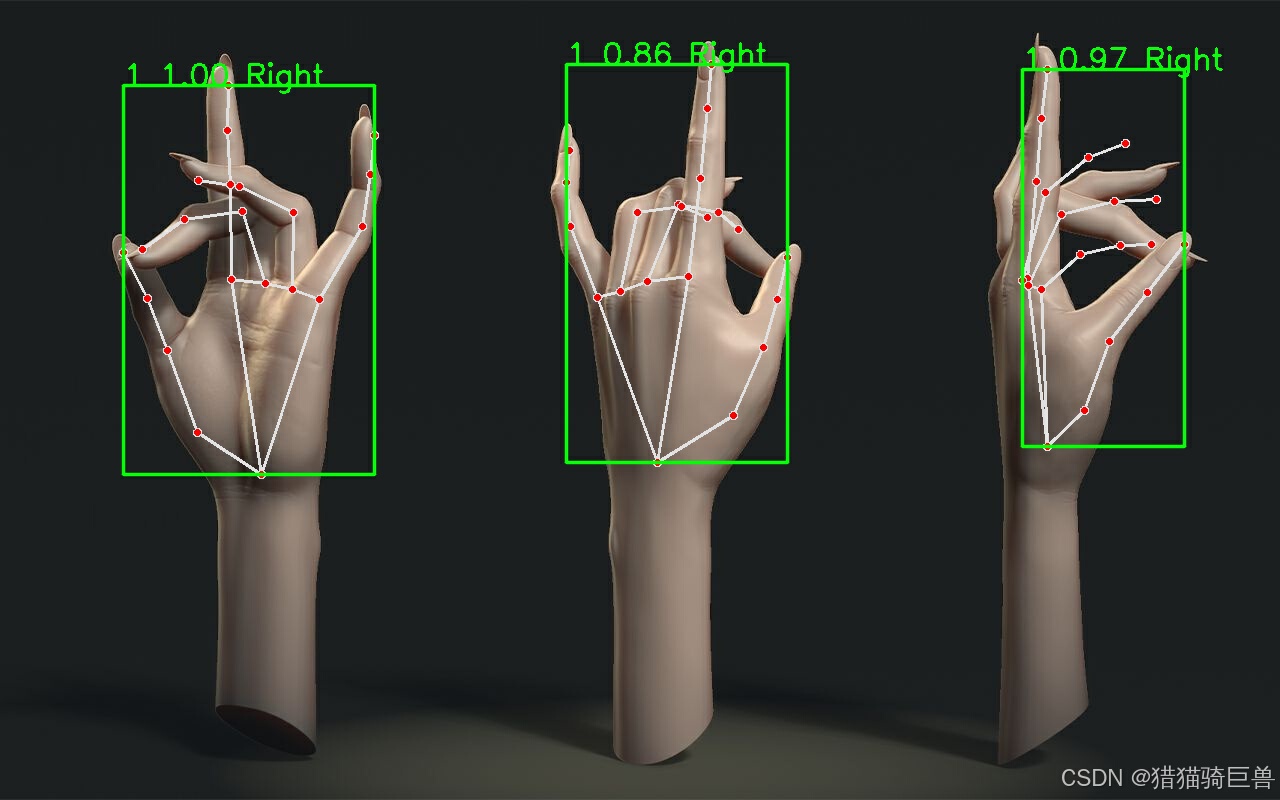

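The first image uses model_complexity=0 and the second uses model_complexity=1. The results show that model 1 does not necessarily recognize hands better than model 0. Ranked from best to worst, recognition quality is: palm, back of the hand, side of the hand.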

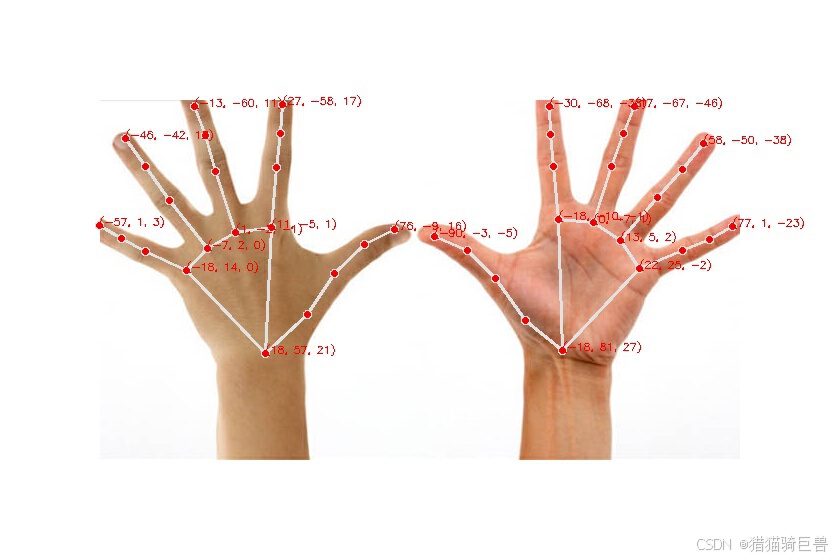

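2.4 Hand landmark world coordinates (multi_hand_world_landmarks)

As the following images show, the world coordinates are relative coordinates whose origin is the approximate geometric center of the hand mentioned above. As the hand gets smaller in the image, the coordinates do not change noticeably. The x, y, and z axes point from left to right, from top to bottom, and from outside to inside, respectively. To estimate the distance from the hand to the camera, converting multi_hand_landmarks to pixel coordinates and working from those is probably the better approach.

This post has run rather long, so gesture recognition is left for the next one.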
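One way the pixel-based idea could be sketched is with the pinhole camera relation, distance = focal length × real size / pixel size. This is an assumption-heavy illustration rather than a method from MediaPipe: the helpers are hypothetical, the real palm width (0.08 m) and focal length (600 px) are made-up values that would have to come from measurement and camera calibration, and the estimate only holds while the palm roughly faces the camera. Landmarks 5 and 17 (the bases of the index finger and pinky) are used as the reference span.

```python
import math


def pixel_distance(a, b, width, height):
    """Euclidean distance in pixels between two normalized (x, y) points."""
    return math.hypot((a[0] - b[0]) * width, (a[1] - b[1]) * height)


def estimate_distance_m(pixel_span, real_span_m, focal_length_px):
    """Pinhole-camera estimate: focal length * real size / size in pixels."""
    if pixel_span <= 0:
        raise ValueError("pixel_span must be positive")
    return focal_length_px * real_span_m / pixel_span


# Assumed normalized positions of landmarks 5 and 17 in a 640x480 frame.
index_base = (0.40, 0.50)
pinky_base = (0.55, 0.50)

span_px = pixel_distance(index_base, pinky_base, 640, 480)
distance = estimate_distance_m(span_px, real_span_m=0.08, focal_length_px=600)
print(round(span_px), round(distance, 3))  # 96 0.5
```

Because the span shrinks when the hand is rotated away from the camera, a more robust version would likely combine several landmark pairs.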
