风控数据挖掘方法

生肖属相单变量分析

import pandas as pd

import numpy as np

f = open(r'C:\Users\meizihang\Documents\A

LLCODE\课程资料\七月在线\金融风控实战\02 树模型与规则挖掘\星座生肖单变量分析\ft_zodiac.txt', encoding='utf-8')

ft_zodiac = pd.read_csv(f)

print(ft_zodiac.shape)

ft_zodiac.head()

“”“

pd15作为好坏的分割节点。>15 为坏人,<15为好人?

15天以上的人为坏,5天以内的人为好。

”“”

l = open(r'C:\Users\meizihang\Documents\ALLCODE\课程资料\七月在线\金融风控实战\02 树模型与规则挖掘\星座生肖单变量分析\zodiac_label.txt')

zodiac_label=pd.read_csv(l)

ft_label = zodiac_label[zodiac_label['label'] != 2]

ft_label.head()

data = pd.merge(ft_label,ft_zodiac,on = 'order_id',how = 'inner')

data.head()

badrate = bad/toal

zodiac_list = set(data.zodiac)

chinese_zodiac_list = set(data.chinese_zodiac)

zodiac_list

chinese_zodiac_list

#星座

zodiac_badrate = {}

for x in zodiac_list:

a = data[data.zodiac == x]

bad = a[a.label == 1]['label'].count()

good = a[a.label == 0]['label'].count()

zodiac_badrate[x] = bad/(bad+good)

zodiac_badrate

f = zip(zodiac_badrate.keys(),zodiac_badrate.values())

f = sorted(f,key = lambda x : x[1],reverse = True )

zodiac_badrate = pd.DataFrame(f)

zodiac_badrate.columns = pd.Series(['星座','badrate'])

zodiac_badrate

from pyecharts import Line

x = zodiac_badrate['星座']

y = zodiac_badrate['badrate']

line = Line('星座')

line.add(1,x,y)

#生肖

chinese_zodiac_badrate = {}

for x in chinese_zodiac_list:

a = data[data.chinese_zodiac == x]

bad = a[a.label == 1]['label'].count()

good = a[a.label == 0]['label'].count()

chinese_zodiac_badrate[x] = bad/(bad+good)

chinese_zodiac_badrate

f = zip(chinese_zodiac_badrate.keys(),chinese_zodiac_badrate.values())

f = sorted(f,key = lambda x : x[1],reverse = True )

chinese_zodiac_badrate = pd.DataFrame(f)

chinese_zodiac_badrate.columns = pd.Series(['生肖','badrate'])

chinese_zodiac_badrate

from pyecharts import Line

x = chinese_zodiac_badrate['生肖']

y = chinese_zodiac_badrate['badrate']

line = Line('生肖')

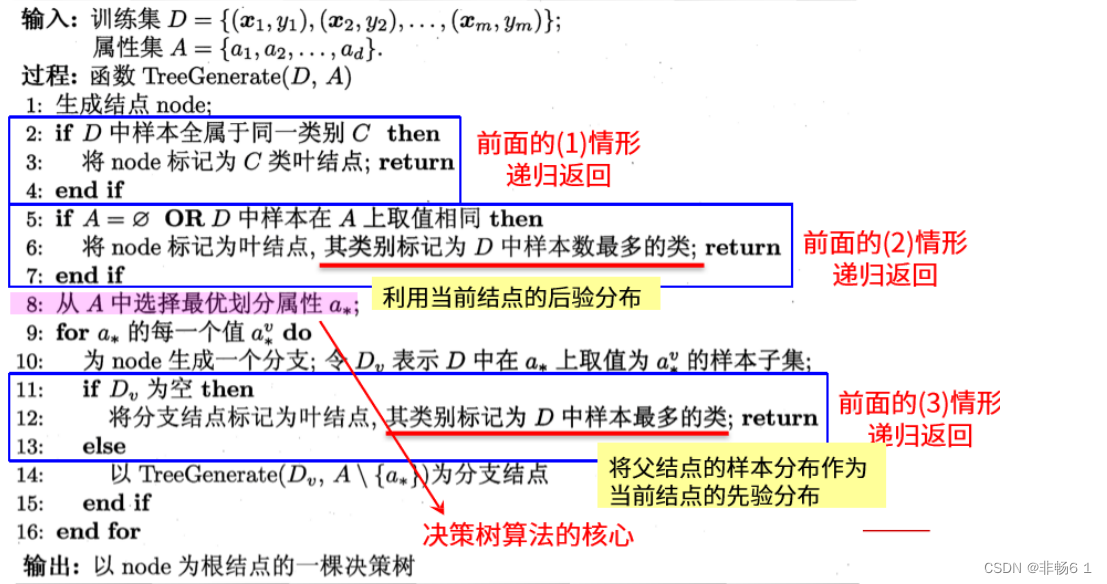

line.add(1,x,y)决策树总体流程:

决策树规则挖掘

import pandas as pd

import numpy as np

import os

os.environ["PATH"] += os.pathsep + 'C:/Program Files (x86)/Graphviz2.38/bin/'

path = 'C:\\Users\\meizihang\\Documents\\ALLCODE\\课程资料\\七月在线\\金融风控实战\\02 树模型与规则挖掘\\油品数据挖掘\\'

data = pd.read_excel(path + 'oil_data_for_tree.xlsx')

data.head()

set(data.class_new)

org_lst = ['uid','create_dt','oil_actv_dt','class_new','bad_ind']

agg_lst = ['oil_amount','discount_amount','sale_amount','amount','pay_amount','coupon_amount','payment_coupon_amount']

dstc_lst = ['channel_code','oil_code','scene','source_app','call_source']

df = data[org_lst].copy()

df[agg_lst] = data[agg_lst].copy()

df[dstc_lst] = data[dstc_lst].copy()

df.isna().sum()

df.describe()

def time_isna(x,y):

if str(x) == 'NaT':

x = y

else:

x = x

return x

df2 = df.sort_values(['uid','create_dt'],ascending = False)

df2['create_dt'] = df2.apply(lambda x: time_isna(x.create_dt,x.oil_actv_dt),axis = 1)

df2['dtn'] = (df2.oil_actv_dt - df2.create_dt).apply(lambda x :x.days)

df = df2[df2['dtn']<180]

df.head()

base = df[org_lst]

base['dtn'] = df['dtn']

base = base.sort_values(['uid','create_dt'],ascending = False)

base = base.drop_duplicates(['uid'],keep = 'first')

base.shape

#做变量衍生

gn = pd.DataFrame()

for i in agg_lst:

tp = pd.DataFrame(df.groupby('uid').apply(lambda df:len(df[i])).reset_index())

tp.columns = ['uid',i + '_cnt']

if gn.empty == True:

gn = tp

else:

gn = pd.merge(gn,tp,on = 'uid',how = 'left')

tp = pd.DataFrame(df.groupby('uid').apply(lambda df:np.where(df[i]>0,1,0).sum()).reset_index())

tp.columns = ['uid',i + '_num']

if gn.empty == True:

gn = tp

else:

gn = pd.merge(gn,tp,on = 'uid',how = 'left')

tp = pd.DataFrame(df.groupby('uid').apply(lambda df:np.nansum(df[i])).reset_index())

tp.columns = ['uid',i + '_tot']

if gn.empty == True:

gn = tp

else:

gn = pd.merge(gn,tp,on = 'uid',how = 'left')

tp = pd.DataFrame(df.groupby('uid').apply(lambda df:np.nanmean(df[i])).reset_index())

tp.columns = ['uid',i + '_avg']

if gn.empty == True:

gn = tp

else:

gn = pd.merge(gn,tp,on = 'uid',how = 'left')

tp = pd.DataFrame(df.groupby('uid').apply(lambda df:np.nanmax(df[i])).reset_index())

tp.columns = ['uid',i + '_max']

if gn.empty == True:

gn = tp

else:

gn = pd.merge(gn,tp,on = 'uid',how = 'left')

tp = pd.DataFrame(df.groupby('uid').apply(lambda df:np.nanmin(df[i])).reset_index())

tp.columns = ['uid',i + '_min']

if gn.empty == True:

gn = tp

else:

gn = pd.merge(gn,tp,on = 'uid',how = 'left')

tp = pd.DataFrame(df.groupby('uid').apply(lambda df:np.nanvar(df[i])).reset_index())

tp.columns = ['uid',i + '_var']

if gn.empty == True:

gn = tp

else:

gn = pd.merge(gn,tp,on = 'uid',how = 'left')

tp = pd.DataFrame(df.groupby('uid').apply(lambda df:np.nanmax(df[i]) -np.nanmin(df[i]) ).reset_index())

tp.columns = ['uid',i + '_var']

if gn.empty == True:

gn = tp

else:

gn = pd.merge(gn,tp,on = 'uid',how = 'left')

tp = pd.DataFrame(df.groupby('uid').apply(lambda df:np.nanmean(df[i])/max(np.nanvar(df[i]),1)).reset_index())

tp.columns = ['uid',i + '_var']

if gn.empty == True:

gn = tp

else:

gn = pd.merge(gn,tp,on = 'uid',how = 'left')

#对dstc_lst变量求distinct个数

gc = pd.DataFrame()

for i in dstc_lst:

tp = pd.DataFrame(df.groupby('uid').apply(lambda df: len(set(df[i]))).reset_index())

tp.columns = ['uid',i + '_dstc']

if gc.empty == True:

gc = tp

else:

gc = pd.merge(gc,tp,on = 'uid',how = 'left')

#将变量组合在一起

fn = pd.merge(base,gn,on= 'uid')

fn = pd.merge(fn,gc,on= 'uid')

fn.shape

fn = fn.fillna(0)

fn.head(100)

#决策树模型

x = fn.drop(['uid','oil_actv_dt','create_dt','bad_ind','class_new'],axis = 1)

y = fn.bad_ind.copy()

from sklearn import tree

dtree = tree.DecisionTreeRegressor(max_depth = 2,min_samples_leaf = 500,min_samples_split = 5000)

dtree = dtree.fit(x,y)

#输出决策树图像,并作出决策

import pydotplus

from IPython.display import Image

from sklearn.externals.six import StringIO

import os

os.environ["PATH"] += os.pathsep + 'C:/Program Files (x86)/Graphviz2.38/bin/'

with open(path + "dt.dot", "w") as f:

tree.export_graphviz(dtree, out_file=f)

dot_data = StringIO()

tree.export_graphviz(dtree, out_file=dot_data,

feature_names=x.columns,

class_names=['bad_ind'],

filled=True, rounded=True,

special_characters=True)

graph = pydotplus.graph_from_dot_data(dot_data.getvalue())

Image(graph.create_png())

#value = badrate

sum(fn.bad_ind)/len(fn.bad_ind)

302

302

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?