1. Download Python 3.5 (can be skipped, since Anaconda in step 2 ships with Python 3.5)

Download link:

[https://www.python.org/downloads/release/python-350/]

Installation guide:

[https://jingyan.baidu.com/article/29697b9158e688ab21de3c75.html]

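2. Download Anaconda (ships with Python 3.5)

3. Download and install TensorFlow

Install TensorFlow by running the commands in Anaconda Prompt. Be sure to open Anaconda Prompt as administrator; otherwise you may get an error saying that cmd is not an internal command. If that does not fix it, search online for the error message.

Installation guide: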

https://www.cnblogs.com/ming-4/p/11516728.html

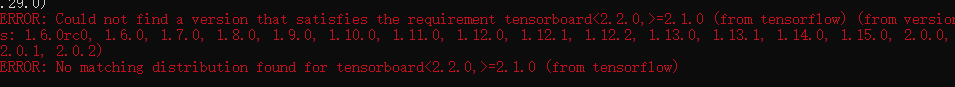

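If an error occurs

Fix

If the command in that fix does not work, check the comments under it.

Then I ran into another error

Check the Python version and the pip version

If nothing else works, consider switching to a mirror: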

pip install https://mirrors.tuna.tsinghua.edu.cn/tensorflow/windows/cpu/tensorflow-1.1.0-cp35-cp35m-win_amd64.whl
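The wheel filename in the command above encodes what it was built for: `cp35` means CPython 3.5 and `win_amd64` means 64-bit Windows, so pip will reject it on any other interpreter. As a rough sketch, you can compare the running interpreter against those tags before installing. The helper name `wheel_matches` is made up for illustration, and it only understands simple `cpXY` tags and the two Windows platform tags:

```python
import struct
import sys

WHEEL = "tensorflow-1.1.0-cp35-cp35m-win_amd64.whl"


def wheel_matches(filename, version=None, bits=None, platform=None):
    """Return True if the described interpreter fits the wheel's tags.

    Hypothetical helper: handles only 'cpXY' Python tags and the
    'win_amd64' / 'win32' platform tags.
    """
    version = version or sys.version_info[:2]
    bits = bits or struct.calcsize("P") * 8   # pointer size in bits: 32 or 64
    platform = platform or sys.platform       # 'win32' on every Windows build

    # Wheel names look like: name-version-pythontag-abitag-platformtag.whl
    _name, _ver, py_tag, _abi_tag, plat_tag = filename[:-len(".whl")].split("-")

    wanted_py = "cp{}{}".format(*version)
    wanted_plat = None
    if platform == "win32":
        wanted_plat = {64: "win_amd64", 32: "win32"}.get(bits)
    return py_tag == wanted_py and plat_tag == wanted_plat


print("Python", sys.version.split()[0], "fits this wheel:", wheel_matches(WHEEL))
```

If this prints False, the install error most likely comes from a Python version or bitness mismatch rather than from the mirror.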

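Clearly I am a beginner, and I took no fewer wrong turns than you will.

(Getting this far took me a whole morning.)

Test the TensorFlow installation in Spyder (thanks to 凡哥 for providing this; reposted from 悲恋花丶无心之人)

If import tensorflow in Spyder cannot find tensorflow, click through and follow method 2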
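When Spyder cannot find tensorflow, one possible cause is that Spyder is running a different Python interpreter than the one pip installed into. A minimal check using only the standard library (the helper name `is_importable` is illustrative, not part of any library) is to run this inside Spyder:

```python
import importlib.util
import sys


def is_importable(module_name):
    """Return True if the current interpreter can locate module_name."""
    return importlib.util.find_spec(module_name) is not None


# Which python.exe is Spyder actually using?
print("interpreter:", sys.executable)
print("tensorflow importable:", is_importable("tensorflow"))
```

If the interpreter path is not the Anaconda environment you installed into, the install itself may be fine and only Spyder's interpreter setting needs to change.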

4. Handwritten digit recognition in Python

import os

# Hide TensorFlow's C++ INFO and WARNING logs (set before importing tensorflow)
os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

# Global object holding the flag values; reference them as e.g. FLAGS.iteration
FLAGS = tf.app.flags.FLAGS

# Training parameters (the value in brackets is the default)
tf.app.flags.DEFINE_integer("iteration", 10001, "Iterations to train [10001]")
tf.app.flags.DEFINE_integer("disp_freq", 200, "Display the current results every disp_freq iterations [200]")
tf.app.flags.DEFINE_integer("train_batch_size", 100, "Number of images per batch [100]")
tf.app.flags.DEFINE_float("learning_rate", 0.1, "Learning rate for gradient descent [0.1]")
tf.app.flags.DEFINE_string("log_dir", "logs", "Directory of logs.")


def main(argv=None):
    # 0. Prepare the training / validation / test sets
    mnist = input_data.read_data_sets('MNIST_data', one_hot=True)

    # 1. Input: placeholders feed data into the network; None means the
    #    first dimension (the batch size) can have any length
    with tf.name_scope('Input'):
        X = tf.placeholder(dtype=tf.float32, shape=[None, 784], name='X_placeholder')
        # float32 rather than int32: the one-hot labels go straight into the cross-entropy op
        Y = tf.placeholder(dtype=tf.float32, shape=[None, 10], name='Y_placeholder')

    # 2. Forward pass: a single linear layer followed by softmax
    with tf.name_scope('Inference'):
        W = tf.Variable(initial_value=tf.random_normal(shape=[784, 10], stddev=0.01), name='Weights')
        b = tf.Variable(initial_value=tf.zeros(shape=[10]), name='bias')
        logits = tf.matmul(X, W) + b
        Y_pred = tf.nn.softmax(logits=logits)

    # 3. Loss
    with tf.name_scope('Loss'):
        # Cross-entropy per example
        cross_entropy = tf.nn.softmax_cross_entropy_with_logits(labels=Y, logits=logits, name='cross_entropy')
        # Mean over the batch
        loss = tf.reduce_mean(cross_entropy, name='loss')

    # 4. Parameter learning
    with tf.name_scope('Optimization'):
        optimizer = tf.train.GradientDescentOptimizer(FLAGS.learning_rate).minimize(loss)

    # 5. Evaluation
    with tf.name_scope('Evaluate'):
        # Boolean per example: was the prediction correct?
        correct_prediction = tf.equal(tf.argmax(Y_pred, 1), tf.argmax(Y, 1))
        # Cast the booleans to floats and average them to get the accuracy
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

    print('~~~~~~~~~~~ Running the computation graph ~~~~~~~~~~~')
    with tf.Session() as sess:
        summary_writer = tf.summary.FileWriter(logdir=FLAGS.log_dir, graph=sess.graph)
        # Initialize all variables
        sess.run(tf.global_variables_initializer())

        total_loss = 0
        for i in range(0, FLAGS.iteration):
            X_batch, Y_batch = mnist.train.next_batch(FLAGS.train_batch_size)
            _, loss_batch = sess.run([optimizer, loss], feed_dict={X: X_batch, Y: Y_batch})
            total_loss += loss_batch
            if i % FLAGS.disp_freq == 0:
                val_acc = sess.run(accuracy, feed_dict={X: mnist.validation.images, Y: mnist.validation.labels})
                if i == 0:
                    # Only one batch has been seen so far, so there is nothing to average
                    print('step: {}, train_loss: {}, val_acc: {}'.format(i, total_loss, val_acc))
                else:
                    # Average training loss over the last disp_freq iterations
                    print('step: {}, train_loss: {}, val_acc: {}'.format(i, total_loss / FLAGS.disp_freq, val_acc))
                total_loss = 0

        test_acc = sess.run(accuracy, feed_dict={X: mnist.test.images, Y: mnist.test.labels})
        print('test accuracy: {}'.format(test_acc))
        summary_writer.close()


# Run main
if __name__ == '__main__':
    tf.app.run()
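To make the Loss and Evaluate steps of the script above more concrete, here is a pure-Python sketch of the same arithmetic on a tiny made-up batch: softmax cross-entropy per example, averaged over the batch, plus argmax accuracy. The function names and the sample numbers are illustrative assumptions; this is not how TensorFlow implements these ops internally.

```python
import math


def log_softmax(logits):
    """Log-probabilities for one example, computed in a numerically stable way."""
    shift = max(logits)  # subtracting the max avoids overflow in exp
    log_total = math.log(sum(math.exp(z - shift) for z in logits))
    return [z - shift - log_total for z in logits]


def cross_entropy(one_hot_label, logits):
    """Cross-entropy between a one-hot label and softmax(logits)."""
    return -sum(y * lp for y, lp in zip(one_hot_label, log_softmax(logits)))


def argmax(values):
    """Index of the largest value."""
    return max(range(len(values)), key=lambda i: values[i])


def batch_loss_and_accuracy(labels, batch_logits):
    """Mean cross-entropy and fraction of correct argmax predictions."""
    losses = [cross_entropy(y, z) for y, z in zip(labels, batch_logits)]
    hits = [argmax(z) == argmax(y) for y, z in zip(labels, batch_logits)]
    return sum(losses) / len(losses), sum(hits) / len(hits)


# Two made-up examples with three classes each
labels = [[0, 1, 0], [1, 0, 0]]
batch_logits = [[1.0, 3.0, 0.5], [0.2, 2.0, 0.1]]

loss, acc = batch_loss_and_accuracy(labels, batch_logits)
print("loss:", loss, "accuracy:", acc)
```

The first example is classified correctly and the second is not, so the accuracy here is one half; the loss is dominated by the second example, where the true class received a low score.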

A second script: a multilayer perceptron with two hidden layers of 256 units each, trained on the same MNIST data.

# -*- coding: utf-8 -*-
"""
Created on Mon Feb 24 16:18:26 2020

TREEGER

@author: Administrator
"""

import ssl

import tensorflow as tf

# Workaround for certificate errors while downloading MNIST.
# Note: this turns off HTTPS certificate verification for the whole process.
ssl._create_default_https_context = ssl._create_unverified_context

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets("./mnist_data/", one_hot=True)

learning_rate = 0.005
training_epochs = 20
batch_size = 100
batch_count = int(mnist.train.num_examples / batch_size)

n_hidden_1 = 256
n_hidden_2 = 256
n_input = 784   # 28 x 28 pixels
n_classes = 10  # digits 0-9

X = tf.placeholder("float", [None, n_input])
Y = tf.placeholder("float", [None, n_classes])

weights = {
    'weight1': tf.Variable(tf.random_normal([n_input, n_hidden_1])),
    'weight2': tf.Variable(tf.random_normal([n_hidden_1, n_hidden_2])),
    'out': tf.Variable(tf.random_normal([n_hidden_2, n_classes]))
}

biases = {
    'bias1': tf.Variable(tf.random_normal([n_hidden_1])),
    'bias2': tf.Variable(tf.random_normal([n_hidden_2])),
    'out': tf.Variable(tf.random_normal([n_classes]))
}


def multilayer_perceptron_model(x):
    # Note: no activation function is applied between the layers,
    # so the output is still an affine function of x
    layer_1 = tf.add(tf.matmul(x, weights['weight1']), biases['bias1'])
    layer_2 = tf.add(tf.matmul(layer_1, weights['weight2']), biases['bias2'])
    out_layer = tf.matmul(layer_2, weights['out']) + biases['out']
    return out_layer


logits = multilayer_perceptron_model(X)

loss_op = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=logits, labels=Y))

# Pick one optimizer; the alternatives are left commented out
optimizer = tf.train.GradientDescentOptimizer(learning_rate)
# optimizer = tf.train.MomentumOptimizer(learning_rate, 0.2)
# optimizer = tf.train.AdagradOptimizer(learning_rate)
# optimizer = tf.train.AdamOptimizer(learning_rate)

train_op = optimizer.minimize(loss_op)

init = tf.global_variables_initializer()  # variable initialization

with tf.Session() as sess:
    sess.run(init)

    for epoch in range(training_epochs):  # range(150): training_epochs
        avg_cost = 0.
        for i in range(batch_count):
            train_x, train_y = mnist.train.next_batch(batch_size)
            _, c = sess.run([train_op, loss_op], feed_dict={X: train_x, Y: train_y})
            avg_cost += c / batch_count  # running mean of the batch losses
        print("Epoch:", '%02d' % (epoch + 1), "avg cost={:.6f}".format(avg_cost))

    # Evaluate on the test set (must stay inside the session for eval() to work)
    pred = tf.nn.softmax(logits)  # apply softmax to the logits
    correct_prediction = tf.equal(tf.argmax(pred, 1), tf.argmax(Y, 1))
    accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))
    print("Accuracy:", accuracy.eval({X: mnist.test.images, Y: mnist.test.labels}))
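One thing worth noticing in multilayer_perceptron_model above: the hidden layers apply no activation function, so the stacked matrix multiplications compose into a single linear map (affine, once the biases are included), and the extra layers add no expressive power. The pure-Python sketch below checks this on tiny made-up matrices, with biases left out for brevity; the helper names `matmul` and `relu` are illustrative. Whether to add a nonlinearity such as `tf.nn.relu` between the layers is a design choice this post does not make, so treat this only as an illustration.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def relu(m):
    """Element-wise max(0, v)."""
    return [[max(0.0, v) for v in row] for row in m]


# Made-up input (one example, two features) and two small weight matrices
x = [[1.0, -2.0]]
w1 = [[1.0, -1.0], [0.5, 2.0]]
w2 = [[2.0, 0.0], [1.0, -1.0]]

stacked = matmul(matmul(x, w1), w2)          # two layers, no activation
collapsed = matmul(x, matmul(w1, w2))        # one layer with merged weights
with_relu = matmul(relu(matmul(x, w1)), w2)  # ReLU between the layers

print("stacked:  ", stacked)
print("collapsed:", collapsed)
print("with relu:", with_relu)
```

The first two results are identical, while the ReLU variant differs, which is what lets hidden layers model something a single linear layer cannot.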
