Contents

2. Stochastic Gradient Descent

1. Tuning the learning rate: Adagrad
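
As an illustration, here is a minimal sketch of the usual Adagrad update, in which each parameter's step is the base learning rate divided by the square root of the running sum of that parameter's squared gradients. The function name `adagrad`, the toy objective, and the default values of `lr`, `steps`, and `eps` are assumptions made for this example; they do not come from the notes.

```python
import math

def adagrad(grad_fn, w, lr=1.0, steps=200, eps=1e-8):
    """Minimize a function from its gradient using per-parameter Adagrad steps."""
    w = list(w)
    sum_sq = [0.0] * len(w)  # running sum of squared gradients, one per parameter
    for _ in range(steps):
        g = grad_fn(w)
        for i, g_i in enumerate(g):
            sum_sq[i] += g_i * g_i
            # Effective learning rate shrinks as squared gradients accumulate.
            w[i] -= lr / (math.sqrt(sum_sq[i]) + eps) * g_i
    return w

def grad(w):
    # Gradient of the toy objective f(w) = (w0 - 3)^2 + 10 * (w1 + 1)^2 (hypothetical).
    return [2.0 * (w[0] - 3.0), 20.0 * (w[1] + 1.0)]

w_final = adagrad(grad, [0.0, 0.0])
print(w_final)
```

The two coordinates have very different curvature, yet both use the same base `lr`, because each one is rescaled by its own gradient history.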

2. Stochastic Gradient Descent

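Perform one gradient descent update for each training example seen, instead of waiting to process the whole dataset before updating.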

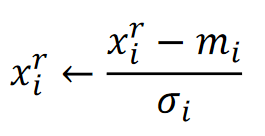
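
The per-example update can be sketched as follows for a one-variable linear model with squared error. The model, the toy data, and the names `sgd_linear`, `lr`, `epochs`, and `seed` are assumptions chosen for this example only.

```python
import random

def sgd_linear(data, lr=0.01, epochs=1000, seed=0):
    """Fit y = w * x + b, updating the parameters after every single example."""
    rng = random.Random(seed)
    data = list(data)
    w, b = 0.0, 0.0
    for _ in range(epochs):
        rng.shuffle(data)  # visit the examples in a random order each epoch
        for x, y in data:
            err = (w * x + b) - y
            # Gradient of the squared error of this one example only.
            w -= lr * 2.0 * err * x
            b -= lr * 2.0 * err
    return w, b

# Noise-free toy data generated from y = 2x + 1 (hypothetical).
data = [(x, 2.0 * x + 1.0) for x in [0.0, 1.0, 2.0, 3.0, 4.0]]
w, b = sgd_linear(data)
print(w, b)
```

With five examples, each epoch makes five parameter updates, where full-batch gradient descent would make one.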

3. Feature Scaling

Rescale the different input features so that they all have a similar range, for example with mean normalization.
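
A minimal sketch of one common variant: subtract each feature's mean and divide by its standard deviation. Dividing by the range (max minus min) is another variant that also goes by this name; the choice made here, the function name `scale_features`, and the sample rows are assumptions for the example.

```python
import statistics

def scale_features(rows):
    """Shift each column to zero mean and divide by its standard deviation."""
    cols = list(zip(*rows))
    means = [statistics.fmean(c) for c in cols]
    stds = [statistics.pstdev(c) for c in cols]
    return [
        # A constant column (std == 0) is mapped to 0.0 to avoid dividing by zero.
        [(v - m) / s if s > 0 else 0.0 for v, m, s in zip(row, means, stds)]
        for row in rows
    ]

# Two features whose raw scales differ by a factor of 100 (hypothetical data).
rows = [[1.0, 100.0], [2.0, 200.0], [3.0, 300.0]]
scaled = scale_features(rows)
print(scaled)
```

After scaling, both columns have mean 0 and standard deviation 1, so a single learning rate suits both directions.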

4. Limitations of gradient descent

4.1 Very slow at the plateau

4.2 Stuck at saddle point
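
A small sketch of the saddle-point case, using the hypothetical function f(x, y) = x^2 - y^2, whose gradient is zero at the origin even though the origin is not a minimum. The function, the starting points, and the step size are assumptions picked for illustration.

```python
def gradient_descent(grad, start, lr=0.1, steps=200):
    """Plain gradient descent in two dimensions."""
    x, y = start
    for _ in range(steps):
        gx, gy = grad(x, y)
        x, y = x - lr * gx, y - lr * gy
    return x, y

def saddle_grad(x, y):
    # Gradient of f(x, y) = x^2 - y^2, which has a saddle point at (0, 0).
    return 2.0 * x, -2.0 * y

# Starting exactly on the x-axis, the y-gradient is always zero,
# so the iterates slide into the saddle point and stay there.
stuck = gradient_descent(saddle_grad, (1.0, 0.0))

# A tiny offset in y is amplified at every step, so the iterates move away.
escaped = gradient_descent(saddle_grad, (1.0, 1e-6))
print(stuck, escaped)
```

Because this toy function is unbounded below, the second run never settles; it only shows that the saddle is unstable under small perturbations.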

4.3 Stuck at local minima
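
To illustrate, the sketch below runs gradient descent from two starting points on a hypothetical one-dimensional function with two minima, f(x) = x^4 - 3x^2 + x. The function, the starting points, and the step size are assumptions chosen for the example.

```python
def f(x):
    return x**4 - 3.0 * x**2 + x

def df(x):
    # Derivative of f.
    return 4.0 * x**3 - 6.0 * x + 1.0

def descend(x, lr=0.01, steps=500):
    """Plain gradient descent in one dimension."""
    for _ in range(steps):
        x -= lr * df(x)
    return x

right = descend(2.0)   # settles in the shallower minimum on the right
left = descend(-2.0)   # settles in the deeper minimum on the left
print(right, f(right))
print(left, f(left))
```

Both runs stop where the derivative is close to zero, but only the run started from the left reaches the lower value of `f`; the result depends on the starting point.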
