处理对象:已经做好分词准备的List

例如:

[[‘今晚’, ‘吃’, ‘五花肉’, ‘土豆’, ‘盖浇饭’],

[‘茄子’, ‘盖浇饭’,‘好吃’],

[‘盖浇饭’, ‘辣味’, ‘真香’, ‘土豆’]]

1、 需要的包

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

from wordcloud import WordCloud

2、流程

2.1 词频统计

sentence=[['今晚', '吃', '五花肉', '土豆', '盖浇饭'],

['茄子', '盖浇饭','好吃'],

['盖浇饭', '辣味', '真香', '土豆']]

def count_word(word_list):

word_result=[]

for sen in word_list:

for word in sen:

word_result.append(word)

words_df = pd.DataFrame({'word':word_result})

#按照关键字groupby分组统计词频,并按照计数降序排序

words_stat = words_df.groupby(by = ['word'])['word'].agg({np.size})

words_stat=words_stat.reset_index().sort_values(by=['size'],ascending=False)

return words_stat

print(count_word(sentence))

输出:

word count

5 盖浇饭 3

3 土豆 2

0 五花肉 1

1 今晚 1

2 吃 1

4 好吃 1

6 真香 1

7 茄子 1

8 辣味 1

2.2 关键字提取

def Topic_word(word_list,num):

#list中每行词语大于Num才进行关键字提取

result=[]

for sen in word_list:

if len(sen) > num:

keywords = " ".join(jieba.analyse.extract_tags(",".join(word for word in sen) , topK=num))

sperate_word = keywords.split(" ")

else:

sperate_word = sen

result.append(sperate_word)

return result

print(Topic_word(sentence,3))

输出:

[[‘盖浇饭’, ‘五花肉’, ‘土豆’],

[‘茄子’, ‘盖浇饭’, ‘好吃’],

[‘盖浇饭’, ‘真香’, ‘辣味’]]

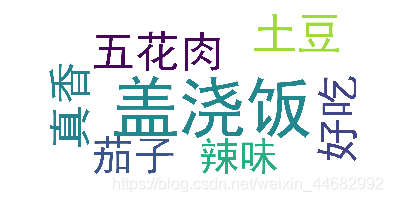

2.3 词云制作

def my_word_cloud(word_list):

words_stat = count_word(word_list)

ziti = 'C:/windows/Fonts/simhei.ttf' #字体地址

wordcloud=WordCloud(font_path=ziti,scale=5,background_color="white",max_font_size=80)

#scale代表像素,若需要清晰的图片可加大该值,也需要考虑电脑计算能力,可以加到40自行感受一下

word_frequence = {x[0]:x[1] for x in words_stat.head(5).values} #其中head()内的数字可自定义

wordcloud=wordcloud.fit_words(word_frequence)

plt.imshow(wordcloud) #词云展示

plt.axis('off')

wordcloud.to_file(r'wordcloud_1.jpg') #保存词云图片

输出:

2269

2269

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?