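This post only compares depthwise separable convolution with standard convolution in terms of parameter count, computation (FLOPs), and runtime. For model accuracy, see the MobileNet series of papers.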

1. Model

- Depthwise separable convolution

import torch
import torch.nn as nn
import torch.nn.functional as F
import time
from torchstat import stat


class Depthpoint_conv(nn.Module):
    """Depthwise conv + pointwise 1x1 conv + BatchNorm + LeakyReLU."""

    def __init__(self, C_in, C_out, kernel_size, stride, padding, affine=True):
        super(Depthpoint_conv, self).__init__()
        self.op = nn.Sequential(
            # Depthwise: groups=C_in gives every input channel its own filter.
            nn.Conv2d(C_in, C_in, kernel_size=kernel_size, stride=stride, padding=padding, groups=C_in, bias=False),
            # Pointwise: the 1x1 conv mixes channels and maps C_in to C_out.
            nn.Conv2d(C_in, C_out, kernel_size=1, padding=0, bias=False),
            nn.BatchNorm2d(C_out, affine=affine),
            nn.LeakyReLU()
        )

    def forward(self, x):
        out = self.op(x)
        return out


class Net1(nn.Module):
    """Ten stacked depthwise separable blocks, 3 -> 128 channels."""

    def __init__(self):
        super(Net1, self).__init__()
        self.op = nn.Sequential(
            Depthpoint_conv(3, 128, 3, 1, 1),
            Depthpoint_conv(128, 128, 3, 1, 1),
            Depthpoint_conv(128, 128, 3, 1, 1),
            Depthpoint_conv(128, 128, 3, 1, 1),
            Depthpoint_conv(128, 128, 3, 1, 1),
            Depthpoint_conv(128, 128, 3, 1, 1),
            Depthpoint_conv(128, 128, 3, 1, 1),
            Depthpoint_conv(128, 128, 3, 1, 1),
            Depthpoint_conv(128, 128, 3, 1, 1),
            Depthpoint_conv(128, 128, 3, 1, 1)
        )

    def forward(self, x):
        out = self.op(x)
        return out


model_1 = Net1()
model_1.eval()
# Sample input, kept on the CPU so the script also runs without a GPU.
# stat() does not use it: it builds its own input from the shape below.
x = torch.randn((1, 3, 256, 256))
stat(model_1, (3, 256, 256))

- Standard convolution

import torch
import torch.nn as nn
import torch.nn.functional as F
import time
from torchstat import stat


class Conv2d_block(nn.Module):
    """Standard conv + BatchNorm + LeakyReLU."""

    def __init__(self, C_in, C_out, kernel_size, stride, padding, affine=True):
        super(Conv2d_block, self).__init__()
        self.op = nn.Sequential(
            # Standard conv: every output channel sees every input channel.
            nn.Conv2d(C_in, C_out, kernel_size=kernel_size, stride=stride, padding=padding, bias=False),
            nn.BatchNorm2d(C_out, affine=affine),
            nn.LeakyReLU()
        )

    def forward(self, x):
        out = self.op(x)
        return out


class Net2(nn.Module):
    """Ten stacked standard conv blocks, same layout as Net1."""

    def __init__(self):
        super(Net2, self).__init__()
        self.op = nn.Sequential(
            Conv2d_block(3, 128, 3, 1, 1),
            Conv2d_block(128, 128, 3, 1, 1),
            Conv2d_block(128, 128, 3, 1, 1),
            Conv2d_block(128, 128, 3, 1, 1),
            Conv2d_block(128, 128, 3, 1, 1),
            Conv2d_block(128, 128, 3, 1, 1),
            Conv2d_block(128, 128, 3, 1, 1),
            Conv2d_block(128, 128, 3, 1, 1),
            Conv2d_block(128, 128, 3, 1, 1),
            Conv2d_block(128, 128, 3, 1, 1)
        )

    def forward(self, x):
        out = self.op(x)
        return out


model_2 = Net2()
model_2.eval()
# Sample input, kept on the CPU so the script also runs without a GPU.
# stat() does not use it: it builds its own input from the shape below.
x = torch.randn((1, 3, 256, 256))
stat(model_2, (3, 256, 256))
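
As a rough cross-check on what `stat` prints for the two networks above, the parameter and multiply-accumulate (MAC) counts can be worked out by hand. The sketch below is not part of the original code: the helper names (`separable_block`, `standard_block`, `network_cost`) are made up for illustration, and it assumes stride 1 with padding 1 (so every layer keeps the 256x256 resolution), bias-free convolutions, and two learnable parameters per BatchNorm channel.

```python
def separable_block(c_in, c_out, k, h, w):
    """Return (params, macs) for depthwise conv + 1x1 conv + BatchNorm."""
    depthwise = c_in * k * k      # one k x k filter per input channel
    pointwise = c_in * c_out      # 1x1 conv that mixes channels
    bn = 2 * c_out                # BatchNorm scale and shift (affine=True)
    # With stride 1 and "same" padding, each conv weight is applied once
    # per output pixel, so MACs = conv weights * H * W.
    macs = (depthwise + pointwise) * h * w
    return depthwise + pointwise + bn, macs


def standard_block(c_in, c_out, k, h, w):
    """Return (params, macs) for a standard conv + BatchNorm."""
    conv = c_in * c_out * k * k
    bn = 2 * c_out
    return conv + bn, conv * h * w


def network_cost(block, h=256, w=256, width=128, depth=10):
    """Sum params and MACs over a 3 -> width -> ... -> width stack."""
    layers = [(3, width)] + [(width, width)] * (depth - 1)
    params = macs = 0
    for c_in, c_out in layers:
        p, m = block(c_in, c_out, 3, h, w)
        params += p
        macs += m
    return params, macs


sep_params, sep_macs = network_cost(separable_block)
std_params, std_macs = network_cost(standard_block)
print(f"separable: {sep_params:,} params, {sep_macs / 1e9:.2f} GMACs")
print(f"standard:  {std_params:,} params, {std_macs / 1e9:.2f} GMACs")
print(f"param ratio: {sep_params / std_params:.3f}")
```

Under these assumptions the separable network has 160,795 parameters against 1,333,120 for the standard one, roughly 12%. For a single 128-to-128 layer the conv-weight ratio is 1/C_out + 1/k^2 = 1/128 + 1/9, about 0.119. torchstat may count MAdd and FLOPs with a different convention, so its figures need not match these MAC counts digit for digit.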

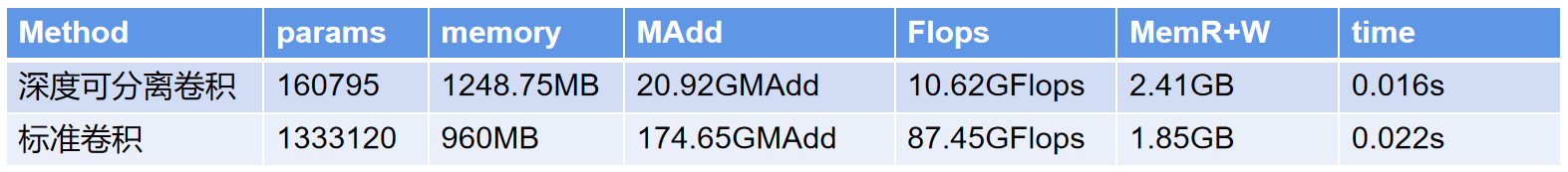
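
The listings above do not show how the runtime part of the comparison was measured. One common approach, sketched here as an assumption rather than the author's method, is to average several forward passes after a few warm-up runs. `benchmark` is a hypothetical helper; its optional `sync` argument exists because CUDA kernels run asynchronously, so on a GPU one would pass `torch.cuda.synchronize`.

```python
import time


def benchmark(fn, warmup=5, iters=20, sync=None):
    """Return the mean wall-clock seconds per call of fn().

    sync is an optional callable run before each clock reading, so that
    queued asynchronous work (e.g. GPU kernels) finishes before timing.
    """
    for _ in range(warmup):       # warm-up runs are not timed
        fn()
    if sync is not None:
        sync()
    start = time.perf_counter()
    for _ in range(iters):
        fn()
    if sync is not None:
        sync()
    return (time.perf_counter() - start) / iters


# Hypothetical usage with the models defined earlier (requires PyTorch;
# model and input must live on the same device):
#   with torch.no_grad():
#       t1 = benchmark(lambda: model_1(x))
#       t2 = benchmark(lambda: model_2(x))
#   print(f"separable: {t1 * 1e3:.1f} ms, standard: {t2 * 1e3:.1f} ms")
```

Fewer parameters and MACs do not guarantee a proportional speedup, so the measured times are worth reporting alongside the counts.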

2. Results

Pitfall to avoid: you cannot simply swap standard convolutions for depthwise separable ones; model performance becomes much worse.
