1. adreno_platform_driver

static struct adreno_device device_3d0;

static const struct of_device_id adreno_match_table[] = {

{ .compatible = "qcom,kgsl-3d0", .data = &device_3d0 },

{ },

};

static struct platform_driver adreno_platform_driver = {

// kgsl probe function [see Section 2]

.probe = adreno_probe,

.remove = adreno_remove,

// device_driver

.driver = {

.name = "kgsl-3d",

.pm = &adreno_pm_ops,

.of_match_table = of_match_ptr(adreno_match_table),

}

};
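
The match table above ends with an empty `{ }` entry, which acts as a sentinel for whoever walks the table. A minimal user-space sketch of that idea follows. `struct match_entry`, `find_match` and `device_3d0_stub` are hypothetical names made up for illustration, and the kernel's real OF matching is more involved than a plain `strcmp`.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical user-space stand-in for struct of_device_id. */
struct match_entry {
    const char *compatible;
    const void *data;
};

/* Placeholder for the driver's device_3d0 object. */
static int device_3d0_stub = 42;

/* The final all-zero entry is the sentinel that ends the scan. */
static const struct match_entry match_table[] = {
    { .compatible = "qcom,kgsl-3d0", .data = &device_3d0_stub },
    { 0 },
};

/* Return the entry whose compatible string equals compat, or NULL. */
static const struct match_entry *find_match(const struct match_entry *table,
                                            const char *compat)
{
    for (; table->compatible != NULL; table++) {
        if (strcmp(table->compatible, compat) == 0)
            return table;
    }
    return NULL;
}
```

Calling `find_match(match_table, "qcom,kgsl-3d0")` returns the first entry, whose `data` pointer plays the role of `&device_3d0`; any other string runs into the sentinel and yields `NULL`.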

2. adreno_probe

static int adreno_probe(struct platform_device *pdev)

{

struct component_match *match = NULL;

adreno_add_gmu_components(&pdev->dev, &match);

if (match)

return component_master_add_with_match(&pdev->dev,

&adreno_ops, match);

else

// Bind the adreno device [see Section 2.1]

return adreno_bind(&pdev->dev);

}

2.1 adreno_bind

static int adreno_bind(struct device *dev)

{

struct platform_device *pdev = to_platform_device(dev);

const struct adreno_gpu_core *gpucore;

int ret;

u32 chipid;

// Check whether the GPU is disabled

if (adreno_is_gpu_disabled(pdev)) {

dev_err(&pdev->dev, "adreno: GPU is disabled on this device\n");

return -ENODEV;

}

// Identify the GPU model [see Section 2.2]

gpucore = adreno_identify_gpu(pdev, &chipid);

if (IS_ERR(gpucore))

return PTR_ERR(gpucore);

// Call a6xx_gmu_device_probe, the probe callback defined in adreno_a6xx_gmu_gpudev [see Section 2.5]

ret = gpucore->gpudev->probe(pdev, chipid, gpucore);

if (!ret) {

struct kgsl_device *device = dev_get_drvdata(dev);

device->pdev_loaded = true;

}

return ret;

}

2.2 adreno_identify_gpu

static const struct adreno_gpu_core *

adreno_identify_gpu(struct platform_device *pdev, u32 *chipid)

{

const struct adreno_gpu_core *gpucore;

// Parse the qcom,chipid property from the dts file to get the GPU chip ID

if (adreno_get_chipid(pdev, chipid)) {

dev_crit(&pdev->dev, "Unable to get the GPU chip ID\n");

return ERR_PTR(-ENODEV);

}

// Look up the adreno_gpu_core that matches the chip ID [see Section 2.3]

gpucore = _get_gpu_core(pdev, *chipid);

if (!gpucore) {

dev_crit(&pdev->dev, "Unknown GPU chip ID %8.8x\n", *chipid);

return ERR_PTR(-ENODEV);

}

/*

* Identify no-longer supported targets and spins and print a helpful

* message

*/

if (gpucore->features & ADRENO_DEPRECATED) {

if (gpucore->compatible)

dev_err(&pdev->dev,

"Support for GPU %s has been deprecated\n",

gpucore->compatible);

else

dev_err(&pdev->dev,

"Support for GPU %x.%d.%x.%d has been deprecated\n",

gpucore->core, gpucore->major,

gpucore->minor, gpucore->patchid);

return ERR_PTR(-ENODEV);

}

// Return the matching adreno_gpu_core

return gpucore;

}
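
adreno_identify_gpu reports failure through the returned pointer itself (`ERR_PTR(-ENODEV)`), and adreno_bind decodes it with `IS_ERR` / `PTR_ERR`. The sketch below imitates that idiom in user space, assuming the usual convention that the top 4095 address values are reserved for error codes. `err_ptr`, `is_err`, `ptr_err`, `identify` and `known_core` are hypothetical names used only for illustration.

```c
#include <errno.h>
#include <stdint.h>

/* Assumption: the last 4095 address values never hold a valid object. */
#define MAX_ERRNO 4095

/* Encode a negative errno value as a pointer. */
static inline void *err_ptr(long error)
{
    return (void *)(intptr_t)error;
}

/* Decode the errno value from an error pointer. */
static inline long ptr_err(const void *ptr)
{
    return (long)(intptr_t)ptr;
}

/* True when the pointer lies in the reserved error range. */
static inline int is_err(const void *ptr)
{
    return (uintptr_t)ptr >= (uintptr_t)-MAX_ERRNO;
}

static int known_core = 640; /* stands in for a real adreno_gpu_core */

/* Hypothetical lookup: chip ID 0 is treated as "unknown GPU". */
static const int *identify(unsigned int chipid)
{
    if (chipid == 0)
        return err_ptr(-ENODEV);
    return &known_core;
}
```

A caller checks `is_err(p)` first and only dereferences `p` when the check fails, which mirrors the `if (IS_ERR(gpucore)) return PTR_ERR(gpucore);` sequence in adreno_bind.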

2.3 _get_gpu_core

static const struct adreno_gpu_core *

_get_gpu_core(struct platform_device *pdev, unsigned int chipid)

{

// GPU core (series) number

unsigned int core = ADRENO_CHIPID_CORE(chipid);

// GPU major revision

unsigned int major = ADRENO_CHIPID_MAJOR(chipid);

// GPU minor revision

unsigned int minor = ADRENO_CHIPID_MINOR(chipid);

// GPU patchid

unsigned int patchid = ADRENO_CHIPID_PATCH(chipid);

int i;

struct device_node *node;

/*

* When "qcom,gpu-models" is defined, use gpu model node to match

* on a compatible string, otherwise match using legacy way.

*/

node = adreno_get_gpu_model_node(pdev);

if (!node || !of_find_property(node, "compatible", NULL))

node = pdev->dev.of_node;

/* Check to see if any of the entries match on a compatible string */

for (i = 0; i < ARRAY_SIZE(adreno_gpulist); i++) {

if (adreno_gpulist[i]->compatible &&

of_device_is_compatible(node,

adreno_gpulist[i]->compatible))

return adreno_gpulist[i];

}

// Walk adreno_gpulist and pick the matching adreno_gpu_core, here adreno_gpu_core_a640 [see Section 2.4]

for (i = 0; i < ARRAY_SIZE(adreno_gpulist); i++) {

if (core == adreno_gpulist[i]->core &&

_rev_match(major, adreno_gpulist[i]->major) &&

_rev_match(minor, adreno_gpulist[i]->minor) &&

_rev_match(patchid, adreno_gpulist[i]->patchid))

return adreno_gpulist[i];

}

return NULL;

}
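
The legacy match path splits the chip ID into four fields and compares each one against the table entry, with `ANY_ID` acting as a wildcard. The sketch below shows one way this can work. It assumes the chip ID packs core, major, minor and patch ID as four bytes from most to least significant and that `ANY_ID` is all ones; the macro bodies, `struct core_rev`, `rev_match`, `chipid_matches` and the sample chip ID `0x06040001` are illustrative assumptions, not copies of the driver's definitions.

```c
#include <stdbool.h>
#include <stdint.h>

/* Assumed layout: one byte per field, core in the top byte. */
#define CHIPID_CORE(id)  (((id) >> 24) & 0xFF)
#define CHIPID_MAJOR(id) (((id) >> 16) & 0xFF)
#define CHIPID_MINOR(id) (((id) >> 8) & 0xFF)
#define CHIPID_PATCH(id) ((id) & 0xFF)

/* Assumed wildcard value: matches any revision field. */
#define ANY_ID (~0u)

struct core_rev {
    unsigned int core, major, minor, patchid;
};

/* A table field matches when it is the wildcard or equals the chip's field. */
static bool rev_match(unsigned int id, unsigned int entry)
{
    return entry == ANY_ID || entry == id;
}

/* The core number must match exactly; the other fields may be wildcards. */
static bool chipid_matches(uint32_t chipid, const struct core_rev *e)
{
    return CHIPID_CORE(chipid) == e->core &&
           rev_match(CHIPID_MAJOR(chipid), e->major) &&
           rev_match(CHIPID_MINOR(chipid), e->minor) &&
           rev_match(CHIPID_PATCH(chipid), e->patchid);
}

/* Same numbers as DEFINE_ADRENO_REV(ADRENO_REV_A640, 6, 4, 0, ANY_ID). */
static const struct core_rev a640_rev = { 6, 4, 0, ANY_ID };
```

Under these assumptions a chip ID of `0x06040001` decodes to 6.4.0.1 and matches the a640 entry regardless of its patch ID, while `0x06050001` is rejected because the major revision differs.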

2.4 adreno_gpu_core_a640

static const struct adreno_a6xx_core adreno_gpu_core_a640 = {

.base = {

// Revision

DEFINE_ADRENO_REV(ADRENO_REV_A640, 6, 4, 0, ANY_ID),

// Features

.features = ADRENO_RPMH | ADRENO_GPMU |

ADRENO_CONTENT_PROTECTION | ADRENO_IOCOHERENT |

ADRENO_IFPC | ADRENO_PREEMPTION,

// adreno_gpudev: adreno_a6xx_gmu_gpudev [see Section 2.5]

.gpudev = &adreno_a6xx_gmu_gpudev,

// Default performance counters

.perfcounters = &adreno_a6xx_legacy_perfcounters,

.gmem_size = SZ_1M, //Verified 1MB

.bus_width = 32,

.snapshot_size = 2 * SZ_1M,

},

.prim_fifo_threshold = 0x00200000,

.gmu_major = 2,

.gmu_minor = 0,

.sqefw_name = "a630_sqe.fw",

.gmufw_name = "a640_gmu.bin",

.zap_name = "a640_zap",

.hwcg = a640_hwcg_regs,

.hwcg_count = ARRAY_SIZE(a640_hwcg_regs),

.vbif = a640_vbif_regs,

.vbif_count = ARRAY_SIZE(a640_vbif_regs),

.hang_detect_cycles = 0xcfffff,

.protected_regs = a630_protected_regs,

.disable_tseskip = true,

.highest_bank_bit = 15,

};

2.5 adreno_a6xx_gmu_gpudev

const struct adreno_gpudev adreno_a6xx_gmu_gpudev = {

.reg_offsets = a6xx_register_offsets,

// GMU probe function [see Section 2.6]

.probe = a6xx_gmu_device_probe,

.start = a6xx_start,

.snapshot = a6xx_gmu_snapshot,

.init = a6xx_init,

.irq_handler = a6xx_irq_handler,

.rb_start = a6xx_rb_start,

.regulator_enable = a6xx_gmu_sptprac_enable,

.regulator_disable = a6xx_gmu_sptprac_disable,

.read_throttling_counters = a6xx_read_throttling_counters,

.microcode_read = a6xx_microcode_read,

.gpu_keepalive = a6xx_gpu_keepalive,

.hw_isidle = a6xx_hw_isidle,

.iommu_fault_block = a6xx_iommu_fault_block,

.reset = a6xx_gmu_restart,

.preemption_pre_ibsubmit = a6xx_preemption_pre_ibsubmit,

.preemption_post_ibsubmit = a6xx_preemption_post_ibsubmit,

.preemption_init = a6xx_preemption_init,

.preemption_schedule = a6xx_preemption_schedule,

.set_marker = a6xx_set_marker,

.preemption_context_init = a6xx_preemption_context_init,

.sptprac_is_on = a6xx_gmu_sptprac_is_on,

.ccu_invalidate = a6xx_ccu_invalidate,

#ifdef CONFIG_QCOM_KGSL_CORESIGHT

.coresight = {&a6xx_coresight, &a6xx_coresight_cx},

#endif

.read_alwayson = a6xx_read_alwayson,

.power_ops = &a6xx_gmu_power_ops,

};
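
The call `gpucore->gpudev->probe(pdev, chipid, gpucore)` in adreno_bind is plain function-pointer dispatch through this table. A stripped-down sketch of the pattern is shown here; `struct gpu_ops`, `struct gpu_core`, `gmu_probe`, `bind_core` and `last_probed_chipid` are hypothetical names, and the real structures carry many more callbacks.

```c
#include <stddef.h>

struct gpu_core;

/* Per-family callback table, reduced to a single callback. */
struct gpu_ops {
    int (*probe)(unsigned int chipid, const struct gpu_core *core);
};

/* Per-chip description that points at its family's callback table. */
struct gpu_core {
    const char *name;
    const struct gpu_ops *ops;
};

/* Records the last chip ID seen, so the dispatch is observable. */
static unsigned int last_probed_chipid;

static int gmu_probe(unsigned int chipid, const struct gpu_core *core)
{
    (void)core;
    last_probed_chipid = chipid;
    return 0;
}

static const struct gpu_ops gmu_ops = { .probe = gmu_probe };
static const struct gpu_core a640_core = { .name = "a640", .ops = &gmu_ops };

/* The generic code never names gmu_probe; it only follows the pointers. */
static int bind_core(const struct gpu_core *core, unsigned int chipid)
{
    if (core == NULL || core->ops == NULL || core->ops->probe == NULL)
        return -1;
    return core->ops->probe(chipid, core);
}
```

The generic bind code stays the same for every GPU family; selecting a different `gpu_core` entry is enough to route the call to a different probe routine.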

2.6 a6xx_gmu_device_probe

int a6xx_gmu_device_probe(struct platform_device *pdev,

u32 chipid, const struct adreno_gpu_core *gpucore)

{

struct adreno_device *adreno_dev;

struct kgsl_device *device;

struct a6xx_device *a6xx_dev;

int ret;

// Allocate the a6xx_device

a6xx_dev = devm_kzalloc(&pdev->dev, sizeof(*a6xx_dev),

GFP_KERNEL);

if (!a6xx_dev)

return -ENOMEM;

// Take the adreno_device member embedded in a6xx_device

adreno_dev = &a6xx_dev->adreno_dev;

// Common a6xx probe [see Section 3]

ret = a6xx_probe_common(pdev, adreno_dev, chipid, gpucore);

if (ret)

return ret;

// Take the kgsl_device member embedded in adreno_device

device = KGSL_DEVICE(adreno_dev);

INIT_WORK(&device->idle_check_ws, gmu_idle_check);

timer_setup(&device->idle_timer, gmu_idle_timer, 0);

adreno_dev->irq_mask = A6XX_INT_MASK;

return 0;

}
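
The probe function moves between three nested objects: a6xx_device embeds adreno_device, which in turn embeds kgsl_device. The sketch below illustrates how such nesting lets code convert between the layers with pointer arithmetic. The `_sketch` types, their extra fields and the `container_of_sketch` macro are simplified stand-ins written for this example; the real structure layouts are not shown in this article.

```c
#include <stddef.h>

/* Innermost layer: the generic device. */
struct kgsl_dev_sketch {
    const char *name;
};

/* Middle layer: embeds the generic device as a member. */
struct adreno_dev_sketch {
    struct kgsl_dev_sketch dev;
    unsigned int chipid;
};

/* Outermost layer: embeds the adreno device after a family-specific field. */
struct a6xx_dev_sketch {
    int gmu_state;
    struct adreno_dev_sketch adreno_dev;
};

/* Recover the enclosing structure from a pointer to one of its members. */
#define container_of_sketch(ptr, type, member) \
    ((type *)((char *)(ptr) - offsetof(type, member)))

/* Downward: outer to inner is just taking a member's address. */
static struct kgsl_dev_sketch *to_kgsl(struct adreno_dev_sketch *a)
{
    return &a->dev;
}

/* Upward: inner to outer subtracts the member offset. */
static struct adreno_dev_sketch *to_adreno(struct kgsl_dev_sketch *k)
{
    return container_of_sketch(k, struct adreno_dev_sketch, dev);
}

static struct a6xx_dev_sketch *to_a6xx(struct adreno_dev_sketch *a)
{
    return container_of_sketch(a, struct a6xx_dev_sketch, adreno_dev);
}
```

Because all three layers live in one allocation, the single `devm_kzalloc` in a6xx_gmu_device_probe is enough to provide the a6xx, adreno and kgsl views of the same device.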

3. a6xx_probe_common

int a6xx_probe_common(struct platform_device *pdev,

struct adreno_device *adreno_dev, u32 chipid,

const struct adreno_gpu_core *gpucore)

{

const struct adreno_gpudev *gpudev = gpucore->gpudev;

// Initialize the adreno_device

adreno_dev->gpucore = gpucore;

adreno_dev->chipid = chipid;

adreno_reg_offset_init(gpudev->reg_offsets);

adreno_dev->hwcg_enabled = true;

adreno_dev->preempt.preempt_level = 1;

adreno_dev->preempt.skipsaverestore = true;

adreno_dev->preempt.usesgmem = true;

/* Set the GPU busy counter for frequency scaling */

adreno_dev->perfctr_pwr_lo = A6XX_GMU_CX_GMU_POWER_COUNTER_XOCLK_0_L;

/* Set the counter for IFPC */

if (ADRENO_FEATURE(adreno_dev, ADRENO_IFPC))

adreno_dev->perfctr_ifpc_lo =

A6XX_GMU_CX_GMU_POWER_COUNTER_XOCLK_4_L;

// adreno_device probe [see Section 4]

return adreno_device_probe(pdev, adreno_dev);

}

4. adreno_device_probe

int adreno_device_probe(struct platform_device *pdev,

struct adreno_device *adreno_dev)

{

struct kgsl_device *device = KGSL_DEVICE(adreno_dev);

struct device *dev = &pdev->dev;

unsigned int priv = 0;

int status;

u32 size;

place_marker("M - DRIVER GPU Init");

/* Initialize the adreno device structure */

// Initialize the adreno_device [see Section 5]

adreno_setup_device(adreno_dev);

dev_set_drvdata(dev, device);

device->pdev = pdev;

adreno_update_soc_hw_revision_quirks(adreno_dev, pdev);

status = adreno_read_speed_bin(pdev);

if (status < 0)

return status;

device->speed_bin = status;

// Get the GPU register physical address and size, and the GPU frequencies [see Section 6]

status = adreno_of_get_power(adreno_dev, pdev);

if (status)

return status;

status = kgsl_bus_init(device, pdev);

if (status)

goto err;

/*

* Bind the GMU components (if applicable) before doing the KGSL

* platform probe

*/

if (of_find_matching_node(dev->of_node, adreno_gmu_match)) {

status = component_bind_all(dev, NULL);

if (status) {

kgsl_bus_close(device);

return status;

}

}

/*

* The SMMU APIs use unsigned long for virtual addresses which means

* that we cannot use 64 bit virtual addresses on a 32 bit kernel even

* though the hardware and the rest of the KGSL driver supports it.

*/

if (adreno_support_64bit(adreno_dev))

kgsl_mmu_set_feature(device, KGSL_MMU_64BIT);

/*

* Set the SMMU aperture on A6XX targets to use per-process pagetables.

*/

if (adreno_is_a6xx(adreno_dev))

kgsl_mmu_set_feature(device, KGSL_MMU_SMMU_APERTURE);

if (ADRENO_FEATURE(adreno_dev, ADRENO_IOCOHERENT))

kgsl_mmu_set_feature(device, KGSL_MMU_IO_COHERENT);

device->pwrctrl.bus_width = adreno_dev->gpucore->bus_width;

device->mmu.secured = (IS_ENABLED(CONFIG_QCOM_SECURE_BUFFER) &&

ADRENO_FEATURE(adreno_dev, ADRENO_CONTENT_PROTECTION));

/* Probe the LLCC - this could return -EPROBE_DEFER */

status = adreno_probe_llcc(adreno_dev, pdev);

if (status)

goto err;

/*

* If the GPU HTW slice was successful, set the MMU feature so the

* domain can set the appropriate attributes

*/

if (!IS_ERR_OR_NULL(adreno_dev->gpuhtw_llc_slice))

kgsl_mmu_set_feature(device, KGSL_MMU_LLCC_ENABLE);

// Generic KGSL platform probe [see Section 7]

status = kgsl_device_platform_probe(device);

if (status)

goto err;

/* Probe for the optional CX_DBGC block */

adreno_cx_dbgc_probe(device);

/* Probe for the optional CX_MISC block */

adreno_cx_misc_probe(device);

adreno_isense_probe(device);

/* Allocate the memstore for storing timestamps and other useful info */

if (ADRENO_FEATURE(adreno_dev, ADRENO_APRIV))

priv |= KGSL_MEMDESC_PRIVILEGED;

// Allocate the globally shared memory of the kgsl_device: see "Adreno source series: GPU memory allocation"

device->memstore = kgsl_allocate_global(device,

KGSL_MEMSTORE_SIZE, 0, 0, priv, "memstore");

status = PTR_ERR_OR_ZERO(device->memstore);

if (status) {

kgsl_device_platform_remove(device);

goto err;

}

/* Initialize the snapshot engine */

size = adreno_dev->gpucore->snapshot_size;

/*

* Use a default size if one wasn't specified, but print a warning so

* the developer knows to fix it

*/

if (WARN(!size, "The snapshot size was not specified in the gpucore\n"))

size = SZ_1M;

kgsl_device_snapshot_probe(device, size);

adreno_debugfs_init(adreno_dev);

adreno_profile_init(adreno_dev);

adreno_sysfs_init(adreno_dev);

kgsl_pwrscale_init(device, pdev, CONFIG_QCOM_ADRENO_DEFAULT_GOVERNOR);

/* Initialize coresight for the target */

adreno_coresight_init(adreno_dev);

#ifdef CONFIG_INPUT

if (!device->pwrctrl.input_disable) {

adreno_input_handler.private = device;

/*

* It isn't fatal if we cannot register the input handler. Sad,

* perhaps, but not fatal

*/

if (input_register_handler(&adreno_input_handler)) {

adreno_input_handler.private = NULL;

dev_err(device->dev,

"Unable to register the input handler\n");

}

}

#endif

place_marker("M - DRIVER GPU Ready");

return 0;

err:

device->pdev = NULL;

if (of_find_matching_node(dev->of_node, adreno_gmu_match))

component_unbind_all(dev, NULL);

kgsl_bus_close(device);

return status;

}
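
adreno_device_probe releases what it acquired by jumping to a cleanup label on failure. The sketch below shows a common variant of that pattern with one label per acquired resource, so each failure point undoes only the steps that already succeeded; the function above uses a single `err` label instead. All names (`bus_init`, `bind_components`, `probe_sketch`, the two flags) are hypothetical.

```c
/* Flags that make acquisition and release observable. */
static int bus_open;
static int components_bound;

static int bus_init(void)
{
    bus_open = 1;
    return 0;
}

static void bus_close(void)
{
    bus_open = 0;
}

static int bind_components(void)
{
    components_bound = 1;
    return 0;
}

static void unbind_components(void)
{
    components_bound = 0;
}

/* fail_late simulates an error after both resources were acquired. */
static int probe_sketch(int fail_late)
{
    int status;

    status = bus_init();
    if (status)
        return status;          /* nothing to undo yet */

    status = bind_components();
    if (status)
        goto err_bus;           /* only the bus needs closing */

    if (fail_late) {
        status = -1;
        goto err_unbind;        /* undo both, newest first */
    }

    return 0;

err_unbind:
    unbind_components();
err_bus:
    bus_close();
    return status;
}
```

The labels are ordered so that falling through them releases resources in the reverse order of acquisition.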

5. adreno_setup_device

static void adreno_setup_device(struct adreno_device *adreno_dev)

{

u32 i;

// Set the kgsl_device name

adreno_dev->dev.name = "kgsl-3d0";

// Set the kgsl_device function table [see Section 5.1]

adreno_dev->dev.ftbl = &adreno_functable;

init_completion(&adreno_dev->dev.hwaccess_gate);

init_completion(&adreno_dev->dev.halt_gate);

idr_init(&adreno_dev->dev.context_idr);

mutex_init(&adreno_dev->dev.mutex);

INIT_LIST_HEAD(&adreno_dev->dev.globals);

/* Set the fault tolerance policy to replay, skip, throttle */

adreno_dev->ft_policy = BIT(KGSL_FT_REPLAY) |

BIT(KGSL_FT_SKIPCMD) | BIT(KGSL_FT_THROTTLE);

/* Enable command timeouts by default */

adreno_dev->long_ib_detect = true;

INIT_WORK(&adreno_dev->input_work, adreno_input_work);

INIT_LIST_HEAD(&adreno_dev->active_list);

spin_lock_init(&adreno_dev->active_list_lock);

// Initialize each adreno_ringbuffer

for (i = 0; i < ARRAY_SIZE(adreno_dev->ringbuffers); i++) {

struct adreno_ringbuffer *rb = &adreno_dev->ringbuffers[i];

INIT_LIST_HEAD(&rb->events.group);

}

/*

* Some GPUs need the UCHE GMEM base address to be at least 0x100000

* and 1MB aligned. Configure UCHE GMEM base based on GMEM size

* and align it to 1MB. This needs to be done based on GMEM size

* because setting it to minimum value 0x100000 will result in RB

* and UCHE GMEM range overlap for GPUs with GMEM size >1MB.

*/

if (!adreno_is_a650_family(adreno_dev))

adreno_dev->uche_gmem_base =

ALIGN(adreno_dev->gpucore->gmem_size, SZ_1M);

}
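
The last statement rounds the GMEM size up to the next 1 MB boundary and uses the result as the UCHE GMEM base. A small sketch of that arithmetic follows, assuming the usual power-of-two round-up formula; `ALIGN_UP`, `SZ_1M_SKETCH` and `uche_gmem_base_for` are names invented for this example.

```c
#include <stdint.h>

#define SZ_1M_SKETCH 0x00100000u

/* Round x up to a multiple of a; a must be a power of two. */
#define ALIGN_UP(x, a) (((x) + ((a) - 1)) & ~((a) - 1))

/* UCHE GMEM base derived from the GMEM size, as in adreno_setup_device. */
static uint32_t uche_gmem_base_for(uint32_t gmem_size)
{
    return ALIGN_UP(gmem_size, SZ_1M_SKETCH);
}
```

With the a640 value from Section 2.4 (`gmem_size = SZ_1M`) the base comes out as 0x100000. A hypothetical 1.5 MB GMEM would give 0x200000, which keeps the UCHE range above the end of GMEM as the source comment requires.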

5.1 adreno_functable

static const struct kgsl_functable adreno_functable = {

/* Mandatory functions */

// Read a register

.regread = adreno_regread,

// Write a register

.regwrite = adreno_regwrite,

.idle = adreno_idle,

.suspend_context = adreno_suspend_context,

// Called when /dev/kgsl-3d0 is opened for the first time

.first_open = adreno_first_open,

// Start the adreno device

.start = adreno_start,

.stop = adreno_stop,

.last_close = adreno_last_close,

.getproperty = adreno_getproperty,

.getproperty_compat = adreno_getproperty_compat,

.waittimestamp = adreno_waittimestamp,

.readtimestamp = adreno_readtimestamp,

.queue_cmds = adreno_queue_cmds,

// adreno ioctl table

.ioctl = adreno_ioctl,

.compat_ioctl = adreno_compat_ioctl,

.power_stats = adreno_power_stats,

.gpuid = adreno_gpuid,

.snapshot = adreno_snapshot,

// adreno interrupt handler

.irq_handler = adreno_irq_handler,

.drain = adreno_drain,

.device_private_create = adreno_device_private_create,

.device_private_destroy = adreno_device_private_destroy,

/* Optional functions */

// Create an adreno_context

.drawctxt_create = adreno_drawctxt_create,

.drawctxt_detach = adreno_drawctxt_detach,

.drawctxt_destroy = adreno_drawctxt_destroy,

.drawctxt_dump = adreno_drawctxt_dump,

.setproperty = adreno_setproperty,

.setproperty_compat = adreno_setproperty_compat,

.drawctxt_sched = adreno_drawctxt_sched,

.resume = adreno_dispatcher_start,

.regulator_enable = adreno_regulator_enable,

.prepare_for_power_off = adreno_prepare_for_power_off,

.regulator_disable = adreno_regulator_disable,

.pwrlevel_change_settings = adreno_pwrlevel_change_settings,

.regulator_disable_poll = adreno_regulator_disable_poll,

.clk_set_options = adreno_clk_set_options,

.gpu_model = adreno_gpu_model,

.query_property_list = adreno_query_property_list,

.is_hwcg_on = adreno_is_hwcg_on,

.gpu_clock_set = adreno_gpu_clock_set,

.gpu_bus_set = adreno_gpu_bus_set,

.deassert_gbif_halt = adreno_deassert_gbif_halt,

};

6. adreno_of_get_power

static int adreno_of_get_power(struct adreno_device *adreno_dev,

struct platform_device *pdev)

{

struct kgsl_device *device = KGSL_DEVICE(adreno_dev);

struct resource *res;

int ret;

/* Get starting physical address of device registers */

// Get the start physical address and size of the GPU's kgsl_3d0_reg_memory register region: 0x2c00000 and 256 KB (0x40000) as configured in the dts

res = platform_get_resource_byname(device->pdev, IORESOURCE_MEM,

"kgsl_3d0_reg_memory");

if (res == NULL) {

dev_err(device->dev,

"platform_get_resource_byname failed\n");

return -EINVAL;

}

if (res->start == 0 || resource_size(res) == 0) {

dev_err(device->dev, "dev %d invalid register region\n",

device->id);

return -EINVAL;

}

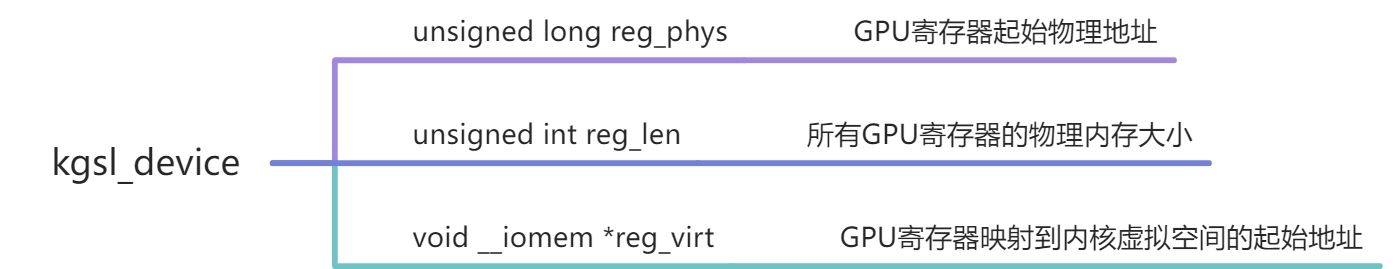

// Store the start physical address and size of the kgsl_3d0_reg_memory region in the kgsl_device

device->reg_phys = res->start;

device->reg_len = resource_size(res);

// Parse the qcom,gpu-pwrlevels node in the dts to get the GPU frequency configuration

ret = adreno_of_get_pwrlevels(adreno_dev, pdev->dev.of_node);

if (ret)

return ret;

l3_pwrlevel_probe(device, pdev->dev.of_node);

device->pwrctrl.interval_timeout = CONFIG_QCOM_KGSL_IDLE_TIMEOUT;

device->pwrctrl.minbw_timeout = 10;

/* Set default bus control to true on all targets */

device->pwrctrl.bus_control = true;

device->pwrctrl.input_disable =

of_property_read_bool(pdev->dev.of_node,

"qcom,disable-wake-on-touch");

return 0;

}
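
The register region is described by an inclusive start and end address, so its length is `end - start + 1`. The sketch below checks the numbers quoted above (start 0x2c00000, size 0x40000); `struct res_sketch`, `res_size` and `check_region` are hypothetical user-space stand-ins for `struct resource`, `resource_size` and the validity test in the function.

```c
#include <stdint.h>

/* Inclusive address range, like the start/end pair of a kernel resource. */
struct res_sketch {
    uint64_t start;
    uint64_t end;
};

/* Length of an inclusive range. */
static uint64_t res_size(const struct res_sketch *r)
{
    return r->end - r->start + 1;
}

/* Mirrors the check in adreno_of_get_power: reject a zero start or size. */
static int check_region(const struct res_sketch *r)
{
    if (r->start == 0 || res_size(r) == 0)
        return -1;
    return 0;
}

/* The region from the dts values quoted above: 0x2c00000, 256 KB. */
static const struct res_sketch kgsl_regs = {
    .start = 0x2c00000,
    .end = 0x2c00000 + 0x40000 - 1,
};
```

For this region `res_size` yields 0x40000, which is 256 * 1024 bytes, and the last valid register byte sits at 0x2c3ffff.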

7. kgsl_device_platform_probe

int kgsl_device_platform_probe(struct kgsl_device *device)

{

struct platform_device *pdev = device->pdev;

int status = -EINVAL;

// Create the struct device that corresponds to the kgsl_device [see Section 7.1]

status = _register_device(device);

if (status)

return status;

/* Can return -EPROBE_DEFER */

// Initialize kgsl_pwrctrl: see "Adreno source series: kgsl_pwrctrl"

status = kgsl_pwrctrl_init(device);

if (status)

goto error;

// Request access to the GPU register memory region

if (!devm_request_mem_region(&pdev->dev, device->reg_phys,

device->reg_len, device->name)) {

dev_err(device->dev, "request_mem_region failed\n");

status = -ENODEV;

goto error_pwrctrl_close;

}

// Map the GPU register physical memory into the kernel virtual address space and keep the returned start address

device->reg_virt = devm_ioremap(&pdev->dev, device->reg_phys,

device->reg_len);

if (device->reg_virt == NULL) {

dev_err(device->dev, "ioremap failed\n");

status = -ENODEV;

goto error_pwrctrl_close;

}

// Register the kgsl interrupt handler kgsl_irq_handler: see "Adreno source series: kgsl_irq_handler"

status = kgsl_request_irq(pdev, "kgsl_3d0_irq",

kgsl_irq_handler, device);

if (status < 0)

goto error_pwrctrl_close;

device->pwrctrl.interrupt_num = status;

disable_irq(device->pwrctrl.interrupt_num);

rwlock_init(&device->context_lock);

spin_lock_init(&device->submit_lock);

idr_init(&device->timelines);

spin_lock_init(&device->timelines_lock);

#if defined(OPLUS_FEATURE_SCHED_ASSIST) && defined(CONFIG_OPLUS_FEATURE_SCHED_ASSIST)

if (sysctl_sched_assist_enabled)

device->events_wq = alloc_workqueue("kgsl-events",

WQ_UNBOUND | WQ_MEM_RECLAIM | WQ_SYSFS | WQ_HIGHPRI | WQ_UX, 0);

else

device->events_wq = alloc_workqueue("kgsl-events",

WQ_UNBOUND | WQ_MEM_RECLAIM | WQ_SYSFS | WQ_HIGHPRI, 0);

#else

// Allocate a workqueue named kgsl-events

device->events_wq = alloc_workqueue("kgsl-events",

WQ_UNBOUND | WQ_MEM_RECLAIM | WQ_SYSFS | WQ_HIGHPRI, 0);

#endif

if (!device->events_wq) {

dev_err(device->dev, "Failed to allocate events workqueue\n");

status = -ENOMEM;

goto error_pwrctrl_close;

}

/* This can return -EPROBE_DEFER */

// kgsl_mmu: see "Adreno source series: kgsl_mmu"

status = kgsl_mmu_probe(device);

if (status != 0)

goto error_pwrctrl_close;

// Create and initialize /sys/kernel/debug/kgsl/kgsl-3d0

kgsl_device_debugfs_init(device);

dma_set_coherent_mask(&pdev->dev, KGSL_DMA_BIT_MASK);

/* Set up the GPU events for the device */

kgsl_device_events_probe(device);

/* Initialize common sysfs entries */

kgsl_pwrctrl_init_sysfs(device);

return 0;

error_pwrctrl_close:

if (device->events_wq) {

destroy_workqueue(device->events_wq);

device->events_wq = NULL;

}

kgsl_pwrctrl_close(device);

error:

_unregister_device(device);

return status;

}

7.1 _register_device

static int _register_device(struct kgsl_device *device)

{

static u64 dma_mask = DMA_BIT_MASK(64);

static struct device_dma_parameters dma_parms;

int minor, ret;

dev_t dev;

/* Find a minor for the device */

mutex_lock(&kgsl_driver.devlock);

for (minor = 0; minor < ARRAY_SIZE(kgsl_driver.devp); minor++) {

if (kgsl_driver.devp[minor] == NULL) {

kgsl_driver.devp[minor] = device;

break;

}

}

mutex_unlock(&kgsl_driver.devlock);

if (minor == ARRAY_SIZE(kgsl_driver.devp)) {

pr_err("kgsl: minor devices exhausted\n");

return -ENODEV;

}

/* Create the device */

dev = MKDEV(MAJOR(kgsl_driver.major), minor);

// Create the device

device->dev = device_create(kgsl_driver.class,

&device->pdev->dev,

dev, device,

device->name);

if (IS_ERR(device->dev)) {

mutex_lock(&kgsl_driver.devlock);

kgsl_driver.devp[minor] = NULL;

mutex_unlock(&kgsl_driver.devlock);

ret = PTR_ERR(device->dev);

pr_err("kgsl: device_create(%s): %d\n", device->name, ret);

return ret;

}

device->dev->dma_mask = &dma_mask;

device->dev->dma_parms = &dma_parms;

dma_set_max_seg_size(device->dev, DMA_BIT_MASK(32));

// Set the device's dma_map_ops to NULL

set_dma_ops(device->dev, NULL);

// Initialize /sys/kernel/gpu

if (kobject_init_and_add(&device->gpu_sysfs_kobj, &kgsl_gpu_sysfs_ktype,

kernel_kobj, "gpu"))

dev_err(device->dev, "Unable to add sysfs for gpu\n");

return 0;

}
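
_register_device claims the first free slot in `kgsl_driver.devp` and uses its index as the minor number passed to `MKDEV`. A user-space sketch of the slot search is shown below. The two-entry table, `claim_minor`, `release_minor` and `MKDEV_SKETCH` are assumptions made for illustration (the macro assumes a 20-bit minor field), and the mutex that guards the real table is left out.

```c
#include <stddef.h>

/* Assumption: the minor number occupies the low 20 bits of a dev_t. */
#define MINORBITS_SKETCH 20
#define MKDEV_SKETCH(ma, mi) \
    (((unsigned int)(ma) << MINORBITS_SKETCH) | (unsigned int)(mi))

/* Hypothetical slot table; the real array size is not shown in this article. */
static void *devp[2];

/* Claim the first free slot and return its index, or -1 when full. */
static int claim_minor(void *device)
{
    size_t i;

    for (i = 0; i < sizeof(devp) / sizeof(devp[0]); i++) {
        if (devp[i] == NULL) {
            devp[i] = device;
            return (int)i;
        }
    }
    return -1;
}

/* Give a slot back, as the device_create() failure path does. */
static void release_minor(int minor)
{
    devp[minor] = NULL;
}
```

The returned index doubles as the minor number, which is why the failure path after `device_create` only has to clear `devp[minor]` to make the slot reusable.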
