Windows 10 update failure: reconfiguring the system

Windows 10 system update failure

Trying to repair the computer after a failed update

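I was speechless. After Windows updated itself automatically, the computer crashed with a blue screen. A day later it recovered on its own, and then it started showing a black screen. The CPU was stuck at 100%. Drive C had only about 10 GB free before the update and just 4 GB after it, which drove me up the wall. I eventually contacted a Lenovo engineer, but the steps we tried did not help, so in the end I had no choice but to reset the system to the state it was in when I bought it.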

The main steps were as follows:

1. Power on, quickly press F11 three to five times to enter safe mode, uninstall the newly installed update, and restart.

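2. Turn off Microsoft's "News and interests" feature. (The engineer said that after the update it may try to reach this news feed on overseas servers; when the connection fails it keeps retrying, which drives the CPU to 100% until the machine freezes.)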

That still did not solve the problem, and since drive C was running out of space anyway, I decided to reset the system.

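1. Back up your data. The computer kept freezing, so at first I could not back anything up. The engineer told me about a third-party tool, WePE (微PE), that can back up data, but it would cost money. Then I remembered that the computer had worked normally in safe mode the day before, so I booted into safe mode and copied my data to an external hard drive.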

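2. Power on, quickly press F11 three to five times, and then choose Troubleshoot => Reset this PC => Remove everything => Reset. (The engineer said "Remove everything" wipes the system more thoroughly, whereas "Keep my files" might not fix my problem.) The reset took about an hour to complete.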

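The reset completed, and fortunately nothing on drive D was lost (data really is what matters most!). Since I had downloaded and installed all my software on drive D, a little reconfiguration was all it took to get the computer working again.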

JDK configuration

Configure environment variables

Since the JDK was already installed, I only needed to locate its installation directory.

Java installation location: D:\Software\Java\jdk1.8.0_291

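Set three variables: JAVA_HOME, PATH, and CLASSPATH (names are not case-sensitive). If a variable already exists, click "Edit" and separate the new value from the existing ones with a semicolon; if it does not, click "New".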

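- Variable name: JAVA_HOME; variable value: C:\Program Files\Java\jdk1.8.0_111 (example value; point it at the directory where your JDK is actually installed)
- Variable name: Path; variable value: %JAVA_HOME%\bin;%JAVA_HOME%\jre\bin;
- Variable name: CLASSPATH; variable value: .;%JAVA_HOME%\lib\dt.jar;%JAVA_HOME%\lib\tools.jar; (note the leading dot ".")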
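The "Edit, then separate with a semicolon" rule above is easy to get wrong by hand. Here is a minimal Python sketch that only computes the new Path string. `append_path_entries` is a hypothetical helper. It does not write to the registry or change the real environment. It keeps the existing entries, drops empty segments such as the one left by a trailing `;`, and skips duplicates. Duplicates are compared case-insensitively, as Windows paths are.

```python
def append_path_entries(current: str, new_entries: list[str]) -> str:
    """Return a semicolon-separated Path string with new_entries appended.

    Hypothetical helper: it only builds the string and does not modify the
    real environment. Empty segments (e.g. from a trailing ';') are dropped,
    and entries are compared case-insensitively, like Windows paths.
    """
    result: list[str] = []
    seen: set[str] = set()
    for entry in current.split(";") + new_entries:
        entry = entry.strip()
        key = entry.lower()
        if entry and key not in seen:
            seen.add(key)
            result.append(entry)
    return ";".join(result)


if __name__ == "__main__":
    old = r"C:\Windows\system32;C:\Windows;"
    # Appends the two JDK entries from the list above after the existing ones
    print(append_path_entries(old, [r"%JAVA_HOME%\bin", r"%JAVA_HOME%\jre\bin"]))
```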

Verification

A quick test shows it works:

C:\Users\Lenovo-T15>java -version

java version "1.8.0_291"

Java(TM) SE Runtime Environment (build 1.8.0_291-b10)

Java HotSpot(TM) 64-Bit Server VM (build 25.291-b10, mixed mode)
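You can also do this check from a script instead of reading the output by eye. Note that `java -version` prints to stderr, not stdout. Below is a minimal Python sketch that assumes the output format shown above. `parse_java_version` and `installed_java_version` are hypothetical helpers. The second one raises `FileNotFoundError` if `java` is not on Path.

```python
import re
import subprocess
from typing import Optional


def parse_java_version(output: str) -> Optional[str]:
    """Extract the quoted version string, e.g. '1.8.0_291', from `java -version` output."""
    match = re.search(r'version "([^"]+)"', output)
    return match.group(1) if match else None


def installed_java_version() -> Optional[str]:
    """Run `java -version` (located via Path) and parse what it prints.

    `java -version` writes to stderr, so stderr is included in the text parsed.
    """
    proc = subprocess.run(["java", "-version"], capture_output=True, text=True)
    return parse_java_version(proc.stderr + proc.stdout)
```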

For a complete guide to installing and configuring the JDK, see: https://blog.csdn.net/Feilinzero/article/details/103836822

scala

Configure environment variables

- Variable name: SCALA_HOME; variable value: D:\Software\scala-2.11.8\scala-2.11.8
- Variable name: Path; variable value: %SCALA_HOME%\bin
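A Path entry such as `%SCALA_HOME%\bin` is expanded by Windows when Path is read. That is why pointing SCALA_HOME at a different folder is enough to switch versions. The Python sketch below imitates that substitution against an explicit dictionary, for illustration only. `expand_percent_vars` is a hypothetical helper. It matches names case-insensitively and leaves unknown `%NAME%` references unchanged.

```python
import re


def expand_percent_vars(value: str, env: dict[str, str]) -> str:
    """Replace %NAME% references in value using env.

    Names are matched case-insensitively; references to variables that are
    not in env are left unchanged.
    """
    lookup = {name.upper(): val for name, val in env.items()}

    def substitute(match: re.Match) -> str:
        name = match.group(1)
        return lookup.get(name.upper(), match.group(0))

    # A reference is '%', one or more characters other than '%' or ';', then '%'
    return re.sub(r"%([^%;]+)%", substitute, value)
```

For example, `expand_percent_vars(r"%SCALA_HOME%\bin", {"SCALA_HOME": r"D:\Software\scala-2.11.8\scala-2.11.8"})` returns `D:\Software\scala-2.11.8\scala-2.11.8\bin`.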

Verification

C:\Users\Lenovo-T15>scala -version

Scala code runner version 2.11.8 -- Copyright 2002-2016, LAMP/EPFL

hadoop

Hadoop depends on the JDK, so download, install, and configure the JDK first.

Running Hadoop on Windows requires winutils.exe, i.e. the Windows-specific binaries and utility libraries.

After downloading winutils.exe, put it in Hadoop's bin directory.
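One way to confirm that the file landed in the right place is to look for it under `%HADOOP_HOME%\bin`. Below is a minimal Python sketch using a hypothetical helper, `winutils_path`. It only checks that the file exists. It does not check that the winutils build matches your Hadoop version.

```python
import os
from pathlib import Path
from typing import Optional


def winutils_path(hadoop_home: Optional[str] = None) -> Optional[Path]:
    """Return <hadoop_home>/bin/winutils.exe if that file exists, else None.

    When hadoop_home is not given, the HADOOP_HOME environment variable is used.
    """
    home = hadoop_home or os.environ.get("HADOOP_HOME")
    if not home:
        return None
    candidate = Path(home) / "bin" / "winutils.exe"
    return candidate if candidate.is_file() else None
```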

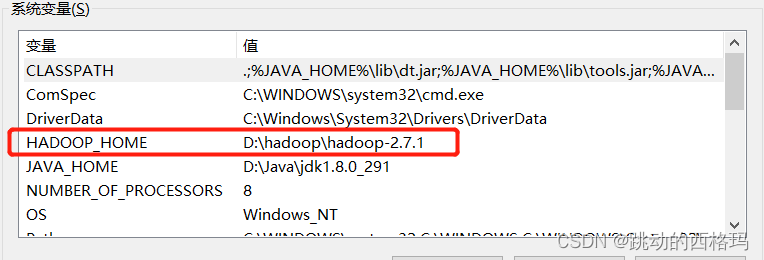

Configure environment variables

Add a system environment variable HADOOP_HOME that points to the Hadoop installation root.

- Variable name: HADOOP_HOME; variable value: D:\hadoop\hadoop-2.7
- Variable name: Path; variable value: %HADOOP_HOME%\bin;%HADOOP_HOME%\sbin;

Verification

C:\Users\Lenovo-T15>hadoop version

Hadoop 2.7.1

Subversion https://git-wip-us.apache.org/repos/asf/hadoop.git -r 15ecc87ccf4a0228f35af08fc56de536e6ce657a

Compiled by jenkins on 2015-06-29T06:04Z

Compiled with protoc 2.5.0

From source with checksum fc0a1a23fc1868e4d5ee7fa2b28a58a

This command was run using /D:/hadoop/hadoop-2.7.1/share/hadoop/common/hadoop-common-2.7.1.jar

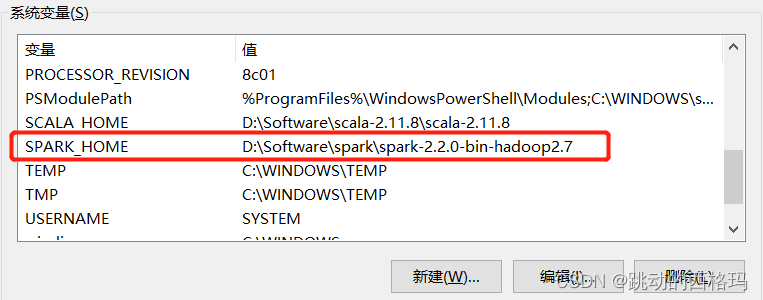

spark

Configure environment variables

- Variable name: SPARK_HOME; variable value: D:\Software\spark\spark-2.2.0-bin-hadoop2.7
- Variable name: Path; variable value: %SPARK_HOME%\bin;%SPARK_HOME%\sbin;
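The Spark package name records which Hadoop line it was built for. `spark-2.2.0-bin-hadoop2.7` is Spark 2.2.0 prebuilt for Hadoop 2.7, which matches the Hadoop 2.7.1 reported by `hadoop version` above. The Python sketch below makes that comparison explicit. It assumes the usual `spark-<version>-bin-hadoop<major.minor>` naming, and both helpers are hypothetical. A matching major.minor line is only a rough heuristic, not a guarantee of compatibility.

```python
import re
from typing import Optional, Tuple


def parse_spark_dist(name: str) -> Optional[Tuple[str, str]]:
    """Split a package name like 'spark-2.2.0-bin-hadoop2.7' into
    (spark_version, hadoop_line), e.g. ('2.2.0', '2.7'); None if it does not match."""
    match = re.fullmatch(r"spark-(\d+\.\d+\.\d+)-bin-hadoop(\d+\.\d+)", name)
    return (match.group(1), match.group(2)) if match else None


def hadoop_line_matches(spark_dist: str, hadoop_version: str) -> bool:
    """True if the installed Hadoop version (e.g. '2.7.1') has the same
    major.minor as the Hadoop line the Spark package was built for."""
    parsed = parse_spark_dist(spark_dist)
    if parsed is None:
        return False
    _, hadoop_line = parsed
    return hadoop_version.split(".")[:2] == hadoop_line.split(".")
```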

Verification

C:\Users\Lenovo-T15>spark-shell

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

23/06/19 15:14:40 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

23/06/19 15:14:43 WARN General: Plugin (Bundle) "org.datanucleus.api.jdo" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/D:/Software/spark/spark-2.2.0-bin-hadoop2.7/bin/../jars/datanucleus-api-jdo-3.2.6.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/D:/Software/spark/spark-2.2.0-bin-hadoop2.7/jars/datanucleus-api-jdo-3.2.6.jar."

23/06/19 15:14:43 WARN General: Plugin (Bundle) "org.datanucleus.store.rdbms" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/D:/Software/spark/spark-2.2.0-bin-hadoop2.7/jars/datanucleus-rdbms-3.2.9.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/D:/Software/spark/spark-2.2.0-bin-hadoop2.7/bin/../jars/datanucleus-rdbms-3.2.9.jar."

23/06/19 15:14:43 WARN General: Plugin (Bundle) "org.datanucleus" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/D:/Software/spark/spark-2.2.0-bin-hadoop2.7/bin/../jars/datanucleus-core-3.2.10.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/D:/Software/spark/spark-2.2.0-bin-hadoop2.7/jars/datanucleus-core-3.2.10.jar."

23/06/19 15:14:46 WARN ObjectStore: Version information not found in metastore. hive.metastore.schema.verification is not enabled so recording the schema version 1.2.0

23/06/19 15:14:46 WARN ObjectStore: Failed to get database default, returning NoSuchObjectException

23/06/19 15:14:47 WARN ObjectStore: Failed to get database global_temp, returning NoSuchObjectException

Spark context Web UI available at http://10.237.124.64:4040

Spark context available as 'sc' (master = local[*], app id = local-1687158881952).

Spark session available as 'spark'.

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/___/ .__/\_,_/_/ /_/\_\ version 2.2.0

/_/

Using Scala version 2.11.8 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_291)

Type in expressions to have them evaluated.

Type :help for more information.

Reference for installing Scala, Hadoop, and Spark: https://xuzheng.blog.csdn.net/article/details/99400732

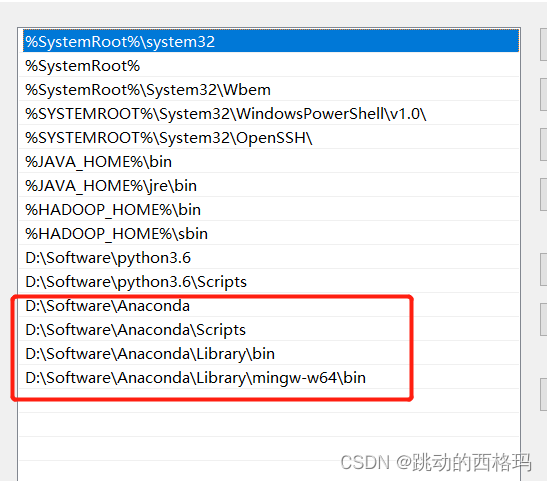

Anaconda

Configure environment variables

- Variable name: Path; add each of the following as a separate value:
  - D:\Software\Anaconda
  - D:\Software\Anaconda\Scripts
  - D:\Software\Anaconda\Library\bin
  - D:\Software\Anaconda\Library\mingw-w64\bin
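All four entries sit under the Anaconda install root. If Anaconda is installed somewhere else, only the root changes. Below is a minimal Python sketch that derives the entries from the root. `anaconda_path_entries` is a hypothetical helper. It uses `ntpath` so the result has Windows separators even when run on another OS.

```python
import ntpath


def anaconda_path_entries(root: str) -> list[str]:
    """Return the Anaconda directories listed above, built from the install root."""
    subdirs = [
        "",  # the root itself
        "Scripts",
        ntpath.join("Library", "bin"),
        ntpath.join("Library", "mingw-w64", "bin"),
    ]
    # Avoid ntpath.join(root, ""), which would add a trailing backslash
    return [ntpath.join(root, sub) if sub else root for sub in subdirs]
```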

Verification

C:\Users\Lenovo-T15>conda --version

conda 22.9.0

For a complete installation guide, see: https://blog.csdn.net/Python_Smily/article/details/105993200

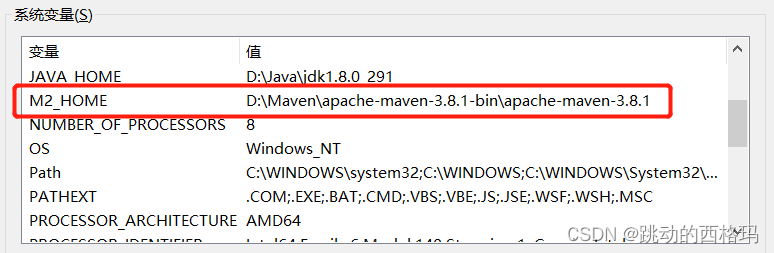

maven

Configure environment variables

- Variable name: M2_HOME; variable value: D:\Maven\apache-maven-3.8.1-bin\apache-maven-3.8.1
- Variable name: Path; variable value: %M2_HOME%\bin

Verification

C:\Users\Lenovo-T15>mvn --version

Apache Maven 3.8.1 (05c21c65bdfed0f71a2f2ada8b84da59348c4c5d)

Maven home: D:\Maven\apache-maven-3.8.1-bin\apache-maven-3.8.1\bin\..

Java version: 1.8.0_291, vendor: Oracle Corporation, runtime: D:\Java\jdk1.8.0_291\jre

Default locale: zh_CN, platform encoding: GBK

OS name: "windows 10", version: "10.0", arch: "amd64", family: "windows"
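The `Java version:` line shows which JDK Maven actually picked up. Here it reports the runtime `D:\Java\jdk1.8.0_291\jre`, while the JDK section gives the install location as `D:\Software\Java\jdk1.8.0_291`, so the two are worth comparing. Below is a minimal Python sketch that assumes the output format shown above. Both function names are hypothetical.

```python
import re
from typing import Optional


def maven_java_runtime(output: str) -> Optional[str]:
    """Return the runtime directory from the 'Java version:' line of `mvn --version` output."""
    match = re.search(r"^Java version: .*?runtime: (.+)$", output, re.MULTILINE)
    # strip() also removes a trailing carriage return from Windows line endings
    return match.group(1).strip() if match else None


def runtime_matches_java_home(output: str, java_home: str) -> bool:
    """True if the runtime Maven reports is java_home itself or a folder inside it.

    Paths are compared case-insensitively, as Windows paths are.
    """
    runtime = maven_java_runtime(output)
    if runtime is None:
        return False
    home = java_home.rstrip("\\/").lower()
    rt = runtime.rstrip("\\/").lower()
    return rt == home or rt.startswith(home + "\\") or rt.startswith(home + "/")
```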

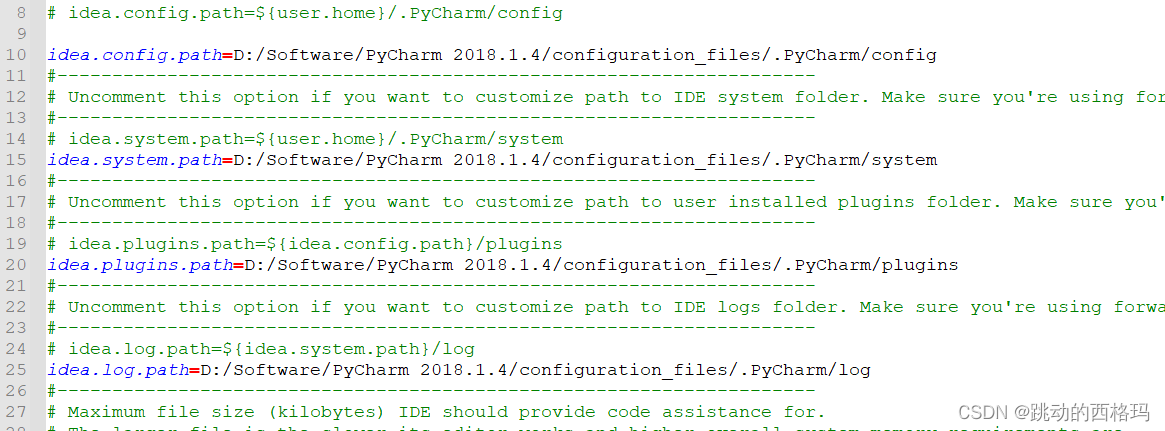

PyCharm and IDEA

Because PyCharm's idea.properties configuration file keeps its data on drive D, none of its settings were lost. IDEA works the same way.

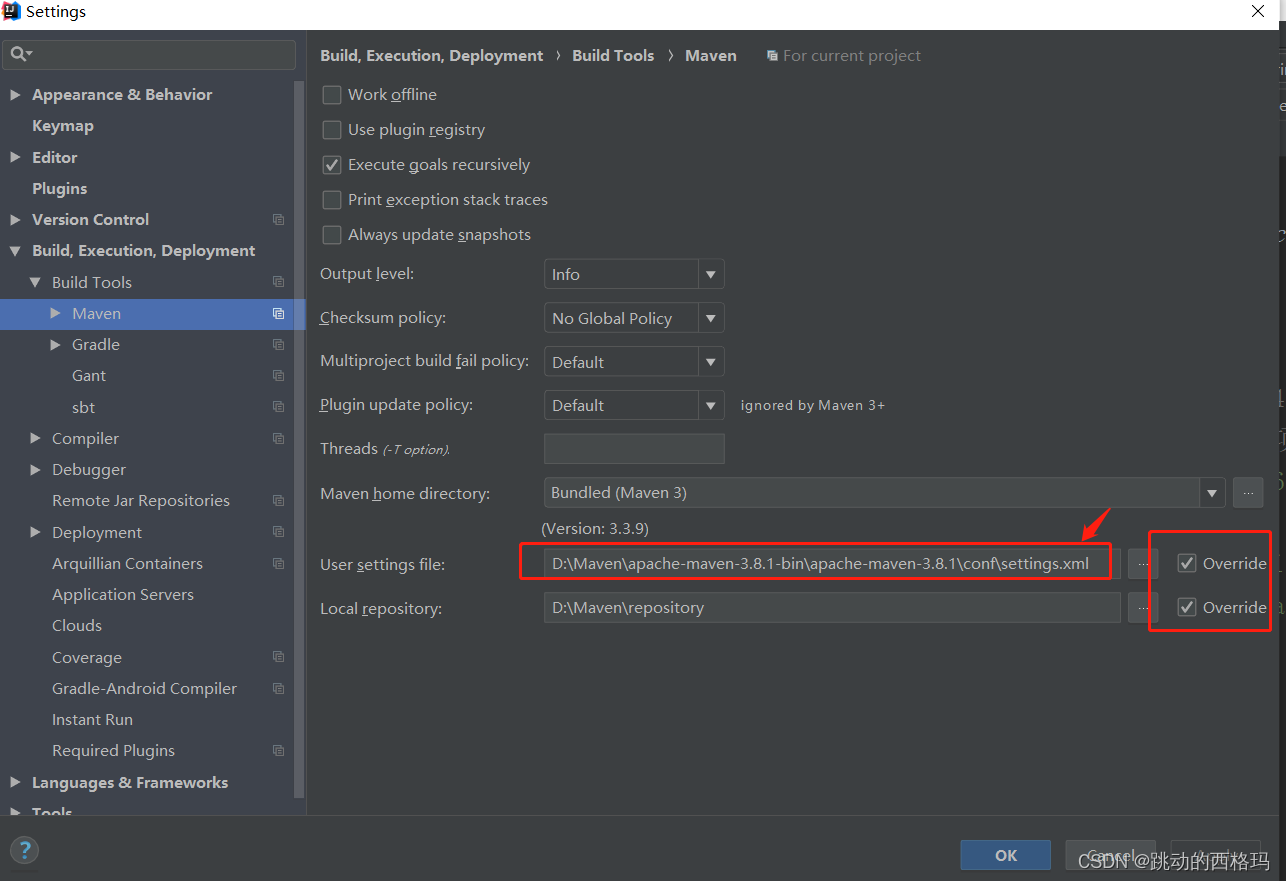

In IDEA, Maven is configured to use a local repository on drive D.

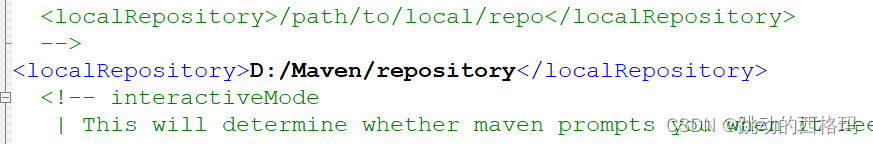

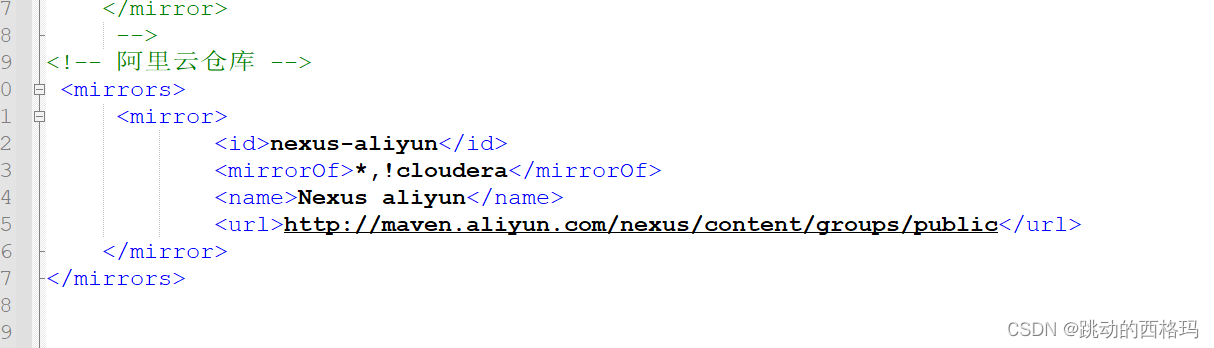

settings.xml configuration

The computer is usable again, and I should not have to worry about space on drive C for a while!
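In settings.xml, Maven's local repository location is set with the `<localRepository>` element. An example is `<localRepository>D:\Maven\repository</localRepository>`, where the path is hypothetical; use the folder you chose on drive D. The Python sketch below reads that value back, so you can confirm which folder Maven will use. `local_repository` is a hypothetical helper that uses only `xml.etree.ElementTree`. Comments are skipped, so the commented-out default in a stock settings.xml yields `None`.

```python
import xml.etree.ElementTree as ET
from typing import Optional


def local_repository(settings_xml: str) -> Optional[str]:
    """Return the <localRepository> text from a Maven settings.xml string, or None.

    The element is matched by local name, so this works with or without the
    http://maven.apache.org/SETTINGS/1.0.0 default namespace.
    """
    root = ET.fromstring(settings_xml)  # the default parser drops XML comments
    for element in root.iter():
        # Namespaced tags look like '{uri}localRepository'; keep only the local name
        if element.tag.rsplit("}", 1)[-1] == "localRepository":
            return (element.text or "").strip() or None
    return None
```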
