Implementing an RNN in PyTorch

Preface:

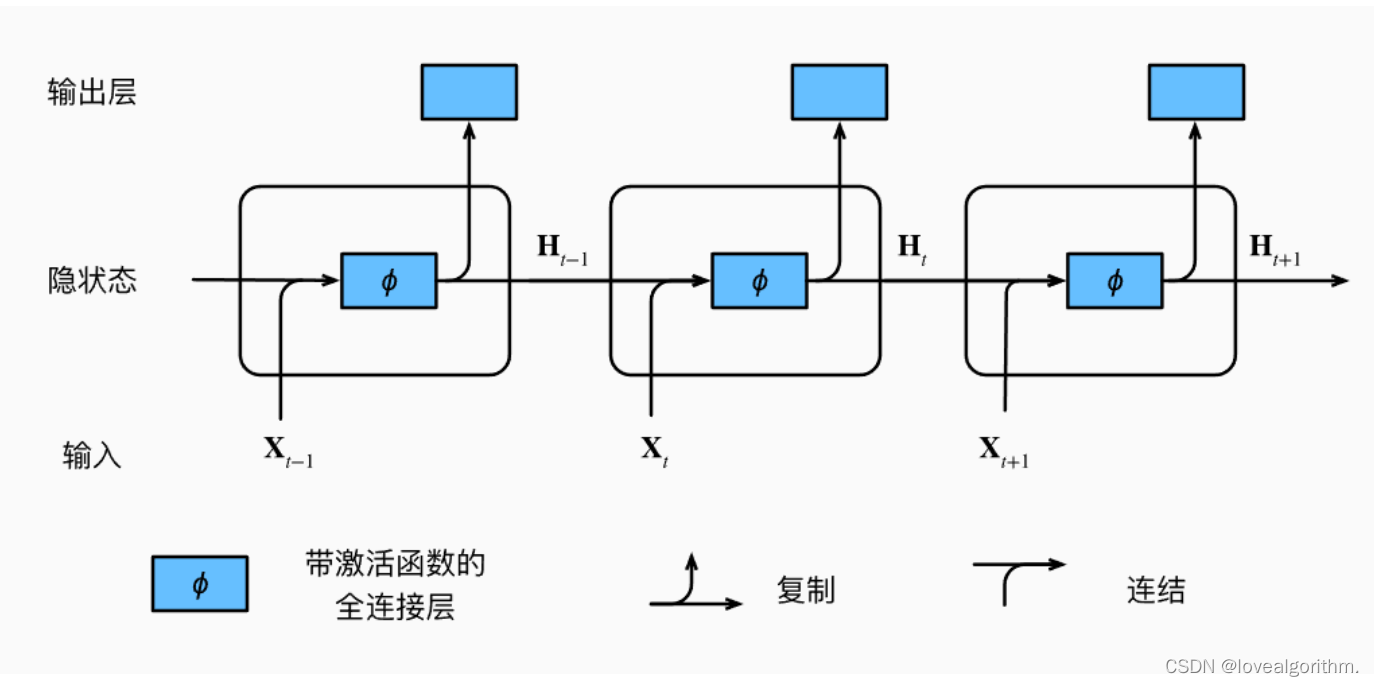

Recurrent neural network:

The output does not depend on the current input alone; it is also influenced by the previous state.

$$h_t = \tanh(W_{hh} h_{t-1} + W_{xh} x_t)$$

$$y_t = W_{hy} h_t$$
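To make the recurrence concrete, here is a minimal sketch that unrolls it by hand and compares the result with `nn.RNN`. It assumes `bias=False` so that the layer matches the bias-free formula, and it only covers the hidden-state update; the output projection with W_{hy} is not part of `nn.RNN` and would be a separate linear layer. The sizes are arbitrary illustration values.

```python
import torch
from torch import nn

torch.manual_seed(0)

input_size, hidden_size, seq_len = 3, 4, 5

# bias=False so the layer matches the bias-free formula (an assumption of this sketch).
rnn = nn.RNN(input_size, hidden_size, bias=False)

x = torch.randn(seq_len, 1, input_size)   # [seq len, batch, features]
h = torch.zeros(1, hidden_size)           # h_0 for a batch of one

W_xh = rnn.weight_ih_l0                   # shape [hidden_size, input_size]
W_hh = rnn.weight_hh_l0                   # shape [hidden_size, hidden_size]

manual_states = []
for t in range(seq_len):
    # h_t = tanh(W_hh h_{t-1} + W_xh x_t), written for row vectors
    h = torch.tanh(h @ W_hh.T + x[t] @ W_xh.T)
    manual_states.append(h)
manual_out = torch.stack(manual_states)   # [seq len, batch, hidden_size]

reference_out, _ = rnn(x)                 # h_0 defaults to zeros
matches = torch.allclose(manual_out, reference_out, atol=1e-6)
print(matches)
```

The same weights are reused at every time step; only the hidden state changes as the sequence is consumed.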

Implementation:

1. Define the recurrent layer

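import torch
from torch import nn
from torch.nn import functional as F

# nn.RNN(input_size, hidden_size, num_layers):
# (feature size of each word, number of hidden units, number of layers; num_layers defaults to 1)
rnn = nn.RNN(100, 10)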

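2. Forward pass

out, ht = rnn(x, h0)  # calling the layer runs its forward(x, h0)

- x: [seq len (sequence length), batch (number of sentences), word vec (feature dimension)]

- h0/ht: [num layers, batch, h dim (number of hidden units)]

- out: [seq len, batch, h dim]

out holds the hidden state of the last layer at every time step, while ht holds the hidden state of every layer at the last time step.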
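The shapes listed above can be checked directly. The following sketch uses arbitrary sizes (5 time steps, a batch of 3, two layers) chosen only for illustration, with the default `batch_first=False` layout.

```python
import torch
from torch import nn

seq_len, batch, feature_dim, hidden_dim, num_layers = 5, 3, 100, 10, 2

rnn = nn.RNN(feature_dim, hidden_dim, num_layers)

x = torch.randn(seq_len, batch, feature_dim)     # [seq len, batch, word vec]
h0 = torch.zeros(num_layers, batch, hidden_dim)  # [num layers, batch, h dim]

out, ht = rnn(x, h0)

print(out.shape)  # torch.Size([5, 3, 10])
print(ht.shape)   # torch.Size([2, 3, 10])

# The last time step of `out` is the top layer's final hidden state.
same_final_state = torch.allclose(out[-1], ht[-1])
```

With more than one layer, `ht` also carries the final states of the lower layers, which `out` does not expose.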

3. Time series prediction

Task: given a point on a sine curve, predict the points that follow it.

(1) Initialize the parameters

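import numpy as np
import matplotlib.pyplot as plt

num_time_steps = 50   # points sampled per sequence
input_size = 1        # one value per time step
hidden_size = 16
output_size = 1
lr = 0.01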

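(2) Generate the data

The input x has shape (1, 49, 1) and holds the first 49 points on the sine curve.

The target y has shape (1, 49, 1) and holds the 2nd through 50th points on the sine curve, that is, x shifted one step ahead.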

# start is a random integer in {0, 1, 2}
start = np.random.randint(3, size=1)[0]
# sample 50 time steps
time_steps = np.linspace(start, start + 10, num_time_steps)
data = np.sin(time_steps)

# reshape to (batch, seq len, features)
# x = data[0..48] is used to predict y = data[1..49]
x = torch.tensor(data[:-1]).float().view(1, num_time_steps - 1, 1)
y = torch.tensor(data[1:]).float().view(1, num_time_steps - 1, 1)
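As a quick sanity check on the input/target construction, the sketch below rebuilds one window and verifies that y is x shifted by one step. The seeded `np.random.default_rng(0)` generator is an assumption added here only to make the run repeatable; the tutorial code itself uses `np.random.randint`.

```python
import numpy as np
import torch

num_time_steps = 50

rng = np.random.default_rng(0)   # seeded only so the sketch is repeatable
start = int(rng.integers(3))     # integer in {0, 1, 2}
time_steps = np.linspace(start, start + 10, num_time_steps)
data = np.sin(time_steps)

x = torch.tensor(data[:-1]).float().view(1, num_time_steps - 1, 1)
y = torch.tensor(data[1:]).float().view(1, num_time_steps - 1, 1)

# y is x shifted one step ahead: y[t] is the value that follows x[t].
shift_holds = torch.equal(x[:, 1:, :], y[:, :-1, :])
print(x.shape, y.shape, shift_holds)
```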

(3) Define the network

# Define the network
class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.rnn = nn.RNN(
            input_size=input_size,
            hidden_size=hidden_size,
            num_layers=1,
            batch_first=True  # put the batch dimension first
        )
        self.linear = nn.Linear(hidden_size, output_size)

    def forward(self, x, hidden_prev):
        out, hidden_prev = self.rnn(x, hidden_prev)
        # out: [batch, seq, hidden_size]; hidden_prev: [num_layers, batch, hidden_size]
        out = out.view(-1, hidden_size)
        # [batch * seq, hidden_size]
        out = self.linear(out)
        # [batch * seq, output_size]
        out = out.unsqueeze(dim=0)  # add the batch dimension back (assumes batch = 1)
        # [1, seq, output_size]
        return out, hidden_prev
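The flatten-then-unsqueeze step in the forward pass only restores the right shape when the batch size is 1. Since `nn.Linear` acts on the last dimension of its input, one possible variant skips the reshaping altogether. The class name `BatchNet` and its constructor defaults are hypothetical, introduced here only for illustration; this is a sketch, not the tutorial's network.

```python
import torch
from torch import nn


class BatchNet(nn.Module):
    """Hypothetical variant of Net that keeps the batch dimension intact."""

    def __init__(self, input_size=1, hidden_size=16, output_size=1):
        super().__init__()
        self.rnn = nn.RNN(input_size, hidden_size, num_layers=1, batch_first=True)
        self.linear = nn.Linear(hidden_size, output_size)

    def forward(self, x, hidden_prev):
        out, hidden_prev = self.rnn(x, hidden_prev)  # out: [batch, seq, hidden_size]
        out = self.linear(out)                       # out: [batch, seq, output_size]
        return out, hidden_prev


model = BatchNet()
x = torch.randn(4, 49, 1)    # four sequences of 49 steps each
h0 = torch.zeros(1, 4, 16)   # [num_layers, batch, hidden_size]
out, h_last = model(x, h0)
print(out.shape, h_last.shape)
```

For a batch of one this produces the same output shape as the version above, so the rest of the walkthrough would not need to change.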

(4) Training

# Training
model = Net()
criterion = nn.MSELoss()
optimizer = torch.optim.Adam(model.parameters(), lr)
hidden_prev = torch.zeros(1, 1, hidden_size)  # initial hidden state: [num_layers, batch, hidden_size]

for iter in range(6000):
    # start is a random integer in {0, 1, 2}
    start = np.random.randint(3, size=1)[0]
    # sample 50 time steps
    time_steps = np.linspace(start, start + 10, num_time_steps)
    data = np.sin(time_steps)
    # reshape to (batch, seq len, features)
    # x = data[0..48] is used to predict y = data[1..49]
    x = torch.tensor(data[:-1]).float().view(1, num_time_steps - 1, 1)
    y = torch.tensor(data[1:]).float().view(1, num_time_steps - 1, 1)

    output, hidden_prev = model(x, hidden_prev)
    hidden_prev = hidden_prev.detach()  # cut the graph so no gradient flows into earlier iterations

    loss = criterion(output, y)
    model.zero_grad()
    loss.backward()
    optimizer.step()

    if iter % 100 == 0:
        print("Iteration: {} loss: {}".format(iter, loss.item()))

Training runs for 6000 iterations. Each iteration randomly generates 50 points and uses points 1 to 49 to predict points 2 to 50.
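The training loop carries the hidden state from one iteration to the next and detaches it each time. The sketch below, using a bare `nn.RNN` with the same sizes as the tutorial, illustrates what the detach does: the returned hidden state is still attached to the graph that produced it, and `detach()` gives back a tensor with the same values but no gradient history. Without it, the next iteration's backward pass would try to traverse the previous iteration's graph, which PyTorch has normally already freed, and that would typically raise a RuntimeError.

```python
import torch
from torch import nn

torch.manual_seed(0)
rnn = nn.RNN(input_size=1, hidden_size=16, batch_first=True)

x = torch.randn(1, 49, 1)
hidden = torch.zeros(1, 1, 16)

_, hidden = rnn(x, hidden)
attached = hidden.grad_fn is not None   # still linked to this step's graph

hidden = hidden.detach()
detached = hidden.grad_fn is None and not hidden.requires_grad

# A second step can now backpropagate without touching the first step's graph.
out, hidden = rnn(x, hidden)
out.sum().backward()
print(attached, detached)
```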

(5) Prediction

# Prediction
start = np.random.randint(3, size=1)[0]
time_steps = np.linspace(start, start + 10, num_time_steps)
data = np.sin(time_steps)
x = torch.tensor(data[:-1]).float().view(1, num_time_steps - 1, 1)
y = torch.tensor(data[1:]).float().view(1, num_time_steps - 1, 1)

predictions = []
inp = x[:, 0, :]  # the first point, shape [1, 1]
for _ in range(x.shape[1]):
    inp = inp.view(1, 1, 1)
    pred, hidden_prev = model(inp, hidden_prev)
    inp = pred  # feed the prediction back in as the next input
    predictions.append(pred.detach().numpy().ravel()[0])

x = x.numpy()
y = y.numpy()
plt.scatter(time_steps[:-1], x.ravel())   # true input points
plt.plot(time_steps[:-1], x.ravel())
plt.scatter(time_steps[1:], predictions)  # predicted points
plt.show()

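Take the first point and use it to predict the second, then feed that prediction back in to predict the third, and so on for 49 steps. The predictions therefore correspond to the points from the second time step onward.

The result is as follows: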
