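Description

Use Selenium to drive a real browser and crawl QQ Music for the five most popular songs of a singer of your choice. For each song, collect the lyrics, genre, release date, and comment count; for each comment, collect its time, like count, and text (at least 500 comments per song).

The results are saved as follows, as shown in the figure below:

The lyrics, genre, release date, and comment count are saved in music_info.csv.

The comment time, like count, and comment text are saved in comments_info.csv.

The total number of comment likes for each song is saved in series.csv.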

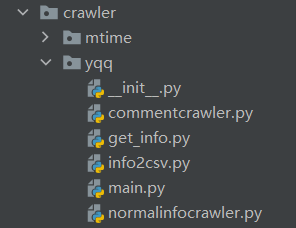

Organize the code as follows:

Code

Only main.py needs to be edited; the other files can be left unchanged.

The song links are the URLs shown in the red box.

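    """
    main.py
    """
    from crawler.yqq.get_info import get_music_info

    # Directory where the output CSV files are written
    path = r'D:\Study\Python'

    # Song links
    url_list = ['https://y.qq.com/n/yqq/song/000T5eoR4YpW4t.html', 'https://y.qq.com/n/yqq/song/001afu2a1qjiik.html']

    # Number of comment pages to crawl for each song (same order as url_list)
    num_comment_page = [2, 2]

    # Seconds to sleep after each page load; a longer value is recommended
    # so that the page has finished loading before it is parsed
    time_sleep = 5

    get_music_info(path, url_list, num_comment_page, time_sleep)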
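
The task asks for at least 500 comments per song, while `num_comment_page` above is set to 2 pages per song. A minimal sketch for deriving the page count from a target comment total is shown here. `pages_needed` is a hypothetical helper, and the figure of 25 comments per page is an assumption that should be checked against the live page.

```python
import math


def pages_needed(target_comments, comments_per_page):
    """Return the smallest page count that yields at least target_comments."""
    if comments_per_page <= 0:
        raise ValueError('comments_per_page must be positive')
    return math.ceil(target_comments / comments_per_page)


# Assumption: each comment page lists 25 comments; verify this on the real page.
COMMENTS_PER_PAGE = 25

url_list = ['https://y.qq.com/n/yqq/song/000T5eoR4YpW4t.html',
            'https://y.qq.com/n/yqq/song/001afu2a1qjiik.html']

# One page count per song, in the same order as url_list
num_comment_page = [pages_needed(500, COMMENTS_PER_PAGE) for _ in url_list]
print(num_comment_page)  # [20, 20]
```

If the real page size differs, only `COMMENTS_PER_PAGE` needs to change.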

    """
    get_info.py
    """
    import time

    from selenium import webdriver
    from selenium.webdriver.common.by import By

    from crawler.yqq.commentcrawler import get_commnets_praise
    from crawler.yqq.info2csv import tocsv
    from crawler.yqq.normalinfocrawler import normal_info


    def get_music_info(path, url_list, num_comment_page, time_sleep=1):
        assert len(url_list) == len(num_comment_page), 'len(url_list) != len(num_comment_page)'
        # Keys: lyrics, genre, release date, comment count
        music_dict = {'歌词': [], '流派': [], '歌曲发行时间': [], '评论条数': []}
        # Keys: comment text, like count, comment time (one list per song)
        commnets_praise_dict = {'评论': [], '点赞数': [], '评论时间': []}
        driver = webdriver.Chrome()
        for i in range(len(url_list)):
            driver.get(url_list[i])
            time.sleep(time_sleep)
            # Song-level fields; normal_info returns one-element lists,
            # so extend keeps each column flat
            m_d = normal_info(driver)
            for key in music_dict:
                music_dict[key].extend(m_d[key])
            # Collect the comments of this song page by page
            c_p_d_page = {'评论': [], '点赞数': [], '评论时间': []}
            for j in range(num_comment_page[i]):
                c_p_d = get_commnets_praise(driver)
                for key in c_p_d_page:
                    c_p_d_page[key].extend(c_p_d[key])
                # find_element(By.CLASS_NAME, ...) replaces find_element_by_class_name,
                # which was removed in Selenium 4.3
                button = driver.find_element(By.CLASS_NAME, 'next')
                button.click()
                time.sleep(time_sleep)
            for key in commnets_praise_dict:
                commnets_praise_dict[key].append(c_p_d_page[key])
        # quit() ends the whole browser session, not just the current window
        driver.quit()
        tocsv(path, music_dict, commnets_praise_dict)
        # print(music_dict)
        # print(commnets_praise_dict)
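
The crawler above waits a fixed `time_sleep` after every page load and click. One alternative is to poll until the page is actually ready and give up after a timeout. The sketch below shows that idea with the standard library only. `wait_until` and `page_loaded` are hypothetical names, and the "page" here is a stand-in counter, not a real browser. Selenium also ships its own `WebDriverWait` for this purpose.

```python
import time


def wait_until(condition, timeout=10.0, poll_interval=0.5):
    """Call condition() repeatedly until it returns a truthy value.

    Returns that value, or raises TimeoutError once timeout seconds have passed.
    """
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError('condition not met within {} s'.format(timeout))
        time.sleep(poll_interval)


# Stand-in for a page that finishes loading on the third check
checks = {'count': 0}


def page_loaded():
    checks['count'] += 1
    return checks['count'] >= 3


wait_until(page_loaded, timeout=2.0, poll_interval=0.01)
print(checks['count'])  # 3
```

With a real driver, the condition could be something like `lambda: driver.find_elements(By.CLASS_NAME, 'next')`, since `find_elements` returns an empty (falsy) list while the element is absent.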

    """
    commentcrawler.py
    """
    import bs4


    def get_commnets_praise(driver):
        # Parse the comment list of the page currently shown in the browser
        pageSource = driver.page_source
        soup = bs4.BeautifulSoup(pageSource, 'html.parser')
        items = soup.find('ul', class_='js_all_list')
        # Keys: comment text, like count, comment time
        info_dict = {'评论': [], '点赞数': [], '评论时间': []}
        for item in items.find_all('li'):
            info_dict['评论'].append(item.find('p', class_='js_hot_text').text)
            info_dict['点赞数'].append(item.find('span', class_='js_praise_num').text)
            info_dict['评论时间'].append(item.find('span', class_='c_tx_thin').text)
        return info_dict
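
`get_commnets_praise` calls `.text` directly on each `find` result, so a list item that lacks one of the expected elements would raise `AttributeError`, because `find` returns `None` when nothing matches. A minimal sketch of a tolerant accessor follows. `text_or_default` is a hypothetical helper, and `SimpleNamespace` is only a stand-in for a BeautifulSoup tag so that the example runs without the page.

```python
from types import SimpleNamespace


def text_or_default(node, default=''):
    """Return node.text with surrounding whitespace removed, or default if node is None."""
    if node is None:
        return default
    return node.text.strip()


# Stand-in for a tag returned by item.find(...); the timestamp is made up
found = SimpleNamespace(text='  2019-05-01 12:30  ')

print(repr(text_or_default(found)))     # '2019-05-01 12:30'
print(repr(text_or_default(None)))      # ''
print(repr(text_or_default(None, '0'))) # '0'
```

In the crawler this would wrap each lookup, for example `text_or_default(item.find('span', class_='js_praise_num'), '0')`.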

    """
    normalinfocrawler.py
    """
    import re

    import bs4


    def normal_info(driver):
        # Parse the song-level fields of the page currently shown in the browser
        pageSource = driver.page_source
        soup = bs4.BeautifulSoup(pageSource, 'html.parser')
        data = soup.find('div', class_='main')
        # Keys: lyrics, genre, release date, comment count
        info_dict = {'歌词': [], '流派': [], '歌曲发行时间': [], '评论条数': []}
        # Join the text of every lyric paragraph
        lyric = ''.join(p.text for p in data.find('div', class_='lyric__cont_box').find_all('p'))
        info_dict['歌词'].append(lyric)
        # Keep the value after the label; accept an ASCII or a full-width colon
        info_dict['流派'].append(re.split(r'[::]', data.find('li', class_='js_genre').text)[-1])
        info_dict['歌曲发行时间'].append(
            re.split(r'[::]', data.find('li', class_='js_public_time').text)[-1])
        # The first run of digits in the comment link text is the comment count
        info_dict['评论条数'].append(re.findall(r'\d+', data.find('a', class_='js_into_comment').text)[0])
        return info_dict
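
The two text-extraction steps in `normal_info` (keeping the value after a label's colon, and pulling the first number out of the comment link) can be tried in isolation. The sketch below uses hypothetical helper names, and the sample strings are made up for illustration, not copied from the site.

```python
import re


def value_after_colon(text):
    """Return the part after the last ASCII or full-width colon, stripped."""
    return re.split(r'[::]', text)[-1].strip()


def first_int(text):
    """Return the first run of digits in text as an int, or None if there is none."""
    match = re.search(r'\d+', text)
    return int(match.group()) if match else None


# Made-up sample strings
print(value_after_colon('流派:Pop'))                  # Pop
print(value_after_colon('Release date: 2016-06-24'))  # 2016-06-24
print(first_int('评论(1296)'))                         # 1296
print(first_int('no digits here'))                    # None
```

Note that `value_after_colon` splits on the last colon, so it would not suit a value that itself contains a colon, such as a time of day.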

    """
    info2csv.py
    """
    import os

    import pandas as pd


    def tocsv(path, music_dict, commnets_praise_dict):
        music_info = pd.DataFrame(columns=('歌词', '流派', '歌曲发行时间', '评论条数'))
        comments_info = pd.DataFrame(columns=('评论', '点赞数', '评论时间'))
        for key in music_dict:
            music_info[key] = music_dict[key]
        # Flatten the per-song comment lists into one column per field
        for key in commnets_praise_dict:
            values = []
            for item in commnets_praise_dict[key]:
                values.extend(item)
            comments_info[key] = values
        # os.path.join builds the output paths instead of hard-coding '\'
        music_info.to_csv(os.path.join(path, 'music_info.csv'), encoding='utf_8_sig')
        comments_info.to_csv(os.path.join(path, 'comments_info.csv'), encoding='utf_8_sig')
        # Total likes per song; the explicit dtype avoids the empty-Series
        # dtype warning raised by older pandas versions
        ser = pd.Series(dtype='int64')
        for i in range(len(commnets_praise_dict['点赞数'])):
            ser['song{}'.format(i)] = sum(map(int, commnets_praise_dict['点赞数'][i]))
        ser.to_csv(os.path.join(path, 'series.csv'), encoding='utf_8_sig')
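
The per-song totals written to series.csv come from converting each scraped like count with `int`, which raises `ValueError` on a blank or non-numeric string. A minimal sketch of the same aggregation with a tolerant conversion is shown here, using only the standard library. `to_int` is a hypothetical helper and the like counts are made-up sample data.

```python
def to_int(text, default=0):
    """Convert a scraped like count to int; blank or non-numeric text gives default."""
    try:
        return int(text.strip())
    except ValueError:
        return default


# Made-up like counts as scraped strings, one inner list per song
likes_per_song = [['12', '0', ''], ['3', ' 7 ']]

# Same 'song{index}' labels as the series built in tocsv
totals = {
    'song{}'.format(i): sum(to_int(value) for value in likes)
    for i, likes in enumerate(likes_per_song)
}
print(totals)  # {'song0': 12, 'song1': 10}
```

Treating unparsable values as 0 is a design choice; logging or skipping them may be preferable when accuracy of the totals matters.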
