Computing L1Loss and MSELoss

import torch
from torch.nn import L1Loss
from torch import nn

inputs = torch.tensor([1, 2, 3], dtype=torch.float32)
targets = torch.tensor([1, 2, 5], dtype=torch.float32)

inputs = torch.reshape(inputs, (1, 1, 1, 3))  # shape: (batch, channel, height, width)
targets = torch.reshape(targets, (1, 1, 1, 3))

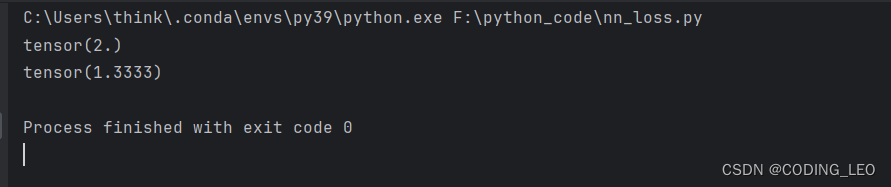

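loss = L1Loss(reduction='sum')  # sum of the absolute errors
result = loss(inputs, targets)

loss_mse = nn.MSELoss()  # mean of the squared errors (default reduction='mean')
result_mse = loss_mse(inputs, targets)

print(result)  # tensor(2.)
print(result_mse)  # tensor(1.3333)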
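To see where these two numbers come from, the losses can be recomputed by hand. The following is a minimal sketch that assumes the same three values as above, without the reshape (which does not change the result); the names `diff`, `manual_l1_sum`, and `manual_mse` are introduced here for illustration only.

```python
import torch
from torch import nn

inputs = torch.tensor([1.0, 2.0, 3.0])
targets = torch.tensor([1.0, 2.0, 5.0])

diff = inputs - targets  # element-wise errors: [0, 0, -2]

# L1 loss with reduction='sum': add up the absolute errors -> 0 + 0 + 2
manual_l1_sum = diff.abs().sum()
# MSE loss with the default reduction='mean': average the squared errors -> (0 + 0 + 4) / 3
manual_mse = (diff ** 2).mean()

l1_sum = nn.L1Loss(reduction='sum')(inputs, targets)
mse = nn.MSELoss()(inputs, targets)

print(manual_l1_sum, l1_sum)  # both equal 2
print(manual_mse, mse)        # both equal 4/3
```

With the default `reduction='mean'`, `L1Loss` would instead return 2/3, so the choice of reduction changes the scale of the loss and therefore of the gradients.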

Cross-entropy

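x = torch.tensor([0.1, 0.2, 0.3])  # raw scores (logits) for 3 classes
y = torch.tensor([1])  # index of the correct class
x = torch.reshape(x, (1, 3))  # shape: (batch_size, num_classes)

loss_cross = nn.CrossEntropyLoss()
result_cross = loss_cross(x, y)
print(result_cross)  # tensor(1.1019)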
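For a single sample, `nn.CrossEntropyLoss` evaluates `-x[class] + log(sum_j exp(x[j]))` on the raw scores. The sketch below recomputes that value by hand for the same `x` and `y`; the names `manual` and `builtin` are introduced here for illustration only.

```python
import torch
from torch import nn

x = torch.tensor([[0.1, 0.2, 0.3]])  # logits, shape (batch_size=1, num_classes=3)
y = torch.tensor([1])                # index of the correct class

# -x[class] + log(sum_j exp(x[j])); logsumexp computes the second term stably
manual = -x[0, y[0]] + torch.logsumexp(x[0], dim=0)

builtin = nn.CrossEntropyLoss()(x, y)
print(manual, builtin)  # the two values agree
```

Because the softmax is applied inside the loss, the network output passed to `CrossEntropyLoss` should be unnormalized scores, not probabilities.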

import torch
import torchvision.datasets
from torch import nn
from torch.nn import Sequential, Conv2d, MaxPool2d, Flatten, Linear
from torch.utils.data import DataLoader

dataset = torchvision.datasets.CIFAR10("../data", train=False, transform=torchvision.transforms.ToTensor(), download=True)
dataloader = DataLoader(dataset, batch_size=1)


class XuZhenyu(nn.Module):
    def __init__(self, *args, **kwargs) -> None:
        super().__init__(*args, **kwargs)
        self.model1 = Sequential(
            Conv2d(3, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024, 64),  # 64 channels * 4 * 4 after three 2x2 poolings of a 32x32 image
            Linear(64, 10),  # one score per CIFAR10 class
        )

    def forward(self, x):
        x = self.model1(x)
        return x


loss = nn.CrossEntropyLoss()
xzy = XuZhenyu()
for data in dataloader:
    imgs, targets = data
    outputs = xzy(imgs)  # shape: (batch_size, 10)
    result_loss = loss(outputs, targets)
    print(result_loss)

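Backpropagation computes the gradient (grad) of the loss with respect to each parameter. Gradient descent then uses those gradients to update the parameters so that the loss decreases.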
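As a minimal sketch of that idea, the example below runs one gradient descent step by hand on a single parameter. The loss function `(w - 5) ** 2`, the starting value, and the learning rate `lr` are illustrative assumptions, not part of the original notes.

```python
import torch

w = torch.tensor(2.0, requires_grad=True)  # a single trainable parameter
lr = 0.1                                   # learning rate (illustrative value)

loss_before = (w - 5.0) ** 2   # minimized at w = 5
loss_before.backward()         # fills w.grad with d(loss)/dw = 2 * (w - 5) = -6

with torch.no_grad():          # the update itself must not be tracked by autograd
    w -= lr * w.grad           # 2.0 - 0.1 * (-6.0) = 2.6
w.grad.zero_()                 # clear the gradient before any further backward pass

loss_after = (w - 5.0) ** 2
print(loss_before.item(), loss_after.item())  # the loss drops from 9.0 to about 5.76
```

The parameter moves against the sign of its gradient, which is why the loss goes down after the step.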

import torch
import torchvision.datasets
from torch import nn
from torch.nn import Sequential, Conv2d, MaxPool2d, Flatten, Linear
from torch.utils.data import DataLoader

dataset = torchvision.datasets.CIFAR10("../data", train=False, transform=torchvision.transforms.ToTensor(), download=True)
dataloader = DataLoader(dataset, batch_size=1)


class XuZhenyu(nn.Module):
    def __init__(self, *args, **kwargs) -> None:
        super().__init__(*args, **kwargs)
        self.model1 = Sequential(
            Conv2d(3, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024, 64),
            Linear(64, 10),
        )

    def forward(self, x):
        x = self.model1(x)
        return x


loss = nn.CrossEntropyLoss()
xzy = XuZhenyu()
for data in dataloader:
    imgs, targets = data
    outputs = xzy(imgs)
    result_loss = loss(outputs, targets)
    #print(result_loss)
    result_loss.backward()  # compute the gradient of the loss for every parameter
    print("ok")
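One way to check that `backward()` really produced gradients is to look at a parameter's `.grad` attribute before and after the call. The sketch below does this on a hypothetical tiny `nn.Linear` model with random inputs, used here only so that it runs without downloading CIFAR10; it is not the network defined above.

```python
import torch
from torch import nn

torch.manual_seed(0)
model = nn.Linear(4, 3)            # hypothetical tiny stand-in for the network
imgs = torch.randn(2, 4)           # random stand-in for a batch of inputs
targets = torch.tensor([0, 2])     # class indices for the two samples

weight_grad_before = model.weight.grad   # None: no backward pass has run yet
result_loss = nn.CrossEntropyLoss()(model(imgs), targets)
result_loss.backward()

print(weight_grad_before)          # None
print(model.weight.grad.shape)     # torch.Size([3, 4]), same shape as the weight
```

Note that `backward()` only fills in the gradients. The parameters themselves stay unchanged until an update step is applied to them.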
