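- 🍨 This post is a learning-record blog from the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp)

- 🍖 Original author: K同学啊 | tutoring and custom projects available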

Inception v1 paper

Going deeper with convolutions.pdf

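I. Theory

GoogLeNet first appeared in the ILSVRC 2014 competition, where it took first place. This version is usually referred to as Inception V1. Inception V1 is 22 layers deep and has about 5M parameters. VGGNet, from the same period, performs comparably to Inception V1 but has far more parameters.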

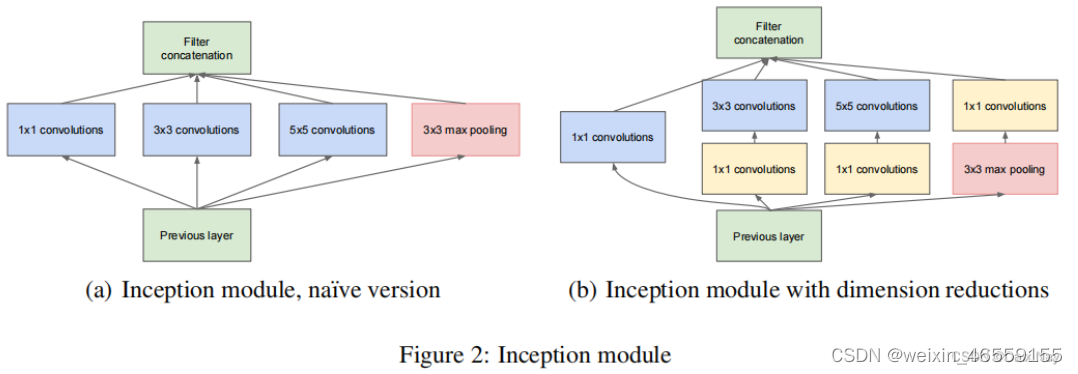

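The Inception Module is the core building block of Inception V1. It introduces a parallel arrangement of convolutional layers, so that different kinds of features can be extracted within a single layer, as shown in the figure below.

Stacking this structure to deepen the network can improve performance, but it is still computationally expensive (many parameters). To mitigate this, the Inception Module borrows the idea of Network-in-Network and uses 1x1 convolutions for dimensionality reduction (which also indirectly increases the depth of the network), reducing both the parameter count and the amount of computation, as shown in panel (b) of the figure above.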

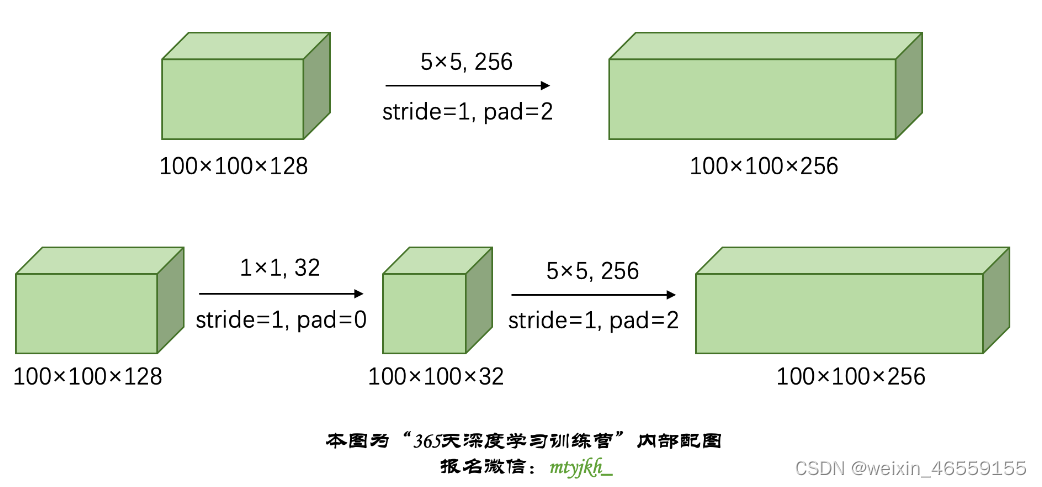

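Worked example: suppose the previous layer outputs 100x100x128. After a convolutional layer with 256 5x5 kernels (stride=1, pad=2), the output is 100x100x256, and the layer has 5x5x128x256+256 = 819,456 parameters. If the previous layer's output first passes through a convolutional layer with 32 1x1 kernels (the 1x1 convolution reduces the number of channels while leaving the feature-map size unchanged) and then through the layer with 256 5x5 kernels, the final output is still 100x100x256, but the parameter count drops to 128x1x1x32+32 + 32x5x5x256+256 = 209,184, roughly one quarter of the original.

Role of the 1x1 convolution: its main purpose is to reduce the number of channels of the input feature map, which reduces the parameter count and the amount of computation of the network.

In the end, the Inception Module consists of four basic units: 1x1 convolution, 3x3 convolution, 5x5 convolution, and 3x3 max pooling. The outputs of the four units are concatenated along the channel dimension. Kernels of different sizes have receptive fields of different sizes, so they extract information from the image at different scales; fusing these gives a better representation of the image. This is the core idea of the Inception Module.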
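The arithmetic in this example can be checked with a few lines of Python. This is a minimal sketch; the helper name `conv_params` is introduced here for illustration and is not part of the original post.

```python
def conv_params(in_channels, out_channels, kernel_size, bias=True):
    """Parameter count of a square 2D convolution: weights plus optional biases."""
    weights = kernel_size * kernel_size * in_channels * out_channels
    return weights + (out_channels if bias else 0)


# Direct 5x5 convolution: 128 -> 256 channels
direct = conv_params(128, 256, 5)

# 1x1 bottleneck (128 -> 32) followed by the 5x5 convolution (32 -> 256)
bottleneck = conv_params(128, 32, 1) + conv_params(32, 256, 5)

ratio = bottleneck / direct
print(direct, bottleneck, round(ratio, 3))
```

The ratio comes out slightly above 0.25, which matches the "about one quarter" statement in the text.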

II. Network architecture

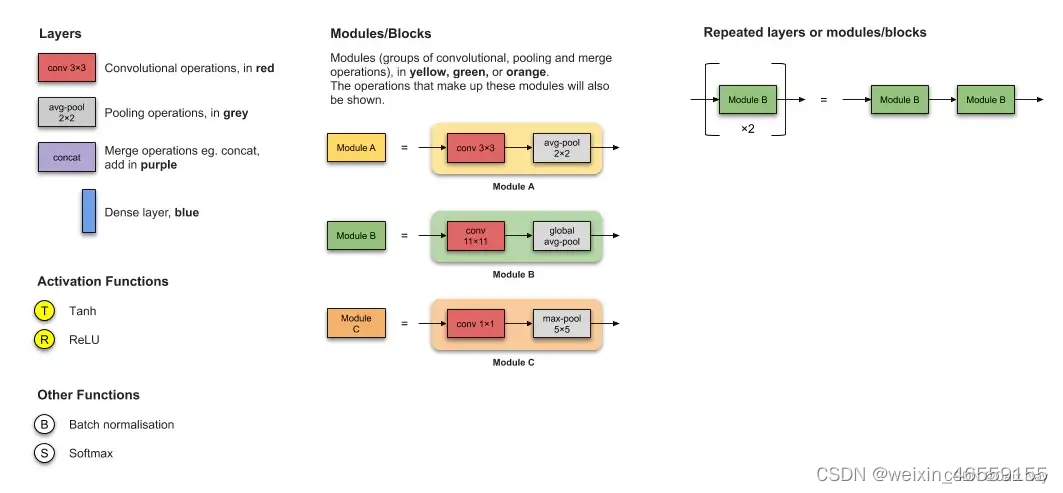

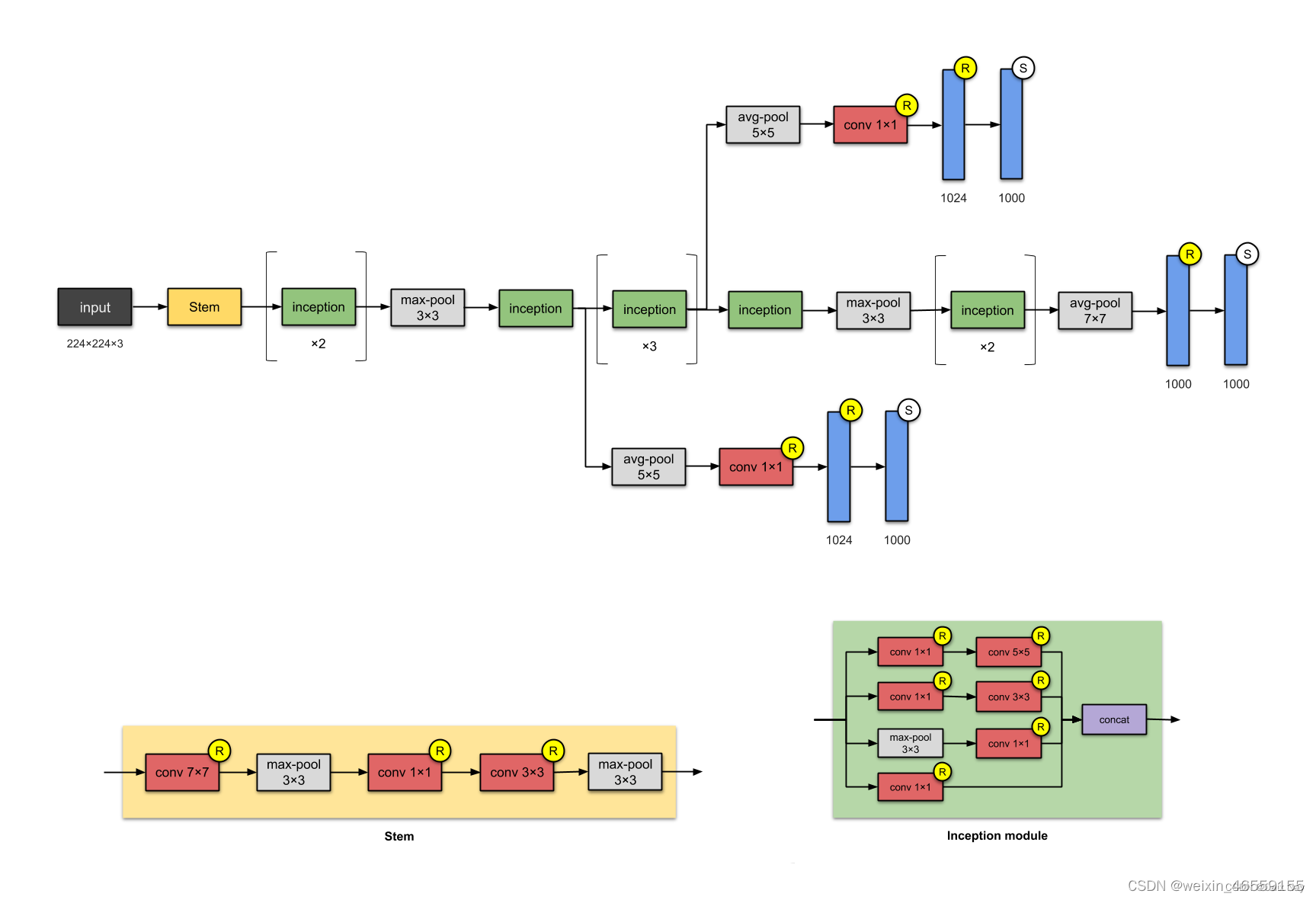

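The structure of the Inception V1 network implemented here is shown below:

Note: the original network also adds two auxiliary branches, which serve two purposes. First, they help avoid vanishing gradients by injecting gradient signal at intermediate layers: during backpropagation, if the derivative at one layer is 0, the whole chain-rule product becomes 0. Second, they use the output of an intermediate layer for classification, which acts as a form of model ensembling. At test time these two auxiliary softmax branches are removed. Later models rarely adopt this technique, so it can be skipped; focus on the parallel structure of the convolutional layers and on the 1x1 convolution.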
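As a rough illustration of how such auxiliary branches are typically used, the sketch below adds the auxiliary losses to the main loss during training. The function name `combined_loss` and its arguments are hypothetical. The 0.3 weight follows the discount weight reported in the GoogLeNet paper, not the code in this post, which does not implement the auxiliary branches.

```python
import torch
import torch.nn as nn


def combined_loss(main_logits, aux1_logits, aux2_logits, targets, aux_weight=0.3):
    """Training loss: main classifier loss plus down-weighted auxiliary losses.

    aux_weight=0.3 is the discount weight from the GoogLeNet paper (an assumption
    relative to this post, whose model has no auxiliary heads).
    """
    criterion = nn.CrossEntropyLoss()  # expects raw logits, not softmax outputs
    loss = criterion(main_logits, targets)
    loss = loss + aux_weight * criterion(aux1_logits, targets)
    loss = loss + aux_weight * criterion(aux2_logits, targets)
    return loss


# Dummy logits for a batch of 4 samples and 10 classes
torch.manual_seed(0)
logits = torch.randn(4, 10)
targets = torch.tensor([1, 0, 3, 9])
print(combined_loss(logits, logits, logits, targets).item())
```

At inference time only the main logits would be used, which corresponds to removing the auxiliary branches as described above.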

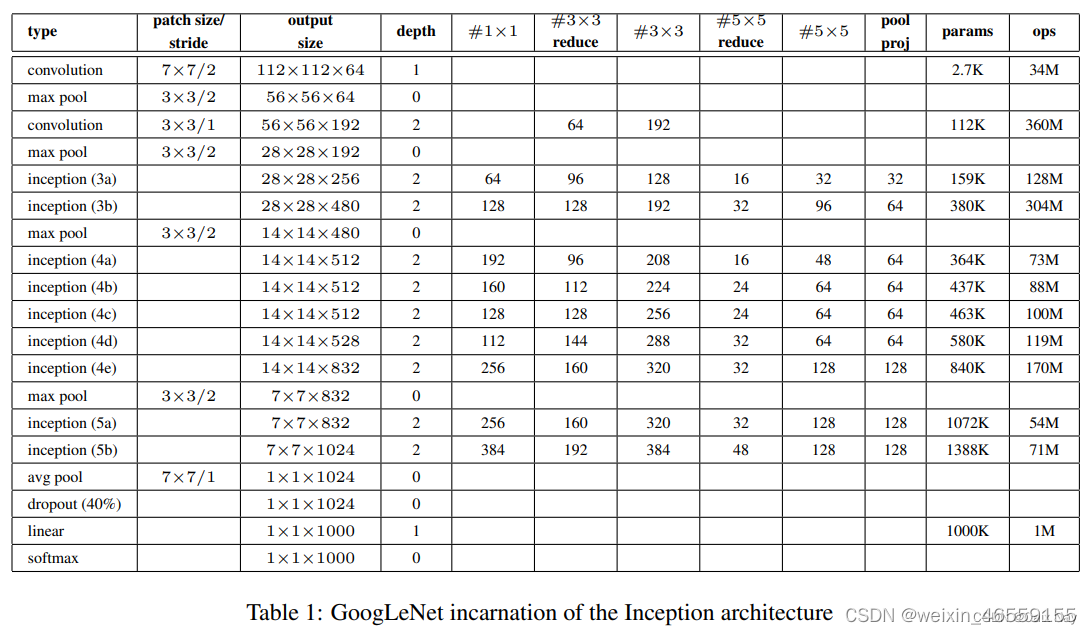

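The detailed network structure is shown below:

III. PyTorch implementation

The code below implements the network framework and parameters from the table above.

Code implementation of the Inception V1 network, built from Inception Modules (InceptionBlock in the code)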
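The `InceptionBlock` class used by the network code is not listed in this post. The following is a minimal sketch reconstructed from the model printout further down (branch names `conv1` to `conv4`, each convolution followed by BatchNorm and ReLU). The constructor argument names are assumptions inferred from calls such as `InceptionBlock(192, 64, [96,128], [16,32], 32)`; the author's actual class may differ.

```python
import torch
import torch.nn as nn


class InceptionBlock(nn.Module):
    """Four parallel branches concatenated along the channel dimension."""

    def __init__(self, in_channels, ch1x1, ch3x3, ch5x5, pool_proj):
        # ch3x3 and ch5x5 are [reduce_channels, out_channels] pairs (assumed names)
        super().__init__()
        # Branch 1: 1x1 convolution
        self.conv1 = nn.Sequential(
            nn.Conv2d(in_channels, ch1x1, kernel_size=1),
            nn.BatchNorm2d(ch1x1),
            nn.ReLU(inplace=True),
        )
        # Branch 2: 1x1 reduction followed by 3x3 convolution
        self.conv2 = nn.Sequential(
            nn.Conv2d(in_channels, ch3x3[0], kernel_size=1),
            nn.BatchNorm2d(ch3x3[0]),
            nn.ReLU(inplace=True),
            nn.Conv2d(ch3x3[0], ch3x3[1], kernel_size=3, padding=1),
            nn.BatchNorm2d(ch3x3[1]),
            nn.ReLU(inplace=True),
        )
        # Branch 3: 1x1 reduction followed by 5x5 convolution
        self.conv3 = nn.Sequential(
            nn.Conv2d(in_channels, ch5x5[0], kernel_size=1),
            nn.BatchNorm2d(ch5x5[0]),
            nn.ReLU(inplace=True),
            nn.Conv2d(ch5x5[0], ch5x5[1], kernel_size=5, padding=2),
            nn.BatchNorm2d(ch5x5[1]),
            nn.ReLU(inplace=True),
        )
        # Branch 4: 3x3 max pooling (stride 1) followed by 1x1 projection
        self.conv4 = nn.Sequential(
            nn.MaxPool2d(kernel_size=3, stride=1, padding=1),
            nn.Conv2d(in_channels, pool_proj, kernel_size=1),
            nn.BatchNorm2d(pool_proj),
            nn.ReLU(inplace=True),
        )

    def forward(self, x):
        # All branches keep the spatial size, so they can be joined on dim=1
        branches = [self.conv1(x), self.conv2(x), self.conv3(x), self.conv4(x)]
        return torch.cat(branches, dim=1)


block = InceptionBlock(192, 64, [96, 128], [16, 32], 32)
out = block(torch.randn(1, 192, 28, 28))
print(out.shape)  # channels: 64 + 128 + 32 + 32 = 256
```

With these settings the output has 256 channels at 28x28, which agrees with the `InceptionBlock-28` row of the model printout.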

    # InceptionV1 (GoogLeNet-style) network.
    # Note: InceptionBlock is used below and must be defined separately.
    import torch.nn as nn


    class InceptionV1(nn.Module):
        def __init__(self,
                     include_top=True,           # whether to include the fully connected layers at the top (not used below)
                     preact=False,               # whether to use pre-activation (not used below)
                     use_bias=True,              # whether the conv layers use a bias (not used below)
                     input_shape=(3, 224, 224),  # expected input shape (C, H, W) (not used below)
                     classes=1000,               # number of classes to predict
                     pooling=None):              # optional pooling mode (not used below)
            super(InceptionV1, self).__init__()
            # Stem: 7x7 convolution + max pooling, 224x224 -> 56x56
            self.conv1 = nn.Sequential(
                nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3),
                nn.ReLU(inplace=True),
                nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
            )
            # 1x1 reduction + 3x3 convolution + max pooling, 56x56 -> 28x28
            self.conv2 = nn.Sequential(
                nn.Conv2d(64, 64, kernel_size=1, stride=1, padding=0),
                nn.ReLU(inplace=True),
                nn.Conv2d(64, 192, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
            )
            # Inception 3a, 3b, then max pooling, 28x28 -> 14x14
            self.conv3 = nn.Sequential(
                InceptionBlock(192, 64, [96, 128], [16, 32], 32),
                InceptionBlock(256, 128, [128, 192], [32, 96], 64),
                nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
            )
            # Inception 4a to 4e, then max pooling, 14x14 -> 7x7
            self.conv4 = nn.Sequential(
                InceptionBlock(480, 192, [96, 208], [16, 48], 64),
                InceptionBlock(512, 160, [112, 224], [24, 64], 64),
                InceptionBlock(512, 128, [128, 256], [24, 64], 64),
                InceptionBlock(512, 112, [144, 288], [32, 64], 64),
                InceptionBlock(528, 256, [160, 320], [32, 128], 128),
                nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
            )
            # Inception 5a, 5b, then 7x7 average pooling and dropout, 7x7 -> 1x1
            self.conv5 = nn.Sequential(
                InceptionBlock(832, 256, [160, 320], [32, 128], 128),
                InceptionBlock(832, 384, [192, 384], [48, 128], 128),
                nn.AvgPool2d(kernel_size=7, stride=1, padding=0),
                nn.Dropout(0.4)
            )
            # Classifier head
            self.fc = nn.Sequential(
                nn.Flatten(),
                nn.Linear(in_features=1024, out_features=1024),
                nn.ReLU(inplace=True),
                nn.Linear(in_features=1024, out_features=classes),
                # Outputs probabilities. nn.CrossEntropyLoss expects raw logits,
                # so drop this layer if you train with that loss.
                nn.Softmax(dim=1)
            )

        def forward(self, x):
            x = self.conv1(x)
            x = self.conv2(x)
            x = self.conv3(x)
            x = self.conv4(x)
            x = self.conv5(x)
            x = self.fc(x)
            return x
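To see why the final average pooling uses a 7x7 kernel, the spatial size can be traced through the layers that downsample. This is a small sketch in plain Python; the helper names `out_size` and `trace_sizes` are introduced here for illustration, and the layer settings are copied from the network code above.

```python
def out_size(n, kernel, stride=1, padding=0):
    """Output length of a conv/pool layer along one spatial axis (floor mode)."""
    return (n + 2 * padding - kernel) // stride + 1


# (name, kernel, stride, padding) for each layer that changes the spatial size
LAYERS = [
    ("conv1 7x7 conv", 7, 2, 3),
    ("conv1 max pool", 3, 2, 1),
    ("conv2 max pool", 3, 2, 1),
    ("conv3 max pool", 3, 2, 1),
    ("conv4 max pool", 3, 2, 1),
    ("conv5 avg pool", 7, 1, 0),
]


def trace_sizes(n=224):
    """Return the feature-map side length after each downsampling layer."""
    sizes = []
    for name, kernel, stride, padding in LAYERS:
        n = out_size(n, kernel, stride, padding)
        sizes.append((name, n))
    return sizes


for name, n in trace_sizes():
    print(f"{name}: {n}x{n}")
```

For a 224x224 input the sizes go 112, 56, 28, 14, 7, 1, so the tensor entering `nn.Flatten()` has exactly 1024 values per sample, matching `in_features=1024`.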

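Model printout (the output below comes from an instance created with classes=2, as the final Linear layer shows)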

----------------------------------------------------------------

Layer (type) Output Shape Param #

================================================================

Conv2d-1 [-1, 64, 112, 112] 9,472

ReLU-2 [-1, 64, 112, 112] 0

MaxPool2d-3 [-1, 64, 56, 56] 0

Conv2d-4 [-1, 64, 56, 56] 4,160

ReLU-5 [-1, 64, 56, 56] 0

Conv2d-6 [-1, 192, 56, 56] 110,784

ReLU-7 [-1, 192, 56, 56] 0

MaxPool2d-8 [-1, 192, 28, 28] 0

Conv2d-9 [-1, 64, 28, 28] 12,352

BatchNorm2d-10 [-1, 64, 28, 28] 128

ReLU-11 [-1, 64, 28, 28] 0

Conv2d-12 [-1, 96, 28, 28] 18,528

BatchNorm2d-13 [-1, 96, 28, 28] 192

ReLU-14 [-1, 96, 28, 28] 0

Conv2d-15 [-1, 128, 28, 28] 110,720

BatchNorm2d-16 [-1, 128, 28, 28] 256

ReLU-17 [-1, 128, 28, 28] 0

Conv2d-18 [-1, 16, 28, 28] 3,088

BatchNorm2d-19 [-1, 16, 28, 28] 32

ReLU-20 [-1, 16, 28, 28] 0

Conv2d-21 [-1, 32, 28, 28] 12,832

BatchNorm2d-22 [-1, 32, 28, 28] 64

ReLU-23 [-1, 32, 28, 28] 0

MaxPool2d-24 [-1, 192, 28, 28] 0

Conv2d-25 [-1, 32, 28, 28] 6,176

BatchNorm2d-26 [-1, 32, 28, 28] 64

ReLU-27 [-1, 32, 28, 28] 0

InceptionBlock-28 [-1, 256, 28, 28] 0

Conv2d-29 [-1, 128, 28, 28] 32,896

BatchNorm2d-30 [-1, 128, 28, 28] 256

ReLU-31 [-1, 128, 28, 28] 0

Conv2d-32 [-1, 128, 28, 28] 32,896

BatchNorm2d-33 [-1, 128, 28, 28] 256

ReLU-34 [-1, 128, 28, 28] 0

Conv2d-35 [-1, 192, 28, 28] 221,376

BatchNorm2d-36 [-1, 192, 28, 28] 384

ReLU-37 [-1, 192, 28, 28] 0

Conv2d-38 [-1, 32, 28, 28] 8,224

BatchNorm2d-39 [-1, 32, 28, 28] 64

ReLU-40 [-1, 32, 28, 28] 0

Conv2d-41 [-1, 96, 28, 28] 76,896

BatchNorm2d-42 [-1, 96, 28, 28] 192

ReLU-43 [-1, 96, 28, 28] 0

MaxPool2d-44 [-1, 256, 28, 28] 0

Conv2d-45 [-1, 64, 28, 28] 16,448

BatchNorm2d-46 [-1, 64, 28, 28] 128

ReLU-47 [-1, 64, 28, 28] 0

InceptionBlock-48 [-1, 480, 28, 28] 0

MaxPool2d-49 [-1, 480, 14, 14] 0

Conv2d-50 [-1, 192, 14, 14] 92,352

BatchNorm2d-51 [-1, 192, 14, 14] 384

ReLU-52 [-1, 192, 14, 14] 0

Conv2d-53 [-1, 96, 14, 14] 46,176

BatchNorm2d-54 [-1, 96, 14, 14] 192

ReLU-55 [-1, 96, 14, 14] 0

Conv2d-56 [-1, 208, 14, 14] 179,920

BatchNorm2d-57 [-1, 208, 14, 14] 416

ReLU-58 [-1, 208, 14, 14] 0

Conv2d-59 [-1, 16, 14, 14] 7,696

BatchNorm2d-60 [-1, 16, 14, 14] 32

ReLU-61 [-1, 16, 14, 14] 0

Conv2d-62 [-1, 48, 14, 14] 19,248

BatchNorm2d-63 [-1, 48, 14, 14] 96

ReLU-64 [-1, 48, 14, 14] 0

MaxPool2d-65 [-1, 480, 14, 14] 0

Conv2d-66 [-1, 64, 14, 14] 30,784

BatchNorm2d-67 [-1, 64, 14, 14] 128

ReLU-68 [-1, 64, 14, 14] 0

InceptionBlock-69 [-1, 512, 14, 14] 0

Conv2d-70 [-1, 160, 14, 14] 82,080

BatchNorm2d-71 [-1, 160, 14, 14] 320

ReLU-72 [-1, 160, 14, 14] 0

Conv2d-73 [-1, 112, 14, 14] 57,456

BatchNorm2d-74 [-1, 112, 14, 14] 224

ReLU-75 [-1, 112, 14, 14] 0

Conv2d-76 [-1, 224, 14, 14] 226,016

BatchNorm2d-77 [-1, 224, 14, 14] 448

ReLU-78 [-1, 224, 14, 14] 0

Conv2d-79 [-1, 24, 14, 14] 12,312

BatchNorm2d-80 [-1, 24, 14, 14] 48

ReLU-81 [-1, 24, 14, 14] 0

Conv2d-82 [-1, 64, 14, 14] 38,464

BatchNorm2d-83 [-1, 64, 14, 14] 128

ReLU-84 [-1, 64, 14, 14] 0

MaxPool2d-85 [-1, 512, 14, 14] 0

Conv2d-86 [-1, 64, 14, 14] 32,832

BatchNorm2d-87 [-1, 64, 14, 14] 128

ReLU-88 [-1, 64, 14, 14] 0

InceptionBlock-89 [-1, 512, 14, 14] 0

Conv2d-90 [-1, 128, 14, 14] 65,664

BatchNorm2d-91 [-1, 128, 14, 14] 256

ReLU-92 [-1, 128, 14, 14] 0

Conv2d-93 [-1, 128, 14, 14] 65,664

BatchNorm2d-94 [-1, 128, 14, 14] 256

ReLU-95 [-1, 128, 14, 14] 0

Conv2d-96 [-1, 256, 14, 14] 295,168

BatchNorm2d-97 [-1, 256, 14, 14] 512

ReLU-98 [-1, 256, 14, 14] 0

Conv2d-99 [-1, 24, 14, 14] 12,312

BatchNorm2d-100 [-1, 24, 14, 14] 48

ReLU-101 [-1, 24, 14, 14] 0

Conv2d-102 [-1, 64, 14, 14] 38,464

BatchNorm2d-103 [-1, 64, 14, 14] 128

ReLU-104 [-1, 64, 14, 14] 0

MaxPool2d-105 [-1, 512, 14, 14] 0

Conv2d-106 [-1, 64, 14, 14] 32,832

BatchNorm2d-107 [-1, 64, 14, 14] 128

ReLU-108 [-1, 64, 14, 14] 0

InceptionBlock-109 [-1, 512, 14, 14] 0

Conv2d-110 [-1, 112, 14, 14] 57,456

BatchNorm2d-111 [-1, 112, 14, 14] 224

ReLU-112 [-1, 112, 14, 14] 0

Conv2d-113 [-1, 144, 14, 14] 73,872

BatchNorm2d-114 [-1, 144, 14, 14] 288

ReLU-115 [-1, 144, 14, 14] 0

Conv2d-116 [-1, 288, 14, 14] 373,536

BatchNorm2d-117 [-1, 288, 14, 14] 576

ReLU-118 [-1, 288, 14, 14] 0

Conv2d-119 [-1, 32, 14, 14] 16,416

BatchNorm2d-120 [-1, 32, 14, 14] 64

ReLU-121 [-1, 32, 14, 14] 0

Conv2d-122 [-1, 64, 14, 14] 51,264

BatchNorm2d-123 [-1, 64, 14, 14] 128

ReLU-124 [-1, 64, 14, 14] 0

MaxPool2d-125 [-1, 512, 14, 14] 0

Conv2d-126 [-1, 64, 14, 14] 32,832

BatchNorm2d-127 [-1, 64, 14, 14] 128

ReLU-128 [-1, 64, 14, 14] 0

InceptionBlock-129 [-1, 528, 14, 14] 0

Conv2d-130 [-1, 256, 14, 14] 135,424

BatchNorm2d-131 [-1, 256, 14, 14] 512

ReLU-132 [-1, 256, 14, 14] 0

Conv2d-133 [-1, 160, 14, 14] 84,640

BatchNorm2d-134 [-1, 160, 14, 14] 320

ReLU-135 [-1, 160, 14, 14] 0

Conv2d-136 [-1, 320, 14, 14] 461,120

BatchNorm2d-137 [-1, 320, 14, 14] 640

ReLU-138 [-1, 320, 14, 14] 0

Conv2d-139 [-1, 32, 14, 14] 16,928

BatchNorm2d-140 [-1, 32, 14, 14] 64

ReLU-141 [-1, 32, 14, 14] 0

Conv2d-142 [-1, 128, 14, 14] 102,528

BatchNorm2d-143 [-1, 128, 14, 14] 256

ReLU-144 [-1, 128, 14, 14] 0

MaxPool2d-145 [-1, 528, 14, 14] 0

Conv2d-146 [-1, 128, 14, 14] 67,712

BatchNorm2d-147 [-1, 128, 14, 14] 256

ReLU-148 [-1, 128, 14, 14] 0

InceptionBlock-149 [-1, 832, 14, 14] 0

MaxPool2d-150 [-1, 832, 7, 7] 0

Conv2d-151 [-1, 256, 7, 7] 213,248

BatchNorm2d-152 [-1, 256, 7, 7] 512

ReLU-153 [-1, 256, 7, 7] 0

Conv2d-154 [-1, 160, 7, 7] 133,280

BatchNorm2d-155 [-1, 160, 7, 7] 320

ReLU-156 [-1, 160, 7, 7] 0

Conv2d-157 [-1, 320, 7, 7] 461,120

BatchNorm2d-158 [-1, 320, 7, 7] 640

ReLU-159 [-1, 320, 7, 7] 0

Conv2d-160 [-1, 32, 7, 7] 26,656

BatchNorm2d-161 [-1, 32, 7, 7] 64

ReLU-162 [-1, 32, 7, 7] 0

Conv2d-163 [-1, 128, 7, 7] 102,528

BatchNorm2d-164 [-1, 128, 7, 7] 256

ReLU-165 [-1, 128, 7, 7] 0

MaxPool2d-166 [-1, 832, 7, 7] 0

Conv2d-167 [-1, 128, 7, 7] 106,624

BatchNorm2d-168 [-1, 128, 7, 7] 256

ReLU-169 [-1, 128, 7, 7] 0

InceptionBlock-170 [-1, 832, 7, 7] 0

Conv2d-171 [-1, 384, 7, 7] 319,872

BatchNorm2d-172 [-1, 384, 7, 7] 768

ReLU-173 [-1, 384, 7, 7] 0

Conv2d-174 [-1, 192, 7, 7] 159,936

BatchNorm2d-175 [-1, 192, 7, 7] 384

ReLU-176 [-1, 192, 7, 7] 0

Conv2d-177 [-1, 384, 7, 7] 663,936

BatchNorm2d-178 [-1, 384, 7, 7] 768

ReLU-179 [-1, 384, 7, 7] 0

Conv2d-180 [-1, 48, 7, 7] 39,984

BatchNorm2d-181 [-1, 48, 7, 7] 96

ReLU-182 [-1, 48, 7, 7] 0

Conv2d-183 [-1, 128, 7, 7] 153,728

BatchNorm2d-184 [-1, 128, 7, 7] 256

ReLU-185 [-1, 128, 7, 7] 0

MaxPool2d-186 [-1, 832, 7, 7] 0

Conv2d-187 [-1, 128, 7, 7] 106,624

BatchNorm2d-188 [-1, 128, 7, 7] 256

ReLU-189 [-1, 128, 7, 7] 0

InceptionBlock-190 [-1, 1024, 7, 7] 0

AvgPool2d-191 [-1, 1024, 1, 1] 0

Dropout-192 [-1, 1024, 1, 1] 0

Flatten-193 [-1, 1024] 0

Linear-194 [-1, 1024] 1,049,600

ReLU-195 [-1, 1024] 0

Linear-196 [-1, 2] 2,050

Softmax-197 [-1, 2] 0

================================================================

Total params: 7,039,122

Trainable params: 7,039,122

Non-trainable params: 0

----------------------------------------------------------------

Input size (MB): 0.57

Forward/backward pass size (MB): 81.87

Params size (MB): 26.85

Estimated Total Size (MB): 109.30

----------------------------------------------------------------
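A few rows of the summary above can be reproduced by hand to see how the Param # column arises. This is a minimal sketch using standard PyTorch layers; the helper name `count_params` is introduced here, and the size estimate assumes 4 bytes per float32 parameter.

```python
import torch.nn as nn


def count_params(module):
    """Number of trainable parameters in a module."""
    return sum(p.numel() for p in module.parameters() if p.requires_grad)


# Layers rebuilt with the same settings as the corresponding summary rows
rows = {
    "Conv2d-1": nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3),
    "Conv2d-6": nn.Conv2d(64, 192, kernel_size=3, stride=1, padding=1),
    "BatchNorm2d-10": nn.BatchNorm2d(64),
    "Linear-194": nn.Linear(1024, 1024),
    "Linear-196": nn.Linear(1024, 2),
}
counts = {name: count_params(layer) for name, layer in rows.items()}
for name, n in counts.items():
    print(f"{name}: {n:,}")

# Params size in MB, assuming 4 bytes per parameter
params_size_mb = 7_039_122 * 4 / (1024 ** 2)
print(round(params_size_mb, 2))
```

Each convolution contributes kernel_h x kernel_w x in_channels x out_channels weights plus one bias per output channel, and each BatchNorm2d layer contributes a scale and a shift per channel.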

InceptionV1(

(conv1): Sequential(

(0): Conv2d(3, 64, kernel_size=(7, 7), stride=(2, 2), padding=(3, 3))

(1): ReLU(inplace=True)

(2): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)

)

(conv2): Sequential(

(0): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1))

(1): ReLU(inplace=True)

(2): Conv2d(64, 192, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))

(3): ReLU(inplace=True)

(4): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)

)

(conv3): Sequential(

(0): InceptionBlock(

(conv1): Sequential(

(0): Conv2d(192, 64, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

)

(conv2): Sequential(

(0): Conv2d(192, 96, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(96, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))

(4): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv3): Sequential(

(0): Conv2d(192, 16, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(16, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(16, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))

(4): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv4): Sequential(

(0): MaxPool2d(kernel_size=3, stride=1, padding=1, dilation=1, ceil_mode=False)

(1): Conv2d(192, 32, kernel_size=(1, 1), stride=(1, 1))

(2): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(3): ReLU(inplace=True)

)

)

(1): InceptionBlock(

(conv1): Sequential(

(0): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

)

(conv2): Sequential(

(0): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(128, 192, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))

(4): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv3): Sequential(

(0): Conv2d(256, 32, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(32, 96, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))

(4): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv4): Sequential(

(0): MaxPool2d(kernel_size=3, stride=1, padding=1, dilation=1, ceil_mode=False)

(1): Conv2d(256, 64, kernel_size=(1, 1), stride=(1, 1))

(2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(3): ReLU(inplace=True)

)

)

(2): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)

)

(conv4): Sequential(

(0): InceptionBlock(

(conv1): Sequential(

(0): Conv2d(480, 192, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

)

(conv2): Sequential(

(0): Conv2d(480, 96, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(96, 208, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))

(4): BatchNorm2d(208, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv3): Sequential(

(0): Conv2d(480, 16, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(16, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(16, 48, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))

(4): BatchNorm2d(48, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv4): Sequential(

(0): MaxPool2d(kernel_size=3, stride=1, padding=1, dilation=1, ceil_mode=False)

(1): Conv2d(480, 64, kernel_size=(1, 1), stride=(1, 1))

(2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(3): ReLU(inplace=True)

)

)

(1): InceptionBlock(

(conv1): Sequential(

(0): Conv2d(512, 160, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(160, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

)

(conv2): Sequential(

(0): Conv2d(512, 112, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(112, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(112, 224, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))

(4): BatchNorm2d(224, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv3): Sequential(

(0): Conv2d(512, 24, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(24, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(24, 64, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))

(4): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv4): Sequential(

(0): MaxPool2d(kernel_size=3, stride=1, padding=1, dilation=1, ceil_mode=False)

(1): Conv2d(512, 64, kernel_size=(1, 1), stride=(1, 1))

(2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(3): ReLU(inplace=True)

)

)

(2): InceptionBlock(

(conv1): Sequential(

(0): Conv2d(512, 128, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

)

(conv2): Sequential(

(0): Conv2d(512, 128, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(128, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))

(4): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv3): Sequential(

(0): Conv2d(512, 24, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(24, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(24, 64, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))

(4): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv4): Sequential(

(0): MaxPool2d(kernel_size=3, stride=1, padding=1, dilation=1, ceil_mode=False)

(1): Conv2d(512, 64, kernel_size=(1, 1), stride=(1, 1))

(2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(3): ReLU(inplace=True)

)

)

(3): InceptionBlock(

(conv1): Sequential(

(0): Conv2d(512, 112, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(112, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

)

(conv2): Sequential(

(0): Conv2d(512, 144, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(144, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(144, 288, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))

(4): BatchNorm2d(288, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv3): Sequential(

(0): Conv2d(512, 32, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(32, 64, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))

(4): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv4): Sequential(

(0): MaxPool2d(kernel_size=3, stride=1, padding=1, dilation=1, ceil_mode=False)

(1): Conv2d(512, 64, kernel_size=(1, 1), stride=(1, 1))

(2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(3): ReLU(inplace=True)

)

)

(4): InceptionBlock(

(conv1): Sequential(

(0): Conv2d(528, 256, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

)

(conv2): Sequential(

(0): Conv2d(528, 160, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(160, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(160, 320, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))

(4): BatchNorm2d(320, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv3): Sequential(

(0): Conv2d(528, 32, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(32, 128, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))

(4): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv4): Sequential(

(0): MaxPool2d(kernel_size=3, stride=1, padding=1, dilation=1, ceil_mode=False)

(1): Conv2d(528, 128, kernel_size=(1, 1), stride=(1, 1))

(2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(3): ReLU(inplace=True)

)

)

(5): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)

)

(conv5): Sequential(

(0): InceptionBlock(

(conv1): Sequential(

(0): Conv2d(832, 256, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

)

(conv2): Sequential(

(0): Conv2d(832, 160, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(160, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(160, 320, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))

(4): BatchNorm2d(320, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv3): Sequential(

(0): Conv2d(832, 32, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(32, 128, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))

(4): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv4): Sequential(

(0): MaxPool2d(kernel_size=3, stride=1, padding=1, dilation=1, ceil_mode=False)

(1): Conv2d(832, 128, kernel_size=(1, 1), stride=(1, 1))

(2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(3): ReLU(inplace=True)

)

)

(1): InceptionBlock(

(conv1): Sequential(

(0): Conv2d(832, 384, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

)

(conv2): Sequential(

(0): Conv2d(832, 192, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(192, 384, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))

(4): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv3): Sequential(

(0): Conv2d(832, 48, kernel_size=(1, 1), stride=(1, 1))

(1): BatchNorm2d(48, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): Conv2d(48, 128, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))

(4): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

)

(conv4): Sequential(

(0): MaxPool2d(kernel_size=3, stride=1, padding=1, dilation=1, ceil_mode=False)

(1): Conv2d(832, 128, kernel_size=(1, 1), stride=(1, 1))

(2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(3): ReLU(inplace=True)

)

)

(2): AvgPool2d(kernel_size=7, stride=1, padding=0)

(3): Dropout(p=0.4, inplace=False)

)

(fc): Sequential(

(0): Flatten(start_dim=1, end_dim=-1)

(1): Linear(in_features=1024, out_features=1024, bias=True)

(2): ReLU(inplace=True)

(3): Linear(in_features=1024, out_features=2, bias=True)

(4): Softmax(dim=1)

)

)
