🍨 本文为🔗365天深度学习训练营 中的学习记录博客

🍖 原作者:K同学啊|接辅导、项目定制

1 理论基础

Inception v3由谷歌研究员Christian Szegedy等人在2015年的论文《Rethinking the Inception Architecture for Computer Vision》中提出。Inception v3是Inception网络系列的第三个版本,它在ImageNet图像识别竞赛中取得了优异成绩,尤其是在大规模图像识别任务中表现出色。

Inception v3的主要特点如下:

1、更深的网络结构:Inception v3比之前的Inception网络结构更深,包含了48层卷积层。这使得网络可以提取更多层次的特征,从而在图像识别任务上取得更好的效果。

2、使用Factorized Convolutions:Inception v3采用了Factorized Convolutions(分解卷积),将较大的卷积核分解为多个较小卷积核。这种方法可以降低网络的参数数量,减少计算复杂度,同时保持良好的性能。

3、使用Batch Normalization:Inception v3在每个卷积层之后都添加了Batch Normalization(BN),这有助于网络的收敛和泛化能力。BN可以减少Internal Covariate Shift(内部协变量偏移)现象,加快训练速度,同时提高模型的鲁棒性。

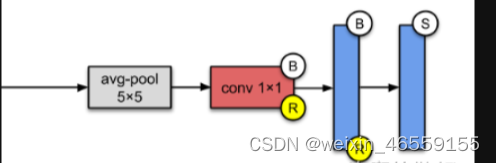

4、辅助分类器:Inception v3引入了辅助分类器,可以在网络训练过程中提供额外的梯度信息,帮助网络更好的学习特征。辅助分类器位于网络的某个中间层,其输出会与主分类器的输出进行加权融合,从而得到最终的预测结果。

5、基于RMSProp的优化器:Inception v3使用了RMSProp优化器进行训练。相比于传统的随机梯度下降(SGD)方法,RMSProp可以自适应地调整学习率,使得训练过程更加稳定,收敛速度更快。

Inception v3在图像分类、物体检测和图像分割等计算机视觉任务中均取得了显著的效果。然而,由于其较大的网络结构和计算复杂度,Inception v3在实际应用中可能需要较高的硬件要求。

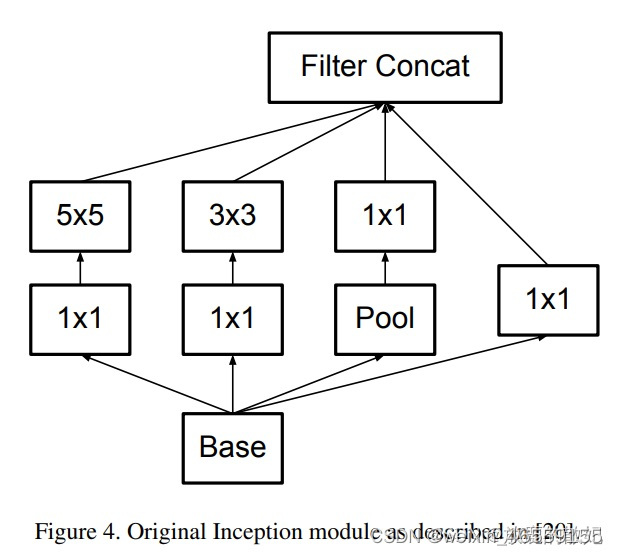

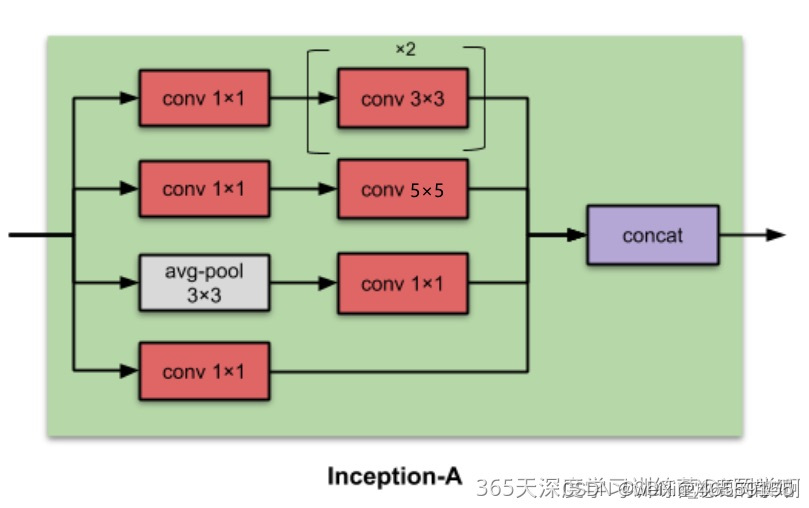

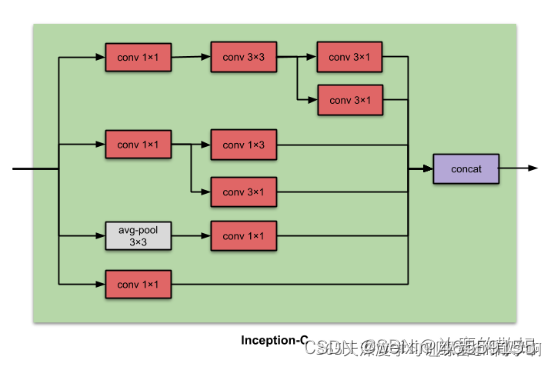

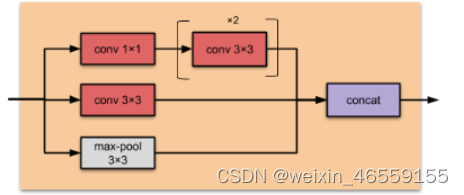

相对于Inception v1的Inception Module结构,Inception v3中做出了如下改动:

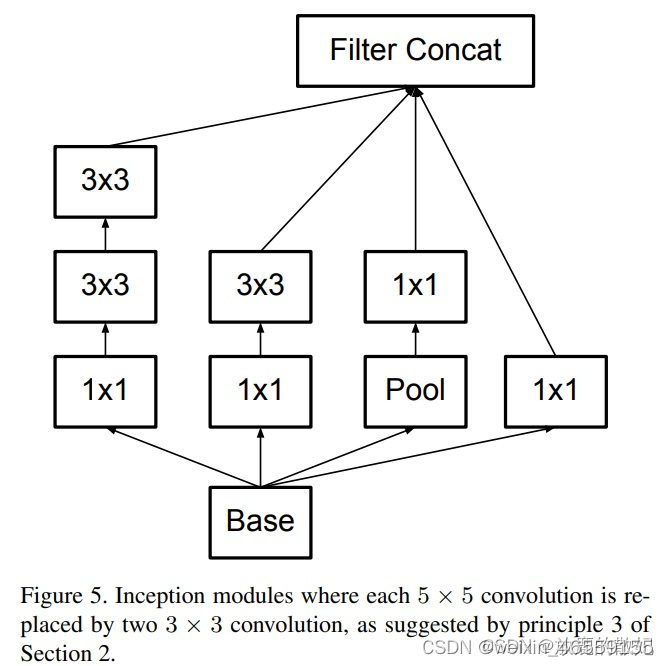

(1)将5x5的卷积分解为两个3x3的卷积运算已提升计算速度。尽管这有点违反直觉,但一个5x5的卷积在计算成本上是一个3x3卷积的2.78倍。所以叠加两个3x3卷积实际上在性能上会有所提升,如下图所示:

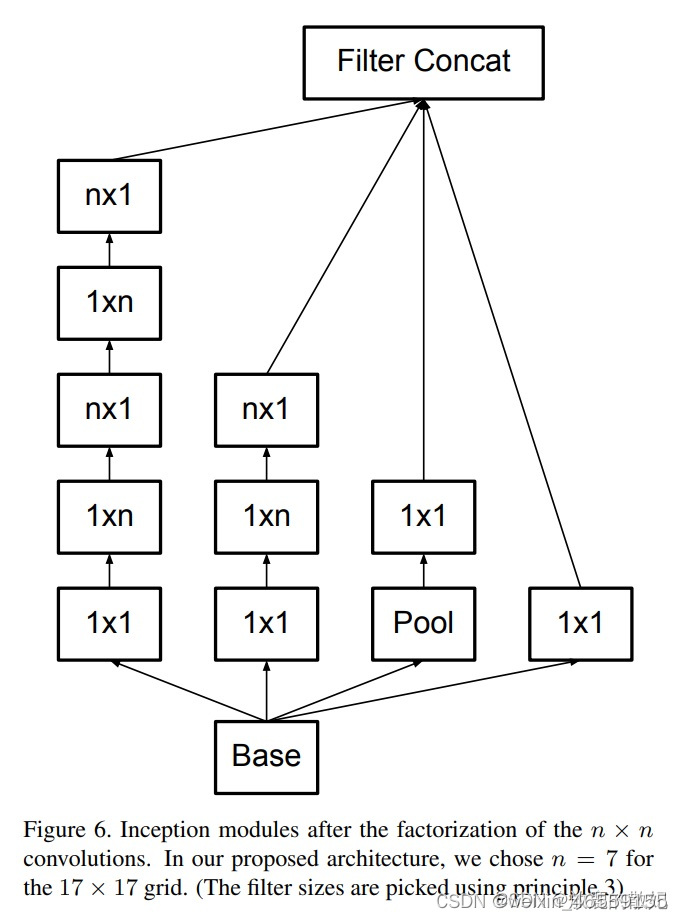

(2)此外,作者将nxn的卷积核尺寸分解为1xn和nx1两个卷积,例如, 一个3x3的卷积等价于首先执行一个1x3的卷积,再执行一个3x1的卷积。他们还发现这种方法在成本上要比单个3x3的卷积降低33%,这一结构如下图所示:

此处如果n=3,则与上一张图像一致。最左侧的5x5卷积可被表示为两个3x3卷积,太慢又可以表示为1x3和3x1卷积。

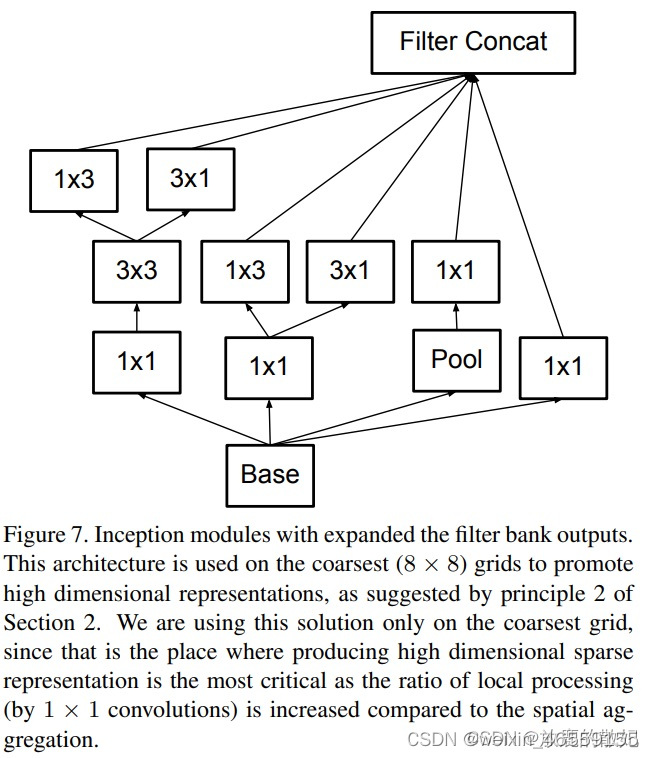

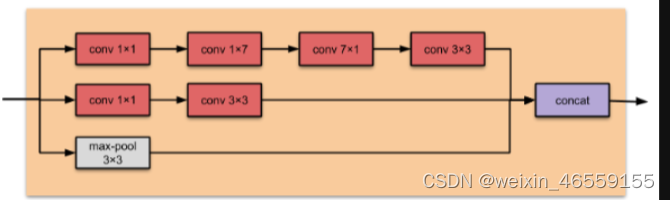

模块中的滤波器组被扩展(即变得更宽而不是更深),以解决表征性瓶颈。如果该模块没有被拓展宽度,而是变得更深,那么维度会过多减少,造成信息损失。如下图所示:

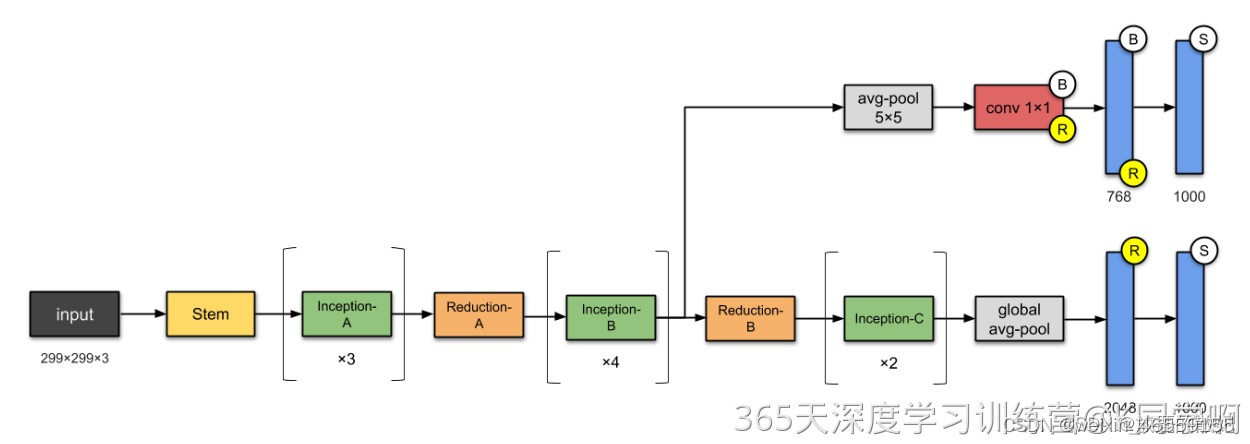

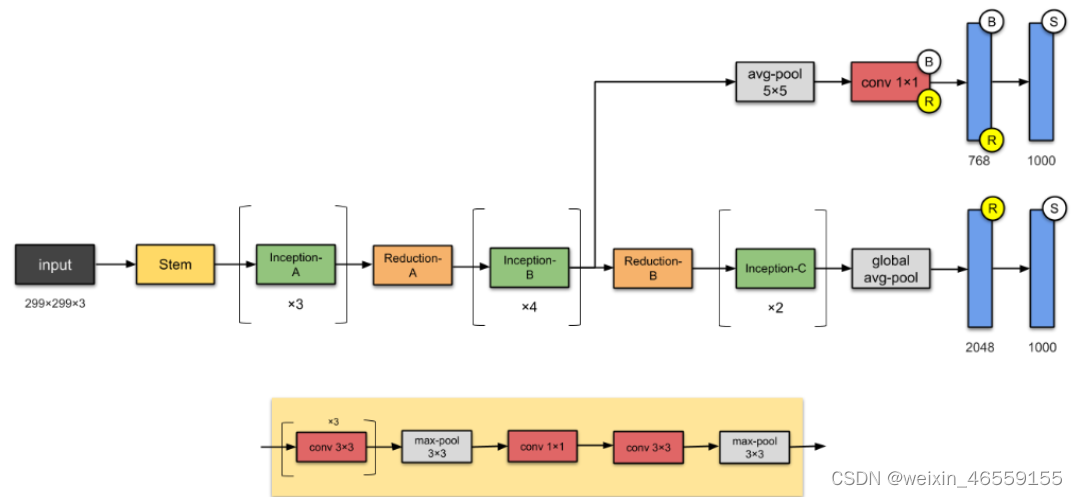

最后实现的inception v3网络是如下所示:

2Inception v3的实现

2.1Inception-A

import torch

import torch.nn as nn

import torch.nn.functional as F

class BasicConv2d(nn.Module):

def __init__(self, in_channels, out_channels, **kwargs):

super(BasicConv2d, self).__init__()

self.conv = nn.Conv2d(in_channels, out_channels, bias=False, **kwargs)

self.bn = nn.BatchNorm2d(out_channels, eps=0.001)

def forward(self, x):

x = self.conv(x)

x = self.bn(x)

return F.relu(x, inplace=True)

class InceptionA(nn.Module):

def __init__(self, in_channels, pool_features):

super(InceptionA, self).__init__()

# 1x1 conv branch

self.branch1x1 = BasicConv2d(in_channels, 64, kernel_size=1) #1

self.branch5x5_1 = BasicConv2d(in_channels, 48, kernel_size=1)

self.branch5x5_2 = BasicConv2d(48, 64, kernel_size=5, padding=2)

self.branch3x3dbl_1 = BasicConv2d(in_channels, 64, kernel_size=1)

self.branch3x3dbl_2 = BasicConv2d(64, 96, kernel_size=3, padding=1)

self.branch3x3dbl_3 = BasicConv2d(96, 96, kernel_size=3, padding=1)

self.branch_pool = BasicConv2d(in_channels, pool_features, kernel_size=1)

def forward(self, x):

branch1x1 = self.branch1x1(x)

branch5x5 = self.branch5x5_1(x)

branch5x5 = self.branch5x5_2(branch5x5)

branch3x3dbl = self.branch3x3dbl_1(x)

branch3x3dbl = self.branch3x3dbl_2(branch3x3dbl)

branch3x3dbl = self.branch3x3dbl_3(branch3x3dbl)

branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)

branch_pool = self.branch_pool(branch_pool)

outputs = [branch1x1, branch5x5, branch3x3dbl, branch_pool]

return torch.cat(outputs, 1)2.2 Inception-B

class InceptionB(nn.Module):

def __init__(self, in_channels, channels_7x7):

super(InceptionB, self).__init__()

# 1x1 conv branch

self.branch1x1 = BasicConv2d(in_channels, 192, kernel_size=1)

c7 = channels_7x7

self.branch7x7_1 = BasicConv2d(in_channels, c7, kernel_size=1)

self.branch7x7_2 = BasicConv2d(c7, c7, kernel_size=(1,7), padding=(0,3))

self.branch7x7_3 = BasicConv2d(c7, 192, kernel_size=(7,1), padding=(3,0))

self.branch7x7dbl_1 = BasicConv2d(in_channels, c7, kernel_size=1)

self.branch7x7dbl_2 = BasicConv2d(c7, c7, kernel_size=(7,1), padding=(3,0))

self.branch7x7dbl_3 = BasicConv2d(c7, c7, kernel_size=(1,7), padding=(0,3))

self.branch7x7dbl_4 = BasicConv2d(c7, c7, kernel_size=(7,1), padding=(3,0))

self.branch7x7dbl_5 = BasicConv2d(c7, 192, kernel_size=(1,7), padding=(0,3))

self.branch_pool = BasicConv2d(in_channels, 192, kernel_size=1)

def forward(self, x):

branch1x1 = self.branch1x1(x)

branch7x7 = self.branch7x7_1(x)

branch7x7 = self.branch7x7_2(branch7x7)

branch7x7 = self.branch7x7_3(branch7x7)

branch7x7dbl = self.branch7x7dbl_1(x)

branch7x7dbl = self.branch7x7dbl_2(branch7x7dbl)

branch7x7dbl = self.branch7x7dbl_3(branch7x7dbl)

branch7x7dbl = self.branch7x7dbl_4(branch7x7dbl)

branch7x7dbl = self.branch7x7dbl_5(branch7x7dbl)

branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)

branch_pool = self.branch_pool(branch_pool)

outputs = [branch1x1, branch7x7, branch7x7dbl, branch_pool]

return torch.cat(outputs, 1)2.3 Inception-C

class InceptionC(nn.Module):

def __init__(self, in_channels):

super(InceptionC, self).__init__()

self.branch1x1 = BasicConv2d(in_channels, 320, kernel_size=1) #1

self.branch3x3_1 = BasicConv2d(in_channels, 384, kernel_size=1)

self.branch3x3_2a = BasicConv2d(384, 384, kernel_size=(1,3), padding=(0,1))

self.branch3x3_2b = BasicConv2d(384, 384, kernel_size=(3,1), padding=(1,0))

self.branch3x3dbl_1 = BasicConv2d(in_channels, 448, kernel_size=1)

self.branch3x3dbl_2 = BasicConv2d(448, 384, kernel_size=3, padding=1)

self.branch3x3dbl_3a = BasicConv2d(384, 384, kernel_size=(1,3), padding=(0,1))

self.branch3x3dbl_3b = BasicConv2d(384, 384, kernel_size=(3,1), padding=(1,0))

self.branch_pool = BasicConv2d(in_channels, 192, kernel_size=1)

def forward(self, x):

branch1x1 = self.branch1x1(x)

branch3x3 = self.branch3x3_1(x)

branch3x3 = [self.branch3x3_2a(branch3x3),

self.branch3x3_2b(branch3x3),

]

branch3x3 = torch.cat(branch3x3, 1)

branch3x3dbl = self.branch3x3dbl_1(x)

branch3x3dbl = self.branch3x3dbl_2(branch3x3dbl)

branch3x3dbl = [self.branch3x3dbl_3a(branch3x3dbl),

self.branch3x3dbl_3b(branch3x3dbl),

]

branch3x3dbl = torch.cat(branch3x3dbl, 1)

branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)

branch_pool = self.branch_pool(branch_pool)

outputs = [branch1x1, branch3x3, branch3x3dbl, branch_pool]

return torch.cat(outputs, 1)2.4 Reduction-A

class ReductionA(nn.Module):

def __init__(self, in_channels):

super(ReductionA, self).__init__()

self.branch3x3 = BasicConv2d(in_channels, 384, kernel_size=3, stride=2)

self.branch3x3dbl_1 = BasicConv2d(in_channels, 64, kernel_size=1)

self.branch3x3dbl_2 = BasicConv2d(64, 96, kernel_size=3, padding=1)

self.branch3x3dbl_3 = BasicConv2d(96, 96, kernel_size=3, stride=2)

def forward(self, x):

branch3x3 = self.branch3x3(x)

branch3x3dbl = self.branch3x3dbl_1(x)

branch3x3dbl = self.branch3x3dbl_2(branch3x3dbl)

branch3x3dbl = self.branch3x3dbl_3(branch3x3dbl)

branch_pool = F.max_pool2d(x, kernel_size=3, stride=2)

outputs = [branch3x3, branch3x3dbl, branch_pool]

return torch.cat(outputs, 1)2.5 Reduction-B

class ReductionB(nn.Module):

def __init__(self, in_channels):

super(ReductionB, self).__init__()

self.branch3x3_1 = BasicConv2d(in_channels, 192, kernel_size=1)

self.branch3x3_2 = BasicConv2d(192, 320, kernel_size=3, stride=2)

self.branch7x7x3_1 = BasicConv2d(in_channels, 192, kernel_size=1)

self.branch7x7x3_2 = BasicConv2d(192, 192, kernel_size=(1,7), padding=(0,3))

self.branch7x7x3_3 = BasicConv2d(192, 192, kernel_size=(7,1), padding=(3,0))

self.branch7x7x3_4 = BasicConv2d(192, 192, kernel_size=3, stride=2)

def forward(self, x):

branch3x3 = self.branch3x3_1(x)

branch3x3 = self.branch3x3_2(branch3x3)

branch7x7x3 = self.branch7x7x3_1(x)

branch7x7x3 = self.branch7x7x3_2(branch7x7x3)

branch7x7x3 = self.branch7x7x3_3(branch7x7x3)

branch7x7x3 = self.branch7x7x3_4(branch7x7x3)

branch_pool = F.max_pool2d(x, kernel_size=3, stride=2)

outputs = [branch3x3, branch7x7x3, branch_pool]

return torch.cat(outputs, 1)2.6 辅助分支

class InceptionAux(nn.Module):

def __init__(self, in_channels, num_classes):

super(InceptionAux, self).__init__()

self.conv0 = BasicConv2d(in_channels, 128, kernel_size=1)

self.conv1 = BasicConv2d(128, 768, kernel_size=5)

self.conv1.stddev = 0.01

self.fc = nn.Linear(768, num_classes)

self.fc.stddev = 0.001

def forward(self, x):

# 17x17x768

x = F.avg_pool2d(x, kernel_size=5, stride=3)

# 5x5x768

x = self.conv0(x)

# 5x5x128

x = self.conv1(x)

# 1x1x128

x = x.view(x.size(0), -1)

# 768

x = self.fc(x)

# num_classes

return x2.7 模型搭建

class InceptionV3(nn.Module):

def __init__(self, num_classes=1000, aux_logits=False, transform_input=False):

super(InceptionV3, self).__init__()

self.aux_logits = aux_logits

self.transform_input = transform_input

self.conv2d_1a_3x3 = BasicConv2d(3, 32, kernel_size=3, stride=2)

self.conv2d_2a_3x3 = BasicConv2d(32, 32, kernel_size=3)

self.conv2d_2b_3x3 = BasicConv2d(32, 64, kernel_size=3, padding=1)

self.conv2d_3b_1x1 = BasicConv2d(64, 80, kernel_size=1)

self.conv2d_4a_3x3 = BasicConv2d(80, 192, kernel_size=3)

self.Mixed_5b = InceptionA(192, pool_features=32)

self.Mixed_5c = InceptionA(256, pool_features=64)

self.Mixed_5d = InceptionA(288, pool_features=64)

self.Mixed_6a = ReductionA(288)

self.Mixed_6b = InceptionB(768, channels_7x7=128)

self.Mixed_6c = InceptionB(768, channels_7x7=160)

self.Mixed_6d = InceptionB(768, channels_7x7=160)

self.Mixed_6e = InceptionB(768, channels_7x7=192)

if aux_logits:

self.AuxLogits = InceptionAux(768, num_classes)

self.Mixed_7a = ReductionB(768)

self.Mixed_7b = InceptionC(1280)

self.Mixed_7c = InceptionC(2048)

self.fc = nn.Linear(2048, num_classes)

def forward(self, x):

if self.transform_input:

x = x.clone()

x[:, 0] = x[:, 0] * (0.229 / 0.5) + (0.485 - 0.5) / 0.5

x[:, 1] = x[:, 1] * (0.224 / 0.5) + (0.456 - 0.5) / 0.5

x[:, 2] = x[:, 2] * (0.225 / 0.5) + (0.406 - 0.5) / 0.5

# 299*299*3

x = self.conv2d_1a_3x3(x)

# 149*149*32

x = self.conv2d_2a_3x3(x)

# 147*147*32

x = self.conv2d_2b_3x3(x)

# 147*147*64

x = F.max_pool2d(x, kernel_size=3, stride=2)

# 73*73*64

x = self.conv2d_3b_1x1(x)

# 73*73*80

x = self.conv2d_4a_3x3(x)

# 71*71*192

x = F.max_pool2d(x, kernel_size=3, stride=2)

# 35*35*192

x = self.Mixed_5b(x)

# 35*35*256

x = self.Mixed_5c(x)

# 35*35*288

x = self.Mixed_5d(x)

# 35*35*288

x = self.Mixed_6a(x)

# 17*17*768

x = self.Mixed_6b(x)

# 17*17*768

x = self.Mixed_6c(x)

# 17*17*768

x = self.Mixed_6d(x)

# 17*17*768

x = self.Mixed_6e(x)

# 17*17*768

if self.training and self.aux_logits:

aux = self.AuxLogits(x)

# 17*17*768

x = self.Mixed_7a(x)

# 8*8*1280

x = self.Mixed_7b(x)

# 8*8*2048

x = self.Mixed_7c(x)

# 8*8*2048

x = F.avg_pool2d(x, kernel_size=8)

# 1*1*2048

x = F.dropout(x, training=self.training)

# 1*1*2048

x = x.view(x.size(0), -1)

# 2048

x = self.fc(x)

# num_classes

if self.training and self.aux_logits:

return x, aux

return x2.8 查看模型详情

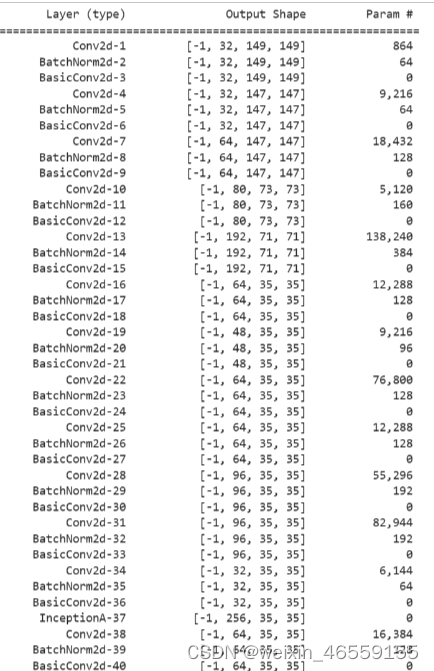

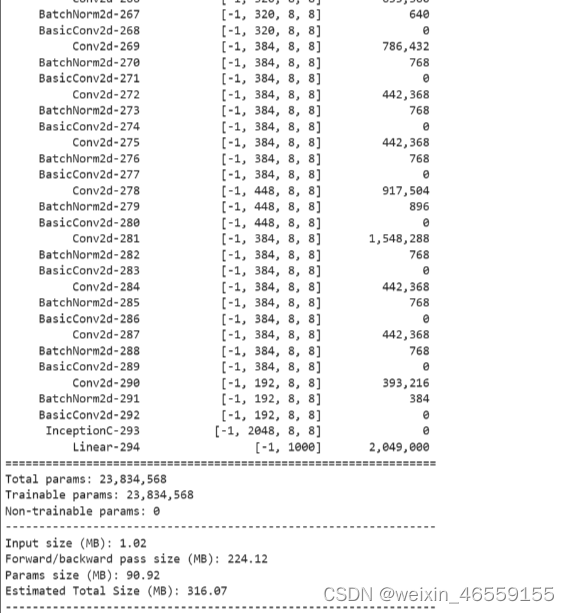

# 统计模型参数量以及其他指标

import torchsummary

# 调用并将模型转移到GPU中

model = InceptionV3().to(device)

# 显示网络结构

torchsummary.summary(model, (3, 299, 299))

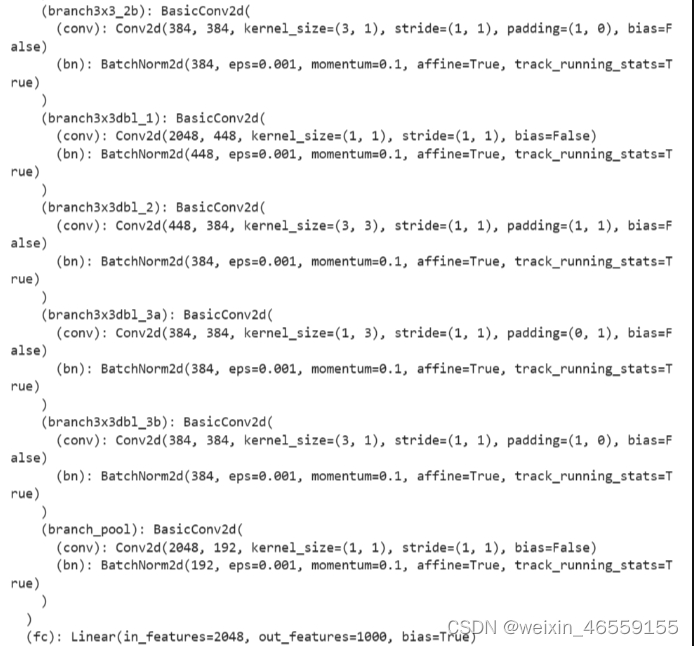

print(model)输出结果如下(由于内容较多,只展示前后部分内容):

641

641

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?