1. torch.nn.Module

Every neural network you build should subclass nn.Module. Below is the example from the official documentation:

import torch.nn as nn
import torch.nn.functional as F


class Model(nn.Module):
    def __init__(self):
        super().__init__()
        # Two 5x5 convolutions: 1 -> 20 channels, then 20 -> 20 channels
        self.conv1 = nn.Conv2d(1, 20, 5)
        self.conv2 = nn.Conv2d(20, 20, 5)

    def forward(self, x):
        x = F.relu(self.conv1(x))
        return F.relu(self.conv2(x))

Next, build a network by hand that simply multiplies its input by 2, overriding the __init__ and forward methods:

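import torch
from torch import nn


class Fnn(nn.Module):
    def __init__(self):
        super().__init__()

    # nn.Module implements __call__, so calling the instance with
    # arguments, as in fnn(a), runs forward() on them.
    def forward(self, x):
        y = x * 2
        return y


fnn = Fnn()
a = torch.tensor(1.0)
b = fnn(a)
print(b)  # tensor(2.)

2. Analyzing the structure of a neural network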

✅ Convolutional layer:

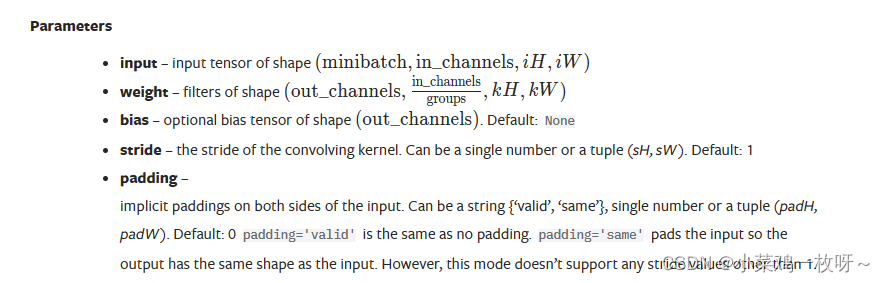

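Purpose: extract image features. Below are the parameters of the convolution function torch.nn.functional.conv2d, as listed in the official PyTorch documentation:

input: the input image, expected as a 4-D tensor of shape (minibatch, in_channels, height, width)

weight: the weights, i.e. the convolution kernel, also a 4-D tensor, of shape (out_channels, in_channels / groups, kernel height, kernel width)

bias: the bias, optional (default None)

stride: the step size, i.e. how far the kernel moves at each step (default 1)

padding: zero padding added around the input (default 0, i.e. no padding)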
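As a rough illustration of how stride and padding change the output size, the sketch below runs conv2d on an all-ones tensor. The 5×5 input and 3×3 kernel are made-up values chosen only for this example.

```python
import torch
import torch.nn.functional as F

# Illustrative shapes only: one 1-channel 5x5 image and one 3x3 kernel
x = torch.ones(1, 1, 5, 5)
w = torch.ones(1, 1, 3, 3)

out_default = F.conv2d(x, w)             # stride=1, padding=0
out_strided = F.conv2d(x, w, stride=2)   # kernel moves 2 pixels per step
out_padded = F.conv2d(x, w, padding=1)   # one ring of zeros around the input

print(out_default.shape)  # torch.Size([1, 1, 3, 3])
print(out_strided.shape)  # torch.Size([1, 1, 2, 2])
print(out_padded.shape)   # torch.Size([1, 1, 5, 5])
```

With padding=1 and a 3×3 kernel the output keeps the input's height and width, which is why this combination is so common.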

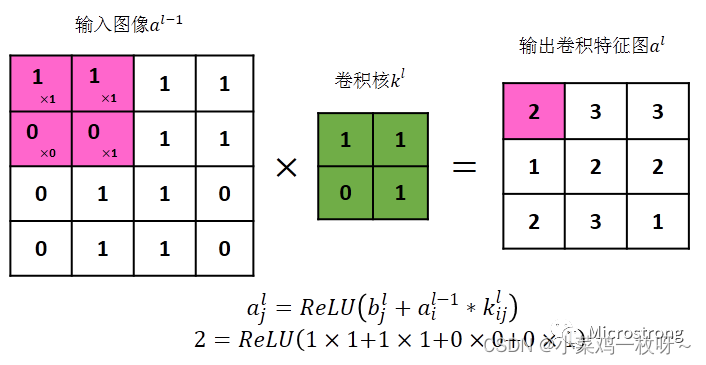

Taking the figure above as the example, the code below carries out the convolution:

import torch

import torch.nn.functional

# 要求输入的类型为tensor

input = torch.Tensor([[1, 1, 1, 1],

[0, 0, 1, 1],

[0, 1, 1, 0],

[0, 1, 1, 0]])

# 定义卷积核

kernel = torch.Tensor([[1, 1],

[0, 1]])

# 要求输入为4个维度

input = torch.reshape(input, (1, 1, 4, 4))

kernel = torch.reshape(kernel, (1, 1, 2, 2))

output = torch.nn.functional.conv2d(input, kernel, stride=1)

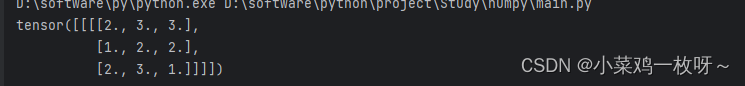

print(output)
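To see what conv2d computes on this input, here is a minimal sketch that slides the 2×2 kernel over the 4×4 image by hand and compares the result with the library call. The names image, manual and reference are illustrative and do not come from the original code.

```python
import torch
import torch.nn.functional as F

image = torch.tensor([[1., 1., 1., 1.],
                      [0., 0., 1., 1.],
                      [0., 1., 1., 0.],
                      [0., 1., 1., 0.]])
kernel = torch.tensor([[1., 1.],
                       [0., 1.]])

# Slide the 2x2 kernel over the image with stride 1; at each position,
# multiply element-wise and sum the products
out_h = image.shape[0] - kernel.shape[0] + 1
out_w = image.shape[1] - kernel.shape[1] + 1
manual = torch.zeros(out_h, out_w)
for i in range(out_h):
    for j in range(out_w):
        window = image[i:i + 2, j:j + 2]
        manual[i, j] = (window * kernel).sum()

reference = F.conv2d(image.reshape(1, 1, 4, 4), kernel.reshape(1, 1, 2, 2))
print(manual)                                # rows: [2, 3, 3], [1, 2, 2], [2, 3, 1]
print(torch.equal(manual, reference[0, 0]))  # True
```

Note that the kernel is applied without flipping, so what PyTorch calls convolution is, strictly speaking, cross-correlation.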

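Next, build a network with a single convolutional layer. This time the convolution comes from torch.nn (the Conv2d module) rather than torch.nn.functional: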

from torch import nn

import torch

import torchvision

from torch.nn import Conv2d

from torch.utils.data import DataLoader

from torch.utils.tensorboard import SummaryWriter

# 创建测试数据集

val_dataset = torchvision.datasets.CIFAR10(root='./dataset', train=False, transform=torchvision.transforms.ToTensor(), download=True)

# 加载数据

loader = DataLoader(dataset=val_dataset, batch_size=64, shuffle=True, num_workers=0, drop_last=False)

# 编写神经网络

class test_nn(nn.Module):

def __init__(self):

super().__init__()

# 彩色图像通道数为3

self.conv1 = Conv2d(3, 6, 3, 1, 0)

def forward(self,x):

x = self.conv1(x)

return x

Mynn = test_nn()

writer = SummaryWriter('logs')

steps = 0

for data in loader:

imgs, targets = data # 获取输入图像

writer.add_images(tag='first_nn', img_tensor=imgs, global_step=steps)

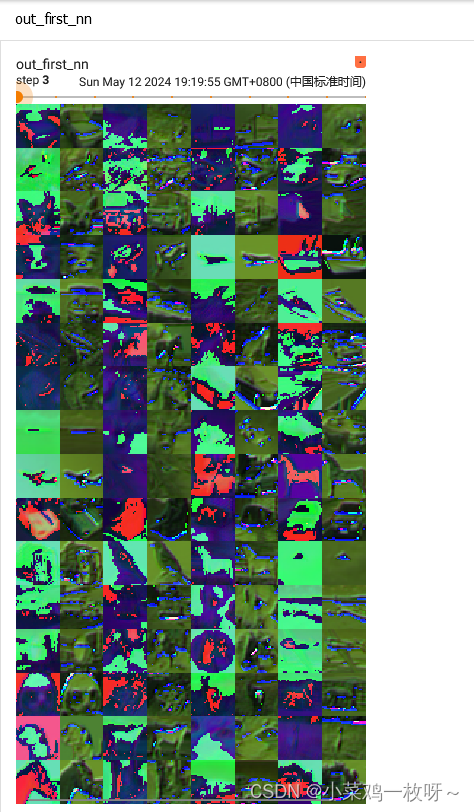

out_imgs = Mynn(imgs) # 获取经过神经网络的图像

# -1代表占位符,具体大小让PyTorch自动计算维度大小,从6个通过转化为3通道

out_imgs = torch.reshape(out_imgs, (-1, 3, 30, 30))

writer.add_images(tag='out_first_nn', img_tensor=out_imgs, global_step=steps)

steps += 1

writer.close()输入的batch-size是64,3个通道,而输出是6个通过,所以是64×2
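The shape bookkeeping can be checked without downloading the dataset. The sketch below uses a random tensor as a stand-in for one CIFAR10 batch (64 images, 3 channels, 32×32 pixels); the variable names are illustrative.

```python
import torch
from torch.nn import Conv2d

# Random stand-in for one CIFAR10 batch: 64 images, 3 channels, 32x32 pixels
imgs = torch.rand(64, 3, 32, 32)
conv1 = Conv2d(3, 6, 3, 1, 0)

# A 3x3 kernel with stride 1 and no padding shrinks 32x32 to 30x30
out_imgs = conv1(imgs)
print(out_imgs.shape)   # torch.Size([64, 6, 30, 30])

# Folding 6 channels into 3 doubles the batch dimension
reshaped = torch.reshape(out_imgs, (-1, 3, 30, 30))
print(reshaped.shape)   # torch.Size([128, 3, 30, 30])
```

The reshape only regroups values so that TensorBoard can draw them. Each displayed "image" is three of the six feature maps, not a meaningful RGB picture.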

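✅ Pooling layer

Purpose: downsample the input by shrinking its height and width, which reduces the amount of data and speeds up training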

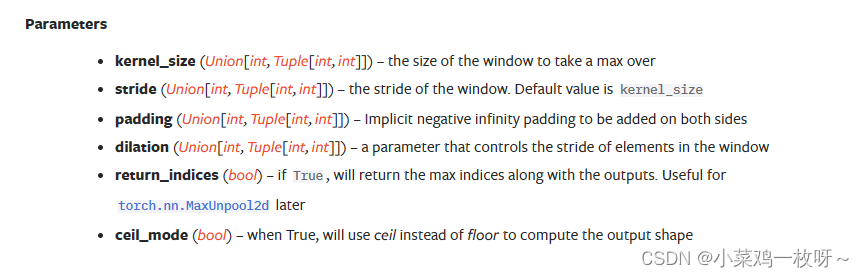

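First, the parameters given in the official PyTorch documentation (using 2-D pooling as the example):

kernel_size: the size of the pooling window. The common pooling types are max pooling and average pooling; the example here uses max pooling (MaxPool2d)

dilation: the spacing between elements inside the window; the default of 1 means no gaps

ceil_mode: whether the output size is rounded up or down. Rounding up (True) keeps the result of the partial window at the edge; the default (False) rounds down

The remaining parameters were covered in the convolutional layer section, with one difference: for pooling, stride defaults to kernel_size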
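A small sketch of what ceil_mode changes, using a made-up 5×5 input and kernel_size=3. Because stride defaults to kernel_size, the windows do not overlap, and the last row and column of windows are only partially covered by the input.

```python
import torch
from torch.nn import MaxPool2d

# Made-up 5x5 input, reshaped to (batch, channels, height, width)
x = torch.tensor([[1., 2., 0., 3., 1.],
                  [0., 1., 2., 3., 1.],
                  [1., 2., 1., 0., 0.],
                  [5., 2., 3., 1., 1.],
                  [2., 1., 0., 1., 1.]]).reshape(1, 1, 5, 5)

pool_ceil = MaxPool2d(kernel_size=3, ceil_mode=True)    # keep partial edge windows
pool_floor = MaxPool2d(kernel_size=3, ceil_mode=False)  # drop partial edge windows

out_ceil = pool_ceil(x)
out_floor = pool_floor(x)
print(out_ceil)   # values [[2, 3], [5, 1]], shape (1, 1, 2, 2)
print(out_floor)  # value [[2]], shape (1, 1, 1, 1)
```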

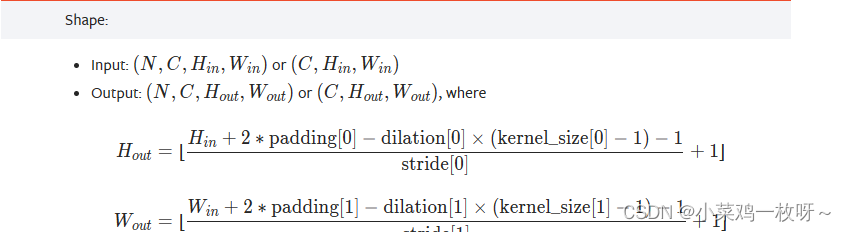

Output image size:

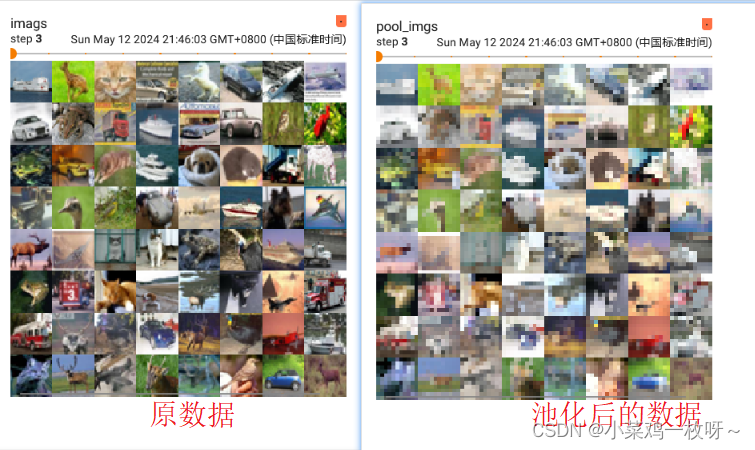
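The output-size rule documented for MaxPool2d can be written as a few lines of arithmetic. The helper pool_output_size below is hypothetical (it is not part of PyTorch) and handles a single spatial dimension. It is a sketch for checking sizes by hand and is compared against the real layer on a 32×32 input.

```python
import torch
from torch.nn import MaxPool2d


def pool_output_size(size, kernel_size, stride=None, padding=0,
                     dilation=1, ceil_mode=False):
    """Hypothetical helper: output length along one spatial dimension."""
    stride = kernel_size if stride is None else stride
    numerator = size + 2 * padding - dilation * (kernel_size - 1) - 1
    if ceil_mode:
        out = -(-numerator // stride) + 1   # integer ceiling division
        # The last window must still start inside the input or its left padding
        if (out - 1) * stride >= size + padding:
            out -= 1
    else:
        out = numerator // stride + 1       # integer floor division
    return out


x = torch.rand(1, 3, 32, 32)
for ceil_mode in (False, True):
    expected = pool_output_size(32, kernel_size=3, ceil_mode=ceil_mode)
    actual = MaxPool2d(kernel_size=3, ceil_mode=ceil_mode)(x).shape[-1]
    print(ceil_mode, expected, actual)  # False 10 10, then True 11 11
```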

Pooling code:

import torch
import torchvision
from torch import nn
from torch.nn import MaxPool2d
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

val_dataset = torchvision.datasets.CIFAR10(root='./dataset', train=False, transform=torchvision.transforms.ToTensor(), download=True)
loader = DataLoader(dataset=val_dataset, batch_size=64, shuffle=True, num_workers=0, drop_last=False)


# Custom pooling network
class Poolnn(nn.Module):
    def __init__(self):
        super().__init__()
        # 3x3 max pooling; ceil_mode=True keeps the partial windows at the edges
        self.pool = MaxPool2d(kernel_size=3, ceil_mode=True)

    def forward(self, x):
        x = self.pool(x)
        return x


Mynn = Poolnn()
writer = SummaryWriter('logs')
step = 0
for data in loader:
    imgs, targets = data
    writer.add_images(tag='imags', img_tensor=imgs, global_step=step)
    pool_imgs = Mynn(imgs)
    writer.add_images(tag='pool_imgs', img_tensor=pool_imgs, global_step=step)
    step += 1
writer.close()

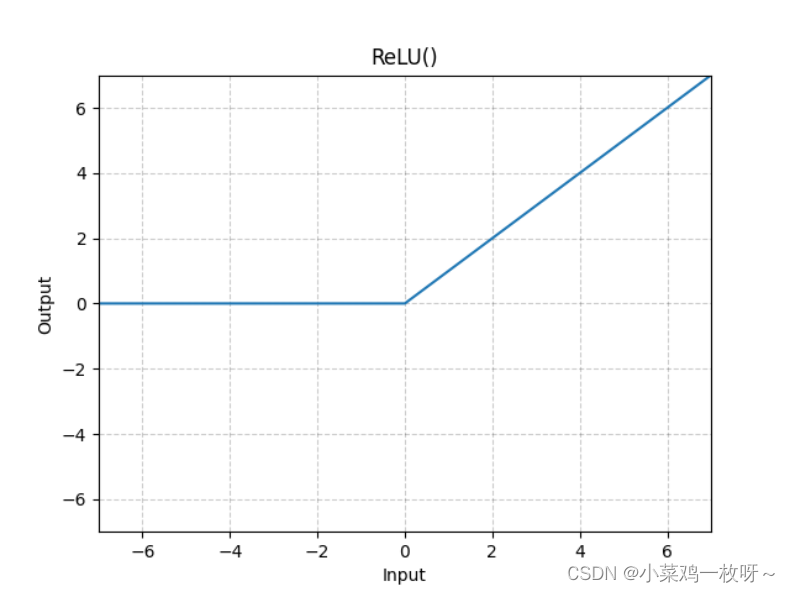

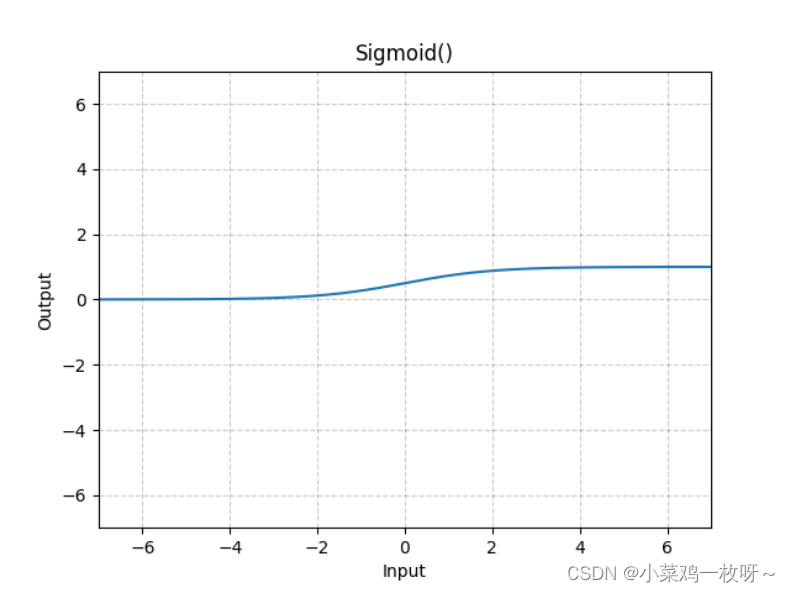

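✅ Nonlinear (activation) functions

Purpose: introduce nonlinearity into the network, improving its ability to fit data and to classify

Common activation functions include ReLU and Sigmoid

ReLU, whose input-output relation is: ReLU(x) = max(0, x)

Sigmoid, whose input-output relation is: Sigmoid(x) = 1 / (1 + exp(-x))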
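A quick sketch of both activation functions on a made-up 2×2 tensor: ReLU zeroes the negative entries, while Sigmoid squashes every entry into the open interval (0, 1).

```python
import torch
from torch import nn

# Made-up values with both signs
x = torch.tensor([[1.0, -0.5],
                  [-1.0, 3.0]])

relu_out = nn.ReLU()(x)
sigmoid_out = nn.Sigmoid()(x)

print(relu_out)     # negatives become 0: [[1., 0.], [0., 3.]]
print(sigmoid_out)  # every value lies strictly between 0 and 1
```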

Taking Sigmoid as the example, verify the effect of an activation function:

import torch
import torchvision
from torch import nn
from torch.nn import Sigmoid
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

val_dataset = torchvision.datasets.CIFAR10(root='./dataset', train=False, transform=torchvision.transforms.ToTensor(), download=True)
loader = DataLoader(dataset=val_dataset, batch_size=64, shuffle=True, num_workers=0, drop_last=False)


# Custom network consisting of a single nonlinear function
class nonnn(nn.Module):
    def __init__(self):
        super().__init__()
        self.nonliner = Sigmoid()

    def forward(self, x):
        x = self.nonliner(x)
        return x


Mynn = nonnn()
writer = SummaryWriter('logs')
step = 0
for data in loader:
    imgs, targets = data
    writer.add_images(tag='imags', img_tensor=imgs, global_step=step)
    non_imgs = Mynn(imgs)
    writer.add_images(tag='nonliner_imgs', img_tensor=non_imgs, global_step=step)
    step += 1
writer.close()
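One way to read the TensorBoard result: ToTensor() scales pixel values to [0, 1], and Sigmoid maps that interval to roughly [0.5, 0.731], so the output images can be expected to look brighter and lower in contrast than the inputs. A small check on made-up pixel values, not on the real dataset:

```python
import torch

# Stand-in for ToTensor() pixel values: evenly spaced points in [0, 1]
imgs = torch.linspace(0.0, 1.0, steps=11)
out = torch.sigmoid(imgs)

# Sigmoid(0) = 0.5 and Sigmoid(1) is about 0.731
print(out.min().item(), out.max().item())
```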
