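🚩🚩🚩 Transformer in Practice: Series Table of Contents

Feel free to leave any questions in the comments below.

All code in this article was run in PyCharm.

The source code accompanying this article has been uploaded.

Click here to download the source code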

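SwinTransformer: Algorithm Principles

SwinTransformer Source Code Walkthrough 1 (Project Configuration / SwinTransformer Class)

SwinTransformer Source Code Walkthrough 2 (PatchEmbed Class / BasicLayer Class)

SwinTransformer Source Code Walkthrough 3 (SwinTransformerBlock Class)

SwinTransformer Source Code Walkthrough 4 (WindowAttention Class)

SwinTransformer Source Code Walkthrough 5 (Mlp Class / PatchMerging Class)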

7. The Mlp Class

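```python
import torch.nn as nn


class Mlp(nn.Module):
    """Feed-forward part of a Swin Transformer block: Linear -> activation -> Dropout -> Linear -> Dropout."""

    def __init__(self, in_features, hidden_features=None, out_features=None, act_layer=nn.GELU, drop=0.):
        super().__init__()
        # Output and hidden widths fall back to the input width when not given
        out_features = out_features or in_features
        hidden_features = hidden_features or in_features
        self.fc1 = nn.Linear(in_features, hidden_features)   # expand: C -> hidden
        self.act = act_layer()
        self.fc2 = nn.Linear(hidden_features, out_features)  # project back: hidden -> C_out
        self.drop = nn.Dropout(drop)  # a single Dropout module, reused after each Linear

    def forward(self, x):
        x = self.fc1(x)
        x = self.act(x)
        x = self.drop(x)
        x = self.fc2(x)
        x = self.drop(x)
        return x
```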

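fc1 and fc2 are both fully connected layers. drop is a single Dropout module that is applied after each of the two fully connected layers. act is the activation function, GELU by default.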
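By default, `nn.GELU` computes the exact form $\mathrm{GELU}(x) = x\,\Phi(x)$, where $\Phi$ is the standard normal CDF. The plain-Python sketch below writes that formula out for intuition. The function name `gelu` is our own and is not part of the project code.

```python
import math


def gelu(x: float) -> float:
    """Exact GELU: x * Phi(x), with the normal CDF Phi expressed through erf."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


for v in (-2.0, -1.0, 0.0, 1.0, 2.0):
    print(f"gelu({v:+.1f}) = {gelu(v):+.4f}")
```

Unlike ReLU, negative inputs are damped toward zero instead of being cut off.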

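Tensor shapes during `forward` (first stage, batch size 4):

- 1st: torch.Size([4, 3136, 96]), the original input (56 × 56 = 3136 tokens, 96 channels)

- 2nd: torch.Size([4, 3136, 384]), after fc1; the feature dimension is expanded 4× (mlp_ratio = 4.0)

- 3rd: torch.Size([4, 3136, 384]), after the activation; shape unchanged

- 4th: torch.Size([4, 3136, 384]), after Dropout; shape unchanged

- 5th: torch.Size([4, 3136, 96]), after fc2; the feature dimension is reduced back to the original

- 6th: torch.Size([4, 3136, 96]), after Dropout; shape unchanged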
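The six shapes listed above can be reproduced with a standalone sketch. It re-declares `Mlp` with the same layers so that it runs on its own. It feeds in a random tensor of the first-stage shape `[4, 3136, 96]` and sets `hidden_features = 4 * 96` to match `mlp_ratio=4.0`. The sub-layers are then applied one at a time in `forward`'s order. The random input is only a stand-in for real features.

```python
import torch
import torch.nn as nn


class Mlp(nn.Module):
    # Same layers as the Mlp class above, re-declared so this snippet is self-contained
    def __init__(self, in_features, hidden_features=None, out_features=None,
                 act_layer=nn.GELU, drop=0.):
        super().__init__()
        out_features = out_features or in_features
        hidden_features = hidden_features or in_features
        self.fc1 = nn.Linear(in_features, hidden_features)
        self.act = act_layer()
        self.fc2 = nn.Linear(hidden_features, out_features)
        self.drop = nn.Dropout(drop)

    def forward(self, x):
        return self.drop(self.fc2(self.drop(self.act(self.fc1(x)))))


torch.manual_seed(0)
mlp = Mlp(in_features=96, hidden_features=4 * 96)  # mlp_ratio = 4.0
mlp.eval()                                         # dropout off (drop is 0. here anyway)
x = torch.randn(4, 3136, 96)                       # B=4, L=56*56, C=96 (random stand-in)

# Apply the sub-layers one by one, in the same order as forward(), recording each shape
shapes = [x.shape]
h = x
for layer in (mlp.fc1, mlp.act, mlp.drop, mlp.fc2, mlp.drop):
    h = layer(h)
    shapes.append(h.shape)

for i, s in enumerate(shapes, start=1):
    print(i, s)

# The step-by-step result matches a single call to forward()
assert torch.allclose(h, mlp(x))
```

The hidden width is the only place the shape changes. Everything else in the block preserves `[B, L, C]`, which is what allows the residual connection around the MLP.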

8. The PatchMerging Class

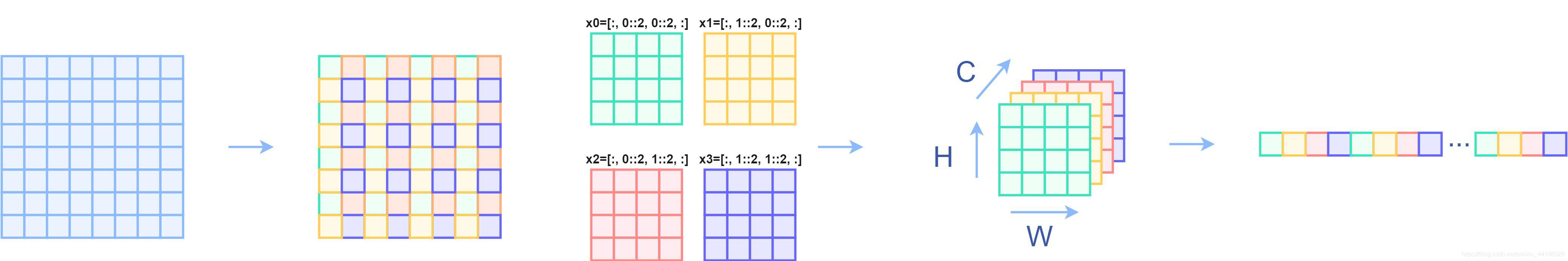

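PatchMerging is the layer in the Swin Transformer architecture that lowers the resolution of the feature map. It merges each 2×2 group of neighboring patches into one. This shortens the token sequence by a factor of 4 while increasing the number of channels (from C to 2C) to preserve information density.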

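At the end of every stage except the last one (as the model printout in Section 9 shows), a downsampling step is performed, namely the forward pass of PatchMerging.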

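Downsampling works by slicing x into four parts, $x_0$, $x_1$, $x_2$ and $x_3$, taken at a stride of 2 along both height and width. For example, if x is originally $8 \times 8$, slicing yields four $4 \times 4$ maps. The four maps are then concatenated along the channel dimension (4C channels) and normalized with LayerNorm. Finally, a fully connected layer reduces the channel count from 4C to 2C.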
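The stride-2 slicing can be checked without PyTorch. This sketch labels each cell of an 8 × 8 map with its flat index (row * 8 + col) and applies the same `0::2` / `1::2` slices to a plain nested list. The helper `take` is our own and stands in for `x[:, r::2, c::2, :]`.

```python
# An 8x8 "feature map" whose entries are their own flat indices (row * 8 + col)
H = W = 8
grid = [[r * W + c for c in range(W)] for r in range(H)]


def take(grid, row_start, col_start):
    """Mimic x[:, row_start::2, col_start::2, :] on a plain nested list."""
    return [row[col_start::2] for row in grid[row_start::2]]


x0 = take(grid, 0, 0)  # even rows, even cols
x1 = take(grid, 1, 0)  # odd rows,  even cols
x2 = take(grid, 0, 1)  # even rows, odd cols
x3 = take(grid, 1, 1)  # odd rows,  odd cols

for name, part in (("x0", x0), ("x1", x1), ("x2", x2), ("x3", x3)):
    print(name, f"{len(part)}x{len(part[0])}", "first row:", part[0])
```

Position [0][0] of x0, x1, x2 and x3 holds 0, 8, 1 and 9. These four values form the top-left 2 × 2 neighborhood of the original map. So after concatenation, each output position gathers one complete 2 × 2 group, and every original cell is used exactly once.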

```python
import torch
import torch.nn as nn


class PatchMerging(nn.Module):
    """Downsample a token sequence by merging each 2x2 neighborhood of patches."""

    def __init__(self, input_resolution, dim, norm_layer=nn.LayerNorm):
        super().__init__()
        self.input_resolution = input_resolution  # (H, W) of the incoming feature map
        self.dim = dim                            # channels C of the incoming tokens
        self.reduction = nn.Linear(4 * dim, 2 * dim, bias=False)  # 4C -> 2C
        self.norm = norm_layer(4 * dim)           # applied to the concatenated 4C features

    def forward(self, x):
        H, W = self.input_resolution
        B, L, C = x.shape
        assert L == H * W, "input feature has wrong size"
        assert H % 2 == 0 and W % 2 == 0, f"x size ({H}*{W}) are not even."

        x = x.view(B, H, W, C)

        x0 = x[:, 0::2, 0::2, :]  # B H/2 W/2 C  (even rows, even cols)
        x1 = x[:, 1::2, 0::2, :]  # B H/2 W/2 C  (odd rows, even cols)
        x2 = x[:, 0::2, 1::2, :]  # B H/2 W/2 C  (even rows, odd cols)
        x3 = x[:, 1::2, 1::2, :]  # B H/2 W/2 C  (odd rows, odd cols)
        x = torch.cat([x0, x1, x2, x3], -1)  # B H/2 W/2 4*C
        x = x.view(B, -1, 4 * C)  # B H/2*W/2 4*C

        x = self.norm(x)
        x = self.reduction(x)     # B H/2*W/2 2*C
        return x
```

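Constructor:

- input_resolution, dim: resolution (H, W) and channel count of the input feature map

- reduction: a linear layer (4 * dim → 2 * dim, no bias) that merges the concatenated features of each group of four neighboring patches into a single token

- norm: a LayerNorm over the 4 * dim concatenated features, applied before reduction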
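The parameter count of one PatchMerging layer follows directly from `dim`. `reduction` has no bias, and `norm` is a LayerNorm with learnable weight and bias (`elementwise_affine=True` in the Section 9 printout). A small arithmetic sketch follows; the function name is our own.

```python
def patch_merging_params(dim: int) -> dict:
    """Learnable parameters of PatchMerging(dim): Linear(4*dim, 2*dim, bias=False) + LayerNorm(4*dim)."""
    reduction = (4 * dim) * (2 * dim)  # weight matrix only, no bias
    norm = 2 * (4 * dim)               # LayerNorm weight + bias, each of size 4*dim
    return {"reduction": reduction, "norm": norm, "total": reduction + norm}


# The three PatchMerging layers in the Swin-T printout have dim = 96, 192, 384
for dim in (96, 192, 384):
    print(dim, patch_merging_params(dim))
```

The reduction weight grows as 8·dim², so it quickly dominates the 8·dim parameters of the LayerNorm.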

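Forward pass:

- Original input: torch.Size([4, 3136, 96])

- H, W = 56, 56: height and width of the input feature map

- B, L, C = 4, 3136, 96: batch size, sequence length, feature dimension

- x: torch.Size([4, 56, 56, 96]): the input reshaped into a 4D tensor, ready for patch merging

- x0, x1, x2, x3: each torch.Size([4, 28, 28, 96]): the four strided slices; from every 2×2 neighborhood of the original feature map, each slice takes one position

- x: torch.Size([4, 28, 28, 384]): the four slices concatenated along the channel dimension

- x: torch.Size([4, 784, 384]): the merged map flattened back into a sequence, ready for the linear layer

- x: torch.Size([4, 784, 384]): after LayerNorm; shape unchanged

- x: torch.Size([4, 784, 192]): after the linear layer, which reduces the channel dimension from 384 to 192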
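The shape bookkeeping can also be checked end to end. This standalone sketch re-declares `PatchMerging` with the same logic. It then chains three merges, the way the downsample layers of the first three stages follow one another. The Swin blocks in between are skipped because they keep the `[B, L, C]` shape unchanged. Random data stands in for real features.

```python
import torch
import torch.nn as nn


class PatchMerging(nn.Module):
    # Same logic as the PatchMerging class above, re-declared so this snippet runs on its own
    def __init__(self, input_resolution, dim, norm_layer=nn.LayerNorm):
        super().__init__()
        self.input_resolution = input_resolution
        self.dim = dim
        self.reduction = nn.Linear(4 * dim, 2 * dim, bias=False)
        self.norm = norm_layer(4 * dim)

    def forward(self, x):
        H, W = self.input_resolution
        B, L, C = x.shape
        assert L == H * W, "input feature has wrong size"
        assert H % 2 == 0 and W % 2 == 0, f"x size ({H}*{W}) are not even."
        x = x.view(B, H, W, C)
        x0 = x[:, 0::2, 0::2, :]
        x1 = x[:, 1::2, 0::2, :]
        x2 = x[:, 0::2, 1::2, :]
        x3 = x[:, 1::2, 1::2, :]
        x = torch.cat([x0, x1, x2, x3], -1).view(B, -1, 4 * C)
        return self.reduction(self.norm(x))


torch.manual_seed(0)
x = torch.randn(4, 56 * 56, 96)  # random stand-in for the stage-1 output
res, dim = 56, 96
shapes = [tuple(x.shape)]
for _ in range(3):  # downsample layers of stages 1-3 (the last stage has none)
    x = PatchMerging((res, res), dim)(x)
    res, dim = res // 2, dim * 2
    shapes.append(tuple(x.shape))

print(shapes)
```

Each merge divides the sequence length by 4 and doubles the channels. This is why `input_resolution` and `dim` in the printout go 56/96, 28/192, 14/384, 7/768.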

9. Swin Transformer Model Parameters

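The complete Swin Transformer architecture as printed by PyTorch. This is the Swin-T configuration: embed_dim 96, depths 2/2/6/2, heads 3/6/12/24.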

```text
SwinTransformer(
  (patch_embed): PatchEmbed(
    (proj): Conv2d(3, 96, kernel_size=(4, 4), stride=(4, 4))
    (norm): LayerNorm((96,), eps=1e-05, elementwise_affine=True)
  )
  (pos_drop): Dropout(p=0.0, inplace=False)
  (layers): ModuleList(
    (0): BasicLayer(
      dim=96, input_resolution=(56, 56), depth=2
      (blocks): ModuleList(
        (0): SwinTransformerBlock(
          dim=96, input_resolution=(56, 56), num_heads=3, window_size=7, shift_size=0, mlp_ratio=4.0
          (norm1): LayerNorm((96,), eps=1e-05, elementwise_affine=True)
          (attn): WindowAttention(
            dim=96, window_size=(7, 7), num_heads=3
            (qkv): Linear(in_features=96, out_features=288, bias=True)
            (attn_drop): Dropout(p=0.0, inplace=False)
            (proj): Linear(in_features=96, out_features=96, bias=True)
            (proj_drop): Dropout(p=0.0, inplace=False)
            (softmax): Softmax(dim=-1)
          )
          (drop_path): Identity()
          (norm2): LayerNorm((96,), eps=1e-05, elementwise_affine=True)
          (mlp): Mlp(
            (fc1): Linear(in_features=96, out_features=384, bias=True)
            (act): GELU()
            (fc2): Linear(in_features=384, out_features=96, bias=True)
            (drop): Dropout(p=0.0, inplace=False)
          )
        )
        (1): SwinTransformerBlock(
          dim=96, input_resolution=(56, 56), num_heads=3, window_size=7, shift_size=3, mlp_ratio=4.0
          (norm1): LayerNorm((96,), eps=1e-05, elementwise_affine=True)
          (attn): WindowAttention(
            dim=96, window_size=(7, 7), num_heads=3
            (qkv): Linear(in_features=96, out_features=288, bias=True)
            (attn_drop): Dropout(p=0.0, inplace=False)
            (proj): Linear(in_features=96, out_features=96, bias=True)
            (proj_drop): Dropout(p=0.0, inplace=False)
            (softmax): Softmax(dim=-1)
          )
          (drop_path): DropPath()
          (norm2): LayerNorm((96,), eps=1e-05, elementwise_affine=True)
          (mlp): Mlp(
            (fc1): Linear(in_features=96, out_features=384, bias=True)
            (act): GELU()
            (fc2): Linear(in_features=384, out_features=96, bias=True)
            (drop): Dropout(p=0.0, inplace=False)
          )
        )
      )
      (downsample): PatchMerging(
        input_resolution=(56, 56), dim=96
        (reduction): Linear(in_features=384, out_features=192, bias=False)
        (norm): LayerNorm((384,), eps=1e-05, elementwise_affine=True)
      )
    )
    (1): BasicLayer(
      dim=192, input_resolution=(28, 28), depth=2
      (blocks): ModuleList(
        (0): SwinTransformerBlock(
          dim=192, input_resolution=(28, 28), num_heads=6, window_size=7, shift_size=0, mlp_ratio=4.0
          (norm1): LayerNorm((192,), eps=1e-05, elementwise_affine=True)
          (attn): WindowAttention(
            dim=192, window_size=(7, 7), num_heads=6
            (qkv): Linear(in_features=192, out_features=576, bias=True)
            (attn_drop): Dropout(p=0.0, inplace=False)
            (proj): Linear(in_features=192, out_features=192, bias=True)
            (proj_drop): Dropout(p=0.0, inplace=False)
            (softmax): Softmax(dim=-1)
          )
          (drop_path): DropPath()
          (norm2): LayerNorm((192,), eps=1e-05, elementwise_affine=True)
          (mlp): Mlp(
            (fc1): Linear(in_features=192, out_features=768, bias=True)
            (act): GELU()
            (fc2): Linear(in_features=768, out_features=192, bias=True)
            (drop): Dropout(p=0.0, inplace=False)
          )
        )
        (1): SwinTransformerBlock(
          dim=192, input_resolution=(28, 28), num_heads=6, window_size=7, shift_size=3, mlp_ratio=4.0
          (norm1): LayerNorm((192,), eps=1e-05, elementwise_affine=True)
          (attn): WindowAttention(
            dim=192, window_size=(7, 7), num_heads=6
            (qkv): Linear(in_features=192, out_features=576, bias=True)
            (attn_drop): Dropout(p=0.0, inplace=False)
            (proj): Linear(in_features=192, out_features=192, bias=True)
            (proj_drop): Dropout(p=0.0, inplace=False)
            (softmax): Softmax(dim=-1)
          )
          (drop_path): DropPath()
          (norm2): LayerNorm((192,), eps=1e-05, elementwise_affine=True)
          (mlp): Mlp(
            (fc1): Linear(in_features=192, out_features=768, bias=True)
            (act): GELU()
            (fc2): Linear(in_features=768, out_features=192, bias=True)
            (drop): Dropout(p=0.0, inplace=False)
          )
        )
      )
      (downsample): PatchMerging(
        input_resolution=(28, 28), dim=192
        (reduction): Linear(in_features=768, out_features=384, bias=False)
        (norm): LayerNorm((768,), eps=1e-05, elementwise_affine=True)
      )
    )
    (2): BasicLayer(
      dim=384, input_resolution=(14, 14), depth=6
      (blocks): ModuleList(
        (0): SwinTransformerBlock(
          dim=384, input_resolution=(14, 14), num_heads=12, window_size=7, shift_size=0, mlp_ratio=4.0
          (norm1): LayerNorm((384,), eps=1e-05, elementwise_affine=True)
          (attn): WindowAttention(
            dim=384, window_size=(7, 7), num_heads=12
            (qkv): Linear(in_features=384, out_features=1152, bias=True)
            (attn_drop): Dropout(p=0.0, inplace=False)
            (proj): Linear(in_features=384, out_features=384, bias=True)
            (proj_drop): Dropout(p=0.0, inplace=False)
            (softmax): Softmax(dim=-1)
          )
          (drop_path): DropPath()
          (norm2): LayerNorm((384,), eps=1e-05, elementwise_affine=True)
          (mlp): Mlp(
            (fc1): Linear(in_features=384, out_features=1536, bias=True)
            (act): GELU()
            (fc2): Linear(in_features=1536, out_features=384, bias=True)
            (drop): Dropout(p=0.0, inplace=False)
          )
        )
        (1): SwinTransformerBlock(
          dim=384, input_resolution=(14, 14), num_heads=12, window_size=7, shift_size=3, mlp_ratio=4.0
          (norm1): LayerNorm((384,), eps=1e-05, elementwise_affine=True)
          (attn): WindowAttention(
            dim=384, window_size=(7, 7), num_heads=12
            (qkv): Linear(in_features=384, out_features=1152, bias=True)
            (attn_drop): Dropout(p=0.0, inplace=False)
            (proj): Linear(in_features=384, out_features=384, bias=True)
            (proj_drop): Dropout(p=0.0, inplace=False)
            (softmax): Softmax(dim=-1)
          )
          (drop_path): DropPath()
          (norm2): LayerNorm((384,), eps=1e-05, elementwise_affine=True)
          (mlp): Mlp(
            (fc1): Linear(in_features=384, out_features=1536, bias=True)
            (act): GELU()
            (fc2): Linear(in_features=1536, out_features=384, bias=True)
            (drop): Dropout(p=0.0, inplace=False)
          )
        )
        (2): SwinTransformerBlock(
          dim=384, input_resolution=(14, 14), num_heads=12, window_size=7, shift_size=0, mlp_ratio=4.0
          (norm1): LayerNorm((384,), eps=1e-05, elementwise_affine=True)
          (attn): WindowAttention(
            dim=384, window_size=(7, 7), num_heads=12
            (qkv): Linear(in_features=384, out_features=1152, bias=True)
            (attn_drop): Dropout(p=0.0, inplace=False)
            (proj): Linear(in_features=384, out_features=384, bias=True)
            (proj_drop): Dropout(p=0.0, inplace=False)
            (softmax): Softmax(dim=-1)
          )
          (drop_path): DropPath()
          (norm2): LayerNorm((384,), eps=1e-05, elementwise_affine=True)
          (mlp): Mlp(
            (fc1): Linear(in_features=384, out_features=1536, bias=True)
            (act): GELU()
            (fc2): Linear(in_features=1536, out_features=384, bias=True)
            (drop): Dropout(p=0.0, inplace=False)
          )
        )
        (3): SwinTransformerBlock(
          dim=384, input_resolution=(14, 14), num_heads=12, window_size=7, shift_size=3, mlp_ratio=4.0
          (norm1): LayerNorm((384,), eps=1e-05, elementwise_affine=True)
          (attn): WindowAttention(
            dim=384, window_size=(7, 7), num_heads=12
            (qkv): Linear(in_features=384, out_features=1152, bias=True)
            (attn_drop): Dropout(p=0.0, inplace=False)
            (proj): Linear(in_features=384, out_features=384, bias=True)
            (proj_drop): Dropout(p=0.0, inplace=False)
            (softmax): Softmax(dim=-1)
          )
          (drop_path): DropPath()
          (norm2): LayerNorm((384,), eps=1e-05, elementwise_affine=True)
          (mlp): Mlp(
            (fc1): Linear(in_features=384, out_features=1536, bias=True)
            (act): GELU()
            (fc2): Linear(in_features=1536, out_features=384, bias=True)
            (drop): Dropout(p=0.0, inplace=False)
          )
        )
        (4): SwinTransformerBlock(
          dim=384, input_resolution=(14, 14), num_heads=12, window_size=7, shift_size=0, mlp_ratio=4.0
          (norm1): LayerNorm((384,), eps=1e-05, elementwise_affine=True)
          (attn): WindowAttention(
            dim=384, window_size=(7, 7), num_heads=12
            (qkv): Linear(in_features=384, out_features=1152, bias=True)
            (attn_drop): Dropout(p=0.0, inplace=False)
            (proj): Linear(in_features=384, out_features=384, bias=True)
            (proj_drop): Dropout(p=0.0, inplace=False)
            (softmax): Softmax(dim=-1)
          )
          (drop_path): DropPath()
          (norm2): LayerNorm((384,), eps=1e-05, elementwise_affine=True)
          (mlp): Mlp(
            (fc1): Linear(in_features=384, out_features=1536, bias=True)
            (act): GELU()
            (fc2): Linear(in_features=1536, out_features=384, bias=True)
            (drop): Dropout(p=0.0, inplace=False)
          )
        )
        (5): SwinTransformerBlock(
          dim=384, input_resolution=(14, 14), num_heads=12, window_size=7, shift_size=3, mlp_ratio=4.0
          (norm1): LayerNorm((384,), eps=1e-05, elementwise_affine=True)
          (attn): WindowAttention(
            dim=384, window_size=(7, 7), num_heads=12
            (qkv): Linear(in_features=384, out_features=1152, bias=True)
            (attn_drop): Dropout(p=0.0, inplace=False)
            (proj): Linear(in_features=384, out_features=384, bias=True)
            (proj_drop): Dropout(p=0.0, inplace=False)
            (softmax): Softmax(dim=-1)
          )
          (drop_path): DropPath()
          (norm2): LayerNorm((384,), eps=1e-05, elementwise_affine=True)
          (mlp): Mlp(
            (fc1): Linear(in_features=384, out_features=1536, bias=True)
            (act): GELU()
            (fc2): Linear(in_features=1536, out_features=384, bias=True)
            (drop): Dropout(p=0.0, inplace=False)
          )
        )
      )
      (downsample): PatchMerging(
        input_resolution=(14, 14), dim=384
        (reduction): Linear(in_features=1536, out_features=768, bias=False)
        (norm): LayerNorm((1536,), eps=1e-05, elementwise_affine=True)
      )
    )
    (3): BasicLayer(
      dim=768, input_resolution=(7, 7), depth=2
      (blocks): ModuleList(
        (0): SwinTransformerBlock(
          dim=768, input_resolution=(7, 7), num_heads=24, window_size=7, shift_size=0, mlp_ratio=4.0
          (norm1): LayerNorm((768,), eps=1e-05, elementwise_affine=True)
          (attn): WindowAttention(
            dim=768, window_size=(7, 7), num_heads=24
            (qkv): Linear(in_features=768, out_features=2304, bias=True)
            (attn_drop): Dropout(p=0.0, inplace=False)
            (proj): Linear(in_features=768, out_features=768, bias=True)
            (proj_drop): Dropout(p=0.0, inplace=False)
            (softmax): Softmax(dim=-1)
          )
          (drop_path): DropPath()
          (norm2): LayerNorm((768,), eps=1e-05, elementwise_affine=True)
          (mlp): Mlp(
            (fc1): Linear(in_features=768, out_features=3072, bias=True)
            (act): GELU()
            (fc2): Linear(in_features=3072, out_features=768, bias=True)
            (drop): Dropout(p=0.0, inplace=False)
          )
        )
        (1): SwinTransformerBlock(
          dim=768, input_resolution=(7, 7), num_heads=24, window_size=7, shift_size=0, mlp_ratio=4.0
          (norm1): LayerNorm((768,), eps=1e-05, elementwise_affine=True)
          (attn): WindowAttention(
            dim=768, window_size=(7, 7), num_heads=24
            (qkv): Linear(in_features=768, out_features=2304, bias=True)
            (attn_drop): Dropout(p=0.0, inplace=False)
            (proj): Linear(in_features=768, out_features=768, bias=True)
            (proj_drop): Dropout(p=0.0, inplace=False)
            (softmax): Softmax(dim=-1)
          )
          (drop_path): DropPath()
          (norm2): LayerNorm((768,), eps=1e-05, elementwise_affine=True)
          (mlp): Mlp(
            (fc1): Linear(in_features=768, out_features=3072, bias=True)
            (act): GELU()
            (fc2): Linear(in_features=3072, out_features=768, bias=True)
            (drop): Dropout(p=0.0, inplace=False)
          )
        )
      )
    )
  )
  (norm): LayerNorm((768,), eps=1e-05, elementwise_affine=True)
  (avgpool): AdaptiveAvgPool1d(output_size=1)
  (head): Linear(in_features=768, out_features=1000, bias=True)
)
```
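Most numbers in this printout follow from a handful of hyperparameters. The plain-Python sketch below re-derives them: `dim`, resolution, the `qkv` output width (3 × dim), the MLP hidden width (4 × dim) and `shift_size`. The hyperparameter values are read off the printout, not loaded from the project's config. The shift rule is our reading of why the last stage shows `shift_size=0` for both blocks: shift_size alternates between 0 and window_size // 2, and shifting is turned off once the feature map is no larger than the window. Treat it as an assumption to verify against the BasicLayer / SwinTransformerBlock source covered earlier in the series.

```python
# Swin-T hyperparameters read off the printout (assumed values, not loaded from a config file)
IMG_SIZE, PATCH_SIZE, WINDOW_SIZE = 224, 4, 7
EMBED_DIM, DEPTHS, NUM_HEADS = 96, [2, 2, 6, 2], [3, 6, 12, 24]
MLP_RATIO = 4


def block_configs():
    """Derive the per-block settings that appear in the printed model."""
    rows = []
    res = IMG_SIZE // PATCH_SIZE  # 224 / 4 = 56 patches per side after PatchEmbed
    for stage, (depth, heads) in enumerate(zip(DEPTHS, NUM_HEADS)):
        dim = EMBED_DIM * 2 ** stage  # channels double at every stage
        for block in range(depth):
            window = WINDOW_SIZE
            shift = 0 if block % 2 == 0 else window // 2  # alternate W-MSA / SW-MSA
            if res <= window:  # the window already covers the whole map: no shifting
                window, shift = res, 0
            rows.append({
                "stage": stage, "block": block, "dim": dim, "res": res,
                "heads": heads, "window": window, "shift": shift,
                "qkv_out": 3 * dim, "mlp_hidden": MLP_RATIO * dim,
            })
        if stage < len(DEPTHS) - 1:  # PatchMerging after every stage except the last
            res //= 2
    return rows


for row in block_configs():
    print(row)
```

The derived per-head dimension, dim // heads, is 32 in every stage. So the head count grows in step with the channel width.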
