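The keyword extraction here uses the Guangdong (粤) economic news data from People's Daily Online (人民网) and implements three keyword extraction algorithms, based on TF-IDF, TextRank, and Word2vec word clustering. The dataset contains 558 text files, each consisting of a title and an abstract.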

Keyword extraction workflow

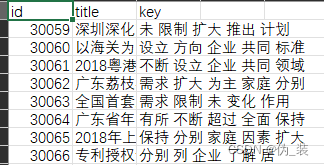

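(1) Convert the raw data into text.csv, which holds the id, title, and abstract of each article. The format of text.csv is shown in the figure.

(2) Read the title and abstract fields of each record and concatenate them.

(3) Load the custom stop word list stopWord.txt, then preprocess the concatenated text: segment it into words, remove stop words, and join the remaining words with spaces.

(4) Implement each algorithm to extract keywords.

(5) Write the final results to a file.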
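Steps (2) and (3) can be sketched without any third-party library. This is only an illustration under stated assumptions: the whitespace split stands in for jieba segmentation, and `STOP_WORDS` and `preprocess` are hypothetical names rather than part of the project.

```python
# Hypothetical stand-ins: the article segments text with jieba and loads
# its stop words from stopWord.txt.
STOP_WORDS = {"the", "of", "in"}


def preprocess(title, abstract, stop_words=STOP_WORDS):
    """Join title and abstract, drop stop words, return a space-separated string."""
    text = "%s %s" % (title, abstract)
    tokens = text.lower().split()  # whitespace split stands in for jieba
    kept = [t for t in tokens if t not in stop_words]
    return " ".join(kept)


print(preprocess("Growth of exports", "Exports rose in the first quarter"))
```

The space-separated string is the form that the vectorizer used later for TF-IDF expects, which is why step (3) ends by joining the tokens again.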

Project repository: zz-zik/NLP-Application-and-Practice (github.com). This project implements the code from the book 《自然语言处理与应用实战》 and improves on it. Original book authors: 韩少云, 裴广战, 吴飞, et al.

Data preprocessing

data_prepare.py: data preprocessing. It merges the text files and generates text.csv in the data folder, with the columns id, title, and abstract.

    import csv
    import os


    # Merge the individual text files into one CSV file
    def text_combine(path):
        # 1. Collect the .txt files in the folder
        files = []
        for file in os.listdir(path):
            if file.endswith(".txt"):
                files.append(os.path.join(path, file))
        # 2. Create data/text.csv to hold the result
        with open('data/text.csv', 'w', newline='', encoding='utf-8') as csvfile:
            writer = csv.writer(csvfile)
            writer.writerow(['id', 'title', 'abstract'])
            # 3. Walk the txt files; the id is the part of the file name before the first "_"
            for file_name in files:
                number = os.path.basename(file_name).split('_')[0]
                title, text = '', ''
                # 4. Read the title (first line) and the content (remaining lines)
                with open(file_name, encoding='utf-8-sig') as f:
                    for count, line in enumerate(f):
                        if count == 0:
                            title += line.strip()
                        else:
                            text += line.strip()
                writer.writerow([number, title, text])


    # Entry point
    def main():
        path = 'text_file'
        text_combine(path)


    if __name__ == '__main__':
        main()
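To make the file convention explicit, here is a small sketch of how one article maps to a CSV row. The helper `parse_article` and the file name `12_example.txt` are hypothetical; the only assumptions taken from the script are that the id is the part of the file name before the first underscore, that the first line is the title, and that the remaining lines form the abstract.

```python
import os


def parse_article(file_name, lines):
    """Return (id, title, abstract) for one article.

    file_name: path such as 'text_file/12_example.txt' (hypothetical name)
    lines: iterable of text lines, first line = title, rest = abstract
    """
    number = os.path.basename(file_name).split('_')[0]
    title, abstract = '', ''
    for count, line in enumerate(lines):
        if count == 0:
            title = line.strip()
        else:
            abstract += line.strip()  # lines are joined without a separator
    return number, title, abstract


row = parse_article('text_file/12_example.txt',
                    ['Title\n', 'line one\n', 'line two\n'])
print(row)
```

Joining the abstract lines without a separator is harmless for Chinese text, which has no spaces between words, but it would glue words together in a space-delimited language.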

Keyword extraction based on TF-IDF

tfidf.py implements the TF-IDF-based keyword extraction algorithm.

    # coding=utf-8
    import codecs

    import numpy as np
    import pandas as pd
    # jieba word segmentation with part-of-speech tagging
    import jieba.posseg
    # Term-frequency counting
    from sklearn.feature_extraction.text import CountVectorizer
    # TF-IDF weighting
    from sklearn.feature_extraction.text import TfidfTransformer


    # Preprocess one text: segment, remove stop words, filter by part of speech
    def data_read(text, stopkey):
        l = []
        pos = ['n', 'nz', 'v', 'vd', 'vn', 'l', 'a', 'd']  # parts of speech to keep
        seg = jieba.posseg.cut(text)  # segmentation
        for i in seg:
            if i.word not in stopkey and i.flag in pos:  # stop words + POS filter
                l.append(i.word)
        return l


    # Extract the topK keywords of every document with TF-IDF
    def words_tfidf(data, stopkey, topK):
        idList, titleList, abstractList = \
            data['id'], data['title'], data['abstract']
        corpus = []  # all documents in one list, one entry per document
        for index in range(len(idList)):
            # Concatenate title and abstract
            text = '%s。%s' % (titleList[index], abstractList[index])
            text = data_read(text, stopkey)  # preprocessing
            text = " ".join(text)  # join into a space-separated string
            corpus.append(text)
        # 1. Build the term-frequency matrix from the corpus
        vectorizer = CountVectorizer()
        # X[i][j] is the frequency of word j in document i
        X = vectorizer.fit_transform(corpus)
        # 2. Compute the TF-IDF weight of every word
        transformer = TfidfTransformer()
        tfidf = transformer.fit_transform(X)
        # 3. Get the vocabulary of the bag-of-words model
        #    (get_feature_names() was removed in scikit-learn 1.2)
        word = vectorizer.get_feature_names_out()
        # 4. weight[i][j] is the TF-IDF weight of word j in document i
        weight = tfidf.toarray()
        # 5. Print the word weights and collect the keywords
        ids, titles, keys = [], [], []
        for i in range(len(weight)):
            print("------- TF-IDF weights of document", i + 1, "-------")
            ids.append(idList[i])
            titles.append(titleList[i])
            df_word, df_weight = [], []  # words of this document and their weights
            for j in range(len(word)):
                print(word[j], weight[i][j])
                df_word.append(word[j])
                df_weight.append(weight[i][j])
            df_word = pd.DataFrame(df_word, columns=['word'])
            df_weight = pd.DataFrame(df_weight, columns=['weight'])
            word_weight = pd.concat([df_word, df_weight], axis=1)  # words next to weights
            word_weight = word_weight.sort_values(by="weight", ascending=False)  # descending by weight
            keyword = np.array(word_weight['word'])  # word column as an array
            word_split = " ".join(keyword[:topK])  # the first topK words are the keywords
            keys.append(word_split)
        result = pd.DataFrame({"id": ids, "title": titles, "key": keys},
                              columns=['id', 'title', 'key'])
        return result


    def main():
        # Load the dataset
        dataFile = 'data/text.csv'
        data = pd.read_csv(dataFile)
        # Load the stop word list
        with codecs.open('data/stopWord.txt', 'r', encoding="utf-8") as f:
            stopkey = [w.strip() for w in f.readlines()]
        # TF-IDF keyword extraction
        result = words_tfidf(data, stopkey, 10)
        result.to_csv("result/tfidf.csv", index=False)


    if __name__ == '__main__':
        main()
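For readers who want to see what the weighting does, the following is a pure-Python sketch of the same computation on already-tokenized documents. It assumes the scikit-learn defaults, that is, a smoothed idf of ln((1 + n) / (1 + df)) + 1 followed by L2 normalization of each document vector; the function name `tfidf_top_k` and the toy documents are hypothetical.

```python
import math
from collections import Counter


def tfidf_top_k(docs, top_k):
    """docs: list of token lists. Returns the top_k tokens of each document."""
    n = len(docs)
    df = Counter()
    for tokens in docs:
        df.update(set(tokens))  # document frequency: count each word once per doc
    # Smoothed idf, assumed to match TfidfTransformer(smooth_idf=True)
    idf = {w: math.log((1 + n) / (1 + c)) + 1 for w, c in df.items()}
    result = []
    for tokens in docs:
        tf = Counter(tokens)
        weights = {w: tf[w] * idf[w] for w in tf}
        # L2-normalize the document vector
        norm = math.sqrt(sum(v * v for v in weights.values())) or 1.0
        weights = {w: v / norm for w, v in weights.items()}
        # Highest weight first; ties broken alphabetically
        ranked = sorted(weights, key=lambda w: (-weights[w], w))
        result.append(ranked[:top_k])
    return result


docs = [
    ["economy", "growth", "growth", "export"],
    ["economy", "policy", "tax"],
    ["economy", "export", "port"],
]
top = tfidf_top_k(docs, 2)
print(top)
```

The word "economy" occurs in every toy document, so its idf is the minimum value of 1 and it never reaches the top of a ranking, which is the behaviour TF-IDF is meant to produce.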

Keyword extraction based on TextRank

textrank.py: implements the TextRank-based keyword extraction algorithm with jieba.analyse.textrank.

    # coding=utf-8
    import pandas as pd
    import jieba.analyse


    # Extract keywords from the title and abstract of every document
    def words_textrank(data, topK):
        idList, titleList, abstractList = data['id'], data['title'], data['abstract']
        ids, titles, keys = [], [], []
        # Load the custom stop word list once, before the loop
        jieba.analyse.set_stop_words("data/stopWord.txt")
        for index in range(len(idList)):
            # Concatenate title and abstract
            text = '%s。%s' % (titleList[index], abstractList[index])
            print("\"", titleList[index], "\"", topK, "Keywords - TextRank :")
            # TextRank keyword extraction with a part-of-speech filter
            keywords = jieba.analyse.textrank(text, topK=topK,
                                              allowPOS=('n', 'nz', 'v', 'vd', 'vn', 'l', 'a', 'd'))
            word_split = " ".join(keywords)
            keys.append(word_split)
            ids.append(idList[index])
            titles.append(titleList[index])
        result = pd.DataFrame({"id": ids, "title": titles,
                               "key": keys},
                              columns=['id', 'title', 'key'])
        return result


    def main():
        dataFile = 'data/text.csv'
        data = pd.read_csv(dataFile)
        result = words_textrank(data, 10)
        result.to_csv("result/textrank.csv", index=False)


    if __name__ == '__main__':
        main()
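The script delegates the ranking to jieba. As a rough illustration of the idea behind TextRank, the sketch below builds an undirected co-occurrence graph over a token list and runs a damped PageRank-style iteration on it. It is a simplified sketch, not jieba's implementation: the function name, the `window` and `iterations` parameters, and the toy tokens are assumptions made for illustration.

```python
from collections import defaultdict


def textrank(tokens, top_k, window=5, damping=0.85, iterations=30):
    """Rank tokens by a simplified TextRank and return the top_k words."""
    # Undirected, weighted co-occurrence graph: two words are linked when
    # they appear within `window` positions of each other.
    weight = defaultdict(lambda: defaultdict(float))
    for i, w in enumerate(tokens):
        for j in range(i + 1, min(i + window, len(tokens))):
            u = tokens[j]
            if u == w:
                continue
            weight[w][u] += 1.0
            weight[u][w] += 1.0
    score = {w: 1.0 for w in weight}
    for _ in range(iterations):
        new_score = {}
        for w in weight:
            rank = 0.0
            for u, w_uw in weight[w].items():
                # Neighbour u passes on its score in proportion to edge weight
                rank += w_uw / sum(weight[u].values()) * score[u]
            new_score[w] = (1 - damping) + damping * rank
        score = new_score
    ranked = sorted(score, key=lambda w: (-score[w], w))
    return ranked[:top_k]


tokens = ["port", "trade", "port", "export", "port", "tax"]
print(textrank(tokens, 1, window=2))
```

In the toy input every other word co-occurs only with "port", so "port" collects score from all of its neighbours and ranks first. Unlike TF-IDF, this ranking uses only the single document and needs no corpus statistics.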

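Keyword extraction based on Word2vec word clustering

This section implements the keyword extraction algorithm based on Word2vec word clustering.

(1) Write word2vec_prepare.py, which builds the word vectors of the candidate keywords from the wiki.zh.text.vector word vector model.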

    # coding=utf-8
    import warnings
    warnings.filterwarnings(action='ignore',
                            category=UserWarning,
                            module='gensim')  # suppress gensim warnings
    import codecs
    import os

    import numpy as np
    import pandas as pd
    import jieba  # word segmentation
    import jieba.posseg
    import gensim  # loads the word vector model


    # Return the vectors of the words that the model knows
    def word_vecs(wordList, model):
        name = []
        vecs = []
        for word in wordList:
            word = word.replace('\n', '')
            try:
                if word in model:  # the model has a vector for this word
                    name.append(word)
                    vecs.append(model[word])
            except KeyError:
                continue
        a = pd.DataFrame(name, columns=['word'])
        b = pd.DataFrame(np.array(vecs, dtype='float'))
        return pd.concat([a, b], axis=1)


    # Preprocessing: segment, remove stop words, filter by part of speech
    def data_prepare(text, stopkey):
        l = []
        # Parts of speech to keep
        pos = ['n', 'nz', 'v', 'vd', 'vn', 'l', 'a', 'd']
        seg = jieba.posseg.cut(text)  # segmentation
        for i in seg:
            # De-duplicate + remove stop words + POS filter
            if i.word not in l and i.word not in stopkey and i.flag in pos:
                l.append(i.word)
        return l


    # Build the candidate keyword vectors for every document
    def build_words_vecs(data, stopkey, model):
        idList, titleList, abstractList = data['id'], data['title'], data['abstract']
        for index in range(len(idList)):
            doc_id = idList[index]
            title = titleList[index]
            abstract = abstractList[index]
            l_ti = data_prepare(title, stopkey)  # process the title
            l_ab = data_prepare(abstract, stopkey)  # process the abstract
            # Merge and de-duplicate to get the candidate keyword list
            words = list(set(l_ti + l_ab))
            wordvecs = word_vecs(words, model)  # vectors of the candidate keywords
            # Write the vectors to a csv file; each word has 400 dimensions
            data_vecs = pd.DataFrame(wordvecs)
            data_vecs.to_csv('result/vecs/wordvecs_' + str(doc_id) + '.csv', index=False)
            print("document ", doc_id, " well done.")


    def main():
        # Load the dataset
        dataFile = 'data/text.csv'
        data = pd.read_csv(dataFile)
        # Load the stop word list
        with codecs.open('data/stopWord.txt', 'r', encoding='utf-8') as f:
            stopkey = [w.strip() for w in f.readlines()]
        # Load the word vector model
        inp = 'wiki.zh.text.vector'
        model = gensim.models.KeyedVectors.load_word2vec_format(inp, binary=False)
        # Make sure the output folder exists before writing to it
        os.makedirs('result/vecs', exist_ok=True)
        build_words_vecs(data, stopkey, model)


    if __name__ == '__main__':
        main()
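The model file is loaded with `binary=False`, that is, in the plain-text word2vec format: a header line with the vocabulary size and the vector dimension, then one line per word holding the word and its components. The sketch below parses that layout from an in-memory sample and looks up candidate words the same way `word_vecs` skips unknown ones. The helpers `load_text_vectors` and `lookup` and the two-dimensional sample are hypothetical and only illustrate the format; the real file is read by gensim.

```python
import io


def load_text_vectors(stream):
    """Parse plain-text word2vec data into a {word: vector} dict."""
    header = stream.readline().split()
    dim = int(header[1])  # header is "<vocab_size> <dimension>"
    vectors = {}
    for line in stream:
        parts = line.rstrip().split(' ')
        if len(parts) != dim + 1:
            continue  # skip malformed lines
        vectors[parts[0]] = [float(x) for x in parts[1:]]
    return vectors, dim


def lookup(words, vectors):
    """Keep only the candidate words that have a vector."""
    return {w: vectors[w] for w in words if w in vectors}


sample = io.StringIO("3 2\n经济 0.1 0.2\n增长 0.3 0.4\n出口 0.5 0.6\n")
vectors, dim = load_text_vectors(sample)
found = lookup(["经济", "政策"], vectors)
print(dim, found)
```

Candidate words missing from the model are silently dropped, so a document's keyword list can only contain words that occur in the pre-trained vocabulary.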

(2) Write word2vec_result.py, which implements the keyword extraction algorithm based on Word2vec word clustering.

    # coding=utf-8
    import os

    import numpy as np
    import pandas as pd
    # K-means clustering
    from sklearn.cluster import KMeans


    # Cluster the word vectors with K-means and take the topK words nearest the centre
    def words_kmeans(data, topK):
        words = data["word"]  # candidate words
        vecs = data.iloc[:, 1:]  # their vectors
        kmeans = KMeans(n_clusters=1, random_state=10).fit(vecs)
        labels = pd.DataFrame(kmeans.labels_, columns=['label'])  # cluster labels
        # Centre of the first cluster; this example uses a single cluster
        vec_center = kmeans.cluster_centers_[0]
        # Euclidean distance from every candidate word to the cluster centre
        vec_words = np.array(vecs)  # DataFrame to array
        distances = np.linalg.norm(vec_words - vec_center, axis=1)
        distances = pd.DataFrame(distances, columns=['dis'])
        # Put each word next to its distance from the centre
        result = pd.concat([words, labels, distances], axis=1)
        # Sort by distance, ascending
        result = result.sort_values(by="dis", ascending=True)
        # The topK nearest words are the keywords of the text
        wordlist = np.array(result['word'])
        word_split = " ".join(wordlist[:topK])
        return word_split


    def main():
        # Load the dataset
        dataFile = 'data/text.csv'
        articleData = pd.read_csv(dataFile)
        ids, titles, keys = [], [], []
        rootdir = "result/vecs"  # folder with the word vector files
        fileList = os.listdir(rootdir)  # everything in that folder
        # Walk the files
        for filename in fileList:
            path = os.path.join(rootdir, filename)
            if os.path.isfile(path):
                # Read the word vectors of one document
                data = pd.read_csv(path, encoding='utf-8')
                # Cluster to get the keywords of this document
                article_keys = words_kmeans(data, 5)
                # Recover the article id from the file name
                (shortname, extension) = os.path.splitext(filename)
                t = shortname.split("_")
                article_id = int(t[-1])
                # Look up the article title by id
                article_tit = articleData[articleData.id == article_id]['title']
                article_tit = list(article_tit)[0]  # Series to string
                print(article_tit)
                ids.append(article_id)
                titles.append(article_tit)
                keys.append(article_keys)
        # Write all results to a file
        result = pd.DataFrame({"id": ids, "title": titles, "key": keys},
                              columns=['id', 'title', 'key'])
        result = result.sort_values(by="id", ascending=True)  # sort by id
        result.to_csv("result/word2vec.csv", index=False,
                      encoding='utf_8_sig')


    if __name__ == '__main__':
        main()
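With a single cluster, the K-means centre is simply the mean of all candidate vectors, so the ranking reduces to "distance from the centroid". The following pure-Python sketch shows that reduced form on toy two-dimensional vectors; `rank_by_centroid` and the sample data are hypothetical, and the real vectors have 400 dimensions.

```python
import math


def rank_by_centroid(word_vectors, top_k):
    """Return the top_k words closest (Euclidean) to the mean vector."""
    words = list(word_vectors)
    dim = len(word_vectors[words[0]])
    # Centroid = component-wise mean, which is what one-cluster K-means yields
    centre = [sum(word_vectors[w][d] for w in words) / len(words)
              for d in range(dim)]

    def distance(w):
        return math.sqrt(sum((a - b) ** 2
                             for a, b in zip(word_vectors[w], centre)))

    # Nearest first; ties broken alphabetically
    ranked = sorted(words, key=lambda w: (distance(w), w))
    return ranked[:top_k]


vecs = {
    "economy": [1.0, 1.0],
    "export": [1.0, 2.0],
    "port": [2.0, 1.0],
    "weather": [8.0, 8.0],
}
print(rank_by_centroid(vecs, 2))
```

The toy data also shows a weakness of this choice: the outlier "weather" pulls the centroid to (3, 3), which changes which of the remaining words count as most central.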

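This article walked through keyword extraction from the titles and abstracts of the People's Daily Online Guangdong economic news data using TF-IDF, TextRank, and Word2vec, covering data preprocessing, word segmentation, stop word removal, and cluster analysis, with the aim of extracting the key information of news content automatically.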
