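1. The problem

While training a robot with SAC to navigate to a target point, I ran into an error. The network is a custom CNN, and at runtime it failed with the error from the title of this post, reproduced below.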

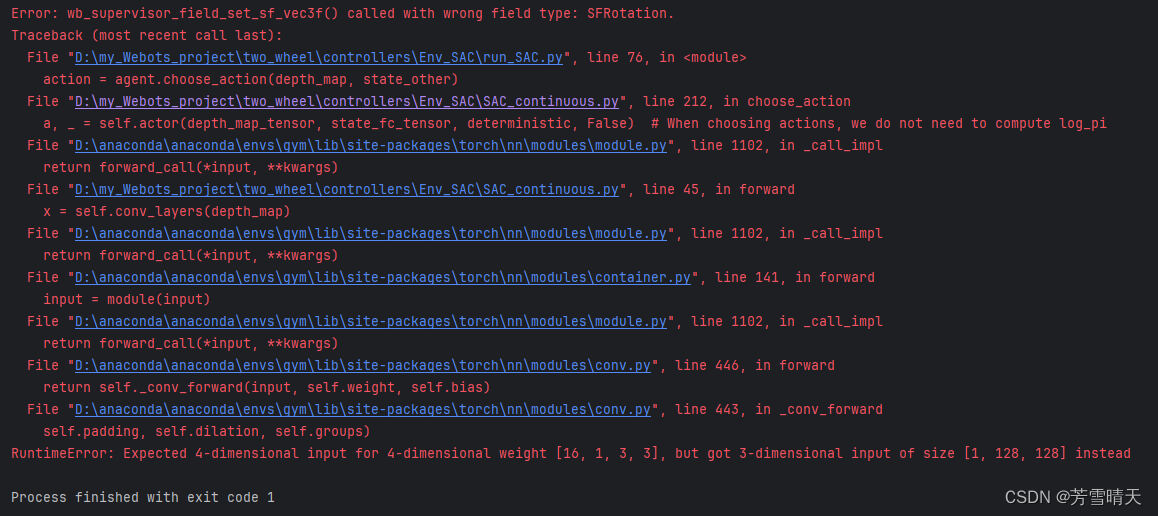

RuntimeError: Expected 4-dimensional input for 4-dimensional weight [16, 1, 3, 3], but got 3-dimensional input of size [1, 128, 128] instead

2. My code

First, here is my custom CNN network:

    import torch.nn as nn


    class Actor(nn.Module):
        # max_action: the maximum value of an action
        def __init__(self, state_dim_fc, action_dim, hidden_width, max_action):
            super(Actor, self).__init__()
            self.max_action = max_action
            self.conv_layers = nn.Sequential(
                nn.Conv2d(1, 16, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                # First max-pooling layer: 2x2 kernel, stride 2
                nn.MaxPool2d(kernel_size=2, stride=2),
                nn.Conv2d(16, 32, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                # Second max-pooling layer: 2x2 kernel, stride 2
                nn.MaxPool2d(kernel_size=2, stride=2),
                nn.Conv2d(32, 64, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                # Third max-pooling layer: 2x2 kernel, stride 2
                nn.MaxPool2d(kernel_size=2, stride=2)
            )
            # Each of the three pooling layers halves the feature map
            # (the padded 3x3 convolutions keep its size), so 128 -> 16
            feature_map_size = 64 * (128 // 8) * (128 // 8)
            # Combine the convolutional output with the other coordinate information
            self.fc_input_dim = feature_map_size + state_dim_fc
            self.l1 = nn.Linear(self.fc_input_dim, hidden_width)
            self.l2 = nn.Linear(hidden_width, hidden_width)
            # Two output heads: mean_layer outputs the action mean,
            # log_std_layer outputs the log standard deviation of the action
            self.mean_layer = nn.Linear(hidden_width, action_dim)
            self.log_std_layer = nn.Linear(hidden_width, action_dim)

        # Continuous action space, Gaussian policy
        def forward(self, depth_map, state_fc, deterministic=False, with_logprob=True):
            # Pass the depth map through the convolutional layers
            x = self.conv_layers(depth_map)
            # Flatten the convolutional output
            x = x.view(x.size(0), -1)
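As a side check on the `feature_map_size` value used in the constructor, the spatial size after each layer can be worked out by hand. The sketch below is plain Python and uses the standard output-size formula for convolution and pooling layers; the helper name `out_size` is hypothetical and not part of the original code.

```python
def out_size(size, kernel, stride=1, padding=0):
    """Output length along one spatial axis for a conv or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


size = 128
for _ in range(3):
    # 3x3 convolution with stride 1 and padding 1 keeps the size unchanged
    size = out_size(size, kernel=3, stride=1, padding=1)
    # 2x2 max pooling with stride 2 halves it
    size = out_size(size, kernel=2, stride=2)

feature_map_size = 64 * size * size
print(size, feature_map_size)  # 16 16384
```

This agrees with `64 * (128 // 8) * (128 // 8)` in the constructor, assuming the depth map really is 128x128.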

The first line of the forward function raises an error. The full output is:

    Error: wb_supervisor_field_set_sf_vec3f() called with wrong field type: SFRotation.
    Traceback (most recent call last):
      File "D:\my_Webots_project\two_wheel\controllers\Env_SAC\run_SAC.py", line 76, in <module>
        action = agent.choose_action(depth_map, state_other)
      File "D:\my_Webots_project\two_wheel\controllers\Env_SAC\SAC_continuous.py", line 212, in choose_action
        a, _ = self.actor(depth_map_tensor, state_fc_tensor, deterministic, False) # When choosing actions, we do not need to compute log_pi
      File "D:\anaconda\anaconda\envs\gym\lib\site-packages\torch\nn\modules\module.py", line 1102, in _call_impl
        return forward_call(*input, **kwargs)
      File "D:\my_Webots_project\two_wheel\controllers\Env_SAC\SAC_continuous.py", line 45, in forward
        x = self.conv_layers(depth_map)
      File "D:\anaconda\anaconda\envs\gym\lib\site-packages\torch\nn\modules\module.py", line 1102, in _call_impl
        return forward_call(*input, **kwargs)
      File "D:\anaconda\anaconda\envs\gym\lib\site-packages\torch\nn\modules\container.py", line 141, in forward
        input = module(input)
      File "D:\anaconda\anaconda\envs\gym\lib\site-packages\torch\nn\modules\module.py", line 1102, in _call_impl
        return forward_call(*input, **kwargs)
      File "D:\anaconda\anaconda\envs\gym\lib\site-packages\torch\nn\modules\conv.py", line 446, in forward
        return self._conv_forward(input, self.weight, self.bias)
      File "D:\anaconda\anaconda\envs\gym\lib\site-packages\torch\nn\modules\conv.py", line 443, in _conv_forward
        self.padding, self.dilation, self.groups)
    RuntimeError: Expected 4-dimensional input for 4-dimensional weight [16, 1, 3, 3], but got 3-dimensional input of size [1, 128, 128] instead

The last line is the key. The data passed into the CNN has the wrong number of dimensions: the first convolutional layer expects a 4-dimensional input, but it received a 3-dimensional tensor.

3. The solution

In PyTorch, a 2D convolutional layer normally expects a four-dimensional tensor as input, with shape [batch_size, channels, height, width].
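To make the expected layout concrete, here is a minimal sketch that rebuilds only the first layer of the network, `nn.Conv2d(1, 16, kernel_size=3, stride=1, padding=1)`, and feeds it an all-zero dummy tensor. The dummy input is an assumption for illustration, not real depth data. The weight shape it prints is the `[16, 1, 3, 3]` that appears in the error message.

```python
import torch
import torch.nn as nn

# Same configuration as the first convolutional layer in the Actor
conv = nn.Conv2d(1, 16, kernel_size=3, stride=1, padding=1)

# Weight layout is [out_channels, in_channels, kernel_h, kernel_w]
print(tuple(conv.weight.shape))  # (16, 1, 3, 3)

# Dummy input laid out as [batch_size, channels, height, width]
batch = torch.zeros(1, 1, 128, 128)
out = conv(batch)
print(tuple(out.shape))  # (1, 16, 128, 128)
```

As far as I know, some newer PyTorch releases also accept a 3-D tensor and treat it as an unbatched (channels, height, width) input, so whether a 3-D tensor triggers this exact error depends on the installed version.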

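The depth_map I pass in has shape [1, 128, 128], so it is one dimension short. Reading the leading 1 as the batch size, the missing dimension is channels. For a grayscale (single-channel) image such as a depth map, the channels dimension should be 1, and it must be present in the tensor before it reaches the convolutional layers.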

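To fix this, make sure depth_map has shape [batch_size, 1, 128, 128]. If depth_map is a single image (batch_size of 1), add a channel dimension before passing it to the convolutional layers. This can be done with the unsqueeze method, as follows: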

    def forward(self, depth_map, state_fc, deterministic=False, with_logprob=True):
        # Pass the depth map through the convolutional layers.
        # Make sure depth_map is 4-D: if it is 3-D, add a channel dimension
        if len(depth_map.shape) == 3:
            depth_map = depth_map.unsqueeze(1)  # [batch_size, 128, 128] -> [batch_size, 1, 128, 128]
        x = self.conv_layers(depth_map)
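The same shape check can be tried on its own, outside the network. The sketch below assumes depth maps arrive either as a single [H, W] image or as a [batch_size, H, W] stack. The helper name `ensure_nchw` is hypothetical and not part of the original code.

```python
import torch


def ensure_nchw(depth_map: torch.Tensor) -> torch.Tensor:
    """Hypothetical helper: return depth_map shaped [batch_size, 1, H, W]."""
    if depth_map.dim() == 2:
        # [H, W] -> [1, 1, H, W]: add both batch and channel dimensions
        depth_map = depth_map.unsqueeze(0).unsqueeze(0)
    elif depth_map.dim() == 3:
        # [batch_size, H, W] -> [batch_size, 1, H, W]: add the channel dimension
        depth_map = depth_map.unsqueeze(1)
    elif depth_map.dim() != 4:
        raise ValueError(f"unexpected depth_map shape: {tuple(depth_map.shape)}")
    return depth_map


single = torch.zeros(1, 128, 128)   # the shape from the error message
stacked = torch.zeros(32, 128, 128)  # e.g. a batch sampled during training
print(tuple(ensure_nchw(single).shape))   # (1, 1, 128, 128)
print(tuple(ensure_nchw(stacked).shape))  # (32, 1, 128, 128)
```

Using `unsqueeze(1)` rather than `unsqueeze(0)` matters once the batch size is larger than 1: it keeps the leading dimension as the batch and inserts the single channel after it.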
