
I. Code with Detailed Comments

MNIST dataset

```python
## 1. Load the dataset (Dataset)
## 2. Data loader (DataLoader)
## 3. Load the dataset in batches
## 4. Visualize the dataset
## 5. Define the model
## 6. Define the loss function and optimizer
## 7. Train the model
## 8. Evaluate the model

import torch
from torch import nn
import torchvision
from torchvision import transforms
import matplotlib.pyplot as plt
from PIL import Image
import numpy as np

print(torch.__version__)

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

## 1. Load the dataset (Dataset)
# Use the same root for both splits so the files are downloaded and stored only once
train_dataset = torchvision.datasets.MNIST(
    root="./mnist",
    train=True,
    transform=transforms.ToTensor(),
    download=True)
test_dataset = torchvision.datasets.MNIST(
    root="./mnist",
    train=False,
    transform=transforms.ToTensor(),
    download=True)

## 2. Data loader (DataLoader)
train_dataloader = torch.utils.data.DataLoader(
    dataset=train_dataset,
    shuffle=True,
    batch_size=64)
test_dataloader = torch.utils.data.DataLoader(
    dataset=test_dataset,
    shuffle=False,
    batch_size=64)

## 3. Load the dataset in batches
for batch_idx, (images, labels) in enumerate(train_dataloader):
    images = images.to(device)           # move the image tensor to the device (GPU if available)
    images = images.reshape(-1, 28, 28)  # (B, 1, 28, 28) -> (B, 28, 28): each image becomes a 28x28 2D array
    if batch_idx == len(train_dataloader) - 1:  # is this the last batch?
        print(images.shape, labels.shape)       # print the shapes of the last batch's images and labels
```

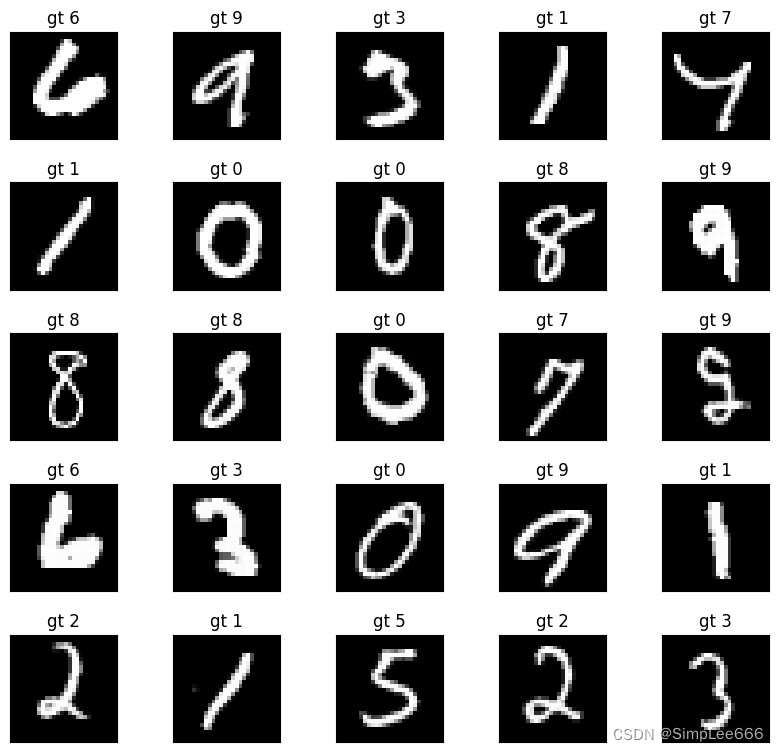
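In step 3, `reshape(-1, 28, 28)` only drops the size-1 channel dimension: each image becomes a 28×28 2D array, so the batch is a 3D tensor. The `-1` tells PyTorch to infer that dimension from the total number of elements. A minimal sketch on a random tensor standing in for a real MNIST batch (the random data is an assumption so the snippet runs without downloading anything), also showing the flattening that the model's `x.view(-1, 28*28)` performs:

```python
import torch

# Random stand-in shaped like one MNIST batch from the DataLoader: (batch, channel, height, width)
images = torch.rand(64, 1, 28, 28)

# reshape(-1, 28, 28): the -1 is inferred as 64*1*28*28 / (28*28) = 64
as_2d = images.reshape(-1, 28, 28)
print(as_2d.shape)                     # torch.Size([64, 28, 28])

# squeeze(1) removes the size-1 channel dimension and gives the same tensor
squeezed = images.squeeze(1)
print(torch.equal(as_2d, squeezed))    # True

# view(-1, 28*28) flattens each image into a 784-element vector, as SimpleNN.forward does
flat = images.view(-1, 28 * 28)
print(flat.shape)                      # torch.Size([64, 784])
```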

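```python
## 4. Visualize the dataset
images, labels = next(iter(train_dataloader))  # shuffle=True, so this draws a random batch into images and labels
print(images.shape, labels.shape)  # torch.Size([64, 1, 28, 28]) torch.Size([64]): a single channel (1)

fig = plt.figure(figsize=(8, 8))
for i in range(25):
    plt.subplot(5, 5, i + 1)
    plt.imshow(images[i][0], cmap='gray', interpolation='none')
    plt.title("gt {}".format(labels[i]))  # "gt" = ground truth, i.e. the true label
    plt.xticks([])
    plt.yticks([])
plt.tight_layout()
plt.show()

# subplot ==> draws a 5x5 grid of images; subplot indices start at 1, hence i+1
# tight_layout ==> automatically adjusts the subplot parameters so the subplots fill the figure with less overlap;
#                  calling it once after all subplots exist is enough
# images[i] is the (i+1)-th image of the batch (i starts at 0); [0] selects the first channel (grayscale images have only one)
# cmap='gray' sets the colormap, since these are grayscale images
# interpolation='none' disables interpolation when the image is enlarged, so the pixels stay sharp instead of blurred
# format() inserts its argument into the {} placeholder
# plt.xticks([])  # hide the ticks
# plt.xticks([0, 2, 4], ['zero', 'two', 'four'])  # set the tick labels
# plt.xticks(color='red', size=10, rotation=45)  # set the tick style
```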

```python
## 5. Define the model
class SimpleNN(nn.Module):
    def __init__(self):
        super(SimpleNN, self).__init__()
        self.fc1 = nn.Linear(28*28, 128)  # hidden layer: 784 inputs -> 128 units
        self.fc2 = nn.Linear(128, 10)     # output layer: one output per class (10 classes)

    def forward(self, x):
        x = x.view(-1, 28*28)             # flatten each input image into a 784-element vector
        x = nn.functional.relu(self.fc1(x))
        x = self.fc2(x)
        return x

model = SimpleNN().to(device)
```
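To check the shapes flowing through `SimpleNN` without downloading MNIST, the model can be run on a random batch. A minimal sketch, assuming a random tensor as a stand-in for real data; the class is the one defined above, written with the shorter `super().__init__()` form:

```python
import torch
from torch import nn

class SimpleNN(nn.Module):
    """Same two-layer MLP as above: 784 -> 128 -> 10."""
    def __init__(self):
        super().__init__()
        self.fc1 = nn.Linear(28 * 28, 128)
        self.fc2 = nn.Linear(128, 10)

    def forward(self, x):
        x = x.view(-1, 28 * 28)              # (B, 1, 28, 28) -> (B, 784)
        x = nn.functional.relu(self.fc1(x))  # (B, 784) -> (B, 128)
        return self.fc2(x)                   # (B, 128) -> (B, 10) raw scores (logits)

model = SimpleNN()
dummy = torch.rand(64, 1, 28, 28)            # random stand-in for one MNIST batch
logits = model(dummy)
print(logits.shape)                          # torch.Size([64, 10])

# fc1: 784*128 weights + 128 biases; fc2: 128*10 weights + 10 biases
n_params = sum(p.numel() for p in model.parameters())
print(n_params)                              # 101770
```

The output layer returns raw logits with no softmax, because `nn.CrossEntropyLoss` applies log-softmax internally.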

```python
## 6. Define the loss function and optimizer
criterion = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

## 7. Train the model
num_epochs = 30
for epoch in range(num_epochs):
    model.train()
    for images, labels in train_dataloader:
        images, labels = images.to(device), labels.to(device)
        optimizer.zero_grad()              # clear the gradients from the previous step
        outputs = model(images)
        loss = criterion(outputs, labels)
        loss.backward()                    # backpropagate
        optimizer.step()                   # update the weights
    print(f'Epoch {epoch+1}, Loss: {loss.item()}')  # loss of the last mini-batch of this epoch only
```
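The training loop prints `loss.item()` for the last mini-batch of each epoch only (for MNIST that batch holds just 32 samples, since 60000 = 937 × 64 + 32), so the logged values fluctuate from epoch to epoch. A minimal sketch of logging the epoch-average loss instead, assuming random synthetic tensors in place of MNIST so it runs offline, and an `nn.Sequential` model with the same layers as `SimpleNN`:

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)

# Hypothetical synthetic stand-in for MNIST: 512 random "images" with random labels 0..9
X = torch.rand(512, 1, 28, 28)
y = torch.randint(0, 10, (512,))
loader = DataLoader(TensorDataset(X, y), batch_size=64, shuffle=True)

# Same architecture as SimpleNN: flatten -> 784x128 -> ReLU -> 128x10
model = nn.Sequential(nn.Flatten(), nn.Linear(28 * 28, 128), nn.ReLU(), nn.Linear(128, 10))
criterion = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

epoch_losses = []
for epoch in range(3):
    model.train()
    running_loss, n_seen = 0.0, 0
    for images, labels in loader:
        optimizer.zero_grad()
        loss = criterion(model(images), labels)
        loss.backward()
        optimizer.step()
        # loss is the mean over the batch, so weight it by the batch size before summing
        running_loss += loss.item() * images.size(0)
        n_seen += images.size(0)
    epoch_losses.append(running_loss / n_seen)
    print(f'Epoch {epoch+1}, average loss: {epoch_losses[-1]:.4f}')
```

Weighting by `images.size(0)` keeps the average exact even when the final batch is smaller than the others.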

```python
## 8. Evaluate the model
model.eval()
with torch.no_grad():
    correct = 0
    total = 0
    for images, labels in test_dataloader:
        images, labels = images.to(device), labels.to(device)
        outputs = model(images)
        _, predicted = torch.max(outputs.data, 1)  # index of the largest logit = predicted class
        total += labels.size(0)
        correct += (predicted == labels).sum().item()

accuracy = correct / total
print(f'Accuracy on test set: {100 * accuracy:.2f}%')
```

```text
Epoch 1, Loss: 0.6197516322135925
Epoch 2, Loss: 0.34904736280441284
Epoch 3, Loss: 0.43813589215278625
Epoch 4, Loss: 0.47811999917030334
Epoch 5, Loss: 0.4017272889614105
Epoch 6, Loss: 0.32648470997810364
Epoch 7, Loss: 0.32351598143577576
Epoch 8, Loss: 0.18087905645370483
Epoch 9, Loss: 0.35925230383872986
Epoch 10, Loss: 0.2774750590324402
Epoch 11, Loss: 0.289463609457016
Epoch 12, Loss: 0.16053856909275055
Epoch 13, Loss: 0.31605976819992065
Epoch 14, Loss: 0.26499032974243164
Epoch 15, Loss: 0.27009227871894836
Epoch 16, Loss: 0.10569143295288086
Epoch 17, Loss: 0.10770886391401291
Epoch 18, Loss: 0.05340247601270676
Epoch 19, Loss: 0.1449984759092331
Epoch 20, Loss: 0.3005489110946655
Epoch 21, Loss: 0.5192596316337585
Epoch 22, Loss: 0.04057912528514862
Epoch 23, Loss: 0.2609449028968811
Epoch 24, Loss: 0.21828599274158478
Epoch 25, Loss: 0.08382154256105423
...
Epoch 28, Loss: 0.09783965349197388
Epoch 29, Loss: 0.05186847969889641
Epoch 30, Loss: 0.07308951765298843
Accuracy on test set: 95.58%
```

FashionMNIST dataset

```python
import torch
from torch import nn
import torchvision
from torchvision import transforms
import matplotlib.pyplot as plt
from PIL import Image
import numpy as np

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

## 1. Load the dataset (Dataset)
# Use the same root for both splits so the files are downloaded and stored only once
train_dataset = torchvision.datasets.FashionMNIST(
    root="./fashionmnist",
    train=True,
    transform=transforms.ToTensor(),
    download=True)
test_dataset = torchvision.datasets.FashionMNIST(
    root="./fashionmnist",
    train=False,
    transform=transforms.ToTensor(),
    download=True)

## 2. Data loader (DataLoader)
train_dataloader = torch.utils.data.DataLoader(
    dataset=train_dataset,
    shuffle=True,
    batch_size=64)
test_dataloader = torch.utils.data.DataLoader(
    dataset=test_dataset,
    shuffle=False,
    batch_size=64)

## 3. Load the dataset in batches
for batch_idx, (images, labels) in enumerate(train_dataloader):
    images = images.to(device)           # move the image tensor to the device (GPU if available)
    images = images.reshape(-1, 28, 28)  # (B, 1, 28, 28) -> (B, 28, 28): each image becomes a 28x28 2D array
    if batch_idx == len(train_dataloader) - 1:  # is this the last batch?
        print(images.shape, labels.shape)       # print the shapes of the last batch's images and labels
```

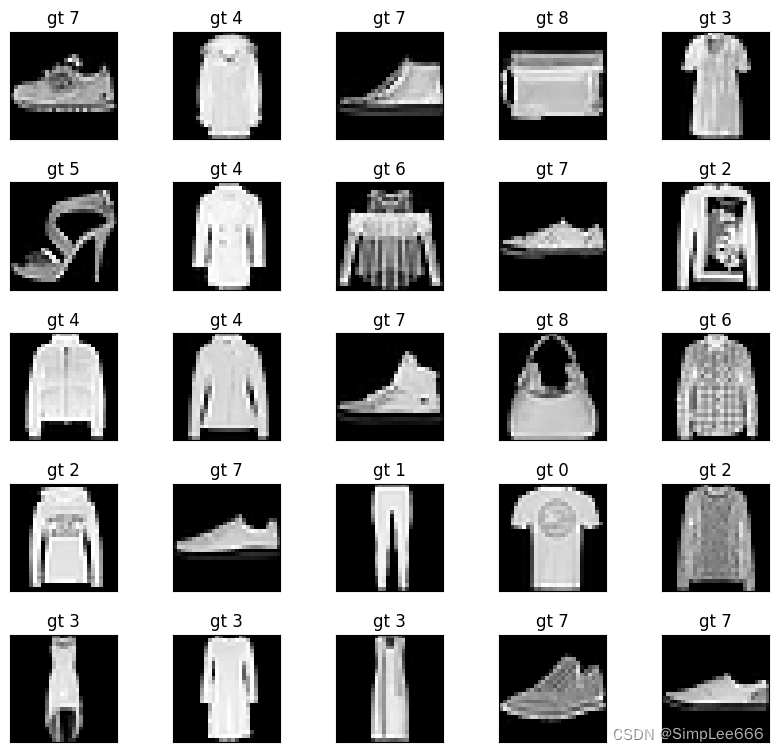
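In the grid, each FashionMNIST image is titled with its integer label (for example `gt 9`), which is harder to read than for digits. The dataset's `classes` attribute lists the class names in label order, so `train_dataset.classes[labels[i].item()]` gives a readable title. A minimal sketch; `label_names` is a hypothetical helper, and the list is hard-coded here (copied from torchvision's `FashionMNIST.classes`) so the snippet runs without a download:

```python
import torch

# Class names in label-index order, as in torchvision's FashionMNIST.classes
FASHION_CLASSES = ['T-shirt/top', 'Trouser', 'Pullover', 'Dress', 'Coat',
                   'Sandal', 'Shirt', 'Sneaker', 'Bag', 'Ankle boot']

def label_names(labels, classes=FASHION_CLASSES):
    """Map a 1-D tensor of integer labels to their class-name strings."""
    return [classes[i] for i in labels.tolist()]

print(label_names(torch.tensor([0, 9, 5])))  # ['T-shirt/top', 'Ankle boot', 'Sandal']
```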

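```python
## 4. Visualize the dataset
images, labels = next(iter(train_dataloader))  # shuffle=True, so this draws a random batch into images and labels
print(images.shape, labels.shape)  # torch.Size([64, 1, 28, 28]) torch.Size([64]): a single channel (1)

fig = plt.figure(figsize=(8, 8))
for i in range(25):
    plt.subplot(5, 5, i + 1)
    plt.imshow(images[i][0], cmap='gray', interpolation='none')
    plt.title("gt {}".format(labels[i]))  # "gt" = ground truth, i.e. the true label
    plt.xticks([])
    plt.yticks([])
plt.tight_layout()
plt.show()

# subplot ==> draws a 5x5 grid of images; subplot indices start at 1, hence i+1
# tight_layout ==> automatically adjusts the subplot parameters so the subplots fill the figure with less overlap;
#                  calling it once after all subplots exist is enough
# images[i] is the (i+1)-th image of the batch (i starts at 0); [0] selects the first channel (grayscale images have only one)
# cmap='gray' sets the colormap, since these are grayscale images
# interpolation='none' disables interpolation when the image is enlarged, so the pixels stay sharp instead of blurred
# format() inserts its argument into the {} placeholder
# plt.xticks([])  # hide the ticks
# plt.xticks([0, 2, 4], ['zero', 'two', 'four'])  # set the tick labels
# plt.xticks(color='red', size=10, rotation=45)  # set the tick style
```

CIFAR10 color dataset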

```python
import torch
from torch import nn
import torchvision
from torchvision import transforms
import matplotlib.pyplot as plt
from PIL import Image
import numpy as np

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

### 1. Load the dataset (Dataset)
# Store CIFAR10 in its own directory, with the same root for both splits
train_dataset = torchvision.datasets.CIFAR10(
    root="./cifar10",
    train=True,
    transform=transforms.ToTensor(),
    download=True)
test_dataset = torchvision.datasets.CIFAR10(
    root="./cifar10",
    train=False,
    transform=transforms.ToTensor(),
    download=True)
# print(train_dataset.classes)  # check the dataset's class names

### 2. Data loader (DataLoader)
train_dataloader = torch.utils.data.DataLoader(
    dataset=train_dataset,
    shuffle=True,
    batch_size=64)
test_dataloader = torch.utils.data.DataLoader(
    dataset=test_dataset,
    shuffle=False,
    batch_size=64)
# print(len(train_dataloader))     # number of batches in the DataLoader
# print(len(train_dataset) // 64)  # number of full batches
# print(len(train_dataset) % 64)   # samples left over for the last batch = 16
```
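The commented-out checks above compute the number of batches by hand. For CIFAR10's 50000 training images and `batch_size=64`, 50000 = 781 × 64 + 16, so the DataLoader yields 781 full batches plus one final batch of 16, and `len(train_dataloader)` is 782. A small sketch confirming this arithmetic, and the effect of DataLoader's `drop_last` argument, on a zero-filled stand-in dataset of the same length (an assumption so it runs without downloading CIFAR10):

```python
import math
import torch
from torch.utils.data import DataLoader, TensorDataset

n_samples, batch_size = 50000, 64              # CIFAR10 training-set size and the batch size used above

full_batches = n_samples // batch_size         # 781 full batches
remainder = n_samples % batch_size             # 16 samples left for the final, smaller batch
n_batches = math.ceil(n_samples / batch_size)  # 782, which is what len(train_dataloader) reports

# Zero-filled stand-in dataset with the same number of samples (no download needed)
ds = TensorDataset(torch.zeros(n_samples, 1))
print(full_batches, remainder, n_batches)                          # 781 16 782
print(len(DataLoader(ds, batch_size=batch_size)))                  # 782
print(len(DataLoader(ds, batch_size=batch_size, drop_last=True)))  # 781: the partial batch is dropped
```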

```python
### 3. Load the dataset in batches
# for batch_idx, (images, labels) in enumerate(train_dataloader):
#     images = images.to(device)  # move the image tensor to the device
#     images = images.reshape(images.size(0), -1)  # flatten each 3x32x32 image into 3072 values (the MNIST reshape(-1,28,28) does not fit CIFAR10)
#     if batch_idx == len(train_dataloader) - 1:  # is this the last batch?
#         print(images.shape, labels.shape)  # print the shapes of the last batch's images and labels

### 4. Visualize the dataset - grayscale
# images, labels = next(iter(train_dataloader))  # shuffle=True, so this draws a random batch into images and labels
# print(images.shape, labels.shape)
# fig = plt.figure(figsize=(8, 8))
# for i in range(25):
#     plt.subplot(5, 5, i + 1)
#     plt.tight_layout()
#     plt.imshow(images[i][0], cmap='gray', interpolation='none')  # shows only channel 0 (red) as a grayscale image
#     plt.title("gt {}".format(labels[i]))
#     plt.xticks([])
#     plt.yticks([])
```

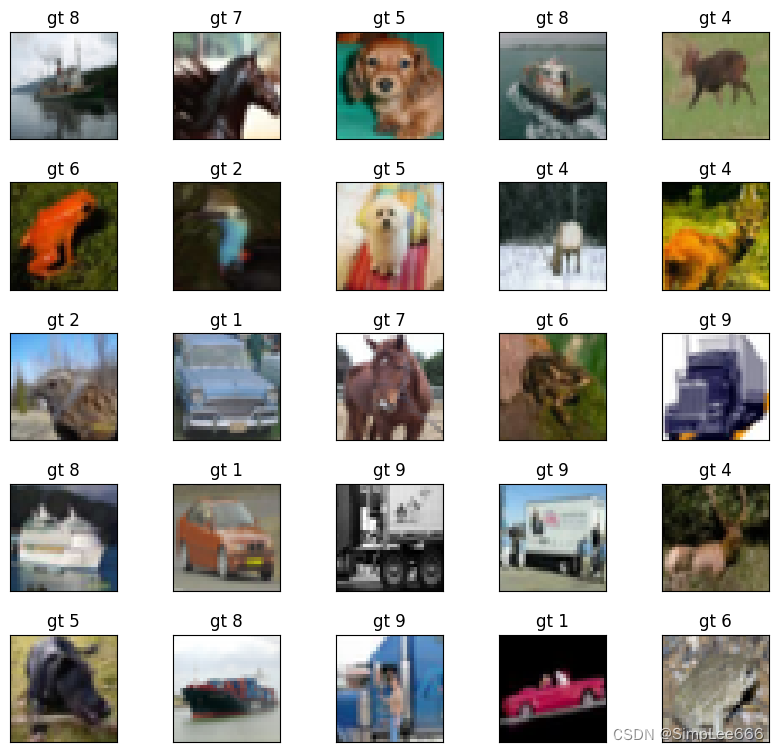
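`transforms.ToTensor()` returns each CIFAR10 image as a (C, H, W) = (3, 32, 32) tensor, while `plt.imshow` expects color images as (H, W, C). The grayscale variant sidesteps this by showing only channel 0, so the color version has to move the channel axis last. A minimal sketch of two equivalent ways to do that, on a random tensor standing in for a real image:

```python
import numpy as np
import torch

img = torch.rand(3, 32, 32)                       # random stand-in for one CIFAR10 image after ToTensor: (C, H, W)

hwc_torch = img.permute(1, 2, 0)                  # PyTorch: reorder dimensions to (H, W, C)
hwc_numpy = np.transpose(img.numpy(), (1, 2, 0))  # NumPy: the same reordering, as used in the color visualization

print(hwc_torch.shape)                            # torch.Size([32, 32, 3])
print(np.allclose(hwc_torch.numpy(), hwc_numpy))  # True
# Either result can be passed to plt.imshow(...) to draw the image in color.
```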

```python
### 4. Visualize the dataset - color
images, labels = next(iter(train_dataloader))  # shuffle=True, so this draws a random batch into images and labels
print(images.shape, labels.shape)  # torch.Size([64, 3, 32, 32]) torch.Size([64]): three channels (3), i.e. RGB

fig = plt.figure(figsize=(8, 8))
for i in range(25):
    plt.subplot(5, 5, i + 1)
    # np.transpose reorders (C, H, W) into (H, W, C), the layout matplotlib expects for color images
    plt.imshow(np.transpose(images[i], (1, 2, 0)), interpolation='none')
    plt.title("gt {}".format(labels[i]))
    plt.xticks([])
    plt.yticks([])
plt.tight_layout()
plt.show()
```

II. Querying Dataset Attributes

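shape, classes, dtype, min/max, mean, std, len

The queries below assume an MNIST or FashionMNIST dataset (the comments show MNIST values), whose `data` and `targets` attributes are tensors. For CIFAR10, `data` is a NumPy array of shape (50000, 32, 32, 3) and `targets` is a Python list, so `targets.shape` and `data.float()` raise errors there.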
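The attributes listed above can be collected in one place. A minimal sketch, assuming only that the dataset exposes torchvision-style `data` and `targets` attributes; `describe` is a hypothetical helper (not part of torchvision), and the two `SimpleNamespace` objects are stand-ins so the snippet runs without downloads. Converting with `torch.as_tensor` lets the same code handle tensor storage (MNIST, FashionMNIST) and NumPy/list storage (CIFAR10):

```python
import numpy as np
import torch
from types import SimpleNamespace

def describe(dataset):
    """Collect shape, dtype, value range, mean/std and length of a torchvision-style dataset.

    Reads the raw `data` / `targets` attributes (no transform applied). Works whether they
    are tensors (MNIST, FashionMNIST) or a NumPy array and a list (CIFAR10).
    """
    data = torch.as_tensor(dataset.data)        # NumPy array -> tensor (shares memory, no copy)
    targets = torch.as_tensor(dataset.targets)  # list of ints -> int64 tensor
    pixels = data.float()                       # mean()/std() need a floating-point dtype
    return {
        'data_shape': tuple(data.shape),
        'targets_shape': tuple(targets.shape),
        'dtype': data.dtype,
        'min': data.min().item(),
        'max': data.max().item(),
        'mean': pixels.mean().item(),
        'std': pixels.std().item(),
        'len': len(data),
    }

# Hypothetical stand-ins with the same attribute layout as the real datasets
mnist_like = SimpleNamespace(data=torch.zeros(3, 28, 28, dtype=torch.uint8),
                             targets=torch.tensor([5, 0, 4]))
cifar_like = SimpleNamespace(data=np.full((2, 32, 32, 3), 255, dtype=np.uint8),
                             targets=[6, 9])
print(describe(mnist_like))
print(describe(cifar_like))
# With the real datasets: describe(train_dataset)
```

Dividing `mean` and `std` by 255 gives the statistics on the [0, 1] scale produced by `ToTensor`, which is the scale `transforms.Normalize` works on; for the MNIST training set this yields the commonly quoted 0.1307 and 0.3081.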

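1. Size (shape)

```python
print(train_dataset.data.shape, train_dataset.targets.shape)  # training set: torch.Size([60000, 28, 28]) torch.Size([60000])
print(test_dataset.data.shape, test_dataset.targets.shape)    # test set: torch.Size([10000, 28, 28]) torch.Size([10000])
```

2. Classes (classes)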

```python
print(train_dataset.classes)  # class names, in label-index order
print(test_dataset.classes)
```

3. Data type (dtype)

```python
print(train_dataset.data.dtype)  # torch.uint8
print(test_dataset.data.dtype)
```

4. Sample statistics (min/max, mean, std)

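```python
print(train_dataset.data.min(), train_dataset.data.max())  # raw pixel values range from 0 to 255
# Mean and standard deviation; cast to float first, because mean()/std() are not defined for uint8 tensors
print(train_dataset.data.float().mean(), train_dataset.data.float().std())
```

5. Length (len)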

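```python
image, label = train_dataset[0]  # first sample; indexing applies the transform, unlike .data, which holds raw uint8 pixels
print(image.shape)               # torch.Size([1, 28, 28]) after ToTensor
print(label)                     # integer class label
print(len(train_dataset))        # number of samples: 60000
```

Reference video for these notes: "[动手写神经网络] 01 认识 pytorch 中的 dataset、dataloader(mnist、fashionmnist、cifar10)" ("Hands-on neural networks 01: understanding dataset and dataloader in PyTorch (MNIST, FashionMNIST, CIFAR10)"), Bilibili.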

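This article walks through using the MNIST, FashionMNIST and CIFAR10 datasets in PyTorch: loading them with Dataset and DataLoader, converting the images to tensors with ToTensor, and visualizing samples from all three. For MNIST it also defines a simple fully connected model, sets up the loss function and optimizer, and trains and evaluates the model.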
