Service in Detail

Service Overview

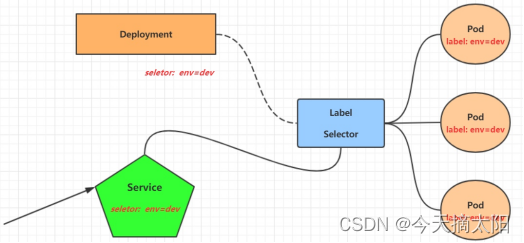

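In Kubernetes, a Pod is the carrier of an application, and we can reach the application through the Pod's IP. However, Pod IP addresses are not fixed (a recreated Pod usually gets a new one), so accessing a service directly by Pod IP is inconvenient.

To solve this, Kubernetes provides the Service resource. A Service aggregates the Pods that provide the same service and exposes a single, stable entry address. Requests sent to that entry address reach the Pods behind it.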

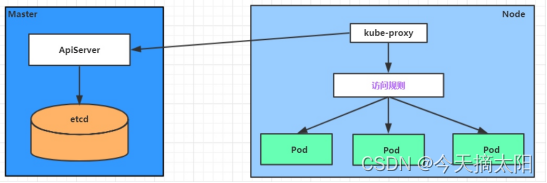

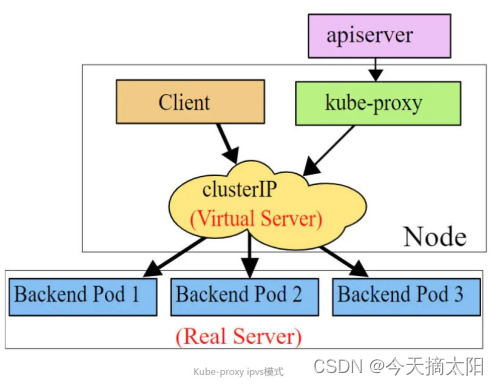

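In many respects a Service is just a concept. The component that actually does the work is the kube-proxy process, and one kube-proxy runs on every Node. When a Service is created, its information is written to etcd through the API server. kube-proxy watches for such Service changes and converts the latest Service information into the corresponding forwarding rules.

# 10.97.97.97:80 is the access entry point provided by the Service
# Behind this entry point, three Pods are waiting to serve requests
# kube-proxy distributes each request to one of these Pods using the rr (round-robin) policy
# These rules are generated on every node in the cluster, so the Service can be accessed from any node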
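The following minimal Python sketch makes the round-robin behavior concrete. It models a Service entry point that hands successive requests to its backend Pods in turn. This is a toy model only. The Pod addresses are hypothetical placeholders, and the real kube-proxy programs kernel rules (iptables or ipvs) instead of running code like this. The `update_endpoints` method stands in for kube-proxy reacting to a Service change.

```python
from itertools import cycle
from typing import List


class RoundRobinService:
    """Toy model of a Service VIP that forwards each request to the next Pod."""

    def __init__(self, vip: str, endpoints: List[str]) -> None:
        self.vip = vip
        self.endpoints = list(endpoints)
        self._next = cycle(self.endpoints)  # endless iterator over the backends

    def update_endpoints(self, endpoints: List[str]) -> None:
        """Mimic kube-proxy reacting to a Service/Pod change: rebuild the rotation."""
        self.endpoints = list(endpoints)
        self._next = cycle(self.endpoints)

    def route(self) -> str:
        """Return the backend Pod address chosen for the next request."""
        return next(self._next)


if __name__ == "__main__":
    # Hypothetical addresses: one Service VIP with three backend Pods.
    svc = RoundRobinService("10.97.97.97:80", ["10.244.1.10:80", "10.244.1.11:80", "10.244.2.10:80"])
    for _ in range(4):
        print(svc.vip, "->", svc.route())
```

The fourth request wraps around to the first Pod again, which is the "rr" behavior that ipvsadm reports in the rules below.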

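The ipvs mode requires the IPVS kernel modules. If they are not available, kube-proxy falls back to iptables mode.

If ipvsadm (the IPVS management tool used below to inspect the rules) is not installed, install it first: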

[root@master ~]# ipvsadm -ln
-bash: ipvsadm: command not found
[root@master ~]# yum list installed | grep ipvsadm
[root@master ~]# yum -y install ipvsadm
[root@master ~]# ipvsadm -ln
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port Forward Weight ActiveConn InActConn

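# Enable ipvs: in the editor, set mode: "ipvs" in the kube-proxy configuration (an empty mode means iptables on Linux)
[root@master ~]# kubectl edit cm kube-proxy -n kube-system
configmap/kube-proxy edited

Delete the kube-proxy Pods. Their DaemonSet recreates them automatically, and the new Pods pick up the new configuration:

[root@master ~]# kubectl delete pod -l k8s-app=kube-proxy -n kube-system
pod "kube-proxy-cmmpk" deleted
pod "kube-proxy-qh7qf" deleted
pod "kube-proxy-t68kh" deleted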

[root@master ~]# ipvsadm -Ln
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port Forward Weight ActiveConn InActConn
TCP 172.17.0.1:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 172.17.0.1:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 192.168.193.128:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 192.168.193.128:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 10.96.0.1:443 rr
  -> 192.168.193.128:6443 Masq 1 0 0
TCP 10.96.0.10:53 rr
  -> 10.244.0.4:53 Masq 1 0 0
  -> 10.244.0.5:53 Masq 1 0 0
TCP 10.96.0.10:9153 rr
  -> 10.244.0.4:9153 Masq 1 0 0
  -> 10.244.0.5:9153 Masq 1 0 0
TCP 10.97.237.88:80 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 10.110.93.150:80 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 10.110.167.164:80 rr
  -> 10.244.1.4:80 Masq 1 0 0
  -> 10.244.1.5:80 Masq 1 0 0
  -> 10.244.2.4:80 Masq 1 0 0
TCP 10.244.0.0:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 10.244.0.0:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 10.244.0.1:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 10.244.0.1:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
UDP 10.96.0.10:53 rr
  -> 10.244.0.4:53 Masq 1 0 0
  -> 10.244.0.5:53 Masq 1 0 0
[root@master ~]#

# View the iptables NAT rules
[root@master ~]# iptables -t nat -nvL

kube-proxy has supported three proxy modes (the userspace mode was later removed, in Kubernetes v1.26):

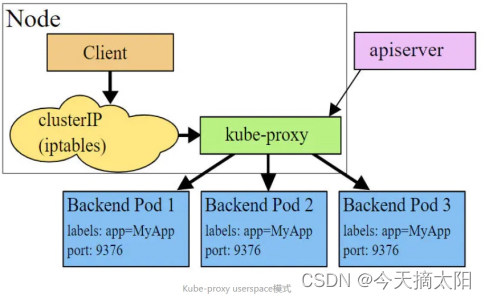

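userspace mode

In userspace mode, kube-proxy creates a listening port for each Service. iptables rules redirect requests sent to the Cluster IP to the port kube-proxy listens on. kube-proxy then selects a backend Pod according to its load-balancing (LB) algorithm, opens a connection to it, and forwards the request to that Pod. In this mode kube-proxy acts as a layer-4 load balancer. Because kube-proxy runs in user space, every forwarded request adds data copies between kernel space and user space. The mode is fairly stable but inefficient.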

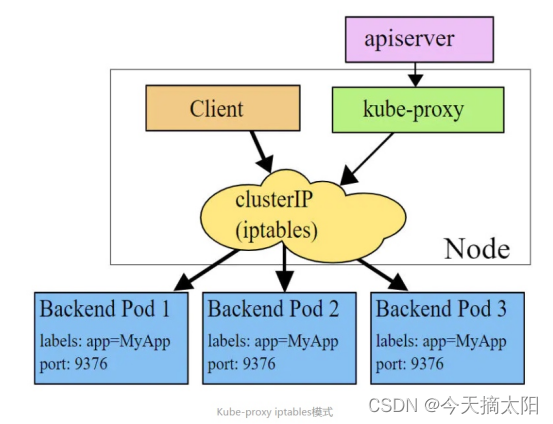

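iptables mode

In iptables mode, kube-proxy creates iptables rules for each backend Pod of a Service. These rules redirect requests sent to the Cluster IP directly to a Pod IP. In this mode kube-proxy no longer acts as a layer-4 load balancer; it only creates the iptables rules. The advantage is higher efficiency than userspace mode. The drawbacks are that it cannot offer flexible LB policies, and it cannot retry another Pod when the selected backend Pod is unavailable.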
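In iptables mode the Pod is chosen inside the kernel as packets walk the chain of rules. kube-proxy does this with the iptables `statistic` match in random mode. With n endpoints, rule i (counting from 0) matches with probability 1/(n-i), and the last rule always matches. Together this gives an even split. The Python sketch below only checks that arithmetic with exact fractions. It does not generate real iptables rules.

```python
from fractions import Fraction
from typing import List


def iptables_probabilities(n: int) -> List[Fraction]:
    """Per-rule match probability for an n-endpoint chain evaluated in order."""
    # Rule i only sees traffic that skipped rules 0..i-1, so it must take
    # 1/(n - i) of what remains for the overall split to be uniform.
    return [Fraction(1, n - i) for i in range(n)]


def effective_share(probs: List[Fraction]) -> List[Fraction]:
    """Overall fraction of traffic that each rule ends up receiving."""
    remaining = Fraction(1)
    shares = []
    for p in probs:
        shares.append(remaining * p)
        remaining *= 1 - p
    return shares


probs = iptables_probabilities(3)
print([str(p) for p in probs])                   # ['1/3', '1/2', '1']
print([str(s) for s in effective_share(probs)])  # ['1/3', '1/3', '1/3']
```

A random choice made once per new connection, with no feedback from the Pod, is also why this mode cannot retry a different Pod when the chosen one fails.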

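ipvs mode

ipvs mode is similar to iptables mode: kube-proxy monitors Pod changes and creates the corresponding ipvs rules. ipvs forwards traffic more efficiently than iptables, and it also supports more LB algorithms.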
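IPVS ships several schedulers, for example rr (round-robin), wrr (weighted round-robin), lc (least-connection) and sh (source hashing). kube-proxy lets you choose one through the `ipvs.scheduler` field of its configuration; an empty value means rr. The Python sketch below shows why the extra algorithms matter by modeling least-connection scheduling. It is a simplified model, not IPVS code, and the Pod names are hypothetical.

```python
from typing import Dict, List, Optional


def least_connection(active: Dict[str, int]) -> str:
    """lc-style choice: the backend with the fewest active connections.

    Ties go to the backend listed first, because min() keeps the first minimum.
    """
    return min(active, key=lambda backend: active[backend])


def simulate(backends: List[str], requests: int,
             preloaded: Optional[Dict[str, int]] = None) -> Dict[str, int]:
    """Assign `requests` long-lived connections, starting from `preloaded` counts."""
    active = {backend: 0 for backend in backends}
    if preloaded:
        active.update(preloaded)
    for _ in range(requests):
        active[least_connection(active)] += 1  # connections stay open in this toy model
    return active


if __name__ == "__main__":
    # Hypothetical situation: pod-a already holds 4 long-lived connections.
    print(simulate(["pod-a", "pod-b", "pod-c"], requests=4, preloaded={"pod-a": 4}))
```

Round-robin would keep sending new connections to the busy `pod-a`. Least-connection spreads the 4 new connections over `pod-b` and `pod-c` instead.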

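Service Types

The Service resource manifest:

kind: Service  # resource type
apiVersion: v1  # resource version
metadata:  # metadata
  name: service  # resource name
  namespace: dev  # namespace
spec:  # specification
  selector:  # label selector that determines which Pods this Service proxies
    app: nginx
  type:  # Service type, which determines how the Service is accessed
  clusterIP:  # IP address of the virtual service
  sessionAffinity:  # session affinity; supports ClientIP and None
  ports:  # port information
  - protocol: TCP
    port: 3017  # Service port
    targetPort: 5003  # Pod port
    nodePort: 31122  # node (host) port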

ClusterIP:默认值,它是Kubernetes系统自动分配的虚拟IP,只能在集群内部访问

NodePort:将Service通过指定的Node上的端口暴露给外部,通过此方法,就可以在集群外部访问服务

LoadBalancer:使用外接负载均衡器完成到服务的负载分发,注意此模式需要外部云环境支持

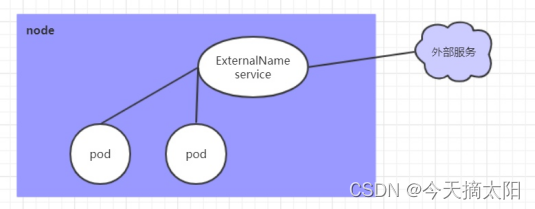

ExternalName: 把集群外部的服务引入集群内部,直接使用
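The first three types build on each other. A NodePort Service still has a ClusterIP, and a LoadBalancer Service also gets NodePorts by default. ExternalName skips proxying entirely and is only a DNS alias. The Python sketch below encodes that layering as a small, simplified model. The field names are illustrative and are not the real API schema. The node IPs and LB address in the test data are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ServiceSpec:
    svc_type: str = "ClusterIP"         # ClusterIP | NodePort | LoadBalancer | ExternalName
    cluster_ip: Optional[str] = None
    port: int = 80
    node_port: Optional[int] = None
    lb_ip: Optional[str] = None         # address assigned by the external load balancer
    external_name: Optional[str] = None


def entry_points(svc: ServiceSpec, node_ips: List[str]) -> List[str]:
    """List the addresses a client could use to reach the Service (simplified)."""
    if svc.svc_type == "ExternalName":
        # No proxying at all: cluster DNS just answers with a CNAME.
        return [f"CNAME {svc.external_name}"]
    points = [f"{svc.cluster_ip}:{svc.port}"]  # in-cluster virtual IP
    if svc.svc_type in ("NodePort", "LoadBalancer"):
        points += [f"{ip}:{svc.node_port}" for ip in node_ips]  # same port on every node
    if svc.svc_type == "LoadBalancer" and svc.lb_ip:
        points.append(f"{svc.lb_ip}:{svc.port}")  # external LB address
    return points


if __name__ == "__main__":
    nodeport = ServiceSpec("NodePort", "10.96.51.88", 80, 30002)
    print(entry_points(nodeport, ["192.168.193.128", "192.168.193.129"]))
```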

Using Services

Preparing the lab environment

Before using a Service, first create 3 Pods with a Deployment. Be sure to give the Pods the label app=nginx-pod.

Create deployment.yaml with the following content:

apiVersion: apps/v1
kind: Deployment
metadata:
  name: pc-deployment
  namespace: dev
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx-pod
  template:
    metadata:
      labels:
        app: nginx-pod
    spec:
      containers:
      - name: nginx
        image: nginx:1.17.1
        ports:
        - containerPort: 80

[root@master ~]# kubectl create -f deployment.yaml
deployment.apps/pc-deployment created

# View Pod details
[root@master ~]# kubectl get pods -n dev -o wide --show-labels
NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES LABELS
pc-deployment-66d5c85c96-6qxj7 1/1 Running 0 44s 10.244.1.6 node1.example.com <none> <none> app=nginx-pod,pod-template-hash=66d5c85c96
pc-deployment-66d5c85c96-lhvpv 1/1 Running 0 44s 10.244.2.6 node2.example.com <none> <none> app=nginx-pod,pod-template-hash=66d5c85c96
pc-deployment-66d5c85c96-nd72j 1/1 Running 0 44s 10.244.2.5 node2.example.com <none> <none> app=nginx-pod,pod-template-hash=66d5c85c96

# To make the later tests easier, change the index.html page of each of the three nginx Pods (write each Pod's own IP address)
# kubectl exec -it pc-deployment-66d5c85c96-6qxj7 -n dev -- /bin/sh
# echo "10.244.1.6" > /usr/share/nginx/html/index.html

# After the change, test access
[root@master ~]# curl 10.244.1.6
[root@master ~]# curl 10.244.2.6
[root@master ~]# curl 10.244.2.5

ClusterIP Service

Create the file service-clusterip.yaml:

apiVersion: v1
kind: Service
metadata:
  name: service-clusterip
  namespace: dev
spec:
  selector:
    app: nginx-pod
  clusterIP:  # IP address of the Service; if omitted, one is allocated automatically
  type: ClusterIP
  ports:
  - port: 80  # Service port
    targetPort: 80  # Pod port

# Create the Service
[root@master ~]# kubectl create -f service-clusterip.yaml
service/service-clusterip created

# View the Service
[root@master ~]# kubectl get svc -n dev -o wide
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR
service-clusterip ClusterIP 10.104.90.145 <none> 80/TCP 38s app=nginx-pod

# View the Service details
# The Endpoints list shows the entry points that this Service can balance traffic to
[root@master ~]# kubectl describe svc service-clusterip -n dev
Name: service-clusterip
Namespace: dev
Labels: <none>
Annotations: <none>
Selector: app=nginx-pod
Type: ClusterIP
IP Family Policy: SingleStack
IP Families: IPv4
IP: 10.104.90.145
IPs: 10.104.90.145
Port: <unset> 80/TCP
TargetPort: 80/TCP
Endpoints: 10.244.1.6:80,10.244.2.5:80,10.244.2.6:80
Session Affinity: None
Events: <none>

# View the ipvs mapping rules
[root@master ~]# ipvsadm -Ln
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port Forward Weight ActiveConn InActConn
TCP 172.17.0.1:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 172.17.0.1:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 192.168.193.128:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 192.168.193.128:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 10.96.0.1:443 rr
  -> 192.168.193.128:6443 Masq 1 0 0
TCP 10.96.0.10:53 rr
  -> 10.244.0.4:53 Masq 1 0 0
  -> 10.244.0.5:53 Masq 1 0 0
TCP 10.96.0.10:9153 rr
  -> 10.244.0.4:9153 Masq 1 0 0
  -> 10.244.0.5:9153 Masq 1 0 0
TCP 10.97.237.88:80 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 10.104.90.145:80 rr
  -> 10.244.1.6:80 Masq 1 0 0
  -> 10.244.2.5:80 Masq 1 0 0
  -> 10.244.2.6:80 Masq 1 0 0
TCP 10.110.93.150:80 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 10.110.167.164:80 rr
  -> 10.244.1.4:80 Masq 1 0 0
  -> 10.244.1.5:80 Masq 1 0 0
  -> 10.244.2.4:80 Masq 1 0 0
TCP 10.244.0.0:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 10.244.0.0:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 10.244.0.1:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 10.244.0.1:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
UDP 10.96.0.10:53 rr
  -> 10.244.0.4:53 Masq 1 0 0
  -> 10.244.0.5:53 Masq 1 0 0

# Access 10.104.90.145:80 and observe the result
[root@master ~]# curl 10.104.90.145

Endpoint

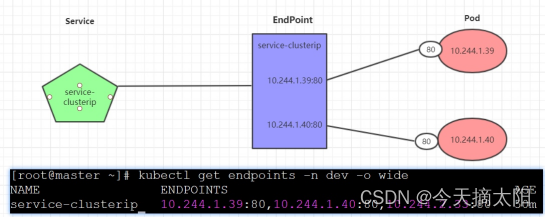

Endpoint是kubernetes中的一个资源对象,存储在etcd中,用来记录一个service对应的所有pod的访问地址,它是根据service配置文件中selector描述产生的。

一个Service由一组Pod组成,这些Pod通过Endpoints暴露出来,Endpoints是实现实际服务的端点集合。换句话说,service和pod之间的联系是通过endpoints实现的。

[root@master ~]# kubectl get endpoints -n dev -o wide

NAME ENDPOINTS AGE

service-clusterip 10.244.1.6:80,10.244.2.5:80,10.244.2.6:80 5m29s
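Conceptually, the Endpoints list is a filter over Pods. It keeps the ready Pods whose labels satisfy the Service's selector and pairs each Pod IP with the targetPort. The Python sketch below is a simplified model of that idea, not the endpoints controller itself. For instance, the real controller records not-ready Pods separately instead of dropping them.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Pod:
    name: str
    ip: str
    labels: Dict[str, str] = field(default_factory=dict)
    ready: bool = True


def matches(selector: Dict[str, str], labels: Dict[str, str]) -> bool:
    """Equality-based selector: every key/value pair must be present on the Pod."""
    return all(labels.get(key) == value for key, value in selector.items())


def endpoints(selector: Dict[str, str], pods: List[Pod], target_port: int) -> List[str]:
    """Ready Pods matching the selector, as ip:targetPort strings (sorted for stable output)."""
    return sorted(
        f"{pod.ip}:{target_port}"
        for pod in pods
        if pod.ready and matches(selector, pod.labels)
    )


if __name__ == "__main__":
    pods = [
        Pod("pc-deployment-66d5c85c96-6qxj7", "10.244.1.6", {"app": "nginx-pod"}),
        Pod("pc-deployment-66d5c85c96-lhvpv", "10.244.2.6", {"app": "nginx-pod"}),
        Pod("pc-deployment-66d5c85c96-nd72j", "10.244.2.5", {"app": "nginx-pod"}),
    ]
    print(",".join(endpoints({"app": "nginx-pod"}, pods, 80)))
```

With the three Pods from the listing above, this prints the same list that `kubectl get endpoints` shows: `10.244.1.6:80,10.244.2.5:80,10.244.2.6:80`.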

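Load Distribution Policy

Requests to a Service are distributed to the backend Pods. Kubernetes currently provides two distribution policies:

1. If nothing is specified, kube-proxy's own policy is used, such as random selection or round-robin.

2. Session affinity based on the client address: all requests from the same client are forwarded to the same Pod. Enable this mode by adding sessionAffinity: ClientIP to the spec.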
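With `sessionAffinity: ClientIP`, the first request from a client is balanced normally. Later requests from the same client IP stick to that Pod until a timeout elapses. The timeout is set by `sessionAffinityConfig.clientIP.timeoutSeconds` and defaults to 10800 seconds (3 hours), which matches the `persistent 10800` that ipvsadm shows further down. The Python sketch below approximates that behavior. Refreshing the timer on every request is a simplifying assumption, and the Pod names in the test are hypothetical.

```python
from itertools import cycle
from typing import Dict, List, Tuple


class ClientIPAffinity:
    """Round-robin for new clients, sticky backend for returning clients."""

    def __init__(self, endpoints: List[str], timeout_seconds: float = 10800) -> None:
        self._rr = cycle(endpoints)
        self.timeout = timeout_seconds
        self._sticky: Dict[str, Tuple[str, float]] = {}  # client IP -> (backend, last seen)

    def route(self, client_ip: str, now: float) -> str:
        entry = self._sticky.get(client_ip)
        if entry is not None and now - entry[1] < self.timeout:
            backend = entry[0]        # inside the affinity window: reuse the same Pod
        else:
            backend = next(self._rr)  # new or expired client: normal round-robin
        self._sticky[client_ip] = (backend, now)  # refresh the timer on every request
        return backend
```

A client that returns within the window keeps its Pod. After an idle period longer than the timeout, it is balanced again like a new client.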

# View the ipvs mapping rules (rr = round-robin)
[root@master ~]# ipvsadm -Ln
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port Forward Weight ActiveConn InActConn
TCP 172.17.0.1:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 172.17.0.1:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 192.168.193.128:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 192.168.193.128:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 10.96.0.1:443 rr
  -> 192.168.193.128:6443 Masq 1 0 0
TCP 10.96.0.10:53 rr
  -> 10.244.0.4:53 Masq 1 0 0
  -> 10.244.0.5:53 Masq 1 0 0
TCP 10.96.0.10:9153 rr
  -> 10.244.0.4:9153 Masq 1 0 0
  -> 10.244.0.5:9153 Masq 1 0 0
TCP 10.97.237.88:80 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 10.104.90.145:80 rr
  -> 10.244.1.6:80 Masq 1 0 0
  -> 10.244.2.5:80 Masq 1 0 0
  -> 10.244.2.6:80 Masq 1 0 0
TCP 10.110.93.150:80 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 10.110.167.164:80 rr
  -> 10.244.1.4:80 Masq 1 0 0
  -> 10.244.1.5:80 Masq 1 0 0
  -> 10.244.2.4:80 Masq 1 0 0
TCP 10.244.0.0:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 10.244.0.0:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 10.244.0.1:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 10.244.0.1:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
UDP 10.96.0.10:53 rr
  -> 10.244.0.4:53 Masq 1 0 0
  -> 10.244.0.5:53 Masq 1 0 0

# Access the Service in a loop
[root@master ~]# while true;do curl 10.110.167.164:80; sleep 5; done;
Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>
Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>
Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>
Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>
Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>
Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>
Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

# Change the distribution policy to sessionAffinity: ClientIP
# Then check the ipvs rules ("persistent" means the client sticks to one Pod)
[root@master ~]# vim service-clusterip.yaml
[root@master ~]# cat service-clusterip.yaml
apiVersion: v1
kind: Service
metadata:
  name: service-clusterip
  namespace: dev
spec:
  sessionAffinity: ClientIP
  selector:
    app: nginx-pod
  clusterIP:
  type: ClusterIP
  ports:
  - port: 80
    targetPort: 80
[root@master ~]# kubectl apply -f service-clusterip.yaml
Warning: resource services/service-clusterip is missing the kubectl.kubernetes.io/last-applied-configuration annotation which is required by kubectl apply. kubectl apply should only be used on resources created declaratively by either kubectl create --save-config or kubectl apply. The missing annotation will be patched automatically.
service/service-clusterip configured

[root@master ~]# ipvsadm -Ln
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port Forward Weight ActiveConn InActConn
TCP 172.17.0.1:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 172.17.0.1:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 192.168.193.128:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 192.168.193.128:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 10.96.0.1:443 rr
  -> 192.168.193.128:6443 Masq 1 0 0
TCP 10.96.0.10:53 rr
  -> 10.244.0.4:53 Masq 1 0 0
  -> 10.244.0.5:53 Masq 1 0 0
TCP 10.96.0.10:9153 rr
  -> 10.244.0.4:9153 Masq 1 0 0
  -> 10.244.0.5:9153 Masq 1 0 0
TCP 10.97.237.88:80 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 10.104.90.145:80 rr persistent 10800
  -> 10.244.1.6:80 Masq 1 0 0
  -> 10.244.2.5:80 Masq 1 0 0
  -> 10.244.2.6:80 Masq 1 0 0
TCP 10.110.93.150:80 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 10.110.167.164:80 rr
  -> 10.244.1.4:80 Masq 1 0 0
  -> 10.244.1.5:80 Masq 1 0 0
  -> 10.244.2.4:80 Masq 1 0 0
TCP 10.244.0.0:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 10.244.0.0:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
TCP 10.244.0.1:30050 rr
  -> 10.244.2.3:80 Masq 1 0 0
TCP 10.244.0.1:32407 rr
  -> 10.244.1.3:80 Masq 1 0 0
UDP 10.96.0.10:53 rr
  -> 10.244.0.4:53 Masq 1 0 0
  -> 10.244.0.5:53 Masq 1 0 0

# Note: the Service with session affinity is 10.104.90.145 (marked "persistent 10800" above).
# 10.110.167.164, used in both test loops, is a different Service, so curl 10.104.90.145 to observe the sticky behavior.
[root@master ~]# while true;do curl 10.110.167.164:80; sleep 5; done;
Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>
Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

Headless Service

In some scenarios, developers may not want the load balancing a Service provides and prefer to control the load-balancing policy themselves. For this case Kubernetes provides the headless Service. A headless Service is not assigned a Cluster IP, so clients can reach it only by querying the Service's DNS name.

Create service-headliness.yaml:

[root@master ~]# vim service-headliness.yaml
[root@master ~]# cat service-headliness.yaml
apiVersion: v1
kind: Service
metadata:
  name: service-headliness
  namespace: dev
spec:
  selector:
    app: nginx-pod
  clusterIP: None  # setting clusterIP to None creates a headless Service
  type: ClusterIP
  ports:
  - port: 80
    targetPort: 80

# Create the Service
[root@master ~]# kubectl create -f service-headliness.yaml
service/service-headliness created

# Get the Service: note that no CLUSTER-IP is allocated
[root@master ~]# kubectl get svc service-headliness -n dev -o wide
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR
service-headliness ClusterIP None <none> 80/TCP 23m app=nginx-pod

# View the Service details
[root@master ~]# kubectl describe svc service-headliness -n dev
Name: service-headliness
Namespace: dev
Labels: <none>
Annotations: <none>
Selector: app=nginx-pod
Type: ClusterIP
IP Family Policy: SingleStack
IP Families: IPv4
IP: None
IPs: None
Port: <unset> 80/TCP
TargetPort: 80/TCP
Endpoints: 10.244.1.6:80,10.244.2.5:80,10.244.2.6:80
Session Affinity: None
Events: <none>

# Check how the DNS name is resolved
[root@master ~]# kubectl get pods -n dev
NAME READY STATUS RESTARTS AGE
pc-deployment-66d5c85c96-6qxj7 1/1 Running 0 46m
pc-deployment-66d5c85c96-lhvpv 1/1 Running 0 46m
pc-deployment-66d5c85c96-nd72j 1/1 Running 0 46m
[root@master ~]# kubectl exec -it pc-deployment-66d5c85c96-6qxj7 -n dev /bin/sh
kubectl exec [POD] [COMMAND] is DEPRECATED and will be removed in a future version. Use kubectl exec [POD] -- [COMMAND] instead.
# cat /etc/resolv.conf
search dev.svc.cluster.local svc.cluster.local cluster.local localdomain example.com
nameserver 10.96.0.10
options ndots:5
# exit
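The `search` and `options ndots:5` lines explain why a Pod can reach the Service by its short name. A name with fewer than five dots is tried with each search domain appended before it is tried as an absolute name, so `service-headliness` is first looked up as `service-headliness.dev.svc.cluster.local.`. The Python sketch below models that lookup order in a simplified, glibc-like way. Other resolver implementations may differ in detail.

```python
from typing import List


def candidate_names(name: str, search: List[str], ndots: int = 5) -> List[str]:
    """Order in which a glibc-style stub resolver tries a name (simplified)."""
    if name.endswith("."):
        return [name]  # already fully qualified: the search list is not used
    expanded = [f"{name}.{domain}." for domain in search]
    absolute = f"{name}."
    if name.count(".") >= ndots:
        return [absolute] + expanded  # "enough" dots: try the name as-is first
    return expanded + [absolute]      # otherwise walk the search list first


if __name__ == "__main__":
    search = ["dev.svc.cluster.local", "svc.cluster.local", "cluster.local", "localdomain", "example.com"]
    for candidate in candidate_names("service-headliness", search)[:3]:
        print(candidate)
```

A side effect is that `service-headliness.dev.svc.cluster.local` has only four dots, so it also goes through the search list first unless it is written with a trailing dot.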

[root@master ~]# dig @10.96.0.10 service-headliness.dev.svc.cluster.local

; <<>> DiG 9.11.36-RedHat-9.11.36-2.el8 <<>> @10.96.0.10 service-headliness.dev.svc.cluster.local
; (1 server found)
;; global options: +cmd
;; Got answer:
;; WARNING: .local is reserved for Multicast DNS
;; You are currently testing what happens when an mDNS query is leaked to DNS
;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 1287
;; flags: qr aa rd; QUERY: 1, ANSWER: 3, AUTHORITY: 0, ADDITIONAL: 1
;; WARNING: recursion requested but not available

;; OPT PSEUDOSECTION:
; EDNS: version: 0, flags:; udp: 4096
; COOKIE: eb9a342a8074d3ec (echoed)
;; QUESTION SECTION:
;service-headliness.dev.svc.cluster.local. IN A

;; ANSWER SECTION:
service-headliness.dev.svc.cluster.local. 30 IN A 10.244.2.5
service-headliness.dev.svc.cluster.local. 30 IN A 10.244.1.6
service-headliness.dev.svc.cluster.local. 30 IN A 10.244.2.6

;; Query time: 0 msec
;; SERVER: 10.96.0.10#53(10.96.0.10)
;; WHEN: Sun Dec 04 19:44:41 CST 2022
;; MSG SIZE rcvd: 249
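A headless Service returns one A record per Pod and does no proxying, so load balancing becomes the client's job. The Python sketch below shows one way a client might handle it. It resolves the name, rotates through the addresses, and re-resolves once the record TTL (30 seconds in the answer above) has passed. The `HeadlessClient` class and its round-robin policy are illustrative assumptions. `system_resolve` uses the standard `socket.getaddrinfo` and only returns these Pod IPs when run somewhere that uses the cluster DNS.

```python
import socket
from typing import Callable, List


def system_resolve(name: str) -> List[str]:
    """Resolve IPv4 addresses with the OS resolver (meaningful only inside the cluster)."""
    infos = socket.getaddrinfo(name, None, family=socket.AF_INET, type=socket.SOCK_STREAM)
    return sorted({info[4][0] for info in infos})


class HeadlessClient:
    """Client-side round-robin over the A records of a headless Service."""

    def __init__(self, resolve: Callable[[str], List[str]], name: str, ttl: float = 30.0) -> None:
        self._resolve = resolve
        self._name = name
        self._ttl = ttl  # re-resolve after the record TTL
        self._addrs: List[str] = []
        self._expires = 0.0
        self._i = 0

    def pick(self, now: float) -> str:
        if not self._addrs or now >= self._expires:
            self._addrs = sorted(self._resolve(self._name))  # refresh the Pod list
            self._expires = now + self._ttl
        if not self._addrs:
            raise LookupError(f"no addresses for {self._name}")
        addr = self._addrs[self._i % len(self._addrs)]
        self._i += 1
        return addr


def fake_resolve(_name: str) -> List[str]:
    """Stand-in resolver returning the addresses from the dig answer above."""
    return ["10.244.2.5", "10.244.1.6", "10.244.2.6"]


if __name__ == "__main__":
    client = HeadlessClient(fake_resolve, "service-headliness.dev.svc.cluster.local")
    print([client.pick(now=t) for t in range(4)])
```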

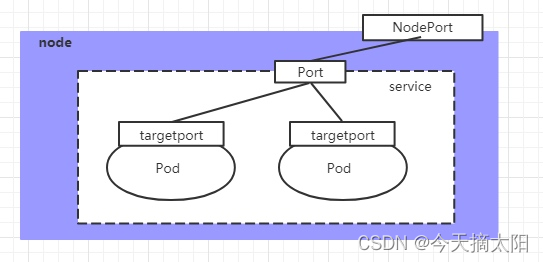

NodePort Service

In the previous examples, the Service's IP address was reachable only from inside the cluster. To expose a Service to clients outside the cluster, use another type of Service called NodePort. NodePort works by mapping the Service's port to a port on every Node, after which the Service can be reached at NodeIP:NodePort.

Create service-nodeport.yaml:

[root@master ~]# vim service-nodeport.yaml
[root@master ~]# cat service-nodeport.yaml
apiVersion: v1
kind: Service
metadata:
  name: service-nodeport
  namespace: dev
spec:
  selector:
    app: nginx-pod
  type: NodePort  # Service type
  ports:
  - port: 80
    nodePort: 30002  # node port to bind (default range: 30000-32767); if omitted, one is allocated automatically
    targetPort: 80

# Create the Service
[root@master ~]# kubectl create -f service-nodeport.yaml
service/service-nodeport created

# Check the Service types
[root@master ~]# kubectl get svc -n dev -o wide
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR
service-clusterip ClusterIP 10.104.90.145 <none> 80/TCP 46m app=nginx-pod
service-headliness ClusterIP None <none> 80/TCP 29m app=nginx-pod
service-nodeport NodePort 10.96.51.88 <none> 80:30002/TCP 50s app=nginx-pod
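The 30000-32767 limit in the nodePort comment is the API server's default range, and it can be changed with the kube-apiserver flag `--service-node-port-range`. A nodePort that is already allocated cannot be requested again. The Python sketch below models these two checks plus automatic allocation. Taking the lowest free port is a simplification; the real allocator picks ports differently.

```python
from typing import Set

DEFAULT_NODE_PORT_RANGE = range(30000, 32768)  # 30000-32767 inclusive


def check_node_port(port: int, in_use: Set[int], allowed: range = DEFAULT_NODE_PORT_RANGE) -> None:
    """Reject a requested nodePort that is outside the range or already taken."""
    if port not in allowed:
        raise ValueError(f"nodePort {port} is outside {allowed.start}-{allowed.stop - 1}")
    if port in in_use:
        raise ValueError(f"nodePort {port} is already allocated")


def allocate_node_port(in_use: Set[int], allowed: range = DEFAULT_NODE_PORT_RANGE) -> int:
    """Pick a free port when nodePort is omitted (lowest free port, for simplicity)."""
    for port in allowed:
        if port not in in_use:
            return port
    raise RuntimeError("no free nodePort left in the range")


if __name__ == "__main__":
    used = {30002}  # taken by service-nodeport above
    print(allocate_node_port(used))
    try:
        check_node_port(30002, used)
    except ValueError as err:
        print(err)
```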

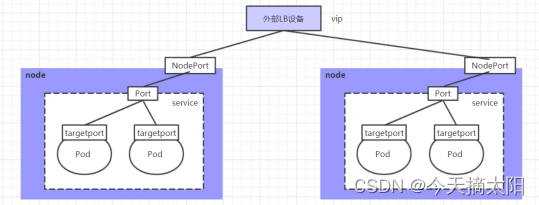

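LoadBalancer Service

LoadBalancer is similar to NodePort: both expose a port to the outside. The difference is that LoadBalancer also puts a load-balancing device outside the cluster, and that device must be supported by the external environment. The device balances the requests that external clients send to it and forwards them into the cluster.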

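ExternalName Service

An ExternalName Service brings a service from outside the cluster into the cluster. The externalName field specifies the address of the external service, and accessing this Service from inside the cluster then reaches that external service.

[root@master ~]# vim service-externalname.yaml
[root@master ~]# cat service-externalname.yaml
apiVersion: v1
kind: Service
metadata:
  name: service-externalname
  namespace: dev
spec:
  type: ExternalName  # Service type
  externalName: www.baidu.com  # must be a DNS name; an IP address here is treated as a name, not resolved as an address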

# Create the Service
[root@master ~]# kubectl create -f service-externalname.yaml
service/service-externalname created

# Resolve the DNS name
[root@master ~]# dig @10.96.0.10 service-externalname.dev.svc.cluster.local

; <<>> DiG 9.11.36-RedHat-9.11.36-2.el8 <<>> @10.96.0.10 service-externalname.dev.svc.cluster.local
; (1 server found)
;; global options: +cmd
;; Got answer:
;; WARNING: .local is reserved for Multicast DNS
;; You are currently testing what happens when an mDNS query is leaked to DNS
;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 37167
;; flags: qr aa rd; QUERY: 1, ANSWER: 4, AUTHORITY: 0, ADDITIONAL: 1
;; WARNING: recursion requested but not available

;; OPT PSEUDOSECTION:
; EDNS: version: 0, flags:; udp: 4096
; COOKIE: 436480323023db0f (echoed)
;; QUESTION SECTION:
;service-externalname.dev.svc.cluster.local. IN A

;; ANSWER SECTION:
service-externalname.dev.svc.cluster.local. 5 IN CNAME www.baidu.com.
www.baidu.com. 5 IN CNAME www.a.shifen.com.
www.a.shifen.com. 5 IN A 14.215.177.39
www.a.shifen.com. 5 IN A 14.215.177.38

;; Query time: 13 msec
;; SERVER: 10.96.0.10#53(10.96.0.10)
;; WHEN: Sun Dec 04 19:49:55 CST 2022
;; MSG SIZE rcvd: 259
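The answer section shows that an ExternalName Service is only a DNS alias. Cluster DNS returns a CNAME pointing to www.baidu.com, and the lookup keeps following aliases until it reaches A records. No kube-proxy rules are involved. The Python sketch below replays that chain from a hard-coded record table copied from the dig output. It illustrates the idea and is not a DNS client.

```python
from typing import Dict, List, Tuple

Record = Tuple[str, List[str]]  # (record type, values)


def resolve_a(name: str, zone: Dict[str, Record], max_hops: int = 8) -> List[str]:
    """Follow CNAME records until A records are found (a toy DNS lookup)."""
    for _ in range(max_hops):
        rtype, values = zone[name]
        if rtype == "A":
            return values
        name = values[0]  # CNAME: continue the lookup at the alias target
    raise RuntimeError("CNAME chain too long")


if __name__ == "__main__":
    # Records copied from the dig answer above (TTLs omitted).
    zone = {
        "service-externalname.dev.svc.cluster.local.": ("CNAME", ["www.baidu.com."]),
        "www.baidu.com.": ("CNAME", ["www.a.shifen.com."]),
        "www.a.shifen.com.": ("A", ["14.215.177.39", "14.215.177.38"]),
    }
    print(resolve_a("service-externalname.dev.svc.cluster.local.", zone))
```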

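Ingress Overview

As mentioned earlier, a Service has two main ways of exposing services outside the cluster: NodePort and LoadBalancer. Both have drawbacks:

NodePort occupies many ports on the cluster machines, and this becomes more of a problem as the number of services grows.

LoadBalancer needs one LB per Service, which is wasteful and cumbersome, and it requires devices outside Kubernetes.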

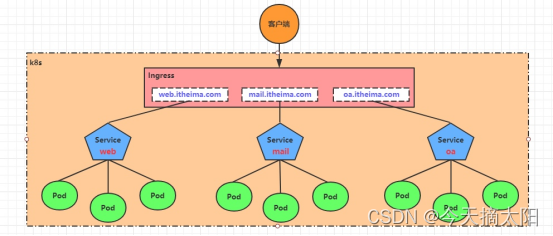

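To address this, Kubernetes provides the Ingress resource object. An Ingress needs only one NodePort or one LB to expose many Services. The mechanism works roughly as shown in the figure below: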

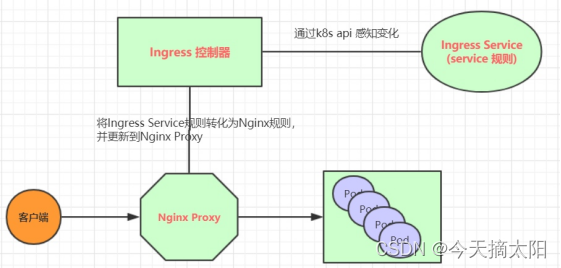

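In effect, an Ingress is a layer-7 load balancer: it is Kubernetes' abstraction of a reverse proxy. It works much like Nginx. You define many mapping rules in Ingress objects, and the Ingress Controller watches these rules, converts them into Nginx reverse-proxy configuration, and then serves external traffic. There are two core concepts here:

ingress: an object in Kubernetes that defines the rules for forwarding requests to Services

ingress controller: the program that actually implements reverse proxying and load balancing. It parses the rules defined by ingress objects and forwards requests accordingly. There are many implementations, such as Nginx, Contour, and HAProxy.

How Ingress works (using Nginx as an example):

1. The user writes Ingress rules that specify which domain name maps to which Service in the Kubernetes cluster.

2. The Ingress controller dynamically detects changes to the Ingress rules and generates the corresponding Nginx reverse-proxy configuration.

3. The Ingress controller writes the generated configuration into a running Nginx service and updates it dynamically.

4. From then on, the component doing the real work is an Nginx instance configured with the user-defined forwarding rules.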
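Steps 1-3 above amount to translating Ingress rules into reverse-proxy configuration. The Python sketch below shows the idea on a tiny scale by rendering host and path rules into nginx `server`/`location` blocks. It is a deliberately simplified illustration, and the rule dictionary shape is hypothetical. The real ingress-nginx controller generates a much larger configuration, and by default it sends traffic to the Pod endpoints instead of through the Service's DNS name.

```python
from typing import Dict, List


def render_nginx(rules: List[Dict]) -> str:
    """Render Ingress-like host/path rules as simplified nginx server blocks."""
    blocks = []
    for rule in rules:
        locations = []
        for p in rule["paths"]:
            upstream = f"{p['service']}.{rule['namespace']}.svc.cluster.local:{p['port']}"
            locations.append(
                f"    location {p['path']} {{\n"
                f"        proxy_pass http://{upstream};\n"
                f"    }}"
            )
        blocks.append(
            "server {\n"
            "    listen 80;\n"
            f"    server_name {rule['host']};\n"
            + "\n".join(locations)
            + "\n}"
        )
    return "\n\n".join(blocks)


if __name__ == "__main__":
    print(render_nginx([
        {"host": "nginx.chenyu.com", "namespace": "dev",
         "paths": [{"path": "/", "service": "nginx-service", "port": 80}]},
        {"host": "tomcat.chenyu.com", "namespace": "dev",
         "paths": [{"path": "/", "service": "tomcat-service", "port": 8080}]},
    ]))
```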

Using Ingress

Environment preparation

Set up the ingress environment

Create a directory:

[root@master ~]# mkdir ingress-controller
[root@master ~]# cd ingress-controller/

Download the ingress-nginx deployment manifest (the bare-metal variant) from the project's GitHub repository:

[root@master ingress-controller]# wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/main/deploy/static/provider/baremetal/deploy.yaml

[root@master ingress-controller]# vim deploy.yaml
[root@master ingress-controller]# kubectl apply -f deploy.yaml
namespace/ingress-nginx created
serviceaccount/ingress-nginx created
serviceaccount/ingress-nginx-admission created
role.rbac.authorization.k8s.io/ingress-nginx created
role.rbac.authorization.k8s.io/ingress-nginx-admission created
clusterrole.rbac.authorization.k8s.io/ingress-nginx created
clusterrole.rbac.authorization.k8s.io/ingress-nginx-admission created
rolebinding.rbac.authorization.k8s.io/ingress-nginx created
rolebinding.rbac.authorization.k8s.io/ingress-nginx-admission created
clusterrolebinding.rbac.authorization.k8s.io/ingress-nginx created
clusterrolebinding.rbac.authorization.k8s.io/ingress-nginx-admission created
configmap/ingress-nginx-controller created
service/ingress-nginx-controller created
service/ingress-nginx-controller-admission created
deployment.apps/ingress-nginx-controller created
job.batch/ingress-nginx-admission-create created
job.batch/ingress-nginx-admission-patch created
ingressclass.networking.k8s.io/nginx created
validatingwebhookconfiguration.admissionregistration.k8s.io/ingress-nginx-admission created
[root@master ingress-controller]# kubectl get pod -n ingress-nginx
NAME READY STATUS RESTARTS AGE
ingress-nginx-admission-create-bj6nz 0/1 Completed 0 2m9s
ingress-nginx-admission-patch-6626v 0/1 Completed 0 2m9s
ingress-nginx-controller-6f66fd4bdb-nl9rz 1/1 Running 0 2m9s

# View the Services
[root@master ingress-controller]# kubectl get svc -n ingress-nginx
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
ingress-nginx-controller NodePort 10.105.39.188 <none> 80:30219/TCP,443:32762/TCP 30s
ingress-nginx-controller-admission ClusterIP 10.96.71.224 <none> 443/TCP 29s

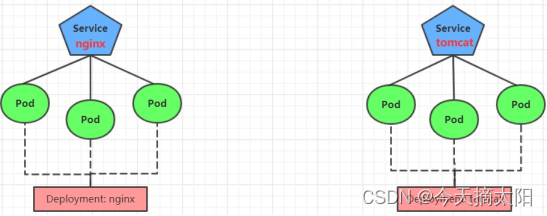

Prepare the Services and Pods

To make the following experiments easier, create the model shown in the figure.

Create tomcat-nginx.yaml:

[root@master ingress-controller]# cat tomcat-nginx.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
  namespace: dev
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx-pod
  template:
    metadata:
      labels:
        app: nginx-pod
    spec:
      containers:
      - name: nginx
        image: nginx:1.17.1
        ports:
        - containerPort: 80
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: tomcat-deployment
  namespace: dev
spec:
  replicas: 3
  selector:
    matchLabels:
      app: tomcat-pod
  template:
    metadata:
      labels:
        app: tomcat-pod
    spec:
      containers:
      - name: tomcat
        image: tomcat:8.5-jre10-slim
        ports:
        - containerPort: 8080
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-service
  namespace: dev
spec:
  selector:
    app: nginx-pod
  clusterIP: None
  type: ClusterIP
  ports:
  - port: 80
    targetPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: tomcat-service
  namespace: dev
spec:
  selector:
    app: tomcat-pod
  clusterIP: None
  type: ClusterIP
  ports:
  - port: 8080
    targetPort: 8080

# Create
[root@master ingress-controller]# kubectl create -f tomcat-nginx.yaml
deployment.apps/nginx-deployment created
deployment.apps/tomcat-deployment created
service/nginx-service created
service/tomcat-service created

# View
[root@master ingress-controller]# kubectl get svc -n dev
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
nginx-service ClusterIP None <none> 80/TCP 13s
tomcat-service ClusterIP None <none> 8080/TCP 13s

HTTP Proxy

Create ingress-http.yaml:

[root@master ingress-controller]# vim ingress-http.yaml
[root@master ingress-controller]# cat ingress-http.yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-http
  namespace: dev
spec:
  ingressClassName: nginx
  rules:
  - host: nginx.chenyu.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: nginx-service
            port:
              number: 80
  - host: tomcat.chenyu.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: tomcat-service
            port:
              number: 8080

# Create
[root@master ingress-controller]# kubectl create -f ingress-http.yaml
ingress.networking.k8s.io/ingress-http created

# View
[root@master ingress-controller]# kubectl get -f ingress-http.yaml
NAME CLASS HOSTS ADDRESS PORTS AGE
ingress-http nginx nginx.chenyu.com,tomcat.chenyu.com 80 8s

# View details
[root@master ingress-controller]# kubectl describe ingress ingress-http -n dev
Name: ingress-http
Labels: <none>
Namespace: dev
Address: 192.168.193.129
Ingress Class: nginx
Default backend: <default>
Rules:
  Host Path Backends
  ---- ---- --------
  nginx.chenyu.com
    / nginx-service:80 (10.244.1.7:80,10.244.1.8:80,10.244.2.5:80)
  tomcat.chenyu.com
    / tomcat-service:8080 (10.244.1.6:8080,10.244.2.6:8080,10.244.2.7:8080)
Annotations: <none>
Events:
  Type Reason Age From Message
  ---- ------ ---- ---- -------
  Normal Sync 29s (x2 over 76s) nginx-ingress-controller Scheduled for sync

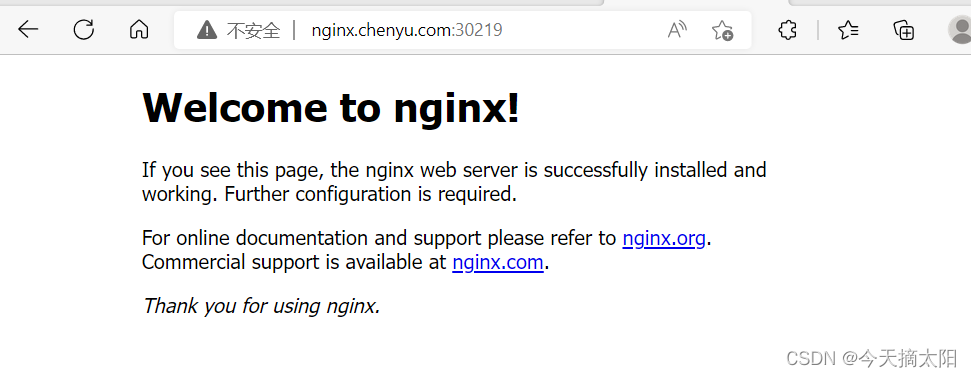

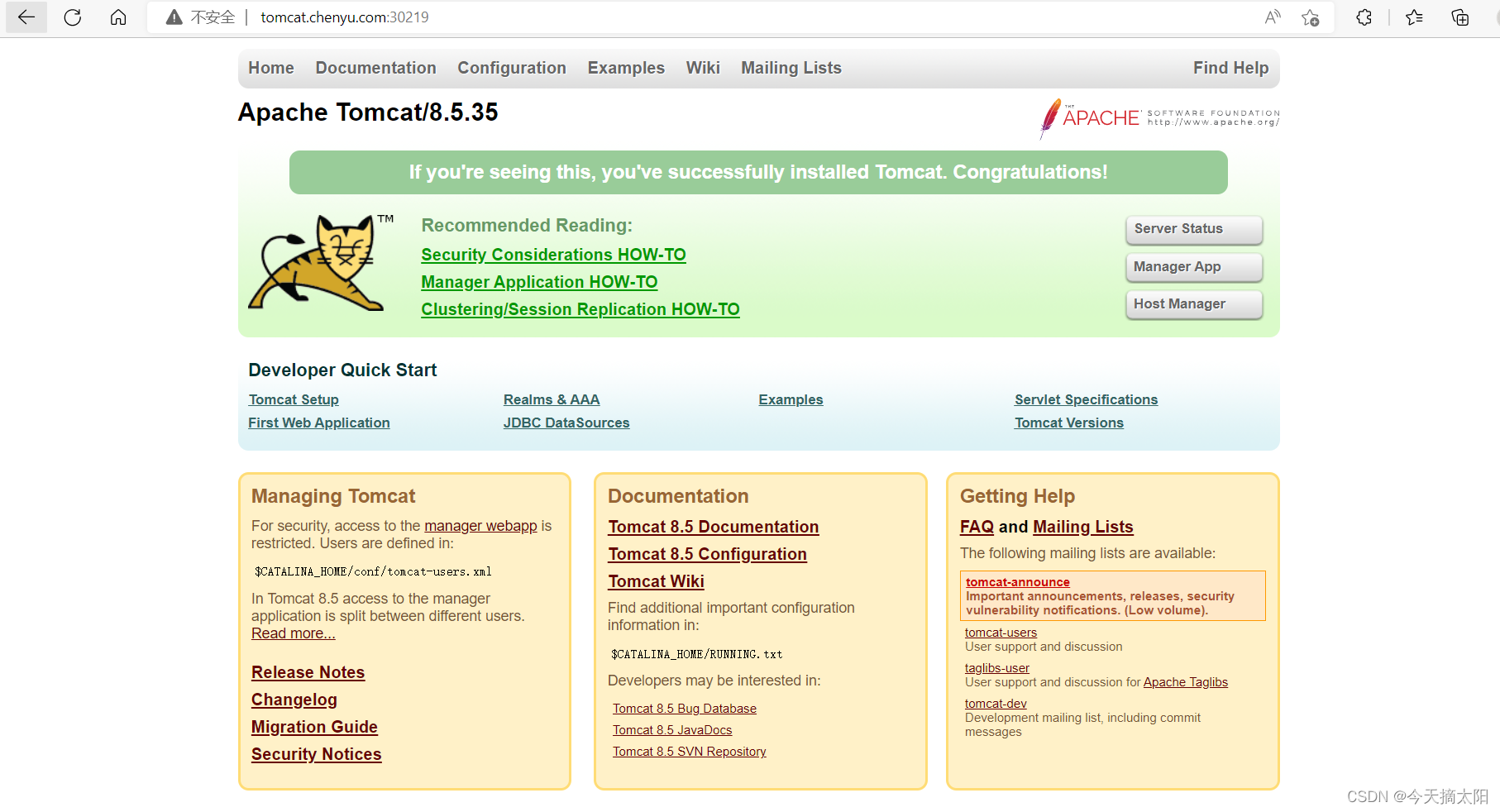

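Next, on your local computer, edit the hosts file so that both domain names above resolve to 192.168.193.128 (master), for example with the line 192.168.193.128 nginx.chenyu.com tomcat.chenyu.com. Then open http://tomcat.chenyu.com:30219 and http://nginx.chenyu.com:30219 to see the result (30219 is the HTTP NodePort of the ingress-nginx-controller Service shown earlier).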
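When a request arrives, the controller first picks the rule whose `host` equals the request's Host header, and then matches the path. With `pathType: Prefix`, paths are compared element by element on `/`, and the longest matching path wins. The Python sketch below models that selection for the two rules above. The extra `/api` rule and `api-service` backend in the usage and test data are hypothetical and are there only to show the longest-match behavior.

```python
from typing import Dict, List, Optional, Tuple

Rules = Dict[str, List[Tuple[str, str]]]  # host -> [(Prefix path, backend)]


def _segments(path: str) -> List[str]:
    return [seg for seg in path.split("/") if seg]


def prefix_match(rule_path: str, request_path: str) -> bool:
    """pathType: Prefix compares whole '/'-separated elements, not raw characters."""
    rule = _segments(rule_path)
    return _segments(request_path)[: len(rule)] == rule


def route(host: str, path: str, rules: Rules) -> Optional[str]:
    """Pick the backend for a request; the longest matching path wins."""
    host = host.split(":")[0]  # the Host header may carry the NodePort, e.g. :30219
    matches = [(p, backend) for p, backend in rules.get(host, []) if prefix_match(p, path)]
    if not matches:
        return None
    return max(matches, key=lambda m: len(_segments(m[0])))[1]


if __name__ == "__main__":
    rules: Rules = {
        "nginx.chenyu.com": [("/", "nginx-service:80")],
        "tomcat.chenyu.com": [("/", "tomcat-service:8080"), ("/api", "api-service:80")],  # /api is hypothetical
    }
    print(route("tomcat.chenyu.com:30219", "/api/users", rules))
```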

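HTTPS Proxy

Note: the HTTP and HTTPS Ingresses use the same hosts, so only one of them should be defined. Delete the HTTP one first (for example, kubectl delete -f ingress-http.yaml).

Create the certificate

Generate the certificate:

[root@master ingress-controller]# openssl req -x509 -sha256 -nodes -days 365 -newkey rsa:2048 -keyout tls.key -out tls.crt -subj "/C=CN/ST=BJ/L=BJ/O=nginx/CN=chenyu.com"
Generating a RSA private key
..........+++++
..+++++
writing new private key to 'tls.key'
-----

# Create the Secret in the dev namespace (it must be in the same namespace as the Ingress that references it)
[root@master ingress-controller]# kubectl create secret tls tls-secret --key tls.key --cert tls.crt -n dev
secret/tls-secret created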
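The certificate generated above has a caveat. Its subject is `CN=chenyu.com`, and a name without a wildcard matches only that exact host. So even after the self-signed certificate is trusted, a browser still reports a name mismatch for nginx.chenyu.com and tomcat.chenyu.com (modern browsers also check the subjectAltName extension rather than the CN). The Python sketch below is a simplified model of the single-label wildcard rule described in RFC 6125, and it shows the difference. With OpenSSL 1.1.1 or newer, one way to cover both hosts is to add something like `-addext "subjectAltName=DNS:nginx.chenyu.com,DNS:tomcat.chenyu.com"` to the `openssl req` command.

```python
def hostname_matches(pattern: str, hostname: str) -> bool:
    """Check a certificate name against a host (RFC 6125-style, simplified)."""
    p_labels = pattern.lower().rstrip(".").split(".")
    h_labels = hostname.lower().rstrip(".").split(".")
    if len(p_labels) != len(h_labels):
        return False  # a wildcard never spans more than one label
    if p_labels[0] == "*":
        return p_labels[1:] == h_labels[1:]  # "*" stands for exactly the left-most label
    return p_labels == h_labels


if __name__ == "__main__":
    for name in ("chenyu.com", "*.chenyu.com"):
        print(name, [hostname_matches(name, h) for h in ("nginx.chenyu.com", "tomcat.chenyu.com")])
```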

Create ingress-https.yaml:

[root@master ingress-controller]# vim ingress-https.yaml
[root@master ingress-controller]# cat ingress-https.yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-https
  namespace: dev
spec:
  tls:
  - hosts:
    - nginx.chenyu.com
    - tomcat.chenyu.com
    secretName: tls-secret
  ingressClassName: nginx
  rules:
  - host: nginx.chenyu.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: nginx-service
            port:
              number: 80
  - host: tomcat.chenyu.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: tomcat-service
            port:
              number: 8080
[root@master ingress-controller]#

# Create
[root@master ~]# kubectl create -f ingress-https.yaml
ingress.extensions/ingress-https created

# View
[root@master ~]# kubectl get ing ingress-https -n dev
NAME HOSTS ADDRESS PORTS AGE
ingress-https nginx.chenyu.com,tomcat.chenyu.com 10.104.184.38 80, 443 2m42s

# View details
[root@master ~]# kubectl describe ing ingress-https -n dev
...
TLS:
  tls-secret terminates nginx.chenyu.com,tomcat.chenyu.com
Rules:
  Host Path Backends
  ---- ---- --------
  nginx.chenyu.com / nginx-service:80 (10.244.1.97:80,10.244.1.98:80,10.244.2.119:80)
  tomcat.chenyu.com / tomcat-service:8080(10.244.1.99:8080,10.244.2.117:8080,10.244.2.120:8080)
...

# You can now open https://nginx.chenyu.com:32762 and https://tomcat.chenyu.com:32762 in a browser (32762 is the HTTPS NodePort of the ingress-nginx-controller Service)
