1.安装依赖

pip install scrapy-ajax-utils -i https://pypi.tuna.tsinghua.edu.cn/simple源码地址:GitHub - kingronjan/scrapy_ajax_utils: utils for crawl ajax page in scrapy project.

2.修改spider文件

①为spider加上装饰器@selenium_support

②将spider.Request()方法换成SeleniumRequest()【重写start_requests方法】

import scrapy

from scrapy.http import HtmlResponse

from scrapy_ajax_utils import selenium_support, SeleniumRequest

@selenium_support

class TestspiderSpider(scrapy.Spider):

name = 'testspider'

allowed_domains = ['www.baidu.com']

start_urls = ['https://www.baidu.com' for i in range(20)]

def start_requests(self):

for url in self.start_urls:

yield SeleniumRequest(url, callback=self.parse, dont_filter=True)

def parse(self, response: HtmlResponse):

pass

3.配置:settings.py(如果使用的是webdriver-manager,如何查看驱动地址:看下面第4点)

# 只支持两种浏览器驱动 chrome(默认) & firefox.

SELENIUM_DRIVER_NAME = 'chrome'

# 浏览器驱动地址(必填)

SELENIUM_DRIVER_PATH = r'C:\Users\sunziwen\.wdm\drivers\chromedriver\win32\98.0.4758\chromedriver.exe'

# 是否为无头浏览器

SELENIUM_HEADLESS = False

# 页面加载超时时间

SELENIUM_DRIVER_PAGE_LOAD_TIMEOUT = 30

# selenium的最小并发数

SELENIUM_MIN_DRIVERS = 5

# selenium的最大并发数

SELENIUM_MAX_DRIVERS = 10或者也可以直接赋值:

from selenium.webdriver.chrome.service import Service as ChromeService

from webdriver_manager.chrome import ChromeDriverManager

# 只支持两种浏览器驱动 chrome(默认) & firefox.

SELENIUM_DRIVER_NAME = 'chrome'

# 浏览器驱动地址(必填)

# SELENIUM_DRIVER_PATH = r'C:\Users\sunziwen\.wdm\drivers\chromedriver\win32\98.0.4758\chromedriver.exe'

SELENIUM_DRIVER_PATH = ChromeService(executable_path=ChromeDriverManager().install()).path

# 是否为无头浏览器

SELENIUM_HEADLESS = False

# 页面加载超时时间

SELENIUM_DRIVER_PAGE_LOAD_TIMEOUT = 30

# selenium的最小并发数

SELENIUM_MIN_DRIVERS = 4

# selenium的最大并发数

SELENIUM_MAX_DRIVERS = 5

4.查看驱动地址:

from selenium.webdriver.chrome.service import Service as ChromeService

from webdriver_manager.chrome import ChromeDriverManager

if __name__ == '__main__':

# 初始化驱动

service = ChromeService(executable_path=ChromeDriverManager().install())

print("驱动地址:",service.path)5.运行测试:

6.操作浏览器驱动

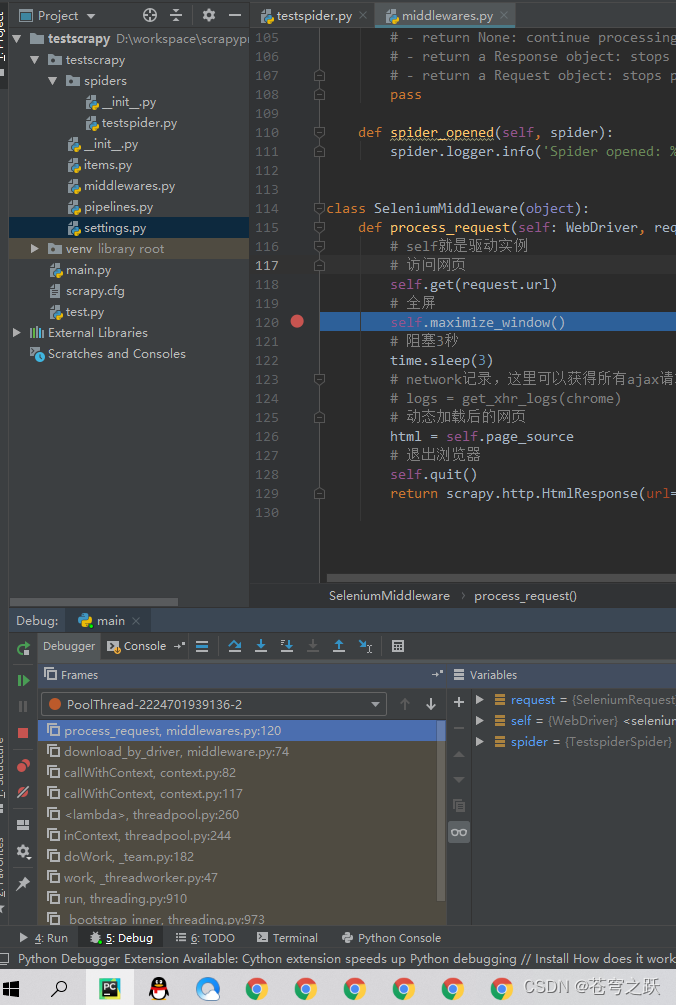

①编写操作中间件:middlewares.py

from selenium.webdriver.chrome.webdriver import WebDriver

from scrapy_ajax_utils import SeleniumRequest

class SeleniumMiddleware(object):

def process_request(self: WebDriver, request: SeleniumRequest, spider):

# self就是驱动实例

# 访问网页

self.get(request.url)

# 全屏

self.maximize_window()

# 阻塞3秒

time.sleep(3)

# network记录,这里可以获得所有ajax请求的结果

# logs = get_xhr_logs(chrome)

# 动态加载后的网页

html = self.page_source

# 退出浏览器

self.quit()

return scrapy.http.HtmlResponse(url=request.url, body=html.encode('utf-8'), encoding='utf-8', request=request)②启动中间件:settings.py

DOWNLOADER_MIDDLEWARES = {

'testscrapy.middlewares.SeleniumMiddleware': 543,

} # 下载中间件③修改spider文件,将SeleniumRequest()的handle参数指定为操作中间件。

7.断点测试

2763

2763

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?