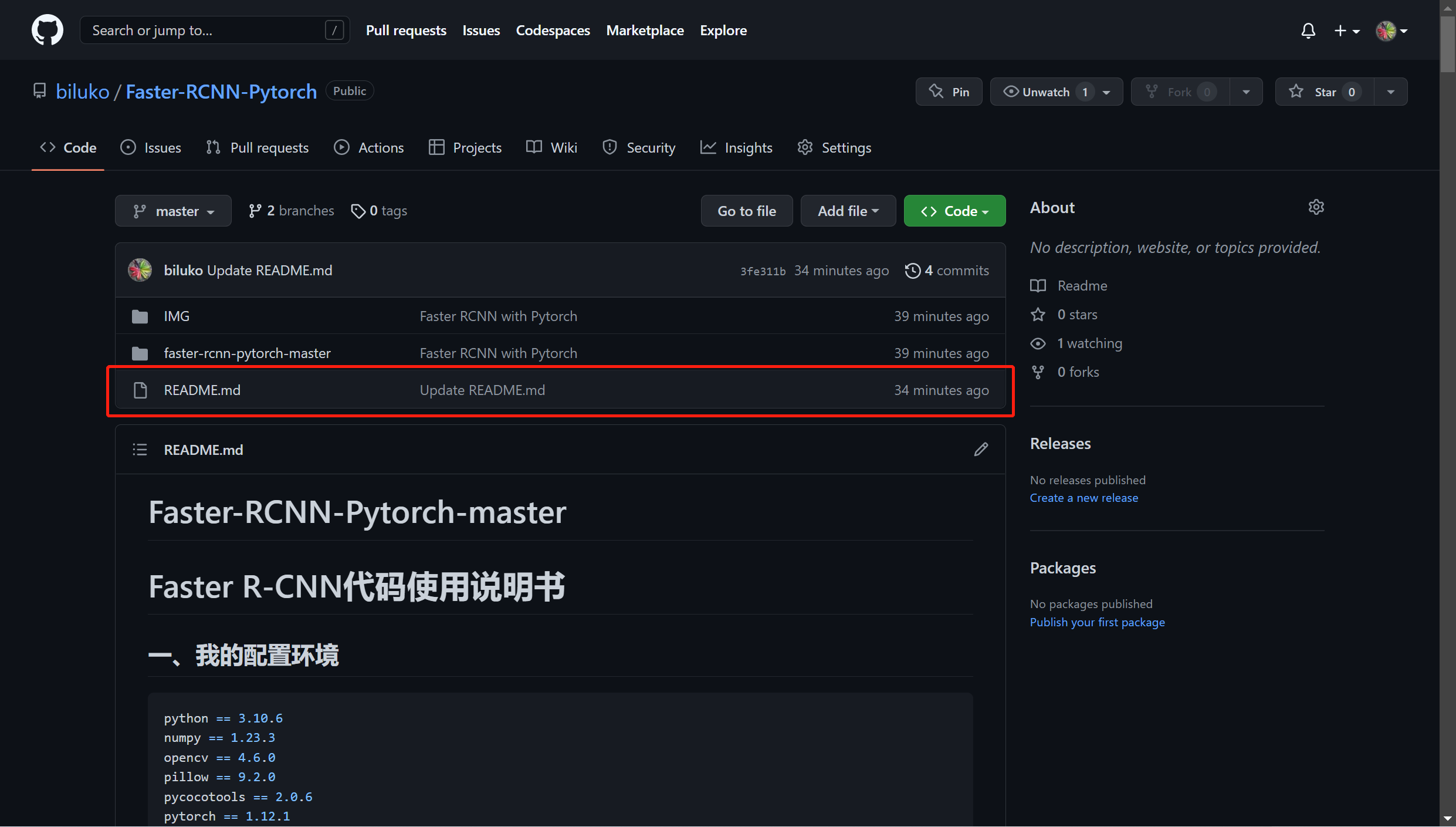

Faster R-CNN paper reproduction code

Detailed usage instructions for the code:

https://github.com/biluko/Faster-RCNN-Pytorch

Blog post:

https://blog.csdn.net/wzk4869/article/details/128133224?spm=1001.2014.3001.5501

1. Files under the nets folder

__init__.py

classifier.py

import warnings

import torch

from torch import nn

from torchvision.ops import RoIPool

warnings.filterwarnings("ignore")

class VGG16RoIHead(nn.Module):

def __init__(self, n_class, roi_size, spatial_scale, classifier):

super(VGG16RoIHead, self).__init__()

self.classifier = classifier

#--------------------------------------#

# Box regression on the RoI-pooled features

#--------------------------------------#

self.cls_loc = nn.Linear(4096, n_class * 4)

#-----------------------------------#

# Classification on the RoI-pooled features

#-----------------------------------#

self.score = nn.Linear(4096, n_class)

#-----------------------------------#

# Weight initialization

#-----------------------------------#

normal_init(self.cls_loc, 0, 0.001)

normal_init(self.score, 0, 0.01)

self.roi = RoIPool((roi_size, roi_size), spatial_scale)

def forward(self, x, rois, roi_indices, img_size):

n, _, _, _ = x.shape

if x.is_cuda:

roi_indices = roi_indices.cuda()

rois = rois.cuda()

rois = torch.flatten(rois, 0, 1)

roi_indices = torch.flatten(roi_indices, 0, 1)

rois_feature_map = torch.zeros_like(rois)

rois_feature_map[:, [0, 2]] = rois[:, [0, 2]] / img_size[1] * x.size()[3]

rois_feature_map[:, [1, 3]] = rois[:, [1, 3]] / img_size[0] * x.size()[2]

indices_and_rois = torch.cat([roi_indices[:, None], rois_feature_map], dim = 1)

#-----------------------------------#

# Crop the shared feature map with the proposals (RoI pooling)

#-----------------------------------#

pool = self.roi(x, indices_and_rois)

#-----------------------------------#

# Extract features with the classifier network

#-----------------------------------#

pool = pool.view(pool.size(0), -1)

#--------------------------------------------------------------#

# With a single input image, fc7 here has shape [300, 4096]

#--------------------------------------------------------------#

fc7 = self.classifier(pool)

roi_cls_locs = self.cls_loc(fc7)

roi_scores = self.score(fc7)

roi_cls_locs = roi_cls_locs.view(n, -1, roi_cls_locs.size(1))

roi_scores = roi_scores.view(n, -1, roi_scores.size(1))

return roi_cls_locs, roi_scores

class Resnet50RoIHead(nn.Module):

def __init__(self, n_class, roi_size, spatial_scale, classifier):

super(Resnet50RoIHead, self).__init__()

self.classifier = classifier

#--------------------------------------#

# Box regression on the RoI-pooled features

#--------------------------------------#

self.cls_loc = nn.Linear(2048, n_class * 4)

#-----------------------------------#

# Classification on the RoI-pooled features

#-----------------------------------#

self.score = nn.Linear(2048, n_class)

#-----------------------------------#

# Weight initialization

#-----------------------------------#

normal_init(self.cls_loc, 0, 0.001)

normal_init(self.score, 0, 0.01)

self.roi = RoIPool((roi_size, roi_size), spatial_scale)

def forward(self, x, rois, roi_indices, img_size):

n, _, _, _ = x.shape

if x.is_cuda:

roi_indices = roi_indices.cuda()

rois = rois.cuda()

rois = torch.flatten(rois, 0, 1)

roi_indices = torch.flatten(roi_indices, 0, 1)

rois_feature_map = torch.zeros_like(rois)

rois_feature_map[:, [0, 2]] = rois[:, [0, 2]] / img_size[1] * x.size()[3]

rois_feature_map[:, [1, 3]] = rois[:, [1, 3]] / img_size[0] * x.size()[2]

indices_and_rois = torch.cat([roi_indices[:, None], rois_feature_map], dim = 1)

#-----------------------------------#

# Crop the shared feature map with the proposals (RoI pooling)

#-----------------------------------#

pool = self.roi(x, indices_and_rois)

#-----------------------------------#

# Extract features with the classifier network

#-----------------------------------#

fc7 = self.classifier(pool)

#--------------------------------------------------------------#

# With a single input image, fc7 here has shape [300, 2048]

#--------------------------------------------------------------#

fc7 = fc7.view(fc7.size(0), -1)

roi_cls_locs = self.cls_loc(fc7)

roi_scores = self.score(fc7)

roi_cls_locs = roi_cls_locs.view(n, -1, roi_cls_locs.size(1))

roi_scores = roi_scores.view(n, -1, roi_scores.size(1))

return roi_cls_locs, roi_scores

def normal_init(m, mean, stddev, truncated = False):

if truncated:

m.weight.data.normal_().fmod_(2).mul_(stddev).add_(mean) # not a perfect approximation

else:

m.weight.data.normal_(mean, stddev)

m.bias.data.zero_()
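
Both `forward` methods above rescale every RoI from image coordinates to feature-map coordinates before pooling, which is presumably why `RoIPool` is later constructed with `spatial_scale = 1`. The arithmetic can be sketched in plain Python; the helper name `roi_to_feature_map` and the example sizes are assumptions made for illustration, not part of the repository.

```python
def roi_to_feature_map(roi, img_size, feat_size):
    """Rescale one (x1, y1, x2, y2) box from image to feature-map coordinates."""
    img_h, img_w = img_size      # plays the role of img_size in forward()
    feat_h, feat_w = feat_size   # plays the role of x.shape[2:] in forward()
    x1, y1, x2, y2 = roi
    # x coordinates scale with the width ratio, y coordinates with the height ratio.
    return (
        x1 / img_w * feat_w,
        y1 / img_h * feat_h,
        x2 / img_w * feat_w,
        y2 / img_h * feat_h,
    )


# An example box in a 600x600 image, mapped onto a 38x38 feature map.
scaled = roi_to_feature_map((0.0, 150.0, 300.0, 600.0), (600, 600), (38, 38))
print(scaled)
```

The tensor version in the listing does the same thing for all RoIs at once by indexing columns `[0, 2]` and `[1, 3]`.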

rcnn_training.py

import math

from functools import partial

import numpy as np

import torch

import torch.nn as nn

from torch.nn import functional as F

def bbox_iou(bbox_a, bbox_b):

if bbox_a.shape[1] != 4 or bbox_b.shape[1] != 4:

print(bbox_a, bbox_b)

raise IndexError

tl = np.maximum(bbox_a[:, None, :2], bbox_b[:, :2])

br = np.minimum(bbox_a[:, None, 2:], bbox_b[:, 2:])

area_i = np.prod(br - tl, axis=2) * (tl < br).all(axis=2)

area_a = np.prod(bbox_a[:, 2:] - bbox_a[:, :2], axis=1)

area_b = np.prod(bbox_b[:, 2:] - bbox_b[:, :2], axis=1)

return area_i / (area_a[:, None] + area_b - area_i)
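
`bbox_iou` above computes the IoU for every pair of boxes with NumPy broadcasting. For a single pair the same calculation can be written out step by step; this is a minimal sketch, and the function name `iou` and the sample boxes are chosen only for illustration.

```python
def iou(box_a, box_b):
    """IoU of two boxes given as (x1, y1, x2, y2) corner coordinates."""
    inter_w = min(box_a[2], box_b[2]) - max(box_a[0], box_b[0])
    inter_h = min(box_a[3], box_b[3]) - max(box_a[1], box_b[1])
    # A non-positive extent on either axis means the boxes do not overlap.
    inter = inter_w * inter_h if inter_w > 0 and inter_h > 0 else 0.0
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    return inter / (area_a + area_b - inter)


overlap = iou((0, 0, 10, 10), (5, 5, 15, 15))      # intersection 25, union 175
disjoint = iou((0, 0, 10, 10), (20, 20, 30, 30))   # no overlap
print(overlap, disjoint)
```

The `(tl < br).all(axis=2)` factor in the listing plays the role of the overlap check here: it zeroes the intersection area for pairs that do not overlap.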

def bbox2loc(src_bbox, dst_bbox):

width = src_bbox[:, 2] - src_bbox[:, 0]

height = src_bbox[:, 3] - src_bbox[:, 1]

ctr_x = src_bbox[:, 0] + 0.5 * width

ctr_y = src_bbox[:, 1] + 0.5 * height

base_width = dst_bbox[:, 2] - dst_bbox[:, 0]

base_height = dst_bbox[:, 3] - dst_bbox[:, 1]

base_ctr_x = dst_bbox[:, 0] + 0.5 * base_width

base_ctr_y = dst_bbox[:, 1] + 0.5 * base_height

eps = np.finfo(height.dtype).eps

width = np.maximum(width, eps)

height = np.maximum(height, eps)

dx = (base_ctr_x - ctr_x) / width

dy = (base_ctr_y - ctr_y) / height

dw = np.log(base_width / width)

dh = np.log(base_height / height)

loc = np.vstack((dx, dy, dw, dh)).transpose()

return loc
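
`bbox2loc` above encodes a target box as centre offsets divided by the source width and height, plus log ratios of the sizes. A worked single-box example may make the four numbers easier to read. It is a sketch: `encode_box` is a hypothetical name, and the epsilon clamp from the listing is left out for brevity.

```python
import math


def encode_box(src, dst):
    """Offsets (dx, dy, dw, dh) that move box src onto box dst.

    Both boxes are (x1, y1, x2, y2). Unlike bbox2loc, this sketch does not
    clamp the source width and height to a small epsilon.
    """
    src_w, src_h = src[2] - src[0], src[3] - src[1]
    src_cx, src_cy = src[0] + 0.5 * src_w, src[1] + 0.5 * src_h
    dst_w, dst_h = dst[2] - dst[0], dst[3] - dst[1]
    dst_cx, dst_cy = dst[0] + 0.5 * dst_w, dst[1] + 0.5 * dst_h
    return (
        (dst_cx - src_cx) / src_w,
        (dst_cy - src_cy) / src_h,
        math.log(dst_w / src_w),
        math.log(dst_h / src_h),
    )


# Source: 10x10 box centred at (5, 5). Target: 20x10 box centred at (15, 10).
dx, dy, dw, dh = encode_box((0.0, 0.0, 10.0, 10.0), (5.0, 5.0, 25.0, 15.0))
print(dx, dy, dw, dh)
```

Here the centre moves one source width to the right and half a source height down, the width doubles (`dw = log 2`) and the height is unchanged (`dh = 0`).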

class AnchorTargetCreator(object):

def __init__(self, n_sample=256, pos_iou_thresh=0.7, neg_iou_thresh=0.3, pos_ratio=0.5):

self.n_sample = n_sample

self.pos_iou_thresh = pos_iou_thresh

self.neg_iou_thresh = neg_iou_thresh

self.pos_ratio = pos_ratio

def __call__(self, bbox, anchor):

argmax_ious, label = self._create_label(anchor, bbox)

if (label > 0).any():

loc = bbox2loc(anchor, bbox[argmax_ious])

return loc, label

else:

return np.zeros_like(anchor), label

def _calc_ious(self, anchor, bbox):

#----------------------------------------------#

# IoU between each anchor and each ground-truth box

# ious has shape [num_anchors, num_gt]

#----------------------------------------------#

ious = bbox_iou(anchor, bbox)

if len(bbox)==0:

return np.zeros(len(anchor), np.int32), np.zeros(len(anchor)), np.zeros(len(bbox))

#---------------------------------------------------------#

# Index of the best-matching ground-truth box for each anchor [num_anchors, ]

#---------------------------------------------------------#

argmax_ious = ious.argmax(axis=1)

#---------------------------------------------------------#

# IoU of each anchor with its best-matching ground-truth box [num_anchors, ]

#---------------------------------------------------------#

max_ious = np.max(ious, axis=1)

#---------------------------------------------------------#

# Index of the best-matching anchor for each ground-truth box [num_gt, ]

#---------------------------------------------------------#

gt_argmax_ious = ious.argmax(axis=0)

#---------------------------------------------------------#

# Make sure every ground-truth box is assigned at least one anchor

#---------------------------------------------------------#

for i in range(len(gt_argmax_ious)):

argmax_ious[gt_argmax_ious[i]] = i

return argmax_ious, max_ious, gt_argmax_ious

def _create_label(self, anchor, bbox):

# ------------------------------------------ #

# 1 = positive, 0 = negative, -1 = ignored

# Every label starts out as -1

# ------------------------------------------ #

label = np.empty((len(anchor),), dtype=np.int32)

label.fill(-1)

# ------------------------------------------------------------------------ #

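# argmax_ious: index of the best-matching ground-truth box for each anchor [num_anchors, ]

# max_ious: IoU of each anchor with its best-matching ground-truth box [num_anchors, ]

# gt_argmax_ious: index of the best-matching anchor for each ground-truth box [num_gt, ]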

# ------------------------------------------------------------------------ #

argmax_ious, max_ious, gt_argmax_ious = self._calc_ious(anchor, bbox)

# ----------------------------------------------------- #

# Below neg_iou_thresh: negative sample

# At or above pos_iou_thresh: positive sample

# Every ground-truth box gets at least one positive anchor

# ----------------------------------------------------- #

label[max_ious < self.neg_iou_thresh] = 0

label[max_ious >= self.pos_iou_thresh] = 1

if len(gt_argmax_ious)>0:

label[gt_argmax_ious] = 1

# ----------------------------------------------------- #

# If there are more than n_pos positives (128 by default), cap them at n_pos

# ----------------------------------------------------- #

n_pos = int(self.pos_ratio * self.n_sample)

pos_index = np.where(label == 1)[0]

if len(pos_index) > n_pos:

disable_index = np.random.choice(pos_index, size=(len(pos_index) - n_pos), replace=False)

label[disable_index] = -1

# ----------------------------------------------------- #

# Balance positives and negatives so the total stays at n_sample (256 by default)

# ----------------------------------------------------- #

n_neg = self.n_sample - np.sum(label == 1)

neg_index = np.where(label == 0)[0]

if len(neg_index) > n_neg:

disable_index = np.random.choice(neg_index, size=(len(neg_index) - n_neg), replace=False)

label[disable_index] = -1

return argmax_ious, label
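
The labelling rule in `_create_label` can be summarised without NumPy. This is a minimal sketch under stated assumptions: `assign_anchor_labels` is a hypothetical helper, it takes the per-anchor maximum IoUs as a plain list, and it leaves out the random subsampling that caps positives and negatives.

```python
def assign_anchor_labels(max_ious, gt_best_anchor,
                         pos_iou_thresh=0.7, neg_iou_thresh=0.3):
    """Return 1 (positive), 0 (negative) or -1 (ignored) for each anchor."""
    labels = [-1] * len(max_ious)          # every anchor starts as ignored
    for i, value in enumerate(max_ious):
        if value < neg_iou_thresh:
            labels[i] = 0
        if value >= pos_iou_thresh:
            labels[i] = 1
    # The best anchor of each ground-truth box is positive regardless of its IoU.
    for index in gt_best_anchor:
        labels[index] = 1
    return labels


# Anchor 3 has a low IoU but is the best match for the only ground-truth box.
labels = assign_anchor_labels([0.1, 0.5, 0.8, 0.2], gt_best_anchor=[3])
print(labels)
```

Anchors whose IoU falls between the two thresholds stay at -1, which is why the RPN classification loss in the trainer is computed with `ignore_index=-1`.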

class ProposalTargetCreator(object):

def __init__(self, n_sample=128, pos_ratio=0.5, pos_iou_thresh=0.5, neg_iou_thresh_high=0.5, neg_iou_thresh_low=0):

self.n_sample = n_sample

self.pos_ratio = pos_ratio

self.pos_roi_per_image = np.round(self.n_sample * self.pos_ratio)

self.pos_iou_thresh = pos_iou_thresh

self.neg_iou_thresh_high = neg_iou_thresh_high

self.neg_iou_thresh_low = neg_iou_thresh_low

def __call__(self, roi, bbox, label, loc_normalize_std=(0.1, 0.1, 0.2, 0.2)):

roi = np.concatenate((roi.detach().cpu().numpy(), bbox), axis=0)

# ----------------------------------------------------- #

# IoU between the proposals and the ground-truth boxes

# ----------------------------------------------------- #

iou = bbox_iou(roi, bbox)

if len(bbox)==0:

gt_assignment = np.zeros(len(roi), np.int32)

max_iou = np.zeros(len(roi))

gt_roi_label = np.zeros(len(roi))

else:

#---------------------------------------------------------#

# Index of the best-matching ground-truth box for each proposal [num_roi, ]

#---------------------------------------------------------#

gt_assignment = iou.argmax(axis=1)

#---------------------------------------------------------#

# IoU of each proposal with its best-matching ground-truth box [num_roi, ]

#---------------------------------------------------------#

max_iou = iou.max(axis=1)

#---------------------------------------------------------#

# Shift the ground-truth labels by +1 because class 0 is background

#---------------------------------------------------------#

gt_roi_label = label[gt_assignment] + 1

#----------------------------------------------------------------#

# Proposals whose IoU with a ground-truth box is >= pos_iou_thresh are positives

# Keep at most self.pos_roi_per_image positives

#----------------------------------------------------------------#

pos_index = np.where(max_iou >= self.pos_iou_thresh)[0]

pos_roi_per_this_image = int(min(self.pos_roi_per_image, pos_index.size))

if pos_index.size > 0:

pos_index = np.random.choice(pos_index, size=pos_roi_per_this_image, replace=False)

#-----------------------------------------------------------------------------------------------------#

# Proposals with neg_iou_thresh_low <= IoU < neg_iou_thresh_high are negatives

# Fill up with negatives so positives + negatives total self.n_sample (fewer if not enough negatives exist)

#-----------------------------------------------------------------------------------------------------#

neg_index = np.where((max_iou < self.neg_iou_thresh_high) & (max_iou >= self.neg_iou_thresh_low))[0]

neg_roi_per_this_image = self.n_sample - pos_roi_per_this_image

neg_roi_per_this_image = int(min(neg_roi_per_this_image, neg_index.size))

if neg_index.size > 0:

neg_index = np.random.choice(neg_index, size=neg_roi_per_this_image, replace=False)

#---------------------------------------------------------#

# sample_roi [n_sample, 4]

# gt_roi_loc [n_sample, 4]

# gt_roi_label [n_sample, ]

#---------------------------------------------------------#

keep_index = np.append(pos_index, neg_index)

sample_roi = roi[keep_index]

if len(bbox)==0:

return sample_roi, np.zeros_like(sample_roi), gt_roi_label[keep_index]

gt_roi_loc = bbox2loc(sample_roi, bbox[gt_assignment[keep_index]])

gt_roi_loc = (gt_roi_loc / np.array(loc_normalize_std, np.float32))

gt_roi_label = gt_roi_label[keep_index]

gt_roi_label[pos_roi_per_this_image:] = 0

return sample_roi, gt_roi_loc, gt_roi_label
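
The positive and negative counts that `ProposalTargetCreator` ends up sampling depend only on how many candidates fall on each side of the IoU thresholds. The quota arithmetic is sketched below; `sample_quota` is a hypothetical helper, and the candidate counts are made-up inputs.

```python
def sample_quota(n_pos_candidates, n_neg_candidates, n_sample=128, pos_ratio=0.5):
    """Return (n_pos, n_neg) actually sampled for one image."""
    max_pos = int(round(n_sample * pos_ratio))      # pos_roi_per_image
    n_pos = min(max_pos, n_pos_candidates)
    # Negatives fill whatever is left, limited by how many exist.
    n_neg = min(n_sample - n_pos, n_neg_candidates)
    return n_pos, n_neg


few_positives = sample_quota(10, 500)    # positives are scarce
many_positives = sample_quota(200, 500)  # positives are capped at 64
few_negatives = sample_quota(200, 30)    # total ends up below n_sample
print(few_positives, many_positives, few_negatives)
```

When positives are scarce the remaining slots go to negatives, so the total still reaches `n_sample` as long as enough negatives are available.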

class FasterRCNNTrainer(nn.Module):

def __init__(self, model_train, optimizer):

super(FasterRCNNTrainer, self).__init__()

self.model_train = model_train

self.optimizer = optimizer

self.rpn_sigma = 1

self.roi_sigma = 1

self.anchor_target_creator = AnchorTargetCreator()

self.proposal_target_creator = ProposalTargetCreator()

self.loc_normalize_std = [0.1, 0.1, 0.2, 0.2]

def _fast_rcnn_loc_loss(self, pred_loc, gt_loc, gt_label, sigma):

pred_loc = pred_loc[gt_label > 0]

gt_loc = gt_loc[gt_label > 0]

sigma_squared = sigma ** 2

regression_diff = (gt_loc - pred_loc)

regression_diff = regression_diff.abs().float()

regression_loss = torch.where(

regression_diff < (1. / sigma_squared),

0.5 * sigma_squared * regression_diff ** 2,

regression_diff - 0.5 / sigma_squared

)

regression_loss = regression_loss.sum()

num_pos = (gt_label > 0).sum().float()

regression_loss /= torch.max(num_pos, torch.ones_like(num_pos))

return regression_loss

def forward(self, imgs, bboxes, labels, scale):

n = imgs.shape[0]

img_size = imgs.shape[2:]

#-------------------------------#

# Compute the shared feature map

#-------------------------------#

base_feature = self.model_train(imgs, mode = 'extractor')

# -------------------------------------------------- #

# Run the RPN to get box offsets, scores, proposals and anchors

# -------------------------------------------------- #

rpn_locs, rpn_scores, rois, roi_indices, anchor = self.model_train(x = [base_feature, img_size], scale = scale, mode = 'rpn')

rpn_loc_loss_all, rpn_cls_loss_all, roi_loc_loss_all, roi_cls_loss_all = 0, 0, 0, 0

sample_rois, sample_indexes, gt_roi_locs, gt_roi_labels = [], [], [], []

for i in range(n):

bbox = bboxes[i]

label = labels[i]

rpn_loc = rpn_locs[i]

rpn_score = rpn_scores[i]

roi = rois[i]

# -------------------------------------------------- #

# Build the RPN targets from the ground-truth boxes and the anchors

# Every anchor gets a label

# gt_rpn_loc [num_anchors, 4]

# gt_rpn_label [num_anchors, ]

# -------------------------------------------------- #

gt_rpn_loc, gt_rpn_label = self.anchor_target_creator(bbox, anchor[0].cpu().numpy())

gt_rpn_loc = torch.Tensor(gt_rpn_loc).type_as(rpn_locs)

gt_rpn_label = torch.Tensor(gt_rpn_label).type_as(rpn_locs).long()

# -------------------------------------------------- #

# RPN regression loss and classification loss

# -------------------------------------------------- #

rpn_loc_loss = self._fast_rcnn_loc_loss(rpn_loc, gt_rpn_loc, gt_rpn_label, self.rpn_sigma)

rpn_cls_loss = F.cross_entropy(rpn_score, gt_rpn_label, ignore_index=-1)

rpn_loc_loss_all += rpn_loc_loss

rpn_cls_loss_all += rpn_cls_loss

# ------------------------------------------------------ #

# Build the classifier (RoI head) targets from the ground-truth boxes and the proposals

# This yields three variables: sample_roi, gt_roi_loc, gt_roi_label

# sample_roi [n_sample, 4]

# gt_roi_loc [n_sample, 4]

# gt_roi_label [n_sample, ]

# ------------------------------------------------------ #

sample_roi, gt_roi_loc, gt_roi_label = self.proposal_target_creator(roi, bbox, label, self.loc_normalize_std)

sample_rois.append(torch.Tensor(sample_roi).type_as(rpn_locs))

sample_indexes.append(torch.ones(len(sample_roi)).type_as(rpn_locs) * roi_indices[i][0])

gt_roi_locs.append(torch.Tensor(gt_roi_loc).type_as(rpn_locs))

gt_roi_labels.append(torch.Tensor(gt_roi_label).type_as(rpn_locs).long())

sample_rois = torch.stack(sample_rois, dim=0)

sample_indexes = torch.stack(sample_indexes, dim=0)

roi_cls_locs, roi_scores = self.model_train([base_feature, sample_rois, sample_indexes, img_size], mode = 'head')

for i in range(n):

# ------------------------------------------------------ #

# Pick the regression output that belongs to each proposal's target class

# ------------------------------------------------------ #

n_sample = roi_cls_locs.size()[1]

roi_cls_loc = roi_cls_locs[i]

roi_score = roi_scores[i]

gt_roi_loc = gt_roi_locs[i]

gt_roi_label = gt_roi_labels[i]

roi_cls_loc = roi_cls_loc.view(n_sample, -1, 4)

roi_loc = roi_cls_loc[torch.arange(0, n_sample), gt_roi_label]

# -------------------------------------------------- #

# Classifier (RoI head) regression loss and classification loss

# -------------------------------------------------- #

roi_loc_loss = self._fast_rcnn_loc_loss(roi_loc, gt_roi_loc, gt_roi_label.data, self.roi_sigma)

roi_cls_loss = nn.CrossEntropyLoss()(roi_score, gt_roi_label)

roi_loc_loss_all += roi_loc_loss

roi_cls_loss_all += roi_cls_loss

losses = [rpn_loc_loss_all/n, rpn_cls_loss_all/n, roi_loc_loss_all/n, roi_cls_loss_all/n]

losses = losses + [sum(losses)]

return losses

def train_step(self, imgs, bboxes, labels, scale, fp16=False, scaler=None):

self.optimizer.zero_grad()

if not fp16:

losses = self.forward(imgs, bboxes, labels, scale)

losses[-1].backward()

self.optimizer.step()

else:

from torch.cuda.amp import autocast

with autocast():

losses = self.forward(imgs, bboxes, labels, scale)

#----------------------#

# Backward pass

#----------------------#

scaler.scale(losses[-1]).backward()

scaler.step(self.optimizer)

scaler.update()

return losses
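
`_fast_rcnn_loc_loss` above is a smooth L1 loss with a `sigma` parameter: quadratic for small errors, linear for large ones, with the switch at `1 / sigma**2`. The per-element formula can be checked in isolation; this is a sketch, and the name `smooth_l1` is an assumption for this example.

```python
def smooth_l1(diff, sigma=1.0):
    """Smooth L1 penalty for a single regression error."""
    sigma_squared = sigma ** 2
    abs_diff = abs(diff)
    if abs_diff < 1.0 / sigma_squared:
        # Quadratic region: small errors are penalised gently.
        return 0.5 * sigma_squared * abs_diff ** 2
    # Linear region: large errors grow linearly, which limits outlier impact.
    return abs_diff - 0.5 / sigma_squared


small = smooth_l1(0.5)    # quadratic branch
edge = smooth_l1(1.0)     # exactly at the switch point for sigma = 1
large = smooth_l1(-2.0)   # linear branch, sign does not matter
print(small, edge, large)
```

The trainer sums this penalty over the four offsets of every positive sample and divides by the number of positives, clamped to at least one.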

def weights_init(net, init_type='normal', init_gain=0.02):

def init_func(m):

classname = m.__class__.__name__

if hasattr(m, 'weight') and classname.find('Conv') != -1:

if init_type == 'normal':

torch.nn.init.normal_(m.weight.data, 0.0, init_gain)

elif init_type == 'xavier':

torch.nn.init.xavier_normal_(m.weight.data, gain=init_gain)

elif init_type == 'kaiming':

torch.nn.init.kaiming_normal_(m.weight.data, a=0, mode='fan_in')

elif init_type == 'orthogonal':

torch.nn.init.orthogonal_(m.weight.data, gain=init_gain)

else:

raise NotImplementedError('initialization method [%s] is not implemented' % init_type)

elif classname.find('BatchNorm2d') != -1:

torch.nn.init.normal_(m.weight.data, 1.0, 0.02)

torch.nn.init.constant_(m.bias.data, 0.0)

print('initialize network with %s type' % init_type)

net.apply(init_func)

def get_lr_scheduler(lr_decay_type, lr, min_lr, total_iters, warmup_iters_ratio = 0.05, warmup_lr_ratio = 0.1, no_aug_iter_ratio = 0.05, step_num = 10):

def yolox_warm_cos_lr(lr, min_lr, total_iters, warmup_total_iters, warmup_lr_start, no_aug_iter, iters):

if iters <= warmup_total_iters:

# lr = (lr - warmup_lr_start) * iters / float(warmup_total_iters) + warmup_lr_start

lr = (lr - warmup_lr_start) * pow(iters / float(warmup_total_iters), 2) + warmup_lr_start

elif iters >= total_iters - no_aug_iter:

lr = min_lr

else:

lr = min_lr + 0.5 * (lr - min_lr) * (

1.0 + math.cos(math.pi* (iters - warmup_total_iters) / (total_iters - warmup_total_iters - no_aug_iter))

)

return lr

def step_lr(lr, decay_rate, step_size, iters):

if step_size < 1:

raise ValueError("step_size must be at least 1.")

n = iters // step_size

out_lr = lr * decay_rate ** n

return out_lr

if lr_decay_type == "cos":

warmup_total_iters = min(max(warmup_iters_ratio * total_iters, 1), 3)

warmup_lr_start = max(warmup_lr_ratio * lr, 1e-6)

no_aug_iter = min(max(no_aug_iter_ratio * total_iters, 1), 15)

func = partial(yolox_warm_cos_lr ,lr, min_lr, total_iters, warmup_total_iters, warmup_lr_start, no_aug_iter)

else:

decay_rate = (min_lr / lr) ** (1 / (step_num - 1))

step_size = total_iters / step_num

func = partial(step_lr, lr, decay_rate, step_size)

return func

def set_optimizer_lr(optimizer, lr_scheduler_func, epoch):

lr = lr_scheduler_func(epoch)

for param_group in optimizer.param_groups:

param_group['lr'] = lr
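
The `cos` branch of `get_lr_scheduler` combines a quadratic warm-up, a cosine decay and a flat tail at `min_lr`. The standalone sketch below reproduces that shape; `warm_cos_lr` is a hypothetical name, and the default values are the ones the listing would derive for `lr=0.01`, `min_lr=0.0001` and `total_iters=100`.

```python
import math


def warm_cos_lr(it, lr=0.01, min_lr=0.0001, total_iters=100,
                warmup_iters=3, warmup_lr_start=0.001, no_aug_iters=5):
    """Learning rate at iteration `it` (an epoch index in the listing)."""
    if it <= warmup_iters:
        # Quadratic ramp from warmup_lr_start up to lr.
        return (lr - warmup_lr_start) * (it / warmup_iters) ** 2 + warmup_lr_start
    if it >= total_iters - no_aug_iters:
        # Flat tail at the minimum learning rate.
        return min_lr
    progress = (it - warmup_iters) / (total_iters - warmup_iters - no_aug_iters)
    return min_lr + 0.5 * (lr - min_lr) * (1.0 + math.cos(math.pi * progress))


schedule = [warm_cos_lr(it) for it in range(100)]
print(schedule[0], schedule[3], schedule[49], schedule[95])
```

With these values the rate starts at 0.001, peaks at 0.01 after three iterations, passes the midpoint of the cosine at iteration 49 and stays at 0.0001 from iteration 95 onward.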

frcnn.py

import torch.nn as nn

from nets.classifier import Resnet50RoIHead, VGG16RoIHead

from nets.resnet50 import resnet50

from nets.rpn import RegionProposalNetwork

from nets.vgg16 import decom_vgg16

class FasterRCNN(nn.Module):

def __init__(self, num_classes,

mode = "training",

feat_stride = 16,

anchor_scales = [8, 16, 32],

ratios = [0.5, 1, 2],

backbone = 'vgg',

pretrained = False):

super(FasterRCNN, self).__init__()

self.feat_stride = feat_stride

#---------------------------------#

# Two backbones are supported:

# vgg and resnet50

#---------------------------------#

if backbone == 'vgg':

self.extractor, classifier = decom_vgg16(pretrained)

#---------------------------------#

# Build the region proposal network

#---------------------------------#

self.rpn = RegionProposalNetwork(

512, 512,

ratios = ratios,

anchor_scales = anchor_scales,

feat_stride = self.feat_stride,

mode = mode

)

#---------------------------------#

# Build the classifier (RoI head) network

#---------------------------------#

self.head = VGG16RoIHead(

n_class = num_classes + 1,

roi_size = 7,

spatial_scale = 1,

classifier = classifier

)

elif backbone == 'resnet50':

self.extractor, classifier = resnet50(pretrained)

#---------------------------------#

# Build the region proposal network

#---------------------------------#

self.rpn = RegionProposalNetwork(

1024, 512,

ratios = ratios,

anchor_scales = anchor_scales,

feat_stride = self.feat_stride,

mode = mode

)

#---------------------------------#

# Build the classifier (RoI head) network

#---------------------------------#

self.head = Resnet50RoIHead(

n_class = num_classes + 1,

roi_size = 14,

spatial_scale = 1,

classifier = classifier

)

def forward(self, x, scale=1., mode="forward"):

if mode == "forward":

#---------------------------------#

# Size (height, width) of the input image

#---------------------------------#

img_size = x.shape[2:]

#---------------------------------#

# Extract features with the backbone

#---------------------------------#

base_feature = self.extractor.forward(x)

#---------------------------------#

# Get the proposals

#---------------------------------#

_, _, rois, roi_indices, _ = self.rpn.forward(base_feature, img_size, scale)

#---------------------------------------#

# Classification and regression outputs of the classifier

#---------------------------------------#

roi_cls_locs, roi_scores = self.head.forward(base_feature, rois, roi_indices, img_size)

return roi_cls_locs, roi_scores, rois, roi_indices

elif mode == "extractor":

#---------------------------------#

# Extract features with the backbone

#---------------------------------#

base_feature = self.extractor.forward(x)

return base_feature

elif mode == "rpn":

base_feature, img_size = x

#---------------------------------#

# Get the proposals

#---------------------------------#

rpn_locs, rpn_scores, rois, roi_indices, anchor = self.rpn.forward(base_feature, img_size, scale)

return rpn_locs, rpn_scores, rois, roi_indices, anchor

elif mode == "head":

base_feature, rois, roi_indices, img_size = x

#---------------------------------------#

# Classification and regression outputs of the classifier

#---------------------------------------#

roi_cls_locs, roi_scores = self.head.forward(base_feature, rois, roi_indices, img_size)

return roi_cls_locs, roi_scores

def freeze_bn(self):

for m in self.modules():

if isinstance(m, nn.BatchNorm2d):

m.eval()

resnet50.py

import math

import torch.nn as nn

from torch.hub import load_state_dict_from_url

class Bottleneck(nn.Module):

expansion = 4

def __init__(self, inplanes, planes, stride=1, downsample=None):

super(Bottleneck, self).__init__()

self.conv1 = nn.Conv2d(inplanes, planes, kernel_size=1, stride=stride, bias=False)

self.bn1 = nn.BatchNorm2d(planes)

self.conv2 = nn.Conv2d(planes, planes, kernel_size=3, stride=1, padding=1, bias=False)

self.bn2 = nn.BatchNorm2d(planes)

self.conv3 = nn.Conv2d(planes, planes * 4, kernel_size=1, bias=False)

self.bn3 = nn.BatchNorm2d(planes * 4)

self.relu = nn.ReLU(inplace=True)

self.downsample = downsample

self.stride = stride

def forward(self, x):

residual = x

out = self.conv1(x)

out = self.bn1(out)

out = self.relu(out)

out = self.conv2(out)

out = self.bn2(out)

out = self.relu(out)

out = self.conv3(out)

out = self.bn3(out)

if self.downsample is not None:

residual = self.downsample(x)

out += residual

out = self.relu(out)

return out

class ResNet(nn.Module):

def __init__(self, block, layers, num_classes=1000):

#-----------------------------------#

# Assume the input image is 600,600,3

#-----------------------------------#

self.inplanes = 64

super(ResNet, self).__init__()

# 600,600,3 -> 300,300,64

self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3, bias=False)

self.bn1 = nn.BatchNorm2d(64)

self.relu = nn.ReLU(inplace=True)

# 300,300,64 -> 150,150,64

self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=0, ceil_mode=True)

# 150,150,64 -> 150,150,256

self.layer1 = self._make_layer(block, 64, layers[0])

# 150,150,256 -> 75,75,512

self.layer2 = self._make_layer(block, 128, layers[1], stride=2)

# 75,75,512 -> 38,38,1024; this 38,38,1024 tensor is the shared feature map

self.layer3 = self._make_layer(block, 256, layers[2], stride=2)

# self.layer4 is used in the classifier model

self.layer4 = self._make_layer(block, 512, layers[3], stride=2)

self.avgpool = nn.AvgPool2d(7)

self.fc = nn.Linear(512 * block.expansion, num_classes)

for m in self.modules():

if isinstance(m, nn.Conv2d):

n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels

m.weight.data.normal_(0, math.sqrt(2. / n))

elif isinstance(m, nn.BatchNorm2d):

m.weight.data.fill_(1)

m.bias.data.zero_()

def _make_layer(self, block, planes, blocks, stride=1):

downsample = None

#-------------------------------------------------------------------#

# The shortcut branch needs a downsample whenever the block shrinks height and width (or changes the channel count)

#-------------------------------------------------------------------#

if stride != 1 or self.inplanes != planes * block.expansion:

downsample = nn.Sequential(

nn.Conv2d(self.inplanes, planes * block.expansion,kernel_size=1, stride=stride, bias=False),

nn.BatchNorm2d(planes * block.expansion),

)

layers = []

layers.append(block(self.inplanes, planes, stride, downsample))

self.inplanes = planes * block.expansion

for i in range(1, blocks):

layers.append(block(self.inplanes, planes))

return nn.Sequential(*layers)

def forward(self, x):

x = self.conv1(x)

x = self.bn1(x)

x = self.relu(x)

x = self.maxpool(x)

x = self.layer1(x)

x = self.layer2(x)

x = self.layer3(x)

x = self.layer4(x)

x = self.avgpool(x)

x = x.view(x.size(0), -1)

x = self.fc(x)

return x

def resnet50(pretrained = False):

model = ResNet(Bottleneck, [3, 4, 6, 3])

if pretrained:

state_dict = load_state_dict_from_url("https://download.pytorch.org/models/resnet50-19c8e357.pth", model_dir="./model_data")

model.load_state_dict(state_dict)

#----------------------------------------------------------------------------#

# Feature extractor: conv1 through model.layer3, which yields a 38,38,1024 feature map

#----------------------------------------------------------------------------#

features = list([model.conv1, model.bn1, model.relu, model.maxpool, model.layer1, model.layer2, model.layer3])

#----------------------------------------------------------------------------#

# Classifier part: model.layer4 through model.avgpool

#----------------------------------------------------------------------------#

classifier = list([model.layer4, model.avgpool])

features = nn.Sequential(*features)

classifier = nn.Sequential(*classifier)

return features, classifier
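
The shape comments in `ResNet.__init__` (600 to 300 to 150 to 75 to 38) follow from the usual output-size formula for convolution and pooling layers. The short sketch below reproduces the chain; `out_size` is a hypothetical helper, and it ignores the extra PyTorch rule that drops a last pooling window starting outside the input, which does not apply at these sizes.

```python
import math


def out_size(size, kernel, stride, padding=0, ceil_mode=False):
    """Spatial output size of a conv or pooling layer along one axis."""
    span = size + 2 * padding - kernel
    steps = math.ceil(span / stride) if ceil_mode else span // stride
    return steps + 1


sizes = [600]
sizes.append(out_size(sizes[-1], kernel=7, stride=2, padding=3))        # conv1
sizes.append(out_size(sizes[-1], kernel=3, stride=2, ceil_mode=True))   # maxpool
sizes.append(out_size(sizes[-1], kernel=1, stride=2))                   # layer2, first block
sizes.append(out_size(sizes[-1], kernel=1, stride=2))                   # layer3, first block
print(sizes)
```

`layer1` is skipped because it uses stride 1 and keeps the 150x150 resolution. In `layer2` and `layer3` the stride sits on the first 1x1 convolution of the first `Bottleneck`, which is why a 1x1 kernel is used in the last two steps.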

rpn.py

import numpy as np

import torch

from torch import nn

from torch.nn import functional as F

from torchvision.ops import nms

from utils.anchors import _enumerate_shifted_anchor, generate_anchor_base

from utils.utils_bbox import loc2bbox

class ProposalCreator():

def __init__(

self,

mode,

nms_iou = 0.7,

n_train_pre_nms = 12000,

n_train_post_nms = 600,

n_test_pre_nms = 3000,

n_test_post_nms = 300,

min_size = 16

):

#-----------------------------------#

# Training or prediction mode

#-----------------------------------#

self.mode = mode

#-----------------------------------#

# IoU threshold for non-maximum suppression on the proposals

#-----------------------------------#

self.nms_iou = nms_iou

#-----------------------------------#

# Number of proposals kept during training (before and after NMS)

#-----------------------------------#

self.n_train_pre_nms = n_train_pre_nms

self.n_train_post_nms = n_train_post_nms

#-----------------------------------#

# Number of proposals kept during prediction (before and after NMS)

#-----------------------------------#

self.n_test_pre_nms = n_test_pre_nms

self.n_test_post_nms = n_test_post_nms

self.min_size = min_size

def __call__(self, loc, score, anchor, img_size, scale=1.):

if self.mode == "training":

n_pre_nms = self.n_train_pre_nms

n_post_nms = self.n_train_post_nms

else:

n_pre_nms = self.n_test_pre_nms

n_post_nms = self.n_test_post_nms

#-----------------------------------#

# Convert the anchors to a tensor

#-----------------------------------#

anchor = torch.from_numpy(anchor).type_as(loc)

#-----------------------------------#

# Decode the RPN predictions into proposals

#-----------------------------------#

roi = loc2bbox(anchor, loc)

#-----------------------------------#

# Clip the proposals to the image boundary

#-----------------------------------#

roi[:, [0, 2]] = torch.clamp(roi[:, [0, 2]], min = 0, max = img_size[1])

roi[:, [1, 3]] = torch.clamp(roi[:, [1, 3]], min = 0, max = img_size[0])

#-----------------------------------#

# Proposals must be at least min_size wide and high (16 by default, multiplied by scale)

#-----------------------------------#

min_size = self.min_size * scale

keep = torch.where(((roi[:, 2] - roi[:, 0]) >= min_size) & ((roi[:, 3] - roi[:, 1]) >= min_size))[0]

#-----------------------------------#

# Keep the proposals that pass the size filter

#-----------------------------------#

roi = roi[keep, :]

score = score[keep]

#-----------------------------------#

# Sort by score and keep the top proposals

#-----------------------------------#

order = torch.argsort(score, descending=True)

if n_pre_nms > 0:

order = order[:n_pre_nms]

roi = roi[order, :]

score = score[order]

#-----------------------------------#

# Apply non-maximum suppression to the proposals

# The official torchvision implementation is much faster

#-----------------------------------#

keep = nms(roi, score, self.nms_iou)

if len(keep) < n_post_nms:

index_extra = np.random.choice(range(len(keep)), size=(n_post_nms - len(keep)), replace=True)

keep = torch.cat([keep, keep[index_extra]])

keep = keep[:n_post_nms]

roi = roi[keep]

return roi
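
`ProposalCreator` delegates non-maximum suppression to `torchvision.ops.nms`. What that step computes can be illustrated with a greedy pure-Python version. It is only a sketch of the idea, not torchvision's implementation, and `greedy_nms` and `box_iou` are names made up for this example.

```python
def box_iou(a, b):
    """IoU of two (x1, y1, x2, y2) boxes."""
    inter_w = min(a[2], b[2]) - max(a[0], b[0])
    inter_h = min(a[3], b[3]) - max(a[1], b[1])
    inter = inter_w * inter_h if inter_w > 0 and inter_h > 0 else 0.0
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union


def greedy_nms(boxes, scores, iou_threshold):
    """Indices of the kept boxes, ordered by decreasing score."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with any kept box.
        if all(box_iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
scores = [0.9, 0.8, 0.7]
strict = greedy_nms(boxes, scores, iou_threshold=0.5)
loose = greedy_nms(boxes, scores, iou_threshold=0.7)
print(strict, loose)
```

The first two boxes overlap with an IoU of 81/119, about 0.68, so the lower-scoring one is dropped at a threshold of 0.5 but survives at 0.7, the default `nms_iou` in the listing.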

class RegionProposalNetwork(nn.Module):

def __init__(

self,

in_channels = 512,

mid_channels = 512,

ratios = [0.5, 1, 2],

anchor_scales = [8, 16, 32],

feat_stride = 16,

mode = "training",

):

super(RegionProposalNetwork, self).__init__()

#-----------------------------------------#

# Generate the base anchors, shape [9, 4]

#-----------------------------------------#

self.anchor_base = generate_anchor_base(anchor_scales = anchor_scales, ratios = ratios)

n_anchor = self.anchor_base.shape[0]

#-----------------------------------------#

# Start with a 3x3 convolution, which can be seen as feature aggregation

#-----------------------------------------#

self.conv1 = nn.Conv2d(in_channels, mid_channels, 3, 1, 1)

#-----------------------------------------#

# Classification: does each anchor contain an object?

#-----------------------------------------#

self.score = nn.Conv2d(mid_channels, n_anchor * 2, 1, 1, 0)

#-----------------------------------------#

# Regression: offsets that adjust each anchor

#-----------------------------------------#

self.loc = nn.Conv2d(mid_channels, n_anchor * 4, 1, 1, 0)

#-----------------------------------------#

# Stride between feature-map points, in input pixels

#-----------------------------------------#

self.feat_stride = feat_stride

#-----------------------------------------#

# Decodes the proposals and applies non-maximum suppression

#-----------------------------------------#

self.proposal_layer = ProposalCreator(mode)

#--------------------------------------#

# Weight initialization for the RPN layers

#--------------------------------------#

normal_init(self.conv1, 0, 0.01)

normal_init(self.score, 0, 0.01)

normal_init(self.loc, 0, 0.01)

def forward(self, x, img_size, scale=1.):

n, _, h, w = x.shape

#-----------------------------------------#

# Start with a 3x3 convolution, which can be seen as feature aggregation

#-----------------------------------------#

x = F.relu(self.conv1(x))

#-----------------------------------------#

# Regression: offsets that adjust each anchor

#-----------------------------------------#

rpn_locs = self.loc(x)

rpn_locs = rpn_locs.permute(0, 2, 3, 1).contiguous().view(n, -1, 4)

#-----------------------------------------#

# Classification: does each anchor contain an object?

#-----------------------------------------#

rpn_scores = self.score(x)

rpn_scores = rpn_scores.permute(0, 2, 3, 1).contiguous().view(n, -1, 2)

#--------------------------------------------------------------------------------------#

# Softmax over the two outcomes per anchor:

# object or no object; rpn_softmax_scores[:, :, 1] is the probability that the anchor contains an object

#--------------------------------------------------------------------------------------#

rpn_softmax_scores = F.softmax(rpn_scores, dim=-1)

rpn_fg_scores = rpn_softmax_scores[:, :, 1].contiguous()

rpn_fg_scores = rpn_fg_scores.view(n, -1)

#------------------------------------------------------------------------------------------------#

# Generate the anchors, which tile every feature-map point. The quoted shape (12996, 4) for a 600,600,3 input corresponds to a 38x38 feature map (38 * 38 * 9)

#------------------------------------------------------------------------------------------------#

anchor = _enumerate_shifted_anchor(np.array(self.anchor_base), self.feat_stride, h, w)

rois = list()

roi_indices = list()

for i in range(n):

roi = self.proposal_layer(rpn_locs[i], rpn_fg_scores[i], anchor, img_size, scale = scale)

batch_index = i * torch.ones((len(roi),))

rois.append(roi.unsqueeze(0))

roi_indices.append(batch_index.unsqueeze(0))

rois = torch.cat(rois, dim=0).type_as(x)

roi_indices = torch.cat(roi_indices, dim=0).type_as(x)

anchor = torch.from_numpy(anchor).unsqueeze(0).float().to(x.device)

return rpn_locs, rpn_scores, rois, roi_indices, anchor

def normal_init(m, mean, stddev, truncated=False):

if truncated:

m.weight.data.normal_().fmod_(2).mul_(stddev).add_(mean) # not a perfect approximation

else:

m.weight.data.normal_(mean, stddev)

m.bias.data.zero_()
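To make the reshaping in `forward` concrete, here is a minimal pure-Python sketch (no PyTorch required) of the shapes the RPN head produces and of how the two-class softmax reduces to a foreground probability. The helper names `rpn_output_shapes` and `foreground_prob` are hypothetical and are not part of the repository.

```python
import math

def rpn_output_shapes(n, h, w, n_anchor=9):
    """Shapes of rpn_locs and rpn_scores after permute + view, as tuples."""
    num_anchors = h * w * n_anchor          # one set of anchors per feature-map point
    return (n, num_anchors, 4), (n, num_anchors, 2)

def foreground_prob(bg_logit, fg_logit):
    """Two-class softmax; index 1 (foreground) is the score the RPN keeps."""
    m = max(bg_logit, fg_logit)             # subtract the max for numerical stability
    e_bg = math.exp(bg_logit - m)
    e_fg = math.exp(fg_logit - m)
    return e_fg / (e_bg + e_fg)

locs_shape, scores_shape = rpn_output_shapes(n=1, h=38, w=38)
print(locs_shape, scores_shape)             # (1, 12996, 4) (1, 12996, 2)
print(foreground_prob(0.0, 0.0))            # 0.5: equal logits give an even split
```

The anchor count grows with the feature-map area, which is why the proposal layer has to prune aggressively before the RoI head sees anything.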

vgg16.py

import torch

import torch.nn as nn

from torch.hub import load_state_dict_from_url

#--------------------------------------#

# VGG16 architecture

#--------------------------------------#

class VGG(nn.Module):

def __init__(self, features, num_classes=1000, init_weights=True):

super(VGG, self).__init__()

self.features = features

#--------------------------------------#

# Adaptive average pooling to 7x7

#--------------------------------------#

self.avgpool = nn.AdaptiveAvgPool2d((7, 7))

#--------------------------------------#

# Classifier head

#--------------------------------------#

self.classifier = nn.Sequential(

nn.Linear(512 * 7 * 7, 4096),

nn.ReLU(True),

nn.Dropout(),

nn.Linear(4096, 4096),

nn.ReLU(True),

nn.Dropout(),

nn.Linear(4096, num_classes),

)

if init_weights:

self._initialize_weights()

def forward(self, x):

#--------------------------------------#

# Feature extraction

#--------------------------------------#

x = self.features(x)

#--------------------------------------#

# Average pooling

#--------------------------------------#

x = self.avgpool(x)

#--------------------------------------#

# Flatten

#--------------------------------------#

x = torch.flatten(x, 1)

#--------------------------------------#

# Classifier head

#--------------------------------------#

x = self.classifier(x)

return x

def _initialize_weights(self):

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight, mode = 'fan_out', nonlinearity = 'relu')

if m.bias is not None:

nn.init.constant_(m.bias, 0)

elif isinstance(m, nn.BatchNorm2d):

nn.init.constant_(m.weight, 1)

nn.init.constant_(m.bias, 0)

elif isinstance(m, nn.Linear):

nn.init.normal_(m.weight, 0, 0.01)

nn.init.constant_(m.bias, 0)

'''

Assume the input image is (600, 600, 3). As the loop walks through cfg, the feature map changes as follows:

600,600,3 -> 600,600,64 -> 600,600,64 -> 300,300,64 -> 300,300,128 -> 300,300,128 -> 150,150,128 -> 150,150,256 -> 150,150,256 -> 150,150,256

-> 75,75,256 -> 75,75,512 -> 75,75,512 -> 75,75,512 -> 37,37,512 -> 37,37,512 -> 37,37,512 -> 37,37,512

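After the last convolution in cfg we have a 37,37,512 feature map. The final max-pooling entry in cfg would halve it again, but decom_vgg16 drops that layer.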

'''

cfg = [64, 64, 'M', 128, 128, 'M', 256, 256, 256, 'M', 512, 512, 512, 'M', 512, 512, 512, 'M']

#--------------------------------------#

# Feature extraction layers

#--------------------------------------#

def make_layers(cfg, batch_norm = False):

layers = []

in_channels = 3

for v in cfg:

if v == 'M':

layers += [nn.MaxPool2d(kernel_size = 2, stride = 2)]

else:

conv2d = nn.Conv2d(in_channels, v, kernel_size = 3, padding = 1)

if batch_norm:

layers += [conv2d, nn.BatchNorm2d(v), nn.ReLU(inplace = True)]

else:

layers += [conv2d, nn.ReLU(inplace = True)]

in_channels = v

return nn.Sequential(*layers)

def decom_vgg16(pretrained = False):

model = VGG(make_layers(cfg))

if pretrained:

state_dict = load_state_dict_from_url("https://download.pytorch.org/models/vgg16-397923af.pth", model_dir = "./model_data")

model.load_state_dict(state_dict)

#----------------------------------------------------------------------------#

# Take the feature extraction layers (all but the last max pooling), which yield a 37,37,512 feature map

#----------------------------------------------------------------------------#

features = list(model.features)[:30]

#----------------------------------------------------------------------------#

# Take the classifier and remove the Dropout layers and the final Linear layer

#----------------------------------------------------------------------------#

classifier = list(model.classifier)

del classifier[6]

del classifier[5]

del classifier[2]

features = nn.Sequential(*features)

classifier = nn.Sequential(*classifier)

return features, classifier
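The slice `[:30]` and the 37x37 output size can both be checked with simple arithmetic. The sketch below is pure Python and only assumes what the listing shows: every integer in `cfg` expands to a `Conv2d` plus a `ReLU` (with `batch_norm = False`), every `'M'` is one `MaxPool2d` with kernel 2 and stride 2, and the 3x3 convolutions with padding 1 preserve the spatial size. The helper names `count_layers` and `trace_size` are hypothetical.

```python
cfg = [64, 64, 'M', 128, 128, 'M', 256, 256, 256, 'M',
       512, 512, 512, 'M', 512, 512, 512, 'M']

def count_layers(cfg):
    """Each conv entry becomes Conv2d + ReLU (2 modules); 'M' is 1 module."""
    return sum(1 if v == 'M' else 2 for v in cfg)

def trace_size(size, cfg):
    """Spatial size after cfg: convs keep the size, 2x2 pools floor-halve it."""
    for v in cfg:
        if v == 'M':
            size //= 2
    return size

print(count_layers(cfg))          # 31 modules, so [:30] drops only the last MaxPool2d
print(trace_size(600, cfg[:-1]))  # 37
print(trace_size(600, cfg))       # 18
```

Dropping the last pooling layer keeps the overall stride at 16, which matches the `feat_stride` used when the anchors are generated.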

II. The utils folder

__init__.py

anchors.py

import numpy as np

#--------------------------------------------#

# Generate the base anchors

#--------------------------------------------#

def generate_anchor_base(base_size = 16, ratios = [0.5, 1, 2], anchor_scales = [8, 16, 32]):

anchor_base = np.zeros((len(ratios) * len(anchor_scales), 4), dtype = np.float32)

for i in range(len(ratios)):

for j in range(len(anchor_scales)):

h = base_size * anchor_scales[j] * np.sqrt(ratios[i])

w = base_size * anchor_scales[j] * np.sqrt(1. / ratios[i])

index = i * len(anchor_scales) + j

anchor_base[index, 0] = - h / 2.

anchor_base[index, 1] = - w / 2.

anchor_base[index, 2] = h / 2.

anchor_base[index, 3] = w / 2.

return anchor_base

#--------------------------------------------#

# Shift the base anchors so that they cover every feature-map point

#--------------------------------------------#

def _enumerate_shifted_anchor(anchor_base, feat_stride, height, width):

#---------------------------------#

# Compute the grid point coordinates

#---------------------------------#

shift_x = np.arange(0, width * feat_stride, feat_stride)

shift_y = np.arange(0, height * feat_stride, feat_stride)

shift_x, shift_y = np.meshgrid(shift_x, shift_y)

shift = np.stack((shift_x.ravel(), shift_y.ravel(), shift_x.ravel(), shift_y.ravel(),), axis=1)

#---------------------------------#

# The 9 anchors at each grid point

#---------------------------------#

A = anchor_base.shape[0]

K = shift.shape[0]

anchor = anchor_base.reshape((1, A, 4)) + shift.reshape((K, 1, 4))

#---------------------------------#

# All anchors

#---------------------------------#

anchor = anchor.reshape((K * A, 4)).astype(np.float32)

return anchor

if __name__ == "__main__":

import matplotlib.pyplot as plt

nine_anchors = generate_anchor_base()

print(nine_anchors)

height, width, feat_stride = 38,38,16

anchors_all = _enumerate_shifted_anchor(nine_anchors, feat_stride, height, width)

print(np.shape(anchors_all))

fig = plt.figure()

ax = fig.add_subplot(111)

plt.ylim(-300,900)

plt.xlim(-300,900)

shift_x = np.arange(0, width * feat_stride, feat_stride)

shift_y = np.arange(0, height * feat_stride, feat_stride)

shift_x, shift_y = np.meshgrid(shift_x, shift_y)

plt.scatter(shift_x,shift_y)

box_widths = anchors_all[:,2]-anchors_all[:,0]

box_heights = anchors_all[:,3]-anchors_all[:,1]

for i in [108, 109, 110, 111, 112, 113, 114, 115, 116]:

rect = plt.Rectangle([anchors_all[i, 0],anchors_all[i, 1]],box_widths[i],box_heights[i],color="r",fill=False)

ax.add_patch(rect)

plt.show()
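The nine base anchors come from three ratios times three scales. As a rough illustration, the pure-Python sketch below reproduces only the size computation from `generate_anchor_base` (keeping the listing's variable names `h` and `w`) and shows that the ratio changes the shape but not the area. The helper name `anchor_sizes` is hypothetical.

```python
import math

def anchor_sizes(base_size=16, ratios=(0.5, 1, 2), anchor_scales=(8, 16, 32)):
    """Return (h, w) pairs in the same row order as generate_anchor_base."""
    sizes = []
    for ratio in ratios:
        for scale in anchor_scales:
            h = base_size * scale * math.sqrt(ratio)
            w = base_size * scale * math.sqrt(1.0 / ratio)
            sizes.append((h, w))
    return sizes

sizes = anchor_sizes()
for h, w in sizes:
    # h * w equals (base_size * scale) ** 2 whatever the ratio is
    print(f"h={h:7.2f}  w={w:7.2f}  area={h * w:10.1f}")

print(len(sizes) * 38 * 38)  # 12996 anchors on a 38x38 feature map
```

On the 37x37 map from the VGG16 trace, the same arithmetic gives 37 * 37 * 9 = 12321 anchors.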

callbacks.py

import os

import matplotlib

import torch

matplotlib.use('Agg')

from matplotlib import pyplot as plt

import scipy.signal

import shutil

import numpy as np

from PIL import Image

from torch.utils.tensorboard import SummaryWriter

from tqdm import tqdm

from .utils import cvtColor, resize_image, preprocess_input, get_new_img_size

from .utils_bbox import DecodeBox

from .utils_map import get_coco_map, get_map

class LossHistory():

def __init__(self, log_dir, model, input_shape):

self.log_dir = log_dir

self.losses = []

self.val_loss = []

os.makedirs(self.log_dir)

self.writer = SummaryWriter(self.log_dir)

# try:

# dummy_input = torch.randn(2, 3, input_shape[0], input_shape[1])

# self.writer.add_graph(model, dummy_input)

# except:

# pass

def append_loss(self, epoch, loss, val_loss):

if not os.path.exists(self.log_dir):

os.makedirs(self.log_dir)

self.losses.append(loss)

self.val_loss.append(val_loss)

with open(os.path.join(self.log_dir, "epoch_loss.txt"), 'a') as f:

f.write(str(loss))

f.write("\n")

with open(os.path.join(self.log_dir, "epoch_val_loss.txt"), 'a') as f:

f.write(str(val_loss))

f.write("\n")

self.writer.add_scalar('loss', loss, epoch)

self.writer.add_scalar('val_loss', val_loss, epoch)

self.loss_plot()

def loss_plot(self):

iters = range(len(self.losses))

plt.figure()

plt.plot(iters, self.losses, 'red', linewidth = 2, label='train loss')

plt.plot(iters, self.val_loss, 'coral', linewidth = 2, label='val loss')

try:

if len(self.losses) < 25:

num = 5

else:

num = 15

plt.plot(iters, scipy.signal.savgol_filter(self.losses, num, 3), 'green', linestyle = '--', linewidth = 2, label='smooth train loss')

plt.plot(iters, scipy.signal.savgol_filter(self.val_loss, num, 3), '#8B4513', linestyle = '--', linewidth = 2, label='smooth val loss')

except:

pass

plt.grid(True)

plt.xlabel('Epoch')

plt.ylabel('Loss')

plt.legend(loc="upper right")

plt.savefig(os.path.join(self.log_dir, "epoch_loss.png"))

plt.cla()

plt.close("all")

class EvalCallback():

def __init__(self, net, input_shape, class_names, num_classes, val_lines, log_dir, cuda, \

map_out_path=".temp_map_out", max_boxes=100, confidence=0.05, nms_iou=0.5, letterbox_image=True, MINOVERLAP=0.5, eval_flag=True, period=1):

super(EvalCallback, self).__init__()

self.net = net

self.input_shape = input_shape

self.class_names = class_names

self.num_classes = num_classes

self.val_lines = val_lines

self.log_dir = log_dir

self.cuda = cuda

self.map_out_path = map_out_path

self.max_boxes = max_boxes

self.confidence = confidence

self.nms_iou = nms_iou

self.letterbox_image = letterbox_image

self.MINOVERLAP = MINOVERLAP

self.eval_flag = eval_flag

self.period = period

self.std = torch.Tensor([0.1, 0.1, 0.2, 0.2]).repeat(self.num_classes + 1)[None]

if self.cuda:

self.std = self.std.cuda()

self.bbox_util = DecodeBox(self.std, self.num_classes)

self.maps = [0]

self.epoches = [0]

if self.eval_flag:

with open(os.path.join(self.log_dir, "epoch_map.txt"), 'a') as f:

f.write(str(0))

f.write("\n")

#---------------------------------------------------#

# Run detection on one image and write its detection-results file

#---------------------------------------------------#

def get_map_txt(self, image_id, image, class_names, map_out_path):

f = open(os.path.join(map_out_path, "detection-results/"+image_id+".txt"),"w")

#---------------------------------------------------#

# Get the height and width of the input image

#---------------------------------------------------#

image_shape = np.array(np.shape(image)[0:2])

input_shape = get_new_img_size(image_shape[0], image_shape[1])

#---------------------------------------------------------#

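# Convert the image to RGB here so that grayscale images do not raise errors during prediction.

# The code only supports prediction on RGB images; every other image type is converted to RGB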

#---------------------------------------------------------#

image = cvtColor(image)

#---------------------------------------------------------#

# Resize the original image so that its shorter side is 600

#---------------------------------------------------------#

image_data = resize_image(image, [input_shape[1], input_shape[0]])

#---------------------------------------------------------#

# Add the batch dimension

#---------------------------------------------------------#

image_data = np.expand_dims(np.transpose(preprocess_input(np.array(image_data, dtype='float32')), (2, 0, 1)), 0)

with torch.no_grad():

images = torch.from_numpy(image_data)

if self.cuda:

images = images.cuda()

roi_cls_locs, roi_scores, rois, _ = self.net(images)

#-------------------------------------------------------------#

# Decode the proposals with the classifier's predictions to obtain the predicted boxes

#-------------------------------------------------------------#

results = self.bbox_util.forward(roi_cls_locs, roi_scores, rois, image_shape, input_shape,

nms_iou = self.nms_iou, confidence = self.confidence)

#--------------------------------------#

# If no objects were detected, close the (empty) results file and return

#--------------------------------------#

if len(results[0]) <= 0:

f.close()

return

top_label = np.array(results[0][:, 5], dtype = 'int32')

top_conf = results[0][:, 4]

top_boxes = results[0][:, :4]

top_100 = np.argsort(top_conf)[::-1][:self.max_boxes]

top_boxes = top_boxes[top_100]

top_conf = top_conf[top_100]

top_label = top_label[top_100]

for i, c in list(enumerate(top_label)):

predicted_class = self.class_names[int(c)]

box = top_boxes[i]

score = str(top_conf[i])

top, left, bottom, right = box

if predicted_class not in class_names:

continue

f.write("%s %s %s %s %s %s\n" % (predicted_class, score[:6], str(int(left)), str(int(top)), str(int(right)),str(int(bottom))))

f.close()

return

def on_epoch_end(self, epoch):

if epoch % self.period == 0 and self.eval_flag:

if not os.path.exists(self.map_out_path):

os.makedirs(self.map_out_path)

if not os.path.exists(os.path.join(self.map_out_path, "ground-truth")):

os.makedirs(os.path.join(self.map_out_path, "ground-truth"))

if not os.path.exists(os.path.join(self.map_out_path, "detection-results")):

os.makedirs(os.path.join(self.map_out_path, "detection-results"))

print("Get map.")

for annotation_line in tqdm(self.val_lines):

line = annotation_line.split()

image_id = os.path.basename(line[0]).split('.')[0]

#------------------------------#

# Open the image (conversion to RGB happens in get_map_txt)

#------------------------------#

image = Image.open(line[0])

#------------------------------#

# Parse the ground-truth boxes

#------------------------------#

gt_boxes = np.array([np.array(list(map(int,box.split(',')))) for box in line[1:]])

#------------------------------#

# Write the detection-results txt

#------------------------------#

self.get_map_txt(image_id, image, self.class_names, self.map_out_path)

#------------------------------#

# Write the ground-truth txt

#------------------------------#

with open(os.path.join(self.map_out_path, "ground-truth/"+image_id+".txt"), "w") as new_f:

for box in gt_boxes:

left, top, right, bottom, obj = box

obj_name = self.class_names[obj]

new_f.write("%s %s %s %s %s\n" % (obj_name, left, top, right, bottom))

print("Calculate Map.")

try:

temp_map = get_coco_map(class_names = self.class_names, path = self.map_out_path)[1]

except:

temp_map = get_map(self.MINOVERLAP, False, path = self.map_out_path)

self.maps.append(temp_map)

self.epoches.append(epoch)

with open(os.path.join(self.log_dir, "epoch_map.txt"), 'a') as f:

f.write(str(temp_map))

f.write("\n")

plt.figure()

plt.plot(self.epoches, self.maps, 'red', linewidth = 2, label='train map')

plt.grid(True)

plt.xlabel('Epoch')

plt.ylabel('Map %s'%str(self.MINOVERLAP))

plt.title('A Map Curve')

plt.legend(loc="upper right")

plt.savefig(os.path.join(self.log_dir, "epoch_map.png"))

plt.cla()

plt.close("all")

print("Get map done.")

shutil.rmtree(self.map_out_path)
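The two text files that `EvalCallback` writes per image use slightly different line layouts: detections carry a score and come from boxes stored as top, left, bottom, right, while ground-truth lines are written directly as left, top, right, bottom. The sketch below mirrors the two format strings from the listing in plain Python. The function names `detection_line` and `ground_truth_line` are hypothetical.

```python
def detection_line(class_name, score, top, left, bottom, right):
    """Mirror a detection-results line: name, truncated score, left top right bottom."""
    return "%s %s %s %s %s %s\n" % (
        class_name, str(score)[:6],
        str(int(left)), str(int(top)), str(int(right)), str(int(bottom)))

def ground_truth_line(class_name, left, top, right, bottom):
    """Mirror a ground-truth line: name, then left top right bottom."""
    return "%s %s %s %s %s\n" % (class_name, left, top, right, bottom)

print(detection_line("cat", 0.98765432, 20.2, 10.7, 220.5, 110.9), end="")
# cat 0.9876 10 20 110 220
print(ground_truth_line("cat", 12, 18, 108, 215), end="")
# cat 12 18 108 215
```

Note that the score is truncated as a string to six characters rather than rounded, and the box coordinates are truncated to integers.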

dataloader.py

import cv2

import numpy as np

import torch

from PIL import Image

from torch.utils.data.dataset import Dataset

from utils.utils import cvtColor, preprocess_input

class FRCNNDataset(Dataset):

def __init__(self, annotation_lines, input_shape = [600, 600], train = True):

self.annotation_lines = annotation_lines

self.length = len(annotation_lines)

self.input_shape = input_shape

self.train = train

def __len__(self):

return self.length

def __getitem__(self, index):

index = index % self.length

#---------------------------------------------------#

# Apply random data augmentation during training

# Do not apply random data augmentation during validation

#---------------------------------------------------#

image, y = self.get_random_data(self.annotation_lines[index], self.input_shape[0:2], random = self.train)

image = np.transpose(preprocess_input(np.array(image, dtype=np.float32)), (2, 0, 1))

box_data = np.zeros((len(y), 5))

if len(y) > 0:

box_data[:len(y)] = y

box = box_data[:, :4]

label = box_data[:, -1]

return image, box, label

def rand(self, a=0, b=1):

return np.random.rand()*(b-a) + a

def get_random_data(self, annotation_line, input_shape, jitter=.3, hue=.1, sat=0.7, val=0.4, random=True):

line = annotation_line.split()

#------------------------------#

# Read the image and convert it to RGB

#------------------------------#

image = Image.open(line[0])

image = cvtColor(image)

#------------------------------#

# Get the image size and the target size

#------------------------------#

iw, ih = image.size

h, w = input_shape

#------------------------------#

# Parse the ground-truth boxes

#------------------------------#

box = np.array([np.array(list(map(int,box.split(',')))) for box in line[1:]])

if not random:

scale = min(w/iw, h/ih)

nw = int(iw*scale)

nh = int(ih*scale)

dx = (w-nw)//2

dy = (h-nh)//2

#---------------------------------#

# Pad the remaining area of the image with gray bars

#---------------------------------#

image = image.resize((nw,nh), Image.BICUBIC)

new_image = Image.new('RGB', (w,h), (128,128,128))

new_image.paste(image, (dx, dy))

image_data = np.array(new_image, np.float32)

#---------------------------------#

# Adjust the ground-truth boxes

#---------------------------------#

if len(box)>0:

np.random.shuffle(box)

box[:, [0,2]] = box[:, [0,2]]*nw/iw + dx

box[:, [1,3]] = box[:, [1,3]]*nh/ih + dy

box[:, 0:2][box[:, 0:2]<0] = 0

box[:, 2][box[:, 2]>w] = w

box[:, 3][box[:, 3]>h] = h

box_w = box[:, 2] - box[:, 0]

box_h = box[:, 3] - box[:, 1]

box = box[np.logical_and(box_w>1, box_h>1)] # discard invalid box

return image_data, box

#------------------------------------------#

# Rescale the image and distort its aspect ratio

#------------------------------------------#

new_ar = iw/ih * self.rand(1-jitter,1+jitter) / self.rand(1-jitter,1+jitter)

scale = self.rand(.25, 2)

if new_ar < 1:

nh = int(scale*h)

nw = int(nh*new_ar)

else:

nw = int(scale*w)

nh = int(nw/new_ar)

image = image.resize((nw,nh), Image.BICUBIC)

#------------------------------------------#

# Pad the remaining area of the image with gray bars

#------------------------------------------#

dx = int(self.rand(0, w-nw))

dy = int(self.rand(0, h-nh))

new_image = Image.new('RGB', (w,h), (128,128,128))

new_image.paste(image, (dx, dy))

image = new_image

#------------------------------------------#

# Flip the image

#------------------------------------------#

flip = self.rand()<.5

if flip: image = image.transpose(Image.FLIP_LEFT_RIGHT)

image_data = np.array(image, np.uint8)

#---------------------------------#

# Apply a color-space transform to the image

# Compute the parameters of the transform

#---------------------------------#

r = np.random.uniform(-1, 1, 3) * [hue, sat, val] + 1

#---------------------------------#

# Convert the image to HSV

#---------------------------------#

hue, sat, val = cv2.split(cv2.cvtColor(image_data, cv2.COLOR_RGB2HSV))

dtype = image_data.dtype

#---------------------------------#

# Apply the transform

#---------------------------------#

x = np.arange(0, 256, dtype=r.dtype)

lut_hue = ((x * r[0]) % 180).astype(dtype)

lut_sat = np.clip(x * r[1], 0, 255).astype(dtype)

lut_val = np.clip(x * r[2], 0, 255).astype(dtype)

image_data = cv2.merge((cv2.LUT(hue, lut_hue), cv2.LUT(sat, lut_sat), cv2.LUT(val, lut_val)))

image_data = cv2.cvtColor(image_data, cv2.COLOR_HSV2RGB)

#---------------------------------#

# Adjust the ground-truth boxes

#---------------------------------#

if len(box)>0:

np.random.shuffle(box)

box[:, [0,2]] = box[:, [0,2]]*nw/iw + dx

box[:, [1,3]] = box[:, [1,3]]*nh/ih + dy

if flip: box[:, [0,2]] = w - box[:, [2,0]]

box[:, 0:2][box[:, 0:2]<0] = 0

box[:, 2][box[:, 2]>w] = w

box[:, 3][box[:, 3]>h] = h

box_w = box[:, 2] - box[:, 0]

box_h = box[:, 3] - box[:, 1]

box = box[np.logical_and(box_w>1, box_h>1)]

return image_data, box

# collate_fn for use with DataLoader

def frcnn_dataset_collate(batch):

images = []

bboxes = []

labels = []

for img, box, label in batch:

images.append(img)

bboxes.append(box)

labels.append(label)

images = torch.from_numpy(np.array(images))

return images, bboxes, labels
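The validation branch of `get_random_data` (the `if not random` path) letterboxes the image and moves the ground-truth boxes with it. The pure-Python sketch below isolates just that box arithmetic for a single box, under the same conventions as the listing: the image size is `(iw, ih)` and the target shape is `(h, w)`. The function name `letterbox_box` is hypothetical, and clipping and the removal of tiny boxes are left out.

```python
def letterbox_box(box, image_size, input_shape):
    """Map (x1, y1, x2, y2) from the original image into the padded input.

    image_size is (iw, ih); input_shape is (h, w), as in get_random_data.
    """
    iw, ih = image_size
    h, w = input_shape
    scale = min(w / iw, h / ih)              # keep the aspect ratio
    nw, nh = int(iw * scale), int(ih * scale)
    dx, dy = (w - nw) // 2, (h - nh) // 2    # gray bars are split evenly
    x1, y1, x2, y2 = box
    return (x1 * nw / iw + dx, y1 * nh / ih + dy,
            x2 * nw / iw + dx, y2 * nh / ih + dy)

print(letterbox_box((100, 100, 500, 300), image_size=(1200, 600), input_shape=(600, 600)))
# (50.0, 200.0, 250.0, 300.0)
```

Here a 1200x600 image is scaled by 0.5 to 600x300 and centred vertically, so every y coordinate is halved and then shifted down by the 150-pixel gray bar.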

utils_bbox.py

import numpy as np

import torch

from torch.nn import functional as F

from torchvision.ops import nms

def loc2bbox(src_bbox, loc):

if src_bbox.size()[0] == 0:

return torch.zeros((0, 4), dtype=loc.dtype, device=loc.device)

src_width = torch.unsqueeze(src_bbox[:, 2] - src_bbox[:, 0], -1)

src_height = torch.unsqueeze(src_bbox[:, 3] - src_bbox[:, 1], -1)

src_ctr_x = torch.unsqueeze(src_bbox[:, 0], -1) + 0.5 * src_width

src_ctr_y = torch.unsqueeze(src_bbox[:, 1], -1) + 0.5 * src_height

dx = loc[:, 0::4]

dy = loc[:, 1::4]

dw = loc[:, 2::4]

dh = loc[:, 3::4]

ctr_x = dx * src_width + src_ctr_x

ctr_y = dy * src_height + src_ctr_y

w = torch.exp(dw) * src_width

h = torch.exp(dh) * src_height

dst_bbox = torch.zeros_like(loc)

dst_bbox[:, 0::4] = ctr_x - 0.5 * w

dst_bbox[:, 1::4] = ctr_y - 0.5 * h

dst_bbox[:, 2::4] = ctr_x + 0.5 * w

dst_bbox[:, 3::4] = ctr_y + 0.5 * h

return dst_bbox

class DecodeBox():

def __init__(self, std, num_classes):

self.std = std

self.num_classes = num_classes + 1

def frcnn_correct_boxes(self, box_xy, box_wh, input_shape, image_shape):

#-----------------------------------------------------------------#

# Put the y axis first so the boxes can be multiplied directly by the image height and width

#-----------------------------------------------------------------#

box_yx = box_xy[..., ::-1]

box_hw = box_wh[..., ::-1]

input_shape = np.array(input_shape)

image_shape = np.array(image_shape)

box_mins = box_yx - (box_hw / 2.)

box_maxes = box_yx + (box_hw / 2.)

boxes = np.concatenate([box_mins[..., 0:1], box_mins[..., 1:2], box_maxes[..., 0:1], box_maxes[..., 1:2]], axis=-1)

boxes *= np.concatenate([image_shape, image_shape], axis=-1)

return boxes

def forward(self, roi_cls_locs, roi_scores, rois, image_shape, input_shape, nms_iou = 0.3, confidence = 0.5):

results = []

bs = len(roi_cls_locs)

#--------------------------------#

# batch_size, num_rois, 4

#--------------------------------#

rois = rois.view((bs, -1, 4))

#----------------------------------------------------------------------------------------------------------------#

# Process each image in turn. predict.py feeds a single image, so the loop over range(bs) runs only once

#----------------------------------------------------------------------------------------------------------------#

for i in range(bs):

#----------------------------------------------------------#

# Scale the regression outputs by std (they are reshaped below)

#----------------------------------------------------------#

roi_cls_loc = roi_cls_locs[i] * self.std

#----------------------------------------------------------#

# The first dimension is the number of proposals, the second is the class,

# and the third holds the adjustment parameters for that class

#----------------------------------------------------------#

roi_cls_loc = roi_cls_loc.view([-1, self.num_classes, 4])

#-------------------------------------------------------------#

# Adjust the proposals with the classifier's predictions to obtain the predicted boxes

# num_rois, 4 -> num_rois, 1, 4 -> num_rois, num_classes, 4

#-------------------------------------------------------------#

roi = rois[i].view((-1, 1, 4)).expand_as(roi_cls_loc)

cls_bbox = loc2bbox(roi.contiguous().view((-1, 4)), roi_cls_loc.contiguous().view((-1, 4)))

cls_bbox = cls_bbox.view([-1, (self.num_classes), 4])

#-------------------------------------------------------------#

# Normalize the predicted boxes to the 0-1 range

#-------------------------------------------------------------#

cls_bbox[..., [0, 2]] = (cls_bbox[..., [0, 2]]) / input_shape[1]

cls_bbox[..., [1, 3]] = (cls_bbox[..., [1, 3]]) / input_shape[0]

roi_score = roi_scores[i]

prob = F.softmax(roi_score, dim=-1)

results.append([])

for c in range(1, self.num_classes):

#--------------------------------#

# Take the confidences of all boxes for this class

# and check whether they exceed the threshold

#--------------------------------#

c_confs = prob[:, c]

c_confs_m = c_confs > confidence

if len(c_confs[c_confs_m]) > 0:

#-----------------------------------------#

# Keep the boxes whose score is above confidence

#-----------------------------------------#

boxes_to_process = cls_bbox[c_confs_m, c]

confs_to_process = c_confs[c_confs_m]

keep = nms(

boxes_to_process,

confs_to_process,

nms_iou

)

#-----------------------------------------#

# Keep the boxes that survive non-maximum suppression

#-----------------------------------------#

good_boxes = boxes_to_process[keep]

confs = confs_to_process[keep][:, None]

labels = (c - 1) * torch.ones((len(keep), 1)).cuda() if confs.is_cuda else (c - 1) * torch.ones((len(keep), 1))

#-----------------------------------------#

# Concatenate the box coordinates, the confidence and the label

#-----------------------------------------#

c_pred = torch.cat((good_boxes, confs, labels), dim=1).cpu().numpy()

# Append to results

results[-1].extend(c_pred)

if len(results[-1]) > 0:

results[-1] = np.array(results[-1])

box_xy, box_wh = (results[-1][:, 0:2] + results[-1][:, 2:4])/2, results[-1][:, 2:4] - results[-1][:, 0:2]

results[-1][:, :4] = self.frcnn_correct_boxes(box_xy, box_wh, input_shape, image_shape)

return results
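The decoding in `loc2bbox` is easier to follow on a single box. The following pure-Python sketch applies the same formulas to one `(x1, y1, x2, y2)` box and one `(dx, dy, dw, dh)` offset. It is an illustration only; the function name `decode_box` is hypothetical, and the real function works on whole tensors.

```python
import math

def decode_box(src_box, loc):
    """Apply (dx, dy, dw, dh) to an (x1, y1, x2, y2) box, as loc2bbox does per row."""
    x1, y1, x2, y2 = src_box
    dx, dy, dw, dh = loc
    src_w, src_h = x2 - x1, y2 - y1
    src_cx, src_cy = x1 + 0.5 * src_w, y1 + 0.5 * src_h
    cx = dx * src_w + src_cx          # centre shifts are relative to the box size
    cy = dy * src_h + src_cy
    w = math.exp(dw) * src_w          # width and height are scaled in log space
    h = math.exp(dh) * src_h
    return (cx - 0.5 * w, cy - 0.5 * h, cx + 0.5 * w, cy + 0.5 * h)

print(decode_box((0, 0, 10, 20), (0, 0, 0, 0)))   # (0.0, 0.0, 10.0, 20.0)
```

A zero offset returns the source box unchanged, which is why small initial weights on the regression layer start training from the proposals themselves.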

utils_fit.py

import os

import torch

from tqdm import tqdm

from utils.utils import get_lr

def fit_one_epoch(model, train_util, loss_history, eval_callback, optimizer, epoch, epoch_step, epoch_step_val, gen, gen_val, Epoch, cuda, fp16, scaler, save_period, save_dir):

total_loss = 0

rpn_loc_loss = 0

rpn_cls_loss = 0

roi_loc_loss = 0

roi_cls_loss = 0

val_loss = 0

print('Start Train')

with tqdm(total=epoch_step,desc=f'Epoch {epoch + 1}/{Epoch}',postfix=dict,mininterval=0.3) as pbar:

for iteration, batch in enumerate(gen):

if iteration >= epoch_step:

break

images, boxes, labels = batch[0], batch[1], batch[2]

with torch.no_grad():

if cuda:

images = images.cuda()

rpn_loc, rpn_cls, roi_loc, roi_cls, total = train_util.train_step(images, boxes, labels, 1, fp16, scaler)

total_loss += total.item()

rpn_loc_loss += rpn_loc.item()

rpn_cls_loss += rpn_cls.item()

roi_loc_loss += roi_loc.item()

roi_cls_loss += roi_cls.item()

pbar.set_postfix(**{'total_loss' : total_loss / (iteration + 1),

'rpn_loc' : rpn_loc_loss / (iteration + 1),

'rpn_cls' : rpn_cls_loss / (iteration + 1),

'roi_loc' : roi_loc_loss / (iteration + 1),

'roi_cls' : roi_cls_loss / (iteration + 1),

'lr' : get_lr(optimizer)})

pbar.update(1)

print('Finish Train')

print('Start Validation')

with tqdm(total=epoch_step_val, desc=f'Epoch {epoch + 1}/{Epoch}',postfix=dict,mininterval=0.3) as pbar:

for iteration, batch in enumerate(gen_val):

if iteration >= epoch_step_val:

break

images, boxes, labels = batch[0], batch[1], batch[2]

with torch.no_grad():

if cuda:

images = images.cuda()

train_util.optimizer.zero_grad()

_, _, _, _, val_total = train_util.forward(images, boxes, labels, 1)

val_loss += val_total.item()

pbar.set_postfix(**{'val_loss' : val_loss / (iteration + 1)})

pbar.update(1)

print('Finish Validation')

loss_history.append_loss(epoch + 1, total_loss / epoch_step, val_loss / epoch_step_val)

eval_callback.on_epoch_end(epoch + 1)

print('Epoch:'+ str(epoch + 1) + '/' + str(Epoch))

print('Total Loss: %.3f || Val Loss: %.3f ' % (total_loss / epoch_step, val_loss / epoch_step_val))

#-----------------------------------------------#

# Save the weights

#-----------------------------------------------#

if (epoch + 1) % save_period == 0 or epoch + 1 == Epoch:

torch.save(model.state_dict(), os.path.join(save_dir, 'ep%03d-loss%.3f-val_loss%.3f.pth' % (epoch + 1, total_loss / epoch_step, val_loss / epoch_step_val)))

if len(loss_history.val_loss) <= 1 or (val_loss / epoch_step_val) <= min(loss_history.val_loss):

print('Save best model to best_epoch_weights.pth')

torch.save(model.state_dict(), os.path.join(save_dir, "best_epoch_weights.pth"))

torch.save(model.state_dict(), os.path.join(save_dir, "last_epoch_weights.pth"))
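The checkpointing rules at the end of `fit_one_epoch` can be summarized in three small functions. This is a sketch in plain Python with hypothetical helper names (`checkpoint_name`, `should_save_periodic`, `is_best`); it only restates the conditions and the filename pattern from the listing, with `epoch` already 1-based.

```python
def checkpoint_name(epoch, train_loss, val_loss):
    """Same filename pattern as the periodic checkpoint (epoch is 1-based)."""
    return 'ep%03d-loss%.3f-val_loss%.3f.pth' % (epoch, train_loss, val_loss)

def should_save_periodic(epoch, save_period, total_epochs):
    """Save every save_period epochs and always on the final epoch."""
    return epoch % save_period == 0 or epoch == total_epochs

def is_best(val_history, current):
    """val_history already contains current, because append_loss runs first."""
    return len(val_history) <= 1 or current <= min(val_history)

print(checkpoint_name(5, 0.1234, 0.5))       # ep005-loss0.123-val_loss0.500.pth
print(should_save_periodic(7, 5, 7))         # True
print(is_best([0.9, 0.7, 0.8], 0.8))         # False
```

Because the current validation loss is appended to the history before the comparison, the `<=` test succeeds exactly when the current epoch ties or beats every earlier one.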

utils_map.py

import glob

import json

import math

import operator

import os

import shutil

import sys

try:

from pycocotools.coco import COCO

from pycocotools.cocoeval import COCOeval

except:

pass

import cv2

import matplotlib

matplotlib.use('Agg')

from matplotlib import pyplot as plt

import numpy as np

'''

    0,0 ------> x (width)
     |
     |  (Left,Top)
     |      *_________
     |      |         |
            |         |
     y      |_________|
  (height)            *
                (Right,Bottom)

'''

def log_average_miss_rate(precision, fp_cumsum, num_images):

"""

log-average miss rate:

Calculated by averaging miss rates at 9 evenly spaced FPPI points

between 1e-2 and 1e0, in log-space.

output:

lamr | log-average miss rate

mr | miss rate

fppi | false positives per image

references:

[1] Dollar, Piotr, et al. "Pedestrian Detection: An Evaluation of the

State of the Art." Pattern Analysis and Machine Intelligence, IEEE

Transactions on 34.4 (2012): 743 - 761.

"""

if precision.size == 0:

lamr = 0

mr = 1

fppi = 0

return lamr, mr, fppi

fppi = fp_cumsum / float(num_images)

mr = (1 - precision)

fppi_tmp = np.insert(fppi, 0, -1.0)

mr_tmp = np.insert(mr, 0, 1.0)

ref = np.logspace(-2.0, 0.0, num = 9)

for i, ref_i in enumerate(ref):

j = np.where(fppi_tmp <= ref_i)[-1][-1]

ref[i] = mr_tmp[j]

lamr = math.exp(np.mean(np.log(np.maximum(1e-10, ref))))

return lamr, mr, fppi

"""

throw error and exit

"""

def error(msg):

print(msg)

sys.exit(0)

"""

check if the number is a float between 0.0 and 1.0

"""

def is_float_between_0_and_1(value):

try:

val = float(value)

if val > 0.0 and val < 1.0:

return True

else:

return False

except ValueError:

return False

"""

Calculate the AP given the recall and precision array

1st) We compute a version of the measured precision/recall curve with

precision monotonically decreasing

2nd) We compute the AP as the area under this curve by numerical integration.

"""

def voc_ap(rec, prec):

"""

--- Official matlab code VOC2012---

mrec=[0 ; rec ; 1];

mpre=[0 ; prec ; 0];

for i=numel(mpre)-1:-1:1

mpre(i)=max(mpre(i),mpre(i+1));

end

i=find(mrec(2:end)~=mrec(1:end-1))+1;

ap=sum((mrec(i)-mrec(i-1)).*mpre(i));

"""

rec.insert(0, 0.0) # insert 0.0 at beginning of list

rec.append(1.0) # insert 1.0 at end of list

mrec = rec[:]

prec.insert(0, 0.0) # insert 0.0 at beginning of list

prec.append(0.0) # insert 0.0 at end of list

mpre = prec[:]

"""

This part makes the precision monotonically decreasing

(goes from the end to the beginning)

matlab: for i=numel(mpre)-1:-1:1

mpre(i)=max(mpre(i),mpre(i+1));

"""

for i in range(len(mpre)-2, -1, -1):

mpre[i] = max(mpre[i], mpre[i+1])

"""

This part creates a list of indexes where the recall changes

matlab: i=find(mrec(2:end)~=mrec(1:end-1))+1;

"""

i_list = []

for i in range(1, len(mrec)):

if mrec[i] != mrec[i-1]:

i_list.append(i) # if it was matlab would be i + 1

"""

The Average Precision (AP) is the area under the curve

(numerical integration)

matlab: ap=sum((mrec(i)-mrec(i-1)).*mpre(i));

"""

ap = 0.0

for i in i_list:

ap += ((mrec[i]-mrec[i-1])*mpre[i])

return ap, mrec, mpre
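A small worked example helps to check the two steps described above (monotone envelope, then summed rectangles). The sketch below is a side-effect-free variant with the hypothetical name `voc_ap_pure`; unlike `voc_ap`, it copies its inputs instead of inserting into the caller's lists, and it returns only the AP value.

```python
def voc_ap_pure(rec, prec):
    """Area under the monotone precision envelope; does not modify its inputs."""
    mrec = [0.0] + list(rec) + [1.0]
    mpre = [0.0] + list(prec) + [0.0]
    # make precision non-increasing, sweeping from the end to the start
    for i in range(len(mpre) - 2, -1, -1):
        mpre[i] = max(mpre[i], mpre[i + 1])
    # sum rectangle areas wherever recall changes
    ap = 0.0
    for i in range(1, len(mrec)):
        if mrec[i] != mrec[i - 1]:
            ap += (mrec[i] - mrec[i - 1]) * mpre[i]
    return ap

print(voc_ap_pure([1.0], [1.0]))  # 1.0
```

With `rec = [0.5, 0.5, 1.0]` and `prec = [1.0, 0.5, 2/3]`, the envelope lifts the dip at 0.5 up to 2/3, and the AP is 0.5 * 1.0 + 0.5 * 2/3, roughly 0.833.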

"""

Convert the lines of a file to a list

"""

def file_lines_to_list(path):

# open txt file lines to a list

with open(path) as f:

content = f.readlines()

# remove whitespace characters like `\n` at the end of each line

content = [x.strip() for x in content]

return content

"""

Draws text in image

"""

def draw_text_in_image(img, text, pos, color, line_width):

font = cv2.FONT_HERSHEY_PLAIN

fontScale = 1

lineType = 1

bottomLeftCornerOfText = pos

cv2.putText(img, text,

bottomLeftCornerOfText,

font,

fontScale,

color,

lineType)

text_width, _ = cv2.getTextSize(text, font, fontScale, lineType)[0]

return img, (line_width + text_width)

"""

Plot - adjust axes

"""

def adjust_axes(r, t, fig, axes):

# get text width for re-scaling

bb = t.get_window_extent(renderer=r)

text_width_inches = bb.width / fig.dpi

# get axis width in inches

current_fig_width = fig.get_figwidth()

new_fig_width = current_fig_width + text_width_inches

proportion = new_fig_width / current_fig_width

# get axis limit

x_lim = axes.get_xlim()

axes.set_xlim([x_lim[0], x_lim[1]*proportion])

"""

Draw plot using Matplotlib

"""

def draw_plot_func(dictionary, n_classes, window_title, plot_title, x_label, output_path, to_show, plot_color, true_p_bar):

# sort the dictionary by increasing value, into a list of tuples

sorted_dic_by_value = sorted(dictionary.items(), key=operator.itemgetter(1))

# unpacking the list of tuples into two lists

sorted_keys, sorted_values = zip(*sorted_dic_by_value)

#

if true_p_bar != "":

"""

Special case to draw in:

- green -> TP: True Positives (object detected and matches ground-truth)

- red -> FP: False Positives (object detected but does not match ground-truth)

- orange -> FN: False Negatives (object not detected but present in the ground-truth)

"""

fp_sorted = []

tp_sorted = []

for key in sorted_keys:

fp_sorted.append(dictionary[key] - true_p_bar[key])

tp_sorted.append(true_p_bar[key])

plt.barh(range(n_classes), fp_sorted, align='center', color='crimson', label='False Positive')

plt.barh(range(n_classes), tp_sorted, align='center', color='forestgreen', label='True Positive', left=fp_sorted)

# add legend

plt.legend(loc='lower right')

"""

Write number on side of bar

"""

fig = plt.gcf() # gcf - get current figure

axes = plt.gca()

r = fig.canvas.get_renderer()

for i, val in enumerate(sorted_values):

fp_val = fp_sorted[i]

tp_val = tp_sorted[i]

fp_str_val = " " + str(fp_val)

tp_str_val = fp_str_val + " " + str(tp_val)

# trick to paint multicolor with offset:

# first paint everything and then repaint the first number

t = plt.text(val, i, tp_str_val, color='forestgreen', va='center', fontweight='bold')

plt.text(val, i, fp_str_val, color='crimson', va='center', fontweight='bold')

if i == (len(sorted_values)-1): # largest bar

adjust_axes(r, t, fig, axes)

else:

plt.barh(range(n_classes), sorted_values, color=plot_color)

"""

Write number on side of bar

"""

fig = plt.gcf() # gcf - get current figure

axes = plt.gca()

r = fig.canvas.get_renderer()

for i, val in enumerate(sorted_values):

str_val = " " + str(val) # add a space before

if val < 1.0:

str_val = " {0:.2f}".format(val)

t = plt.text(val, i, str_val, color=plot_color, va='center', fontweight='bold')

# re-set axes to show number inside the figure

if i == (len(sorted_values)-1): # largest bar

adjust_axes(r, t, fig, axes)

# set window title

fig.canvas.manager.set_window_title(window_title)

# write classes in y axis

tick_font_size = 12

plt.yticks(range(n_classes), sorted_keys, fontsize=tick_font_size)

"""

Re-scale height accordingly

"""

init_height = fig.get_figheight()

# comput the matrix height in points and inches

dpi = fig.dpi

height_pt = n_classes * (tick_font_size * 1.4) # 1.4 (some spacing)

height_in = height_pt / dpi

# compute the required figure height

top_margin = 0.15 # in percentage of the figure height

bottom_margin = 0.05 # in percentage of the figure height

figure_height = height_in / (1 - top_margin - bottom_margin)

# set new height

if figure_height > init_height:

fig.set_figheight(figure_height)

# set plot title

plt.title(plot_title, fontsize=14)

# set axis titles

# plt.xlabel('classes')

plt.xlabel(x_label, fontsize='large')

# adjust size of window

fig.tight_layout()

# save the plot

fig.savefig(output_path)

# show image

if to_show:

plt.show()

# close the plot

plt.close()
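
Two pieces of `draw_plot_func` are pure data preparation and can be tried in isolation: splitting each bar into its false-positive and true-positive parts, and computing the figure height needed for a given number of classes. The following is a minimal sketch under stated assumptions. The helper names `split_stacked_bars` and `required_figure_height` are hypothetical, and the sample counts are made up for illustration. The height formula copies the one above as written, including its division of the point height by `dpi`.

```python
import operator


def split_stacked_bars(totals, true_positives):
    """Sort classes by total detections (ascending) and split each bar.

    Hypothetical helper: `totals` maps class name -> number of detections,
    `true_positives` maps class name -> number of correct detections.
    """
    sorted_items = sorted(totals.items(), key=operator.itemgetter(1))
    keys = [name for name, _ in sorted_items]
    # false positives are whatever remains after removing the true positives
    fp = [totals[name] - true_positives[name] for name in keys]
    tp = [true_positives[name] for name in keys]
    return keys, fp, tp


def required_figure_height(n_classes, tick_font_size=12, dpi=100.0,
                           top_margin=0.15, bottom_margin=0.05):
    """Figure height (inches) so that every class label gets its own row."""
    # 1.4 adds some spacing between rows, as in draw_plot_func
    height_in = n_classes * (tick_font_size * 1.4) / dpi
    # margins are fractions of the final figure height
    return height_in / (1 - top_margin - bottom_margin)


# Made-up counts, for illustration only.
totals = {"cat": 10, "dog": 4, "bird": 7}
true_positives = {"cat": 6, "dog": 3, "bird": 2}
keys, fp, tp = split_stacked_bars(totals, true_positives)
print(keys, fp, tp)
print(round(required_figure_height(20), 3))
```

Passing `fp` as the `left` offset of the true-positive bars is what stacks the two segments horizontally in the `plt.barh` calls above.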

def get_map(MINOVERLAP, draw_plot, score_threhold=0.5, path = './map_out'):
    GT_PATH = os.path.join(path, 'ground-truth')
    DR_PATH = os.path.join(path, 'detection-results')
    IMG_PATH = os.path.join(path, 'images-optional')
    TEMP_FILES_PATH = os.path.join(path, '.temp_files')
    RESULTS_FILES_PATH = os.path.join(path, 'results')

    # the per-detection animation is only possible when the optional images exist
    show_animation = True
    if os.path.exists(IMG_PATH):
        for dirpath, dirnames, files in os.walk(IMG_PATH):
            if not files:
                show_animation = False
    else:
        show_animation = False

    if not os.path.exists(TEMP_FILES_PATH):
        os.makedirs(TEMP_FILES_PATH)

    # start from an empty results folder; it must be recreated after removal,
    # otherwise it is missing whenever draw_plot is False
    if os.path.exists(RESULTS_FILES_PATH):
        shutil.rmtree(RESULTS_FILES_PATH)
    os.makedirs(RESULTS_FILES_PATH)
    if draw_plot:
        try:
            matplotlib.use('TkAgg')
        except Exception:
            # fall back to the default backend if TkAgg is unavailable
            pass
        os.makedirs(os.path.join(RESULTS_FILES_PATH, "AP"))
        os.makedirs(os.path.join(RESULTS_FILES_PATH, "F1"))
        os.makedirs(os.path.join(RESULTS_FILES_PATH, "Recall"))
        os.makedirs(os.path.join(RESULTS_FILES_PATH, "Precision"))
    if show_animation:
        os.makedirs(os.path.join(RESULTS_FILES_PATH, "images", "detections_one_by_one"))

    ground_truth_files_list = glob.glob(GT_PATH + '/*.txt')
    if len(ground_truth_files_list) == 0:
        error("Error: No ground-truth files found!")
    ground_truth_files_list.sort()
    gt_counter_per_class = {}
    counter_images_per_class = {}

    for txt_file in ground_truth_files_list:
        file_id = txt_file.split(".txt", 1)[0]
        file_id = os.path.basename(os.path.normpath(file_id))
        # every ground-truth file needs a detection-results file with the same name
        temp_path = os.path.join(DR_PATH, (file_id + ".txt"))
        if not os.path.exists(temp_path):
            error_msg = "Error. File not found: {}\n".format(temp_path)
            error(error_msg)
        lines_list = file_lines_to_list(txt_file)
        bounding_boxes = []
        is_difficult = False
        already_seen_classes = []
        for line in lines_list:
            try:
                if "difficult" in line:
                    class_name, left, top, right, bottom, _difficult = line.split()
                    is_difficult = True
                else:
                    class_name, left, top, right, bottom = line.split()
            except ValueError:
                # the class name contains spaces: read the fixed fields from the
                # end of the line and join the remaining tokens into the name
                if "difficult" in line:
                    line_split = line.split()
                    _difficult = line_split[-1]
                    bottom = line_split[-2]
                    right = line_split[-3]
                    top = line_split[-4]
                    left = line_split[-5]
                    class_name = " ".join(line_split[:-5])
                    is_difficult = True
                else:
                    line_split = line.split()
                    bottom = line_split[-1]
                    right = line_split[-2]
                    top = line_split[-3]
                    left = line_split[-4]
                    class_name = " ".join(line_split[:-4])

            bbox = left + " " + top + " " + right + " " + bottom
            if is_difficult:
                # difficult boxes are stored but not counted as ground truth
                bounding_boxes.append({"class_name":class_name, "bbox":bbox, "used":False, "difficult":True})
                is_difficult = False
            else:
                bounding_boxes.append({"class_name":class_name, "bbox":bbox, "used":False})
                if class_name in gt_counter_per_class:
                    gt_counter_per_class[class_name] += 1
                else:
                    gt_counter_per_class[class_name] = 1

                # count each class at most once per image
                if class_name not in already_seen_classes:
                    if class_name in counter_images_per_class:
                        counter_images_per_class[class_name] += 1
                    else:
                        counter_images_per_class[class_name] = 1
                    already_seen_classes.append(class_name)

        with open(TEMP_FILES_PATH + "/" + file_id + "_ground_truth.json", 'w') as outfile:
            json.dump(bounding_boxes, outfile)

    gt_classes = list(gt_counter_per_class.keys())
    gt_classes = sorted(gt_classes)
    n_classes = len(gt_classes)

    dr_files_list = glob.glob(DR_PATH + '/*.txt')
    dr_files_list.sort()
    for class_index, class_name in enumerate(gt_classes):
        bounding_boxes = []