Why Activation Functions

In a basic network such as the perceptron, every relationship is linear, which cannot capture every situation. No matter how many hidden layers are added, a composition of linear layers is still linear. Therefore, activation functions are needed. Applying an activation to the result of each layer makes it possible to represent nonlinear functions.
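The point that stacked linear layers stay linear can be checked with a small sketch. The one-dimensional layers and all weight values below are made-up numbers chosen for illustration, not taken from any real model.

```python
# Two stacked linear layers without an activation collapse into one linear layer.
# All weights here are hypothetical illustrative values.

def linear(w, b, x):
    """A one-dimensional linear layer: w * x + b."""
    return w * x + b

w1, b1 = 2.0, 1.0    # hypothetical first layer
w2, b2 = -3.0, 0.5   # hypothetical second layer

def stacked(x):
    """Second layer applied to the first, with no activation in between."""
    return linear(w2, b2, linear(w1, b1, x))

# The same mapping written as a single linear layer.
w_single = w2 * w1
b_single = w2 * b1 + b2

def stacked_with_relu(x):
    """Same two layers, but with max(0, .) applied after the first one."""
    return linear(w2, b2, max(0.0, linear(w1, b1, x)))

for x in (-2.0, 0.0, 1.5):
    print(x, stacked(x), linear(w_single, b_single, x), stacked_with_relu(x))
```

For every input, the first two printed results agree, so the extra layer added nothing. The third column differs for inputs where the first layer's output is negative, which is the nonlinearity the activation introduces.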

What is an activation function

An activation function is a simple function that transforms the result of a linear calculation into a nonlinear result.

Several common activation functions

ReLU

ReLU (the Rectified Linear Unit) is an activation function that is often used in hidden layers.

Function: $f(x) = \max(0, x)$
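As a minimal sketch, ReLU keeps positive inputs unchanged and replaces negative inputs with 0. The function name `relu` and the sample inputs are illustrative choices.

```python
def relu(x):
    """Return x for positive inputs and 0 otherwise."""
    return max(0.0, x)

# Negative inputs are clipped to 0, positive inputs pass through.
print([relu(v) for v in (-2.0, 0.0, 3.5)])  # [0.0, 0.0, 3.5]
```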

Figure:

Sigmoid

Also known as the logistic function. It commonly appears in the study of population growth. In computer science, it maps the output to a value between 0 and 1.

Function: $\sigma(x) = \dfrac{1}{1 + e^{-x}}$
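A possible plain-Python sketch of the sigmoid, 1 / (1 + e^(-x)), is shown here. Splitting on the sign of the input is an added assumption to keep `math.exp` from overflowing for large negative values; it is not required by the definition.

```python
import math

def sigmoid(x):
    """Logistic function 1 / (1 + exp(-x)), written to avoid overflow."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    # For negative x use the equivalent form exp(x) / (1 + exp(x)).
    z = math.exp(x)
    return z / (1.0 + z)

for v in (-5.0, 0.0, 5.0):
    print(v, sigmoid(v))
```

An input of 0 maps to exactly 0.5, and larger inputs move the output toward 1 while smaller ones move it toward 0.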

Figure:

Tanh

Tanh (also known as the hyperbolic tangent) is defined as the ratio of sinh to cosh.

Function: $\tanh(x) = \dfrac{\sinh(x)}{\cosh(x)} = \dfrac{e^{x} - e^{-x}}{e^{x} + e^{-x}}$
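The relation to sinh and cosh can be written out directly, as in this small sketch. The helper name `tanh_from_sinh_cosh` is hypothetical; in practice the standard library's `math.tanh` is the safer choice, because `math.sinh` and `math.cosh` overflow for very large inputs.

```python
import math

def tanh_from_sinh_cosh(x):
    """Hyperbolic tangent as sinh(x) / cosh(x); fine for moderate x only."""
    return math.sinh(x) / math.cosh(x)

for v in (-3.0, 0.0, 0.5):
    # Compare the ratio with the built-in implementation.
    print(v, tanh_from_sinh_cosh(v), math.tanh(v))
```

Unlike the sigmoid, the output lies between -1 and 1 and is centered on 0.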

Figure:

Softmax

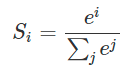

Sometimes, in a project such as recognizing handwritten digits, we need the model to output a single result. Therefore, the last dense layer is typically connected to a Softmax function that turns the output values into probabilities, and the class with the highest probability is taken as the prediction.

Function: $\mathrm{softmax}(z)_i = \dfrac{e^{z_i}}{\sum_{j} e^{z_j}}$
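A rough sketch of Softmax in plain Python follows: each output value is exponentiated and divided by the sum of all exponentials, so the results are positive and sum to 1. The `scores` list stands in for the outputs of a last dense layer and is made up for illustration. Subtracting the maximum before exponentiating is an added assumption for numerical stability; it does not change the result.

```python
import math

def softmax(values):
    """Turn a list of raw scores into probabilities that sum to 1."""
    shift = max(values)  # subtracting the max avoids overflow in exp
    exps = [math.exp(v - shift) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

scores = [2.0, 1.0, 0.1]             # hypothetical outputs of the last dense layer
probs = softmax(scores)
predicted = probs.index(max(probs))  # index of the most likely class

print(probs, predicted)
```

Taking the index of the largest probability is how the single result, such as the recognized digit, is usually read off.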

Figure:
