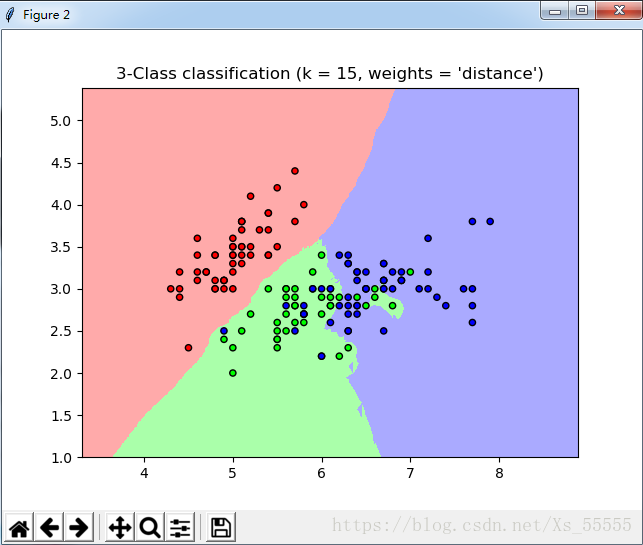

Below is the scikit-learn code for KNN:

import numpy as np
import matplotlib.pyplot as plt
from matplotlib.colors import ListedColormap
from sklearn import neighbors, datasets

n_neighbors = 15

iris = datasets.load_iris()
x = iris.data[:, :2]  # keep only the first two features so the result can be plotted in 2-D
y = iris.target

h = 0.01  # step size of the mesh grid

cmap_light = ListedColormap(['#FFAAAA', '#AAFFAA', '#AAAAFF'])
cmap_bold = ListedColormap(['#FF0000', '#00FF00', '#0000FF'])

for i in ['uniform', 'distance']:
    # n_neighbors is the K in KNN.
    # weights controls how the neighbours' votes are weighted:
    # 'uniform' ignores distance, 'distance' weights each neighbour by the inverse of its distance.
    clf = neighbors.KNeighborsClassifier(n_neighbors=n_neighbors, weights=i)
    clf.fit(x, y)

    x_min, x_max = x[:, 0].min() - 1, x[:, 0].max() + 1
    y_min, y_max = x[:, 1].min() - 1, x[:, 1].max() + 1
    xx, yy = np.meshgrid(np.arange(x_min, x_max, h),
                         np.arange(y_min, y_max, h))

    # xx.ravel() flattens xx into a 1-D array of length N, the same as xx.reshape(-1).
    # np.c_[a, b] places a and b side by side as columns, like [a b].
    # np.r_[a, b] concatenates a and b along the first axis, like [a; b].
    Z = clf.predict(np.c_[xx.ravel(), yy.ravel()])
    Z = Z.reshape(xx.shape)

    plt.figure()
    plt.pcolormesh(xx, yy, Z, cmap=cmap_light)

    # Plot also the training points
    plt.scatter(x[:, 0], x[:, 1], c=y, cmap=cmap_bold,
                edgecolor='k', s=20)
    plt.xlim(xx.min(), xx.max())
    plt.ylim(yy.min(), yy.max())
    plt.title("3-Class classification (k = %i, weights = '%s')"
              % (n_neighbors, i))

plt.show()

Output: one decision-region plot for each of the two weighting schemes.
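The step that turns the mesh grid into a list of points for `clf.predict` is easier to follow on a tiny grid. The sketch below uses a 2 x 3 grid of integers (values chosen here purely for illustration, not taken from the iris data) to show what `np.meshgrid`, `ravel()`, `np.c_` and `np.r_` each produce.

```python
import numpy as np

# A tiny grid: x takes the values 0, 1 and y takes the values 0, 1, 2.
xx, yy = np.meshgrid(np.arange(0, 2), np.arange(0, 3))
print(xx.shape)            # (3, 2): one row per y value, one column per x value

# ravel() flattens each 2-D array into a 1-D array of length 6.
flat_x = xx.ravel()        # [0 1 0 1 0 1]
flat_y = yy.ravel()        # [0 0 1 1 2 2]

# np.c_ pairs the two 1-D arrays column-wise: one (x, y) point per row.
grid = np.c_[flat_x, flat_y]
print(grid.shape)          # (6, 2)
print(grid)

# np.r_ concatenates them end to end instead, giving one 1-D array of length 12.
stacked = np.r_[flat_x, flat_y]
print(stacked.shape)       # (12,)
```

Each row of `grid` is one point of the mesh, which is the two-column layout a classifier trained on two features expects. Reshaping the predictions back to `xx.shape` then restores the grid layout for plotting.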

Below is KNN implemented by hand, without the scikit-learn classifier (NumPy is used only for the arithmetic):

import numpy as np
from sklearn import datasets

k = 5

iris = datasets.load_iris()
x = iris.data[:, :2]
y = iris.target

def Myknn(inputdata, k, datasets, label):
    # Number of training samples
    numsampel = datasets.shape[0]
    # Repeat the query point once per sample and subtract the samples
    diff = np.tile(inputdata, (numsampel, 1)) - datasets
    SquaredDiff = np.power(diff, 2)
    # axis=1 sums across the columns, giving one squared distance per sample (row)
    squaredDist = np.sum(SquaredDiff, axis=1)
    distance = np.sqrt(squaredDist)
    # Indices of the samples, ordered from nearest to farthest
    sortdistance = np.argsort(distance)

    # Count the labels of the k nearest samples
    callasscount = {}
    for i in range(k):
        vol = label[sortdistance[i]]
        callasscount[vol] = callasscount.get(vol, 0) + 1

    # Return the label with the most votes
    maxnum = 0
    maxitem = ''
    for key, value in callasscount.items():
        if value > maxnum:
            maxnum = value
            maxitem = key
    return maxitem

test = [5, 1]
outputlabel = Myknn(test, k, x, y)
print('The label of sample {} is'.format(test), outputlabel)
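The hand-written version above counts every neighbour equally, which matches the `'uniform'` setting in the scikit-learn example. As a rough sketch of what distance weighting means, the variant below gives each of the k nearest neighbours a vote of 1/distance. The function name `weighted_knn`, the toy data, and the rule that exact matches (distance 0) decide the vote on their own are assumptions made for this illustration, not code from the original post.

```python
import numpy as np

def weighted_knn(inputdata, k, samples, labels):
    """Classify inputdata by an inverse-distance-weighted vote of its k nearest samples."""
    samples = np.asarray(samples, dtype=float)
    labels = np.asarray(labels)
    query = np.asarray(inputdata, dtype=float)

    # Euclidean distance from the query point to every sample
    distance = np.sqrt(np.sum((samples - query) ** 2, axis=1))
    nearest = np.argsort(distance)[:k]

    # Assumption of this sketch: if any neighbour coincides with the query,
    # only those exact matches vote, which also avoids dividing by zero.
    exact = nearest[distance[nearest] == 0]
    if exact.size > 0:
        nearest = exact
        weights = np.ones(exact.size)
    else:
        weights = 1.0 / distance[nearest]

    # Add up the weights per label and return the label with the largest total
    votes = {}
    for idx, w in zip(nearest, weights):
        lab = labels[idx].item()
        votes[lab] = votes.get(lab, 0.0) + w
    return max(votes, key=votes.get)

# Toy data (hypothetical): one class-0 point very close to the query,
# two class-1 points further away, one class-2 point far away.
toy_x = [[0.0, 0.0], [1.0, 0.0], [1.1, 0.0], [5.0, 5.0]]
toy_y = [0, 1, 1, 2]
print(weighted_knn([0.1, 0.0], 3, toy_x, toy_y))  # prints 0
```

With k = 3, a plain majority vote on this toy data would pick class 1 (two votes against one), while the weighted vote picks class 0 because the single class-0 neighbour is about ten times closer than the other two.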
