1. 概要

Gradient Tree Boosting (别名 GBM, GBRT, GBDT, MART)是一类很常用的集成学习算法,在KDD Cup, Kaggle组织的很多数据挖掘竞赛中多次表现出在分类和回归任务上面最好的performance。同时在2010年Yahoo Learning to Rank Challenge中, 夺得冠军的LambdaMART算法也属于这一类算法。因此Tree Boosting算法和深度学习算法DNN/CNN/RNN等等一样在工业界和学术界中得到了非常广泛的应用。

最近研读了UW Tianqi Chen博士写的关于Gradient Tree Boosting 的Slide和Notes, 牛人就是牛人,可以把算法和模型讲的如此清楚,深入浅出,感觉对Tree Boosting算法的理解进一步加深了一些。本来打算写一篇比较详细的算法解析的文章,后来一想不如记录一些阅读心得和关键点,感兴趣的读者可以直接看英文原版资料如下:

A. Introduction to Boosted Tree. https://xgboost.readthedocs.io/en/latest/model.html

B. Introduction to Boosted Trees. By Tianqi Chen. http://homes.cs.washington.edu/~tqchen/data/pdf/BoostedTree.pdf

C. Tianqi Chen and Carlos Guestrin. XGBoost: A Scalable Tree Boosting System. in KDD '16. http://www.kdd.org/kdd2016/papers/files/rfp0697-chenAemb.pdf

感觉资料B这个slide反倒比资料A Document讲的更细致一些,这个Document跳过了一些slide里面提到的细节。这篇KDD paper的Section 2基本和这个Slide和Dcoument里面提到的公式一样。

2. 阅读笔记 (注释: 这部分摘录的图片出自Tianqi的slide,感谢原作者的精彩分享,我主要加上了一些个人理解性笔记,具体细节可以参考原版slide)

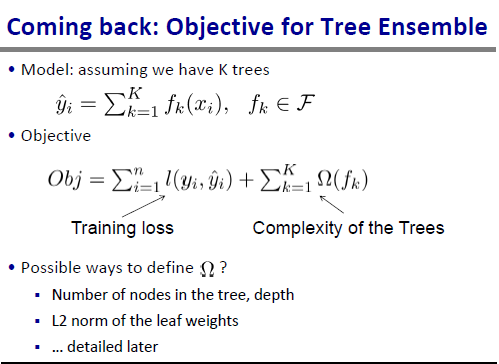

Tianqi的Slide首先给出了监督学习中一些常用基本概念的介绍,然后给出了Tree Ensemble 模型的目标函数定义

监督学习算法的目标函数通常包括Loss和Regularization两部分,这里给出的是一般形式,具体Loss的定义可以是Square Loss, Hinge Loss, Logistic Loss等等,关于Regularization可以是模型参数的L2或者 L1 norm等等。 这里针对Tree Ensemble算法,可以用树的节点数量,深度,叶子节点weights的L2 norm,叶子节点的数目等等来定义模型的复杂度。总体目标是学习出既有足够预测能力又不过于复杂/过拟合训练数据的模型。

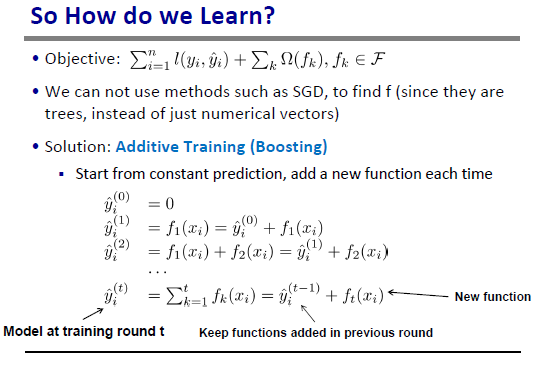

给定模型目标函数,如何进行优化最小化cost得到最优模型参数? 这里SGD就不适用了,因为模型参数是一些Tree Structure的集合而不是数值向量。我们可以用Additive Training (Boosting)算法来进行训练

因此模型的训练分多轮进行,每一轮我们在已经学到的tree的基础上尝试新添加一颗新树,这里显示了每一轮后预测值的变化关系。每一轮我们尝试去寻找最可能最小化目标函数的tree f_t(x_i)加入模型。那么如何寻找这样的tree呢?先来分析一下目标函数:

本文介绍了Gradient Tree Boosting(GBM, GBRT, GBDT, MART)算法,探讨了其在数据挖掘竞赛中的优秀表现,并通过Tianqi Chen的资料深入解析了算法原理。文章涵盖了监督学习目标函数、Additive Training(Boosting)优化过程、泰勒展开与损失函数、以及贪心搜索策略。此外,还提供了基于XGBoost和Scikit-learn的实现示例。"

134490529,11422243,水声通信:ConEncoder并联网络模型实现高准确率调制识别,"['水声通信', '深度学习', '信号处理', '神经网络', '机器学习']

本文介绍了Gradient Tree Boosting(GBM, GBRT, GBDT, MART)算法,探讨了其在数据挖掘竞赛中的优秀表现,并通过Tianqi Chen的资料深入解析了算法原理。文章涵盖了监督学习目标函数、Additive Training(Boosting)优化过程、泰勒展开与损失函数、以及贪心搜索策略。此外,还提供了基于XGBoost和Scikit-learn的实现示例。"

134490529,11422243,水声通信:ConEncoder并联网络模型实现高准确率调制识别,"['水声通信', '深度学习', '信号处理', '神经网络', '机器学习']

最低0.47元/天 解锁文章

最低0.47元/天 解锁文章

679

679

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?