I. Install MySQL (run on slave2)

wget https://dev.mysql.com/get/mysql80-community-release-el9-3.noarch.rpm

rpm -ivh mysql80-community-release-el9-3.noarch.rpm

dnf install mysql-community-server -y

systemctl enable --now mysqld.service # enable MySQL at boot and start it now

systemctl status mysqld.service # check MySQL's status

grep "password" /var/log/mysqld.log # find the temporary root password generated on first start
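If you would rather extract the temporary password in a provisioning script than read it off the grep output, a small Python 3 helper can scan the log text. This is a sketch: the function name find_temp_password is hypothetical, and the regular expression assumes the MySQL 8 log line has the form "A temporary password is generated for root@localhost: <password>".

```python
import re
from typing import Optional

# Assumed MySQL 8 log line format:
# "... A temporary password is generated for root@localhost: <password>"
TEMP_PW_RE = re.compile(r"temporary password is generated for \S+: (\S+)")


def find_temp_password(log_text: str) -> Optional[str]:
    """Return the most recently logged temporary password, or None if absent."""
    matches = TEMP_PW_RE.findall(log_text)
    # The log may hold several such lines if the data directory was re-initialized,
    # so the last one is the one that is currently valid.
    return matches[-1] if matches else None
```

For example, run as root: find_temp_password(open("/var/log/mysqld.log").read()).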

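mysql -uroot -p # log in with the temporary password

# MySQL 8 enforces a strict password policy: the first new password must contain uppercase and lowercase letters, digits, and special characters

alter user "root"@"localhost" identified by "Test!@123"; # change the root password

use mysql; # switch to the mysql system database

update user set host="%" where user="root"; # let root connect from any IP address

flush privileges; # reload the privilege tables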
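Setting host to "%" works because MySQL treats % and _ in the host part of an account as wildcards, much like SQL LIKE. The hypothetical helper below approximates that matching in Python to show which client addresses an account such as root@'%' would accept. It ignores details such as escaped wildcards and netmask notation, so treat it as an illustration rather than MySQL's exact rule (run it outside the MySQL client).

```python
import re


def host_matches(pattern: str, client_host: str) -> bool:
    """Approximate MySQL account-host matching: '%' = any run of chars, '_' = one char."""
    regex = "".join(
        ".*" if ch == "%" else "." if ch == "_" else re.escape(ch)
        for ch in pattern
    )
    # Host names are compared case-insensitively
    return re.fullmatch(regex, client_host, flags=re.IGNORECASE) is not None
```

With host="%", host_matches("%", any_address) is always True, which is why root can then log in from master and slave1.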

show variables like "validate_password%"; # view the password policy settings

set global validate_password.length=5; # set the minimum password length to 5

set global validate_password.policy=0; # set the policy to LOW

alter user "root"@"%" identified by "123456"; # a simple password is now accepted
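To see why a password with no uppercase letter (for example test!@123) is rejected under the default policy, and why 123456 is accepted once the policy is relaxed, the sketch below models the length and character-count checks of MySQL 8's validate_password component. It assumes the component's default settings (length 8, at least one uppercase and one lowercase letter, one digit, one special character) and omits the STRONG policy's dictionary check; policy=0 in the SQL above corresponds to LOW, which checks only the length. The function name is hypothetical.

```python
def passes_validate_password(pw: str, policy: str = "MEDIUM", length: int = 8,
                             mixed_case_count: int = 1, number_count: int = 1,
                             special_char_count: int = 1) -> bool:
    """Approximate validate_password LOW/MEDIUM checks (no dictionary check)."""
    if len(pw) < length:
        return False
    if policy.upper() == "LOW":
        return True  # LOW only enforces the minimum length
    lower = sum(c.islower() for c in pw)
    upper = sum(c.isupper() for c in pw)
    digits = sum(c.isdigit() for c in pw)
    special = sum(not c.isalnum() for c in pw)  # non-alphanumeric characters
    return (lower >= mixed_case_count and upper >= mixed_case_count
            and digits >= number_count and special >= special_char_count)
```

Usage: passes_validate_password("123456", policy="LOW", length=5) mirrors the settings applied above.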

grant all privileges on *.* to 'root'@'%' with grant option; # allow remote connections

flush privileges; # reload the privilege tables

\q # exit

mysql -uroot -p123456 # test the new password
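Since the point of the grant is remote access, it is worth confirming from master or slave1 that slave2's MySQL port is reachable at all. A minimal Python 3 check, assuming the default MySQL port 3306 and that the hostname slave2 resolves on the calling node (port_open is a hypothetical helper name):

```python
import socket


def port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, timed out, or name resolution failed
        return False
```

If port_open("slave2", 3306) returns False, the cause is often a firewall on slave2 or a hostname that does not resolve, rather than the MySQL account itself.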

II. Install Hive

1. Install Hive on master and slave1

- Edit the environment variables

vim /etc/profile

export HIVE_HOME=<Hive installation path>

export PATH=$PATH:$HIVE_HOME/bin

source /etc/profile
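After sourcing the profile, a quick sanity check is whether HIVE_HOME is set and $HIVE_HOME/bin is on PATH. The sketch below (check_hive_env is a hypothetical name) inspects an environment mapping, so you can pass os.environ from a shell where /etc/profile has been sourced; it splits PATH with os.pathsep.

```python
import os
from typing import List, Mapping


def check_hive_env(env: Mapping[str, str]) -> List[str]:
    """Return a list of problems with HIVE_HOME/PATH in the given environment."""
    hive_home = env.get("HIVE_HOME", "")
    if not hive_home:
        return ["HIVE_HOME is not set"]
    bin_dir = os.path.normpath(os.path.join(hive_home, "bin"))
    # Normalize entries so "/usr/hive/bin/" and "/usr/hive/bin" compare equal
    entries = [os.path.normpath(p) for p in env.get("PATH", "").split(os.pathsep) if p]
    return [] if bin_dir in entries else [f"{bin_dir} is not on PATH"]
```

Usage: print(check_hive_env(os.environ)); an empty list means both variables look right.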

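2. Edit hive-env.sh on master and slave1

cd /usr/hive/conf

vim hive-env.sh

Uncomment the following lines (remove the leading #) and set the paths:

HADOOP_HOME=<Hadoop installation path>

export HIVE_CONF_DIR=<path to Hive's conf directory>

export HIVE_AUX_JARS_PATH=<path to Hive's lib directory>

3. Edit the hive-site.xml configuration file

3.1 Edit hive-site.xml on master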

<configuration>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive_remote/warehouse</value>

</property>

<property>

<name>hive.metastore.local</name>

<value>false</value>

</property>

<property>

<name>hive.metastore.uris</name>

<value>thrift://slave1:9083</value>

</property>

</configuration>
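Writing these files by hand invites typos in property names and unescaped & characters in values. As an optional alternative, the sketch below renders a Hadoop-style configuration document from a Python dict with xml.etree.ElementTree, shown with the master-side properties above; ElementTree escapes & as &amp; automatically. build_hive_site and MASTER_PROPS are hypothetical names, and ET.indent requires Python 3.9 or later.

```python
import xml.etree.ElementTree as ET
from typing import Dict


def build_hive_site(props: Dict[str, str]) -> str:
    """Render a <configuration> document with one <property> per name/value pair."""
    root = ET.Element("configuration")
    for name, value in props.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    ET.indent(root)  # pretty-print; leaves the name/value text untouched
    return ET.tostring(root, encoding="unicode")


# Master (client) side: points at the remote metastore on slave1
MASTER_PROPS = {
    "hive.metastore.warehouse.dir": "/user/hive_remote/warehouse",
    "hive.metastore.local": "false",
    "hive.metastore.uris": "thrift://slave1:9083",
}
```

Usage: open("hive-site.xml", "w").write(build_hive_site(MASTER_PROPS)).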

3.2 Edit hive-site.xml on slave1

<configuration>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive_remote/warehouse</value>

</property>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://slave2:3306/hive?createDatabaseIfNotExist=true</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.cj.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

</property>

<property>

<name>hive.metastore.schema.verification</name>

<value>false</value>

</property>

<property>

<name>datanucleus.schema.autoCreateAll</name>

<value>true</value>

</property>

</configuration>
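A misspelled property name is usually just ignored (at most logged as a warning) rather than rejected, so a small checker can catch mistakes in the slave1 file before running schematool. The sketch below parses hive-site.xml text and reports required metastore properties that are missing, plus values with stray leading or trailing whitespace, which may otherwise end up inside the JDBC URL. The REQUIRED list and the helper names are assumptions for illustration, not a list Hive itself enforces.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List

# Assumed set of properties the slave1 metastore config should define
REQUIRED = [
    "javax.jdo.option.ConnectionURL",
    "javax.jdo.option.ConnectionDriverName",
    "javax.jdo.option.ConnectionUserName",
    "javax.jdo.option.ConnectionPassword",
    "datanucleus.schema.autoCreateAll",
]


def read_props(xml_text: str) -> Dict[str, str]:
    """Map each <property>'s name to its raw (untrimmed) value."""
    root = ET.fromstring(xml_text)
    return {p.findtext("name", "").strip(): p.findtext("value", "")
            for p in root.findall("property")}


def check_metastore_site(xml_text: str) -> List[str]:
    """Report missing required properties and values with surrounding whitespace."""
    props = read_props(xml_text)
    problems = [f"missing {key}" for key in REQUIRED if key not in props]
    for key, value in props.items():
        if value != value.strip():
            problems.append(f"{key} has leading/trailing whitespace")
    return problems
```

Usage: print(check_metastore_site(open("/usr/hive/conf/hive-site.xml").read())), adjusting the path to your installation.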

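- Resolve the version conflict and upload the JDBC jar

Download the mysql-connector-java-8.0.29.jar package.

master: to resolve the version conflict, copy hive/lib/jline-2.12.jar to hadoop/share/hadoop/yarn/lib

slave1: upload the JDBC driver jar (mysql-connector-java-8.0.29.jar) to hive/lib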
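To confirm the jars ended up where Hive and Hadoop will load them, you can list the matching jar files in each lib directory: on master, which jline versions are present in hadoop/share/hadoop/yarn/lib; on slave1, whether a mysql-connector jar is in Hive's lib. find_jars is a hypothetical helper, and the directory paths depend on your installation.

```python
from pathlib import Path
from typing import List


def find_jars(lib_dir: str, prefix: str) -> List[str]:
    """Return the sorted names of *.jar files in lib_dir whose names start with prefix."""
    return sorted(p.name for p in Path(lib_dir).glob(f"{prefix}*.jar") if p.is_file())
```

Usage: find_jars("/usr/hive/lib", "mysql-connector") should be non-empty on slave1.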

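- Initialize the metastore database on slave1

schematool -dbType mysql -initSchema # initialize the metastore schema in MySQL

bin/hive --service metastore & # start the metastore service in the background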
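The master's hive.metastore.uris must name the host and port this metastore listens on (9083 by default, set by hive.metastore.port). The sketch below splits a hive.metastore.uris value into (host, port) pairs that you could then test for reachability from master. The helper name is hypothetical, and it deliberately requires explicit thrift://host:port entries, as used in this guide.

```python
from typing import List, Tuple
from urllib.parse import urlsplit


def parse_metastore_uris(uris: str) -> List[Tuple[str, int]]:
    """Split a comma-separated hive.metastore.uris value into (host, port) pairs."""
    pairs = []
    for uri in uris.split(","):
        parts = urlsplit(uri.strip())
        # Only accept the explicit thrift://host:port form
        if parts.scheme != "thrift" or not parts.hostname or parts.port is None:
            raise ValueError(f"expected thrift://host:port, got {uri.strip()!r}")
        pairs.append((parts.hostname, parts.port))
    return pairs
```

Usage: parse_metastore_uris("thrift://slave1:9083") gives [("slave1", 9083)].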

- Start Hive on master

bin/hive

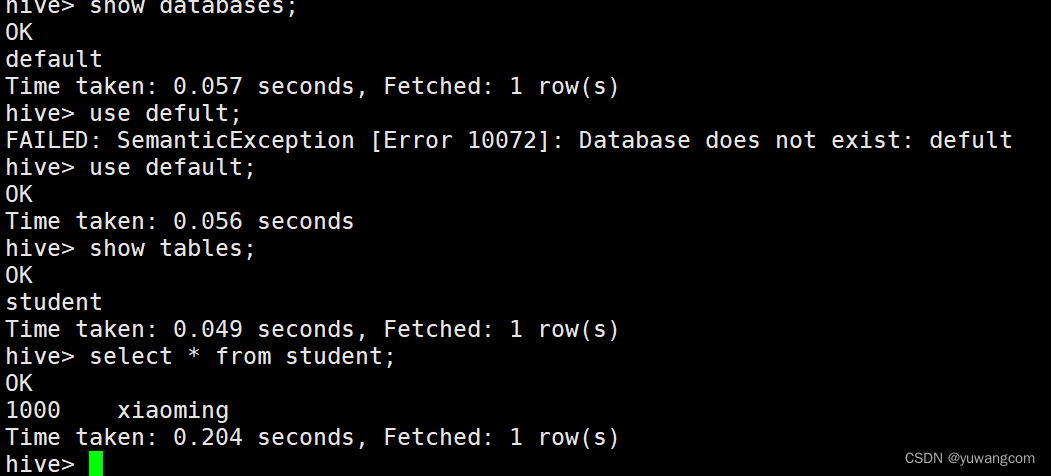

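- Run a few basic Hive commands (for example show databases;) to check that the installation works

- If a query hangs at output like the following: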

In order to change the average load for a reducer (in bytes):

set hive.exec.reducers.bytes.per.reducer=

In order to limit the maximum number of reducers:

set hive.exec.reducers.max=

In order to set a constant number of reducers:

set mapreduce.job.reduces=

Starting Job = job_1695353662369_0004, Tracking URL = http://master:8088/proxy/application_1695353662369_0004/

Kill Command = /usr/opt/hadoop-3.3.6/bin/mapred job -kill job_1695353662369_0004

Interrupting… Be patient, this might take some time.

Press Ctrl+C again to kill JVM

killing job with: job_1695353662369_0004

try the following settings, which let Hive run small jobs in local mode:

hive> set hive.exec.mode.local.auto=true;

hive> set hive.exec.mode.local.auto.inputbytes.max=50000000;

hive> set hive.exec.mode.local.auto.input.files.max=5;
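These settings help because they let Hive run a sufficiently small job locally instead of submitting it to YARN. The sketch below is a rough model of that decision, assuming hive.exec.mode.local.auto=true: the job must stay within both input limits and use at most one reducer. It approximates Hive's check rather than reproducing it, the helper names are hypothetical, and the defaults shown are Hive's defaults for the two properties.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LocalModeLimits:
    inputbytes_max: int = 134217728  # hive.exec.mode.local.auto.inputbytes.max (128 MB)
    input_files_max: int = 4         # hive.exec.mode.local.auto.input.files.max


def eligible_for_local_mode(input_bytes: int, input_files: int, reducers: int,
                            limits: LocalModeLimits = LocalModeLimits()) -> bool:
    """Rough model: small input, few input files, and at most one reducer."""
    return (input_bytes <= limits.inputbytes_max
            and input_files <= limits.input_files_max
            and reducers <= 1)
```

With the values set above, LocalModeLimits(inputbytes_max=50000000, input_files_max=5) allows up to 5 input files, one more than the default.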

Finally, a screenshot confirming the installation succeeded.
