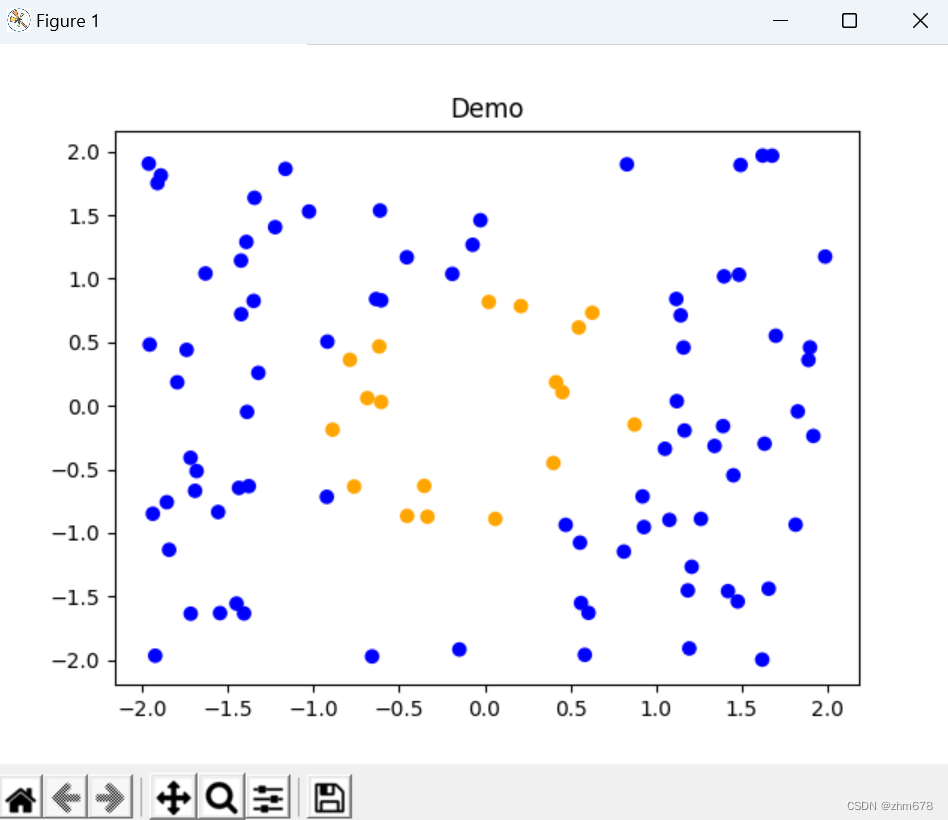

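1. Generating and visualizing the data

The generated points fall into two classes: points inside the unit circle are labeled 0, and points outside it are labeled 1.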

    import numpy as np
    import math
    import random
    import matplotlib.pyplot as plt

    NUM_OF_DATA = 100


    def tag_data(x, y):
        # Tag 0 strictly inside the unit circle, tag 1 on or outside it.
        if x**2 + y**2 < 1:
            tag = 0
        else:
            tag = 1
        return tag


    def create_data(num_of_data):
        # Each row is [x, y, tag], with x and y drawn uniformly from [-2, 2].
        data_list = []
        for i in range(num_of_data):
            x = random.uniform(-2, 2)
            y = random.uniform(-2, 2)
            tag = tag_data(x, y)
            data = [x, y, tag]
            data_list.append(data)
        return np.array(data_list)


    # ------------------- Visualization --------------
    def plot_data(data, title):
        # Orange for tag 0 (inside the circle), blue for tag 1 (outside).
        color = []
        for i in data[:, 2]:
            if i == 0:
                color.append("orange")
            else:
                color.append("blue")
        plt.scatter(data[:, 0], data[:, 1], c=color)
        plt.title(title)
        plt.show()


    # ------------------------------------
    if __name__ == "__main__":
        data = create_data(NUM_OF_DATA)
        print(data)
        plot_data(data, "Demo")

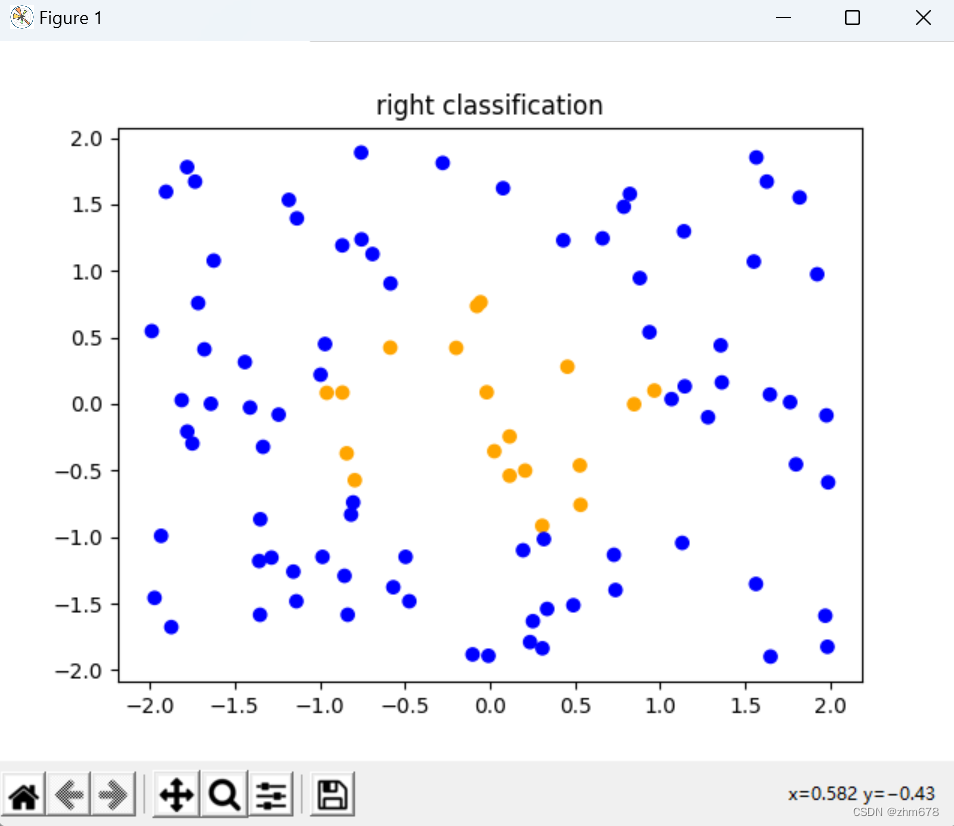
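
The loop in `create_data` can also be written without a Python loop. The following is only a sketch under stated assumptions, not the author's code: the function name `create_data_vectorized` and the `seed` parameter are hypothetical, and it samples with NumPy's `Generator.uniform`, which draws from the half-open interval [-2, 2). It also checks the class balance: the unit circle has area π and the sampling square has area 16, so about π/16 of the points should receive tag 0.

```python
import numpy as np


def create_data_vectorized(num_of_data, seed=None):
    """Hypothetical vectorized variant: returns an (n, 3) array of [x, y, tag]."""
    rng = np.random.default_rng(seed)
    # Sample all x and y coordinates at once from [-2, 2).
    xy = rng.uniform(-2, 2, size=(num_of_data, 2))
    # Same rule as tag_data: 0 if x^2 + y^2 < 1, otherwise 1.
    tag = (np.sum(xy**2, axis=1) >= 1).astype(float)
    return np.column_stack([xy, tag])


data = create_data_vectorized(10_000, seed=0)
inside_fraction = np.mean(data[:, 2] == 0)
print(data.shape)        # (10000, 3)
print(inside_fraction)   # should land near pi / 16, about 0.196
```

The returned array has the same layout as the loop version, so it can be passed to `plot_data` unchanged. The imbalance (roughly 20% tag 0, 80% tag 1) is worth keeping in mind when judging a classifier on this data.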

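2. Loading the data and running inference

This script imports the code from section 1 as the module `create_data`, so that code should be saved as `create_data.py`. It then passes the points through a small fully connected network whose weights are still random.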

    import numpy as np
    import create_data as cd  # the section 1 script, saved as create_data.py


    def activation_relu(inputs):
        return np.maximum(0, inputs)


    def activation_softmax(inputs):
        # Maximum of each row; keepdims=True keeps the shape (n_samples, 1) so it broadcasts.
        max_values = np.max(inputs, axis=1, keepdims=True)
        # After subtracting the row maximum, the largest entry is 0 and the rest are negative.
        # exp(a - c) / exp(b - c) == exp(a) / exp(b), so the ratios are unchanged,
        # while np.exp can no longer overflow.
        slide_inputs = inputs - max_values
        exp_values = np.exp(slide_inputs)
        exp_sums = np.sum(exp_values, axis=1, keepdims=True)
        # Each entry's share of its row total, i.e. a probability.
        return exp_values / exp_sums

    # print(activation_softmax(inputs))


    # Rescale each row so its largest absolute value is 1,
    # to avoid exploding or vanishing gradients.
    def normalize(array):
        max_number = np.max(np.absolute(array), axis=1, keepdims=True)
        # np.where evaluates both branches, so replace zeros before dividing
        # to avoid a divide-by-zero warning on all-zero rows.
        scale_rate = 1 / np.where(max_number == 0, 1, max_number)
        norm = array * scale_rate
        return norm


    def classify(probabilities):
        # Round the class-1 probability: 1 if it is above 0.5, otherwise 0.
        classification = np.rint(probabilities[:, 1])
        return classification


    class Layer:
        def __init__(self, n_inputs, n_neurons):
            self.weights = np.random.randn(n_inputs, n_neurons)
            self.biases = np.random.randn(n_neurons)

        def layer_forward(self, inputs):
            # Linear part only; the activation is applied in Net.net_forward.
            output = np.dot(inputs, self.weights) + self.biases
            return output


    class Net:
        def __init__(self, net_shape):
            self.shape = net_shape
            self.layers = []
            for i in range(len(self.shape) - 1):
                layer = Layer(self.shape[i], self.shape[i + 1])
                self.layers.append(layer)

        def net_forward(self, inputs):
            # outputs[0] is the input; outputs[i + 1] is the output of layer i.
            outputs = [inputs]
            for i in range(len(self.layers)):
                if i < len(self.layers) - 1:
                    # Hidden layers: ReLU, then row-wise normalization.
                    output = activation_relu(self.layers[i].layer_forward(outputs[i]))
                    print(output)
                    output = normalize(output)
                    print(output)
                else:
                    # Last layer: softmax turns the scores into class probabilities.
                    output = activation_softmax(self.layers[i].layer_forward(outputs[i]))
                outputs.append(output)
            print(outputs)
            return outputs
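
The comment in `activation_softmax` relies on the identity exp(a - c) / exp(b - c) = exp(a) / exp(b): shifting every entry of a row by the same constant leaves the softmax result unchanged. The sketch below is an illustration of that claim rather than part of the original script; the names `softmax_naive` and `softmax_shifted` and the sample values are assumptions made for the demonstration.

```python
import numpy as np


def softmax_naive(x):
    # Direct definition: np.exp overflows to inf for large float64 inputs.
    exp_values = np.exp(x)
    return exp_values / np.sum(exp_values, axis=1, keepdims=True)


def softmax_shifted(x):
    # Same shift as activation_softmax: subtract each row's maximum first.
    shifted = x - np.max(x, axis=1, keepdims=True)
    exp_values = np.exp(shifted)
    return exp_values / np.sum(exp_values, axis=1, keepdims=True)


small = np.array([[1.0, 2.0, 3.0]])
large = small + 1000.0  # same gaps between entries, much larger magnitude

# On small inputs both versions agree.
print(np.allclose(softmax_naive(small), softmax_shifted(small)))    # True
# The shifted version gives the same answer regardless of the offset.
print(np.allclose(softmax_shifted(small), softmax_shifted(large)))  # True

# The naive version breaks down: exp(1001) is inf, and inf / inf is nan.
with np.errstate(over="ignore", invalid="ignore"):
    print(softmax_naive(large))  # [[nan nan nan]]
```

This is why the shift costs nothing in accuracy but makes the function safe for arbitrarily large layer outputs.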

    data = cd.create_data(100)
    print(data)
    cd.plot_data(data, "right classification")

    # Use only the x and y columns as network inputs.
    inputs = data[:, (0, 1)]

    net1_shape = [2, 4, 5, 2]
    net1 = Net(net1_shape)
    outputs = net1.net_forward(inputs)

    classification = classify(outputs[-1])
    print(classification)

    # Overwrite the true tags with the network's predictions, then plot them.
    data[:, 2] = classification
    print(data)
    cd.plot_data(data, "before training")
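
The script above overwrites column 2 of `data` with the predictions, so the true tags are gone once the second plot is drawn. If one wants a number for how far the untrained network is from the correct labels, one option is to keep the true tags separately and compare. The following is a minimal sketch under that assumption: the names `forward`, `true_tags`, `predicted_tags`, and the fixed seed are hypothetical, and the forward pass re-implements the same ReLU, row normalization, and softmax steps so the block runs on its own.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the run is repeatable


def relu(x):
    return np.maximum(0, x)


def softmax(x):
    shifted = x - np.max(x, axis=1, keepdims=True)
    exp_values = np.exp(shifted)
    return exp_values / np.sum(exp_values, axis=1, keepdims=True)


def normalize(x):
    max_abs = np.max(np.abs(x), axis=1, keepdims=True)
    # Replace zeros by 1 so all-zero rows stay zero without dividing by zero.
    return x / np.where(max_abs == 0, 1.0, max_abs)


def forward(inputs, weights, biases):
    """ReLU + normalize on hidden layers, softmax on the last one."""
    out = inputs
    for i, (w, b) in enumerate(zip(weights, biases)):
        z = out @ w + b
        out = normalize(relu(z)) if i < len(weights) - 1 else softmax(z)
    return out


# Points and true tags, using the labeling rule from section 1.
xy = rng.uniform(-2, 2, size=(100, 2))
true_tags = (np.sum(xy**2, axis=1) >= 1).astype(int)

# Random weights and biases for a [2, 4, 5, 2] network.
shape = [2, 4, 5, 2]
weights = [rng.standard_normal((m, n)) for m, n in zip(shape[:-1], shape[1:])]
biases = [rng.standard_normal(n) for n in shape[1:]]

probabilities = forward(xy, weights, biases)
predicted_tags = np.rint(probabilities[:, 1]).astype(int)

# Fraction of points whose predicted tag matches the true tag.
accuracy = np.mean(predicted_tags == true_tags)
print(f"accuracy before training: {accuracy:.2f}")
```

With random weights this value is only a baseline. Since roughly 1 - π/16, or about 80%, of the sampling square lies outside the unit circle, a classifier that always predicts 1 would already score close to 0.80.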
