FAN: Feature Adaptation Network for Surveillance Face Recognition and Normalization

Target

This paper studies face recognition and normalization in surveillance imagery.

- What is face normalization?

Face normalization is the general task of generating an identity-preserving face image while removing non-identity variation such as pose, expression, illumination and resolution. Most face normalization work has focused specifically on removing pose variation, e.g. using an affine transformation to keep the eyes horizontal, but a side face remains a side face after such alignment. This paper integrates disentangled feature learning to learn identity and non-identity features, which helps achieve face normalization for visualization and identity preservation for face recognition simultaneously.

- Data is important

However, we can't always collect paired data to train the model. This paper proposes a novel method that works with both paired and unpaired data, together with a random scale augmentation strategy.

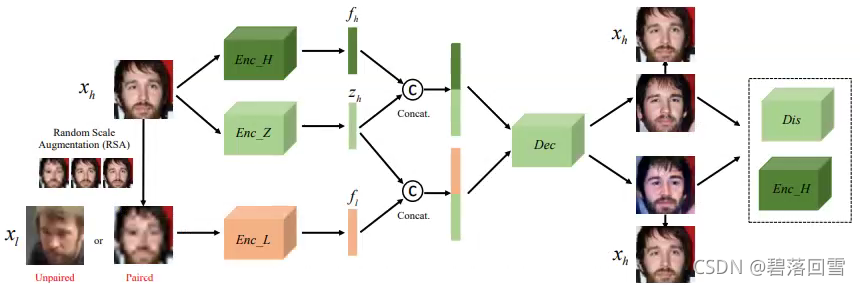

Feature Adaptation Network

FAN uses five CNN models. Enc_H is pre-trained and kept fixed, so four of them are trained within FAN itself.

- Enc_H, extracts identity-preserving features from high-resolution images (fixed)

- Enc_Z, extracts non-identity features

- Dec, generates a face image from features

- Enc_L, extracts identity-preserving features from low-resolution images

- Dis, the discriminator

The overview of FAN

FAN consists of two stages: disentangled feature learning and feature adaptation. Dark green marks the pre-trained, fixed model, and light green marks the models trained for feature disentanglement. Orange represents feature adaptation, where an LR identity encoder is learned with all other models (green) fixed.

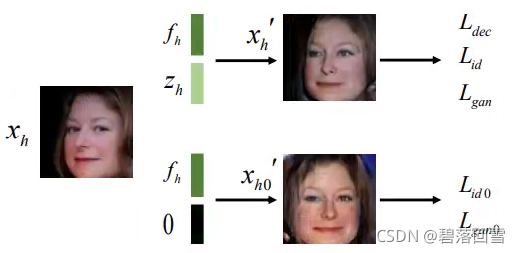
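The staged training described above can be written down as a small lookup table. This is only a sketch of my reading of these notes: the stage names are hypothetical labels, and Dis is left out because the notes do not say in which stage it is updated.

```python
# Which networks receive gradient updates in each stage.
# Stage names are hypothetical labels chosen for this sketch.
TRAINING_PLAN = {
    # Enc_H is trained first, then kept fixed for all later stages.
    "pretrain_enc_h": {"trainable": ["Enc_H"], "frozen": []},
    # Stage 1: disentangled feature learning.
    "disentangle": {"trainable": ["Enc_Z", "Dec"], "frozen": ["Enc_H"]},
    # Stage 2: feature adaptation, only the LR encoder is learned.
    "adapt": {"trainable": ["Enc_L"], "frozen": ["Enc_H", "Enc_Z", "Dec"]},
}


def is_trainable(stage, model):
    """Return True if `model` is updated during `stage`."""
    return model in TRAINING_PLAN[stage]["trainable"]


for stage, plan in TRAINING_PLAN.items():
    print(stage, "-> train:", plan["trainable"], "| frozen:", plan["frozen"])
```

In a framework such as PyTorch, the "frozen" entries would typically correspond to parameters with `requires_grad` set to `False`.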

Disentangled Feature Learning

Enc_H is trained with HR and LR images using the standard softmax loss and m-$L_2$ regularization [2], and it remains fixed for all later stages. The non-identity features $z_h = Enc_Z(x_h)$ are then learned through adversarial training and image reconstruction.

The disentangled features are combined to generate a face image $x'_h = Dec(f_h, z_h)$. As $f_h$ is discriminative for face recognition, the non-identity components will be discarded from $f_h$ in the first step.

In this framework, we hope that if we set $z_h = 0$, Dec can generate a normalized face image.

Paired and Unpaired Feature Adaptation

The aim is to learn a feature extractor that works well for input faces of various resolutions. So this part only trains Enc_L (another route to super-resolution).

The important thing in this part is the scale augmentation strategy, Random Scale Augmentation (RSA).

Given an HR input $x_h \in \mathbb{R}^{N_h \times N_h}$, down-sample the image to a random resolution to obtain $x_l \in \mathbb{R}^{K \times K}$, where $K \in [N_l, N_h]$ and $N_l$ is the lowest pixel resolution.

(Personally, I don't think this counts as a contribution.)
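A minimal sketch of RSA in plain Python, to make the sampling step concrete. Several things here are my assumptions and not taken from the paper: $K$ is drawn uniformly from the integers in $[N_l, N_h]$, the image is a nested list of pixel values, and nearest-neighbour sampling stands in for whatever interpolation the authors actually use.

```python
import random


def random_scale_downsample(image, n_low, rng=random):
    """Down-sample a square image (list of rows) to K x K.

    K is drawn uniformly from [n_low, N_h] (assumed distribution),
    where N_h is the side length of the input image.
    """
    n_high = len(image)
    k = rng.randint(n_low, n_high)  # inclusive on both ends
    # Nearest-neighbour sampling (assumed): output index i reads
    # source index floor(i * N_h / K).
    idx = [i * n_high // k for i in range(k)]
    return [[image[r][c] for c in idx] for r in idx]


# Toy 8x8 "image" whose pixel values encode their position.
x_h = [[r * 8 + c for c in range(8)] for r in range(8)]
x_l = random_scale_downsample(x_h, n_low=2, rng=random.Random(0))
print(len(x_l), "x", len(x_l[0]))
```

Calling the function once per training sample gives a different resolution each time, so the network sees the whole range between $N_l$ and $N_h$ during training.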

Loss

Non-identity Loss

$$L_z = \|FC(z_h) - y_z\|_2^2$$

where $y_z = \left[\frac{1}{N_D}, \ldots, \frac{1}{N_D}\right] \in \mathbb{R}^{N_D}$ and $N_D$ is the total number of identities in the training set.
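The non-identity loss is easy to check on toy numbers. The sketch below assumes that $FC(z_h)$ is a list of $N_D$ scores produced by an identity classifier on top of $z_h$; whether a softmax is applied first is not stated in these notes. The loss is zero exactly when the output is uniform, i.e. when $z_h$ gives no hint about the identity.

```python
def non_identity_loss(fc_output):
    """Squared L2 distance between FC(z_h) and the uniform vector y_z."""
    n_d = len(fc_output)      # N_D, number of identities
    target = 1.0 / n_d        # every entry of y_z
    return sum((p - target) ** 2 for p in fc_output)


# Uniform output: z_h reveals nothing about identity.
print(non_identity_loss([0.25, 0.25, 0.25, 0.25]))  # 0.0
# One-hot output: z_h fully reveals the identity.
print(non_identity_loss([1.0, 0.0, 0.0, 0.0]))      # 0.75
```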

Pixel Loss

$$L_{dec} = \|x'_h - x_h\|_2^2$$

Identity Loss

$$L_{id} = \|Enc_H(x'_h) - f_h\|_2^2$$

GAN-based discriminator loss

The discriminator uses the standard binary cross-entropy classification loss.
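For reference, a plain-Python sketch of a binary cross-entropy discriminator loss. I assume here that Dis outputs a probability in $[0, 1]$, that real HR images are labeled 1, and that images generated by Dec are labeled 0; the function names are hypothetical.

```python
import math


def bce(prob, label, eps=1e-12):
    """Binary cross-entropy for one prediction; prob is clipped for stability."""
    prob = min(max(prob, eps), 1.0 - eps)
    return -(label * math.log(prob) + (1 - label) * math.log(1.0 - prob))


def discriminator_loss(p_real, p_fake):
    """Dis should output 1 for a real image and 0 for a generated one."""
    return bce(p_real, 1) + bce(p_fake, 0)


# A confident, correct discriminator has a lower loss than a confused one.
print(discriminator_loss(0.9, 0.1))
print(discriminator_loss(0.5, 0.5))
```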

Low Resolution Feature Loss

$$L_{enc} = \|Enc_L(x_l) - Enc_H(x_h)\|_2^2$$

SR Loss

$$L_{enc\_dec} = \|Dec(f_l, Enc_Z(x_h)) - x_h\|_2^2$$
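To see how the two adaptation losses are wired together in the paired case, here is a toy sketch. The four networks are passed in as plain callables, and I read $f_l$ as $Enc_L(x_l)$. The stand-in "networks" at the bottom are made up purely for illustration (identity feature = mean pixel value, non-identity feature = deviation from the mean) and have nothing to do with the real models.

```python
def sq_l2(a, b):
    """Squared L2 distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def adaptation_losses(x_h, x_l, enc_h, enc_l, enc_z, dec):
    f_h = enc_h(x_h)   # target identity feature from the fixed HR encoder
    f_l = enc_l(x_l)   # identity feature from the LR encoder being trained
    z_h = enc_z(x_h)   # non-identity feature taken from the HR image
    l_enc = sq_l2(f_l, f_h)                 # low resolution feature loss
    l_enc_dec = sq_l2(dec(f_l, z_h), x_h)   # SR loss
    return l_enc, l_enc_dec


# Toy stand-ins (hypothetical): images are flat lists of pixel values.
mean = lambda x: [sum(x) / len(x)]
deviation = lambda x: [v - sum(x) / len(x) for v in x]
rebuild = lambda f, z: [f[0] + v for v in z]

x_h = [1.0, 2.0, 3.0, 4.0]
# LR input with the same "identity" (mean) as x_h: both losses vanish.
print(adaptation_losses(x_h, [1.5, 3.5], mean, mean, deviation, rebuild))  # (0.0, 0.0)
# LR input whose feature is off by 0.5: both losses become positive.
print(adaptation_losses(x_h, [1.0, 3.0], mean, mean, deviation, rebuild))  # (0.25, 1.0)
```

Only `enc_l` would receive gradients here, since the other three networks are fixed in this stage.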

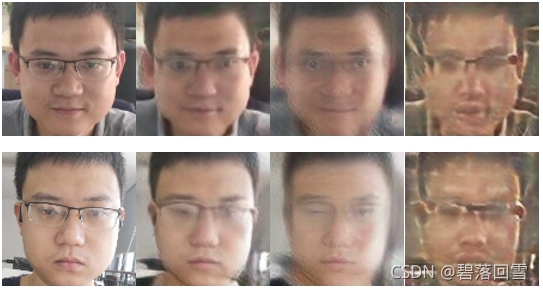

My Work about FAN

I don't have the m-$L_2$ loss available, nor enough GPUs for training, so I used Circle Loss for Enc_H. I then removed Dis and Enc_L and tried to learn only Enc_Z, to verify whether I could disentangle the non-identity feature. I did get some results.

But I can't normalize a side face!!! In addition, I found that if the dropout layer in Enc_H is retained, the decoder can hardly generate a face image.

[1] Yin, Xi, et al. “FAN: Feature adaptation network for surveillance face recognition and normalization.” Proceedings of the Asian Conference on Computer Vision. 2020.

[2] Yin, Xi, et al. “Feature transfer learning for face recognition with under-represented data.” Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2019.
