When I was first learning Spark and running small programs in spark-shell, every action (such as count, collect, or println) produced the following warning:

WARN TaskSchedulerImpl: Initial job has not accepted any resources; check your cluster UI to ensure that workers are registered and have sufficient resources

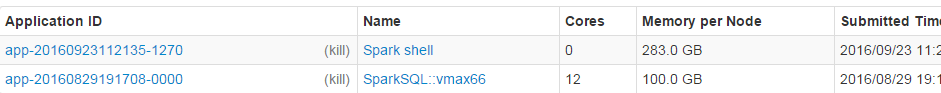

At the same time, the Spark UI (ip:18080 by default at our company) shows that the spark-shell application has been given 0 cores:

The cause is that spark-shell was started without any resources allocated to it, so it should be launched like this instead:

/home/mr/spark/bin/spark-shell --executor-memory 4G \
  --total-executor-cores 10

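In short: when Spark Shell reports that a job has not accepted any resources, the fix is to set resource parameters at launch, namely the executor memory, the total number of CPU cores, and the number of CPU cores per executor. For example: `--executor-memory 10g --total-executor-cores 10 --executor-cores 1`. When Spark Shell runs on YARN, the driver must run locally (client deploy mode). To avoid typing these parameters every time, you can edit the Spark Shell launch script so that they become the defaults.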
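As one way to get such defaults without editing Spark's own script, here is a minimal sketch of a shell function that wraps the launcher. It assumes the install path from the command above and the standalone-style flags used in this post. The function name `spark_shell_with_defaults` and the `SPARK_SHELL` and `DEFAULT_*` variables are invented for this example and are not part of Spark.

```shell
#!/bin/sh
# Hypothetical helper: launch spark-shell with default resource flags.
# The body runs in a subshell "( ... )" so its variables do not leak
# into the calling shell.
spark_shell_with_defaults() (
  # Install path taken from the command earlier in this post;
  # override it by setting SPARK_SHELL (a made-up variable name).
  spark_shell="${SPARK_SHELL:-/home/mr/spark/bin/spark-shell}"

  # DEFAULT_* are made-up variable names for this sketch, not Spark settings.
  executor_memory="${DEFAULT_EXECUTOR_MEMORY:-4G}"
  total_cores="${DEFAULT_TOTAL_EXECUTOR_CORES:-10}"

  # Any extra arguments from the caller are appended after the defaults.
  "$spark_shell" \
    --executor-memory "$executor_memory" \
    --total-executor-cores "$total_cores" \
    "$@"
)
```

With the function defined (for instance in a shell profile), running `spark_shell_with_defaults --executor-cores 1` would start the shell with the two defaults plus the extra flag. A wrapper is used here rather than a modified launch script because it leaves the Spark installation itself untouched.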

