import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

from sklearn.preprocessing import MinMaxScaler

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import LSTM, Dense, Activation

# Build the LSTM model
def create_model():
    model = Sequential()
    # Input data has shape (n_samples, timesteps, features).
    # Each LSTM layer has 256 hidden units; the second element of input_shape, 1, is the number of features.
    # Another LSTM layer follows, so return_sequences is set to True.
    model.add(LSTM(units=256, input_shape=(None, 1), return_sequences=True))
    model.add(LSTM(units=256))
    # A fully connected layer outputs the single predicted value, hence units=1.
    model.add(Dense(units=1))
    model.add(Activation('linear'))
    model.compile(loss='mse', optimizer='adam')
    return model
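To get a feel for the size of this network, the trainable weights can be counted by hand. The sketch below assumes the standard LSTM layout (four gates, each with an input kernel, a recurrent kernel and a bias); the helper names `lstm_param_count` and `dense_param_count` are illustrative and not part of Keras.

```python
def lstm_param_count(input_dim: int, units: int) -> int:
    """Trainable weights of one standard LSTM layer with bias.

    Each of the 4 gates owns an input kernel (input_dim x units),
    a recurrent kernel (units x units) and a bias vector (units).
    """
    return 4 * (input_dim * units + units * units + units)


def dense_param_count(input_dim: int, units: int) -> int:
    """Weights plus biases of a fully connected layer."""
    return input_dim * units + units


first_lstm = lstm_param_count(input_dim=1, units=256)     # 1 feature -> 256 units
second_lstm = lstm_param_count(input_dim=256, units=256)  # 256 units -> 256 units
head = dense_param_count(input_dim=256, units=1)          # 256 units -> 1 value

total = first_lstm + second_lstm + head
print(first_lstm, second_lstm, head, total)
```

Under these assumptions the two LSTM layers dominate the count, which is worth keeping in mind given that the series used here has only 1000 rows.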

Load the data

df=pd.read_excel(r'C:\Users\张逊\Documents\WeChat Files\wxid_a8oh18cik1m211\FileStorage\File\2021-05\合肥历史天气(1).xlsx')

---------------------------------------------------------------------------

FileNotFoundError Traceback (most recent call last)

<ipython-input-2-6b583db91cf3> in <module>

----> 1 df=pd.read_excel(r'C:\Users\张逊\Documents\WeChat Files\wxid_a8oh18cik1m211\FileStorage\File\2021-05\合肥历史天气(1).xlsx')

C:\Anaconda\lib\site-packages\pandas\util\_decorators.py in wrapper(*args, **kwargs)

297 )

298 warnings.warn(msg, FutureWarning, stacklevel=stacklevel)

--> 299 return func(*args, **kwargs)

300

301 return wrapper

C:\Anaconda\lib\site-packages\pandas\io\excel\_base.py in read_excel(io, sheet_name, header, names, index_col, usecols, squeeze, dtype, engine, converters, true_values, false_values, skiprows, nrows, na_values, keep_default_na, na_filter, verbose, parse_dates, date_parser, thousands, comment, skipfooter, convert_float, mangle_dupe_cols, storage_options)

334 if not isinstance(io, ExcelFile):

335 should_close = True

--> 336 io = ExcelFile(io, storage_options=storage_options, engine=engine)

337 elif engine and engine != io.engine:

338 raise ValueError(

C:\Anaconda\lib\site-packages\pandas\io\excel\_base.py in __init__(self, path_or_buffer, engine, storage_options)

1079 else:

1080 ext = inspect_excel_format(

-> 1081 content=path_or_buffer, storage_options=storage_options

1082 )

1083

C:\Anaconda\lib\site-packages\pandas\io\excel\_base.py in inspect_excel_format(path, content, storage_options)

957

958 with get_handle(

--> 959 content_or_path, "rb", storage_options=storage_options, is_text=False

960 ) as handle:

961 stream = handle.handle

C:\Anaconda\lib\site-packages\pandas\io\common.py in get_handle(path_or_buf, mode, encoding, compression, memory_map, is_text, errors, storage_options)

654 else:

655 # Binary mode

--> 656 handle = open(handle, ioargs.mode)

657 handles.append(handle)

658

FileNotFoundError: [Errno 2] No such file or directory: 'C:\\Users\\张逊\\Documents\\WeChat Files\\wxid_a8oh18cik1m211\\FileStorage\\File\\2021-05\\合肥历史天气(1).xlsx'
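The traceback above is raised because the hard-coded absolute path does not exist on the machine running the cell. One way to fail earlier and with a clearer message is to check a few candidate locations before calling `pd.read_excel`. This is only a sketch: the helper `find_existing` and the candidate paths are hypothetical and should be replaced with the real location of the workbook.

```python
from pathlib import Path


def find_existing(candidates):
    """Return the first candidate path that is an existing file, else None."""
    for candidate in candidates:
        path = Path(candidate).expanduser()
        if path.is_file():
            return path
    return None


# Hypothetical locations; replace them with wherever the workbook really is.
candidates = [
    Path("data") / "hefei_weather.xlsx",
    Path.home() / "Documents" / "hefei_weather.xlsx",
]

data_path = find_existing(candidates)
if data_path is None:
    print("Workbook not found; checked:", [str(p) for p in candidates])
else:
    # At this point the file exists, e.g. df = pd.read_excel(data_path)
    print("Would load:", data_path)
```

Keeping the data file next to the notebook and using a relative path also makes the notebook easier to rerun on another machine.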

# Keep the first 1000 rows

df=df[['wind/m/sec']]

df=df.iloc[0:1000,:]

df

| | wind/m/sec |
|---|---|
| 0 | 2.67 |
| 1 | 2.73 |
| 2 | 2.26 |
| 3 | 1.44 |
| 4 | 2.17 |
| ... | ... |
| 995 | 4.12 |
| 996 | 5.41 |
| 997 | 4.43 |
| 998 | 3.43 |
| 999 | 3.30 |

1000 rows × 1 columns


Normalize the data and build the training samples

scaler_minmax = MinMaxScaler()

data = scaler_minmax.fit_transform(df)

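infer_seq_length = 10  # length of the history window used for inference

d = []

for i in range(data.shape[0] - infer_seq_length):
    # each sample holds infer_seq_length input steps followed by one target step
    d.append(data[i:i + infer_seq_length + 1].tolist())

d = np.array(d)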

d

array([[[0.35871404],

[0.36886633],

[0.2893401 ],

...,

[0.44500846],

[0.34179357],

[0.19966159]],

[[0.36886633],

[0.2893401 ],

[0.15059222],

...,

[0.34179357],

[0.19966159],

[0.22165821]],

[[0.2893401 ],

[0.15059222],

[0.27411168],

...,

[0.19966159],

[0.22165821],

[0.37563452]],

...,

[[0.08121827],

[0.28257191],

[0.35025381],

...,

[0.60406091],

[0.82233503],

[0.65651438]],

[[0.28257191],

[0.35025381],

[0.36209814],

...,

[0.82233503],

[0.65651438],

[0.48730964]],

[[0.35025381],

[0.36209814],

[0.321489 ],

...,

[0.65651438],

[0.48730964],

[0.46531303]]])
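The windowing loop above can also be written without a Python loop. The sketch below is an alternative, not the method used in this post: it relies on `numpy.lib.stride_tricks.sliding_window_view` (available in NumPy 1.20 and later), and the helper name `make_windows` is made up for illustration. It builds windows of `history + 1` steps, so the last step of each window can serve as the target.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def make_windows(series: np.ndarray, history: int) -> np.ndarray:
    """Return overlapping windows of shape (n_windows, history + 1, n_features).

    `series` is expected to have shape (n_rows, n_features).
    """
    # sliding_window_view puts the window axis last:
    # (n_windows, n_features, history + 1)
    windows = sliding_window_view(series, window_shape=history + 1, axis=0)
    # Move the window axis to the middle and copy to get a writable array.
    return np.swapaxes(windows, 1, 2).copy()


# Toy series standing in for the scaled wind speeds.
toy = np.arange(15, dtype=float).reshape(-1, 1)
vectorized = make_windows(toy, history=3)

# Same construction as the loop in the post, for comparison.
looped = np.array([toy[i:i + 3 + 1] for i in range(toy.shape[0] - 3)])

print(vectorized.shape, np.array_equal(vectorized, looped))
print(make_windows(np.zeros((1000, 1)), history=10).shape)
```

For 1000 rows and a history of 10 steps this gives 990 windows of 11 steps each, matching the shape of `d`.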

Split off the training set

split_rate = 0.9

X_train, y_train = d[:int(d.shape[0]*split_rate),:-1], d[:int(d.shape[0]*split_rate),-1]

y_train

array([[0.19966159],

[0.22165821],

[0.37563452],

[0.50761421],

[0.49746193],

[0.22673435],

[0.27241963],

[0.30964467],

[0.38240271],

[0.21827411],

[0.13705584],

[0.34686971],

[0.23350254],

[0.54145516],

[0.55837563],

[0.26903553],

[0.46869712],

[0.38747885],

[0.6142132 ],

[0.84263959],

[0.49069374],

[0.12521151],

[0.21658206],

[0.26565144],

[0.14890017],

[0.22335025],

[0.25380711],

[0.27411168],

[0.14213198],

[0.31133672],

[0.47038917],

[0.43654822],

[0.32656514],

[0.54314721],

[0.2927242 ],

[0.50423012],

[0.69204738],

[0.43824027],

[0.642978 ],

[0.1928934 ],

[0.6142132 ],

[0.38747885],

[0.61759729],

[0.75972927],

[0.52622673],

[0.20812183],

[0.23857868],

[0.43485618],

[0.34010152],

[0.31133672],

[0.17089679],

[0.13536379],

[0.1962775 ],

[0.39593909],

[0.24534687],

[0.1962775 ],

[0.29441624],

[0.20304569],

[0.48730964],

[0.58714044],

[0.45516074],

[0.40778342],

[0.31302876],

[0.36379019],

[0.39255499],

[0.23011844],

[0.48392555],

[0.34686971],

[0.23350254],

[0.37732657],

[0.16582064],

[0.14551607],

[0.68020305],

[0.64467005],

[0.60406091],

[0.3248731 ],

[0.41116751],

[0.28426396],

[0.01015228],

[0.10998308],

[0.14213198],

[0.46192893],

[0.74788494],

[0.3891709 ],

[0.22165821],

[0.43485618],

[0.59052453],

[0.32825719],

[0.27241963],

[0.38071066],

[0.60067682],

[0.7394247 ],

[0.46531303],

[0.33333333],

[0.0676819 ],

[0.26734349],

[0.43993232],

[0.40101523],

[0.25211506],

[0.42808799],

[0.70558376],

[0.3536379 ],

[0.45516074],

[0.13536379],

[0.38240271],

[0.19796954],

[0.36040609],

[0.2605753 ],

[0.84602369],

[0.39593909],

[0.46023689],

[0.39255499],

[0.43316413],

[0.41116751],

[0.68866328],

[0.50423012],

[0.19796954],

[0.31810491],

[0.35532995],

[0.1641286 ],

[0.24365482],

[0.20981387],

[0.29441624],

[0.43147208],

[0.59052453],

[0.80541455],

[0.63113367],

[0.49746193],

[0.34517766],

[0.33333333],

[0.14043993],

[0.40778342],

[0.20304569],

[0.14382403],

[0.33333333],

[0.34517766],

[0.2893401 ],

[0.47546531],

[0.39086294],

[0.47715736],

[0.16582064],

[0.07952623],

[0.1607445 ],

[0.27411168],

[0.25380711],

[0.3536379 ],

[0.34686971],

[0.5177665 ],

[0.73265651],

[0.46700508],

[0.46362098],

[0.11844332],

[0.09306261],

[0.25380711],

[0.24196277],

[0.31133672],

[0.49576988],

[0.36209814],

[0.37901861],

[0.29610829],

[0.40101523],

[0.06598985],

[0.25211506],

[0.52284264],

[0.59052453],

[0.37732657],

[0.24703892],

[0.10490694],

[0.07106599],

[0.35025381],

[0.43147208],

[0.48392555],

[0.33333333],

[0.09813875],

[0.36717428],

[0.39424704],

[0.54483926],

[0.68020305],

[0.37394247],

[0.30795262],

[0.09475465],

[0.1928934 ],

[0.321489 ],

[0.46192893],

[0.54822335],

[0.52622673],

[0.54145516],

[0.63282572],

[0.4213198 ],

[0.32994924],

[0.08629442],

[0.2284264 ],

[0.2571912 ],

[0.39593909],

[0.357022 ],

[0.11844332],

[0.15905245],

[0.02368866],

[0.08121827],

[0.39763113],

[0.48392555],

[0.49238579],

[0.43654822],

[0.19796954],

[0.08967851],

[0.37394247],

[0.47038917],

[0.27749577],

[0.14382403],

[0.19458545],

[0.19458545],

[0.09137056],

[0.27411168],

[0.34179357],

[0.31810491],

[0.1928934 ],

[0.12351946],

[0.21996616],

[0.44670051],

[0.37732657],

[0.43485618],

[0.70219966],

[0.89170897],

[0.30626058],

[0.11675127],

[0.31810491],

[0.2605753 ],

[0.24196277],

[0.40101523],

[0.43993232],

[0.16920474],

[0.34348562],

[0.83756345],

[0.97292724],

[0.40947547],

[0.24196277],

[0.30626058],

[0.36379019],

[0.36040609],

[0.34179357],

[0.65651438],

[0.75126904],

[0.37055838],

[0.49915398],

[0.33333333],

[0.43993232],

[0.30964467],

[0.31472081],

[0.18781726],

[0.4213198 ],

[0.23857868],

[0.17935702],

[0.27411168],

[0.23519459],

[0.25211506],

[0.43147208],

[0.50592217],

[0.34179357],

[0.29103215],

[0.17935702],

[0.21827411],

[0.24534687],

[0.21319797],

[0.29103215],

[0.29610829],

[0.11167513],

[0.10998308],

[0.12690355],

[0.39763113],

[0.31641286],

[0.3248731 ],

[0.59052453],

[0.32656514],

[0.12690355],

[0.07783418],

[0.15566836],

[0.11505922],

[0.0676819 ],

[0.05414552],

[0.11167513],

[0.35871404],

[0.29103215],

[0.24534687],

[0.35194585],

[0.26226734],

[0.27918782],

[0.56175973],

[0.41285956],

[0.10490694],

[0.12521151],

[0.07614213],

[0.14213198],

[0.25549915],

[0.16582064],

[0.10152284],

[0.32825719],

[0.07275804],

[0.18950931],

[0.07783418],

[0.08121827],

[0.49576988],

[0.63959391],

[0.91032149],

[0.09306261],

[0.21319797],

[0.15228426],

[0.14043993],

[0.10998308],

[0.2250423 ],

[0.32994924],

[0.11505922],

[0.19458545],

[0.321489 ],

[0.82064298],

[0.24027073],

[0.06598985],

[0.27918782],

[0.11675127],

[0.18950931],

[0.14890017],

[0.14043993],

[0.27580372],

[0.40270728],

[1. ],

[0.59898477],

[0.34010152],

[0.08121827],

[0.18274112],

[0.24365482],

[0.34856176],

[0.1928934 ],

[0.13367174],

[0.18612521],

[0.50930626],

[0.59729272],

[0.29780034],

[0.12013536],

[0.25549915],

[0.31302876],

[0.47038917],

[0.40270728],

[0.15566836],

[0.17089679],

[0.28087986],

[0.35532995],

[0.35025381],

[0.17258883],

[0.20642978],

[0.51945854],

[0.34179357],

[0.39424704],

[0.10998308],

[0.46362098],

[0.14382403],

[0.53807107],

[0.49069374],

[0.23181049],

[0.13536379],

[0.1641286 ],

[0.14382403],

[0.20135364],

[0.357022 ],

[0.32318105],

[0.33164129],

[0.23350254],

[0.24365482],

[0.38071066],

[0.30287648],

[0.15736041],

[0.23519459],

[0.21827411],

[0.48392555],

[0.69543147],

[0.27580372],

[0.11336717],

[0.30456853],

[0.47546531],

[0.43147208],

[0.20981387],

[0.14551607],

[0.3536379 ],

[0.4500846 ],

[0.52961083],

[0.42808799],

[0.47208122],

[0.28087986],

[0.19458545],

[0.19796954],

[0.11505922],

[0.17258883],

[0.26226734],

[0.10829103],

[0.09306261],

[0.44331641],

[0.32825719],

[0.18443316],

[0.30118443],

[0.84771574],

[0.59390863],

[0.3857868 ],

[0.30118443],

[0.25888325],

[0.36886633],

[0.24534687],

[0.48054146],

[0.54145516],

[0.32656514],

[0.50084602],

[0.36209814],

[0.60575296],

[0.59729272],

[0.33333333],

[0.24534687],

[0.85448393],

[0.20812183],

[0.03553299],

[0.33502538],

[0.19966159],

[0.12690355],

[0.48730964],

[0.59898477],

[0.63790186],

[0.33671743],

[0.62605753],

[0.3857868 ],

[0.31472081],

[0.33671743],

[0.68697124],

[0.33333333],

[0.10659898],

[0.14720812],

[0.33502538],

[0.4534687 ],

[0.5786802 ],

[0.84094755],

[0.33502538],

[0.23181049],

[0.40609137],

[0.4500846 ],

[0.65989848],

[0.47715736],

[0.33502538],

[0.27241963],

[0.37563452],

[0.28257191],

[0.26395939],

[0.25211506],

[0.24365482],

[0.20642978],

[0.17935702],

[0.34348562],

[0.27580372],

[0.30287648],

[0.29610829],

[0.30964467],

[0.4822335 ],

[0.48730964],

[0.2927242 ],

[0.17597293],

[0.1641286 ],

[0.52453469],

[0.678511 ],

[0.56175973],

[0.22335025],

[0.14720812],

[0.14043993],

[0.11336717],

[0.22673435],

[0.24703892],

[0.39932318],

[0.39932318],

[0.13705584],

[0.29441624],

[0.26395939],

[0.29441624],

[0.39255499],

[0.08967851],

[0.27918782],

[0.55837563],

[0.54483926],

[0.53299492],

[0.25380711],

[0.30118443],

[0.23519459],

[0.20642978],

[0.12013536],

[0.11844332],

[0.27749577],

[0.15397631],

[0.48900169],

[0.30964467],

[0.20642978],

[0.38071066],

[0.2284264 ],

[0.26226734],

[0.30456853],

[0.26395939],

[0.25549915],

[0.37055838],

[0.642978 ],

[0.48730964],

[0.28764805],

[0.21658206],

[0.48392555],

[0.25211506],

[0.29441624],

[0.40101523],

[0.34686971],

[0.11336717],

[0.11336717],

[0.17258883],

[0.11675127],

[0.24703892],

[0.40439932],

[0.25888325],

[0.60406091],

[0.70219966],

[0.36040609],

[0.36040609],

[0.36548223],

[0.30795262],

[0.17428088],

[0.15736041],

[0.17935702],

[0.07106599],

[0.19458545],

[0.21658206],

[0.13536379],

[0.09475465],

[0.12013536],

[0.08967851],

[0.18443316],

[0.38409475],

[0.53130288],

[0.4856176 ],

[0.37901861],

[0.31810491],

[0.15059222],

[0.36886633],

[0.21996616],

[0.30964467],

[0.65651438],

[0.27580372],

[0.28595601],

[0.31641286],

[0.25380711],

[0.2284264 ],

[0.2893401 ],

[0.39932318],

[0.25211506],

[0.31641286],

[0.18612521],

[0.16243655],

[0.14551607],

[0.28257191],

[0.36717428],

[0.4179357 ],

[0.51269036],

[0.29780034],

[0.29780034],

[0.28087986],

[0.10152284],

[0.13705584],

[0.17428088],

[0.23350254],

[0.1607445 ],

[0.15566836],

[0.26226734],

[0.08798646],

[0.10829103],

[0.12013536],

[0.2927242 ],

[0.43824027],

[0.52453469],

[0.41116751],

[0.13536379],

[0.04568528],

[0.14551607],

[0.17089679],

[0.18781726],

[0.07614213],

[0.19796954],

[0.38240271],

[0.26395939],

[0.26565144],

[0.25549915],

[0.23011844],

[0.20981387],

[0.26226734],

[0.2250423 ],

[0.17597293],

[0.0998308 ],

[0.2893401 ],

[0.31979695],

[0.25549915],

[0. ],

[0.14213198],

[0.16751269],

[0.23181049],

[0.09306261],

[0.18950931],

[0.34856176],

[0.26903553],

[0.36548223],

[0.36886633],

[0.29441624],

[0.21150592],

[0.14213198],

[0.3891709 ],

[0.33502538],

[0.27918782],

[0.17089679],

[0.31133672],

[0.39763113],

[0.36717428],

[0.14551607],

[0.41455161],

[0.27241963],

[0.22335025],

[0.21319797],

[0.35194585],

[0.27241963],

[0.25211506],

[0.39424704],

[0.40270728],

[0.22673435],

[0.40270728],

[0.2927242 ],

[0.29780034],

[0.18781726],

[0.61928934],

[0.34010152],

[0.11505922],

[0.15566836],

[0.12521151],

[0.30456853],

[0.24196277],

[0.16582064],

[0.07952623],

[0.10152284],

[0.12690355],

[0.46531303],

[0.38240271],

[0.24534687],

[0.15566836],

[0.2927242 ],

[0.34856176],

[0.14551607],

[0.16243655],

[0.21319797],

[0.17766497],

[0.33333333],

[0.35194585],

[0.62436548],

[0.46023689],

[0.14043993],

[0.10152284],

[0.1607445 ],

[0.21319797],

[0.20642978],

[0.18104907],

[0.3857868 ],

[0.46362098],

[0.52284264],

[0.30118443],

[0.54822335],

[0.15736041],

[0.23688663],

[0.30795262],

[0.1607445 ],

[0.14720812],

[0.23181049],

[0.22165821],

[0.18443316],

[0.04737733],

[0.08629442],

[0.24196277],

[0.49576988],

[0.2284264 ],

[0.11675127],

[0.12013536],

[0.32825719],

[0.69543147],

[0.41285956],

[0.18274112],

[0.15397631],

[0.10659898],

[0.12521151],

[0.15736041],

[0.35194585],

[0.20135364],

[0.14043993],

[0.30964467],

[0.23857868],

[0.13028765],

[0.46362098],

[0.3248731 ],

[0.43824027],

[0.357022 ],

[0.34517766],

[0.23350254],

[0.20135364],

[0.2284264 ],

[0.17089679],

[0.12690355],

[0.53130288],

[0.25042301],

[0.37394247],

[0.1607445 ],

[0.26226734],

[0.2284264 ],

[0.50761421],

[0.78172589],

[0.58714044],

[0.4534687 ],

[0.60067682],

[0.4500846 ],

[0.16243655],

[0.14551607],

[0.09306261],

[0.20473773],

[0.10829103],

[0.12013536],

[0.30964467],

[0.23350254],

[0.47715736],

[0.19458545],

[0.17766497],

[0.23181049],

[0.24365482],

[0.15228426],

[0.10998308],

[0.60406091],

[0.25042301],

[0.11505922],

[0.30626058],

[0.16243655],

[0.2284264 ],

[0.29780034],

[0.0642978 ],

[0.357022 ],

[0.09137056],

[0.0998308 ],

[0.11167513],

[0.19966159],

[0.1319797 ],

[0.17428088],

[0.32994924],

[0.38409475],

[0.65989848],

[0.54314721],

[0.30287648],

[0.18104907],

[0.29441624],

[0.1928934 ],

[0.15059222],

[0.29949239],

[0.39086294],

[0.34348562],

[0.22335025],

[0.19966159],

[0.18781726],

[0.33164129],

[0.2250423 ],

[0.24703892],

[0.36379019],

[0.47038917],

[0.23857868],

[0.30118443],

[0.44670051],

[0.16243655],

[0.11167513],

[0.21658206],

[0.63451777],

[0.17428088],

[0.23688663],

[0.26565144],

[0.46023689],

[0.52791878],

[0.30626058],

[0.73604061],

[0.7072758 ],

[0.61252115],

[0.53637902],

[0.46869712],

[0.21996616],

[0.19966159],

[0.0998308 ],

[0.0676819 ],

[0.24873096],

[0.13874788],

[0.31979695],

[0.30456853],

[0.29780034],

[0.69543147],

[0.79357022],

[0.30964467],

[0.09475465],

[0.41624365],

[0.4534687 ],

[0.45516074],

[0.49238579],

[0.24873096],

[0.11167513],

[0.45854484],

[0.33502538],

[0.17428088],

[0.1319797 ],

[0.12351946],

[0.39932318],

[0.15397631],

[0.10659898],

[0.26903553],

[0.27411168],

[0.10998308],

[0.34010152],

[0.62944162],

[0.58375635],

[0.39086294],

[0.52453469],

[0.38409475],

[0.47038917],

[0.2250423 ],

[0.14213198],

[0.29103215],

[0.46700508],

[0.43824027],

[0.77664975],

[0.40947547],

[0.27749577],

[0.45516074],

[0.5465313 ],

[0.15397631],

[0.19796954],

[0.357022 ],

[0.36548223],

[0.14720812],

[0.24534687],

[0.24703892],

[0.47884941],

[0.11844332],

[0.59052453],

[0.76649746],

[0.5465313 ],

[0.54822335],

[0.66666667],

[0.52284264],

[0.46700508],

[0.357022 ],

[0.57360406],

[0.43993232],

[0.53468697],

[0.20981387],

[0.20642978],

[0.3857868 ],

[0.08629442],

[0.39424704],

[0.5143824 ],

[0.0998308 ],

[0.1285956 ],

[0.30795262],

[0.27411168],

[0.21658206],

[0.33840948],

[0.08629442],

[0.16582064],

[0.34348562],

[0.08629442],

[0.26734349],

[0.30626058],

[0.30626058],

[0.2927242 ],

[0.50930626],

[0.43147208],

[0.32656514],

[0.19966159],

[0.37901861],

[0.54145516],

[0.19120135],

[0.5786802 ],

[0.63620981],

[0.2250423 ],

[0.50592217],

[0.321489 ],

[0.28087986],

[0.64974619],

[0.52791878],

[0.47377327],

[0.2893401 ],

[0.3248731 ],

[0.43147208]])
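The slicing used for the split is easier to follow with the shapes written out. The sketch below uses a zero-filled placeholder with the same shape as `d` (assuming the 1000-row series and the window length of 10 used above); the `*_demo` names are illustrative and the values themselves are irrelevant.

```python
import numpy as np

n_rows, history, split_rate = 1000, 10, 0.9

# Placeholder with the same shape as `d`: (n_windows, history + 1, n_features).
d_demo = np.zeros((n_rows - history, history + 1, 1))

n_train = int(d_demo.shape[0] * split_rate)

# The first `history` steps of each window are the inputs,
# the final step is the value to predict.
X_train_demo = d_demo[:n_train, :-1]
y_train_demo = d_demo[:n_train, -1]
X_test_demo = d_demo[n_train:, :-1]
y_test_demo = d_demo[n_train:, -1]

print(X_train_demo.shape, y_train_demo.shape)
print(X_test_demo.shape, y_test_demo.shape)
```

Because the split is taken by position rather than at random, the held-out windows are the most recent part of the series, which is usually what a forecasting evaluation calls for.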

model =create_model()

model.fit(X_train, y_train, batch_size=20,epochs=100,validation_split=0.1)

Epoch 1/100

41/41 [==============================] - 1s 28ms/step - loss: 0.0356 - val_loss: 0.0273

Epoch 2/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0285 - val_loss: 0.0287

Epoch 3/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0286 - val_loss: 0.0268

Epoch 4/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0266 - val_loss: 0.0267

Epoch 5/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0252 - val_loss: 0.0266

Epoch 6/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0239 - val_loss: 0.0276

Epoch 7/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0235 - val_loss: 0.0299

Epoch 8/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0251 - val_loss: 0.0291

Epoch 9/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0235 - val_loss: 0.0278

Epoch 10/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0233 - val_loss: 0.0273

Epoch 11/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0234 - val_loss: 0.0279

Epoch 12/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0236 - val_loss: 0.0274

Epoch 13/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0234 - val_loss: 0.0277

Epoch 14/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0240 - val_loss: 0.0273

Epoch 15/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0251 - val_loss: 0.0312

Epoch 16/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0242 - val_loss: 0.0282

Epoch 17/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0235 - val_loss: 0.0321

Epoch 18/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0238 - val_loss: 0.0281

Epoch 19/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0234 - val_loss: 0.0286

Epoch 20/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0233 - val_loss: 0.0273

Epoch 21/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0233 - val_loss: 0.0302

Epoch 22/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0232 - val_loss: 0.0284

Epoch 23/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0235 - val_loss: 0.0288

Epoch 24/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0235 - val_loss: 0.0280

Epoch 25/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0236 - val_loss: 0.0273

Epoch 26/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0232 - val_loss: 0.0297

Epoch 27/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0238 - val_loss: 0.0276

Epoch 28/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0238 - val_loss: 0.0286

Epoch 29/100

41/41 [==============================] - 1s 17ms/step - loss: 0.0234 - val_loss: 0.0287

Epoch 30/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0232 - val_loss: 0.0272

Epoch 31/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0240 - val_loss: 0.0309

Epoch 32/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0233 - val_loss: 0.0285

Epoch 33/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0231 - val_loss: 0.0290

Epoch 34/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0235 - val_loss: 0.0284

Epoch 35/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0232 - val_loss: 0.0286

Epoch 36/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0233 - val_loss: 0.0289

Epoch 37/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0233 - val_loss: 0.0279

Epoch 38/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0234 - val_loss: 0.0276

Epoch 39/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0235 - val_loss: 0.0286

Epoch 40/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0231 - val_loss: 0.0277

Epoch 41/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0231 - val_loss: 0.0274

Epoch 42/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0230 - val_loss: 0.0281

Epoch 43/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0232 - val_loss: 0.0285

Epoch 44/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0234 - val_loss: 0.0303

Epoch 45/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0234 - val_loss: 0.0308

Epoch 46/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0236 - val_loss: 0.0283

Epoch 47/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0234 - val_loss: 0.0278

Epoch 48/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0229 - val_loss: 0.0280

Epoch 49/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0232 - val_loss: 0.0294

Epoch 50/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0230 - val_loss: 0.0328

Epoch 51/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0239 - val_loss: 0.0282

Epoch 52/100

41/41 [==============================] - 1s 18ms/step - loss: 0.0230 - val_loss: 0.0287

Epoch 53/100

41/41 [==============================] - 1s 20ms/step - loss: 0.0234 - val_loss: 0.0274

Epoch 54/100

41/41 [==============================] - 1s 22ms/step - loss: 0.0231 - val_loss: 0.0287

Epoch 55/100

41/41 [==============================] - 1s 22ms/step - loss: 0.0228 - val_loss: 0.0285

Epoch 56/100

41/41 [==============================] - 1s 22ms/step - loss: 0.0230 - val_loss: 0.0286

Epoch 57/100

41/41 [==============================] - 1s 22ms/step - loss: 0.0227 - val_loss: 0.0296

Epoch 58/100

41/41 [==============================] - 1s 21ms/step - loss: 0.0230 - val_loss: 0.0284

Epoch 59/100

41/41 [==============================] - 1s 22ms/step - loss: 0.0229 - val_loss: 0.0284

Epoch 60/100

41/41 [==============================] - 1s 21ms/step - loss: 0.0234 - val_loss: 0.0314

Epoch 61/100

41/41 [==============================] - 1s 21ms/step - loss: 0.0232 - val_loss: 0.0292

Epoch 62/100

41/41 [==============================] - 1s 21ms/step - loss: 0.0229 - val_loss: 0.0294

Epoch 63/100

41/41 [==============================] - 1s 21ms/step - loss: 0.0229 - val_loss: 0.0301

Epoch 64/100

41/41 [==============================] - 1s 22ms/step - loss: 0.0230 - val_loss: 0.0299

Epoch 65/100

41/41 [==============================] - 1s 21ms/step - loss: 0.0229 - val_loss: 0.0299

Epoch 66/100

41/41 [==============================] - 1s 21ms/step - loss: 0.0233 - val_loss: 0.0285

Epoch 67/100

41/41 [==============================] - 1s 21ms/step - loss: 0.0228 - val_loss: 0.0291

Epoch 68/100

41/41 [==============================] - 1s 20ms/step - loss: 0.0230 - val_loss: 0.0302

Epoch 69/100

41/41 [==============================] - 1s 20ms/step - loss: 0.0228 - val_loss: 0.0314

Epoch 70/100

41/41 [==============================] - 1s 20ms/step - loss: 0.0232 - val_loss: 0.0296

Epoch 71/100

41/41 [==============================] - 1s 21ms/step - loss: 0.0229 - val_loss: 0.0313

Epoch 72/100

41/41 [==============================] - 1s 21ms/step - loss: 0.0228 - val_loss: 0.0292

Epoch 73/100

41/41 [==============================] - 1s 20ms/step - loss: 0.0229 - val_loss: 0.0300

Epoch 74/100

41/41 [==============================] - 1s 21ms/step - loss: 0.0230 - val_loss: 0.0293

Epoch 75/100

41/41 [==============================] - 1s 20ms/step - loss: 0.0228 - val_loss: 0.0302

Epoch 76/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0231 - val_loss: 0.0293

Epoch 77/100

41/41 [==============================] - 1s 20ms/step - loss: 0.0230 - val_loss: 0.0316

Epoch 78/100

41/41 [==============================] - 1s 20ms/step - loss: 0.0229 - val_loss: 0.0303

Epoch 79/100

41/41 [==============================] - 1s 20ms/step - loss: 0.0233 - val_loss: 0.0295

Epoch 80/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0229 - val_loss: 0.0297

Epoch 81/100

41/41 [==============================] - 1s 20ms/step - loss: 0.0231 - val_loss: 0.0299

Epoch 82/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0233 - val_loss: 0.0300

Epoch 83/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0229 - val_loss: 0.0317

Epoch 84/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0229 - val_loss: 0.0304

Epoch 85/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0230 - val_loss: 0.0299

Epoch 86/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0230 - val_loss: 0.0293

Epoch 87/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0239 - val_loss: 0.0300

Epoch 88/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0234 - val_loss: 0.0295

Epoch 89/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0227 - val_loss: 0.0298

Epoch 90/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0227 - val_loss: 0.0308

Epoch 91/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0228 - val_loss: 0.0306

Epoch 92/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0227 - val_loss: 0.0302

Epoch 93/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0226 - val_loss: 0.0296

Epoch 94/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0233 - val_loss: 0.0290

Epoch 95/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0228 - val_loss: 0.0310

Epoch 96/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0227 - val_loss: 0.0302

Epoch 97/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0226 - val_loss: 0.0291

Epoch 98/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0228 - val_loss: 0.0327

Epoch 99/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0228 - val_loss: 0.0308

Epoch 100/100

41/41 [==============================] - 1s 19ms/step - loss: 0.0226 - val_loss: 0.0304

<tensorflow.python.keras.callbacks.History at 0x25ed1caa508>
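In the log above, `val_loss` reaches its lowest value (0.0266) at epoch 5 and mostly drifts upward afterwards while the training loss keeps falling slowly, so most of the 100 epochs do not improve the validation score. The sketch below replays a patience-based stopping rule on the first ten `val_loss` values copied from the log. The function `stop_epoch` is a hypothetical illustration of the idea; in Keras the same behaviour is available through the `tf.keras.callbacks.EarlyStopping` callback (`monitor`, `patience`, `restore_best_weights`).

```python
def stop_epoch(val_losses, patience):
    """Replay a patience-based early-stopping rule.

    Returns (epoch at which training would stop, epoch with the best loss),
    both counted from 1.
    """
    best_loss = float("inf")
    best_epoch = 0
    waited = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                return epoch, best_epoch
    return len(val_losses), best_epoch


# val_loss for epochs 1 to 10, copied from the training log.
val_losses = [0.0273, 0.0287, 0.0268, 0.0267, 0.0266,
              0.0276, 0.0299, 0.0291, 0.0278, 0.0273]

stopped_at, best_at = stop_epoch(val_losses, patience=3)
print(stopped_at, best_at)
```

With a patience of 3 the rule would stop after epoch 8 and keep epoch 5 as the best one; a larger patience trades extra training time for more tolerance of noisy validation scores.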

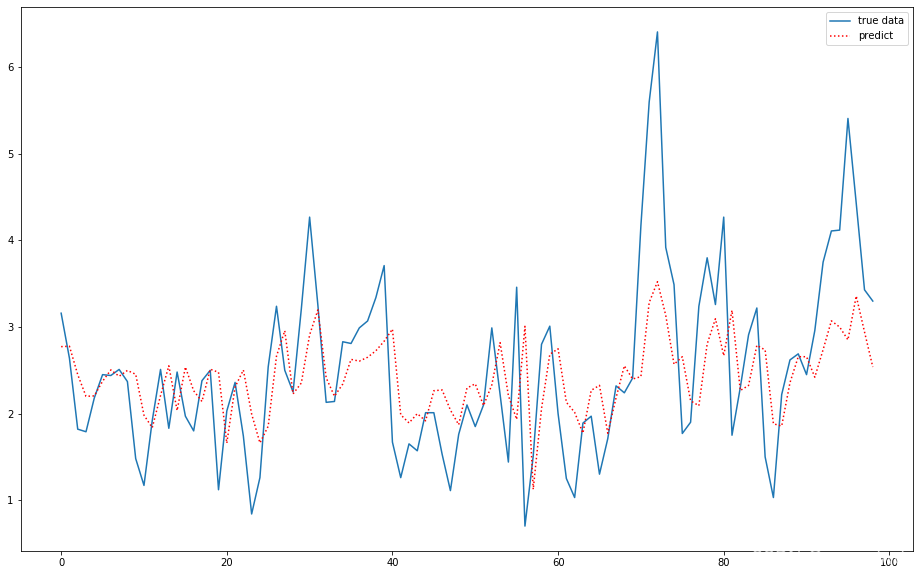

Results on the training set (the plot covers all 990 windows, including the held-out 10%)

# inverse_transform maps the normalized values back to the original scale

plt.figure(figsize=(16,10))

plt.plot(scaler_minmax.inverse_transform(d[:,-1]),label='true data')

plt.plot(scaler_minmax.inverse_transform(model.predict(d[:,:-1])),'r:',label='predict')

plt.legend()

pd.DataFrame(scaler_minmax.inverse_transform(model.predict(d[:,:-1])),)

| | 0 |
|---|---|
| 0 | 2.519001 |
| 1 | 2.000833 |
| 2 | 2.232971 |
| 3 | 2.671180 |
| 4 | 2.993458 |
| ... | ... |
| 985 | 2.999729 |
| 986 | 2.851660 |
| 987 | 3.358534 |
| 988 | 2.944040 |
| 989 | 2.537982 |

990 rows × 1 columns


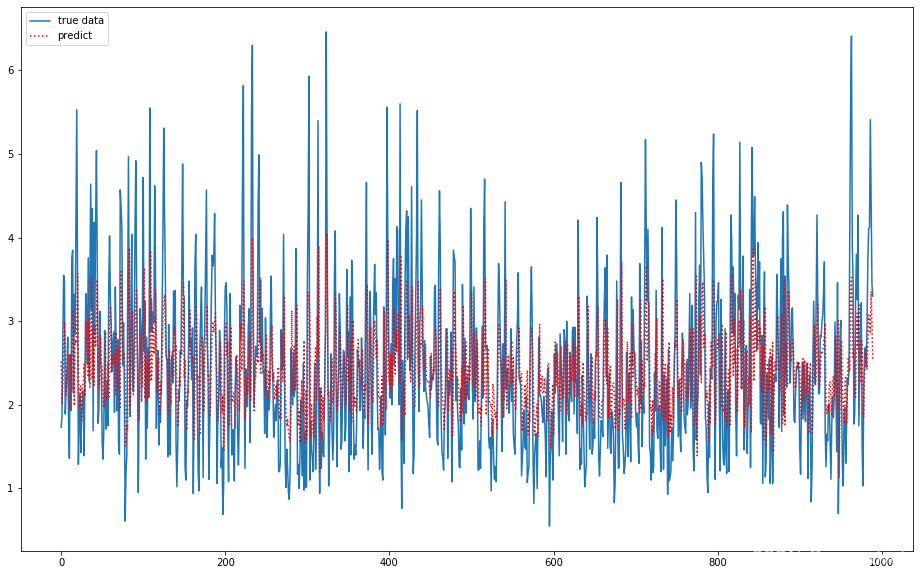
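The plots rely on `inverse_transform` to bring predictions back to metres per second. For a single feature, min-max scaling and its inverse reduce to x' = (x - min) / (max - min) and x = x' * (max - min) + min. The sketch below writes these out in plain NumPy with illustrative helper names (`minmax_fit`, `minmax_transform`, `minmax_inverse`). It uses only the first five wind speeds from the `df` preview, so the scaled values differ from those in the post, where the minimum and maximum come from all 1000 rows.

```python
import numpy as np


def minmax_fit(x):
    """Column-wise minimum and maximum of a (n_rows, n_features) array."""
    return x.min(axis=0), x.max(axis=0)


def minmax_transform(x, lo, hi):
    """Map values from [lo, hi] to [0, 1]."""
    return (x - lo) / (hi - lo)


def minmax_inverse(x_scaled, lo, hi):
    """Map values from [0, 1] back to the original [lo, hi] range."""
    return x_scaled * (hi - lo) + lo


# First five wind speeds from the df preview, as a single-feature column.
wind = np.array([[2.67], [2.73], [2.26], [1.44], [2.17]])

lo, hi = minmax_fit(wind)
scaled = minmax_transform(wind, lo, hi)
restored = minmax_inverse(scaled, lo, hi)

print(scaled.ravel())
print(np.allclose(restored, wind))
```

Note that the inverse needs the same minimum and maximum that were used for scaling, which is why the post reuses the fitted `scaler_minmax` object for both directions.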

Test set

plt.figure(figsize=(16,10))

plt.plot(scaler_minmax.inverse_transform(d[int(len(d)*split_rate):,-1]),label='true data')

plt.plot(scaler_minmax.inverse_transform(model.predict(d[int(len(d)*split_rate):,:-1])),'r:',label='predict')

plt.legend()

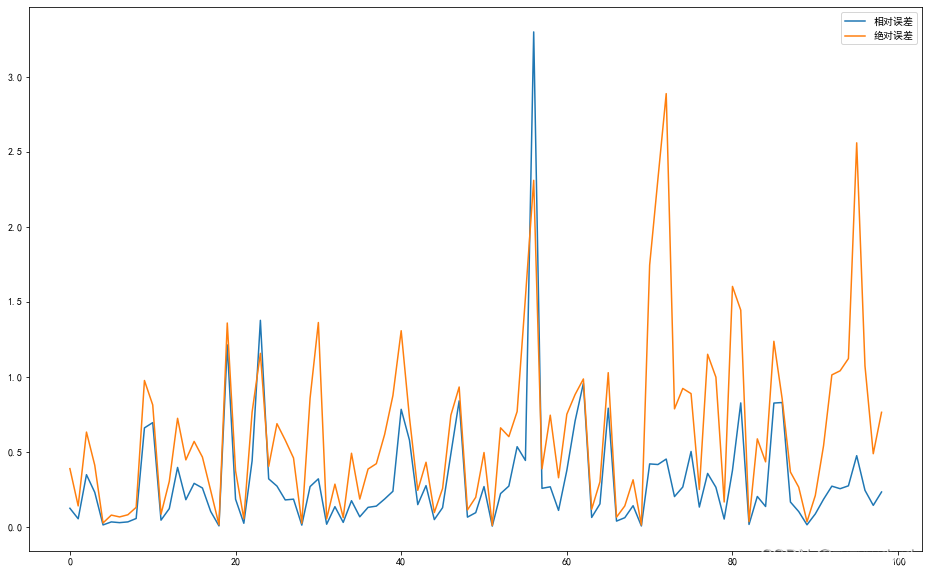

Test-set error

plt.rcParams['font.sans-serif'] = ['SimHei']  # set the default font (SimHei can render Chinese text)

plt.rcParams['axes.unicode_minus'] = False  # keep the minus sign '-' from showing as a box in saved figures

predict=scaler_minmax.inverse_transform(model.predict(d[int(len(d)*split_rate):,:-1]))

true_data=scaler_minmax.inverse_transform(d[int(len(d)*split_rate):,-1])

plt.figure(figsize=(16,10))

plt.plot(abs(true_data-predict)/true_data,label='relative error')

plt.plot(abs(true_data-predict),label='absolute error')

plt.legend()
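The per-point error curves can be complemented by a few summary numbers. The sketch below computes the mean absolute error, the root mean squared error and the mean relative error. The function name `error_summary` and the demo values are made up for illustration; they are not results from the model in this post.

```python
import numpy as np


def error_summary(true_values, predicted):
    """Return MAE, RMSE and mean relative error for two equally sized arrays."""
    true_values = np.asarray(true_values, dtype=float).ravel()
    predicted = np.asarray(predicted, dtype=float).ravel()
    abs_err = np.abs(true_values - predicted)
    # Relative error is undefined where the true value is zero, so skip those points.
    nonzero = true_values != 0
    return {
        "mae": abs_err.mean(),
        "rmse": np.sqrt(np.mean((true_values - predicted) ** 2)),
        "mean_relative_error": np.mean(abs_err[nonzero] / np.abs(true_values[nonzero])),
    }


# Made-up values, only to show the call.
demo_true = np.array([2.0, 4.0, 5.0])
demo_pred = np.array([2.5, 3.0, 5.0])

summary = error_summary(demo_true, demo_pred)
print(summary)
```

With the arrays from the post, the call would be `error_summary(true_data, predict)`. The relative error is sensitive to small true values, so calm periods with low wind speed can dominate it.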
