Algorithms for PP attachment

Alg0

- Dumb one, to label all labels as a default label, say low

Alg1: random baseline

- A random unsupervised baseline would have to label each instance in the test data with a random label, 0 or 1.

- Practically, it is the lower bound of all the other algorithms

The evaluation is performed on the test set, not training set, and test set should never be used until you completely finished your algorithm, and you could use development set to tune the parameters or add features

Observations

- Data set has the characteristics of domain specification

- Too complicate method will lead to overfit

Upper Bound

- Usually, human performance is used for the upper bound. For PP attachment, using the 4 previous features, human accuracy is around 88%. So we could expect an upper bound of 87%

Using linguistic knowledge

- For example, we know the preposition of is much more likely to be associated with low attachment than high attachment (98.7% with 5000+ instances in training set). Therefore, the feature prep_of is very valuable(informative and frequent)

Alg2

If the prep is 'of', label the tuple as 'low'

Else

If the prep is 'to', label the tuple as 'high'

Else

label the tuple as 'low'(default)

The accuracy will be around 60%, proving that using linguistic knowledge will improve the algorithm

Alg2a

If the prep is 'of', label the tuple as 'low'

Else

If the prep is 'to', label the tuple as 'high'

Else

label the tuple as 'high'(default)

After looking at prep ‘of’ and ‘to’, the remaining data set contains more high attachment than low attachment on average, and the accuracy will be around 74%.

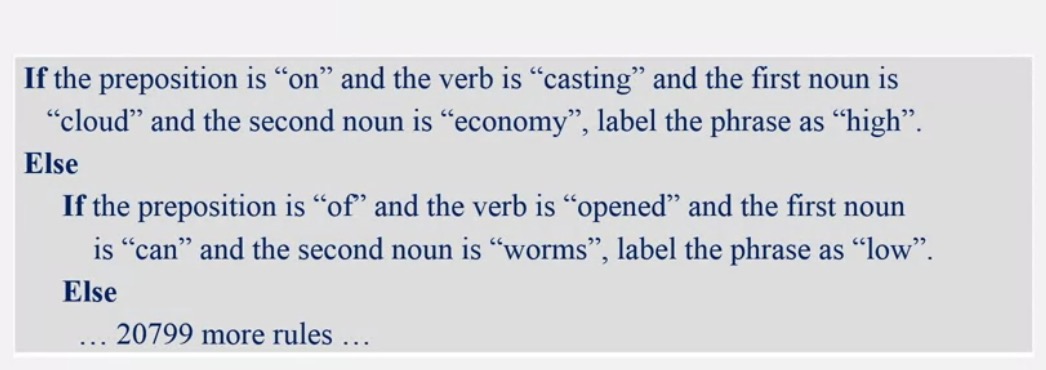

Alg3

We will set rules for every case in the training set, too specific.

Remark: Due to inconsistent human labelers, more context is needed.

5343

5343

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?