- What is the starting point to write this paper?

The distribution of each layer’s inputs changes during training, as the parameters of the previous layers change, resulting in slowing down the training by requiring low learning rates and careful parameter initialization. Besides, the problems in which the changing of distribution of input data resulted compel the next layer to adapt to the new distribution.

Additional, consider a layer with sigmoid activation function Z=G(Wu+b) where u is the layer input, W and b are the parameters which should be learned respectively. As |u| increases, the derivation of Z(u) tends to zero. This means that for all that for all dimensions of x = Wu+b except those with small absolute values, the gradient flowing down to u will vanish and the model will train slowly. However, the value u is affected by the parameters W and b, as well as the previous parameter, the effect is amplified as the network depth increase.

2 How author deal with the above problem!

Based on the above question, one proposed a method as follows:

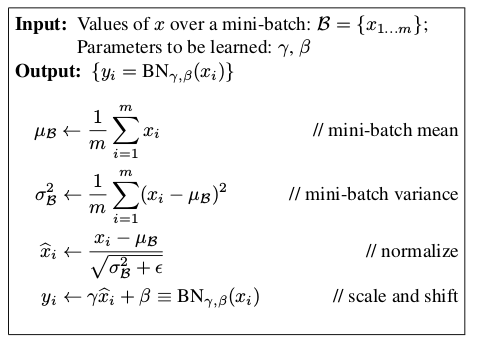

The BN transform can be added to a network to manipulate any activation.

3297

3297

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?